How to Test AI Agents: A Practical Step-by-Step Guide

Learn how to test AI agents for reliability, safety, and performance. This Ai Agent Ops guide covers testing strategies, metrics, and tools for validating agentic AI workflows.

Testing AI agents requires a structured approach that validates behavior, robustness, and governance across data, models, and environments. This practical guide outlines a step-by-step workflow, core metrics, and tooling you can apply today. According to Ai Agent Ops, start with clear objectives, then expand to data quality, edge cases, and ongoing monitoring.

Defining Testing Goals for AI Agents

A solid test plan begins with explicit objectives. Are you validating safe decision-making, consistent policy enforcement, or reliable orchestration across multiple agents? Define success criteria in measurable terms: expected outcomes, acceptable error rates, latency bounds, and clearly defined failure modes. Tie each objective to a concrete test case so that every result traces back to a requirement. In practice, align these goals with product and business objectives to avoid scope creep. For enterprise deployments, governance considerations such as privacy, compliance, data handling, and auditability are essential. Set distinct goals for safety-critical components and for routine decision-making so that one area does not bottleneck the other. As you set goals, document how you will measure each objective and how the results will feed into the next iteration.
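One way to make objectives measurable and traceable is to encode each one as a small record that links a requirement ID to a metric and a threshold. The sketch below is a minimal illustration under assumed names: `AcceptanceCriterion`, the `REQ-…` IDs, the metric names, and the thresholds are all hypothetical placeholders, not values from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriterion:
    """One measurable objective, traceable back to a requirement."""
    requirement_id: str      # hypothetical ID from your requirements tracker
    metric: str              # name of the metric this criterion constrains
    threshold: float         # limit the observed metric must respect
    higher_is_better: bool   # True for success rates, False for latency or error rates

    def passes(self, observed: float) -> bool:
        # Compare in the direction that matters for this metric.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def evaluate(criteria, observed_metrics):
    """Map each requirement ID to pass/fail so results trace back to requirements."""
    return {c.requirement_id: c.passes(observed_metrics[c.metric]) for c in criteria}


# Illustrative thresholds only; derive real ones from your product requirements.
criteria = [
    AcceptanceCriterion("REQ-001", "task_success_rate", 0.95, True),
    AcceptanceCriterion("REQ-002", "p95_latency_s", 2.0, False),
    AcceptanceCriterion("REQ-003", "policy_violation_rate", 0.0, False),
]
observed = {"task_success_rate": 0.97, "p95_latency_s": 2.4, "policy_violation_rate": 0.0}
print(evaluate(criteria, observed))
```

Here the latency objective fails while the other two pass, and the output names the exact requirement that needs attention.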

Key Testing Dimensions: Behavior, Robustness, and Safety

AI agents operate in dynamic environments where behavior can shift with inputs, prompts, or data drift. Evaluate whether agents follow intended policies, respect constraints, and produce predictable outputs under normal and adversarial conditions. Robustness requires testing across edge cases, time-varying contexts, and resilience to partial failures. Safety encompasses guardrails, risk assessment, and fallback strategies for when agents encounter uncertainty. Design tests that distinguish desirable, bounded variance (for example, different wording for the same correct action) from harmful or unsafe behavior, and document observed patterns to guide future improvements. Separating these concerns (behavioral fidelity, resilience, and safety) lets you pinpoint root causes faster during debugging and refinement.
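To separate acceptable variance from unsafe behavior, you can run the same prompt many times and check hard constraints on every run while merely counting variation in wording. The sketch below assumes a hypothetical `stub_agent` that returns an `action` and a `text`, and a hypothetical `ALLOWED_ACTIONS` policy; substitute your real agent and policy.

```python
import random

ALLOWED_ACTIONS = {"answer", "ask_clarification", "escalate_to_human"}  # hypothetical policy


def stub_agent(prompt: str, rng: random.Random) -> dict:
    """Stand-in for a real agent: wording varies, the chosen action should stay in policy."""
    phrasing = rng.choice(["Sure, here is", "Here is", "OK, this is"])
    action = "escalate_to_human" if "refund" in prompt else "answer"
    return {"action": action, "text": f"{phrasing} a reply to: {prompt}"}


def check_bounded_variance(agent, prompt: str, runs: int = 20, seed: int = 0) -> dict:
    """Run the agent repeatedly and separate tolerated variance from policy violations."""
    rng = random.Random(seed)  # seeded so any failure can be reproduced
    outputs = [agent(prompt, rng) for _ in range(runs)]
    violations = [o for o in outputs if o["action"] not in ALLOWED_ACTIONS]
    return {
        "distinct_texts": len({o["text"] for o in outputs}),      # tolerated variance
        "distinct_actions": len({o["action"] for o in outputs}),  # ideally 1 for a fixed prompt
        "violations": len(violations),                            # must be 0
    }


report = check_bounded_variance(stub_agent, "I want a refund for order 42")
print(report)
```

The design choice is to assert strictly on the action and only observe the text, so harmless rephrasing does not make the test flaky.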

Data Quality and Drift Considerations

Data quality drives agent performance. Testing must validate data sources, labeling accuracy, and distributional drift over time. Create representative datasets that cover baseline scenarios, corner cases, and real-world anomalies. Instrument data pipelines to detect drift early and trigger retraining or policy updates when required. Plan for privacy-preserving data handling and synthetic data generation so that test data keeps pace with evolving production data without exposing sensitive information. Integrating data governance early helps prevent regulatory issues as agents scale.
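As one concrete drift check, the Population Stability Index (PSI) compares a baseline distribution with a current one. This is a swapped-in illustrative technique, not one the guide prescribes. The sketch applies it to categorical data such as user-intent labels; the sample data are made up, and the 0.1/0.25 bands are a common rule of thumb rather than a standard.

```python
import math
from collections import Counter


def population_stability_index(baseline, current, epsilon=1e-6):
    """PSI between two categorical samples (e.g., intent labels or tool names).

    PSI = sum over categories of (p_cur - p_base) * ln(p_cur / p_base).
    epsilon keeps categories missing from one sample from producing log(0).
    """
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for cat in categories:
        p_base = max(base_counts[cat] / len(baseline), epsilon)
        p_cur = max(cur_counts[cat] / len(current), epsilon)
        psi += (p_cur - p_base) * math.log(p_cur / p_base)
    return psi


def classify_psi(psi):
    # Rule-of-thumb bands (a convention, not a standard); tune them for your data.
    if psi < 0.1:
        return "stable"
    if psi <= 0.25:
        return "moderate"
    return "significant"


# Illustrative samples: "returns" traffic has doubled since the baseline was captured.
baseline = ["billing"] * 50 + ["shipping"] * 30 + ["returns"] * 20
current = ["billing"] * 30 + ["shipping"] * 30 + ["returns"] * 40
psi = population_stability_index(baseline, current)
print(round(psi, 3), classify_psi(psi))  # prints: 0.241 moderate
```

A "significant" result could be wired to the retraining or policy-update trigger described above.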

Testing Techniques: Unit, Integration, and Scenario Testing

A multi-layered approach yields robust coverage. Start with unit tests that verify individual components and simple prompts; proceed to integration tests that ensure agent orchestration and message flows work as intended; finally, run scenario tests that emulate real-world workflows, multi-agent collaborations, and user interactions. Each layer should include deterministic as well as stochastic tests to capture variability. Document test cases with expected results, setup steps, and known limitations. Use runbooks to guide repeated testing and avoid reinventing the wheel for every iteration.
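The three layers can be illustrated with a toy agent pipeline. Everything here is hypothetical: `parse_tool_call` assumes a made-up `tool_name: argument` format, and `TOOLS` is a stub registry. The functions follow pytest's `test_` naming convention, so pytest should discover them, and they can also be called directly. The scenario-style test uses randomized but seeded inputs, which covers variability while keeping failures reproducible.

```python
import random


def parse_tool_call(raw: str) -> tuple:
    """Component under unit test: split 'tool_name: argument' (hypothetical format)."""
    name, _, arg = raw.partition(":")
    if not name.strip() or not arg.strip():
        raise ValueError(f"malformed tool call: {raw!r}")
    return name.strip(), arg.strip()


TOOLS = {"lookup_order": lambda order_id: f"order {order_id}: shipped"}  # stub tool registry


def run_agent_turn(raw_tool_call: str) -> str:
    """Integration point: parse the model's tool call and dispatch it to the registry."""
    name, arg = parse_tool_call(raw_tool_call)
    return TOOLS[name](arg)


def test_parse_tool_call_unit():
    # Unit test: deterministic, exercises one component in isolation.
    assert parse_tool_call("lookup_order: 42") == ("lookup_order", "42")
    try:
        parse_tool_call("no_colon_here")
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a malformed call")


def test_dispatch_integration():
    # Integration test: parser and tool registry work together.
    assert run_agent_turn("lookup_order: 42") == "order 42: shipped"


def test_scenario_with_seeded_stochastic_inputs():
    # Scenario-style test: many randomized inputs, seeded so failures are reproducible.
    rng = random.Random(1234)
    for _ in range(100):
        order_id = str(rng.randint(1, 10_000))
        padding = " " * rng.randint(0, 3)
        assert run_agent_turn(f"lookup_order:{padding}{order_id}") == f"order {order_id}: shipped"
```

A real scenario test would script a full multi-turn workflow; this one only shows the seeding pattern.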

Building a Test Harness for AI Agents

An effective test harness automates test execution, result collection, and regression checks. It should support versioned prompts, environmental isolation, and reproducible seeds for randomness. Integrate the harness with your CI/CD pipeline so tests run on every model or policy change. Use both synthetic and augmented data to stress-test inputs and failure modes, and store traces and logs so that failures can be audited and traced to their root cause. A well-designed harness makes it feasible to scale testing as your agent ecosystem grows.
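A harness can start very small: run each case with a fixed seed, tag the result with the prompt version, and emit one JSON trace per case. In this minimal sketch, `PROMPT_VERSION`, `fake_agent`, and the `must_contain` check are hypothetical stand-ins for your own prompt registry, agent call, and assertions.

```python
import json
import random
from dataclasses import dataclass, asdict

PROMPT_VERSION = "support-agent-v3"  # hypothetical versioned prompt identifier


@dataclass
class TraceRecord:
    case_id: str
    prompt_version: str
    seed: int
    user_input: str
    output: str
    passed: bool


def fake_agent(user_input: str, rng: random.Random) -> str:
    """Stub agent; replace with a call to the real agent under test."""
    greeting = rng.choice(["Hi", "Hello"])
    return f"{greeting}! Routing '{user_input}' to billing."


def run_suite(cases, seed=42):
    """Run every case with a fixed seed and return traces suitable for audit logs."""
    traces = []
    for case in cases:
        rng = random.Random(seed)  # reseed per case so each case reproduces in isolation
        output = fake_agent(case["input"], rng)
        passed = case["must_contain"] in output
        traces.append(TraceRecord(case["id"], PROMPT_VERSION, seed, case["input"], output, passed))
    return traces


cases = [
    {"id": "billing-001", "input": "Why was I charged twice?", "must_contain": "billing"},
    {"id": "billing-002", "input": "Update my card", "must_contain": "billing"},
]

first = run_suite(cases)
second = run_suite(cases)
assert [t.output for t in first] == [t.output for t in second]  # same seed, same outputs

# One JSON object per line (JSONL) keeps traces easy to diff, search, and archive.
jsonl = "\n".join(json.dumps(asdict(t)) for t in first)
print(jsonl)
```

Committing such traces next to the prompt version makes a later regression straightforward to bisect.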

Evaluation Metrics and Benchmarks

Choose metrics that reflect both user experience and system reliability. Common categories include behavioral accuracy, decision latency, throughput, resource usage, and failure rate. Establish baselines and track drift, regressions, and coverage over time. Benchmark against reference scenarios and define acceptable tolerance bands. Avoid overfitting tests to a single dataset, and recalibrate baselines as models and prompts evolve. Align metrics with business outcomes and governance requirements so that test results translate into meaningful improvements.
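Baselines and tolerance bands can be checked mechanically: summarize a run, then flag any metric that moved in the bad direction by more than its band. The sketch below uses a nearest-rank percentile for p95 latency; the result fields, baseline values, and tolerances are illustrative assumptions.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile (pct in 0-100); simple and dependency-free."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(results):
    """results: list of dicts with 'correct' (bool), 'latency_s' (float), 'error' (bool)."""
    n = len(results)
    return {
        "accuracy": sum(r["correct"] for r in results) / n,
        "p95_latency_s": percentile([r["latency_s"] for r in results], 95),
        "failure_rate": sum(r["error"] for r in results) / n,
    }


def regressions(current, baseline, tolerance):
    """Flag metrics that got worse than the baseline by more than their tolerance band."""
    higher_is_better = {"accuracy"}
    flagged = {}
    for metric, base in baseline.items():
        delta = current[metric] - base
        worse_by = -delta if metric in higher_is_better else delta
        if worse_by > tolerance[metric]:
            flagged[metric] = round(delta, 4)
    return flagged


# Illustrative run of 10 cases: one wrong answer, one error, one slow outlier.
latencies = [0.9, 1.0, 1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.9, 2.6]
results = [{"correct": i != 3, "latency_s": lat, "error": i == 9} for i, lat in enumerate(latencies)]

baseline = {"accuracy": 0.92, "p95_latency_s": 1.8, "failure_rate": 0.02}
tolerance = {"accuracy": 0.03, "p95_latency_s": 0.3, "failure_rate": 0.01}
current = summarize(results)
flagged = regressions(current, baseline, tolerance)
print(current, flagged)
```

Accuracy dips but stays inside its band, while latency and failure rate are flagged as regressions.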

Operational Considerations for Production Testing

In production, testing becomes continuous and proactive. Run a test suite alongside your deployment pipelines, with automated rollbacks on failure and monitoring that detects regressions in real time. Establish governance controls, alerting, and versioning so that changes to agents can be audited. Finally, foster a culture of learning in which test results feed rapid iteration and governance-informed deployment. This ongoing discipline helps keep AI agents aligned with user needs and safety standards as they scale.
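A simple form of real-time regression detection is a rolling-window failure-rate monitor that requests a rollback once the rate crosses a threshold. In this sketch, `RegressionMonitor`, its default parameters, and the `rollback` hook are hypothetical; in practice the hook would call your own deployment tooling.

```python
from collections import deque


class RegressionMonitor:
    """Rolling-window failure-rate monitor that requests a rollback past a threshold.

    window, max_failure_rate, and min_samples are illustrative defaults; tune them
    to your traffic volume and risk tolerance.
    """

    def __init__(self, window=100, max_failure_rate=0.05, min_samples=20):
        self.outcomes = deque(maxlen=window)  # True = failure; oldest entries drop off
        self.max_failure_rate = max_failure_rate
        self.min_samples = min_samples        # avoid alerting on a handful of requests

    def record(self, failed: bool) -> bool:
        """Record one production outcome; return True if a rollback should be triggered."""
        self.outcomes.append(failed)
        if len(self.outcomes) < self.min_samples:
            return False
        failure_rate = sum(self.outcomes) / len(self.outcomes)
        return failure_rate > self.max_failure_rate


def rollback(to_version: str) -> None:
    # Hypothetical hook: replace with a call to your deployment tooling.
    print(f"rolling back to {to_version}")


monitor = RegressionMonitor(window=50, max_failure_rate=0.1, min_samples=20)
for _ in range(30):
    monitor.record(failed=False)

triggered_after = None
for i in range(1, 11):
    if monitor.record(failed=True):
        triggered_after = i
        rollback("agent-v1.4.2")  # hypothetical last known-good version
        break
print(triggered_after)  # prints: 4  (4 failures / 34 requests > 10%)
```

The `min_samples` guard trades a little detection speed for fewer false alarms right after a deploy.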

Tools & Materials

- Test environment (sandbox/staging): an isolated environment that mirrors production, including its data-access controls; avoid live PII.

- Test data sets: include baseline, edge-case, and drift-simulated data.

- Test harness and automation framework: support deterministic seeds and repeatable runs.

- Monitoring/logging dashboards: capture latency, outputs, and errors for analysis.

- Version control and CI/CD integration: track test definitions and enforce gate checks.

- Privacy/compliance guidelines: ensure test data handling complies with policy.

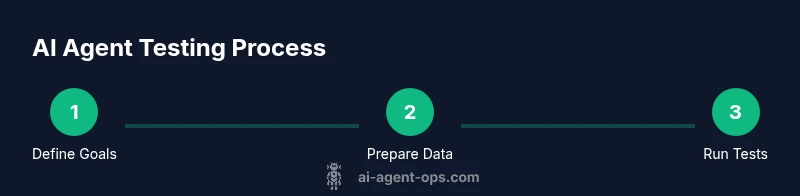

Steps

Estimated time: 60-90 minutes

Step 1: Define scope and success criteria

Articulate what you will test (behavior, safety, data quality) and how success will be measured. Create measurable criteria and link each to a test case with expected outcomes.

Tip: Start with high-impact use cases and clearly state acceptance thresholds.

Step 2: Prepare data and environments

Assemble representative datasets and assign isolated test environments that mimic production constraints. Include drift scenarios and privacy safeguards.

Tip: Use versioned data and seed randomness for reproducible tests.

Step 3: Build a test harness

Create an automation layer that runs tests, captures results, and reports regressions. Integrate with your CI/CD pipeline for on-change checks.

Tip: Prioritize deterministic tests that can be reproduced exactly.

Step 4: Execute test suites

Run unit, integration, and scenario tests. Include stress and edge-case simulations to reveal boundary failures.

Tip: Use both deterministic and stochastic inputs to improve coverage.

Step 5: Analyze results and iterate

Review failures, categorize by root cause, and implement fixes in policy, data, or prompts. Re-run tests to verify fixes.

Tip: Document root causes and link them to test updates.

Step 6: Monitor in production and maintain cadence

Deploy monitoring and automated regression checks in production. Schedule regular re-testing after model or data updates.

Tip: Automate rollbacks if critical failures appear.
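The CI/CD checks from Step 3 and the distinction between safety-critical and routine goals can be combined into one release gate that returns a process exit code. This is a sketch under assumptions: `CaseResult`, the `critical` flag, and the 5% non-critical threshold are hypothetical choices, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    case_id: str
    passed: bool
    critical: bool  # safety-critical cases block the release on any failure


def gate(results, max_noncritical_failure_rate=0.05):
    """Return a process exit code for CI: 0 = ship, 1 = block.

    Any critical failure blocks; non-critical failures block only above the threshold.
    """
    critical_failures = [r.case_id for r in results if r.critical and not r.passed]
    noncritical = [r for r in results if not r.critical]
    failed = sum(not r.passed for r in noncritical)
    rate = failed / len(noncritical) if noncritical else 0.0
    if critical_failures:
        print("blocked: critical failures in", critical_failures)
        return 1
    if rate > max_noncritical_failure_rate:
        print(f"blocked: non-critical failure rate {rate:.1%}")
        return 1
    print("passed")
    return 0


# Illustrative runs: 1 of 25 routine cases fails (4%), plus one safety-critical case.
ok_run = [CaseResult(f"case-{i}", passed=(i != 0), critical=False) for i in range(25)]
ok_run.append(CaseResult("safety-001", passed=True, critical=True))
bad_run = ok_run[:-1] + [CaseResult("safety-001", passed=False, critical=True)]
print(gate(ok_run), gate(bad_run))
# In a CI script you would typically finish with: sys.exit(gate(results))
```

Keeping critical cases on a zero-tolerance path stops a safety regression from hiding inside an otherwise acceptable aggregate pass rate.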

Questions & Answers

What is AI agent testing, and why is it important?

AI agent testing validates behavior, safety, and reliability of autonomous systems. It helps prevent harmful decisions, ensures compliance with policies, and improves user trust by catching failures before deployment.

AI agent testing validates behavior, safety, and reliability to prevent harmful decisions and build user trust.

What should test data cover for AI agents?

Test data should encompass baseline cases, edge scenarios, adversarial inputs, and drift simulations. Use anonymized data where possible and keep data governance at the forefront.

Test data should cover baseline, edge, and drift scenarios with privacy safeguards.

How do you measure failure modes for AI agents?

Identify failure types, categorize by impact, and track frequency over time. Use qualitative reviews and quantitative metrics to drive targeted improvements.

Track failures by type and impact, then iterate based on data.
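Categorizing failures by type and impact and tracking their frequency takes only a few counters. In this sketch, the severity weights, failure-type names, and week labels are illustrative assumptions; use your own impact scale and triage taxonomy.

```python
from collections import Counter, defaultdict

# Hypothetical severity weights; define your own impact scale.
SEVERITY_WEIGHT = {"low": 1, "medium": 3, "high": 10}


def failure_report(failures):
    """failures: iterable of (period, failure_type, severity) tuples from triaged test logs."""
    by_type = Counter()
    weighted = Counter()
    trend = defaultdict(Counter)  # period -> failure_type -> count
    for period, ftype, severity in failures:
        by_type[ftype] += 1
        weighted[ftype] += SEVERITY_WEIGHT[severity]
        trend[period][ftype] += 1
    # Rank by weighted impact so rare but severe failures are not buried by frequent minor ones.
    priority = [ftype for ftype, _ in weighted.most_common()]
    return by_type, priority, trend


failures = [
    ("week-1", "hallucinated_tool_arg", "medium"),
    ("week-1", "format_error", "low"),
    ("week-1", "format_error", "low"),
    ("week-2", "policy_violation", "high"),
    ("week-2", "format_error", "low"),
]
by_type, priority, trend = failure_report(failures)
print(by_type.most_common(1), priority[0], dict(trend["week-2"]))
```

Here `format_error` is the most frequent failure, but the single `policy_violation` ranks first by impact.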

What are common pitfalls in testing AI agents?

Common pitfalls include overfitting tests to a single dataset, ignoring drift, neglecting governance, and failing to test multi-agent interactions and security concerns.

Common pitfalls include drift neglect and governance gaps.

How often should production AI agents be re-tested?

Re-testing should occur on data/model changes and on a scheduled cadence. Continuous monitoring with automated checks is recommended to detect drift.

Test after updates and on a regular cadence with monitoring.
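Both re-testing triggers (a change to the model, prompt, or data, and a scheduled cadence) fit in one decision function. This is a minimal sketch: the component names, version strings, and seven-day default cadence are hypothetical.

```python
from datetime import datetime, timedelta


def needs_retest(last_run, now, deployed, last_tested, max_age=timedelta(days=7)):
    """Decide whether to re-run the suite.

    deployed / last_tested: dicts of component -> version (e.g., model, prompt, dataset).
    Retest if any version changed since the last run, or if the last run is older than max_age.
    """
    changed = sorted(k for k in deployed if deployed[k] != last_tested.get(k))
    if changed:
        return True, f"changed: {', '.join(changed)}"
    if now - last_run > max_age:
        return True, "scheduled cadence elapsed"
    return False, "up to date"


# Illustrative versions; only the prompt changed since the last tested release.
last_tested = {"model": "model-a", "prompt": "v3", "dataset": "d17"}
deployed = {"model": "model-a", "prompt": "v4", "dataset": "d17"}
now = datetime(2024, 6, 10)
print(needs_retest(datetime(2024, 6, 8), now, deployed, last_tested))     # version change
print(needs_retest(datetime(2024, 6, 1), now, last_tested, last_tested))  # cadence elapsed
```

Checking versions first means an update always triggers a run, even if the scheduled cadence has not elapsed.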

What tools are recommended for testing AI agents?

Use a combination of unit, integration, and scenario testing tools, plus monitoring dashboards and data drift detection. Prioritize tools that integrate with your existing CI/CD pipeline.

Combine unit, integration, and scenario testing with monitoring tools.

Key Takeaways

- Define clear objectives and measurable criteria.

- Automate tests and integrate with CI/CD.

- Use diverse data with drift-aware validation.

- Quantify results with stable metrics and baselines.

- Maintain ongoing monitoring and test cadence.