Best Way to Train an AI Agent: A Practical Guide

Learn the best way to train an AI agent with a practical, step-by-step approach. This guide covers data quality, evaluation, safety, and tooling for reliable agentic AI workflows in real-world projects.

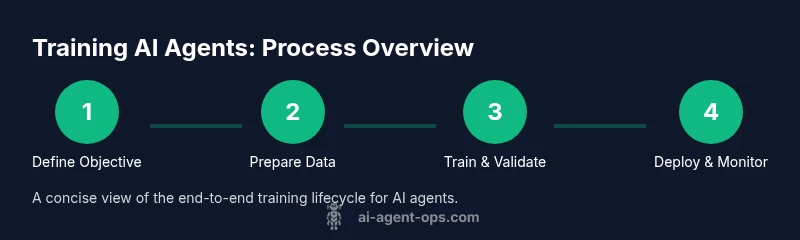

According to Ai Agent Ops, the best way to train an AI agent is a structured, end-to-end pipeline: define a clear objective, select appropriate data, and implement a robust training loop with ongoing evaluation. This guide shows practical steps, recommended tooling, and guardrails for reliable agentic AI workflows. It emphasizes data quality, safety considerations, reproducibility, and measurable success criteria to help development teams move from concept to production.

Foundations of Training AI Agents

Training an AI agent is about more than fitting a model to data. An agent is an autonomous system that perceives its environment, reasons about actions, and acts to achieve defined goals. In practice, success hinges on a clearly stated objective, governance policies, and a feedback loop that connects outcomes to improvement. According to Ai Agent Ops, the best outcomes begin with a well-scoped objective and guardrails that reflect real user needs. A good objective translates into measurable criteria, informs data selection, shapes evaluation, and guides monitoring throughout the lifecycle, which in turn sets expectations for performance and safety. As you plan, map desired behaviors, failure modes, and escalation paths. Starting with governance and ethics in mind reduces risk and increases long-term value.

- Define success in observable terms (metrics, benchmarks, and acceptable risk levels).

- Align the agent’s goals with business objectives and user needs.

- Establish governance: who owns data, what is permissible, and how feedback is incorporated.

- Plan for iteration: expect multiple rounds of improvement as you gather experience.

Why it matters for developers and leaders: a solid foundation prevents scope creep and aligns engineering work with measurable outcomes.
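One way to make "success in observable terms" concrete is to write the objective down as data that can be checked automatically. The following is a minimal sketch, not a prescribed format: the class names, the metric names, the thresholds, and the example goal and owner are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SuccessCriterion:
    """One observable target, e.g. a task success rate of at least 0.90."""
    metric: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass(frozen=True)
class AgentObjective:
    """A one-page objective reduced to data: goal, owner, and criteria."""
    goal: str
    data_owner: str
    criteria: tuple[SuccessCriterion, ...] = field(default_factory=tuple)

    def unmet(self, observed: dict[str, float]) -> list[str]:
        """Return the metrics that are missing or fall short of target."""
        failures = []
        for criterion in self.criteria:
            value = observed.get(criterion.metric)
            if value is None or not criterion.is_met(value):
                failures.append(criterion.metric)
        return failures


# Hypothetical objective; every value below is an illustrative assumption.
objective = AgentObjective(
    goal="Resolve billing questions without human handoff",
    data_owner="support-platform-team",
    criteria=(
        SuccessCriterion("task_success_rate", 0.90),
        SuccessCriterion("p95_latency_seconds", 5.0, higher_is_better=False),
        SuccessCriterion("unsafe_action_rate", 0.001, higher_is_better=False),
    ),
)
```

Calling `objective.unmet(...)` with the metrics from an evaluation run returns the criteria that still need work, which gives each iteration a clear stopping condition.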

Data Quality and Preparation for AI Agents

High-quality data is the lifeblood of any agentic system. The best results come from diverse, representative data that captures the real environments where the agent will operate. Start with a data audit to identify gaps, biases, and corner cases. Create robust train/validation/test splits that reflect deployment conditions, and implement data versioning so you can reproduce experiments. It’s essential to guard against data leakage, drift, and labeling inconsistencies, which can mislead the agent during real-world use.

Ai Agent Ops analysis shows that data quality shapes both initial performance and long-term reliability. Invest in data labeling standards, review cycles, and calibration tests that measure how data quality translates into safe, predictable agent behavior. Consider synthetic data where real data is scarce, but validate synthetic coverage against real-world scenarios to prevent blind spots.
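Reproducible splits and data versioning can be sketched with the standard library alone. The example below assigns each record to a split by hashing a stable ID, so the assignment does not depend on random state or record order, and computes a content fingerprint that can be logged with each experiment. The function names, the `id` field, and the 80/10/10 proportions are assumptions for illustration; in practice the ID should be the unit you want to keep together (for example a conversation or user), so related records cannot leak across splits.

```python
import hashlib
import json


def assign_split(record_id: str, val_pct: int = 10, test_pct: int = 10) -> str:
    """Map a record ID to a split using a stable hash, not random state."""
    digest = hashlib.sha256(record_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in 0..99
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + val_pct:
        return "validation"
    return "train"


def split_dataset(records: list[dict], id_key: str = "id") -> dict[str, list[dict]]:
    """Group records by split; records sharing an ID always stay together."""
    splits: dict[str, list[dict]] = {"train": [], "validation": [], "test": []}
    for record in records:
        splits[assign_split(str(record[id_key]))].append(record)
    return splits


def dataset_fingerprint(records: list[dict]) -> str:
    """Hash the canonical JSON of all records, independent of their order."""
    lines = sorted(json.dumps(r, sort_keys=True) for r in records)
    return hashlib.sha256("\n".join(lines).encode("utf-8")).hexdigest()
```

Recording the fingerprint next to each run's results makes it possible to tell later whether two experiments actually used the same data.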

- Audit data sources for relevance, coverage, and bias.

- Use clear labeling guidelines and regular quality checks.

- Maintain data versioning and experiment reproducibility.

- Monitor for data drift after deployment and plan retraining intervals.

Practitioner tip: start with a minimal data footprint that reflects core tasks, then expand to capture edge cases.
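Drift monitoring needs a number to watch. One common choice, swapped in here as an illustration rather than something the guide prescribes, is the Population Stability Index (PSI), which compares the binned distribution of a numeric feature in live traffic against a reference sample. The sketch below assumes non-empty lists of floats; the bin count and the small floor used to avoid division by zero are arbitrary choices.

```python
import math


def psi(reference: list[float], live: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))
```

A PSI of zero means the binned distributions match. Rule-of-thumb cutoffs such as 0.1 and 0.25 are often quoted for "some" and "significant" shift, but they are conventions rather than guarantees, so calibrate any retraining threshold against your own data.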

Architecture and Tooling for Training Agents

Choosing the right architecture and toolchain is critical to the success of training AI agents. Depending on the task, you may combine a language model with planning modules, memory, and action executors. Consider whether imitation learning, supervised fine-tuning, or reinforcement learning best aligns with the agent’s goals. Agent orchestration patterns (how the agent interacts with tools, databases, and external services) should be designed early to prevent brittle systems. Tooling should emphasize reproducibility, observability, and safety controls.

When selecting frameworks and libraries, prioritize interoperability and clear interfaces. Establish a lightweight baseline for rapid iteration, then layer in more capable components as needed. Remember to document decisions so future teams can reproduce results and extend the system without ambiguity.

Ai Agent Ops recommends mapping the architecture to the agent’s mission: if short-horizon tasks are primary, a simple planner with a robust policy may suffice; if long-horizon tasks are required, integrate memory, planning, and external tool use to sustain performance over time.

- Start with a minimal viable architecture and validate core capabilities.

- Choose tooling that supports experimentation, versioning, and monitoring.

- Design clear interfaces between perception, reasoning, and action modules.

- Plan for governance: logging, access control, and audit trails.

Note for teams: maintain modularity to swap components without reworking the entire system.
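The idea of clear interfaces between perception, reasoning, and action can be illustrated with structural typing. In this sketch, each module is described by a `typing.Protocol`, and the agent depends only on those protocols, so any component can be swapped without touching the others. All class names and the toy keyword-based reasoner are hypothetical stand-ins; a real system would put a model or planner behind the same interface.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Observation:
    text: str


@dataclass
class Action:
    tool: str
    argument: str


class Perception(Protocol):
    def observe(self, raw_input: str) -> Observation: ...


class Reasoner(Protocol):
    def decide(self, observation: Observation) -> Action: ...


class Executor(Protocol):
    def execute(self, action: Action) -> str: ...


class Agent:
    """Wires the three modules together; knows nothing about their internals."""

    def __init__(self, perception: Perception, reasoner: Reasoner, executor: Executor):
        self.perception = perception
        self.reasoner = reasoner
        self.executor = executor

    def step(self, raw_input: str) -> str:
        observation = self.perception.observe(raw_input)
        action = self.reasoner.decide(observation)
        return self.executor.execute(action)


# Toy implementations (illustrative only).
class WhitespacePerception:
    def observe(self, raw_input: str) -> Observation:
        return Observation(text=raw_input.strip())


class KeywordReasoner:
    def decide(self, observation: Observation) -> Action:
        tool = "weather_lookup" if "weather" in observation.text.lower() else "fallback_reply"
        return Action(tool=tool, argument=observation.text)


class EchoExecutor:
    def execute(self, action: Action) -> str:
        return f"{action.tool}:{action.argument}"


agent = Agent(WhitespacePerception(), KeywordReasoner(), EchoExecutor())
```

Because `Agent` only requires objects with the right methods, replacing `KeywordReasoner` with a different reasoner is a one-line change at construction time.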

Building a Robust Training Loop

A robust training loop is the engine that turns data and architecture into reliable agent behavior. Build an iterative cycle: collect data, train, evaluate, analyze errors, adjust objectives or data, and retrain. Emphasize reproducibility through deterministic experiments, versioned datasets, and consistent seeds. Implement guardrails to prevent unsafe actions during exploration, and ensure that evaluation happens in realistic environments that mimic deployment conditions.

A strong loop tracks both performance and safety metrics, and it integrates human-in-the-loop checks where appropriate. Apply continuous-integration-style practices to ML: automated tests, reproducible builds, and automated experiment tracking. This approach reduces guesswork and accelerates learning.

- Use deterministic seeds and versioned assets for repeatability.

- Separate training, evaluation, and deployment environments to avoid leakage.

- Implement early-stopping and safe exploration constraints.

- Log all decisions, data versions, and hyperparameters for auditability.

Pro tip: document the rationale behind each experiment to build a library of reusable patterns.
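The shape of such a loop can be shown on a deliberately tiny problem. The sketch below fits a one-parameter linear model to synthetic data with gradient descent; the model and data are placeholders, not an agent. What matters is the scaffolding: a seeded random generator, a per-epoch log that records the seed and hyperparameters, and early stopping with a patience counter. All names and default values are illustrative assumptions.

```python
import random


def make_data(rng: random.Random, n: int) -> list[tuple[float, float]]:
    """Synthetic data: y = 3x plus Gaussian noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3.0 * x + rng.gauss(0, 0.1)) for x in xs]


def mse(w: float, data: list[tuple[float, float]]) -> float:
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def run_experiment(seed: int, lr: float = 0.1, max_epochs: int = 200,
                   patience: int = 5) -> dict:
    rng = random.Random(seed)  # all randomness flows from one seed
    train, val = make_data(rng, 200), make_data(rng, 50)

    w, best_w, best_val, stale = 0.0, 0.0, float("inf"), 0
    log = []
    for epoch in range(max_epochs):
        # One full-batch gradient step on the training set.
        grad = sum(2 * (w * x - y) * x for x, y in train) / len(train)
        w -= lr * grad

        # Evaluate on held-out data and record everything needed for audit.
        val_loss = mse(w, val)
        log.append({"epoch": epoch, "seed": seed, "lr": lr, "val_loss": val_loss})

        # Early stopping: keep the best weights, stop after `patience` stale epochs.
        if val_loss < best_val - 1e-9:
            best_w, best_val, stale = w, val_loss, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return {"w": best_w, "val_loss": best_val, "log": log}
```

Running `run_experiment` twice with the same seed yields identical results, which is the property that makes later comparisons between experiments meaningful.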

Evaluation and Validation: Metrics that Matter

Measurement drives improvement. Define multi-dimensional evaluation that covers accuracy, safety, reliability, and user impact. Beyond traditional accuracy, include metrics such as decision latency, failure modes, and the agent’s ability to recover from errors. Use scenario-based testing to evaluate performance in edge cases and under distribution shifts. Validation should occur in both simulated environments and controlled real-world pilots before broad deployment.

If you want robust, transferable results, employ ablation studies to understand which components most influence outcomes. Compare baseline approaches against incremental improvements to isolate effects. Keep your evaluation criteria aligned with governance expectations to avoid unintended consequences.

- Use scenario tests that reflect real usage and edge cases.

- Track distributional shifts and model drift over time.

- Conduct ablations to identify critical components.

- Align metrics with safety and user impact goals.

Ai Agent Ops note: effective evaluation is as important as model performance for maintaining trust in agentic AI systems.
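Scenario-based, multi-metric evaluation can be sketched as a small harness that runs an agent over named scenarios and reports more than one number. In this example the agent is any callable from prompt to output; the `Scenario` fields, the exact-match scoring, and the toy agent are simplifying assumptions, and real evaluations usually need task-specific scoring.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Scenario:
    name: str
    prompt: str
    expected: str
    is_edge_case: bool = False


def evaluate(agent: Callable[[str], str], scenarios: list[Scenario]) -> dict:
    """Run every scenario and report success, edge-case success, crashes, latency."""
    rows = []
    for scenario in scenarios:
        start = time.perf_counter()
        try:
            output, crashed = agent(scenario.prompt), False
        except Exception:  # a crash counts as a failure, not a harness error
            output, crashed = "", True
        latency = time.perf_counter() - start
        rows.append((scenario, (not crashed) and output == scenario.expected, crashed, latency))

    def rate(subset: list) -> Optional[float]:
        return sum(ok for _, ok, _, _ in subset) / len(subset) if subset else None

    return {
        "success_rate": rate(rows),
        "edge_case_success_rate": rate([r for r in rows if r[0].is_edge_case]),
        "crash_count": sum(crashed for _, _, crashed, _ in rows),
        "max_latency_s": max((lat for _, _, _, lat in rows), default=0.0),
    }


def toy_agent(prompt: str) -> str:
    """Illustrative stand-in for an agent: upper-cases input, rejects empty prompts."""
    if not prompt:
        raise ValueError("empty prompt")
    return prompt.upper()


scenarios = [
    Scenario("basic", "hi", "HI"),
    Scenario("empty_input", "", "", is_edge_case=True),
    Scenario("accented_text", "é", "É", is_edge_case=True),
]
report = evaluate(toy_agent, scenarios)
```

Reporting edge-case success separately keeps a high overall score from hiding failures in exactly the situations the scenario tests were written to probe.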

Safety, Ethics, and Governance for Agentic AI

Safety and ethics must be embedded throughout training, not tacked on at the end. Define guardrails for actions the agent can take, limits on data use, and escalation paths for ambiguous situations. Incorporate privacy-preserving techniques, bias detection, and fairness considerations into data collection and evaluation. Governance should address accountability, auditing, and compliance with relevant regulations.

Plan for adversarial scenarios, misaligned incentives, and potential misuse. Regularly review risk models and update safety policies in response to new threats or capabilities. Involve stakeholders from product, legal, and security teams to maintain a holistic view of risk.

- Establish clear boundaries for agent autonomy and tool use.

- Implement privacy, bias, and safety checks in data and decisions.

- Maintain an auditable trail of decisions and outcomes.

- Schedule regular governance reviews with cross-functional teams.

Important reminder: governance and ethics are not optional extras; they are core to sustainable AI development.
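Boundaries on tool use, escalation paths, and an auditable trail can be combined in a single checkpoint that every proposed action passes through. The sketch below is one hedged way to do that: an allowlist of tools, a subset that requires human approval, and an append-only log of every decision. The class name and the tool names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolGuard:
    """Decides whether a proposed tool call is allowed, escalated, or denied."""
    allowed_tools: frozenset[str]
    needs_approval: frozenset[str] = frozenset()
    audit_log: list[dict] = field(default_factory=list)

    def check(self, tool: str, argument: str) -> str:
        if tool not in self.allowed_tools:
            decision = "deny"  # anything not explicitly allowed is refused
        elif tool in self.needs_approval:
            decision = "escalate"  # allowed, but only with human sign-off
        else:
            decision = "allow"
        # Record every decision, including denials, for later audit.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": tool,
            "argument": argument,
            "decision": decision,
        })
        return decision


# Hypothetical configuration for illustration.
guard = ToolGuard(
    allowed_tools=frozenset({"search_docs", "issue_refund"}),
    needs_approval=frozenset({"issue_refund"}),
)
```

Defaulting to "deny" for unknown tools means a newly added capability stays unavailable until someone deliberately allows it, which keeps autonomy boundaries explicit.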

Deployment, Monitoring, and Continuous Improvement

Deployment marks the transition from a research prototype to a live service. Prepare monitoring dashboards that track performance, safety signals, and user feedback in real time. Set thresholds for triggering retraining, flags for drift, and alerting for anomalous behavior. Establish a continuous improvement loop that prioritizes incidents, learns from them, and iterates on data, model, and policies.

In production, maintain rigorous version control of data, code, and configurations. Use canary deployments, A/B tests, and rollback mechanisms to minimize risk. Plan for ongoing maintenance: periodic retraining, policy updates, and user education to manage expectations and ensure responsible use of agentic AI.

- Deploy with gradual rollouts and robust monitoring.

- Establish clear retraining triggers based on metrics and feedback.

- Maintain living documentation of decisions, data, and experiments.

- Communicate limitations and guardrails to users and stakeholders.

Bottom line: the best AI agents stay aligned with goals and user needs through disciplined deployment and continuous learning. Ai Agent Ops’s guidance emphasizes living governance as a key to long-term success.
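Retraining triggers and rollback rules are easier to review when they are written as an explicit policy rather than left to dashboard interpretation. The following sketch maps a window of production metrics to one of three actions. The metric names and threshold values are hypothetical placeholders; appropriate values depend on the objective and risk tolerance set at the start of the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Illustrative limits; tune these against your own objective and data."""
    min_success_rate: float = 0.90
    max_unsafe_rate: float = 0.001
    max_drift_score: float = 0.25


def decide(metrics: dict[str, float], limits: Thresholds = Thresholds()) -> str:
    """Return 'rollback', 'retrain', or 'continue' for one monitoring window."""
    # Safety signals take priority over everything else.
    if metrics["unsafe_rate"] > limits.max_unsafe_rate:
        return "rollback"
    # Degraded quality or drifting inputs call for new data and retraining.
    if (metrics["drift_score"] > limits.max_drift_score
            or metrics["success_rate"] < limits.min_success_rate):
        return "retrain"
    return "continue"
```

The same function can gate a canary release: run it on the canary's metrics and widen the rollout only while it returns `"continue"`.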

Tools & Materials

- Compute resources (GPUs/TPUs): Qualified GPUs/TPUs for experimentation; plan for distributed runs if needed.

- Python environment (3.9+ or 3.10+): Use virtual environments and dependency management (e.g., poetry, requirements.txt).

- Data labeling and annotation tools: Label datasets with clear guidelines; support human-in-the-loop labeling.

- Experiment tracking & version control: MLflow, Weights & Biases, or similar; Git for code and configuration.

- Reproducible data storage & pipeline tooling: Data versioning, clean data provenance, and modular pipelines.

- RL environments & agent libraries: Access to environments for RL or planning components; ensure compatibility with your framework.

- Documentation & runbooks: Maintain playbooks for setup, troubleshooting, and rollback.

Steps

Estimated time: 6-12 weeks

1. Define objective and constraints

Clarify the agent’s primary goals, success criteria, and any safety or regulatory constraints. Create a one-page objective statement that translates into measurable targets and boundaries for exploration.

Tip: Write a concise objective and list 3-5 concrete success criteria before data collection.

2. Assemble and preprocess data

Collect domain-relevant data, remove duplicates, and split into train/validation/test with scenarios that reflect deployment conditions. Apply privacy-preserving practices and document data sources.

Tip: Use deterministic seeds and maintain data versioning for reproducibility.

3. Choose training paradigm and architecture

Select the learning approach (supervised/imitation, reinforcement learning, or hybrid) and design the agent’s core components (policy, planner, memory, tool use).

Tip: Prototype a baseline quickly to establish a reference point before adding complexity.

4. Implement training loop with guardrails

Build a loop that trains, evaluates, and logs results with safety guards to prevent unsafe actions. Include clear checkpointing and deterministic behavior where possible.

Tip: Set early stopping and safety checks to reduce risk during exploration.

5. Evaluate and iterate

Run multi-metric evaluations, perform ablations, and validate on edge cases. Use findings to refine data, architecture, or objectives; repeat until goals are met.

Tip: Automate evaluation scripts and track results over time.

6. Deploy and monitor

Plan a staged deployment, monitor performance and safety signals in production, and establish retraining triggers based on drift or failure events.

Tip: Set up dashboards, alerts, and a rollback path for unsafe behavior.

Questions & Answers

What is an AI agent?

An AI agent is a system that perceives its environment, reasons about actions, and takes autonomous steps to achieve defined goals. It combines sensing, decision-making, and action to operate in dynamic environments.

An AI agent is a system that senses, reasons, and acts to meet its goals in real time.

How much data do I need to train an AI agent?

There is no one-size-fits-all answer. Data quantity depends on task complexity, required accuracy, and the variability of deployment scenarios. Start with domain-specific data and expand gradually to cover edge cases.

Data needs vary; start small and scale up as you test in more scenarios.

Which training paradigm should I start with?

Begin with supervised or imitation learning to establish a stable baseline. If the task requires long-horizon planning or interaction with tools, consider reinforcement learning or a hybrid approach with a planning module.

Start with a stable baseline using supervised learning, then add planning or reinforcement learning as needed.

How do you measure the success of an AI agent?

Use multi-dimensional metrics that cover accuracy, safety, reliability, and user impact. Include scenario-based tests, drift detection, and governance-aligned criteria to ensure the agent behaves as intended in production.

Measure accuracy, safety, and impact with scenario-based testing and governance-aligned metrics.

What safety considerations are essential when training agents?

Define guardrails for autonomy, validate data and outputs, monitor for unsafe behavior, and establish escalation paths. Involve cross-functional stakeholders to ensure comprehensive risk assessment.

Set guardrails, monitor outputs, and have a plan to escalate or roll back if needed.

Key Takeaways

- Define a clear objective with measurable criteria

- Prioritize data quality and governance from day one

- Choose architecture and tooling aligned with the agent’s mission

- Build a robust, reproducible training loop with safety guardrails