How to test AI models: A practical, step-by-step guide

A practical, step-by-step guide to testing AI models, covering metrics, data quality, evaluation protocols, drift monitoring, and safe deployment—designed for developers, data scientists, and product teams.

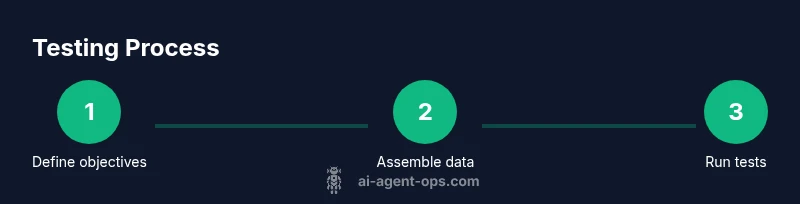

By the end of this guide, you will know how to test AI models with a structured, repeatable process: define objectives, assemble diverse test data, implement a metrics suite, validate behavior under real-world conditions, monitor drift, and iterate based on results. According to Ai Agent Ops, rigorous testing reduces risk and boosts reliability in agentic AI workflows.

Why Testing AI Models Is Different

AI models are probabilistic by design: the same input can yield different outputs across runs, and performance can shift as data patterns change. Traditional software testing (e.g., unit or integration checks) often misses these dynamics, leaving blind spots in reliability, safety, and fairness. AI models operate in open-ended spaces (language, vision, and multimodal inputs) where edge cases, distribution shifts, and adversarial inputs can surface. A robust testing strategy needs to evaluate generalization beyond the training data, monitor drift after deployment, and establish guardrails that prevent harmful or unsafe behavior. According to Ai Agent Ops, a disciplined, test-driven approach is essential for responsible AI adoption and for sustaining trust in AI agents across business workflows.

Key ideas to keep in mind:

- Differentiate testing from validation; include both offline (historical data) and online (live) evaluation.

- Treat data drift as a first-class risk; plan for ongoing monitoring rather than a one-time checklist.

- Balance qualitative and quantitative assessments to capture nuanced behavior.

Tools & Materials

- Test data sets (train/validation/test splits): diverse, representative samples; include edge cases and rare but important scenarios

- Metrics definitions document: clear thresholds and interpretation rules for each metric

- Experiment tracking and logging tooling: capture configurations, seeds, model versions, and results for reproducibility

- Deployment proxy or feature flag system: safely roll out tests and monitor live performance with rollback capability

- Bias and fairness audit framework: optional but recommended for critical domains

Steps

Estimated time: 2-3 hours

- 1

Define objectives and success criteria

Articulate the primary goals of the model in production (e.g., accuracy, safety, user satisfaction). Establish concrete success criteria and acceptable risk thresholds before testing begins.
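
As a minimal sketch of what written-down success criteria could look like, the snippet below encodes thresholds and a ship-or-rollback decision rule. The class `SuccessCriteria`, the function `decide`, the metric names, and every numeric threshold are hypothetical placeholders chosen for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Hypothetical thresholds; the numbers are placeholders, not advice."""
    min_accuracy: float = 0.90
    max_calibration_error: float = 0.05
    max_group_accuracy_gap: float = 0.03


def decide(metrics: dict, criteria: SuccessCriteria) -> str:
    """Return 'ship' only when every criterion is met, otherwise 'rollback'."""
    checks = [
        metrics["accuracy"] >= criteria.min_accuracy,
        metrics["calibration_error"] <= criteria.max_calibration_error,
        metrics["group_accuracy_gap"] <= criteria.max_group_accuracy_gap,
    ]
    return "ship" if all(checks) else "rollback"
```

Freezing the dataclass keeps the agreed thresholds from being changed silently during a test run.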

Tip: Document the decision rules for when a test result triggers a rollback or a model update.

- 2

Assemble diverse, high-quality test data

Curate a representative dataset that covers common cases, corner cases, and potential distribution shifts. Include synthetic data if needed to stress-test rare conditions while preserving privacy.
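
One way to check that a test set really covers common cases, corner cases, and shifted data is to tag each example with a slice and count the tags. The sketch below assumes a hypothetical `LabeledCase` record and slice names such as "common", "edge", and "shifted"; none of these names come from a specific tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledCase:
    """One annotated test example (hypothetical structure)."""
    input_text: str
    expected: str
    slice_name: str  # e.g. "common", "edge", "shifted"


def slice_coverage(cases, required_slices, min_per_slice=1):
    """Return the required slices that have fewer than min_per_slice cases."""
    counts = Counter(case.slice_name for case in cases)
    return {
        name: counts.get(name, 0)
        for name in required_slices
        if counts.get(name, 0) < min_per_slice
    }
```

An empty result means every required slice is represented; a non-empty one lists the gaps to fill before evaluation starts.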

Tip: Annotate data with expected outcomes to enable fast, repeatable offline evaluation.

- 3

Build a reproducible test harness

Create a framework that can reproduce tests across model versions, log results, and compare against baselines. Ensure seeds and environments are captured for deterministic runs when possible.
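
A very small harness along these lines might look like the sketch below. It assumes the model is exposed as a plain Python callable (`model_fn`) and that the config is a dict with hypothetical `seed` and `model_version` keys; `config_fingerprint`, `run_eval`, and `compare_to_baseline` are illustrative names, and the tolerance is a placeholder.

```python
import hashlib
import json
import random


def config_fingerprint(config: dict) -> str:
    """Stable short ID for a config, independent of key order."""
    payload = json.dumps(config, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def run_eval(model_fn, cases, config: dict) -> dict:
    """Run model_fn over (input, expected) pairs and log what was run."""
    # Seeds only Python's `random`; other libraries need their own seeding.
    random.seed(config["seed"])
    correct = sum(1 for x, expected in cases if model_fn(x) == expected)
    return {
        "config_id": config_fingerprint(config),
        "model_version": config["model_version"],
        "accuracy": correct / len(cases),
    }


def compare_to_baseline(result: dict, baseline: dict, tolerance=0.01) -> bool:
    """True when accuracy has not dropped more than `tolerance` below baseline."""
    return result["accuracy"] >= baseline["accuracy"] - tolerance
```

Storing the fingerprint next to each result makes it possible to tell later whether two runs used identical settings.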

Tip: Version-control test configurations and seeds to ensure identical conditions in repeated runs.

- 4

Run offline evaluation with multiple metrics

Evaluate generalization using holdout data with metrics that reflect accuracy, calibration, robustness, and fairness. Use both aggregate and per-group analyses to detect disparities.
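
To make the metric suite concrete, here is a dependency-free sketch of three of the checks mentioned: precision/recall/F1, a binned expected calibration error, and per-group accuracy. It assumes binary labels, confidences in [0, 1], and equal-width bins; the function names are illustrative, and a production setup would more likely rely on an established metrics library.

```python
from collections import defaultdict


def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between confidence and accuracy per bin."""
    bins = defaultdict(list)
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for items in bins.values():
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += len(items) / total * abs(avg_conf - accuracy)
    return ece


def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each group label."""
    hits, counts = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        counts[g] += 1
        hits[g] += int(t == p)
    return {g: hits[g] / counts[g] for g in counts}
```

Comparing the per-group values against the aggregate is what surfaces disparities that a single overall score would hide.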

Tip: Include calibration checks for probabilistic outputs to avoid overconfident predictions.

- 5

Perform online and staged testing

Use A/B tests or canary deployments to observe performance in live contexts. Start with a small subset of users before a broader rollout.
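
A common way to pick a small, stable user subset is hash-based bucketing, sketched below. This is an assumption about how routing could be done, not a description of any particular feature flag product; `in_canary`, the `salt` value, and the percentage are hypothetical.

```python
import hashlib


def in_canary(user_id: str, percent: float, salt: str = "canary-v1") -> bool:
    """Deterministically place roughly `percent` % of users in the canary."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in 0..9999
    return bucket < percent * 100


# Example routing decision for one request (model names are placeholders).
model_name = "candidate" if in_canary("user-123", percent=5) else "stable"
```

Because the assignment depends only on the user ID and salt, a user sees the same model on every request, and changing the salt reshuffles the groups for the next experiment.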

Tip: Monitor for drift and user impact continuously during the test window.

- 6

Analyze results and identify failure modes

Create a structured fault taxonomy mapping failures to causes (data, model, or integration). Prioritize fixes that reduce risk and improve user outcomes.
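
A fault taxonomy can start as simply as tagging each failure with a cause and a severity, then ranking causes by total severity. In the sketch below, the `cause` and `severity` fields, the 1 to 5 severity scale, and the function `rank_causes` are all illustrative assumptions.

```python
from collections import Counter

# The three cause categories named in this step.
CAUSES = ("data", "model", "integration")


def rank_causes(failures):
    """Rank causes by summed severity, highest first.

    Each failure is a dict with a 'cause' (one of CAUSES) and a
    'severity' score (hypothetical 1-5 scale).
    """
    totals = Counter()
    for failure in failures:
        if failure["cause"] not in CAUSES:
            raise ValueError(f"unknown cause: {failure['cause']!r}")
        totals[failure["cause"]] += failure["severity"]
    return totals.most_common()
```

Rejecting unknown causes keeps the taxonomy closed, so new categories are added deliberately rather than by typo.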

Tip: Automate root-cause analysis where possible to speed up iteration.

- 7

Document reproducibility and versioning

Record model version, data version, metrics, and insights. Ensure audits are possible for regulatory or safety requirements.
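
One possible way to make a results log tamper-evident is to chain each entry to the previous one with a hash, as sketched below. This in-memory version is only an illustration: `append_record` and `verify_log` are hypothetical helpers, and a real audit trail would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> list:
    """Append a record linked to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })
    return log


def verify_log(log: list) -> bool:
    """Recompute every hash; False if any entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier record changes its hash and breaks every link after it, which is what makes silent edits detectable.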

Tip: Maintain an immutable log of results to prove progress over time.

- 8

Plan safe deployment and ongoing monitoring

Define rollback plans, alerting thresholds, and continuous monitoring dashboards. Establish a cadence for retraining or updating models as new data arrives.

Tip: Automate drift alerts and have a ready-to-activate rollback if risk signals spike.
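
A drift alert needs a drift score. One widely used option for a single numeric feature is the population stability index (PSI), sketched here with equal-width bins derived from the baseline sample. The choice of PSI, the bin count, the `eps` floor, and the 0.25 alert threshold (a common rule of thumb, not a value from this guide) are all assumptions.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a baseline sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1  # clamp out-of-range
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, threshold=0.25) -> bool:
    """True when PSI exceeds the (placeholder) alert threshold."""
    return population_stability_index(expected, actual) > threshold
```

Running this on a schedule against a fixed baseline window gives a signal that can feed the rollback rule defined in step 1.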

Questions & Answers

What metrics matter most when testing AI models?

Metrics vary by use case but commonly include accuracy, precision, recall, F1, calibration, and fairness indicators. Pair these with diagnostic plots, such as confusion matrices and reliability diagrams, to understand failures across conditions.

How can I prevent data leakage during testing?

Ensure strict separation between training and test data, and avoid using leaked features or information that would not be available in production. Validate splits and data provenance.
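
A basic split check is to look for test examples that also appear in the training set. The sketch below assumes text examples and treats two strings as duplicates when they match after lowercasing and whitespace normalization; `fingerprint` and `find_leaked_examples` are illustrative names. It catches exact and near-exact copies only, not leaked features or paraphrases.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Hash of the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_leaked_examples(train_texts, test_texts):
    """Return test examples whose fingerprint also occurs in training data."""
    train_hashes = {fingerprint(t) for t in train_texts}
    return [t for t in test_texts if fingerprint(t) in train_hashes]
```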

How often should AI models be re-tested?

Retesting should be tied to data drift, model updates, and regulatory changes. Establish a regular cadence and trigger-based checks when data distributions shift noticeably.

What tools help with testing AI models?

Use an integrated test harness, metric dashboards, and experiment tracking to compare model versions. Include the capability to simulate real-world workloads and adversarial inputs.

How do you test for bias and fairness?

Incorporate subgroup analyses, disparate impact checks, and context-specific fairness metrics. Validate results across sensitive attributes and ensure remediation options exist.
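
As one example of a disparate impact check, the sketch below computes the rate of positive predictions per group and the ratio of the lowest rate to the highest. Ratios below 0.8 are often flagged under the "four-fifths" rule of thumb, but the right threshold and metric depend on the domain. The function names are illustrative.

```python
from collections import defaultdict


def selection_rates(predictions, groups, positive=1):
    """Share of positive predictions within each group."""
    selected, counts = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        counts[group] += 1
        selected[group] += int(pred == positive)
    return {g: selected[g] / counts[g] for g in counts}


def disparate_impact_ratio(predictions, groups, positive=1) -> float:
    """Lowest group selection rate divided by the highest.

    Returns 1.0 when no group receives any positive prediction,
    since no disparity can be measured in that case.
    """
    rates = selection_rates(predictions, groups, positive)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0
```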

Key Takeaways

- Define clear objectives and success metrics up front

- Use diverse data and robust offline/online evaluation

- Build a reproducible, versioned test harness

- Monitor drift and safety continuously post-deployment

- Document results for traceability and audits