Make AI Agent: A Practical Guide to Autonomous Agents

Learn how to make an AI agent with a step-by-step workflow, architecture patterns, safety practices, and deployment tips for reliable agentic AI in real-world workflows.

This guide shows you how to make an AI agent that can automate tasks, reason across multiple steps, and act on user instructions. You’ll learn the core concepts, the required tools, and a practical, step-by-step workflow to design, train, deploy, and monitor an autonomous agent. By the end you’ll have a repeatable blueprint for building agentic AI systems.

Foundations: What is an AI agent and how does it differ from a traditional bot

According to Ai Agent Ops, an AI agent is a software entity that uses perception, reasoning, and action to achieve goals in dynamic environments. Unlike scripted bots, an AI agent selects its own actions, learns from feedback, and coordinates multiple components to accomplish tasks. This is why teams across industries want to build AI agents that can handle complex workflows with minimal human intervention. At its core, an AI agent combines a perception layer (to gather data), a planning layer (to decide what to do next), and an action layer (to execute actions). When you set out to make an AI agent, you’re not just programming a single function; you’re composing an intelligent system that can adapt to changing inputs and constraints. The immediate benefits are speed, consistency, and scalability, but the real value comes from the agent’s ability to improve through experience. In practice, you’ll start with a well-defined objective, a bounded environment, and a simple interface for human feedback. Making an AI agent is less about writing a one-off script and more about building reusable, modular components that can be orchestrated to achieve business outcomes.
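
To make the three layers concrete, here is a minimal Python sketch of a perceive, plan, act loop. Everything in it (the `Agent` class, the ticket-triage task, the action names) is a hypothetical illustration rather than an API from Ai Agent Ops or any framework; a real planner would usually call a language model instead of a keyword check.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical three-layer agent: perceive -> plan -> act."""

    perceive: Callable[[str], dict]              # raw input -> normalized observation
    plan: Callable[[dict], list[str]]            # observation -> ordered action names
    actions: dict[str, Callable[[dict], str]]    # action name -> executor
    history: list[tuple[str, str]] = field(default_factory=list)

    def run(self, raw_input: str) -> list[str]:
        observation = self.perceive(raw_input)
        results = []
        for name in self.plan(observation):
            if name not in self.actions:
                # Unknown actions are skipped, never improvised.
                results.append(f"skipped:{name}")
                continue
            result = self.actions[name](observation)
            self.history.append((name, result))  # kept as feedback for later review
            results.append(result)
        return results


# Toy wiring for a ticket-triage task (all names are illustrative).
agent = Agent(
    perceive=lambda text: {"text": text.strip().lower()},
    plan=lambda obs: ["tag_urgent"] if "outage" in obs["text"] else ["tag_normal"],
    actions={
        "tag_urgent": lambda obs: "priority=urgent",
        "tag_normal": lambda obs: "priority=normal",
    },
)
print(agent.run("  Outage in EU region "))  # ['priority=urgent']
```

Keeping each layer as a separate callable means you can swap the keyword planner for a model-backed one without touching perception or execution.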

Brand note: Ai Agent Ops’s insights emphasize that the path to reliable agentic AI starts with clear goals, guardrails, and measurable success criteria.

Core capabilities to aim for in an agent

An effective AI agent must perceive its environment, reason about goals, and act to produce outcomes. Perception comes from data streams, sensors, or user prompts; reasoning involves planning steps, evaluating tradeoffs, and selecting safe actions; action executes commands, queries, or API calls. A robust agent also keeps a memory of past interactions so it can avoid repeating mistakes and adapt its strategies over time. To make an AI agent that scales, design for modularity: each capability should be a replaceable component with a clear interface. You’ll also want feedback loops so the agent improves from both successes and failures. In practice, specify success criteria and failure modes for each task so you can measure improvement as you expand the agent’s domain. Agentic behavior also benefits from disciplined boundaries: define what the agent can and cannot do, and keep humans in the loop for high-stakes decisions. The goal is a repeatable cycle: observe, plan, act, assess, and adjust.
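
The observe, plan, act, assess, adjust cycle can be sketched as a bounded loop. In this hypothetical example the "memory" is just a list of strategies that already failed, so the agent never retries a known mistake, and the step budget acts as a simple boundary on autonomy; returning `None` signals that a human should take over. The function and strategy names are illustrative assumptions.

```python
from typing import Callable, Optional


def run_cycle(
    observe: Callable[[], dict],
    strategies: list[str],
    act: Callable[[str, dict], dict],
    succeeded: Callable[[dict], bool],
    max_steps: int = 5,
) -> tuple[Optional[str], list[str]]:
    """Observe, plan, act, assess, adjust until success or the step budget runs out.

    Returns (winning strategy or None, strategies that failed).
    """
    failed: list[str] = []  # memory of past mistakes so they are not repeated
    for _ in range(max_steps):
        state = observe()                                         # observe
        candidates = [s for s in strategies if s not in failed]   # plan
        if not candidates:
            break  # nothing left to try; the caller should escalate
        choice = candidates[0]
        outcome = act(choice, state)                              # act
        if succeeded(outcome):                                    # assess
            return choice, failed
        failed.append(choice)                                     # adjust
    return None, failed


# Toy run: only "retry_with_backoff" fixes a flaky upstream API.
result = run_cycle(
    observe=lambda: {"status": 503},
    strategies=["retry_now", "retry_with_backoff", "switch_region"],
    act=lambda strategy, state: {"ok": strategy == "retry_with_backoff"},
    succeeded=lambda outcome: outcome["ok"],
)
print(result)  # ('retry_with_backoff', ['retry_now'])
```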

As you think about capabilities, consider extension points for future tasks, such as memory augmentation, external tool usage, and multi-agent coordination. This mindset supports the long-term goal of building scalable, flexible AI agent solutions.

Design patterns for agentic workflows

There are multiple architectural patterns for agentic AI, each with tradeoffs in latency, reliability, and complexity. A common starting point is a planner-driven loop: the agent assembles a plan from prompts, then executes actions one by one, re-planning when feedback indicates a better path. Another pattern is the goal-directed loop with a memory layer that recalls prior decisions, enabling contextual continuity across sessions. A guardrail pattern introduces safety checks at every decision point, such as input validation, risk scoring, or escalation to human operators for high-risk or ambiguous tasks. A modular approach divides responsibilities into perception, reasoning, action, and evaluation modules, so you can upgrade components independently. Whatever pattern you choose, design for observability: trace decisions, capture outcomes, and measure alignment with business objectives. Finally, consider orchestration patterns for multiple agents that collaborate on a larger task, using central coordination to avoid conflicting actions.
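
A guardrail can be as simple as a function that every proposed action must pass before execution. The sketch below assumes a hypothetical allowlist, a set of high-risk actions that always require human approval, and a `risk_score` supplied by some upstream scorer; all three are placeholders to replace with your own policy.

```python
from dataclasses import dataclass

# Hypothetical policy values; tune them for your own domain.
ALLOWED_ACTIONS = {"search_docs", "draft_reply", "refund"}
HIGH_RISK_ACTIONS = {"refund"}
RISK_THRESHOLD = 0.5


@dataclass
class Decision:
    verdict: str  # "execute", "escalate", or "reject"
    reason: str


def check_action(action: str, args: dict, risk_score: float) -> Decision:
    """Guardrail applied to every action the agent proposes, before it runs."""
    if action not in ALLOWED_ACTIONS:
        return Decision("reject", f"{action!r} is outside the agent's domain")
    # Basic input validation: string keys and no missing values.
    if not all(isinstance(k, str) and v is not None for k, v in args.items()):
        return Decision("reject", "malformed arguments")
    if action in HIGH_RISK_ACTIONS or risk_score >= RISK_THRESHOLD:
        return Decision("escalate", "needs human approval")
    return Decision("execute", "within policy")


print(check_action("draft_reply", {"ticket": "T-1"}, risk_score=0.1).verdict)  # execute
print(check_action("refund", {"amount": 40}, risk_score=0.1).verdict)          # escalate
print(check_action("delete_db", {}, risk_score=0.0).verdict)                   # reject
```

Returning a structured `Decision` with a reason, instead of a bare boolean, also gives you something meaningful to log for later review.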

To maximize reusability, adopt standardized interfaces and data formats, keeping prompts and tools abstracted from business logic. This makes it easier to reuse modules across projects and teams.
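
One way to standardize tool interfaces in Python is a `typing.Protocol` plus a small registry, so the agent’s business logic only knows tool names and payloads. The `Tool`, `WordCount`, and `ToolRegistry` names are illustrative assumptions, not part of any specific agent framework.

```python
from typing import Protocol


class Tool(Protocol):
    """Contract every tool exposes to the agent (illustrative)."""

    name: str

    def run(self, payload: dict) -> dict: ...


class WordCount:
    """A trivial tool that satisfies the Tool protocol structurally."""

    name = "word_count"

    def run(self, payload: dict) -> dict:
        return {"count": len(payload["text"].split())}


class ToolRegistry:
    """Keeps business logic decoupled from concrete tool implementations."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, payload: dict) -> dict:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].run(payload)


registry = ToolRegistry()
registry.register(WordCount())
print(registry.call("word_count", {"text": "make an AI agent"}))  # {'count': 4}
```

Because `Protocol` uses structural typing, any class with a `name` and a matching `run` method can be registered without inheriting from a shared base class.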

Architecture overview: prompts, modules, and data flows

A practical AI agent is a composition of prompts, plugins, and data flows that connect perception, reasoning, and action. At the input layer, user prompts or sensor data feed into a perception module that normalizes information. The reasoning module, often built around a planner or decision engine, interprets inputs, consults a memory store for context, and produces a concrete action plan. The action module executes tasks via APIs, databases, or local processes, then returns results to the user or system. Memory is essential for stateful conversations; it stores goals, constraints, and outcomes to inform future plans. Evaluation and feedback loops compare outcomes to expected results, enabling the agent to adjust its strategy over time. Data governance, privacy, and security considerations must be woven into every data flow, from ingestion to storage to output. For those who are just starting, keep a simple, well-scoped environment, and gradually increase complexity as you validate core capabilities.

Key takeaway: define precise interfaces for perception, reasoning, and action, and keep the data flows transparent and auditable so you can troubleshoot and improve your agent over time.
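
As an illustration of the memory store described in this section, the sketch below keeps goals, constraints, and a bounded history of outcomes, and hands the planner a small, task-specific slice of that context. It is an in-process, hypothetical example: the class name, the 100-entry limit, and the sample constraint are assumptions, and a production agent would back this with persistent storage.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Minimal in-process memory of goals, constraints, and outcomes."""

    goals: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    # Bounded: once 100 outcomes are stored, the oldest are dropped.
    outcomes: deque = field(default_factory=lambda: deque(maxlen=100))

    def record(self, task: str, action: str, success: bool) -> None:
        self.outcomes.append({"task": task, "action": action, "success": success})

    def context_for(self, task: str, limit: int = 3) -> dict:
        """Context for the planner: goals, constraints, and recent outcomes for this task."""
        recent = [o for o in self.outcomes if o["task"] == task][-limit:]
        return {"goals": self.goals, "constraints": self.constraints, "recent": recent}


memory = MemoryStore(goals=["resolve tickets"], constraints=["no refunds over $100"])
memory.record("T-1", "draft_reply", success=False)
memory.record("T-1", "search_docs", success=True)
print(memory.context_for("T-1")["recent"][-1]["action"])  # search_docs
```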

Tools and frameworks: pick the stack you need

Choosing the right tools is critical when you set out to make an AI agent. Start with a solid development environment and access to a capable AI service provider. Use version control and an experiment-tracking suite to capture configurations, prompts, and outcomes. Popular patterns include modular tooling for perception (data collectors), reasoning (planning and decision engines), and action (task executors, API clients). When integrating with external services, ensure you have secure credential management and rate limiting to prevent outages. For teams, adopt a minimal viable integration approach: implement a single core agent, then incrementally add plugins and tools as you validate performance. Document API contracts, data schemas, and decision criteria so future developers can reproduce results and contribute improvements. Test in a sandbox before production, and design with observability in mind: log decisions, track latency, and monitor success rates. As you scale, you’ll benefit from orchestration patterns that coordinate multiple agents and manage resource usage efficiently.
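
Two of the integration safeguards mentioned above, credential management and rate limiting, can be sketched with the standard library alone. The token bucket below is one common client-side rate-limiting technique (not necessarily what your provider enforces server-side), and `AGENT_API_KEY` is a hypothetical environment variable name.

```python
import os
import time


class TokenBucket:
    """Client-side rate limiter: `rate` calls per second on average, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.updated = time.monotonic()

    def try_acquire(self) -> bool:
        now = time.monotonic()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def load_api_key(var: str = "AGENT_API_KEY") -> str:
    """Read the credential from the environment instead of hard-coding it."""
    key = os.environ.get(var)
    if not key:
        raise RuntimeError(f"set {var} before starting the agent")
    return key


bucket = TokenBucket(rate=1.0, capacity=3)
# Called back to back, the burst capacity is used up after three calls.
print([bucket.try_acquire() for _ in range(5)])  # [True, True, True, False, False]
```

When `try_acquire` returns `False`, the agent can wait and retry, queue the request, or degrade gracefully instead of hammering the provider.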

Important note: use open standards and maintain backward compatibility when you upgrade components; this reduces risk as your AI agent evolves.

Step-by-step blueprint: from problem to deployable agent

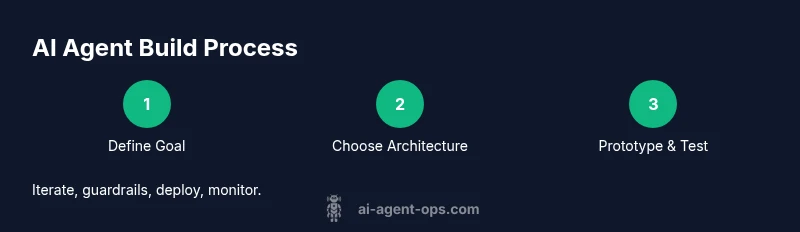

1) Define the objective and success metrics: articulate the business goal, user needs, and measurable outcomes.
2) Choose the architecture pattern: planner-driven, memory-augmented, or multi-agent orchestration.
3) Assemble the toolkit: select frameworks, APIs, and data sources, and set up a sandbox environment.
4) Build a minimal prototype: implement perception, a basic planner, and a safe action executor for a constrained task.
5) Test with real tasks: run end-to-end scenarios, capture failures, and refine prompts and decision logic.
6) Add guardrails and governance: implement safety checks, escalation paths, and privacy controls.
7) Deploy and monitor: move to production with telemetry, error handling, and continuous improvement loops.

Estimated time for a focused prototype and initial deployment: 6-12 hours, depending on scope and tooling familiarity. Pro tip: start with a single task domain to validate core capabilities before expanding. Avoid scope creep by anchoring to explicit success criteria and a clear deprecation plan for outdated components.
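
Explicit success criteria are easiest to enforce when they are written down as code and checked against every test run. This is a minimal sketch assuming each end-to-end run reports a success flag and a latency in seconds; the thresholds (90% success, 5 s p95 latency) are placeholders, and the 95th percentile uses the nearest-rank method.

```python
from dataclasses import dataclass


@dataclass
class SuccessCriteria:
    """Acceptance thresholds agreed before the build starts (placeholder values)."""

    min_success_rate: float = 0.9
    max_p95_latency_s: float = 5.0


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = -(-95 * len(ordered) // 100)  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def evaluate(runs: list[dict], criteria: SuccessCriteria) -> dict:
    """Each run is {'success': bool, 'latency_s': float} from an end-to-end test."""
    success_rate = sum(r["success"] for r in runs) / len(runs)
    latency = p95([r["latency_s"] for r in runs])
    return {
        "success_rate": success_rate,
        "p95_latency_s": latency,
        "passed": success_rate >= criteria.min_success_rate
        and latency <= criteria.max_p95_latency_s,
    }


# Toy data: nine quick successes and one slow failure.
runs = [{"success": True, "latency_s": 1.0 + i * 0.1} for i in range(9)]
runs.append({"success": False, "latency_s": 7.5})
report = evaluate(runs, SuccessCriteria())
print(report["success_rate"], report["p95_latency_s"], report["passed"])  # 0.9 7.5 False
```

In this toy data the success rate meets the bar, but a single slow failure pushes p95 latency over budget, so the gate fails; that is the kind of result that should block a rollout.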

Data strategy and training considerations

Data quality drives agent performance. When you build an AI agent, ensure you have clean, labeled data for perception modules and robust evaluation datasets for testing decision rules. Define data retention and privacy policies in advance, including how data is stored, who can access it, and how long it is retained. Use simulation or synthetic data to test edge cases that are rare in production but could cause unsafe behavior if not addressed. Establish a versioned dataset repository so you can reproduce results and compare model iterations over time. Track performance metrics such as accuracy, latency, success rate, and fault tolerance, and tie improvements to concrete changes in prompts, tools, or architecture. Finally, implement a continuous learning loop that uses feedback from outcomes to refine prompts and tool selections, while guarding against data drift and biased decisions.
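
A lightweight way to version datasets is to derive the version id from the content itself, so identical data always gets the same id and any relabeling produces a new one. This sketch hashes a canonical JSON encoding with SHA-256; the 12-character prefix and the record fields are illustrative choices.

```python
import hashlib
import json


def dataset_version(records: list[dict]) -> str:
    """Deterministic version id: SHA-256 of the canonical JSON encoding."""
    # sort_keys and fixed separators make the encoding independent of key order.
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


v1 = dataset_version([{"input": "reset password", "label": "account"}])
v2 = dataset_version([{"label": "account", "input": "reset password"}])  # same data, keys reordered
v3 = dataset_version([{"input": "reset password", "label": "billing"}])  # relabeled
print(v1 == v2, v1 == v3)  # True False
```

Storing this id next to every evaluation result lets you tell whether a metric changed because of the agent or because of the data.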

Safety, governance, and compliance

Agent safety is not optional. Incorporate guardrails at design time: limit capabilities to well-defined domains, enforce strict input validation, and implement escalation rules for high-risk actions. Maintain a robust audit trail that records decisions, prompts, tool invocations, and outcomes, enabling after-action reviews and regulatory audits. Establish governance roles and approval processes for deploying new capabilities or tool integrations. Ensure data privacy, consent, and compliance with applicable regulations, including data handling, retention, and user notification policies. Regularly review risk scenarios and update failure-mode analyses and remediation plans. Finally, foster a culture of transparency with stakeholders, explaining how the agent makes decisions and what safeguards are in place to protect users and data.
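
An audit trail should be append-only and easy to review. One option, sketched below, writes each decision as a JSON line and chains entries by hashing the previous line, which makes silent edits to earlier records detectable. The hash chaining is an added technique beyond what this guide prescribes, and the field names are hypothetical.

```python
import hashlib
import io
import json
import time


class AuditLog:
    """Append-only JSON-lines audit log; each entry stores the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self, sink) -> None:
        self.sink = sink  # any writable text stream, e.g. a file opened in append mode
        self.prev_hash = self.GENESIS

    def record(self, **event) -> str:
        # Chain fields are written last so an event cannot overwrite them.
        entry = {**event, "ts": time.time(), "prev": self.prev_hash}
        line = json.dumps(entry, sort_keys=True)
        self.sink.write(line + "\n")
        self.prev_hash = hashlib.sha256(line.encode("utf-8")).hexdigest()
        return self.prev_hash


def verify(lines: list[str]) -> bool:
    """Recompute the chain; an edited or deleted earlier line breaks it."""
    prev = AuditLog.GENESIS
    for line in lines:
        if json.loads(line)["prev"] != prev:
            return False
        prev = hashlib.sha256(line.encode("utf-8")).hexdigest()
    return True


buf = io.StringIO()
log = AuditLog(buf)
log.record(prompt="Summarize ticket T-1", tool="search_docs", decision="execute", outcome="ok")
log.record(prompt="Refund request", tool="refund", decision="escalate", outcome="pending")
lines = buf.getvalue().splitlines()
print(verify(lines))  # True
```

Because nothing follows the last entry, store the most recent hash somewhere separate if you also need to detect edits to the final line.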

Operational excellence: monitoring, maintenance, and evolution

Running an AI agent in production requires ongoing vigilance. Implement telemetry to monitor latency, success rates, tool reliability, and anomalies. Use alerting for critical failures or policy violations, and perform regular health checks of memory stores and external connections. Schedule periodic retraining or prompt-tuning cycles to adapt to changing user needs and environments, while preserving a stable baseline. Maintain comprehensive documentation of configurations, dependencies, and version histories so changes are reproducible. Plan for maintenance windows and graceful rollback strategies to minimize downtime. Finally, set an evolution roadmap that prioritizes security, governance, and user feedback, ensuring the AI agent remains aligned with business goals while improving performance over time.
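
The telemetry and alerting described above can start as a rolling window over recent calls. This hypothetical monitor tracks success rate and mean latency over the last `window` calls and returns alert messages when either crosses a threshold; the thresholds are placeholders, and in production you would forward these alerts to your observability stack.

```python
from collections import deque


class RollingMonitor:
    """Alerts when success rate or mean latency over the last `window` calls crosses a threshold."""

    def __init__(self, window: int = 50, min_success: float = 0.95, max_latency_s: float = 3.0):
        self.calls: deque = deque(maxlen=window)  # oldest calls drop off automatically
        self.min_success = min_success
        self.max_latency_s = max_latency_s

    def observe(self, success: bool, latency_s: float) -> list[str]:
        self.calls.append((success, latency_s))
        rate = sum(ok for ok, _ in self.calls) / len(self.calls)
        mean_latency = sum(lat for _, lat in self.calls) / len(self.calls)
        alerts = []
        if rate < self.min_success:
            alerts.append(f"success rate {rate:.0%} below {self.min_success:.0%}")
        if mean_latency > self.max_latency_s:
            alerts.append(f"mean latency {mean_latency:.1f}s above {self.max_latency_s}s")
        return alerts


monitor = RollingMonitor(window=4)
for ok, latency in [(True, 1.0), (True, 1.2), (False, 9.0)]:
    alerts = monitor.observe(ok, latency)
print(alerts)  # ['success rate 67% below 95%', 'mean latency 3.7s above 3.0s']
```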

Tools & Materials

- Development workstation (a modern computer with a stable OS and internet access)

- Access to an AI service provider API (API keys and rate limits documented)

- Data storage and version control (Git repository plus cloud storage for datasets)

- Experiment tracking (a tool to capture prompts, configs, and metrics)

- Sandbox testing environment (an isolated space to prototype safely)

- Security and privacy policies (data handling, access control, auditing)

- Documentation tooling (wikis or docs for team onboarding)

- Monitoring and observability stack (logs, dashboards, alerts)

- Sample task datasets (representative inputs for perception modules)

- Testing scenarios and edge cases (predefined tasks to validate guardrails)

Steps

Estimated time: 6-12 hours

Step 1: Define objective and success criteria

Articulate the business goal, the domain, and the measurable outcomes the agent must achieve. Specify constraints, risk tolerance, and escalation rules upfront.

Tip: Document acceptance criteria and failure modes to guide later testing.

Step 2: Choose architecture pattern

Select a planner-driven, memory-augmented, or multi-agent orchestration approach based on task complexity and required resilience.

Tip: Start simple; validate the core loop before introducing memory or multiple agents.

Step 3: Assemble toolkit and data sources

Set up the development environment, API access, data sources, and a sandbox. Create a minimal data schema for perception inputs and action outputs.

Tip: Use versioned prompts and seed data to ensure reproducibility.

Step 4: Build a minimal prototype

Implement basic perception, a simple planner, and a safe action executor for a constrained task with clear boundaries.

Tip: Prioritize deterministic behavior in the first prototype to ease debugging.

Step 5: Test with real tasks

Run end-to-end scenarios, collect outcomes, and identify failure modes. Iterate on prompts and tool usage based on the results.

Tip: Track latency and accuracy to guide improvements.

Step 6: Add guardrails and governance

Introduce safety checks, input validation, escalation paths, and privacy controls before broader deployment.

Tip: Escalation should be a last resort, not a first option.

Step 7: Deploy and monitor

Move to production with telemetry, alerting, and regular performance reviews. Plan incremental rollouts and rollback options.

Tip: Document changes and provide a clear rollback plan.

Questions & Answers

What is an AI agent and how is it different from a chatbot?

An AI agent automates actions in the real world by perceiving inputs, planning a course of action, and executing tasks, often across tools and APIs. A chatbot typically focuses on text-based conversations and does not autonomously act in external systems.

What are the essential components of an AI agent?

Perception, reasoning (planning), and action execution are the core components. A memory layer and governance overlays help with context and safety.

How long does it typically take to deploy a basic agent?

A basic prototype can be built in a day or two, with additional time needed for testing, guardrails, and monitoring before production.

What safety practices are essential for agentic AI?

Define boundaries, implement input validation, establish escalation paths, and conduct regular audits of decisions and outcomes.

What metrics should I track for an AI agent?

Track accuracy, latency, success rate, tool reliability, and frequency of escalations to gauge performance and guide improvements.

Can multiple agents collaborate on a single task?

Yes. Orchestrating multiple agents can handle complex tasks, but requires coordination, conflict resolution, and centralized governance.
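
Central coordination can be as simple as a lock table that lets only one agent act on a given resource at a time. The `Coordinator` below is a hypothetical in-process sketch using `threading.Lock`; agents spread across processes or machines would need a shared store instead.

```python
import threading


class Coordinator:
    """Central lock table: only one agent may act on a given resource at a time."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}  # resource id -> agent id
        self._lock = threading.Lock()       # guards _owners across threads

    def claim(self, agent: str, resource: str) -> bool:
        with self._lock:
            owner = self._owners.setdefault(resource, agent)
            return owner == agent

    def release(self, agent: str, resource: str) -> None:
        with self._lock:
            # Only the current owner can release; other agents are ignored.
            if self._owners.get(resource) == agent:
                del self._owners[resource]


coord = Coordinator()
print(coord.claim("billing_agent", "ticket-42"))  # True
print(coord.claim("support_agent", "ticket-42"))  # False: conflicting action blocked
coord.release("billing_agent", "ticket-42")
print(coord.claim("support_agent", "ticket-42"))  # True
```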

Key Takeaways

- Define clear objectives before building.

- Choose a solid architecture pattern and scale gradually.

- Guardrails and governance are essential from day one.

- Iterate with real tasks and measurable metrics.