AI Agent vs Model: A Practical Comparison for AI Workflows

A rigorous comparison of AI agents and AI models to help developers, product teams, and leaders choose the right approach for automation, orchestration, and governance.

AI agents and AI models serve distinct roles in automation: agents coordinate tasks across systems with goal-driven behavior, while models provide specific transformations or predictions. Understanding the AI agent vs model distinction helps teams design scalable, maintainable workflows that balance orchestration, accuracy, and latency. In practice, organizations blend both approaches, embedding models inside agents or calling models for individual components of a larger agent-driven loop.

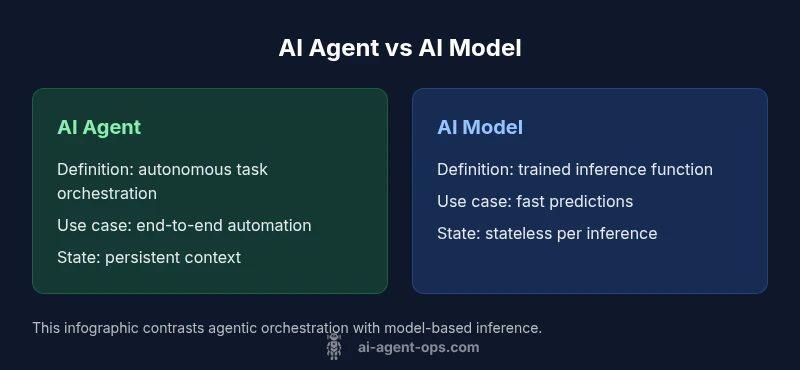

Understanding the core concepts: AI agent vs model

According to Ai Agent Ops, an AI agent is an autonomous unit that perceives, reasons, and acts to achieve defined goals, often coordinating across services and data sources. An AI model, by contrast, is a trained function that maps inputs to outputs. The distinction between an AI agent and an AI model is not just semantic: it shapes architecture, data flows, and governance. Agents embody behavior over time; models deliver stateless inferences. When you combine both, you get agentic AI: task orchestration driven by model-backed decisions.

Clarifying definitions: AI agents explained

An AI agent typically consists of perception modules (data connectors, sensors), a reasoning/planning layer (goal formulation, sequencing actions), a decision or control component (selecting subsequent steps), and a delivery surface (APIs, UI). Agents operate continuously or on event streams, respond to feedback, and adapt over time. AI models provide the underlying predictive capabilities (classification, generation, forecasting) used by agents or standalone systems. The AI agent vs model distinction becomes critical when you design end-to-end automation, because it determines which component owns each of these responsibilities.
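The four components described above can be sketched in a few lines. This is a minimal illustration, not a reference architecture: `classify_intent`, the `Agent` class, and the step names (`lookup_invoice`, `reply`) are all hypothetical, and the "model" is a keyword rule standing in for a trained classifier.

```python
from dataclasses import dataclass, field
from typing import Callable


def classify_intent(text: str) -> str:
    """Stateless 'model': a hypothetical keyword rule standing in for a trained classifier."""
    return "billing" if "invoice" in text.lower() else "general"


@dataclass
class Agent:
    model: Callable[[str], str]
    # Delivery surface: a plain list standing in for an API or UI.
    outbox: list = field(default_factory=list)

    def perceive(self, event: dict) -> str:
        # Perception: pull the relevant signal out of a raw event.
        return event.get("body", "")

    def plan(self, text: str) -> list:
        # Reasoning/planning: sequence actions based on a model-backed decision.
        intent = self.model(text)
        if intent == "billing":
            return ["lookup_invoice", "reply"]
        return ["reply"]

    def act(self, steps: list) -> None:
        # Control and delivery: execute each step and record it on the delivery surface.
        for step in steps:
            self.outbox.append(step)

    def handle(self, event: dict) -> list:
        steps = self.plan(self.perceive(event))
        self.act(steps)
        return steps
```

Note that the model is passed in as a plain callable, so it can be swapped or tested in isolation while the agent owns sequencing and delivery.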

The architecture difference: agentic AI vs model-centric AI

In agentic AI, control loops rely on state, memory, and orchestration logic. Agents keep track of context, update world models, and decide next actions autonomously. Model-centric AI emphasizes static or batched inference from a fixed model, often with lightweight wrappers. The key architectural choice is how you manage state: do you push intent through a persistent agent that learns from outcomes, or do you run stateless models that produce outputs for ad hoc tasks? Framing the choice as AI agent vs model helps you align the design with governance, observability, and fault-tolerance requirements.
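To make the state question concrete, the sketch below contrasts a stateless scoring function with an agent whose decision depends on what happened earlier. The names `score` and `StatefulAgent`, and the retry-then-escalate policy, are illustrative assumptions rather than a prescribed design.

```python
def score(text: str) -> float:
    """Stateless inference: the output depends only on the input."""
    return min(len(text) / 100.0, 1.0)


class StatefulAgent:
    """Keeps context between calls, so the same observation can lead to a different action."""

    def __init__(self, max_retries: int = 2) -> None:
        self.failures = {}  # task_id -> consecutive failure count
        self.max_retries = max_retries

    def next_action(self, task_id: str, last_succeeded: bool) -> str:
        if last_succeeded:
            # Success clears the remembered failures for this task.
            self.failures.pop(task_id, None)
            return "done"
        count = self.failures.get(task_id, 0) + 1
        self.failures[task_id] = count
        # Retry until the remembered failure count exceeds the limit, then escalate.
        return "retry" if count <= self.max_retries else "escalate"
```

Calling `next_action("t1", False)` three times yields `retry`, `retry`, then `escalate`: identical inputs, different outputs, because the agent carries memory that the model does not.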

Use-case scenarios: when to deploy an AI agent vs a plain model

For complex, multi-step workflows requiring cross-system coordination (e.g., customer support automation, supply chain orchestration), an AI agent shines. It can plan actions, monitor results, and adapt to changes. For narrowly scoped tasks with well-defined inputs and outputs (e.g., sentiment classification, image labeling, or text generation), AI models can offer high accuracy with lower orchestration overhead. The AI agent vs model decision hinges on task scope, required autonomy, and integration complexity.

Performance and latency considerations: how fast should decisions be?

Agents add overhead due to perception, planning, and action orchestration. If latency is critical for user experience, you may rely on lean models or hybrid patterns where an agent handles orchestration while models execute fast inferences. Conversely, for batch processing or long-running automation, agents can batch decisions, optimize resource use, and recover from errors. The AI agent vs model trade-off is frequently about latency versus autonomy and accuracy.
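One way to reason about this trade-off is to measure each path and route requests against a latency budget. The sketch below is only an illustration: `fast_model`, `agent_path`, and `route` are hypothetical names, and the 50 ms agent overhead is an assumed placeholder that you would replace with your own measurements.

```python
import time
from typing import Callable


def timed(fn: Callable[[], object]) -> tuple:
    """Return (result, elapsed_seconds) for a single call."""
    start = time.perf_counter()
    result = fn()
    return result, time.perf_counter() - start


def fast_model(text: str) -> str:
    # Stand-in for a single low-latency inference.
    return text.upper()


def agent_path(text: str) -> str:
    # The planning step adds work before the inference runs.
    plan = ["normalize", "infer"]
    for step in plan:
        if step == "normalize":
            text = text.strip()
        elif step == "infer":
            text = fast_model(text)
    return text


def route(text: str, latency_budget_ms: float, agent_cost_ms: float = 50.0) -> str:
    """Take the lean path when the budget cannot absorb the assumed agent overhead."""
    if latency_budget_ms < agent_cost_ms:
        return fast_model(text)
    return agent_path(text)
```

In practice the `agent_cost_ms` value would come from repeated `timed` measurements of the real agent path rather than a constant.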

Data and governance: data dependencies, privacy, and control

Both agents and models depend on data pipelines, but agents require persistent context and historical state to function effectively. Governance becomes more complex with agents because you must track decisions, actions, and outcomes across services. Data quality, access control, and privacy safeguards apply to both, yet agent-based designs magnify the need for observability, auditing, and rollback mechanisms. The AI agent vs model conversation is ultimately about who owns decisions and who is responsible for consequences.
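A small sketch of what auditing and rollback can look like for an agent follows. It assumes every action can be paired with a compensating `undo` callable, which is not always true in real systems; `AuditEntry` and `AuditedAgent` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class AuditEntry:
    timestamp: str
    action: str
    detail: dict
    undo: Callable[[], None]
    rolled_back: bool = False


@dataclass
class AuditedAgent:
    log: list = field(default_factory=list)

    def perform(self, action: str, detail: dict,
                do: Callable[[], None], undo: Callable[[], None]) -> None:
        # Execute the action, then record what was done and how to reverse it.
        do()
        stamp = datetime.now(timezone.utc).isoformat()
        self.log.append(AuditEntry(stamp, action, detail, undo))

    def rollback(self) -> int:
        """Undo recorded actions in reverse order; entries stay in the log for auditing."""
        undone = 0
        for entry in reversed(self.log):
            if not entry.rolled_back:
                entry.undo()
                entry.rolled_back = True
                undone += 1
        return undone
```

Keeping rolled-back entries in the log, rather than deleting them, preserves the decision trail that the governance discussion above calls for.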

Integration patterns and workflows: building end-to-end solutions

A common pattern is to embed predictive models inside an agent’s decision logic, enabling model-backed actions. Another approach is to orchestrate multiple models through agent-like controllers that coordinate tasks across microservices. When planning, consider event-driven vs batch architectures, API contracts, and error-handling strategies. The AI agent vs model debate often centers on how you orchestrate data flows and what SLAs you commit to.
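The second pattern, a controller coordinating several models with an explicit error-handling strategy, might look like the sketch below. The two toy models and the `needs_human_review` fallback label are assumptions made for illustration; real models would sit behind service calls with their own API contracts.

```python
from typing import Callable, Dict


def detect_language(text: str) -> str:
    # Toy stand-in for a language-identification model.
    return "es" if "hola" in text.lower() else "en"


def summarize(text: str) -> str:
    # Toy stand-in for a summarization model: returns the first sentence.
    if not text.strip():
        raise ValueError("empty input")
    return text.split(".")[0].strip()


def orchestrate(text: str, models: Dict[str, Callable[[str], str]],
                fallback: str = "needs_human_review") -> dict:
    """Run each model in turn; one failing model does not abort the others."""
    results = {}
    for name, model in models.items():
        try:
            results[name] = model(text)
        except Exception as exc:
            # Record the fallback value and keep the error for later inspection.
            results[name] = fallback
            results[f"{name}_error"] = str(exc)
    return results
```

Catching per-model failures keeps partial results available, at the cost of requiring downstream code to check for the fallback value.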

Practical decision framework: a 5-step approach to decide

Step 1: Define the task scope and desired autonomy.

Step 2: Map data sources, latency, and reliability requirements.

Step 3: Assess governance, auditing, and rollback needs.

Step 4: Prototype with a minimal agent or model component to validate feasibility.

Step 5: Measure operational metrics (throughput, error rate, time-to-value) and iterate. The AI agent vs model choice should optimize for maintainability and risk.
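As a rough aid for Steps 1 and 2, the checklist can be encoded as a small heuristic. The fields, the "two or more signals" threshold, and the three outcome labels below are assumptions chosen for illustration, not a validated rule; Steps 3 to 5 still require judgment and measurement.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    multi_step: bool        # Step 1: does the task span several actions?
    cross_system: bool      # Step 2: does it touch multiple services or data sources?
    needs_autonomy: bool    # Step 1: must it decide next steps without a human?
    latency_critical: bool  # Step 2: is response time user-facing?


def recommend(profile: TaskProfile) -> str:
    """Return 'agent', 'hybrid', or 'model' from a simple count of agent-favoring signals."""
    agent_signals = sum(
        [profile.multi_step, profile.cross_system, profile.needs_autonomy]
    )
    if agent_signals >= 2:
        # Orchestration is warranted; keep inference lean if latency matters.
        return "hybrid" if profile.latency_critical else "agent"
    return "model"
```

For example, a multi-step, cross-system task with a tight latency budget maps to `hybrid`, meaning an agent for orchestration with fast models on the hot path.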

Common pitfalls and anti-patterns

Avoid assuming that more autonomy always yields better outcomes without governance. Overly large agents can become brittle; under-guarded models can misbehave due to distribution shift. Ensure proper observability, versioning, and rollback plans. The AI agent vs model discussion should emphasize disciplined design, testability, and clear ownership.
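A guard against distribution shift can start very simply. The sketch below flags drift when the mean of recent inputs moves far from a reference sample, measured in reference standard deviations. This is a deliberately crude heuristic on a single numeric feature, and the threshold of 3.0 is an assumed default; production systems typically use richer statistical tests.

```python
from statistics import mean, stdev


def drifted(reference: list, recent: list, z_threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean sits far from the reference mean."""
    ref_mean = mean(reference)
    ref_std = stdev(reference)  # requires at least two reference values
    if ref_std == 0:
        # A constant reference: any change in the mean counts as drift.
        return mean(recent) != ref_mean
    return abs(mean(recent) - ref_mean) / ref_std > z_threshold
```

A check like this can gate model calls inside an agent, routing flagged inputs to review instead of acting on a prediction the model was not trained to make.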

Comparison

| Feature | AI Agent | AI Model |
|---|---|---|
| Definition | Autonomous, goal-oriented system that orchestrates actions across services | Trained function that maps inputs to outputs with learned patterns |
| Typical use case | End-to-end automation, multi-step workflows, decision loops | Standalone predictions, classifications, or content generation |
| State management | Maintains context and memory across tasks | Stateless or short-lived state per inference |
| Latency & throughput | Higher overhead due to perception/planning; optimized with hybrid patterns | Low-latency inferences suitable for fast tasks |
| Data dependencies | Requires diverse data sources and event streams | Relies on labeled data and task-specific training data |
| Governance & observability | Better end-to-end auditing but more complex to monitor | Easier to monitor in isolation but limited visibility across steps |
| Cost/maintenance | Higher upfront design; ongoing orchestration costs | Lower maintenance per model if well-tuned; model drift can occur |

Positives

- Enables end-to-end automation and adaptability

- Improves consistency across multi-step tasks

- Enhances reusability by composing models and actions

- Supports dynamic decision-making and feedback loops

What's Bad

- Higher architectural and operational complexity

- Requires robust governance, observability, and rollback

- Potential for brittle behavior if state is not managed well

- Initial development and integration effort can be substantial

AI agents offer broader automation capabilities; AI models excel at precise, fast predictions

Choose an AI agent when end-to-end orchestration and adaptability matter. Opt for an AI model for focused, high-speed inferences with simpler governance.

Questions & Answers

What is the core difference between an AI agent and an AI model?

An AI agent coordinates tasks across systems using perception, planning, and action. An AI model performs a specific inference, typically without ongoing orchestration.

AI agents coordinate actions across systems, while AI models perform a single inference task.

When should I use an AI agent instead of a model?

Use an agent for multi-step, autonomous workflows that require coordination and adaptability. Use a model for fast, well-defined inferences that don’t require ongoing orchestration.

Use an agent for end-to-end tasks; use a model for quick, specific inferences.

How do data requirements differ between agents and models?

Agents require streaming data and stateful context; models require labeled data for training and evaluation. Ensure data governance across both.

Agents need ongoing data streams and memory; models need quality training data.

What are common pitfalls when building AI agents?

Overly complex agents, insufficient observability, drift in decision making, and poor error handling are common issues. Plan for monitoring and rollback.

Beware complexity and poor monitoring when building agents.

Can AI agents use multiple models?

Yes. Agents often orchestrate multiple models, each handling a sub-task, to achieve a cohesive outcome.

Agents can coordinate several models to complete tasks.

What governance practices matter for AI agents?

Auditability, versioning, access control, and rollback capabilities are essential. Documentation helps accountability.

Governance means audits, versioning, and safe rollback.

Is there a price difference between the two approaches?

Costs depend on scale and architecture. Agents may incur orchestration overhead; models incur training, hosting, and drift management costs.

Costs vary with scale and complexity, but both add ongoing expenses.

What metrics matter for AI agents?

Observation coverage, decision latency, success rate of actions, and end-to-end task completion time are important metrics.

Track latency, success rates, and end-to-end outcomes.
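Three of the metrics named above (decision latency, action success rate, and end-to-end task completion time) can be computed from a simple action log. The `ActionRecord` fields below are an assumed logging schema, not a standard one, and observation coverage is omitted because it depends on how your perception layer is instrumented.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ActionRecord:
    task_id: str
    decision_latency_ms: float
    succeeded: bool
    started_at: float   # seconds since some common reference point
    finished_at: float


def summarize_metrics(records: list) -> dict:
    if not records:
        raise ValueError("no records to summarize")
    # Track the earliest start and latest finish per task for end-to-end time.
    spans = {}
    for r in records:
        start, end = spans.get(r.task_id, (r.started_at, r.finished_at))
        spans[r.task_id] = (min(start, r.started_at), max(end, r.finished_at))
    return {
        "mean_decision_latency_ms": mean(r.decision_latency_ms for r in records),
        "action_success_rate": sum(r.succeeded for r in records) / len(records),
        "mean_task_completion_s": mean(end - start for start, end in spans.values()),
    }
```

Grouping by `task_id` matters because a single task may involve several actions, and end-to-end time is measured across all of them.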

Key Takeaways

- Define task scope before choosing

- Prefer agents for cross-system workflows

- Use models for fast, isolated tasks

- Prioritize observability and governance

- Prototype early and measure impact