AI Agent vs AI Model: A Rigorous Comparison for AI Workflows

Compare AI agents and AI models to decide when autonomous agents outperform static models. Learn definitions, architectures, use cases, integration needs, governance, and ROI for smarter automation.

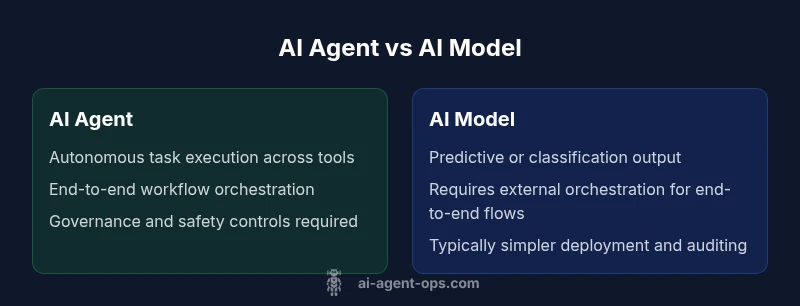

In the AI agent vs AI model comparison, autonomous agents are designed to act across tools, data sources, and environments to achieve explicit goals, adapting to changing circumstances. In contrast, AI models provide predictions or decisions within a defined scope and do not autonomously orchestrate tasks. This distinction drives how you implement automation, integrate systems, and manage lifecycle costs.

What is an AI agent? Defining agentic AI and how it differs from AI models

According to Ai Agent Ops, the AI agent vs AI model distinction centers on autonomy and orchestration. An AI agent is a software construct that can perceive its environment, decide on courses of action, and execute tasks by interacting with other systems, tools, or data sources. It is designed to operate across a workflow end-to-end, often adjusting behavior in real time as conditions change. By contrast, an AI model is typically a predictive or classification unit that processes inputs and returns outputs within a fixed scope. It does not autonomously manage tasks or orchestrate multi-step processes unless integrated with external controllers. Understanding this difference is fundamental when you design automation, because it determines how you structure data flows, governance, and maintenance. The AI agent approach emphasizes agentic AI capabilities (planning, adaptation, and proactive action), while traditional models emphasize the accuracy, speed, and reliability of a single prediction. This framing guides the rest of the discussion on when to deploy each approach.

Autonomy, decision making, and control planes

Autonomy in AI is a spectrum. A pure model relies on external orchestration to execute actions, while an agent includes a control loop: observe, reason, decide, act, and learn. Decision making in agents is goal-driven and often uses planning, constraint satisfaction, and tool use to achieve outcomes. In contrast, most AI models rely on prompts and routing logic, with limited ability to autonomously adjust plans in real time. The control plane for agents is an orchestration layer that coordinates subtasks across services, APIs, and data stores. For teams, this means assessing whether you need autonomous sequencing, cross-system coordination, and adaptive behavior, or if a static predictor with a strong prompt pipeline suffices. The choice shapes how you design data contracts, error handling, and rollback strategies.
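The difference between a predictor and a control loop can be made concrete with a minimal sketch. Everything here is a hypothetical stand-in rather than a real framework: `classify_ticket` plays the role of a model, and the `Agent` class, its tool names, and its "learn" step (which only records history) are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


def classify_ticket(text: str) -> str:
    """Toy stand-in for a model: one input, one prediction, no side effects."""
    return "refund" if "refund" in text.lower() else "other"


@dataclass
class Agent:
    """Toy agent wrapping an observe -> reason -> decide -> act -> learn loop."""
    tools: dict[str, Callable[[str], str]]
    history: list[tuple[str, str, str]] = field(default_factory=list)

    def step(self, observation: str) -> str:
        # Reason: delegate the prediction to the model.
        label = classify_ticket(observation)
        # Decide: map the prediction to a tool (hypothetical routing rule).
        tool_name = "issue_refund" if label == "refund" else "route_to_human"
        # Act: call the chosen tool, which is where side effects happen.
        result = self.tools[tool_name](observation)
        # Learn: this sketch only records what happened for later review.
        self.history.append((observation, tool_name, result))
        return result

    def run(self, observations: list[str]) -> list[str]:
        # Observe: process each incoming observation in order.
        return [self.step(o) for o in observations]


agent = Agent(tools={
    "issue_refund": lambda text: "refund issued",
    "route_to_human": lambda text: "escalated",
})
results = agent.run(["Please refund my order", "Where is my parcel?"])
print(results)  # ['refund issued', 'escalated']
```

The model never touches a tool; only the loop around it does. That separation is what the external orchestration of a pure model would otherwise have to supply.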

Architecture and components: what goes into an AI agent vs an AI model

An AI agent typically comprises perceptual input handlers, a reasoning/planning module, and an action executor that interacts with tools, databases, and APIs. It may include a monitoring layer for governance, safety checks, and audit trails. A model, conversely, is built around parameters, embeddings, and a forward pass that yields predictions. Deploying an agent often requires an orchestration backbone, tool adapters, and a lifecycle manager for updates and governance. In practice, you wire agents to executables, databases, and real-time streams, then oversee them with dashboards and policy controls. Models need versioning, retraining pipelines, and monitoring for drift, but they don’t natively manage multi-step tasks across ecosystems unless paired with other components.

Data, training, and maintenance implications

Models depend heavily on historical data and curated training pipelines. They require retraining when data distributions shift, and governance focuses on model cards, drift detection, and evaluation metrics. Agents rely on fresh data from connected sources and sometimes online learning signals, but their value comes from the ability to act, not just predict. Maintenance for agents includes tool inventory management, permission scoping, and incident response playbooks, as well as monitoring for tool failures and access revocation. Organizations should implement data contracts that specify what data a tool can read, how results are used, and how to audit actions. Ai Agent Ops highlights that governance becomes more complex with agents because autonomy expands the decision space and requires stronger controls across the lifecycle.
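One way to picture a data contract is as a small, explicit object that a tool adapter must consult before reading anything. The sketch below is an assumption-laden illustration: `DataContract`, `read_with_contract`, and the field names are hypothetical, and a production system would likely enforce this at the data-access layer rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract: which fields a tool may read, and why."""
    tool: str
    readable_fields: frozenset[str]
    purpose: str


def read_with_contract(contract: DataContract, record: dict, audit_log: list) -> dict:
    """Return only the fields the contract allows and log the access."""
    allowed = {k: v for k, v in record.items() if k in contract.readable_fields}
    denied = sorted(set(record) - contract.readable_fields)
    # The audit entry stores field names only, never the values themselves.
    audit_log.append({
        "tool": contract.tool,
        "purpose": contract.purpose,
        "read": sorted(allowed),
        "denied": denied,
    })
    return allowed


contract = DataContract(
    tool="crm_lookup",
    readable_fields=frozenset({"name", "plan"}),
    purpose="support triage",
)
audit_log: list = []
customer = {"name": "Ada", "plan": "pro", "card_number": "0000"}
visible = read_with_contract(contract, customer, audit_log)
```

Here `visible` contains only `name` and `plan`, and the audit log records that `card_number` was requested but withheld.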

Integration patterns and deployment considerations

Deploying AI agents typically involves an orchestration layer, microservices, and secure connectors to data sources and tools. You’ll want clearly defined interfaces, rate limiting, and robust observability to understand agent behavior. AI models can be deployed as standalone services behind APIs; they are often cheaper to scale but require external orchestration to achieve end-to-end flows. A practical pattern is to couple a robust agent with specialized models for sub-tasks, creating a hybrid architecture that balances autonomy with predictability. When choosing a deployment, consider the environment (cloud vs edge), latency requirements, and governance constraints. Plan for integration testing, safety checks, and rollback capabilities to minimize risk in production.
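Rate limiting at the tool boundary is one of the simpler controls to sketch. The wrapper below is a hypothetical illustration (the `RateLimitedTool` name and its parameters are assumptions, not part of any agent framework). It uses a sliding window over call timestamps and an injectable clock so the behavior can be tested without sleeping.

```python
import time
from collections import deque
from typing import Any, Callable


class RateLimitedTool:
    """Wrap a tool so an agent cannot call it more than max_calls per window."""

    def __init__(self, fn: Callable[..., Any], max_calls: int,
                 per_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.fn = fn
        self.max_calls = max_calls
        self.per_seconds = per_seconds
        self.clock = clock
        self.calls: deque = deque()  # timestamps of accepted calls
        self.rejected = 0            # simple counter for observability

    def __call__(self, *args: Any, **kwargs: Any) -> Any:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.per_seconds:
            self.calls.popleft()
        if len(self.calls) >= self.max_calls:
            self.rejected += 1
            raise RuntimeError("rate limit exceeded")
        self.calls.append(now)
        return self.fn(*args, **kwargs)


# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
search = RateLimitedTool(lambda q: q.upper(), max_calls=2, per_seconds=1.0,
                         clock=lambda: fake_now[0])
first = search("a")
second = search("b")
```

A third call at the same instant would raise `RuntimeError` and increment `search.rejected`, which gives a dashboard a concrete signal when an agent starts looping on a tool.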

Governance, risk, and security considerations

Agent-based systems introduce governance complexity because they act across boundaries and environments. Organizations must define policies for access control, tool usage, and data privacy, plus explainability and auditability of autonomous decisions. Security considerations include safeguarding tool interfaces, monitoring for anomalous behaviors, and ensuring that agents cannot exfiltrate data or cause cascading failures. It’s essential to implement escalation paths, human-in-the-loop options for critical decisions, and incident response playbooks. Ai Agent Ops emphasizes building a defensible security posture around agent orchestration, including regular audits and scenario testing to uncover edge cases.
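As a rough illustration of escalation paths and human-in-the-loop checks, the following sketch gates every proposed tool call through a policy object. `ActionGate`, its verdict strings, and the tool names are hypothetical; a real policy would normally live in a dedicated policy engine rather than inline code.

```python
from dataclasses import dataclass, field


@dataclass
class ActionGate:
    """Hypothetical policy gate: allow, escalate, or deny each proposed tool call."""
    allowed_tools: set[str]
    critical_tools: set[str]
    audit_trail: list[dict] = field(default_factory=list)

    def review(self, tool: str, approved_by_human: bool = False) -> str:
        if tool not in self.allowed_tools:
            verdict = "deny"        # tool is outside the agent's inventory
        elif tool in self.critical_tools and not approved_by_human:
            verdict = "escalate"    # critical action needs a human decision
        else:
            verdict = "allow"
        # Every decision is recorded, including denials.
        self.audit_trail.append({
            "tool": tool,
            "approved_by_human": approved_by_human,
            "verdict": verdict,
        })
        return verdict


gate = ActionGate(
    allowed_tools={"read_order", "issue_refund"},
    critical_tools={"issue_refund"},
)
verdicts = [
    gate.review("read_order"),
    gate.review("issue_refund"),
    gate.review("issue_refund", approved_by_human=True),
    gate.review("drop_table"),
]
print(verdicts)  # ['allow', 'escalate', 'allow', 'deny']
```

Logging denials alongside approvals matters for audits: the trail then shows what the agent attempted, not just what it was permitted to do.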

Cost, ROI, and total cost of ownership (TCO) considerations

Financial planning for AI agents versus models requires recognizing different cost drivers. Agents incur costs from tooling, data integration, orchestration infrastructure, and ongoing governance. Models typically involve upfront training costs and hosting expenses, with drift monitoring as a continuing expense. ROI calculations should balance improvements in automation speed, error reduction, and cross-system coordination against the governance overhead and potential risk. The exact price range depends on scale, data access, and tool complexity, but the emphasis should be on total value rather than upfront expense alone. Ai Agent Ops notes that ROI is maximized when agents reduce manual handoffs and accelerate decision cycles across workflows.
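The comparison above can be framed with a simple ROI ratio, (benefit - cost) / cost, computed over the cost drivers each approach carries. The sketch below assumes that simplified formula, and every figure in it is a made-up placeholder for illustration, not a benchmark or an estimate from the source.

```python
def simple_roi(annual_benefit: float, costs: dict[str, float]) -> float:
    """Return (benefit - total cost) / total cost for one year."""
    total_cost = sum(costs.values())
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (annual_benefit - total_cost) / total_cost


# Placeholder numbers only: replace with your own estimates.
agent_costs = {
    "tooling": 20_000,
    "data_integration": 30_000,
    "orchestration": 25_000,
    "governance": 25_000,
}
model_costs = {
    "training": 40_000,
    "hosting": 10_000,
    "drift_monitoring": 10_000,
}

agent_roi = simple_roi(annual_benefit=150_000, costs=agent_costs)
model_roi = simple_roi(annual_benefit=75_000, costs=model_costs)
print(f"agent ROI: {agent_roi:.0%}, model ROI: {model_roi:.0%}")
```

Keeping the cost drivers as named entries rather than a single total makes it easier to see which line item (often governance for agents) moves the result when assumptions change.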

Use-case alignment: when to pick each approach

For customer journeys requiring end-to-end automation across multiple systems, an AI agent often provides superior capabilities due to its orchestration and adaptability. For highly specialized tasks where accuracy and fast inference are paramount, standalone AI models can be cost-efficient and easier to audit. Hybrid patterns, which combine agents for orchestration with specialized models for sub-tasks, are common in complex environments. Practical decision criteria include required latency, regulatory constraints, and the degree of cross-system interaction.
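These criteria can be written down as an explicit, reviewable rule. The function below is only one possible encoding: the parameter names, the rule ordering, and the choice to recommend a hybrid under strict audit requirements are assumptions made for illustration, not thresholds stated in this article.

```python
def recommend_approach(systems_touched: int,
                       needs_adaptive_steps: bool,
                       strict_audit: bool) -> str:
    """Return 'model', 'agent', or 'hybrid' from three coarse criteria."""
    cross_system = systems_touched > 1
    if not cross_system and not needs_adaptive_steps:
        # Single system, fixed task: a standalone predictor is enough.
        return "model"
    if strict_audit:
        # Orchestration is needed, but keep auditable models for sub-tasks.
        return "hybrid"
    return "agent"


single_task = recommend_approach(1, needs_adaptive_steps=False, strict_audit=True)
regulated_flow = recommend_approach(4, needs_adaptive_steps=True, strict_audit=True)
open_flow = recommend_approach(3, needs_adaptive_steps=True, strict_audit=False)
print(single_task, regulated_flow, open_flow)  # model hybrid agent
```

Writing the rule as code forces the team to agree on what counts as "cross-system" or "strict audit" before the architecture decision is made.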

Real-world patterns and anti-patterns

Real-world deployments show a spectrum of patterns from lightweight automations with a single agent to large multi-agent ecosystems. Common success factors include clear governance, well-defined tool inventories, and strong observability. Anti-patterns include attempting to retrofit agents into poorly understood processes, underestimating data access needs, and neglecting escalation and human-in-the-loop safeguards. A balanced approach acknowledges both capabilities and limits, clamps down on scope creep, and uses phased rollouts with measurable KPIs. Ai Agent Ops observations highlight the importance of starting with a minimal viable agent that interfaces with a small, controlled set of tools before expanding.

Practical guidelines and next steps

To get started, map your automation goals to concrete agent tasks and select a safe set of tools for initial integration. Establish data contracts, audit trails, and escalation policies before enabling autonomous actions. Implement phased deployments, with monitoring dashboards that surface policy violations and performance drift. Finally, align incentives with governance outcomes and ensure stakeholder buy-in across engineering, product, and business leadership. The Ai Agent Ops team recommends documenting decision criteria, success metrics, and contingency plans to sustain long-term success.

Comparison

| Feature | AI Agent | AI Model |
|---|---|---|
| Autonomy | High: acts across tools/environments | Low: operates within a defined scope |
| Orchestration | Built-in orchestration across workflows | External orchestration required |
| Scope of tasks | End-to-end cross-system automation | Single-task predictions |
| Lifecycle management | Agent lifecycle: setup, monitoring, governance | Model lifecycle: retraining, versioning |
| Data dependencies | Access to dynamic data/tools | Relies on pipelines and static inputs |
| Deployment complexity | Higher: integration and tooling | Lower: plug-and-play model service |
| Governance/auditability | Explicit governance; comprehensive logs | Easier to audit; relies on model cards |

Positives

- Enables end-to-end automation across systems

- Improved responsiveness and adaptability

- Better alignment with business processes

- Reusability and composability of agents

- Scales with orchestration to handle complex workflows

Negatives

- Higher upfront integration and governance overhead

- Increased complexity and potential for misconfiguration

- More challenging debugging and auditing across tool interactions

- Security risks from multi-system access and tool misuse

AI agents generally offer stronger automation potential, but AI models are simpler and cheaper for focused tasks.

If your goals include end-to-end orchestration and adaptive action across tools, an AI agent is the better fit. For isolated, high-precision predictions with minimal orchestration, a classic AI model may suffice. A hybrid approach often delivers the best balance of automation, control, and ROI.

Questions & Answers

What is the core difference between an AI agent and an AI model?

The core difference is autonomy. An AI agent can perceive, decide, and act across tools and environments to achieve goals, while an AI model predicts or classifies within a defined scope and typically requires external orchestration for end-to-end tasks.

The main difference is autonomy: agents act across systems; models predict within a scope.

When should I use an AI agent instead of an AI model?

Use AI agents when you need autonomous orchestration, cross-system workflow execution, and dynamic decision making. Choose AI models for single-task predictions, lower complexity, and simpler governance.

Choose agents for orchestration; models for focused predictions.

Can models be used within agent-based workflows?

Yes. Models can be integrated as sub-components within agent-based workflows to handle specialized tasks, with the agent providing orchestration and oversight.

Models can be part of an agent’s workflow.

What governance and security considerations apply to AI agents?

Agents require robust governance: access control, tool inventory management, audit trails, and escalation policies. Security should cover interface protection, anomaly detection, and incident response planning.

Governance and security are critical for agents because they act across systems.

What are typical costs and ROI considerations?

Costs include tooling, data integration, orchestration infrastructure, and governance for agents; models incur training and hosting costs. ROI hinges on automation speed, error reduction, and cross-system efficiency balanced against governance overhead.

ROI depends on automation gains and governance costs.

What are common pitfalls to avoid with AI agents?

Avoid bloated tool inventories, unclear ownership, and insufficient escalation safeguards. Start with a small, controlled agent and expand iteratively while maintaining observability and policy compliance.

Start small, stay observable, and ensure escalation paths.

Key Takeaways

- Assess automation goals before choosing

- Prefer AI agents for cross-system orchestration

- Use AI models for focused, high-precision tasks

- Plan governance and safety early in agent programs

- Consider hybrid patterns to balance cost and capability