AI Agent vs AI Tool: A Practical Comparison

A rigorous 2026 guide comparing AI agent and AI tool approaches. Learn how autonomy, governance, and integration shape your automation strategy.

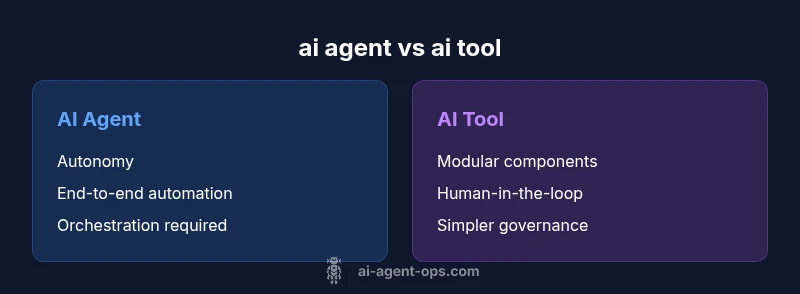

According to Ai Agent Ops, the choice between an AI agent and an AI tool hinges on your automation goals, control needs, and governance requirements. AI agents offer autonomous, end-to-end execution within a defined workflow, while AI tools provide modular capabilities that you orchestrate. For teams aiming to scale with guardrails, agents shine; for tightly scoped tasks with human oversight, tools often win on simplicity.

What an AI agent means in practice

An AI agent is an autonomous entity designed to perceive input, reason about goals, and take actions within a predefined environment. In the AI agent vs AI tool comparison, the agent is the side expected to operate without constant human direction, adapting its approach based on feedback and changing conditions. For developers and product teams, that autonomy brings added architectural responsibilities: state management, telemetry, decision logging, and clear escalation paths. In 2026, practitioners emphasize that a mature AI agent relies on robust orchestration, reliable data pipelines, and governance controls to prevent drift or unsafe actions. By contrast, an AI tool is best viewed as a component you invoke, configure, or chain with other components to accomplish a specific task. This distinction matters because it informs how you design workflows, how you measure performance, and how you allocate risk across your organization. The Ai Agent Ops team notes that understanding the difference is foundational for scalable automation, especially when the environment changes frequently and speed to value matters.
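The perceive, reason, act cycle described above can be sketched in a few lines. This is a toy illustration only, not a production agent: the `ThermostatAgent` class, its method names, and the simulated `room` dictionary are all hypothetical stand-ins chosen to keep the loop visible.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Toy agent (hypothetical): keeps a simulated room near a target temperature."""
    target: float
    log: list = field(default_factory=list)  # decision log for later audit

    def perceive(self, environment: dict) -> float:
        # Read the current state of the environment.
        return environment["temperature"]

    def decide(self, temperature: float) -> str:
        # Pick an action that moves the environment toward the goal.
        if temperature < self.target - 1:
            return "heat"
        if temperature > self.target + 1:
            return "cool"
        return "idle"

    def act(self, action: str, environment: dict) -> None:
        # Apply the chosen action to the (simulated) environment.
        delta = {"heat": 1.0, "cool": -1.0, "idle": 0.0}[action]
        environment["temperature"] += delta

    def run(self, environment: dict, max_steps: int = 10) -> None:
        # Loop without human input until the goal is met or the step budget runs out.
        for step in range(max_steps):
            temperature = self.perceive(environment)
            action = self.decide(temperature)
            self.log.append((step, temperature, action))
            if action == "idle":
                break
            self.act(action, environment)


room = {"temperature": 17.0}
agent = ThermostatAgent(target=21.0)
agent.run(room)
print(room["temperature"], agent.log)
```

The `max_steps` budget and the decision log are the smallest possible versions of the guardrails and telemetry mentioned above; a real agent would need far richer versions of both.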

What an AI tool means in practice

An AI tool is a capability or module that developers or operators use to perform a discrete function within a larger system. It might be a prompt-based model, a specialized API, or a service that executes a single task with well-defined inputs and outputs. Compared with an agent, a tool typically requires explicit prompts, configurations, and human oversight to proceed through each step. Tools excel in predictability, ease of integration, and simpler governance because they minimize autonomous decision-making. In many real-world architectures, tools are the building blocks of larger automation but do not themselves drive end-to-end workflows without orchestration. The Ai Agent Ops team highlights that choosing tools is often about controlling risk and keeping a tight feedback loop with human operators, particularly in high-stakes domains like finance or healthcare.
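As a rough sketch of "a single task with well-defined inputs and outputs", a tool can be modeled as a plain function with a typed result. The `classify_ticket` function and its keyword table are hypothetical; the keyword match is only a stand-in for whatever model or API call a real tool would wrap.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ClassificationResult:
    label: str
    matched_keyword: Optional[str]


def classify_ticket(text: str) -> ClassificationResult:
    """Hypothetical tool: route a support ticket by keyword.

    Stateless by design: the same input always yields the same output,
    and the function takes no action beyond returning a result.
    """
    keywords = {
        "refund": "billing",
        "invoice": "billing",
        "password": "account",
        "login": "account",
    }
    lowered = text.lower()
    for keyword, label in keywords.items():
        if keyword in lowered:
            return ClassificationResult(label, keyword)
    return ClassificationResult("general", None)


result = classify_ticket("I forgot my password")
print(result)
```

Because the caller decides when to invoke the function and what to do with the result, control stays with the human or the orchestrator, which is the property that makes tools easier to govern.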

Autonomy and control: the core divide

Autonomy is the defining difference between an AI agent and an AI tool. An AI agent operates with a degree of self-direction, continuously sensing context, selecting actions, and often learning from outcomes to improve future decisions. This can enable end-to-end processes, from data ingestion to action execution, without manual steps. A tool-based approach emphasizes user-controlled steps, where humans or higher-level orchestrators decide when and how to invoke each capability. Autonomy introduces opportunities for speed, scalability, and consistency, but also new risks, such as unintended consequences, compliance gaps, or drift from intent. Organizations should assess their tolerance for risk, need for real-time adaptation, and required visibility when choosing between these approaches.

Architectural considerations: orchestration vs direct calls

AI agents and AI tools call for different architectural patterns. Agents demand a stateful orchestration layer, reliable context propagation, and clear decision logs so operators can audit actions and recover from missteps. This often means building a central agent runner, message buses, and telemetry dashboards that reflect intent, options considered, and outcomes. In contrast, AI tools are frequently integrated as stateless services or plug-ins, connected through APIs or SDKs, with simpler routing logic. Tools emphasize modularity, easier updates, and shorter feedback loops. For teams already invested in microservices, agents can be layered on top of existing orchestration to achieve flow-level autonomy, while tools can slot into current pipelines with minimal disruption. The choice hinges on how much you value end-to-end automation versus incremental capability and governance overhead.
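To make the stateful versus stateless contrast concrete, here is a minimal sketch of a decision log that captures intent, options considered, and outcome, next to a stateless tool call. The names `DecisionRecord`, `AgentRunner`, and `word_count_tool` are illustrative assumptions, not part of any particular framework, and the JSON-lines export is just one plausible log format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DecisionRecord:
    step: int
    intent: str
    options_considered: list
    chosen: str
    outcome: str


@dataclass
class AgentRunner:
    """Stateful: carries context and a decision log across steps."""
    context: dict = field(default_factory=dict)
    decisions: list = field(default_factory=list)

    def record(self, intent: str, options: list, chosen: str, outcome: str) -> DecisionRecord:
        entry = DecisionRecord(len(self.decisions), intent, options, chosen, outcome)
        self.decisions.append(entry)
        self.context["last_outcome"] = outcome  # context propagates to the next step
        return entry

    def export_log(self) -> str:
        # One JSON object per line, a common shape for shipping to a log store.
        return "\n".join(json.dumps(asdict(d)) for d in self.decisions)


def word_count_tool(text: str) -> int:
    """Stateless: the result depends only on the input."""
    return len(text.split())


runner = AgentRunner()
runner.record("resolve disk alert", ["restart service", "clear cache"], "clear cache", "success")
runner.record("confirm recovery", ["re-check disk", "page on-call"], "re-check disk", "success")
print(runner.export_log())
```

Recording the options that were not chosen is what lets an operator reconstruct why the agent acted as it did, rather than only what it did.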

When to choose an AI agent

Use an AI agent when your goals include end-to-end automation, dynamic decision-making, and the ability to operate with limited human input. This is common in customer support orchestration, data processing pipelines, monitoring and remediation loops, and business process automation that spans multiple systems. Agents excel when environments are complex, inputs are noisy, and feedback can be used to improve behavior over time. Start with a small pilot in a controlled domain, then expand as you establish guardrails and measurable impact. In all cases, define escalation paths and ensure observability so you can intervene if drift occurs or safety constraints are breached. The Ai Agent Ops team recommends a staged rollout and ongoing governance to balance speed with risk.
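One simple way to express an escalation path is a routing check that runs before every agent action. The sketch below is an assumption-laden example: the action names, the `HIGH_RISK` set, and the 0.8 confidence floor are invented for illustration and would need to come from your own risk policy.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


# Hypothetical policy values; tune these to your own domain.
HIGH_RISK = {"issue_refund", "delete_account"}
CONFIDENCE_FLOOR = 0.8


def route(action: ProposedAction) -> str:
    """Return 'execute' or 'escalate' for a proposed action."""
    if action.name in HIGH_RISK:
        return "escalate"  # high-risk actions always go to a human
    if action.confidence < CONFIDENCE_FLOOR:
        return "escalate"  # low confidence falls back to a human
    return "execute"


for proposal in [
    ProposedAction("send_reply", 0.95),
    ProposedAction("send_reply", 0.50),
    ProposedAction("issue_refund", 0.99),
]:
    print(proposal.name, proposal.confidence, "->", route(proposal))
```

During a pilot, a team might start with a large `HIGH_RISK` set and shrink it as monitoring data builds trust in specific actions.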

When to choose an AI tool

Choose AI tools for clearly defined, bounded tasks where human oversight is acceptable or required. Tools are ideal for lightweight automation, data extraction, classification, or decision-support steps that fit neatly into existing processes. They work well when your priority is predictability, auditability, and straightforward integration with minimal risk exposure. A practical tactic is to assemble a toolkit of specialized tools and orchestrate them via a lightweight controller, reserving autonomous agents for the parts of the workflow that truly benefit from self-direction. In practice, many teams blend tools and agents, applying governance and escalation controls to keep outcomes aligned with business goals.
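The "toolkit plus lightweight controller" tactic might look like the following sketch, in which two small tools are chained by a controller that hands uncertain cases to a human. The functions `extract_amount`, `needs_review`, and `handle_request`, the 100.0 limit, and the `approve` callback are all hypothetical names introduced for this example.

```python
import re
from typing import Callable, Optional


def extract_amount(text: str) -> Optional[float]:
    """Tool 1: pull a dollar amount such as $25.00 out of free text."""
    match = re.search(r"\$(\d+(?:\.\d{2})?)", text)
    return float(match.group(1)) if match else None


def needs_review(amount: Optional[float], limit: float = 100.0) -> bool:
    """Tool 2: flag requests that are missing an amount or exceed the limit."""
    return amount is None or amount > limit


def handle_request(text: str, approve: Callable[[str], bool]) -> str:
    """Controller: fixed sequence of tool calls with a human-in-the-loop step."""
    amount = extract_amount(text)
    if needs_review(amount):
        # The human (or a review queue) makes the final call.
        return "approved" if approve(text) else "rejected"
    return "auto-approved"


# A stand-in reviewer that rejects everything, used only for demonstration.
def always_reject(_text: str) -> bool:
    return False


print(handle_request("Please refund $25.00", always_reject))
print(handle_request("Please refund $250.00", always_reject))
```

The controller contains no decision-making of its own beyond a fixed sequence, which is what keeps this pattern predictable and easy to audit.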

Comparison

| Feature | AI Agent | AI Tool |
|---|---|---|
| Autonomy | High autonomy within defined goals | Low autonomy; user-driven control |
| Decision-Making Scope | Full lifecycle decisions with environment perception | Human-in-the-loop or single-task decisions |
| Integration Complexity | Higher due to orchestration and state management | Lower; plug-and-play features |
| Data Requirements | Contextual data, telemetry, feedback loops | Input/output data; prompts or configs |
| Maintenance & Governance | Ongoing monitoring, governance, retraining | Software updates; limited monitoring |
| Best For | End-to-end automation with adaptability | Defined tasks with human oversight |

Positives

- Supports scalable automation with minimal human input over time

- Enables end-to-end workflow execution across systems

- Improves consistency through standardized decision logic and policies

- Accelerates experimentation and learning in complex processes

- Facilitates continuous improvement via feedback loops

Negatives

- Requires strong governance, risk controls, and escalation paths

- Can introduce complex debugging and monitoring needs

- Higher upfront integration effort and ongoing maintenance

AI agents are strongest for end-to-end automation; AI tools excel for modular, well-scoped tasks

If you need autonomous execution with guardrails, choose agents. For simple, high-control tasks, tools are typically the safer starting point.

Questions & Answers

What is the difference between an AI agent and an AI tool?

An AI agent operates autonomously within a workflow, perceiving context, making decisions, and acting to complete tasks. An AI tool is a component you invoke and control, typically performing a specific function under human direction or orchestration.

AI agents run tasks on their own within a workflow; AI tools are controlled by you and used for specific steps.

When should I use an AI agent vs an AI tool?

Use an AI agent when you need end-to-end automation with adaptive behavior. Choose an AI tool for well-defined tasks where human oversight and simple integration suffice.

Go with an agent for autonomous end-to-end work; pick a tool for clearly defined tasks.

What are common risks with AI agents?

Risks include misalignment with intent, safety concerns, drift over time, data privacy issues, and governance gaps. Mitigate with guardrails, testing, and robust monitoring.

Agents can drift from intent; guardrails and ongoing monitoring help keep them aligned.

What governance practices support AI agents?

Implement strict access controls, detailed audit trails, versioning, sandbox testing, escalation paths, and phased rollouts to manage risk and ensure accountability.

Set up audits, guardrails, and staged rollouts for agents.
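As a hedged illustration of two of these practices, access controls and audit trails, the sketch below wraps every action in an allow-list check and records each attempt. `GovernedExecutor` and the action names are hypothetical; a real deployment would back this with an identity system and durable log storage.

```python
from datetime import datetime, timezone
from typing import Callable


class GovernedExecutor:
    """Hypothetical wrapper: allow-list access control plus an audit trail."""

    def __init__(self, allowed_actions):
        self.allowed = set(allowed_actions)
        self.audit_trail = []

    def execute(self, actor: str, action: str, handler: Callable[[], object]):
        permitted = action in self.allowed
        # Log every attempt, including denied ones, before doing anything else.
        self.audit_trail.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"{actor} may not perform {action}")
        return handler()


executor = GovernedExecutor(allowed_actions={"read_ticket", "send_reply"})
reply = executor.execute("support-agent", "send_reply", lambda: "reply sent")

try:
    executor.execute("support-agent", "delete_account", lambda: "deleted")
except PermissionError as error:
    print("blocked:", error)

print(reply, len(executor.audit_trail))
```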

Are AI agents expensive to implement?

Costs vary by scope, data needs, and governance requirements. Plan for integration, ongoing maintenance, and infrastructure to support reliable automation.

Costs depend on scope; plan for ongoing maintenance and data needs.

How does security differ between agents and tools?

Agents can expand the attack surface because they take autonomous actions across systems; secure them with strong authentication, access controls, and continuous monitoring. Tools tend to carry more contained risk, but their safety still depends on how prompts and configurations are written and managed.

Agents raise broader security considerations; guard with strong controls and monitoring.

Key Takeaways

- Define scope first: decide how much autonomy vs control you need before selecting a path

- Invest in governance when adopting AI agents

- Blend both approaches where appropriate to balance speed and safety

- Pilot in small, bounded domains to learn and adjust

- Ensure observability and clear escalation for safe operation