AI Agent vs. AI Tool: A Practical Comparison for AI Workflows

A rigorous, objective comparison of AI agents versus AI tools for developers, product teams, and leaders. Explore autonomy, orchestration, integration, cost, risk, and governance to decide the right approach for your AI workflows.

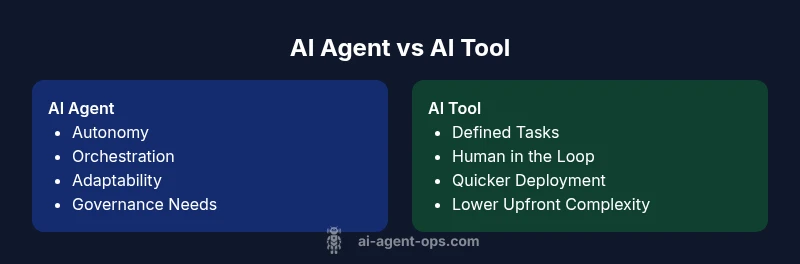

AI agents and AI tools differ primarily in autonomy and orchestration. An AI agent acts independently to achieve goals, while an AI tool executes explicit instructions. For most teams, the choice hinges on risk tolerance, integration scope, and desired speed. This article compares the two approaches to help you select the right one for your workflow.

Defining the Landscape: AI Agent vs AI Tool

In AI workflows, "AI agent" and "AI tool" name two archetypes for automating tasks. An AI agent operates with a degree of autonomy: it pursues goals, adapts to changing inputs, and orchestrates actions across systems. An AI tool, by contrast, is a more constrained component that executes the explicit instructions or prompts a user provides. For developers and product teams at Ai Agent Ops, the distinction isn't merely academic: it shapes architecture, governance, and risk management. This article builds a practical vocabulary around these terms and sets up a framework for evaluation. As teams consider adoption, concerns often center on control, explainability, and accountability. According to Ai Agent Ops, understanding the difference between an AI agent and a traditional tool is foundational for building agentic AI workflows. The discussion that follows translates abstract capabilities into concrete decisions about architecture, data governance, and risk controls. The guiding question remains: how much autonomy do we want, and what governance is required to stay aligned with business objectives? Below, we outline the lifecycle, success criteria, and common pitfalls of each path.

Core Differences: Autonomy, Decision-Making, and Orchestration

The core differences between an AI agent and an AI tool lie in autonomy, decision scope, and orchestration requirements. An AI agent is designed to pursue a goal autonomously, sensing, planning, and acting across multiple systems. It can adapt to new inputs, revise its plans, and keep operating without constant human prompts. An AI tool, on the other hand, is a software component that executes defined tasks when a human triggers it. It lacks intrinsic goal-directed behavior and relies on externally supplied prompts or workflows. For teams, this distinction becomes a lens for evaluating control, latency, and scalability. A key nuance is how each handles failure: an agent may replan after an error, while a tool usually requires re-invocation or manual intervention. Governance models differ as well; given their autonomy, agents demand stricter policies, audit trails, and safety checks. For Ai Agent Ops, the practical takeaway is that autonomy is a double-edged sword: it offers high potential for speed and coverage, but at the price of higher governance overhead. This section contrasts capability envelopes, risk envelopes, and governance implications to guide a decision aligned with organizational risk appetite and technical maturity.
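The failure-handling contrast can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not any agent framework's API: `run_tool` and `SimpleAgent` are hypothetical names, the "planner" simply tries known actions in order, and the goal counts as reached as soon as one action succeeds. The tool runs one explicit instruction and lets failures propagate to its caller; the agent observes a failed step and replans within a fixed budget.

```python
from dataclasses import dataclass, field
from typing import Callable


def run_tool(instruction: str, handler: Callable[[str], str]) -> str:
    """A 'tool': runs one explicit instruction; any failure propagates to the caller."""
    return handler(instruction)  # no retries, no replanning


@dataclass
class SimpleAgent:
    """A toy goal-directed agent (hypothetical) that acts, observes failures, and replans."""

    goal: str                                # descriptive only in this sketch
    actions: dict[str, Callable[[], bool]]   # action name -> True on success
    max_replans: int = 3
    log: list[str] = field(default_factory=list)

    def plan(self, failed: set[str]) -> list[str]:
        # Naive planner: every known action that has not failed yet, in order.
        return [name for name in self.actions if name not in failed]

    def run(self) -> bool:
        failed: set[str] = set()
        for attempt in range(self.max_replans + 1):
            steps = self.plan(failed)
            if not steps:
                return False  # nothing left to try: escalate to a human
            step = steps[0]   # act on the first step of the current plan
            ok = self.actions[step]()
            self.log.append(f"attempt {attempt}: {step} -> {'ok' if ok else 'failed'}")
            if ok:
                return True   # goal reached
            failed.add(step)  # observe the failure; the next iteration replans
        return False


if __name__ == "__main__":
    print(run_tool("quarterly report", str.upper))  # QUARTERLY REPORT
    agent = SimpleAgent(
        goal="refresh the sales dashboard",
        actions={"primary_api": lambda: False, "fallback_api": lambda: True},
    )
    print(agent.run())  # True
    print(agent.log)    # ['attempt 0: primary_api -> failed', 'attempt 1: fallback_api -> ok']
```

In practice the `plan` step is often model-driven; this sketch keeps it deterministic so the plan, act, observe, replan control flow is easy to follow.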

When to Use an AI Agent

Use an AI agent when your workflows demand ongoing decision-making, cross-system coordination, and adaptability. Scenarios include: end-to-end process automation where the agent orchestrates data collection, decisioning, and action across services; dynamic problem-solving that adjusts to changing inputs; and proactive monitoring that triggers remediation without waiting for a human cue. Agents excel in environments with high variability, where time-to-value matters and human-in-the-loop control is either impractical or too slow. When regulatory or safety requirements apply, build explicit guardrails, logging, and explainability into the agent's design. From Ai Agent Ops' perspective, agents shine in complex, multi-step processes where rigid prompts would become a bottleneck. The decision to deploy an agent should come with a governance plan that defines autonomy limits, escalation paths, and auditing rules. To maximize success, pair agents with robust telemetry and a policy engine that can constrain behavior when needed.
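A policy engine can start as a single check that every proposed action must pass before it runs. The sketch below is a minimal illustration, assuming hypothetical names (`Policy`, `check_action`, `Decision`) and three example rules that mirror the governance plan described above: an allowlist that sets capability boundaries, a per-run step budget as an autonomy limit, and a spend threshold above which the action is escalated to a human instead of executed.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset[str]  # explicit capability boundary
    max_steps: int                   # autonomy limit per run
    escalation_threshold: float      # amounts above this need human approval


def check_action(policy: Policy, action: str, steps_taken: int, amount: float = 0.0) -> Decision:
    """Evaluate a proposed agent action against the policy before it executes."""
    if action not in policy.allowed_actions:
        return Decision.DENY             # outside the agent's capabilities
    if steps_taken >= policy.max_steps:
        return Decision.ESCALATE         # budget exhausted: hand off to a human
    if amount > policy.escalation_threshold:
        return Decision.ESCALATE         # high-impact action: require approval
    return Decision.ALLOW


if __name__ == "__main__":
    policy = Policy(frozenset({"read_ticket", "issue_refund"}), max_steps=10, escalation_threshold=100.0)
    print(check_action(policy, "issue_refund", steps_taken=2, amount=40.0))   # Decision.ALLOW
    print(check_action(policy, "issue_refund", steps_taken=2, amount=500.0))  # Decision.ESCALATE
    print(check_action(policy, "delete_account", steps_taken=2))              # Decision.DENY
```

Returning an explicit `ESCALATE` outcome, rather than a plain yes or no, gives the escalation path its own branch that can be logged and routed.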

When to Use an AI Tool

AI tools are ideal for clearly defined, repeatable tasks that benefit from speed and human oversight. Common use cases include data transformation, report generation, basic decision automation, and assisting with creative tasks under explicit prompts. Tools are typically easier to deploy, require less governance, and have a shorter time-to-value. They work well in teams that want predictable outcomes, strict boundaries, and incremental automation. Tools can act as building blocks within larger workflows, serving as dependable subroutines that a human or an agent can orchestrate. For teams just starting with automation, tools offer a lower-risk entry point to learn, measure impact, and scale later with more advanced autonomous components. Ai Agent Ops emphasizes that tools remain essential as foundational components; autonomy should be introduced gradually as governance and confidence mature.
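Because tools are explicit subroutines without goals of their own, they compose naturally behind a registry that either a person or an agent can call by name. The `ToolRegistry` class and the two sample tools below are hypothetical illustrations; the point is that each tool does exactly what it is asked, and the caller decides the order.

```python
from typing import Any, Callable


class ToolRegistry:
    """Maps tool names to plain functions; callers decide when and what to invoke."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}

    def register(self, name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)

    def names(self) -> list[str]:
        return sorted(self._tools)


registry = ToolRegistry()


@registry.register("normalize_amounts")
def normalize_amounts(rows: list[dict[str, str]]) -> list[dict[str, float]]:
    # Rule-based data normalization: strip currency symbols and thousands separators.
    return [{"amount": float(r["amount"].replace("$", "").replace(",", ""))} for r in rows]


@registry.register("total")
def total(rows: list[dict[str, float]]) -> float:
    # Simple reporting step: sum the normalized amounts.
    return sum(r["amount"] for r in rows)


if __name__ == "__main__":
    # A human (or an agent) orchestrates the tools explicitly, one step at a time.
    clean = registry.call("normalize_amounts", rows=[{"amount": "$1,200"}, {"amount": "30.5"}])
    print(registry.call("total", rows=clean))  # 1230.5
```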

Integration and Governance Considerations

Both paths require thoughtful integration, data governance, and oversight processes, but the emphasis differs. AI agents demand comprehensive telemetry, explainability, and escalation policies. You'll want to implement auditable decision logs, safety nets, rate limits, and explicit capability boundaries. Data governance is critical: track provenance, data lineage, and access controls; ensure compliance with privacy and security standards; and define retention policies for agent activity. With AI tools, governance focuses on prompt governance, versioning of prompts and configurations, and change management. Integration considerations include API compatibility, event-driven architectures, and orchestration layers that can manage both autonomous and semi-autonomous components. Practical guidance from Ai Agent Ops suggests starting with a formal evaluation rubric that covers autonomy, data handling, monitoring, and ethical risk. The goal is to ensure that whichever path you choose remains auditable, controllable, and aligned with business objectives.
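Two of these controls, auditable decision logs and rate limits, are sketched below under simple assumptions: each decision is written as one JSON object per line (JSON Lines) to any text stream, and the limit is a sliding window over call timestamps. `AuditLog`, `RateLimiter`, and the sample actor and ticket ID are hypothetical; a production deployment would more likely write to an append-only store with access controls.

```python
import io
import json
import time
from collections import deque
from typing import Any, Optional, TextIO


class AuditLog:
    """Appends one JSON object per decision (JSON Lines format) to a text stream."""

    def __init__(self, stream: TextIO) -> None:
        self.stream = stream

    def record(self, actor: str, action: str, inputs: dict[str, Any],
               outcome: str, reason: str) -> dict[str, Any]:
        entry = {
            "ts": time.time(),  # wall-clock timestamp of the decision
            "actor": actor,     # which agent or tool acted
            "action": action,
            "inputs": inputs,   # provenance: what the decision was based on
            "outcome": outcome,
            "reason": reason,   # human-readable rationale for auditors
        }
        self.stream.write(json.dumps(entry) + "\n")
        return entry


class RateLimiter:
    """Allows at most `max_calls` actions in any sliding window of `window_s` seconds."""

    def __init__(self, max_calls: int, window_s: float) -> None:
        self.max_calls = max_calls
        self.window_s = window_s
        self._calls: deque = deque()  # timestamps of recently allowed calls

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        while self._calls and now - self._calls[0] >= self.window_s:
            self._calls.popleft()  # forget calls that have left the window
        if len(self._calls) >= self.max_calls:
            return False
        self._calls.append(now)
        return True


if __name__ == "__main__":
    buf = io.StringIO()  # stands in for an append-only log store
    log = AuditLog(buf)
    limiter = RateLimiter(max_calls=2, window_s=60.0)
    for t in (0.0, 1.0, 2.0):
        outcome = "executed" if limiter.allow(now=t) else "rate_limited"
        log.record("billing-agent", "issue_refund", {"ticket": "T-1"}, outcome, "demo run")
    print(buf.getvalue().count("rate_limited"))  # 1
```

Logging the rate-limited attempt as well as the executed ones keeps the audit trail complete: reviewers can see what the agent tried to do, not only what it did.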

Cost, Complexity, and ROI Considerations

Cost considerations for AI agents versus AI tools hinge on upfront investment, ongoing governance, and total cost of ownership (TCO). Agents typically require more planning, a policy framework, and stronger monitoring, which can raise initial costs but may drive greater long-term ROI through automation, scalability, and faster decision cycles. Tools usually have lower upfront costs and simpler deployment, delivering quicker wins but potentially higher long-term costs if they require extensive manual orchestration or frequent reconfiguration. ROI depends on the scale of automation, the complexity of tasks, and how well governance reduces risk. Ai Agent Ops notes that maturity in data pipelines, security practices, and operational resilience can significantly affect outcomes. A phased approach (start with a tool, evolve to an agent for high-impact tasks, and track costs throughout) helps manage risk while proving value over time.
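Cost tracking for a phased approach can start as simple arithmetic. The sketch below compares cumulative TCO for two options month by month and reports when the higher-upfront option stops being more expensive. `CostProfile`, `cumulative_tco`, and `break_even_month` are hypothetical helpers, the linear model (fixed upfront cost plus constant monthly costs) is a simplifying assumption, and every figure in the example is a made-up placeholder, not a benchmark.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CostProfile:
    upfront: float                # build, integration, policy framework
    monthly_run: float            # compute, licenses, monitoring
    monthly_manual_effort: float  # human orchestration and reconfiguration


def cumulative_tco(p: CostProfile, months: int) -> float:
    """Total cost of ownership after `months` months under a linear cost model."""
    return p.upfront + months * (p.monthly_run + p.monthly_manual_effort)


def break_even_month(a: CostProfile, b: CostProfile, horizon: int = 60) -> Optional[int]:
    """First month in which option `a` costs no more than option `b`, or None within the horizon."""
    for m in range(horizon + 1):
        if cumulative_tco(a, m) <= cumulative_tco(b, m):
            return m
    return None


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    agent = CostProfile(upfront=50_000, monthly_run=4_000, monthly_manual_effort=1_000)
    tool = CostProfile(upfront=10_000, monthly_run=1_500, monthly_manual_effort=6_000)
    print(break_even_month(agent, tool))  # 16
```

With these placeholder inputs, the tool starts 40,000 cheaper, but the agent's lower monthly cost closes the gap by 2,500 per month, so the two break even in month 16. Real decisions would also weigh risk reduction and time-to-value, which this cost-only model ignores.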

Real-World Scenarios and Case Patterns

Across industries, teams experiment with both approaches. In software development, AI agents coordinate build pipelines, test orchestration, and deployment gates, cutting cycle times when governance is strong. In customer service, AI tools handle triage prompts or summarize tickets; AI agents can take ownership of escalations and route issues adaptively. In finance, agents monitor risk signals and trigger remediation workflows, while tools perform rule-based data normalization and reporting. The key pattern is to map business objectives to the capability envelope: autonomy for end-to-end workflow ownership, or modular automation via tools stitched into orchestrated pipelines. Ai Agent Ops highlights that the most successful implementations clearly define decision rights, escalation protocols, and performance metrics from day one. Neutral evaluation benchmarks—such as latency, reliability, and interpretability—help compare outcomes across paths.
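Such benchmarks are easiest to compare when every pilot run, agent or tool, is recorded in the same shape. The sketch below assumes a hypothetical `RunResult` record and summarizes each path by median latency, reliability (success rate), and a crude interpretability proxy: the share of runs that recorded a rationale for their actions. The proxy and the sample runs are illustrative assumptions.

```python
import statistics
from dataclasses import dataclass


@dataclass
class RunResult:
    path: str            # "agent" or "tool"
    latency_s: float
    succeeded: bool
    has_rationale: bool  # did the run record why it acted? (interpretability proxy)


def summarize(runs: list[RunResult]) -> dict[str, dict[str, float]]:
    """Group runs by path and compute comparable benchmark metrics."""
    by_path: dict[str, list[RunResult]] = {}
    for r in runs:
        by_path.setdefault(r.path, []).append(r)
    summary: dict[str, dict[str, float]] = {}
    for path, rs in by_path.items():
        summary[path] = {
            "n": len(rs),
            "median_latency_s": statistics.median(r.latency_s for r in rs),
            "success_rate": sum(r.succeeded for r in rs) / len(rs),
            "rationale_rate": sum(r.has_rationale for r in rs) / len(rs),
        }
    return summary


if __name__ == "__main__":
    runs = [
        RunResult("agent", 2.0, True, True),
        RunResult("agent", 4.0, False, True),
        RunResult("tool", 1.0, True, False),
        RunResult("tool", 1.5, True, False),
    ]
    summary = summarize(runs)
    print(summary["agent"]["success_rate"])      # 0.5
    print(summary["tool"]["median_latency_s"])   # 1.25
```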

Practical Evaluation Checklist and Next Steps

To compare an AI agent and an AI tool in a concrete setting, use a practical evaluation checklist:

- Define the primary objective and success criteria (speed, accuracy, coverage).

- Map data flows, integration points, and required governance controls.

- Establish escalation paths and safety constraints for autonomous behavior.

- Benchmark a pilot using a well-scoped task, comparing time-to-value and maintainability.

- Instrument telemetry: logs, decisions, outcomes, and auditability.

- Plan a staged rollout with incremental autonomy and guardrails.

- Document lessons learned and adjust the governance model accordingly.
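The staged-rollout step can be encoded as explicit autonomy levels that gate what happens to each proposed action. The three levels, the promotion rule (move up one level after a hypothetical 50 consecutive clean runs), and the reset-on-incident rule below are assumptions for illustration, not a standard; `Autonomy`, `handle`, and `next_level` are hypothetical names.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 0     # agent proposes; a human executes
    APPROVE = 1     # agent executes after human approval
    AUTONOMOUS = 2  # agent executes; humans review logs afterward


def handle(level: Autonomy, action: str, human_approved: bool = False) -> str:
    """Decide what happens to a proposed action at the current autonomy level."""
    if level is Autonomy.SUGGEST:
        return f"suggested: {action}"
    if level is Autonomy.APPROVE and not human_approved:
        return f"awaiting approval: {action}"
    return f"executed: {action}"


def next_level(level: Autonomy, clean_runs: int, incident: bool = False,
               required: int = 50) -> Autonomy:
    """Promote one level after `required` clean runs; any incident resets to SUGGEST."""
    if incident:
        return Autonomy.SUGGEST  # guardrail: fall back to the most conservative level
    if clean_runs >= required and level < Autonomy.AUTONOMOUS:
        return Autonomy(level + 1)  # never skip a level
    return level


if __name__ == "__main__":
    level = Autonomy.SUGGEST
    print(handle(level, "close stale tickets"))  # suggested: close stale tickets
    level = next_level(level, clean_runs=50)
    print(level.name)  # APPROVE
    print(handle(level, "close stale tickets", human_approved=True))  # executed: close stale tickets
```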

From Ai Agent Ops’ perspective, a disciplined evaluation reduces the risk of over- or under-automation and helps teams align with strategic goals. A careful balance between autonomy and control often yields the best outcomes in complex environments.


The Future of AI Agents and Tools in Business

The landscape for AI agents and AI tools will continue to evolve as architecture, governance, and standards mature. Expect more robust agent orchestration platforms, improved safety guarantees, and standardized metrics for autonomy, interpretability, and accountability. As teams gain experience, hybrid approaches that combine autonomous agents with well-governed tools are likely to become the norm in many operating models. The trajectory points toward greater business impact from smarter, auditable automation that scales across functions while preserving human oversight where it matters. Ai Agent Ops anticipates a gradual increase in trust in autonomous workflows, supported by mature data governance and clear escalation policies.

Comparison

| Feature | AI agent | AI tool |
|---|---|---|
| Autonomy | High independence with goal-driven actions | Low independence; requires explicit instructions |
| Decision Scope | Broad, adaptive reasoning and planning | Narrow, task-specific prompts |
| Integration & Orchestration | Requires agent orchestration platforms; cross-system coordination | Fits as standalone subroutines within existing systems |
| Data Handling & Privacy | Proactive data governance, logging, and traceability | Reactive data processing with explicit prompts |
| Use Cases | End-to-end automation, dynamic problem solving | Automated data processing, report generation |
| Complexity & Setup | Higher complexity; governance overhead | Lower setup; simpler deployment |
| Cost & ROI | Potentially higher upfront cost with strong long-term ROI | Lower upfront cost; ROI depends on scale |
| Best For | Autonomous workflows with cross-domain impact | Well-defined tasks needing fast value |

Positives

- Potential for substantial time savings through end-to-end automation

- Greater consistency and decision quality when properly governed

- Enhanced scalability as tasks compound across systems

- Stronger alignment with strategic goals through autonomous orchestration

- Improved telemetry and auditability when designed with governance

What's Bad

- Higher upfront governance and architectural requirements

- Increased risk if guardrails fail or are poorly configured

- Potential for reduced transparency if the agent's decisions are opaque

- Dependency on sophisticated monitoring to prevent drift

AI agents are powerful for orchestration when governed well; AI tools offer rapid wins for defined tasks.

Choose an AI agent for complex, cross-system automation with strong governance. Choose an AI tool for fast, well-defined tasks with minimal setup. Hybrid approaches work best when autonomy is introduced gradually with solid monitoring.
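This verdict can be turned into a rough, explainable scoring rule for a specific workflow. The criteria, the 1-to-5 scale, the risk adjustment, and the 3.5 threshold in this sketch are hypothetical and would need calibrating by each team; `Workflow` and `recommend` are illustrative names. The rule recommends an agent only when the workflow needs autonomy and governance is ready for it, and otherwise falls back to a tool or a phased path.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    # Each criterion is scored 1 (low) to 5 (high) by the evaluating team.
    cross_system_steps: int   # how many systems must be coordinated
    input_variability: int    # how often inputs or conditions change
    governance_maturity: int  # telemetry, audit logs, escalation paths in place
    risk_of_error: int        # cost of a wrong autonomous action


def recommend(w: Workflow, threshold: float = 3.5) -> str:
    """Return 'agent', 'tool', or 'tool first, then agent' using a simple, explainable rule."""
    scores = (w.cross_system_steps, w.input_variability, w.governance_maturity, w.risk_of_error)
    if any(not 1 <= s <= 5 for s in scores):
        raise ValueError("scores must be between 1 and 5")
    need_for_autonomy = (w.cross_system_steps + w.input_variability) / 2
    # High-risk workflows demand more governance before autonomy is appropriate.
    readiness = w.governance_maturity - max(0, w.risk_of_error - 3)
    if need_for_autonomy < threshold:
        return "tool"
    if readiness >= threshold:
        return "agent"
    return "tool first, then agent"  # phased autonomy as governance matures


if __name__ == "__main__":
    print(recommend(Workflow(cross_system_steps=5, input_variability=4,
                             governance_maturity=2, risk_of_error=4)))  # tool first, then agent
```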

Questions & Answers

What is the key difference between an AI agent and an AI tool?

An AI agent operates autonomously to achieve goals by sensing, planning, and acting across systems. An AI tool executes predefined tasks under human instruction and does not autonomously pursue goals.

An AI agent acts on its own to reach a goal, while a tool follows the prompts you give it.

When should I choose an AI agent over a tool?

Choose an agent for complex, autonomous workflows that cross multiple systems, especially when speed and adaptability matter. Choose a tool for simple, well-defined tasks with clear prompts and strong human oversight.

If you need ongoing decisions across systems, pick an agent; for simple tasks, use a tool.

What governance considerations are essential for AI agents?

Establish safety guardrails, auditing and explainability, escalation paths, and data provenance policies. Ensure monitoring, logging, and incident response plans are in place before deployment.

Set guardrails, log everything, and have a plan to handle issues before you let an agent run wild.

What integration challenges should I expect?

Agents require orchestration layers, robust APIs, and reliable event streams. Tools may integrate more easily, but agents demand governance, versioning, and clear ownership of decisions.

Expect more moving parts with agents; plan for orchestration, APIs, and governance.

How do I evaluate cost and ROI?

Assess total cost of ownership, including governance, monitoring, and maintenance. Compare projected time-to-value improvements against the cost of building and sustaining autonomy.

Think about the long-term value and ongoing governance costs, not just initial setup.

What are common pitfalls when adopting AI agents?

Overestimating autonomy without guardrails, underestimating data governance needs, and ignoring explainability can lead to drift and risk. Start small and scale with controlled experiments.

Be careful with autonomy at first and keep a tight rein on governance.

Key Takeaways

- Start with clear objectives and governance

- Use agents for end-to-end workflows; tools for modular tasks

- Plan phased autonomy to balance risk and value

- Invest in telemetry and auditability from day one

- Leverage hybrid patterns for scalable automation