AI agent or bot: a practical comparison for smarter automation

Compare AI agents and traditional bots to understand autonomy, learning, governance, and ROI. This analytical guide helps developers and leaders select the right approach for smarter automation in 2026.

An AI agent or bot is a software entity that automates tasks. An autonomous AI agent uses models and data to decide which actions to take toward a goal, while a traditional bot follows fixed rules and does not learn or adapt. For teams pursuing scalable automation, AI agents enable dynamic problem solving, while simple bots excel at predictable, rule-based tasks.

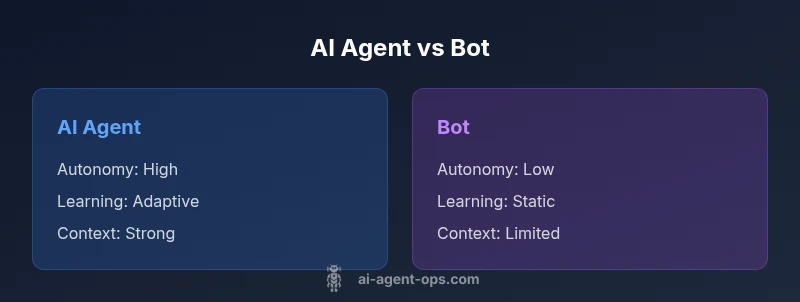

Defining AI agents and bots: scope and distinctions

In modern automation, a shared vocabulary for AI agents and bots is essential. An AI agent operates autonomously, using models, data streams, and feedback to pursue a defined objective. A traditional bot, in contrast, follows fixed rules and reacts to inputs without adapting its strategy. The distinction matters because it dictates architecture, governance, and performance metrics. According to Ai Agent Ops, the most impactful difference is autonomy: agents can decide what to do next, while bots execute predefined paths. Yet both types can share components like APIs, event buses, and logging, enabling consistent governance across your automation stack. The practical question remains: is the task well-defined and predictable, or does it require adaptation to new contexts? This framing helps align design decisions from day one.

Use cases and decision points across industries

AI agents excel where tasks involve uncertainty, data integration, and dynamic decision making. For example, in customer support, an AI agent can triage issues, route conversations, and trigger processes with minimal human input. In supply chain, agents optimize routing and inventory based on real-time signals. Traditional bots still shine in simple, rule-based workflows such as form filling or static alerts. When deciding between an AI agent and a bot, consider factors like data availability, the need for learning, and governance requirements. Across finance, healthcare, and e-commerce, the choice often hinges on the complexity of the task and the tolerance for errors. A hybrid approach, which combines agents for decision-heavy tasks with bots for deterministic steps, can offer a practical path forward.

Core capabilities: autonomy, learning, and reasoning

The core differences between an AI agent and a bot lie in autonomy, learning, and contextual reasoning. An AI agent can set goals, select actions, and revise its plan as outcomes are observed, leveraging models such as large language models and reinforcement signals. A bot tends to execute a fixed sequence of steps with limited or no learning. Context awareness, memory, and planning capabilities enable agents to operate with less human intervention, especially in multi-step workflows. For organizations, this translates into more resilient automation capable of handling edge cases and evolving requirements. However, with increased capability comes increased responsibility: monitoring, explainability, and guardrails become essential components of the system.
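The contrast between a fixed rule and an observe, re-plan, act loop can be sketched with a toy example. Everything here is hypothetical: the inventory scenario, the names `bot_step` and `agent_run`, and the numbers are invented for illustration, and the "planning" step is a simple arithmetic rule standing in for what would normally be a model call.

```python
def bot_step(stock: int) -> int:
    """Rule-based bot: orders a fixed quantity whenever stock is low."""
    return 10 if stock < 20 else 0


def agent_run(stock: int, target: int, demand: list[int]) -> list[int]:
    """Toy agent: observes each outcome and revises the next order.

    The plan is a simple "top up to target" rule; a real agent would
    typically consult a model at this point (assumption for illustration).
    """
    orders = []
    for sold in demand:
        stock -= sold                    # observe what actually happened
        order = max(target - stock, 0)   # re-plan toward the goal
        stock += order                   # act on the revised plan
        orders.append(order)
    return orders
```

The bot returns the same order size no matter how demand behaves, while the agent's orders follow the demand it observes at each step.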

How AI agents operate within enterprise architectures

Deploying AI agents requires a thoughtful architectural pattern. Typically, an orchestration layer coordinates tasks across services, while a policy engine enforces constraints and safety checks. Data pipelines feed models with fresh information, and feedback loops allow continual improvement. A traditional bot may sit directly in front of an API or UI, with minimal abstraction. Agents, by contrast, rely on modular components: a decision module, an action executor, and an observer to monitor outcomes. Security, access control, and auditability must be baked in from the start. In practice, teams design scalable ecosystems that treat agents as reusable services, deploy them inside sandboxed environments, and rely on centralized logging for governance.
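As a rough sketch of how these components might fit together, the following wires a decision module, a policy check, an action executor, and an observer into one orchestration function. All class and function names are assumptions made for illustration, and `decide` and `execute` are stubs standing in for a model call and downstream service calls.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyEngine:
    """Enforces constraints before any action is executed."""
    allowed_actions: set[str]

    def permits(self, action: str) -> bool:
        return action in self.allowed_actions


@dataclass
class Observer:
    """Records every decision and its outcome for later audit."""
    log: list[dict] = field(default_factory=list)

    def record(self, event: dict) -> None:
        self.log.append(event)


def decide(task: str) -> str:
    """Decision module stub; a real one would call a model."""
    return "refund" if "refund" in task else "reply"


def execute(action: str, task: str) -> str:
    """Action executor stub; a real one would call downstream services."""
    return f"{action} done for: {task}"


def orchestrate(task: str, policy: PolicyEngine, observer: Observer) -> str:
    """Coordinate decide -> policy check -> execute -> observe."""
    action = decide(task)
    if not policy.permits(action):
        observer.record({"task": task, "action": action, "status": "blocked"})
        return "escalated to human"
    result = execute(action, task)
    observer.record({"task": task, "action": action, "status": "ok"})
    return result
```

Keeping the policy check outside the decision module means constraints can be tightened or audited without touching the model-facing code.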

Side-by-side framework: AI agent vs. bot

When selecting an approach, frame the decision around task complexity, data readiness, and governance needs. If the task is straightforward, with stable inputs and outputs, a bot may be quicker to deploy and cheaper upfront. If the task involves ambiguity, data from multiple sources, or a need to adapt over time, an AI agent offers higher value through autonomous decision making. A well-designed framework includes evaluation metrics, clear ownership, and escalation paths. In many cases, teams implement a hybrid architecture: agents handle decision-making while bots perform deterministic steps. This combination captures the benefits of both approaches and supports scalable automation.
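One way to make this framing explicit is a small triage helper. This is an illustrative heuristic only, not an established scoring method: the `Task` fields and the `recommend` rules are assumptions that mirror the three factors named above.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    stable_inputs: bool      # inputs and outputs rarely change shape
    multi_source_data: bool  # needs data from several systems
    needs_adaptation: bool   # requirements are expected to shift over time


def recommend(task: Task) -> str:
    """Return 'bot', 'agent', or 'hybrid' using a hypothetical rule of thumb."""
    dynamic = task.multi_source_data or task.needs_adaptation
    if task.stable_inputs and not dynamic:
        return "bot"
    if not task.stable_inputs and dynamic:
        return "agent"
    return "hybrid"  # mixed signals: split decision steps from deterministic ones
```

For instance, `recommend(Task("form fill", True, False, False))` yields `"bot"`, while a task with stable inputs but several data sources falls into the hybrid bucket.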

Evaluation criteria: performance, safety, governance

Evaluating AI agents versus traditional bots should center on measurable outcomes. Key performance indicators include task completion rate, latency, accuracy, and resilience under noisy data. Safety considerations cover risk of undesired actions, data leakage, and model biases. Governance encompasses policy enforcement, user consent, auditability, and traceability of decisions. Many organizations adopt a tiered risk framework, categorizing tasks by criticality and requiring guardrails for high-stakes processes. Budget and total cost of ownership are also essential: agents may demand upfront investment in training and infrastructure but offer longer-term efficiency gains. A balanced assessment weighs short-term delivery against long-term capabilities.
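The KPIs listed above can be computed from simple run records. The sketch below assumes a hypothetical record shape (`completed`, `correct`, `latency_s`); real telemetry will look different, and accuracy is measured here over completed runs only, which is one possible definition among several.

```python
from statistics import mean


def summarize(runs: list[dict]) -> dict:
    """Compute completion rate, accuracy, and mean latency from run records.

    Each record is assumed to have the keys 'completed' (bool),
    'correct' (bool), and 'latency_s' (float).
    """
    if not runs:
        raise ValueError("no runs to summarize")
    completed = [r for r in runs if r["completed"]]
    accuracy = (
        sum(r["correct"] for r in completed) / len(completed) if completed else 0.0
    )
    return {
        "completion_rate": len(completed) / len(runs),
        "accuracy": accuracy,
        "mean_latency_s": mean(r["latency_s"] for r in runs),
    }
```

Running the same summary over an agent and a bot on the same task set gives a like-for-like baseline before weighing cost and governance.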

Implementation considerations: data, security, and compliance

Implementing AI agents calls for robust data governance. Organizations must identify data sources, ensure data quality, and manage access controls across teams. Security concerns include authentication, authorization, and encrypted communication between services. Compliance matters vary by industry: healthcare, finance, and government impose stricter controls on data handling and audit trails. When launching an AI agent or bot, plan for data lineage, model versioning, and incident response. The ability to roll back changes, monitor performance, and maintain logs is critical for maintaining trust and accountability in agent workflows.

ROI, cost, and total cost of ownership

Cost models for AI agents differ from traditional bots. Bots typically incur lower initial costs but may require more manual maintenance. AI agents often demand investment in computing resources, model access, and data pipelines, yet can deliver greater automation yields over time through reduced human labor and faster decision cycles. To assess ROI, estimate improvements in throughput, accuracy, and error reduction, then weigh these against the total cost of ownership, including monitoring, governance, and updates. A transparent cost model helps stakeholders understand payback horizons and ensures finance teams can justify the investment.
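The ROI comparison reduces to two pieces of arithmetic: return on investment as (benefit - cost) / cost, and payback time as the upfront cost divided by the net monthly gain. The function names are assumptions for illustration, and any figures passed in would be your own estimates.

```python
from typing import Optional


def roi(total_benefit: float, total_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(
    upfront_cost: float, monthly_benefit: float, monthly_running_cost: float
) -> Optional[float]:
    """Months until the upfront cost is recovered, or None if it never is."""
    net = monthly_benefit - monthly_running_cost
    if net <= 0:
        return None  # running costs eat the entire benefit
    return upfront_cost / net
```

With hypothetical figures, an upfront cost of 60,000, a monthly benefit of 8,000, and monthly running costs of 3,000 give a payback horizon of 12 months. Running costs should include monitoring, governance, and updates, as noted above.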

Best practices for governance and agent orchestration

Governance should be built into the design from day one. Define clear ownership, escalation procedures, and who can override agent actions. Implement separation of duties, robust authentication, and auditable logs. Agent orchestration should be modular, with reusable components and well-documented interfaces. Consider introducing an escalation path for high-stakes decisions and continuous monitoring to detect drift or anomalous behavior. Regular reviews and adaptive risk assessment help maintain alignment with business objectives and regulatory requirements.
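An escalation path for high-stakes decisions can be expressed as a small gate in front of the action executor. The tiers, the confidence score, and the 0.8 threshold below are all hypothetical values chosen for illustration; an organization would set its own.

```python
from enum import Enum


class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def requires_approval(tier: Tier, confidence: float, threshold: float = 0.8) -> bool:
    """Decide whether a human must approve before the agent acts.

    High-tier tasks always escalate; medium-tier tasks escalate when the
    agent's confidence is below the threshold; low-tier tasks never do.
    """
    if tier is Tier.HIGH:
        return True
    if tier is Tier.MEDIUM:
        return confidence < threshold
    return False
```

Because the gate is a pure function of tier and confidence, it is easy to log its inputs and outputs alongside each decision for audit.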

Common pitfalls and risk management

Common pitfalls include over-automation, insufficient monitoring, and hidden data biases that skew agent decisions. Rushed deployments without guardrails can lead to unintended actions or data leaks. To mitigate risk, implement staged rollouts, sandbox testing, and explicit fail-safe mechanisms. Security gaps often materialize in API exposure or weak access controls. Privacy concerns require minimization of data collection and strong encryption. Planning for incident response, recovery, and post-incident analysis is essential to maintain stakeholder trust in AI-driven automation.
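A fail-safe mechanism of the kind mentioned above can be as simple as a breaker that stops calling the agent after repeated failures and routes to a fallback instead. This is a minimal sketch with assumed names (`FailSafe`, `run`, `reset`), not a production circuit breaker; it has no timed recovery and is not thread-safe.

```python
from typing import Callable


class FailSafe:
    """Stops invoking the agent after too many consecutive failures."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0

    @property
    def tripped(self) -> bool:
        return self.failures >= self.max_failures

    def run(self, agent_action: Callable[[], str], fallback: Callable[[], str]) -> str:
        """Run the agent action, or the fallback if tripped or on error."""
        if self.tripped:
            return fallback()          # agent is not called at all
        try:
            result = agent_action()
        except Exception:
            self.failures += 1         # count the failure, then degrade safely
            return fallback()
        self.failures = 0              # any success resets the counter
        return result

    def reset(self) -> None:
        """Manual re-enable, e.g. after post-incident review."""
        self.failures = 0
```

The fallback might be a deterministic bot or a handoff to a human queue. Requiring a manual `reset` keeps a person in the loop before the agent resumes.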

The future of agentic AI and organizational impact

Looking ahead, agentic AI is likely to transform workflows by increasing autonomy, adaptability, and cross-system orchestration. As models evolve, organizations will rely on more sophisticated governance to manage safety and responsibility. The future is not a choice between agent and bot but a spectrum, with bots handling simple, repeatable tasks and agents orchestrating complex decisions across ecosystems. For teams, the implications include new skill requirements, updated operating models, and an emphasis on explainability and auditable decision-making.

Practical checklist to start a pilot

- Define the objective and success criteria for the pilot

- Inventory candidate tasks and data sources

- Establish governance, ownership, and escalation paths

- Build a minimum viable architecture with an agent and a small set of rules

- Implement logging, monitoring, and rollback mechanisms

- Run staged tests with synthetic and live data

- Measure outcomes against KPIs and adjust the plan accordingly

- Plan for a scale-out roadmap if results are favorable

Comparison

| Feature | AI Agent | Traditional Bot |
|---|---|---|
| Autonomy | High autonomy with goal-directed behavior | Low to moderate; rule-based |
| Learning capability | Adaptive, can improve with data | Static rules; limited learning |
| Context awareness | Contextual reasoning using models | Limited context handling |
| Integration | API-first orchestration; supports agent frameworks | Direct integrations; simpler |
| Security & governance | Requires governance, audit trails, explainability | Lower governance if simple |
| Cost and ROI | Higher upfront, potentially greater ROI over time | Lower upfront cost, smaller ROI |
| Maintainability | Requires monitoring and updating models | Easier to maintain if rules are stable |

Positives

- Faster automation and decision-making

- Greater adaptability with learning loops

- Improved scalability and reusability

- Better alignment with agentic AI workflows

- Enhanced integration with existing systems

Negatives

- Higher upfront complexity and cost

- Greater risk if not properly governed

- Requires data governance and security

- Possible opacity in decision processes

AI agents generally outperform traditional bots in dynamic environments, but require governance and investment.

Choose AI agents if you prioritize adaptability, learning, and cross-system orchestration. Choose traditional bots for simple, deterministic tasks with lower upfront costs.

Questions & Answers

What is an AI agent and how does it differ from a bot?

An AI agent is autonomous software capable of setting goals, choosing actions, and learning from outcomes. A traditional bot follows fixed rules with limited adaptability. The choice depends on task complexity and governance needs.

An AI agent is autonomous and learns from outcomes; a bot follows fixed rules. The right choice depends on your task complexity and governance needs.

When should I consider an AI agent over a bot for my project?

If your task involves changing conditions, data from multiple sources, or requires decision-making beyond fixed steps, an AI agent makes sense. For simple, repeatable tasks, a bot may be faster and cheaper to deploy.

If your task changes or involves data from many sources, go with an AI agent; otherwise a bot might be better for simplicity.

What are the primary costs and ROI considerations?

Costs include infrastructure, data pipelines, and model access for AI agents, plus ongoing governance. ROI depends on throughput gains, error reduction, and labor savings over time.

Expect higher upfront costs with AI agents, but potential for greater long-term savings as automation scales.

How can I ensure governance and safety when using AI agents?

Implement guardrails, audit trails, access controls, and escalation paths. Regular reviews help detect drift and bias, ensuring compliance with policies.

Guardrails, audits, and clear escalation keep AI agents safe and compliant.

What are practical steps to pilot an AI agent in a team?

Start with a small, non-critical workflow, define success metrics, and build an isolated environment. Iterate with data, measure outcomes, and scale based on results.

Begin with a small pilot in a safe environment, measure results, then expand.

What are common pitfalls in agent orchestration?

Over-automation, insufficient monitoring, and opaque decision-making are common. Mitigate with staged rollouts, clear ownership, and transparent logging.

Avoid over-automation and hidden decisions; use staged rollouts and clear ownership.

Key Takeaways

- AI agents enable autonomous decision making

- Bots suit simple, rule-based tasks

- Governance and risk management are essential

- Hybrid architectures often win in practice

- Pilot early with clear KPIs