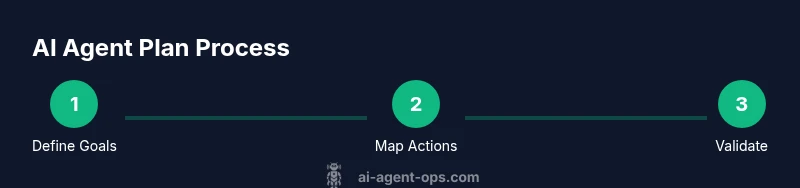

How to Create an AI Agent Plan: Step-by-Step Guide

Learn to craft a robust AI agent plan with goals, actions, data flows, and governance. This 1800-word how-to covers templates, examples, and best practices for developers, product teams, and business leaders.

To create an AI agent plan, define the objective, scope, and constraints; map the required actions, data flows, and decision logic; draft workflows, rules, and safety checks; then validate with scenarios and iterate. The guide provides a step-by-step framework, ready-to-use templates, and real-world examples.

What is an AI agent plan and why it matters

An AI agent plan is the structured blueprint that defines what an agent should achieve, how it should act, and how its behavior is governed within a broader automation workflow. In practice, it translates strategic goals into concrete tasks, decision rules, data requirements, and safety constraints. According to Ai Agent Ops, a well-constructed agent plan improves predictability, reduces risk, and accelerates iterative delivery across complex systems. By codifying goals, limits, and measurable outcomes, teams can align developers, product managers, and operators around a shared blueprint for agentic AI workflows.

The plan serves as a single source of truth that supports governance, compliance, and quick audits as the system evolves. Treat it as a living artifact: revisit, update, and validate it as conditions change. In real-world settings, teams use the plan to coordinate multiple agents, handle exceptions, and balance autonomy with oversight. It also supports practical patterns such as agent orchestration and modular workflows, so that growth in automation does not come at the expense of control. Across industries, the plan acts as a reference point for decisions, risk management, and collaboration among cross-functional teams.

Core components of a robust AI agent plan

A robust AI agent plan combines several core components that work together to deliver reliable automation. At the center is a clear objective: what problem the agent should solve and what success looks like. Next, define the scope and boundaries to prevent scope creep and keep the plan implementable. Constraints cover timing, privacy, data access, and governance rules. Specify success criteria and key metrics so teams can measure progress after deployment. Governance structures determine who approves changes and how risk is managed, while ownership clarifies who is responsible for updates and incident response.

Data requirements include sources, formats, quality, and retention policies. Decision logic defines how the agent chooses actions under different conditions, including fallback paths for uncertain situations. Finally, logging, observability, and audit trails let you trace decisions and outcomes. Align the plan with agent orchestration patterns and core agent capabilities so it scales as new agents are added. The result is a living document and a scalable blueprint that guides design, development, and operations, and supports collaboration between developers, operators, and business stakeholders.
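As one illustration of the logging and audit-trail component, the sketch below writes each agent decision as a single JSON line. The function name `audit_record`, the field names, and the sample values are assumptions made for this example; they are not a format prescribed by the guide.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def audit_record(
    agent: str,
    action: str,
    inputs: Dict[str, Any],
    outcome: str,
    rationale: str,
    now: Optional[datetime] = None,
) -> str:
    """Serialize one agent decision as a single JSON line (hypothetical schema)."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    entry = {
        "timestamp": timestamp,
        "agent": agent,
        "action": action,
        "inputs": inputs,
        "outcome": outcome,
        "rationale": rationale,
    }
    # sort_keys keeps the key order stable so records are easy to diff and search.
    return json.dumps(entry, sort_keys=True)


# Illustrative values only; a fixed timestamp makes the output reproducible.
line = audit_record(
    agent="ticket-triage",
    action="route_to_human",
    inputs={"ticket_id": "T-1", "confidence": 0.42},
    outcome="escalated",
    rationale="confidence below threshold",
    now=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
print(line)
```

Recording the rationale next to the outcome is what makes a record useful in an audit: a reviewer can see why the agent acted, in addition to what it did.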

Defining goals, scope, and constraints

Begin with a precise business objective: what outcome will you improve, and how will you know it's working? Frame it in measurable terms and attach a deadline. Then sketch the scope: which processes, data domains, and user journeys will the agent touch? Finally, set constraints: budget, compliance requirements, and safety guidelines. Hard constraints describe non-negotiables (for example, data handling rules), while soft constraints describe preferred approaches (such as latency targets). An AI agent plan should also specify autonomy boundaries: when may the agent act without human intervention, and when must it escalate? Document escalation paths, including who reviews exceptions and how quickly. This clarity reduces ambiguity and keeps teams aligned as changes occur. Well-defined goals and constraints also make testing more meaningful, because you can build scenarios that stress limits and reveal gaps early. Throughout, keep the wording concrete and testable so stakeholders across product, engineering, and security share the same definition of success.
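One way to keep goals and constraints concrete and testable is to record them as data. The sketch below is a minimal, assumed structure: the class names `Constraint` and `AgentPlan`, the `escalation_threshold` convention, and all sample values (including the deadline) are hypothetical and only illustrate the distinction between hard and soft constraints and an autonomy boundary.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Constraint:
    name: str
    hard: bool  # True = non-negotiable, False = preferred approach
    description: str


@dataclass
class AgentPlan:
    objective: str
    success_metric: str
    deadline: str  # ISO date string; placeholder value in the example below
    scope: List[str] = field(default_factory=list)
    constraints: List[Constraint] = field(default_factory=list)
    # Assumed convention: below this confidence the agent must hand off to a human.
    escalation_threshold: float = 0.8
    escalation_contact: str = "on-call reviewer"

    def hard_constraints(self) -> List[Constraint]:
        """Return only the non-negotiable constraints."""
        return [c for c in self.constraints if c.hard]

    def requires_escalation(self, confidence: float) -> bool:
        """Autonomy boundary: escalate when confidence is under the threshold."""
        return confidence < self.escalation_threshold


plan = AgentPlan(
    objective="Reduce first-response time for support tickets",
    success_metric="median first-response time in minutes",
    deadline="2025-12-31",
    scope=["support inbox", "ticket metadata"],
    constraints=[
        Constraint("pii_handling", True, "Never store raw customer PII in logs"),
        Constraint("latency", False, "Prefer responses within 5 seconds"),
    ],
)

print([c.name for c in plan.hard_constraints()])
print(plan.requires_escalation(0.5))
```

Because the plan is plain data, the same object can drive test scenarios: each hard constraint becomes a check that must never fail, and the threshold defines which cases should reach a human.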

Mapping actions, decisions, and data flows

Create a map of every action the agent must perform to reach the stated goals, and connect each action to its trigger conditions. Break complex tasks into modular actions that can be recombined across workflows. For each action, specify inputs (data sources), outputs (data products), and dependencies on other actions or agents. Draft decision logic as a simple policy or rule set: if condition A is true, perform action B; if risk rises, switch to a safe alternative or escalate. Document data flows: sources, formats, latency, privacy controls, and storage locations. Include data quality checks to prevent garbage in, garbage out. A clear data map reduces debugging time when external systems change and makes it easier to demonstrate compliance during audits. Finally, visualize the flow with diagrams to communicate the end-to-end path to non-technical stakeholders and to guide future enhancements.
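The action map and rule set described above can be sketched in a few lines. This example assumes Python 3.9+ (for the standard-library `graphlib` module); the action names, the 0.8 confidence cutoff, and the `decide` rules are hypothetical placeholders rather than recommended values.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Dict, List


@dataclass
class Action:
    name: str
    inputs: List[str]   # data sources the action reads
    outputs: List[str]  # data products the action writes
    depends_on: List[str] = field(default_factory=list)


# Hypothetical action map for a ticket-handling agent.
ACTIONS: Dict[str, Action] = {
    a.name: a
    for a in [
        Action("fetch_ticket", ["ticket_api"], ["raw_ticket"]),
        Action("classify", ["raw_ticket"], ["category", "confidence"], ["fetch_ticket"]),
        Action("draft_reply", ["raw_ticket", "category"], ["reply_text"], ["classify"]),
    ]
}


def execution_order(actions: Dict[str, Action]) -> List[str]:
    """Order actions so that every dependency runs before its dependents."""
    for action in actions.values():
        for dep in action.depends_on:
            if dep not in actions:
                raise KeyError(f"{action.name} depends on unknown action {dep}")
    graph = {name: set(a.depends_on) for name, a in actions.items()}
    return list(TopologicalSorter(graph).static_order())


def decide(confidence: float, risk: str) -> str:
    """Simple rule set: escalate on high risk, act on high confidence, else fall back."""
    if risk == "high":
        return "escalate"
    if confidence >= 0.8:
        return "auto_reply"
    return "safe_fallback"


print(execution_order(ACTIONS))
print(decide(0.9, "low"), decide(0.9, "high"), decide(0.3, "low"))
```

Keeping `decide` separate from the action definitions means the rules can change without touching how actions are implemented or ordered.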

Designing workflows, policies, and safety checks

Design end-to-end workflows that connect actions into complete tasks. Define stages, branching logic, and parallel paths to maximize throughput while preserving correctness. Establish policies for rate limits, error handling, and retry logic: specify when to retry, for how long, and when to escalate. Safety checks include data minimization, consent requirements, and access controls; these guardrails help ensure user trust and regulatory compliance. Build escalation paths for failed tasks or service outages, including human-in-the-loop checkpoints when necessary. Annotate decision points with their rationale to accelerate training and audits. Document how the plan handles bias, fairness, and privacy risks, and describe countermeasures. The strongest AI agent plans embed tamper resistance and anomaly detection, with a monitoring strategy that flags deviations early. As you refine the plan, reuse templates to reduce duplication while preserving required safeguards. Finally, prepare for deployment by outlining rollout steps and rollback procedures.
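A retry policy with an escalation path might look like the following sketch. The helper `run_with_retry`, the `EscalationRequired` exception, and the default attempt count and delays are assumptions for illustration; real limits depend on the services involved.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


class EscalationRequired(Exception):
    """Raised when retries are exhausted and a human should take over."""


def run_with_retry(
    task: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying with exponential backoff, then escalate on final failure."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:  # a real policy would catch narrower error types
            last_error = exc
            if attempt < max_attempts:
                # Delay doubles each time: base_delay, 2 * base_delay, ...
                sleep(base_delay * 2 ** (attempt - 1))
    raise EscalationRequired(f"gave up after {max_attempts} attempts") from last_error


# Demo: a task that fails twice, then succeeds. Delays are recorded instead of slept.
calls = {"n": 0}
delays: List[float] = []


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("temporary outage")
    return "ok"


result = run_with_retry(flaky, sleep=delays.append)
print(result, delays)
```

Passing `sleep` as a parameter is a small design choice that lets tests run instantly while production code keeps the real delay.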

Validation, testing, and scenario planning

Validation is about proving your plan works under real-world conditions before production. Create test scenarios that exercise expected conditions and edge cases, and record results against predefined success criteria. Use synthetic data to protect real users when possible, and simulate latency spikes, data outages, and partial inputs. Perform both unit tests on individual actions and end-to-end tests on complete workflows. Practice human-in-the-loop interventions to verify escalation rules and response times. Repeat governance and compliance checks during testing to confirm that approvals, versioning, and audit trails are in place. Track qualitative and quantitative indicators such as reliability, visibility, and decision transparency; this guide does not prescribe exact target values. Ai Agent Ops's approach favors incremental pilots: start small, learn, and expand while maintaining safety and governance. Maintain a changelog of decisions, risks, and observed outcomes to support future audits and improvements.
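A scenario library can start as a list of synthetic inputs paired with expected outcomes. In the sketch below, `Scenario`, `run_scenarios`, and the stand-in policy `agent_under_test` are hypothetical names, and the payloads are synthetic; the point is only to show normal, edge, and partial-input cases checked against predefined expectations.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Scenario:
    name: str
    payload: Dict[str, Any]  # synthetic input, never real user data
    expected: str            # predefined success criterion


def agent_under_test(payload: Dict[str, Any]) -> str:
    """Stand-in policy: escalate on missing text or low confidence."""
    if not payload.get("text"):
        return "escalate"
    if payload.get("confidence", 0.0) >= 0.8:
        return "auto_reply"
    return "escalate"


def run_scenarios(
    agent: Callable[[Dict[str, Any]], str], scenarios: List[Scenario]
) -> Dict[str, bool]:
    """Return a pass/fail flag per scenario name."""
    return {s.name: agent(s.payload) == s.expected for s in scenarios}


SCENARIOS = [
    Scenario("normal", {"text": "reset my password", "confidence": 0.95}, "auto_reply"),
    Scenario("low_confidence", {"text": "it broke again", "confidence": 0.3}, "escalate"),
    Scenario("partial_input", {"confidence": 0.99}, "escalate"),
]

results = run_scenarios(agent_under_test, SCENARIOS)
failed = [name for name, passed in results.items() if not passed]
print(results)
print("failed:", failed)
```

The per-scenario results can be appended to the changelog so that each plan revision carries a record of what was tested and what passed.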

Templates, starter kit, and practical example

This section provides templates and a starter kit to accelerate work on your AI agent plan. Templates cover goals, constraints, data maps, decision rules, and workflow diagrams; use them to jumpstart planning sessions. The starter kit includes a minimal plan you can adapt in a few hours and a concrete example: an automation agent that triages support tickets, routes complex issues to humans, and auto-sends responses for simple inquiries. The example demonstrates how business goals translate into actions, data needs, decision logic, and governance steps. You can reuse the templates across domains such as procurement, IT operations, or customer analytics, adjusting for privacy and compliance as needed. The starter kit also features sample audit-ready documentation to help communicate risk and governance to stakeholders. Remember, an AI agent plan should remain a living artifact, updated as the environment changes and new agents emerge.
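The ticket-triage example can be reduced to a very small sketch. Everything here is an assumption for illustration: the `Ticket` and `Decision` types, the keyword matching, and the canned replies are placeholders, and a production agent would likely use a classifier in place of substring checks.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical canned replies for inquiries considered simple.
CANNED_REPLIES: Dict[str, str] = {
    "password reset": "You can reset your password from the login page.",
    "opening hours": "Support is available on weekdays during business hours.",
}


@dataclass
class Ticket:
    id: str
    text: str


@dataclass
class Decision:
    ticket_id: str
    route: str   # "auto_reply" or "human"
    reply: str   # empty when routed to a human
    reason: str  # rationale kept for audits


def triage(ticket: Ticket) -> Decision:
    """Auto-reply to known simple topics; route everything else to a human."""
    lowered = ticket.text.lower()
    for topic, reply in CANNED_REPLIES.items():
        if topic in lowered:
            return Decision(ticket.id, "auto_reply", reply, f"matched topic '{topic}'")
    return Decision(ticket.id, "human", "", "no simple topic matched")


simple = triage(Ticket("T-1", "I need a Password Reset link"))
complex_case = triage(Ticket("T-2", "Invoice totals differ between two exports"))
print(simple.route, "|", simple.reason)
print(complex_case.route, "|", complex_case.reason)
```

Defaulting to the human route when nothing matches keeps the agent conservative: new or ambiguous ticket types are escalated until someone adds an explicit rule for them.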

Next steps: from plan to production and beyond

To move from plan to production, align teams across product, engineering, data, security, and governance. Build a lightweight MVP of the AI agent plan and monitor early feedback to fine-tune goals and rules. Establish a regular cadence for revisiting objectives, constraints, and performance metrics, for example once per iteration cycle. Invest in tooling for agent orchestration, observability, and governance to maintain control while enabling automation at scale. Finally, implement a formal escalation and incident response framework so you can recover quickly from failures and preserve user trust. The journey from plan to production is iterative: it requires disciplined execution, continuous learning, and a commitment to safety and quality.

Tools & Materials

- Planning notebook or digital document (For goals, constraints, and decisions)

- Code environment (Python 3.9+ or Node.js) (Prototype agent logic and rules)

- Data map templates (Capture data sources, formats, and quality rules)

- Stakeholder interview checklist (Elicit goals, constraints, and risk tolerance)

- Scenario library (Predefined test cases and edge conditions)

- Diagrams/whiteboard tools (Visualize workflows and data flows)

Steps

Estimated time: 1-2 hours

1. Define goals and constraints

Identify the business objective the AI agent plan will address and translate it into measurable success criteria. Specify hard constraints (compliance, data handling) and soft preferences (latency targets, preferred architectures). Set the boundary for autonomy versus human-in-the-loop oversight.

Tip: Tie every goal to a concrete test case to prevent vague outcomes.

2. Set scope and ownership

Declare which processes, data domains, and user journeys the agent will touch. Assign owners for goals, data quality, and governance to ensure accountability across teams.

Tip: Create a RACI diagram to clarify responsibilities up front.

3. Map actions to data sources

List required actions and map each one to inputs, outputs, and dependencies. Document data formats, refresh rates, and privacy controls to avoid later mismatches.

Tip: Use modular actions that can be reused across different workflows.

4. Design decision logic

Develop a rule-based or policy-driven decision engine. Include fallback paths and escalation rules for uncertain scenarios.

Tip: Rigorously separate decision logic from action implementation for easier maintenance.

5. Draft end-to-end workflows

Chain actions into complete tasks with stages, branching, and parallel paths. Define retry and error handling strategies.

Tip: Document rationale at decision points to speed future audits.

6. Plan governance and safety checks

Embed privacy, bias mitigation, access controls, and tamper-resistance features. Outline monitoring and anomaly-detection strategies.

Tip: Involve security and compliance early to avoid costly retrofits.

7. Validate with scenarios

Create test scenarios that exercise both normal and edge conditions. Run unit and end-to-end tests with synthetic data when possible.

Tip: Maintain a changelog of decisions, risks, and test outcomes.

8. Prepare for production and iteration

Build a lightweight MVP, solicit feedback, and schedule regular plan reviews. Scale gradually while preserving governance.

Tip: Treat the AI agent plan as a living artifact that evolves with the business.

Questions & Answers

What is an AI agent plan?

An AI agent plan is a structured blueprint that defines goals, actions, data flows, and governance for agentic automation. It translates business objectives into testable rules and workflows.


Why do I need an AI agent plan?

A plan provides alignment across teams, improves governance, and enables measurable outcomes. It reduces rework by clarifying scope, data needs, and escalation paths.


What are the essential components of a plan?

Goals, scope, constraints, data requirements, decision logic, workflows, governance, and monitoring. These elements ensure the agent behaves predictably and safely.


How long does it take to create a plan?

Initial plans can take 1-2 hours for a starter version; full production-grade plans require more time for validation and governance.


What tools are best for building a plan?

Templates, planning notebooks, and code environments are commonly used. Prioritize tools that support collaboration, versioning, and audit trails.


How do you measure success after deployment?

Track reliability, latency, escalation effectiveness, and alignment with business goals. Use a rolling review cycle to update the plan.



Key Takeaways

- Define goals clearly and tie tests to success.

- Map actions and data flows for transparency.

- Validate with realistic scenarios before deployment.

- Document governance and escalation paths.

- Iterate and scale with a living AI agent plan.