AI Agent Workflow Automation: A Practical How-To

A thorough, step-by-step guide to designing, implementing, testing, and governing AI agent workflow automation across systems.

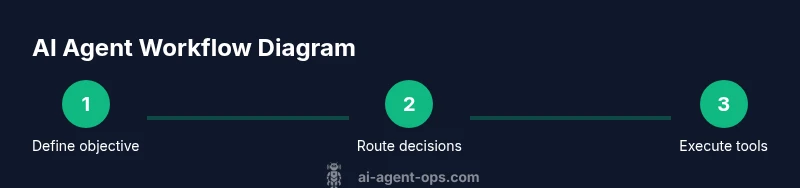

AI agent workflow automation lets you design, deploy, and monitor autonomous pipelines that orchestrate apps, data, and human input. This quick-start shows how to define objectives, select components, and implement guardrails. According to Ai Agent Ops, begin with a small, well-scoped use case and iterate toward greater scope with measurable success criteria.

Understanding AI agent workflow automation

AI agent workflow automation is a structured approach to building autonomous decision pipelines that coordinate multiple tools, data sources, and human-in-the-loop checkpoints. The goal is to reduce manual handoffs while preserving reliability, auditability, and safety. In practice, teams define objectives, map data flows, pick compatible agents and orchestration engines, and then implement guardrails that prevent unsafe actions. The Ai Agent Ops team emphasizes starting with a single, bounded use case to validate feasibility before expanding to multi-step workflows. Benefits include faster cycle times, more consistent decision-making, and improved traceability across actions and outcomes. The approach also supports experimentation and continuous improvement: you collect telemetry, analyze failures, and refine prompts, tools, and routing rules. Throughout, you’ll balance automation gains against governance needs to ensure compliance and accountability.

In real-world environments, you’ll often integrate chat-based agents with APIs, databases, and enterprise systems. You’ll also encounter drift, where model or prompt behavior shifts away from what you validated, and latency problems as tasks cascade through several services. Addressing these issues early with guardrails, observability, and fallback strategies is essential for long-term success. This section lays the groundwork for the rest of the guide, framing the problem space and setting achievable, measurable objectives. The emphasis remains on pragmatic, incremental changes rather than overnight transformations, a philosophy consistently echoed by Ai Agent Ops.

Core concepts: Agents, orchestrators, and runtimes

At its core, AI agent workflow automation combines three layers. First, agents are autonomous software components that perform actions or make decisions on behalf of humans, such as querying a database, triggering a service, or composing a reply. Second, an orchestrator or workflow engine coordinates the sequence of steps, handles parallelism, retries, and error handling, and provides observability hooks. Third, runtimes and tool integrations give agents access to external systems, prompts, and memory stores. Understanding the interplay among these layers is crucial for building reliable automations. You’ll want clearly defined boundaries between data sources, decision points, and actions, plus robust security and access control. When choosing components, look for interoperability, clear prompts, versioned configurations, and transparent logging to simplify troubleshooting and audits.

This framework helps teams reason about scope and dependencies, so you can design workflows that scale and adapt as requirements evolve. It also supports experimentation—trying new tools or models in isolated pilots before committing to a broader rollout. The combination of well-defined agents, a capable orchestrator, and secure tool access is what enables repeatable, auditable automation across functions.
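To make the three layers concrete, here is a minimal sketch in Python. The class names (`ToolRegistry`, `Agent`, `Orchestrator`) and the `lookup_order` tool mentioned in the note below are hypothetical, chosen only for illustration; a real deployment would more likely use a workflow engine than a hand-rolled class.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

ToolFn = Callable[[dict], dict]


@dataclass
class ToolRegistry:
    """Runtime layer: the only tools an agent is allowed to reach."""
    tools: Dict[str, ToolFn] = field(default_factory=dict)

    def register(self, name: str, fn: ToolFn) -> None:
        self.tools[name] = fn

    def call(self, name: str, payload: dict) -> dict:
        if name not in self.tools:
            raise KeyError(f"tool not registered: {name}")
        return self.tools[name](payload)


@dataclass
class Agent:
    """Agent layer: decides which tool to use for a task."""
    name: str
    decide: Callable[[dict], str]  # returns a tool name


@dataclass
class Orchestrator:
    """Orchestrator layer: runs the step and records it for audits."""
    registry: ToolRegistry
    log: List[dict] = field(default_factory=list)

    def run(self, agent: Agent, task: dict) -> dict:
        tool_name = agent.decide(task)
        result = self.registry.call(tool_name, task)
        self.log.append({"agent": agent.name, "tool": tool_name, "result": result})
        return result
```

In this sketch, registering a `lookup_order` function and running an agent whose `decide` returns `"lookup_order"` leaves one log entry per call, which is the kind of trace an audit would need.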

Design framework: The 4-layer model

A practical design framework for AI agent workflow automation often rests on four layers: 1) Intent and scope, 2) Decision logic and prompts, 3) Execution and integration, 4) Observation, learning, and governance. Layer 1 clarifies the business objective, success metrics, and guardrails. Layer 2 translates intent into prompts, rules, and decision trees that guide agent actions, while Layer 3 handles API calls, data transformations, and orchestrator state management. Layer 4 collects telemetry, evaluates outcomes, and triggers feedback loops for improvements. This structure helps teams separate concerns, making it easier to test, debug, and scale. In practice, you’ll map inputs to decisions, ensure deterministic prompts where possible, and build safe fallbacks for failures. The layered approach also simplifies auditing and compliance by isolating data flows and decision points.

To implement this framework effectively, document each layer’s interfaces, expectations, and failure modes. Use versioned configurations and controlled rollout plans to minimize risk. The framework supports incremental enhancement and aligns stakeholders around a shared model of how automation should operate in your organization.
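One way to document the four layers in a versioned form is a small, immutable specification object that can be checked for gaps before rollout. This is a sketch under assumptions: `WorkflowSpec`, its field names, and `missing_layers` are hypothetical and not part of any particular framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WorkflowSpec:
    version: str                 # bump on every change to support controlled rollout
    objective: str               # Layer 1: intent and scope
    success_metrics: List[str]   # Layer 1
    guardrails: List[str]        # Layer 1
    prompt_ids: List[str]        # Layer 2: decision logic and prompts
    tools: List[str]             # Layer 3: execution and integration
    telemetry_events: List[str]  # Layer 4: observation, learning, governance


def missing_layers(spec: WorkflowSpec) -> List[str]:
    """Return the names of layers that are not yet documented in the spec."""
    gaps = []
    if not (spec.objective and spec.success_metrics and spec.guardrails):
        gaps.append("intent_and_scope")
    if not spec.prompt_ids:
        gaps.append("decision_logic")
    if not spec.tools:
        gaps.append("execution")
    if not spec.telemetry_events:
        gaps.append("observation")
    return gaps
```

Running `missing_layers` as part of a review or CI step is one possible way to keep a workflow from shipping with an undocumented layer.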

Requirements and architecture

A solid AI agent workflow automation project needs a clear architectural plan and governance model. Core components include: 1) An LLM or policy engine to drive decisions, 2) An orchestrator to manage workflows, 3) Tool integrations (APIs, databases, messaging systems), 4) A memory layer or context store for state, 5) Credential and secret management, and 6) Observability and logging for traceability. Data flows should be mapped end-to-end: inputs from users or systems, decision points that select actions, actions that invoke tools, and outputs that update downstream systems or notify stakeholders. Security considerations include least-privilege access, secret rotation, and data minimization. Architectural patterns such as event-driven triggers, fan-out/fan-in for parallel tasks, and circuit breakers for resilience help absorb variability in real-world workloads. A well-scoped sandbox environment is essential for experimentation, along with a plan for progressive governance as the workflow matures.

This section provides a blueprint for designing the underlying systems before you start building, ensuring you have the right capabilities and guardrails in place from day one.
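Of the resilience patterns named above, the circuit breaker is the easiest to show in a few lines. The sketch below is a simplified, single-threaded version; the class name, parameters, and default values are assumptions for illustration, and production systems usually rely on a library or on the orchestrator's built-in retry and timeout policies.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self._clock = clock  # injectable so the breaker can be tested without sleeping
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # any success closes the circuit fully
        return result
```

Wrapping each tool call in `breaker.call(...)` means a failing downstream service is skipped quickly during the cool-down instead of adding latency to every workflow run.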

Building a minimal viable workflow: a practical blueprint

Starting small is the most reliable path to success with AI agent workflows. Define a single objective, identify a minimal set of tools, and implement a straight-through process that requires minimal human intervention. A typical MVP includes: a) an input trigger (e.g., a support ticket message), b) an agent that formulates a plan (which tools to call), c) a single automated action (e.g., fetch data or draft a reply), d) a readout or update to the source system, and e) basic monitoring. Use a lightweight orchestrator to sequence the steps and a simple memory store to retain context between steps. Validate with a controlled dataset and synthetic scenarios before exposing it to real users. As you expand, you’ll add more decision points, integrate additional tools, and implement more sophisticated guardrails. The key is to maintain observability and version control so you can reproduce results and roll back quickly if needed.
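The MVP shape described above (trigger, plan, one action, readout, basic monitoring) can be sketched as a list of plain functions run in order over a shared context dictionary. Everything here is a stand-in: the step names are hypothetical, `fetch_order_status` returns a hard-coded value instead of calling a real system, and the `trace` list stands in for real monitoring.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Step = Callable[[Context], Context]


def run_workflow(steps: List[Step], trigger: Context) -> Context:
    """Run steps in order, passing a shared context (the 'memory store') along."""
    ctx: Context = dict(trigger)
    ctx["trace"] = []  # basic monitoring: which steps ran, in order
    for step in steps:
        ctx = step(ctx)
        ctx["trace"].append(step.__name__)
    return ctx


def plan(ctx: Context) -> Context:
    # In a real workflow an LLM would choose the tools; here the plan is fixed.
    ctx["plan"] = ["fetch_order_status", "draft_reply"]
    return ctx


def fetch_order_status(ctx: Context) -> Context:
    ctx["order_status"] = "shipped"  # stand-in for a real API call
    return ctx


def draft_reply(ctx: Context) -> Context:
    ctx["draft"] = f"Your order is {ctx['order_status']}."
    return ctx


result = run_workflow(
    [plan, fetch_order_status, draft_reply],
    {"ticket": "Where is my order?"},
)
```

Keeping steps as small named functions makes it straightforward to test each one in isolation and to replay a run from its trace.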

Practical use case: customer support automation

A common starter use case is customer support automation. An AI agent can triage inquiries, query order databases, fetch status updates, draft replies, and hand off complex cases to human agents. Start with a defined scope: classify tickets, fetch order status, and propose a draft response. The agent uses a decision prompt to decide which tools to call, then executes those calls, and finally posts a draft back to the ticketing system for human review. Observability dashboards track latency, success rate, and user satisfaction. By iterating on prompts and tool integrations, you can reduce response time, improve consistency, and free human agents to handle only the most complex issues. This practical scenario demonstrates the value of aligning business goals with concrete automation steps and governance.
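The triage-and-handoff logic in this use case can be sketched as a classifier plus a confidence threshold. The keyword-based `classify` function below is only a placeholder for an LLM call, and the categories, confidence values, and the 0.8 threshold are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Triage:
    category: str
    confidence: float


def classify(ticket_text: str) -> Triage:
    """Placeholder for an LLM-based classifier; uses simple keyword matching."""
    text = ticket_text.lower()
    if "where is my order" in text or "order status" in text:
        return Triage("order_status", 0.9)
    if "refund" in text:
        return Triage("refund", 0.6)
    return Triage("other", 0.3)


def route(ticket_text: str, min_confidence: float = 0.8) -> str:
    """Automate only the in-scope, high-confidence case; hand off everything else."""
    triage = classify(ticket_text)
    if triage.category == "order_status" and triage.confidence >= min_confidence:
        return "draft_reply_for_review"
    return "handoff_to_human"
```

Defaulting to a human handoff keeps the automated path narrow, which matches the defined scope of classifying tickets, fetching order status, and proposing a draft.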

Testing, monitoring, and safety

Robust testing and monitoring are non-negotiable for AI-driven workflows. Develop test suites that simulate real-world scenarios with edge cases and boundary conditions. Implement telemetry for every decision, including input, prompts, tool calls, outputs, and failures. Establish alerting for anomalies and latency spikes, plus automated rollback if a failure rate exceeds a defined threshold. Safety guardrails should cover data privacy, rate limits, and abuse prevention. Periodically review prompts and tool access permissions to prevent drift. Continuous monitoring helps you detect calibration drift, tool outages, or unexpected behaviors, enabling timely remediation and safer, more reliable automation.

Finally, document every decision point, tool integration, and failure mode. Clear documentation supports audits, compliance, and onboarding, ensuring stakeholders understand how the automation operates and what to do when things go wrong.
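The automated-rollback rule mentioned in this section can be expressed as a rolling failure-rate check. This is a minimal sketch: the window size, threshold, and minimum sample count are illustrative defaults, and the class name is hypothetical. What "rollback" actually does (disable a feature flag, redeploy a prior version) is left to the caller.

```python
from collections import deque


class FailureRateMonitor:
    def __init__(self, window: int = 100, threshold: float = 0.2,
                 min_samples: int = 10) -> None:
        self._outcomes = deque(maxlen=window)  # keeps only the most recent results
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, success: bool) -> None:
        self._outcomes.append(success)

    @property
    def failure_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return self._outcomes.count(False) / len(self._outcomes)

    def should_rollback(self) -> bool:
        # Require a minimum number of samples so one early failure does not trip it.
        return (len(self._outcomes) >= self.min_samples
                and self.failure_rate > self.threshold)
```

Calling `record` after every workflow run and checking `should_rollback` in the deployment loop gives a simple, testable trigger for the rollback plan.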

Change management and governance

Deploying AI agent workflows affects people, processes, and policies. Establish a governance committee that defines who can modify prompts, who reviews automated decisions, and how changes are tested and deployed. Create a change-ticket workflow for updates, maintain an inventory of tools and permissions, and enforce data handling rules (e.g., PII minimization, encryption at rest). Training and communication are essential to foster trust and adoption: provide hands-on workshops, runbooks, and escalation guides. Regular reviews of metrics, safety incidents, and ROI help demonstrate value while maintaining accountability. As your automation matures, evolve governance to reflect new risk profiles and business priorities, guided by organizational policy and industry best practices.

Ai Agent Ops consistently recommends embracing a phased rollout, strong documentation, and adaptive governance to balance innovation with safety and compliance.
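As one small illustration of a data handling rule, the sketch below applies PII minimization before a record reaches an agent or a log: it keeps only allow-listed fields and masks email addresses in free text. The function names and the field names used in the note are hypothetical, and the regular expression is deliberately simple; it will not catch every valid email format or any other kind of PII.

```python
import re

# Simplified pattern: local part, "@", then a dotted domain.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(text: str) -> str:
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def minimize(record: dict, allowed_fields: set) -> dict:
    """Drop fields that are not allow-listed and mask emails in string values."""
    return {
        key: redact_emails(value) if isinstance(value, str) else value
        for key, value in record.items()
        if key in allowed_fields
    }
```

For example, passing a ticket record with `ticket_id`, `body`, and an unneeded sensitive field through `minimize` with `{"ticket_id", "body"}` as the allow-list drops the sensitive field entirely and masks any address in the body.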

Verdict and next steps: Ai Agent Ops’s guidance for sustained success

In short, AI agent workflow automation offers substantial productivity gains when it is designed with intention, protected by robust guardrails, and governed with careful change management. Start with a narrow scope, validate with data, and iterate toward broader orchestration and more capable tools. Build for observability, maintain strict access controls, and continuously measure outcomes against defined objectives. The Ai Agent Ops team’s verdict is to treat automation as a living program: start small, learn fast, and scale thoughtfully with governance at the core.

Authority sources

Here are foundational resources that inform best practices for AI governance and automation:

- https://www.nist.gov/itl/ai-risk-management-framework

- https://www.nature.com/subjects/artificial-intelligence

- https://www.sciencemag.org/

Tools & Materials

- Code editor or IDE (VS Code recommended with linting and Python/Node support)

- Access to an LLM API or local model (e.g., GPT-4 or equivalent)

- Workflow orchestrator (Airflow, Temporal, or equivalent)

- Secret management and credentials store (HashiCorp Vault, AWS Secrets Manager, or equivalent)

- Sandbox data or test dataset (non-production data for safe testing)

- Monitoring/observability tooling (Prometheus, Grafana, or equivalent)

- Documentation and governance policy (data handling and change management docs)

Steps

Estimated time: 3-6 hours

1. Define objective and guardrails

State the goal, scope, success criteria, and safety constraints. Document what success looks like and what actions are disallowed.

Tip: Keep the objective narrow and measurable to prevent scope creep.

2. Map data flows and prerequisites

Identify data sources, required transformations, and downstream systems. Ensure data quality and access controls are defined.

Tip: Create a simple data flow diagram before wiring tools together.

3. Choose core components

Select an LLM or policy engine, an orchestrator, and tool integrations that fit your use case. Ensure interoperability.

Tip: Prefer versioned configurations and clear API contracts.

4. Design decision prompts and routing

Draft prompts that capture decision logic and route actions safely. Include fallback options for uncertain cases.

Tip: Test prompts with edge cases to reduce drift.

5. Implement in a sandbox

Build the MVP workflow in a non-production environment with mock or synthetic data. Verify end-to-end execution.

Tip: Use feature flags to enable gradual exposure.

6. Test thoroughly

Run deterministic tests, negative tests, and real-world scenarios. Validate latency, accuracy, and error handling.

Tip: Instrument tests to capture decision rationales and outcomes.

7. Deploy and monitor

Move to production with guardrails, alerts, and dashboards. Establish a rollback plan if metrics deteriorate.

Tip: Automate rollback if error rates exceed thresholds.

8. Review and govern

Regularly review performance, safety incidents, and governance policies. Update prompts and tool access as needed.

Tip: Schedule quarterly governance reviews.
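The feature-flag tip in the sandbox step can be sketched as a deterministic percentage rollout: each user is hashed into a stable bucket from 0 to 99, and the flag is on for buckets below the chosen percentage. The function name and the idea of keying on a user ID are assumptions for illustration; hosted feature-flag services typically offer this behavior out of the box.

```python
import hashlib


def in_rollout(user_id: str, flag: str, percent: int) -> bool:
    """Return True if this user falls inside the rollout percentage for the flag."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Hash flag and user together so each flag gets an independent bucketing.
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100
    return bucket < percent
```

Because the bucket depends only on the flag name and user ID, a given user sees consistent behavior across requests, and raising `percent` only ever adds users to the exposed group.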

Questions & Answers

What is AI agent workflow automation?

AI agent workflow automation is a design pattern that combines autonomous agents, an orchestrator, and tool integrations to perform end-to-end business tasks with minimal human intervention, while maintaining safety and traceability.

AI agent workflow automation is an approach that uses autonomous agents coordinated by an orchestrator to perform business tasks with minimal human input, while keeping safety and traceability in mind.

What components are required?

Key components include an LLM or policy engine, a workflow orchestrator, tool integrations (APIs, databases), a memory/context store, secret management, and robust observability.

You need an LLM or policy engine, an orchestrator, tool integrations, a memory store, secret management, and observability.

How do you measure ROI?

ROI is measured by comparing cycle time reductions, error rate improvements, and human hours saved against setup and maintenance costs, using defined KPIs and a phased rollout.

ROI is measured by reductions in cycle time, fewer errors, and saved human hours, tracked against initial and ongoing costs.
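As a worked illustration of that comparison, the sketch below computes ROI as net benefit divided by total cost. The formula is the standard ROI definition; the way benefits are broken down (hours saved times an hourly rate, plus errors avoided times a cost per error) is an assumption, and the parameter names are hypothetical. Plug in your own measured values.

```python
def automation_roi(hours_saved: float, hourly_rate: float,
                   errors_avoided: float, cost_per_error: float,
                   setup_cost: float, maintenance_cost: float) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost, over one period."""
    benefit = hours_saved * hourly_rate + errors_avoided * cost_per_error
    cost = setup_cost + maintenance_cost
    if cost <= 0:
        raise ValueError("total cost must be positive")
    return (benefit - cost) / cost
```

With purely hypothetical inputs of 100 hours saved at 50 per hour, 10 errors avoided at 100 each, and costs of 3000 (setup) plus 1000 (maintenance), the benefit is 6000 against a cost of 4000, giving an ROI of 0.5, or 50%.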

What are common challenges?

Drift in prompts, latency in multi-tool calls, data governance complexity, and ensuring safety in decision-making are common challenges that require guardrails and observability.

Common challenges include prompt drift, latency, and governance complexity, which you mitigate with guardrails and monitoring.

Is this suitable for real-time tasks?

Yes, with careful design. Real-time use cases require low-latency tools, streaming data capabilities, and tight SLAs, plus fast fallback mechanisms.

Real-time use cases are possible but need low-latency components and strong fallbacks.
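A fast fallback can be sketched with a timeout around the slow call, using `asyncio.wait_for` from the Python standard library. The helper name `with_fallback` and the idea of returning a cached or templated value are assumptions for illustration.

```python
import asyncio
from typing import Any, Awaitable, Callable


async def with_fallback(call: Callable[[], Awaitable[Any]],
                        timeout_s: float, fallback: Any) -> Any:
    """Await call(); if it exceeds timeout_s, return the fallback value instead."""
    try:
        return await asyncio.wait_for(call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback
```

The timeout value effectively encodes the latency budget from your SLA: anything slower than the budget is replaced by a safe default rather than blocking the caller.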

How do you ensure safety and compliance?

Establish guardrails, data handling rules, access controls, auditing, and periodic reviews. Document decision points and retain logs for accountability.

Safety relies on guardrails, access controls, and auditable logs, with regular governance reviews.

Key Takeaways

- Define a clear objective before automation.

- Choose compatible agents, orchestrators, and tools.

- Test with safe data and guardrails.

- Monitor performance and iterate for reliability.

- Governance should scale with automation maturity.