AI Agent Cost Comparison: Pricing, ROI, and TCO

An analytical AI agent cost comparison examining cloud-based vs. self-hosted pricing, TCO, ROI, and governance considerations to help teams decide the best fit.

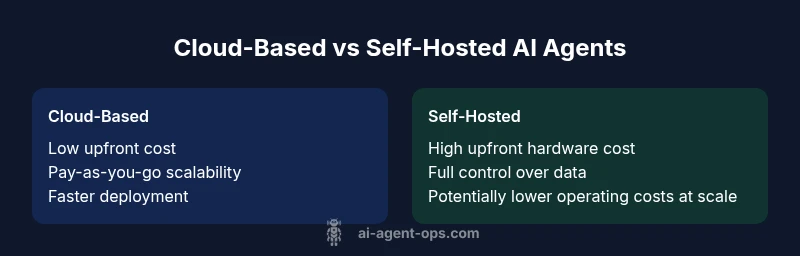

TL;DR: Cloud-based AI agent services offer predictable per-agent or usage-based pricing and rapid deployment, while self-hosted agents require upfront hardware, licenses, and ongoing maintenance but can reduce long-term per-transaction costs at scale. The right choice depends on workload volume, customization needs, and total cost of ownership (TCO) over 3–5 years.

The cost dimension in AI agent deployments

According to Ai Agent Ops, cost is not just a price tag; it’s a multi-dimensional total cost of ownership that grows with usage, data, and governance requirements. Optimizing for TCO means looking beyond upfront fees to include compute, storage, data transfer, monitoring, personnel, and maintenance across the lifecycle. Teams frequently underestimate ongoing costs, vendor changes, and integration work, which can dwarf the initial quote. This section frames the cost problem as a lifecycle exercise: you’re budgeting for development, deployment, operation, and eventual retirement or replacement of agents. The goal is to quantify not only what you pay today, but what you will pay as workloads evolve, data grows, and security demands tighten. As you read, keep in mind the central question: which model delivers the most reliable value given your workload profile and governance requirements?

Understanding cost in this space also means recognizing that AI agents are not one-size-fits-all. A cost-effective solution for a small engineering prototype may become unsustainable when scaled to thousands of simultaneous agents across multiple product lines. Conversely, an enterprise-grade deployment might justify higher initial investments for architectural controls, compliance, and automation that prevent runaway spend. The Ai Agent Ops framework emphasizes a disciplined approach: map your use cases, forecast workloads, and build a transparent model of expenses that includes licensing, compute, data, security, and maintenance. This mindset helps teams avoid sticker shock when negotiating vendor contracts or deciding to switch from a cloud-only to a hybrid or self-hosted approach. Finally, a well-documented cost model supports governance, budgets, and stakeholder alignment across engineering, security, and finance.

Cost Models Explained: Cloud vs Self-Hosted

Pricing models for AI agents fall into a few broad archetypes, each with distinct cost implications. Cloud-based services typically charge per user, per seat, or per API call, often with tiered subscription options. This approach delivers fast onboarding, elastic capacity, and predictable monthly costs on subscription tiers, with minimal hardware management. For many teams, cloud pricing reduces upfront risk and accelerates time to value, making it easier to test a broad set of agent capabilities and scale gradually.

Self-hosted or on-premises models shift the economics toward capital expenditure and ongoing operating costs. You’ll encounter upfront hardware, software licenses, data-center space, cooling, and dedicated staff for maintenance and security. Once the initial deployment is complete, ongoing costs typically center on compute cycles, storage, backups, and support. In exchange for higher upfront investment, organizations can gain tighter data sovereignty, lower marginal costs at high utilization, and more fine-grained control over updates and model versions. The trade-off is higher complexity, longer time-to-value, and a need for robust governance to prevent runaway spending. Across the ai agent cost comparison landscape, hybrid approaches—combining managed cloud services with selective on-prem components—are increasingly common for balancing control and flexibility.

Both models can be cost-efficient with careful design. Cloud vendors frequently offer volume discounts, reserved capacity, and multi-agent pricing that can shrink per-usage costs for steady workloads. Self-hosted deployments can leverage hardware amortization, open-source model economies, and tailored optimization to reduce per-transaction costs, especially at scale. The key is to quantify not just the immediate price, but the total cost of ownership over the lifecycle of the AI agents you deploy, including architecture, security, and future upgrades.
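The lifecycle comparison described above can be sketched as a small calculation. This is a minimal illustration, not a pricing tool: the class names, cost fields, and every dollar figure below are hypothetical placeholders that you would replace with numbers from your own quotes.

```python
from dataclasses import dataclass


@dataclass
class CloudCosts:
    monthly_subscription: float  # flat platform fee per month (hypothetical)
    cost_per_call: float         # usage charge per agent invocation (hypothetical)


@dataclass
class SelfHostedCosts:
    upfront_capex: float         # hardware, licenses, deployment (hypothetical)
    monthly_opex: float          # power, cooling, staff, support (hypothetical)
    cost_per_call: float         # marginal compute cost per invocation (hypothetical)


def cloud_tco(c: CloudCosts, calls_per_month: int, years: int) -> float:
    """Total cloud spend over the period: no upfront cost, only recurring charges."""
    months = years * 12
    return months * (c.monthly_subscription + c.cost_per_call * calls_per_month)


def self_hosted_tco(s: SelfHostedCosts, calls_per_month: int, years: int) -> float:
    """Total self-hosted spend: one-time capex plus recurring opex and usage."""
    months = years * 12
    return s.upfront_capex + months * (s.monthly_opex + s.cost_per_call * calls_per_month)


# Illustrative inputs only; none of these figures come from a real vendor.
cloud = CloudCosts(monthly_subscription=500.0, cost_per_call=0.01)
hosted = SelfHostedCosts(upfront_capex=120_000.0, monthly_opex=4_000.0, cost_per_call=0.001)

for volume in (100_000, 1_000_000, 5_000_000):
    c = cloud_tco(cloud, volume, years=3)
    s = self_hosted_tco(hosted, volume, years=3)
    cheaper = "cloud" if c < s else "self-hosted"
    print(f"{volume:>9,} calls/month: cloud={c:,.0f} self-hosted={s:,.0f} -> {cheaper}")
```

With these made-up inputs, cloud is cheaper over three years at 100,000 calls per month and self-hosted is cheaper at 1,000,000 and above. The crossover point moves with every assumption, which is why the model should be rebuilt with your own figures.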

The Hidden Costs and Long-Term Considerations

Many teams overlook cost factors that accumulate over time. Beyond the advertised price, there are data-transfer fees, storage costs for training data and logs, and the expense of applying updates or retraining models when data patterns shift. For cloud-based agents, API call volume, feature toggles, and rate limits can create sticker shock if workloads exceed forecasts. For self-hosted agents, capital expenditure is only the beginning: you’ll also pay for electricity, cooling, rack space, networking, and ongoing security hardening. Maintenance staff time, incident response, and disaster recovery planning add non-trivial sums to the annual budget. There is also governance risk: if you lack clear policies on data retention, model updates, and access control, you may incur costs from compliance failures or audit findings. A robust AI agent cost comparison therefore requires a separate accounting of security posture, uptime guarantees, and regulatory obligations, since failures here can eclipse direct pricing.

According to Ai Agent Ops analysis, many teams underestimate the cost of monitoring and alerting—observability tooling, dashboards, and staff to analyze anomalies can accumulate quickly. You should also anticipate vendor changes: pricing restructures, deprecation of features, or shifts in licensing terms can alter the long-term economics of your AI agent portfolio. Building a cost model that includes a governance layer—budgets by team, alert thresholds, and approval workflows—helps prevent uncontrolled growth in spend and ensures the business derives measurable value from each deployed agent.

Use-Case Driven Cost Profiles

Different use cases drive cost profiles in distinct ways. A customer-support automation bot with high seasonal traffic may incur spikes in API usage, data storage, and latency guarantees during peak periods. In contrast, an internal data-ops agent used for monitoring and orchestration may run continuously with relatively steady requirements but demand rigorous security and auditability. In the ai agent cost comparison, you should align your cost model with the expected pattern of utilization. Bursty workloads will favor cloud-based, flexible pricing with autoscaling and pay-as-you-go options. Steady, mission-critical workloads with strict data governance may justify self-hosted or hybrid solutions, especially if you can amortize hardware and maintain strict control over data locality. In both scenarios, you’ll want to forecast peak-to-average ratios, the cost of redundancy, and the potential savings from caching, model distillation, or batch processing.
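One way to put a number on "bursty versus steady" is the peak-to-average ratio mentioned above. The sketch below assumes you have hourly invocation counts from your own logs; the function name and the sample data are hypothetical.

```python
def peak_to_average(hourly_calls: list[int]) -> float:
    """Ratio of the busiest hour to the mean hour; 1.0 means perfectly flat load."""
    if not hourly_calls:
        raise ValueError("hourly_calls must not be empty")
    average = sum(hourly_calls) / len(hourly_calls)
    if average == 0:
        return 0.0  # no traffic at all, so the ratio is not meaningful
    return max(hourly_calls) / average


# Made-up samples: same average load, very different shapes.
steady_internal_agent = [100, 110, 90, 100]
seasonal_support_bot = [10, 10, 10, 370]

print(peak_to_average(steady_internal_agent))
print(peak_to_average(seasonal_support_bot))
```

A ratio close to 1 suggests fixed capacity would be well utilized. A high ratio means hardware sized for the peak sits mostly idle, which is the situation where pay-as-you-go pricing tends to be attractive.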

A practical way to illustrate this is through two hypothetical profiles. Profile A represents a small product team piloting several agents with low tolerance for downtime and a desire for rapid iteration. Profile B represents an enterprise-scale operation running hundreds of agents with strict data locality and compliance requirements. In ai agent cost comparison terms, Profile A is often cloud-friendly with a low upfront barrier and scalable experiments, while Profile B tends to justify more complex, self-hosted architectures and governance frameworks that control data flows and lifecycle costs. The takeaway is that there is no universal winner; the best approach depends on your workload shape, risk tolerance, and policy constraints.

Evaluation Framework: Building a Robust Cost Model

A rigorous AI agent cost comparison begins with a disciplined evaluation framework. Start by documenting business objectives and usage scenarios: number of agents, expected invocation frequency, data volumes, required latency, and uptime targets. Then, gather proposals from cloud providers and potential self-hosted stacks, annotating pricing components, support terms, and upgrade paths. Build a cost model on a common timeline that maps each pricing line item to a real-world activity, such as API calls, model training, data storage, and security operations. Include a sensitivity analysis to understand how changes in workload or data growth impact total spend. Finally, validate the model with a pilot across a controlled subset of agents to measure actual costs against forecasts. This method reveals where cost overruns are likely and where savings opportunities exist, such as tiering models, caching strategies, or selective feature usage.
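The sensitivity analysis step can be sketched as a one-at-a-time test: raise each cost driver by a fixed percentage and record how much the annual total moves. The cost formula, driver names, and baseline values here are assumptions chosen for illustration, not a recommended model.

```python
def annual_cost(drivers: dict[str, float]) -> float:
    """Toy annual cost model: usage + storage + staff (all inputs hypothetical)."""
    compute = drivers["calls_per_month"] * drivers["cost_per_call"] * 12
    storage = drivers["stored_gb"] * drivers["cost_per_gb_month"] * 12
    return compute + storage + drivers["staff_cost_per_year"]


def sensitivity(drivers: dict[str, float], delta: float = 0.2) -> dict[str, float]:
    """Relative change in annual cost when each driver alone rises by `delta`."""
    base = annual_cost(drivers)
    impact = {}
    for name, value in drivers.items():
        bumped = dict(drivers)          # copy so only one driver changes at a time
        bumped[name] = value * (1 + delta)
        impact[name] = (annual_cost(bumped) - base) / base
    return impact


# Illustrative baseline; replace with figures from your own proposals.
baseline = {
    "calls_per_month": 1_000_000,
    "cost_per_call": 0.01,
    "stored_gb": 5_000,
    "cost_per_gb_month": 0.02,
    "staff_cost_per_year": 80_000,
}

# Print drivers from most to least influential.
for name, change in sorted(sensitivity(baseline).items(), key=lambda kv: -kv[1]):
    print(f"{name:<22} +20% -> total {change:+.1%}")
```

Sorting the output shows which assumptions deserve the most scrutiny during the pilot. In this made-up baseline, call volume and per-call price dominate while storage barely registers.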

Cost Governance and Optimization Strategies

Effective governance is a powerful lever in the ai agent cost comparison. Establish budgets by department and by project, with guardrails on agent proliferation and feature usage. Implement cost monitoring dashboards that surface anomalies in near real-time and trigger alerts when spend approaches thresholds. Consider adopting autoscaling for cloud deployments and capacity planning for self-hosted environments to avoid idle resources. Optimize model selection by choosing lighter-weight models for routine tasks and reserving larger, more capable models for high-value interactions. Data retention policies can dramatically affect storage costs; implement lifecycle rules to purge or compress older logs and transcripts. Finally, negotiate vendor terms actively—seek volume discounts, reserved capacity, and flexible cancellation policies—so that your pricing reacts to actual demand rather than predictions that miss the mark.
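A budget guardrail of the kind described above might look like the following sketch. The `Budget` class, the 80% warning threshold, the status labels, and the straight-line forecast are all illustrative assumptions; a real setup would pull spend data from your billing or observability tooling.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    team: str
    monthly_limit: float
    warn_at: float = 0.8  # fraction of the limit that triggers a warning (assumed)


def check_budget(budget: Budget, spend_to_date: float) -> str:
    """Classify current spend against the team's monthly limit."""
    ratio = spend_to_date / budget.monthly_limit
    if ratio >= 1.0:
        return "over_budget"
    if ratio >= budget.warn_at:
        return "warning"
    return "ok"


def projected_month_end(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Naive straight-line forecast: assumes the daily run rate stays constant."""
    return spend_to_date / day_of_month * days_in_month


support = Budget(team="support", monthly_limit=1_000.0)
print(check_budget(support, 850.0))            # spend is at 85% of the limit
print(projected_month_end(400.0, 10, 30))      # run rate extrapolated to day 30
```

Checking the projection as well as the current total lets an alert fire early in the month, before the limit is actually reached.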

Architectural Considerations to Reduce Cost

Architecture choices strongly influence the outcome of an AI agent cost comparison. Reuse and share components where possible to avoid duplicating work across agents. Cache frequent results and responses to reduce repeated compute. Use batch processing for non-time-critical tasks, and apply model distillation to shrink inference costs while keeping accuracy within an acceptable range for the task. Optimize data pipelines to minimize transfers, especially across regions, and enforce data locality to align with compliance constraints. Partition workloads so that the most sensitive, high-cost tasks run on self-hosted components, while routine operations leverage cloud convenience. Finally, implement a modular design that allows swapping pricing tiers or back-end infrastructures without a complete rebuild. These strategies can materially shift the TCO in the direction of lower, more predictable costs.
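The caching advice can be illustrated with Python's standard `functools.lru_cache`. In this sketch `expensive_agent_call` is a hypothetical stand-in for a billed model or API request, and the cache only matches prompts that are exactly identical.

```python
from functools import lru_cache

call_count = 0  # counts how many times the "billed" backend is actually hit


def expensive_agent_call(prompt: str) -> str:
    """Hypothetical stand-in for a paid model invocation."""
    global call_count
    call_count += 1
    return f"answer to: {prompt}"


@lru_cache(maxsize=1024)
def cached_agent_call(prompt: str) -> str:
    """Return a stored answer for repeated prompts instead of paying again."""
    return expensive_agent_call(prompt)


prompts = ["reset password", "refund policy", "reset password", "reset password"]
answers = [cached_agent_call(p) for p in prompts]

info = cached_agent_call.cache_info()
print(f"requests={len(prompts)} billed_calls={call_count} hits={info.hits}")
```

Four requests result in only two billed calls here because the repeated prompt is served from memory. An in-process cache like this is lost on restart and is not shared across workers, so a production design would likely need a shared store and an expiry policy for answers that can go stale.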

Decision Heuristics: When to Choose Cloud vs On-Prem

Make your decision using a concise checklist. Consider data locality requirements, regulatory constraints, and the perceived risk of vendor lock-in. If you expect rapid experimentation, variable workloads, or a need for fast time-to-value, a cloud-based model is typically favorable. If you require strict data governance, long-term cost predictability at scale, or existing on-prem infrastructure, an on-prem or hybrid approach may be better. Use a simple payback period analysis to estimate how long it takes for the cost savings from one model to cover the investment in the other. Finally, remember that the best choice is often a blended approach that leverages cloud flexibility for experimentation and on-prem controls for critical data. This way, your ai agent cost comparison remains adaptive to changing business needs over time.
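The simple payback period analysis mentioned above divides the upfront investment by the monthly savings it produces. The function name and the figures below are hypothetical, and the sketch ignores discounting and price changes over time.

```python
from typing import Optional


def payback_months(
    upfront_investment: float,
    monthly_cost_current: float,
    monthly_cost_alternative: float,
) -> Optional[float]:
    """Months until cumulative savings cover the upfront investment.

    Returns None when the alternative is not cheaper per month,
    because the investment would never pay back.
    """
    monthly_savings = monthly_cost_current - monthly_cost_alternative
    if monthly_savings <= 0:
        return None
    return upfront_investment / monthly_savings


# Illustrative: moving from a cloud bill to self-hosted running costs.
months = payback_months(
    upfront_investment=120_000.0,
    monthly_cost_current=10_500.0,     # current cloud spend (made up)
    monthly_cost_alternative=5_000.0,  # self-hosted opex (made up)
)
print(f"payback in about {months:.1f} months")
```

If the result is longer than the period you expect to run the hardware, or the function returns `None`, the cloud option remains the cheaper choice under these assumptions.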

Practical Checklist: Ready-to-Apply Actions

- Define workload patterns and data locality needs.

- Build a transparent TCO model with all cost drivers.

- Run a short pilot with both cloud and self-hosted options.

- Set up cost governance, dashboards, and alerts.

- Optimize through caching, distillation, and batching.

- Negotiate vendor terms and consider hybrid architectures.

- Review the decision quarterly as requirements evolve.

Comparison

| Feature | Cloud-based AI agent | Self-hosted AI agent |
|---|---|---|
| Upfront cost | Low or zero upfront; pay-as-you-go or subscription | High upfront for hardware, licenses, deployment |
| Ongoing cost | Monthly/usage-based charges; scalable costs with demand | Ongoing maintenance, power, cooling, staff, and licenses |
| Scalability | Elastic, dynamic scaling with capacity on demand | Limited by hardware; scaling requires planning and procurement |
| Control & customization | Depends on provider; often strong defaults with some customization | Full control over stack, updates, and integration |
| Data locality & latency | Depends on region; generally good with global coverage | Low latency and strict data locality possible on-prem |
| Security & compliance | Shared responsibility model; certifications vary by vendor | End-to-end control; higher effort to achieve certifications |
| Total cost of ownership (3–5 years) | Predictable but potentially higher at scale due to ongoing usage | Potentially lower per-transaction costs with high amortization |
| Best for | Teams needing speed, experimentation, and minimal ops | Organizations with strict data controls and high volume |

Positives

- Clear, transparent pricing models for budgeting

- Faster deployment and iteration with cloud options

- Elastic scaling aligns with variable workloads

- Easier access to managed services and updates

What's Bad

- Potential for unpredictable costs with usage spikes

- Vendor lock-in and feature deprecation risks

- Self-hosted still requires significant expertise and management

- Security and governance depend on internal capabilities

Cloud-based options are typically best for pilots and teams prioritizing speed; self-hosted options excel for scale, data control, and long-term cost discipline

In most cases, start with cloud-based pilots to learn usage patterns. Move to a hybrid or self-hosted approach if data locality or annual TCO demonstrates a clear advantage.

Questions & Answers

What factors most influence AI agent costs?

The main cost drivers are licensing or subscription fees, compute usage, data storage, data transfer, and personnel for maintenance and governance. Additional costs come from security, compliance, and incident response. Always model both upfront and ongoing costs to understand total cost of ownership.

Cost drivers include licensing, compute, storage, and governance needs. Plan for ongoing maintenance and security in your model.

How do I compare cloud-based vs self-hosted AI agents for cost?

Compare upfront capex, ongoing opex, scalability, data locality, and governance needs. Build a side-by-side TCO model with sensitivity analysis for workload changes. Run a pilot to validate assumptions before committing long-term.

Compare upfront and ongoing costs, test with a pilot, and analyze total cost of ownership.

What is TCO in AI agent deployments?

Total cost of ownership includes all costs across the lifecycle: procurement, deployment, operation, maintenance, security, data storage, and eventual decommissioning. It’s a holistic view of what an AI agent costs over 3–5 years.

TCO includes all lifecycle costs, not just the price tag.

Can usage patterns affect costs significantly?

Yes. Bursty or unpredictable usage can spike cloud costs, while steady, predictable workloads favor more cost-stable models. Designing for autoscaling, caching, and load balancing helps stabilize spend.

Usage patterns drive cost swings; plan for autoscaling and caching.

Are there open-source options to reduce cost?

Open-source tooling can lower licensing costs and enable self-hosted customization, but it transfers maintenance and security responsibilities to your team. Weigh this against internal capabilities and governance needs in your ai agent cost comparison.

Open-source options reduce licensing costs but require in-house maintenance.

What governance practices help control AI agent costs?

Establish budgets, approval workflows, and spend alerts. Use dashboards to monitor usage, enforce data retention policies, and segment costs by project. Governance helps prevent uncontrolled proliferation and aligns spending with value.

Set budgets, alerts, and dashboards to control AI spend.

Key Takeaways

- Define workload patterns before choosing a cost model

- Pilot cloud-based options to validate ROI quickly

- Build a transparent TCO with governance from day one

- Leverage optimization techniques like caching and batching

- Revisit the model quarterly as requirements evolve