AI Agent or Traditional Automation: Choosing Between the Two

A rigorous comparison of AI agents and traditional automation, with use cases, trade-offs, and practical guidance for developers and leaders.

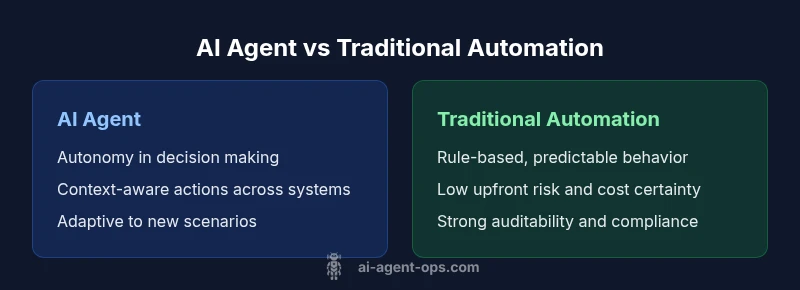

TL;DR: AI agents provide autonomous decision-making, context-aware actions, and cross-system orchestration, often enabling faster iteration of complex workflows. Traditional automation delivers predictable, rule-based reliability with lower upfront risk. The best choice depends on your tolerance for ambiguity, need for adaptivity, and scale of orchestration. This article compares the two to help you decide.

What "AI agent or traditional automation" means in practice

In modern enterprise environments, the question "AI agent or traditional automation?" covers a spectrum that ranges from autonomous decision-making to orchestrating multi-step workflows across disparate systems. At its core, an AI agent is designed to interpret a goal, select actions, and observe outcomes with minimal human intervention. The contrast with traditional automation, where scripts and rules drive predictable, repeatable tasks, helps teams map capabilities to business needs. According to Ai Agent Ops, the distinction hinges on autonomy, data feedback loops, and the ability to adapt to unseen scenarios. When you see an AI agent in action, you are watching a system that does not just follow a script but makes informed choices in real time. This shifts how teams frame success, measure risk, and plan governance. Framing the question this way treats the decision as a continuum rather than a binary choice.

Autonomy vs control: the core tension

Autonomy is the defining differentiator between AI agents and traditional automation. An AI agent can decide when to escalate, adjust parameters, or seek additional data to improve outcomes. This capability adds value in dynamic environments but also increases exposure to unexpected results if it is not properly constrained. Control mechanisms (policy guards, sandbox environments, and human-in-the-loop checks) become essential, especially in regulated industries. Ai Agent Ops stresses that governance models must align with risk tolerance, data provenance, and auditability. When teams implement AI agents, they should design clear escalation paths, tamper-evident logs, and rollback strategies so that reliability is preserved without giving up adaptability. The tension between speed and safety guides architecture choices, from planning modules to state machines and reward signals.
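To make the escalation path and tamper-evident log concrete, here is a minimal sketch, not a production design. It assumes a hash-chained, in-memory log and a single confidence threshold; `AuditLog`, `decide`, and the `0.8` default are hypothetical names and values chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"event": event, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = json.dumps(entry["event"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def decide(action: str, confidence: float, log: AuditLog,
           threshold: float = 0.8) -> str:
    """Execute confident actions; escalate the rest to a human reviewer."""
    outcome = "execute" if confidence >= threshold else "escalate"
    log.append({"action": action, "confidence": confidence, "outcome": outcome})
    return outcome
```

Because every decision is logged before it is returned, the log doubles as the audit trail. A real deployment would also need durable storage and a way to anchor the latest hash outside the system being audited.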

Architecture patterns: agents, orchestration, and modules

A practical AI-agent architecture often combines goal-driven reasoning with orchestration layers that coordinate services across the stack. Key patterns include a planning module that translates goals into actions, an execution layer that calls external APIs or runs tasks, and a feedback loop that informs future decisions. Some teams implement a hybrid stack where AI agents operate within an orchestration layer that enforces constraints and provides telemetry. Interoperability is critical: standardized interfaces, clear data models, and robust observability enable agents to behave predictably as they scale. In many cases, a modular design in which agents handle specific domains (e.g., data ingestion, incident triage, customer routing) improves maintainability and accelerates experiments. For developers and business leaders, the emphasis should be on composability, monitoring, and governance. The goal is to map capabilities to real-world workflows rather than to chase novelty.
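The three patterns named above (planning module, execution layer, feedback loop) can be sketched in a few lines. This is an illustrative skeleton under simplifying assumptions: the planner is a static lookup rather than a model, tools are plain callables, and the names `Agent`, `Tool`, and the `triage_incident` playbook are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# A tool takes the goal and reports whether its step succeeded.
Tool = Callable[[str], bool]


@dataclass
class Agent:
    tools: dict[str, Tool]
    # Feedback loop: every (step, succeeded) pair is recorded for later decisions.
    history: list[tuple[str, bool]] = field(default_factory=list)

    def plan(self, goal: str) -> list[str]:
        """Planning module: translate a goal into an ordered list of steps."""
        # Hypothetical static lookup; a real planner might query a model instead.
        playbooks = {"triage_incident": ["fetch_logs", "classify", "notify"]}
        return playbooks.get(goal, [])

    def run(self, goal: str) -> bool:
        """Execution layer: run each planned step and stop at the first failure."""
        steps = self.plan(goal)
        for step in steps:
            succeeded = self.tools[step](goal)
            self.history.append((step, succeeded))
            if not succeeded:
                return False
        return bool(steps)  # an empty plan counts as "goal not handled"
```

Keeping `plan` and `run` separate is what lets an orchestration layer inspect or veto a plan before anything executes, and `history` gives observability tooling a single place to read outcomes from.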

Data requirements and learning curves

AI agents thrive on data, feedback, and iterative refinement. They typically rely on labeled data for initial behavior, simulated environments for safe experimentation, and real-time telemetry for online learning or rapid adaptation. Unlike static scripts, AI agents benefit from continuous improvement loops—A/B tests, rollback plans, and performance dashboards. Data quality and provenance are crucial; noisy data or biased labels can propagate into decisions with downstream consequences. MLOps practices, including model versioning, feature stores, and continuous integration/continuous delivery (CI/CD) pipelines for models, become standard in mature deployments. For leadership teams, this means budgeting for data infrastructure, governance tooling, and cross-functional collaboration between data science, engineering, and product roles.

Deployment considerations: latency, reliability, and cost

Deploying AI agents involves trade-offs: latency budgets, reliability guarantees, and cost of compute and data operations. In latency-sensitive scenarios, edge or hybrid deployments may be necessary to keep decision times within acceptable bounds. Reliability depends on robust error handling, circuit breakers, and graceful degradation when external services fail. Cost considerations include compute for inference, data transfer, and storage for logs and telemetry. Ai Agent Ops emphasizes designing for observability—clear traces, event-driven architectures, and deterministic fallbacks that minimize business impact during partial failures. When evaluating deployment options, teams should quantify risk-adjusted cost of missteps and build budgets that cover ongoing model maintenance, retraining, and data quality improvements.
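As one way to picture circuit breakers with deterministic fallbacks, the sketch below wraps an agent call so that repeated failures route traffic to a rule-based path for a cool-down period. The class name, the defaults, and the injectable `clock` parameter are assumptions made for this example; established resilience libraries offer more complete implementations.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """Route calls to a fallback after repeated failures of the primary path."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self.clock = clock              # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, primary: Callable[[], Any], fallback: Callable[[], Any]) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # circuit open: skip the primary entirely
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = primary()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0
        return result
```

Here `primary` would be the agent-driven path and `fallback` a fixed rule or cached answer, so a partial outage degrades to predictable behavior instead of an error.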

When AI agents shine: use cases and scenarios

AI agents excel in complex, multi-step workflows where static rules struggle. Use cases include autonomous incident response that coordinates across monitoring tools, smart routing in customer support that adapts to context, and dynamic onboarding processes that adjust to user behavior. In data pipelines, AI agents can orchestrate transformations, quality checks, and anomaly handling with minimal coding changes. In product operations, agents can optimize experiments, monitor feature flags, and coordinate between frontend and backend services. The ability to learn from outcomes makes AI agents particularly valuable in fast-moving domains where requirements evolve quickly. However, success hinges on thoughtful scoping, governance, and a shared understanding of acceptable risk.

When traditional automation remains superior

Traditional automation remains the workhorse for deterministic, high-frequency tasks with strict compliance requirements. Rule-based scripts are predictable, auditable, and typically cheaper to operate at scale. For regulated processes, the ability to demonstrate reproducibility and traceability is often non-negotiable. When experimentation is slow or budgets are tight, traditional automation provides a reliable baseline. In many organizations, a staged approach delivers the best of both worlds: start with rule-based automation to achieve quick wins, then gradually introduce AI agents in areas with higher ambiguity. The Ai Agent Ops framework guides teams to reserve AI agents for decision-heavy, context-rich steps where human intuition complements machine reasoning.

Hybrid approaches: layering AI agents with rules

A pragmatic path forward combines AI agents with rule-based controllers. Hybrid architectures use AI agents for interpretation and decision-making while fixed rules govern critical safety checks and compliance boundaries. This reduces risk while preserving adaptability. Layered designs also support gradual modernization: replace brittle scripts with AI-driven modules in isolated domains, measure impact, and expand execution contexts as confidence grows. By keeping a robust governance layer and clear ownership, organizations can balance innovation with reliability. The result is a practical, scalable approach that aligns with enterprise risk profiles and regulatory requirements.
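A small sketch of the layering described above: the agent proposes an action, and fixed rules get the final say before anything runs. The `Proposal` shape, the refund limit, and the action names are hypothetical examples, not part of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Proposal:
    """An action suggested by the agent, not yet executed."""
    action: str
    amount: float


# Each rule returns a rejection reason, or None when the proposal passes.
Rule = Callable[[Proposal], Optional[str]]


def allowed_actions(allowed: frozenset) -> Rule:
    def rule(p: Proposal) -> Optional[str]:
        if p.action not in allowed:
            return f"action '{p.action}' is not allowed"
        return None
    return rule


def max_refund(limit: float) -> Rule:
    def rule(p: Proposal) -> Optional[str]:
        if p.action == "refund" and p.amount > limit:
            return f"refund exceeds limit of {limit}"
        return None
    return rule


def review(proposal: Proposal, rules: list) -> list:
    """Return every rule violation; an empty list means the proposal may run."""
    return [reason for rule in rules if (reason := rule(proposal)) is not None]
```

Because the rules are ordinary deterministic functions, they can be unit-tested and audited independently of whatever model produces the proposals.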

Practical evaluation: how to compare options

Evaluation should be criteria-driven rather than trend-driven. Start with scope clarity: which tasks need autonomy, which require strict control. Define metrics for success—accuracy, latency, fault rate, and business impact—and establish a test plan that captures edge cases. Use pilot programs to compare AI agent and traditional automation performance on real workflows, not synthetic benchmarks. Consider total cost of ownership, including data infrastructure, monitoring, and retraining needs. Include qualitative factors like developer velocity, time-to-market for new features, and stakeholder confidence. Finally, design decision gates that trigger governance reviews or a shift back to rule-based automation if risk exceeds thresholds.
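The decision gates mentioned above could be encoded roughly as follows. The metric names mirror the ones listed in this section, but the thresholds and the escalation policy (one breach triggers a review, several trigger a revert) are assumptions chosen for illustration; each team would set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotMetrics:
    accuracy: float        # fraction of correct outcomes, 0.0 to 1.0
    p95_latency_ms: float
    fault_rate: float      # fraction of runs that failed, 0.0 to 1.0


@dataclass(frozen=True)
class DecisionGate:
    min_accuracy: float
    max_p95_latency_ms: float
    max_fault_rate: float

    def breaches(self, m: PilotMetrics) -> list:
        """List the names of every threshold the pilot violated."""
        found = []
        if m.accuracy < self.min_accuracy:
            found.append("accuracy")
        if m.p95_latency_ms > self.max_p95_latency_ms:
            found.append("latency")
        if m.fault_rate > self.max_fault_rate:
            found.append("fault_rate")
        return found

    def decide(self, m: PilotMetrics) -> str:
        # Assumed policy: one breach means review, more than one means revert.
        count = len(self.breaches(m))
        if count == 0:
            return "proceed"
        if count == 1:
            return "governance_review"
        return "revert_to_rules"
```

Writing the gate down as code before the pilot starts keeps the success criteria from drifting once results come in.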

Getting started: a pragmatic deployment checklist

1. Map workflows to determine where autonomy adds value versus where control is essential.
2. Establish governance policies, data provenance, and audit trails.
3. Build modular agents with clear interfaces and observability.
4. Start with a small pilot across a non-critical domain.
5. Instrument metrics and set success criteria before widening scope.
6. Plan for data quality improvements, retraining cycles, and cost controls.
7. Align with product goals and stakeholder expectations.
8. Create rollback and fail-safe mechanisms.
9. Invest in training for teams on agent orchestration and incident management.
10. Review outcomes regularly and iterate based on feedback.

Risks, governance, and ethics of agent-based automation

Agent-based automation raises governance questions around accountability, bias, and data privacy. Clear responsibility boundaries, explainable decision processes, and robust red-teaming help mitigate risk. Organizations should implement privacy-by-design, access controls, and robust logging to support compliance. Regular audits and third-party reviews can provide independent assurance. Ai Agent Ops notes that ethical considerations are not optional; they are fundamental to sustained adoption and trust, especially when AI agents influence customer experiences or operational decisions. Proactive risk management reduces the likelihood of costly failures and regulatory repercussions.

Future trends: agentic AI and the path forward

The trajectory of AI agents points toward more capable, programmable agents that integrate with broader business processes. Advances in tool use, multi-agent coordination, and autonomous planning will enable teams to tackle even more complex workflows with less hand-holding. As models become more capable, the emphasis shifts to governance, explainability, and secure orchestration across ecosystems. The Ai Agent Ops perspective suggests a future where agentic AI augments human decision-making, delivering measurable business outcomes while preserving essential controls and ethical standards.

Comparison

| Feature | AI agent | Traditional automation |
|---|---|---|
| Autonomy | High | Low |
| Decision speed | Rapid/variable | Stable/consistent |
| Data requirements | Large data needs with feedback | Lower data needs; rule-based |
| Maintenance | Ongoing model updates | Static scripts with occasional updates |
| Best for | Dynamic, unstructured environments | Well-defined, repeatable tasks |

Positives

- Enables autonomous decision-making across heterogeneous systems

- Can reduce cycle times by orchestrating multiple tasks in parallel

- Facilitates adaptable workflows and rapid experimentation

- Potential to lower human workload in complex operations

- Encourages modular, reusable automation components

What's Bad

- Higher upfront risk due to uncertainty in model behavior

- Requires robust governance, data provenance, and explainability

- Ongoing data/labeling requirements and retraining costs

- Potential cost premium for compute, storage, and monitoring

AI agents outperform traditional automation in dynamic, complex environments where adaptivity matters; traditional automation remains the safe, cost-effective baseline for deterministic tasks.

Choose AI agents when you need autonomous decision-making and cross-system orchestration. Opt for traditional automation for predictable, compliant processes with lower ongoing risk and cost.

Questions & Answers

What exactly is an AI agent and how does it differ from traditional automation?

An AI agent is a system that interprets goals, reasons about actions, and executes tasks across services with minimal human input. Traditional automation relies on fixed rules and scripts. The agent can adapt to changing contexts, while traditional automation remains predictable and auditable.

An AI agent makes decisions and takes actions on its own, whereas traditional automation follows predefined rules. Start with a pilot to see how it performs in your context.

When should I consider AI agents for my business?

Consider AI agents for workflows that are complex, context-dependent, or require adaptability across multiple systems. They are well-suited for incident response, customer routing, and dynamic process orchestration where rules alone struggle to capture edge cases.

Think AI agents when your process needs to adapt to new situations without rewriting code.

What are the main risks of deploying AI agents?

Key risks include unexpected behavior, data privacy concerns, model drift, and governance gaps. Mitigate with strong telemetry, human-in-the-loop checks, auditable logs, and staged rollouts.

Watch for unexpected decisions and keep governance tight to stay in control.

Can AI agents work with existing rule-based automation?

Yes. Hybrid architectures combine AI-driven decision-making with rule-based controllers to ensure safety, compliance, and predictable outcomes even when AI behavior is uncertain.

Yes, you can mix AI agents with rules to get the best of both worlds.

How do I evaluate the cost of AI agents versus traditional automation?

Evaluate the total cost of ownership, including data infrastructure, training, monitoring, and potential retraining, alongside expected business impact. Use pilots to compare cost per successful outcome.

Look at overall costs, not just the upfront price, and run pilots to compare outcomes.
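As a worked illustration of "cost per successful outcome", the sketch below sums a few cost categories and divides by the number of successes observed in a pilot. The category names and every figure are placeholders invented for this example, not measurements or benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCost:
    infrastructure: float
    monitoring: float
    retraining: float
    successful_outcomes: int

    @property
    def total(self) -> float:
        """Total cost of ownership for the pilot period."""
        return self.infrastructure + self.monitoring + self.retraining

    @property
    def per_success(self) -> float:
        if self.successful_outcomes <= 0:
            raise ValueError("pilot produced no successful outcomes")
        return self.total / self.successful_outcomes


# Placeholder figures for illustration only.
agent_pilot = PilotCost(infrastructure=900.0, monitoring=300.0,
                        retraining=400.0, successful_outcomes=800)
rules_pilot = PilotCost(infrastructure=500.0, monitoring=100.0,
                        retraining=0.0, successful_outcomes=500)

cheaper = min((agent_pilot, rules_pilot), key=lambda p: p.per_success)
```

Comparing on `per_success` rather than `total` is the point of the exercise: an option with a higher bill can still win if it resolves proportionally more cases, and the reverse can also be true.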

What are common pitfalls when adopting AI agents?

Underestimating data needs, overestimating model capabilities, neglecting governance, and insufficient monitoring can derail adoption. Start with small, well-scoped pilots and build from there.

Avoid big bets without good data and strong governance; start small and learn fast.

Key Takeaways

- Prioritize governance when deploying AI agents

- Hybrid approaches reduce risk while still enabling adaptability

- Pilot extensively before broad rollout

- Measure business impact with clear success criteria

- Balance autonomy with control to manage risk