Are AI Automation and AI Agents the Same? A Comparative Analysis

Explore the differences between AI automation and AI agents, with practical guidance for developers and leaders on when to use each approach and how to design effective hybrid workflows.

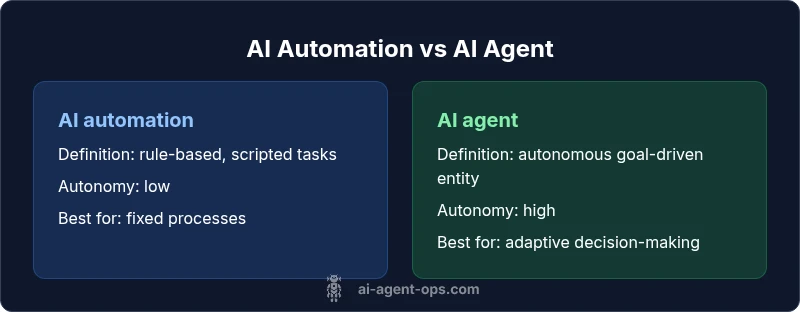

Are AI automation and AI agents the same? Not exactly. AI automation describes systems that execute predefined tasks with minimal human input, usually via rules or scripts. An AI agent is an autonomous software entity that can decide, act, and adapt to achieve specific goals. According to Ai Agent Ops, understanding these distinctions is essential for building effective AI strategies.

Definitions and scope: what the terms cover

The question of whether AI automation and AI agents are the same often comes up early in planning discussions, and the answer matters for architecture and governance. AI automation typically refers to software that follows prescribed rules, templates, and orchestration flows to complete tasks with limited human intervention. It excels in repeatability, speed, and error reduction for well-defined processes. An AI agent, by contrast, is an autonomous component that can perceive its environment, select actions, and pursue goals with minimal human input. It uses decision-making, planning, and sometimes learning to adapt to new situations. The distinction matters for teams evaluating technology stacks, because it influences how you design data flows, interfaces, and monitoring. According to Ai Agent Ops, clarity on these roles helps prevent project scope creep. The practical takeaway is simple: if a task is strictly rule-based, automation is often sufficient; if a task requires goal-oriented behavior, agents may be more appropriate.

Core dimensions: scope, autonomy, and learning

To compare AI automation and AI agents, we need a shared frame. The most useful axes are scope (breadth of tasks), autonomy (decision-making power), and learning (ability to improve over time). Scope differentiates routine, scripted workflows from dynamic problem solving. Autonomy describes how much the system decides without human prompts. Learning introduces adaptation, feedback loops, and improvement over time. In many real-world environments, teams deploy a spectrum: automations handle stable, repeatable steps, while agents tackle evolving objectives that require exploration and negotiation with data sources, users, or other software agents. This framing helps avoid over-committing to a single paradigm and supports hybrid designs where automation handles the knowns and agents handle the unknowns. Ai Agent Ops notes that mature AI programs blend both approaches where it makes sense.

Lifecycle implications: development, deployment, operation

Whether AI automation and AI agents are the same is also a lifecycle question. Automation typically follows a build-deploy-run-to-completion model. You develop scripts or workflows, deploy them to orchestration platforms, and monitor for correctness. Agents introduce richer lifecycles: perception of state, planning under uncertainty, action execution, and evaluation of outcomes. They may require ongoing policy management, retraining, and safety guards. Operational differences include testing approaches (unit tests for rules vs. scenario testing for agent behaviors), observability (traceability of decisions vs. end-to-end task tracking), and governance (change control for rules vs. risk management for autonomous actions). For teams, this means aligning skillsets, not just tooling. Ai Agent Ops emphasizes that the right choice depends on whether your system must strictly follow a script or autonomously adapt to changing conditions.
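The agent lifecycle described above (perceive, plan, act, evaluate) can be sketched as a small loop. This is a minimal illustration, not a production design: the `ToyAgent` class, its numeric "environment", and the `max_steps` safety cap are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical agent that nudges a numeric state toward a goal."""
    goal: int
    max_steps: int = 20  # safety guard: hard cap on autonomous actions
    history: list = field(default_factory=list)

    def perceive(self, environment: dict) -> int:
        # Perception: read the current state from the environment.
        return environment["value"]

    def plan(self, observed: int) -> int:
        # Planning: choose a step of +1, -1, or 0 toward the goal.
        if observed < self.goal:
            return 1
        if observed > self.goal:
            return -1
        return 0

    def act(self, environment: dict, action: int) -> None:
        # Action: apply the chosen step to the environment.
        environment["value"] += action

    def run(self, environment: dict) -> bool:
        """Loop until the goal is reached or the step cap is hit."""
        for _ in range(self.max_steps):
            observed = self.perceive(environment)
            action = self.plan(observed)
            if action == 0:
                return True
            self.act(environment, action)
            # Evaluation: record (state before, action, state after) for review.
            self.history.append((observed, action, environment["value"]))
        return environment["value"] == self.goal


env = {"value": 3}
agent = ToyAgent(goal=7)
reached = agent.run(env)
```

The `history` list is what makes scenario testing and decision traceability possible: each entry ties an observation to the action taken and its result. A scripted automation would not need it, because its steps are known in advance.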

Use-case mapping: when to choose automation vs. agents

A practical way to choose is to ask: are we solving a fixed problem or a moving target? Use automation when tasks are well-defined, high-volume, and require speed and reliability. Examples include data ETL pipelines, report generation, and repetitive data entry. Use AI agents when the objective is ambiguous or evolving, decisions must be made under uncertainty, or the system must interact with diverse data sources and users. Scenarios include customer support orchestration, dynamic workflow management, and proactive system maintenance. In mixed environments, agents can handle goal-oriented subtasks that feed into automated pipelines. This mapping makes the distinction between automation and agents concrete and clarifies planning for interfaces, data schemas, and monitoring requirements. Ai Agent Ops highlights the importance of defining success criteria early and revisiting them as requirements shift.
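The mapping above can be written down as a simple triage function. This is an illustrative heuristic under stated assumptions, not a validated decision model: the `Task` fields and the three labels are hypothetical, and real assessments would weigh more factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    rules_are_fixed: bool  # can every step be written down in advance?
    needs_judgment: bool   # decisions under uncertainty or shifting goals?


def recommend_approach(task: Task) -> str:
    """Return 'automation', 'agent', or 'hybrid' for a task."""
    if task.rules_are_fixed and not task.needs_judgment:
        return "automation"
    if task.needs_judgment and not task.rules_are_fixed:
        return "agent"
    # Mixed signals: split the task into scripted and decision-driven parts.
    return "hybrid"


tasks = [
    Task("ETL pipeline", rules_are_fixed=True, needs_judgment=False),
    Task("customer support orchestration", rules_are_fixed=False, needs_judgment=True),
    Task("loan processing", rules_are_fixed=True, needs_judgment=True),
]
recommendations = {task.name: recommend_approach(task) for task in tasks}
```

Keeping the rule explicit like this makes it easy to revisit when requirements shift, since the criteria live in one place.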

Architecture and data flows: surfaces, interfaces, and control

Examining the architectures reveals the practical differences between the two concepts. AI automation typically relies on deterministic pipelines: input → transformation → output, with error handling built into the flow. Interfaces are usually API-driven or UI-centric, and data contracts are explicit. AI agents require a richer architecture: a perception layer that ingests state from the environment, a belief/goal representation, a planning component to select actions, and an action layer to execute decisions. Data flows include feedback loops that connect outcomes back to the agent’s model, enabling learning or policy improvement. Interoperability concerns grow with agents, especially in multi-agent ecosystems or when agents mediate between humans and systems. The takeaway is that while automation simplifies the path, agents introduce a level of abstraction that demands careful governance and safety controls.
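To make the automation side concrete, here is a minimal sketch of a deterministic input → transformation → output pipeline with an explicit data contract and error handling in the flow. The `OrderRecord` schema and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderRecord:
    """Explicit data contract for one pipeline input (hypothetical schema)."""
    order_id: str
    amount_cents: int


def validate(raw: dict) -> OrderRecord:
    # Input stage: enforce the contract or fail loudly.
    if not isinstance(raw.get("order_id"), str) or not raw["order_id"]:
        raise ValueError("order_id must be a non-empty string")
    amount = raw.get("amount_cents")
    if not isinstance(amount, int) or amount < 0:
        raise ValueError("amount_cents must be a non-negative integer")
    return OrderRecord(raw["order_id"], amount)


def transform(record: OrderRecord) -> dict:
    # Transformation stage: pure function, same input gives same output.
    return {"order_id": record.order_id, "amount": record.amount_cents / 100}


def run_pipeline(rows: list[dict]) -> tuple[list[dict], list[str]]:
    """Process rows in order; collect errors instead of aborting the batch."""
    outputs, errors = [], []
    for index, raw in enumerate(rows):
        try:
            outputs.append(transform(validate(raw)))
        except ValueError as exc:
            errors.append(f"row {index}: {exc}")
    return outputs, errors


outputs, errors = run_pipeline([
    {"order_id": "A1", "amount_cents": 1250},
    {"order_id": "", "amount_cents": 300},
])
```

Nothing here decides anything: every branch is fixed ahead of time, which is why this style is easy to unit test. An agent would add state, goals, and a feedback path on top of steps like these.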

Tooling landscape: automation tools vs. agent frameworks

The tooling to support these paradigms reflects their differences. Automation typically relies on orchestration engines, workflow managers, and scripting languages that enforce repeatable patterns. Agent-oriented tooling often combines large language models, decision modules, planners, and environment simulators with robust monitoring. For automation, you’ll likely assemble a stack around CI/CD, data pipelines, and integration hubs. For AI agents, you need components that support intent understanding, state tracking, action execution, and safety monitoring. Hybrid stacks are common: automation handles mechanical, high-volume tasks, while agents handle decision-rich tasks that require adaptability. Ai Agent Ops notes that choosing the right mix, while keeping interfaces clean, data schemas stable, and observability comprehensive, strongly influences development velocity and operational reliability.

Metrics and evaluation: what to measure

Comparing AI automation and AI agents in practice depends on measurable outcomes. For automation, metrics focus on throughput, latency, error rate, and predictability. You measure how consistently tasks complete on schedule and how much human intervention is reduced. For AI agents, you expand metrics to include decision quality, goal completion rate, learning progress, exploration efficiency, and safety incidents. Evaluation should consider both short-term wins (speed, cost control) and long-term impact (adaptability, resilience). Monitoring should capture data about decisions, actions, and outcomes, not only outputs. Ai Agent Ops emphasizes that both paradigms benefit from a unified telemetry layer that correlates business outcomes with technical signals, enabling a holistic view of performance.
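A few of these metrics can be computed from plain telemetry records. The sketch below assumes a hypothetical record shape (`ok` and `seconds` for automation runs, `goal_met` and `safety_incidents` for agent episodes); a real telemetry layer would define its own schema.

```python
def automation_metrics(runs: list[dict]) -> dict:
    """Summarize pipeline runs; each run has 'ok' (bool) and 'seconds' (float)."""
    if not runs:
        raise ValueError("no runs to summarize")
    total = len(runs)
    failures = sum(1 for run in runs if not run["ok"])
    return {
        "error_rate": failures / total,
        "mean_latency_s": sum(run["seconds"] for run in runs) / total,
    }


def agent_metrics(episodes: list[dict]) -> dict:
    """Summarize agent episodes; each has 'goal_met' (bool) and 'safety_incidents' (int)."""
    if not episodes:
        raise ValueError("no episodes to summarize")
    total = len(episodes)
    return {
        "goal_completion_rate": sum(1 for ep in episodes if ep["goal_met"]) / total,
        "safety_incidents": sum(ep["safety_incidents"] for ep in episodes),
    }


runs = [
    {"ok": True, "seconds": 2.0},
    {"ok": True, "seconds": 4.0},
    {"ok": False, "seconds": 6.0},
    {"ok": True, "seconds": 4.0},
]
episodes = [
    {"goal_met": True, "safety_incidents": 0},
    {"goal_met": False, "safety_incidents": 1},
]
pipeline_summary = automation_metrics(runs)
agent_summary = agent_metrics(episodes)
```

Reporting both summaries from the same telemetry store is one way to approximate the unified view described above.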

Economic and governance considerations: cost, risk, and ROI

In deciding between AI automation and AI agents, economic considerations loom large. Automation often yields immediate ROI through reduced labor and error rates, with clear cost baselines for tooling and maintenance. Agents can deliver longer-term value via improved decision-making, adaptability, and end-to-end automation of complex workflows, but they may require ongoing investment in data quality, model maintenance, and governance. Risk management is elevated for agents due to autonomy: you must implement constraints, monitoring, and rollback capabilities. Establish governance policies that define escalation paths, safety limits, and audit trails. Ai Agent Ops stresses the importance of a phased approach: start with automation to stabilize low-risk processes, then introduce agents for higher-scope tasks where decision-making yields a differentiated advantage.
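The governance controls named above (safety limits, escalation, audit trails, rollback) can be illustrated with a small wrapper around a resource an agent is allowed to change. `GuardedAccount` and its fields are hypothetical, and the single numeric limit stands in for whatever policy a real system would enforce.

```python
class GuardedAccount:
    """Toy resource that an agent may modify only within a safety limit."""

    def __init__(self, balance: int, max_change: int):
        self.balance = balance
        self.max_change = max_change  # safety limit per action
        # Audit trail entries: (event, requested amount, balance afterwards).
        self.audit_log: list[tuple[str, int, int]] = []
        self._snapshots: list[int] = []

    def apply(self, amount: int) -> bool:
        """Apply an agent-requested change, or escalate if it exceeds the limit."""
        if abs(amount) > self.max_change:
            self.audit_log.append(("escalated", amount, self.balance))
            return False
        self._snapshots.append(self.balance)
        self.balance += amount
        self.audit_log.append(("applied", amount, self.balance))
        return True

    def rollback(self) -> None:
        """Undo the most recent applied change, if any."""
        if self._snapshots:
            self.balance = self._snapshots.pop()
            self.audit_log.append(("rolled_back", 0, self.balance))


account = GuardedAccount(balance=100, max_change=50)
first = account.apply(30)    # within the limit
second = account.apply(500)  # exceeds the limit, so it is escalated
account.rollback()           # undoes the first change
events = [entry[0] for entry in account.audit_log]
```

The point of the sketch is that every autonomous action leaves a record and has a defined way back, which is where much of the ongoing governance cost for agents comes from.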

Misconceptions and caveats: common myths debunked

A frequent misconception is that AI agents always replace humans. In reality, agents often augment human capabilities, handling decision-heavy subtasks while humans supervise exceptions. Another myth is that automation is always cheaper than agents; in many cases, the long-term value of agent-driven autonomy justifies the investment, especially when uncertainty and variability dominate tasks. A third caveat is assuming a single tool can cover both domains. In practice, most teams benefit from a carefully designed hybrid architecture that leverages automation for routine steps and agents for goal-driven behavior. Ai Agent Ops encourages teams to remain skeptical of hype and to ground decisions in concrete objectives, data availability, and governance needs.

Hybrid patterns: orchestrating automation with agents

Hybrid patterns are increasingly popular because they combine strengths. A practical pattern is automation for data extraction and preparation, followed by an AI agent that makes decisions about subsequent steps and initiates actions across systems. Agents can orchestrate automated tasks by issuing commands to pipelines, monitor outcomes, and reconfigure flows in response to feedback. Another pattern is agent-mediated human-in-the-loop control, where agents propose actions but require human approval for high-risk steps. Finally, a monitoring loop ensures that both automation and agents stay aligned with policy and compliance requirements. This blend often yields robust, scalable systems that adapt to changing business needs without sacrificing reliability. Ai Agent Ops highlights that governance and safety remain essential in any hybrid design.
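The first and second patterns can be combined in a short sketch: an automated step prepares data, an agent stand-in proposes an action, and a human approval gate guards high-risk proposals. All names, the reorder threshold, and the risk cutoff are assumptions for illustration; a real agent might use a planner or an LLM where `propose_reorder` uses a simple sum.

```python
from typing import Callable


def prepare(raw_rows: list[str]) -> list[int]:
    # Automation step: deterministic cleanup of raw stock counts.
    return [int(row.strip()) for row in raw_rows if row.strip()]


def propose_reorder(stock_levels: list[int], threshold: int = 10) -> dict:
    # Agent stand-in: decide how many units to reorder and flag large orders.
    shortfall = sum(max(threshold - level, 0) for level in stock_levels)
    return {"action": "reorder", "units": shortfall, "high_risk": shortfall > 20}


def execute_with_approval(proposal: dict, approve: Callable[[dict], bool]) -> str:
    # Human-in-the-loop gate: high-risk proposals need explicit approval.
    if proposal["units"] == 0:
        return "no_action"
    if proposal["high_risk"] and not approve(proposal):
        return "rejected"
    return "executed"


levels = prepare([" 4", "12", "", "3 "])
small = propose_reorder(levels)
large = propose_reorder([0, 0, 0])

# The reviewer in this example declines everything it is asked about.
outcome_small = execute_with_approval(small, approve=lambda proposal: False)
outcome_large = execute_with_approval(large, approve=lambda proposal: False)
```

Passing the approver in as a callable keeps the gate independent of how approval is collected, whether that is a ticket, a chat prompt, or a dashboard.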

Decision framework: a practical 6-step checklist

- Define the primary objective: is the goal process optimization, autonomous decision-making, or both?

- Map tasks to automation or agents based on rule rigidity and decision complexity.

- Assess data availability and quality to support agents’ perception and learning.

- Establish clear governance: escalation paths, safety constraints, and auditability.

- Design observability: telemetry for both automation pipelines and agent decisions.

- Start with a pilot in a low-risk area and expand as learning and confidence grow.

This framework helps teams avoid the trap of trying to fit everything into one paradigm and supports a pragmatic path from automation to agent-based decision-making as needed. Ai Agent Ops recommends incremental adoption paired with rigorous monitoring.

Case illustrations: tangibles from multiple industries

- Finance: A data-cleaning automation pipeline feeds an AI agent that decides on loan approvals under risk constraints, with automated rejections looping back for human review when needed.

- Healthcare: Patient data ingestion is automated, while an agent analyzes triage notes and suggests care pathways under clinical governance. Human clinicians retain final oversight.

- Retail: Inventory data syncing runs as automation; an agent coordinates replenishment actions across suppliers, warehouses, and storefronts, optimizing stock levels and delivery timing.

- Manufacturing: Sensor data streams are processed by automation; agents tune maintenance schedules based on perceived risk and production targets. This hybrid setup improves uptime while controlling operational risk.

Implications for teams and next steps

Teams weighing AI automation against AI agents should start with a capabilities map that distinguishes routine, rule-based tasks from decision-driven work. Invest in robust data governance, observability, and safety controls early. Build cross-functional teams that include data scientists, software engineers, and operations specialists to manage both automation and agent layers. Finally, cultivate a culture of continuous learning and iterative improvement. The nuanced answer to the question is that AI automation and AI agents are complementary tools. Placing them on a spectrum rather than forcing a binary choice yields the most durable and scalable solutions. Ai Agent Ops recommends a deliberate, evidence-based approach that aligns with modern agentic AI workflows.

Comparison

| Feature | AI automation | AI agent |
|---|---|---|
| Definition | Systems that execute predefined tasks via rules or scripted flows | Autonomous software entities that perceive, decide, and act to achieve goals |
| Autonomy in decision-making | Low autonomy; follows fixed steps | High autonomy; makes decisions under defined constraints |
| Control flow | Deterministic, orchestrated pipelines | Dynamic, goal-driven actions in changing environments |
| Learning and adaptation | Limited or no learning | Potential for learning from feedback and adapting |
| Human interaction | Typically human-in-the-loop at some points | Can operate with minimal human input, ongoing monitoring often required |
| Typical tasks | Repeatable, rule-based processes | Complex, evolving tasks with negotiation across systems |
| Tooling focus | Orchestration, scripting, data pipelines | Agent frameworks, LLMs, planners, safety monitors |
| Best for | Stable, high-volume workflows | Goal-directed problems with uncertainty |
| Implementation complexity | Low to medium for simple cases; increases with scale | Medium to high due to autonomy, safety, and governance |
| Cost/value dynamics | Predictable costs, quick ROI on labor reduction | Longer ROI potential from autonomy and adaptability |

Positives

- Reduces manual toil and human error in repeatable tasks

- Accelerates throughput for well-defined processes

- Clarifies ownership and governance by scope separation

- Supports safer, hybrid architectures when well designed

What's Bad

- Autonomy introduces governance and safety overhead

- Hybrid designs require careful integration and monitoring

- Agent-based approaches may demand more data quality and model maintenance

- Upfront planning needed to avoid scope creep

Adopt a hybrid framework that uses automation for routine tasks and agents for goal-driven decisions

Both concepts are distinct yet complementary. Automation excels in reliability and speed for scripted tasks, while AI agents unlock adaptive decision-making in changing environments. A staged, hybrid approach typically yields the best balance of efficiency and resilience.

Questions & Answers

What is AI automation in simple terms?

AI automation uses software and rules to perform tasks with minimal human input. It emphasizes speed, accuracy, and repeatability for well-defined processes.

AI automation uses software to perform tasks automatically, focusing on speed and consistency for routine jobs.

What is an AI agent and how does it differ from automation?

An AI agent is an autonomous software entity that perceives its environment, makes decisions, and acts toward goals. Unlike rigid automation, agents adapt to changing conditions.

An AI agent is an autonomous decision-maker that acts to achieve goals, not just follow fixed steps.

Can AI automation become an AI agent over time?

Automation can be a stepping stone toward agents when you add decision logic, perception, and feedback loops. However, becoming a fully autonomous agent requires governance and safety measures.

You can evolve automation toward agents by adding perception and decision components, with proper governance.

What are common pitfalls when combining automation and agents?

Common pitfalls include scope creep, inadequate data quality, insufficient monitoring, and weak governance for autonomous decisions. Start small and scale with strong telemetry.

Be careful with scope, data quality, and safety when combining automation and agents.

What factors influence the choice between automation and agents?

Factors include task rigidity, data availability, required speed, risk tolerance, and the need for autonomous decision-making. Align the choice with business goals and governance requirements.

Look at task rigidity, data quality, and risk when deciding between automation and agents.

Key Takeaways

- Map tasks to automation or agents based on rule rigidity and decision complexity

- Invest in data governance and observability from day one

- Pilot in low-risk areas before scaling to complex workflows

- Design for safety, governance, and human-in-the-loop readiness

- Leverage hybrid architectures to maximize flexibility and resilience