Are AI Agents and Agentic AI the Same? A Comparison

An analytical comparison clarifies whether AI agents and agentic AI are the same, exploring definitions, autonomy, governance, use cases, and testing approaches for developers and leaders.

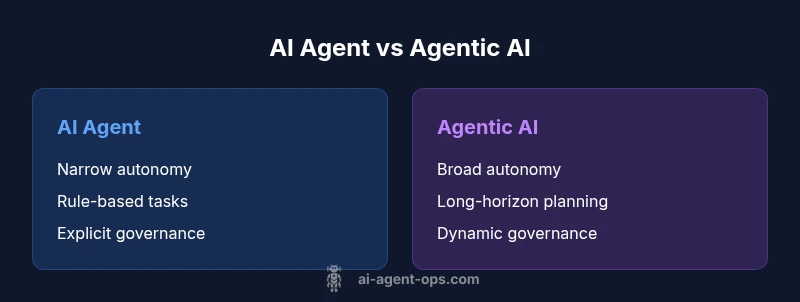

According to Ai Agent Ops, many teams ask whether AI agents and agentic AI are the same. The short answer is that the terms describe related but distinct concepts in intelligent automation. An AI agent typically operates within a narrow, rule-based scope with explicit boundaries, while agentic AI envisions broader autonomy, long-horizon planning, and self-improvement loops. Understanding this distinction helps teams design safer architectures, select appropriate tooling, and communicate clearly with stakeholders.

Are AI Agents and Agentic AI the Same? Clarifying the Core Terms

In practice, the question is often framed as a binary yes/no, but the real distinction lies in scope, autonomy, and governance. According to Ai Agent Ops, asking whether the two are "the same" is a shorthand that can mask subtle but important differences in capability and risk. A traditional AI agent typically operates within a bounded problem space with predefined goals, triggering actions based on signals from its environment. Agentic AI, by contrast, envisions higher levels of self-direction, planning across longer time horizons, and potentially coordinating multiple subsystems to pursue evolving objectives. Keeping these differences in view helps product teams align architecture decisions with safety, accountability, and regulatory considerations.
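To make the contrast concrete, here is a minimal Python sketch under stated assumptions. The rule table, the signal and action names, and the `AgenticLoop` class are all hypothetical illustrations, not part of any real framework. The bounded agent maps one signal to one predefined action, while the toy agentic loop holds a goal, plans several steps, and stops at a step budget.

```python
from dataclasses import dataclass, field

# Hypothetical signal-to-action table for a bounded agent.
RULES = {"disk_full": "rotate_logs", "cpu_high": "scale_out"}


def bounded_agent(signal: str) -> str:
    """Map one signal to one predefined action; anything else goes to a human."""
    return RULES.get(signal, "escalate_to_human")


@dataclass
class AgenticLoop:
    """Toy agentic loop: holds a goal, plans several steps, stops at a step budget."""
    goal: str
    step_budget: int = 3
    log: list = field(default_factory=list)

    def plan(self) -> list:
        # Placeholder planner; a real system would call a model or a search here.
        return [f"{self.goal}/subtask-{i}" for i in range(1, 6)]

    def run(self) -> list:
        for step in self.plan()[: self.step_budget]:
            self.log.append(step)  # every executed step is recorded for later audit
        return self.log
```

The step budget and the log stand in for the governance side of the distinction: the wider the planning horizon, the more the surrounding controls matter.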


Comparison

| Feature | AI Agent | Agentic AI |
|---|---|---|
| Autonomy | Narrow, task-bound autonomy | Broad, goal-directed autonomy |
| Decision Scope | Restricted to predefined tasks | Expanded contexts with goal-driven behavior |
| Learning & Adaptation | Limited adaptation with periodic updates | Continuous/self-directed learning across tasks |
| Control & Oversight | Explicit external control | Agentive governance with alignment checks |
| Typical Use Cases | Automation of bounded tasks in stable environments | Long-horizon planning and multi-task orchestration |
| Safety & Governance | Predictable safeguards | Dynamic safety constraints and monitoring |
| Implementation Pattern | Narrow AI agents with API calls | Orchestrated agent networks and coordination |

Positives

- Clarifies scope to improve architecture decisions

- Supports sharper safety and governance controls

- Reduces scope creep by defining boundaries

- Improves cross-functional communication about capabilities

- Facilitates targeted evaluation against clear criteria

Negatives

- Terminology confusion can persist if teams over-apply labels

- Adopting precise terms may slow momentum in early projects

- Over-reliance on labels can distract from practical design choices

- Misaligned incentives if governance expectations lag behind capability

Use precise terminology: AI agents are typically narrow in scope, while agentic AI implies broader autonomy and governance.

The distinction matters for design, risk, and governance. When in doubt, map terms to actual capabilities and control mechanisms, not just intended outcomes. Ai Agent Ops recommends documenting scope, decision rights, and safety constraints to guide implementation.
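One way to document scope, decision rights, and safety constraints is as a small structured record that lives next to the code. The sketch below is only an illustration: the `AutonomySpec` class, its field names, and the `invoice-bot` example are assumptions made for this article, not an Ai Agent Ops format.

```python
from dataclasses import dataclass


@dataclass
class AutonomySpec:
    """Hypothetical record of what a system may do and who decides."""
    name: str
    scope: tuple               # tasks the system is allowed to attempt
    decision_rights: dict      # action -> "auto" or "human_approval"
    safety_constraints: tuple  # plain-language constraints for reviewers

    def may_act_alone(self, action: str) -> bool:
        # Unlisted actions default to "not allowed without a human".
        return self.decision_rights.get(action) == "auto"


# Illustrative values only.
example_spec = AutonomySpec(
    name="invoice-bot",
    scope=("read_invoice", "draft_reply"),
    decision_rights={"draft_reply": "auto", "send_payment": "human_approval"},
    safety_constraints=("no outbound email without review",),
)
```

Defaulting unlisted actions to "needs a human" keeps the record conservative: capability has to be granted explicitly rather than assumed.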

Questions & Answers

What is the practical difference between an AI agent and agentic AI?

An AI agent generally operates within a narrow task space with predefined goals and limited autonomy. Agentic AI implies broader self-direction, longer planning horizons, and the ability to orchestrate multiple subsystems toward evolving objectives. The distinction affects how you design control, governance, and evaluation.

AI agents are task-bound; agentic AI aims for broader, self-directed actions with more complex governance.

Can AI agents become agentic AI over time?

Yes, through systematic expansion of autonomy boundaries, improved planning capabilities, and enhanced governance. However, reaching true agentic AI requires explicit safety, alignment, and oversight mechanisms to prevent undesired behavior.

They can, but you need strong safety and governance to manage the leap to broader autonomy.

Where should I apply these terms in product development?

Use 'AI agent' when describing bounded automation tasks. Reserve 'agentic AI' for systems designed to operate with wider autonomy, self-improvement, and coordination across components. Clear labeling helps with risk assessment and regulatory planning.

Label by capability to keep teams aligned on risk and governance.

What governance considerations are unique to agentic AI?

Agentic AI requires explicit alignment checks, robust monitoring, fail-safes, and ongoing audits. Establish decision rights, logging, and external review processes to ensure safety and accountability as autonomy scales.

Align goals, monitor behavior, and implement safeguards when autonomy grows.
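As a rough illustration of decision rights, logging, and a fail-safe working together, the following Python sketch wraps each action in a guard. The function name `guarded_call`, the `ActionBlocked` exception, and the logger name are hypothetical, and a production system would need far more than this.

```python
import logging
from typing import Callable

logger = logging.getLogger("agent_audit")  # hypothetical logger name


class ActionBlocked(Exception):
    """Raised when an action falls outside the agent's decision rights."""


def guarded_call(action: str, allowed: set, run: Callable[[], str], audit: list) -> str:
    """Run `run` only if `action` is allowed; record every attempt in `audit`."""
    if action not in allowed:
        audit.append((action, "blocked"))
        logger.warning("blocked action: %s", action)
        raise ActionBlocked(action)
    try:
        result = run()
    except Exception:
        # Fail-safe: record the failure and re-raise instead of continuing silently.
        audit.append((action, "failed"))
        logger.exception("action failed: %s", action)
        raise
    audit.append((action, "ok"))
    return result
```

The audit list gives external reviewers a trail of both permitted and refused actions, which is the part that tends to matter most as autonomy scales.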

Are there real-world examples of agentic AI?

Real-world examples often involve orchestration layers and multi-agent systems that coordinate tasks, but true agentic AI with deep self-direction and long-horizon planning is still an area of active development. Look for systems that demonstrate autonomous goal-setting with governance controls.

There are advanced autonomous systems, but fully agentic AI is still evolving.

How do I test for agentic behavior in my system?

Define explicit autonomy milestones, run scenario-based evaluations, and monitor for alignment with goals. Use safe experimentation, red-teaming, and continuous governance checks to validate behavior under evolving objectives.

Test autonomy in controlled scenarios with strong safety nets.
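A scenario-based evaluation can start as a simple harness: each scenario supplies an observation and the actions that must never follow from it. The sketch below assumes the system under test can be called as a function from observation to action. `Scenario`, `evaluate`, and `toy_agent` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    observation: str
    forbidden_actions: frozenset  # actions that count as a failure here


def evaluate(agent: Callable[[str], str], scenarios: list) -> dict:
    """Return {scenario name: True if the agent's action was not forbidden}."""
    return {
        s.name: agent(s.observation) not in s.forbidden_actions
        for s in scenarios
    }


def toy_agent(observation: str) -> str:
    # Stand-in for the system under test; deliberately unsafe on "cleanup".
    return "delete_records" if "cleanup" in observation else "ask_human"
```

Running the same scenario set after every change to goals or permissions turns the "continuous governance checks" into a regression suite rather than a one-off review.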

Key Takeaways

- Define autonomy clearly to guide architecture

- Use agentic AI terminology only when long-horizon goals are intended

- Prioritize governance and safety when expanding autonomy

- Communicate capabilities with stakeholders using precise terms

- Tie metrics to safety, alignment, and verifiable outcomes