Difference Between AI Agent and Agentic AI

A rigorous, objective comparison of AI agents and agentic AI, covering definitions, capabilities, governance, and practical implications for developers and leaders.

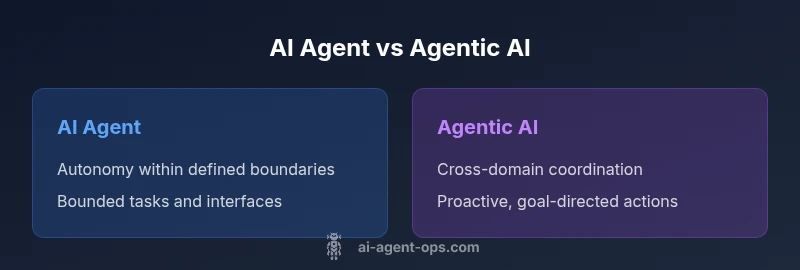

TL;DR: An AI agent is a defined autonomous entity that senses its environment, reasons, and acts to achieve a stated goal within a bounded domain. Agentic AI, by contrast, describes systems designed to exhibit proactive, goal-directed behavior that can influence other agents and coordinate actions across workflows. In practice, agentic AI expands autonomy and social intelligence beyond a single task. According to Ai Agent Ops, understanding this distinction is essential for designing scalable, safe automation architectures.

What the terms mean and the historical context

The difference between an AI agent and agentic AI is not just semantic; it reflects how organizations design automation systems for reliability, scalability, and governance. An AI agent is a software construct that perceives its environment, reasons about possible actions, and executes a sequence of steps to achieve a goal within a constrained scope. Historically, such agents were built for well-defined tasks such as data extraction, simple decision rules, or repetitive orchestration. The term "agent" implies autonomy: the system can initiate actions without direct human input, but that autonomy is bounded by rules, interfaces, and the training data that shape its behavior. As AI technology matured, researchers and practitioners began describing a broader class of systems: agentic AI. The term signals systems capable of higher-order planning, cross-task coordination, and interactions that influence other agents and stakeholders across a workflow. In short, the evolution from AI agent to agentic AI tracks increasing strategic autonomy and social integration in software ecosystems. The distinction matters for risk, governance, and deployment strategy, especially in regulated domains or high-stakes operations. The Ai Agent Ops team emphasizes that choosing the right model of automation starts with a precise understanding of these terms and their implications for your architecture.

Core capabilities: perception, decision, action

At a fundamental level, both AI agents and agentic AI share three core capabilities: perception (sensing data from the environment), decision (selecting a course of action), and action (executing the chosen step). However, the scope and sophistication of these capabilities diverge as you move from a bounded agent to an agentic system. A traditional AI agent typically operates within a fixed boundary: it processes inputs from a defined interface, applies a decision policy, and returns a result. In contrast, agentic AI adds layers of planning and social intelligence. It can anticipate downstream needs, coordinate with other agents, and adjust its strategy based on feedback from multiple parts of a system. The practical upshot is that agentic AI can handle more complex processes, but it also introduces more moving parts: shared state, negotiation protocols, and potential for cascading decisions. Effective design requires explicit contracts for perception quality, decision latency, and action guarantees, regardless of the architecture.
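To make the perceive, decide, act cycle concrete, here is a minimal Python sketch of a bounded agent that monitors log lines. It assumes a hypothetical `service|LEVEL|message` line format and invented names (`LogMonitorAgent`, `Action`, `Observation`); it illustrates the pattern rather than any reference implementation. The boundaries are explicit: one input format, one fixed decision rule, and a closed set of actions.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """The closed set of actions this bounded agent is allowed to take."""
    IGNORE = "ignore"
    ALERT = "alert"


@dataclass(frozen=True)
class Observation:
    service: str
    level: str
    message: str


class LogMonitorAgent:
    """Hypothetical bounded agent: one input format, one rule, a fixed action set."""

    def __init__(self, alert_levels: frozenset[str] = frozenset({"ERROR", "CRITICAL"})) -> None:
        self.alert_levels = alert_levels
        self.alerts: list[Observation] = []

    def perceive(self, raw_line: str) -> Observation | None:
        # Perception: parse "service|LEVEL|message"; malformed input is rejected, not guessed at.
        parts = [p.strip() for p in raw_line.split("|", 2)]
        if len(parts) != 3:
            return None
        service, level, message = parts
        return Observation(service, level.upper(), message)

    def decide(self, obs: Observation) -> Action:
        # Decision: a fixed rule, with no planning and no coordination with other agents.
        return Action.ALERT if obs.level in self.alert_levels else Action.IGNORE

    def act(self, obs: Observation, action: Action) -> None:
        # Action: the only side effect this agent can have is recording an alert.
        if action is Action.ALERT:
            self.alerts.append(obs)

    def step(self, raw_line: str) -> Action | None:
        """Run one perceive -> decide -> act cycle."""
        obs = self.perceive(raw_line)
        if obs is None:
            return None
        action = self.decide(obs)
        self.act(obs, action)
        return action


agent = LogMonitorAgent()
for line in ["billing|ERROR|payment gateway timeout", "billing|info|ok", "not a log line"]:
    print(line, "->", agent.step(line))
```

Because every input, rule, and side effect is enumerable, a component like this can be tested exhaustively, which is a large part of why bounded agents make a safe starting point.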

Autonomy vs agency: a key distinction

A central distinction is autonomy versus agency. Autonomy describes the degree to which a system can operate independently of human control within predefined boundaries. Agency goes further: it implies acting to influence outcomes beyond the immediate task, including coordinating with other agents and making decisions that affect the broader workflow. For an AI agent, autonomy is usually bounded by interfaces and operating conditions; agentic AI takes a more proactive role, potentially proposing goals, negotiating tasks with other agents, and reallocating resources to optimize outcomes. From a systems engineering perspective, this difference translates into different governance requirements, monitoring needs, and failure modes. When you plan a multi-agent pipeline, you must specify how much autonomy is acceptable, how to detect misalignment, and how to intervene when agents pursue conflicting objectives. The Ai Agent Ops framework suggests starting with clearly defined boundaries and then incrementally increasing agency as the organization establishes robust safety controls and auditability.
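One way to encode bounded autonomy is an explicit allowlist, sketched below in Python. The names (`AutonomyPolicy`, `BoundedExecutor`) and the action strings are hypothetical. Actions in the autonomous set run unattended, actions in the escalation set wait for a human decision (modeled here as a callback), and anything in neither set is denied.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AutonomyPolicy:
    """Hypothetical policy: which actions run unattended and which need a human."""
    autonomous: set[str]  # executed without approval
    escalate: set[str]    # require explicit human approval
    # Any action in neither set is denied outright.


@dataclass
class BoundedExecutor:
    policy: AutonomyPolicy
    approve: Callable[[str, dict], bool]  # stand-in for a human review step
    log: list[tuple[str, str]] = field(default_factory=list)

    def request(self, action: str, params: dict) -> str:
        if action in self.policy.autonomous:
            outcome = "executed"
        elif action in self.policy.escalate:
            outcome = "executed" if self.approve(action, params) else "rejected"
        else:
            outcome = "denied"
        self.log.append((action, outcome))  # every decision is recorded for audit
        return outcome


policy = AutonomyPolicy(autonomous={"retry_job"}, escalate={"reallocate_budget"})
# Stand-in reviewer: approves budget moves of 100 units or less.
executor = BoundedExecutor(policy, approve=lambda action, params: params.get("amount", 0) <= 100)
print(executor.request("retry_job", {}))
print(executor.request("reallocate_budget", {"amount": 500}))
print(executor.request("delete_dataset", {}))
```

Increasing agency incrementally then amounts to moving an action from `escalate` to `autonomous` once its audit history justifies it, a change that is small, reviewable, and easy to revert.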

How agentic AI operates across ecosystems

Agentic AI thrives on orchestration across components, services, and data sources. It uses shared vocabularies, standardized interfaces, and negotiation protocols to align goals across agents with potentially competing incentives. In practice, this means a single agent may initiate tasks in one service, request updates from another, and adjust its plan based on new information. The result is a workflow that spans domains, teams, and tools—an automation fabric that can adapt as business needs evolve. But with this capability comes complexity: you must manage distributed state, ensure data provenance, and implement governance that covers intent, transparency, and accountability. When designed well, agentic AI can reduce latency, improve end-to-end throughput, and enable novel capabilities such as cross-functional decision-making and proactive anomaly resolution. The challenge lies in balancing autonomy with controls to prevent unintended consequences.
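Negotiation protocols can take many forms. The sketch below borrows the announce, bid, award shape of the classic Contract Net Protocol, simplified to a single in-process round per task. The agent names, skills, and load-based bidding rule are assumptions for illustration only, loosely modeled on a customer onboarding workflow.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    skill: str
    effort: float


@dataclass
class WorkerAgent:
    name: str
    skills: set[str]
    load: float = 0.0

    def bid(self, task: Task) -> float | None:
        # Decline tasks outside this agent's skills; otherwise bid the projected load.
        if task.skill not in self.skills:
            return None
        return self.load + task.effort

    def award(self, task: Task) -> None:
        self.load += task.effort


def allocate(tasks: list[Task], agents: list[WorkerAgent]) -> dict[str, str | None]:
    """Announce each task, collect bids, award it to the lowest bidder (ties: first agent)."""
    assignment: dict[str, str | None] = {}
    for task in tasks:
        bids: list[tuple[float, WorkerAgent]] = []
        for agent in agents:
            price = agent.bid(task)
            if price is not None:
                bids.append((price, agent))
        if not bids:
            assignment[task.name] = None  # no capable agent: surface to a human
            continue
        _, winner = min(bids, key=lambda pair: pair[0])
        winner.award(task)
        assignment[task.name] = winner.name
    return assignment


agents = [WorkerAgent("kyc-agent", {"verify"}), WorkerAgent("docs-agent", {"verify", "generate"})]
tasks = [
    Task("check-id", "verify", 2.0),
    Task("check-address", "verify", 1.0),
    Task("welcome-pack", "generate", 1.0),
    Task("ship-card", "logistics", 1.0),
]
print(allocate(tasks, agents))
```

Note the `None` assignment: even in a coordinated system, a task that no agent can accept should surface to a human rather than be silently dropped.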

Coordination and governance implications

As systems gain cross-domain influence, governance becomes a first-class concern. For AI agents, governance can focus on input validation, deterministic outcomes, and bounded risk. For agentic AI, governance expands to include inter-agent contracts, policy enforcement, explainability across decisions, and robust audit trails. A practical framework involves (1) explicit risk classifications for each interaction, (2) clear ownership of decisions and outcomes, and (3) observable metrics that reveal how agents influence one another. In regulated environments, governance may require traceability from perception to action, with auditable logs and tamper-evident records. The cost of governance scales with the degree of agency and cross-agent coordination. Organizations that adopt a staged approach, starting with AI agents to build trust and then progressing to agentic AI under formal governance, tend to achieve safer, more controllable automation.
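Tamper-evident records are commonly built by hash-chaining log entries, so that each entry commits to the one before it. The Python sketch below combines that technique with the first two framework items: a risk class and an accountable owner on every entry. The field names and `Risk` levels are hypothetical, and a production system would also need durable storage and access control.

```python
from __future__ import annotations

import hashlib
import json
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same content always hashes the same way.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, actor: str, owner: str, action: str, risk: Risk) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"actor": actor, "owner": owner, "action": action, "risk": risk.name, "prev": prev}
        digest = self._digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        # Recompute the chain; editing, inserting, or removing an earlier entry breaks it.
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("onboarding-agent", "ops-team", "request_documents", Risk.LOW)
log.append("onboarding-agent", "compliance-team", "approve_account", Risk.HIGH)
print(log.verify())  # True
log.entries[0]["action"] = "delete_documents"  # simulate tampering
print(log.verify())  # False
```

Hash chaining detects edits, insertions, and removals of earlier entries, but not truncation of the newest ones; anchoring the latest hash outside the log (for example, in a separate write-once store) closes that gap.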

Practical examples in software development

In modern software projects, a bounded AI agent might automate data normalization, route requests, or monitor logs within a microservice. These tasks are isolated and easily testable, making them attractive as a starting point. Agentic AI, by contrast, could manage end-to-end processes such as customer onboarding, where it coordinates data collection, verification, artifact generation, and task assignment across multiple teams. A practical approach is to implement a phased rollout: begin with a tightly scoped agent that demonstrates reliability, collect metrics on latency, accuracy, and failure modes, then evaluate whether cross-task collaboration and inter-agent governance are warranted. When planning such a transition, consider the interplay between latency requirements, data privacy, and the need for explainability. The end state should be a clear map of which functions remain bounded and which ones justify agentic behavior for efficiency and resilience.
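The phased rollout can be made explicit with a promotion gate: the pilot agent is only considered for wider scope once its measured latency, accuracy, and failure rate clear thresholds agreed in advance. This is a minimal Python sketch; the threshold values, the nearest-rank p95 definition, and the `PilotRun` fields are assumptions, not recommended numbers.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class PilotRun:
    latency_ms: float
    correct: bool  # output matched the expected result
    failed: bool   # crashed, timed out, or needed a manual rollback


@dataclass(frozen=True)
class Thresholds:
    max_p95_latency_ms: float
    min_accuracy: float
    max_failure_rate: float
    min_runs: int


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile, using integer arithmetic for the rank."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) without float rounding
    return ordered[rank - 1]


def promotion_decision(runs: list[PilotRun], t: Thresholds) -> tuple[bool, list[str]]:
    """Return (promote?, reasons the pilot is not ready yet)."""
    if len(runs) < max(t.min_runs, 1):
        return False, [f"only {len(runs)} pilot runs; need at least {t.min_runs}"]
    latency = p95([r.latency_ms for r in runs])
    accuracy = sum(r.correct for r in runs) / len(runs)
    failure_rate = sum(r.failed for r in runs) / len(runs)
    reasons: list[str] = []
    if latency > t.max_p95_latency_ms:
        reasons.append(f"p95 latency {latency:.0f} ms exceeds {t.max_p95_latency_ms:.0f} ms")
    if accuracy < t.min_accuracy:
        reasons.append(f"accuracy {accuracy:.1%} below {t.min_accuracy:.1%}")
    if failure_rate > t.max_failure_rate:
        reasons.append(f"failure rate {failure_rate:.1%} above {t.max_failure_rate:.1%}")
    return not reasons, reasons


thresholds = Thresholds(max_p95_latency_ms=250.0, min_accuracy=0.95, max_failure_rate=0.02, min_runs=20)
runs = [PilotRun(latency_ms=100.0 + i, correct=True, failed=False) for i in range(20)]
print(promotion_decision(runs, thresholds))
```

Returning the failing reasons, not just a boolean, gives reviewers the evidence they need when deciding whether cross-task collaboration is warranted.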

Risks and ethical considerations

Both AI agents and agentic AI carry risks, though agentic AI can magnify them. Risks include data leakage, misalignment of goals with human intent, and unintended escalation through inter-agent communication. Ethical considerations center on transparency, consent, accountability, and the potential for automation to disrupt human roles. Mitigation strategies include safety-by-design, rigorous testing in sandbox environments, and kill switches or governance gates that halt operations if outputs drift outside acceptable bounds. It is essential to document decision rationales, maintain auditable records, and implement monitoring that detects anomalous behavior early. The Ai Agent Ops team recommends treating agentic capabilities as strategic investments that require explicit risk governance, not purely technical optimizations. This approach helps organizations reap the benefits while maintaining trust and safety across the automation stack.
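A kill switch of the kind described above can be as simple as a latch tied to a rolling window of outputs. In this hypothetical Python sketch, each agent output is reduced to one numeric value with an acceptable range (an assumption; real systems may monitor many signals). Once the share of out-of-range values in the window exceeds a threshold, every further action is blocked until a human resets the switch.

```python
from __future__ import annotations

from collections import deque
from typing import Callable, TypeVar

T = TypeVar("T")


class KillSwitch:
    """Hypothetical latch: halts an agent when too many recent outputs drift out of bounds."""

    def __init__(self, lower: float, upper: float, window: int = 50, max_violation_rate: float = 0.1) -> None:
        self.lower, self.upper = lower, upper
        self.recent: deque[bool] = deque(maxlen=window)  # True = out of bounds
        self.max_violation_rate = max_violation_rate
        self.tripped = False

    def observe(self, value: float) -> None:
        self.recent.append(not (self.lower <= value <= self.upper))
        # Only judge a full window, so a handful of early samples cannot trip the switch.
        if len(self.recent) == self.recent.maxlen:
            rate = sum(self.recent) / len(self.recent)
            if rate > self.max_violation_rate:
                self.tripped = True  # latches: stays tripped until reset() is called

    def guard(self, action: Callable[[], T]) -> T:
        """Run the action only while the switch is not tripped."""
        if self.tripped:
            raise RuntimeError("kill switch tripped; action blocked pending human review")
        return action()

    def reset(self) -> None:
        # Intended to be called by a human operator after review.
        self.recent.clear()
        self.tripped = False


switch = KillSwitch(lower=0.0, upper=1.0, window=10, max_violation_rate=0.2)
for score in [0.4, 0.7, 0.9, 1.3, 0.5, 2.0, 0.6, 0.8, 1.7, 0.3]:
    switch.observe(score)
print(switch.tripped)  # True
```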

Evaluation and metrics for AI agents vs agentic AI

Evaluation frameworks for AI agents emphasize correctness, reliability, and bounded performance. Metrics may include task completion rate, latency, input-output accuracy, and resource utilization. For agentic AI, evaluators must add governance-oriented metrics: alignment with business goals, cross-agent coordination efficiency, policy compliance, and explainability scores. A practical assessment combines quantitative metrics with qualitative reviews: audit logs, rationale traces, and scenario-based tests that simulate real-world interactions. Regular red-teaming exercises and scenario planning help uncover failure modes that surface only when agents operate collaboratively. In all cases, choose metrics that reflect risk tolerance, operational constraints, and the intended level of autonomy. The goal is to measure not just whether an agent works, but whether it works well within the entire automation ecosystem.
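Combining task-level and governance-oriented metrics can start with a simple aggregation over evaluation traces. The Python sketch below assumes a hypothetical `Episode` record per evaluated run. The handoff count is only one possible proxy for coordination efficiency, and explainability scores are omitted because they usually depend on human or model-based review.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Episode:
    completed: bool
    latency_s: float
    policy_violations: int
    handoffs: int    # messages passed between agents (0 for a single bounded agent)
    escalated: bool  # a human had to step in


def evaluate(episodes: list[Episode]) -> dict[str, float]:
    n = len(episodes)
    if n == 0:
        raise ValueError("no episodes to evaluate")
    completed = [e for e in episodes if e.completed]
    total_handoffs = sum(e.handoffs for e in episodes)
    return {
        # Task-level metrics, relevant to any agent.
        "completion_rate": len(completed) / n,
        "mean_latency_s": mean(e.latency_s for e in episodes),
        # Governance-oriented metrics, relevant once agents coordinate.
        "policy_compliance_rate": sum(e.policy_violations == 0 for e in episodes) / n,
        "escalation_rate": sum(e.escalated for e in episodes) / n,
        # Coordination-efficiency proxy: handoffs per completed task (lower is leaner).
        "handoffs_per_completion": total_handoffs / len(completed) if completed else float("inf"),
    }


episodes = [
    Episode(completed=True, latency_s=2.0, policy_violations=0, handoffs=3, escalated=False),
    Episode(completed=True, latency_s=4.0, policy_violations=1, handoffs=5, escalated=True),
    Episode(completed=False, latency_s=6.0, policy_violations=0, handoffs=4, escalated=True),
]
print(evaluate(episodes))
```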

Integration patterns in real-world workflows

Integration patterns for AI agents and agentic AI differ in scope. AI agents often integrate through well-defined APIs and event-driven triggers, enabling modular composition and easy replacement. Agentic AI requires more sophisticated orchestration layers: shared state stores, cross-service negotiation, and centralized policy engines. To maximize success, design with loose coupling, clear contracts, and observable signals that teams can monitor. Architecture choices should consider data locality, privacy constraints, and the potential for feedback loops that could amplify errors. Governance and testing become continuous activities, not one-off checkpoints. The result is a resilient automation fabric where agents cooperate to achieve outcomes while staying within agreed boundaries.
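The combination of event-driven triggers and a centralized policy engine can be sketched as an in-process publish/subscribe bus that consults one policy function before delivering any event. Everything here is hypothetical and simplified (a real deployment would typically use a message broker and a dedicated policy service), but it shows the loose coupling: publishers never reference subscribers directly, and rejected events are kept as an observable signal.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]
Policy = Callable[[str, dict], bool]


class PolicyGatedBus:
    """In-process pub/sub: agents subscribe by topic; a central policy vets every event."""

    def __init__(self, policy: Policy) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)
        self._policy = policy
        self.rejected: list[tuple[str, dict]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver to all subscribers if the policy allows; return the delivery count."""
        if not self._policy(topic, event):
            self.rejected.append((topic, event))  # observable signal for operators
            return 0
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(event)
        return len(handlers)


def no_raw_identifiers(topic: str, event: dict) -> bool:
    # Hypothetical policy: raw identity numbers must never cross agent boundaries.
    return "ssn" not in event


bus = PolicyGatedBus(no_raw_identifiers)
bus.subscribe("customer.created", lambda e: print("verification agent received", e))
bus.publish("customer.created", {"id": 1})
bus.publish("customer.created", {"id": 2, "ssn": "000-00-0000"})
print(len(bus.rejected))
```

Swapping the policy function changes governance for every connected agent at once, without touching any agent's code.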

Choosing between designs: a decision framework

Choosing between an AI agent and agentic AI depends on scope, risk appetite, and organizational maturity. If the goal is reliable, task-specific automation with minimal cross-service coordination, start with AI agents and iterate. If business processes demand cross-domain coordination, proactive optimization, and multi-agent collaboration, agentic AI can deliver greater value—but only with strong governance, monitoring, and explainability. A practical decision framework combines (a) scope analysis (bounded vs cross-domain), (b) risk assessment (low vs high stakes), (c) governance readiness (policy, auditing, escape hatches), and (d) organizational capability (talent, tools, and processes). In all cases, begin with pilot projects, measure outcomes, and scale carefully. The key is to align technological capability with organizational controls and strategic objectives.
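The four factors can be turned into an explicit, reviewable rule. The Python sketch below is one hypothetical encoding: the boolean fields and the order of the checks are assumptions meant to mirror the framework, not a validated scoring model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    cross_domain: bool      # (a) scope: bounded (False) vs cross-domain (True)
    high_stakes: bool       # (b) risk: low (False) vs high (True)
    has_policy: bool        # (c) governance readiness: written policy ...
    has_auditing: bool      #     ... auditing in place ...
    has_escape_hatch: bool  #     ... and a kill switch or human override
    team_ready: bool        # (d) organizational capability: talent, tools, processes


def recommend(a: Assessment) -> str:
    if not a.cross_domain:
        return "AI agent: bounded scope does not need agentic coordination"
    governance_ready = a.has_policy and a.has_auditing and a.has_escape_hatch
    if not governance_ready:
        return "AI agent pilot first: close governance gaps before adding agency"
    if not a.team_ready:
        return "AI agent pilot first: build organizational capability"
    if a.high_stakes:
        return "Agentic AI pilot with human approval on high-risk actions"
    return "Agentic AI pilot"


print(recommend(Assessment(cross_domain=True, high_stakes=True, has_policy=True,
                           has_auditing=False, has_escape_hatch=True, team_ready=True)))
```

Writing the rule down in this form makes the decision auditable and easy to revisit as governance readiness changes.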

Comparison

| Feature | AI Agent | Agentic AI |
|---|---|---|
| Autonomy | Bounded autonomy within defined interfaces | Proactive, cross-domain autonomy across workflows |
| Scope of tasks | Narrow, task-specific | Broad, cross-functional and multi-task |
| Coordination with other agents | Limited to single-task orchestration | Orchestrates across multiple agents and services |
| Decision speed & complexity | Fast for simple rules; latency kept predictable | Can involve strategic planning and negotiation |
| Governance requirements | Lower governance burden; easier to audit at task level | Higher governance needs; cross-agent policy enforcement |
| Best use case | Automate bounded tasks in stable environments | End-to-end process optimization and cross-team automation |

Positives

- Clarifies design scope and ownership for automation projects

- Easier testing, validation, and rollback for bounded tasks

- Faster time-to-value for simple use cases

- Modular integration with existing infrastructure

- Lower initial risk and regulatory burden

Negatives

- Limited cross-domain impact without additional orchestration

- Higher potential for inefficiency if scope expands without governance

- Increased complexity when moving to agentic capabilities

- Requires more sophisticated monitoring and auditing

Agentic AI offers broader capabilities but demands robust governance; start with bounded AI agents and scale as governance and safety controls mature.

For many teams, bounded AI agents deliver reliable value with lower risk. Only pursue agentic AI after establishing governance, explainability, and cross-agent coordination practices that ensure safety and accountability.

Questions & Answers

What is the difference between an AI agent and agentic AI?

An AI agent operates within a bounded domain to sense, decide, and act on a defined task. Agentic AI refers to systems capable of proactive, cross-domain coordination and influence across multiple agents, often requiring stronger governance and transparency.

An AI agent is bounded and task-focused; agentic AI adds cross-task coordination and proactive behavior with stronger governance.

When should I start with an AI agent rather than agentic AI?

Start with a bounded AI agent when your automation needs are well-defined, risk-averse, and require fast time-to-value. Use governance gates and clear interfaces to ensure safe operation before expanding scope.

Begin with a bounded AI agent if you need quick value with low risk and well-defined tasks.

What governance considerations are most important for agentic AI?

Key considerations include policy enforcement, explainability across decisions, auditable traces, inter-agent contracts, and kill-switch mechanisms to halt actions if misalignment occurs.

Important governance needs include explainability, audits, and strong inter-agent contracts.

What metrics matter when evaluating AI agents?

Measure task success rate, reliability, latency, and resource usage. For agentic AI, add cross-agent coordination quality, policy adherence, and explainability scores.

Track success, latency, and cross-agent coordination quality for agentic systems.

Can agentic AI replace human oversight entirely?

No. Even with agentic AI, human oversight remains essential, especially for high-stakes decisions. Implement escalation paths and regular audits.

No—human oversight stays important for safety and accountability.

What are common pitfalls when scaling automation with agents?

Common pitfalls include misaligned goals, data provenance gaps, governance drift, and unmanaged inter-agent dependencies. Plan for robust logging, testing, and phased scale.

Watch out for misalignment, data issues, and governance drift as you scale.

Key Takeaways

- Define scope before choosing autonomy level

- Differentiate between bounded autonomy and agentic capability

- Invest in governance early for cross-agent systems

- Pilot, measure, and iterate before scaling

- Balance risk, value, and organizational readiness