How Agentic AI Differs from Traditional Virtual Assistants: A Thorough Comparison

An analytical, balanced comparison of agentic AI and traditional virtual assistants, focusing on autonomy, planning, governance, and ROI for developers and business leaders exploring agentic AI workflows.

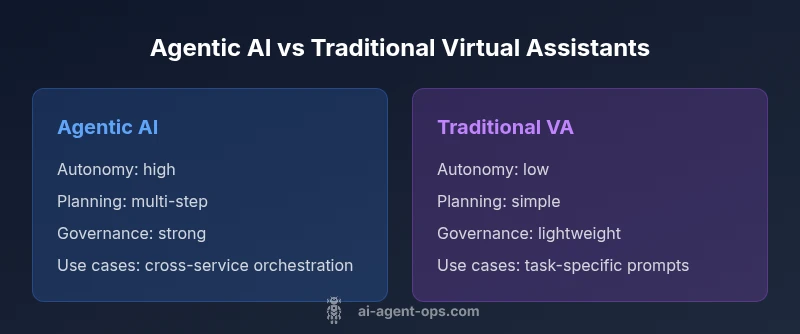

How is agentic AI different from traditional virtual assistants? Agentic AI is proactive and goal-driven: it plans and executes tasks with minimal human prompting, within defined guardrails. Traditional virtual assistants, by contrast, respond to explicit requests and perform simple actions. This distinction matters for automation scope, governance needs, and risk management in real-world workflows. According to Ai Agent Ops, the shift toward agentic capabilities expands automation potential, enabling more complex task orchestration without constant micromanagement.

Defining agentic AI and traditional virtual assistants

In the landscape of intelligent software, understanding how agentic AI differs from traditional virtual assistants is essential for teams designing automation. Agentic AI refers to systems that can set goals, plan sequences of actions, and execute tasks with minimal human prompting within defined guardrails. Traditional virtual assistants respond to user requests, fetch information, or perform straightforward actions when invoked. This distinction influences how organizations design automation pipelines, assign ownership, and measure outcomes. According to Ai Agent Ops, agentic AI expands automation boundaries by enabling higher-level workflows composed of multiple steps, rather than requiring users to choreograph every action. The Ai Agent Ops Team notes that this shift has practical implications for governance, risk management, and developer productivity. When evaluating capabilities, teams should ask what level of autonomy is appropriate for a use case and what safeguards are necessary to prevent unintended actions. The central question remains: how does agentic AI behave differently from a traditional virtual assistant in real-world workflows?
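To make the contrast concrete, here is a minimal Python sketch. The action names, the `PLANS` lookup table (standing in for a real planner), and the allow-list guardrail are all hypothetical illustrations, not a reference design.

```python
from typing import Callable

# Hypothetical action registry; names are illustrative only.
ACTIONS: dict[str, Callable[[], str]] = {
    "check_weather": lambda: "weather: sunny",
    "book_room": lambda: "room: booked",
    "send_invite": lambda: "invite: sent",
}


def reactive_assistant(command: str) -> str:
    """Traditional assistant: one explicit command maps to one action."""
    action = ACTIONS.get(command)
    return action() if action else "Sorry, I can't do that."


# Hypothetical goal-to-plan table standing in for a real planning engine.
PLANS: dict[str, list[str]] = {
    "schedule_offsite": ["check_weather", "book_room", "send_invite"],
}


def agentic_assistant(goal: str, allowed: set[str]) -> list[str]:
    """Agentic sketch: a single goal expands into a multi-step plan, and each
    step runs only if it is on the allow-list (a simple guardrail)."""
    results = []
    for step in PLANS.get(goal, []):
        if step not in allowed:
            results.append(f"{step}: blocked by guardrail")
            continue
        results.append(ACTIONS[step]())
    return results
```

The user of the reactive assistant must issue `check_weather`, `book_room`, and `send_invite` one at a time; the agentic version accepts one goal and decides the steps itself, which is exactly why it needs the guardrail check.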

Core capabilities: autonomy, planning, and learning

Agentic AI hinges on three core capabilities: autonomous goal setting, multi-step planning, and adaptive learning from results. Unlike traditional virtual assistants that execute a fixed set of commands, agentic systems can identify relevant tasks, sequence actions across services, and adjust plans based on feedback. This enables end-to-end automation for complex workflows, such as coordinating data pipelines, scheduling dependent tasks, and adapting to changing constraints. Learning happens through structured feedback loops, logging outcomes for governance reviews, and applying patterns to similar future scenarios. For developers, the key distinction is in the design surface: agentic AI emphasizes orchestration logic and decision boundaries, while traditional assistants emphasize command handling and data retrieval.
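Multi-step planning with dependent tasks can be sketched with Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+). The task names and the `execute` callback are hypothetical, and "adjusting the plan" here means one simple policy (skip every task whose prerequisites did not succeed); real systems might retry or replan instead.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_plan(
    deps: dict[str, set[str]],
    execute: Callable[[str], bool],
) -> dict[str, str]:
    """Run tasks in dependency order. `deps` maps each task to the tasks it
    depends on. If a prerequisite fails or is skipped, its dependents are
    skipped rather than executed blindly."""
    status: dict[str, str] = {}
    for task in TopologicalSorter(deps).static_order():
        prerequisites = deps.get(task, set())
        if any(status.get(p) != "done" for p in prerequisites):
            status[task] = "skipped"
            continue
        status[task] = "done" if execute(task) else "failed"
    return status


# Example: a small data pipeline where the "load" step fails.
pipeline = {
    "load": {"extract"},
    "report": {"load"},
    "notify": {"extract"},
}
result = run_plan(pipeline, execute=lambda task: task != "load")
```

With the failing `load` step, `report` is skipped while the independent `notify` branch still completes, which is the kind of feedback-driven adjustment a fixed command handler does not make.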

Interaction patterns and user experience

Interaction with agentic AI often starts with a high-level objective rather than a specific instruction. Users articulate goals, and the system proposes a plan, negotiates constraints, and executes tasks across multiple services. This creates a more fluid and proactive user experience but requires clear governance to ensure safety and accountability. Traditional virtual assistants rely on explicit prompts and short, transactional interactions. The UX trade-off is between convenience and control: agentic AI offers more powerful automation, but it demands more up-front effort to define goals and constraints, plus stricter monitoring to prevent drift from intended outcomes.
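One common way to balance convenience and control is to let the agent run a proposed plan but pause at sensitive steps for human approval. The sketch below assumes a hypothetical `Step` type with a `needs_approval` flag and caller-supplied `approve` and `run` callbacks; it illustrates the checkpoint idea rather than any specific product's behavior.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    needs_approval: bool  # e.g. irreversible or externally visible actions


def execute_with_checkpoints(
    steps: list[Step],
    approve: Callable[[Step], bool],
    run: Callable[[Step], None],
) -> list[str]:
    """Run a proposed plan, pausing at flagged steps for human approval.
    Execution stops at the first rejection so the user keeps control."""
    log = []
    for step in steps:
        if step.needs_approval and not approve(step):
            log.append(f"stopped at {step.name}: not approved")
            break
        run(step)
        log.append(f"ran {step.name}")
    return log
```

Unflagged steps (drafting, reading data) proceed automatically, while a flagged step such as sending an email waits for a yes or no, so the user reviews only the decisions that matter.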

Data handling, privacy, and safety considerations

With greater autonomy comes increased responsibility for data handling, privacy, and safety. Agentic AI systems demand robust access controls, auditable decision paths, and transparent failure modes. Organizations should implement guardrails, risk scoring for autonomous actions, and clear rollback mechanisms. Traditional virtual assistants typically require fewer governance layers because actions are more deterministic and localized. However, even reactive systems must guard against data leakage, insecure integrations, and prompt injection risks. Ai Agent Ops emphasizes designing agentic solutions with privacy-by-design principles and explicit governance policies from the outset.
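Access controls and risk scoring for autonomous actions can be combined into a single gate. In this minimal sketch the `Action` fields, the additive weights, and the review/deny thresholds are all hypothetical values chosen for illustration, not an industry standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    touches_pii: bool
    reversible: bool
    records_affected: int


def risk_score(a: Action) -> int:
    """Hypothetical additive score; the weights are illustrative only."""
    score = 0
    if a.touches_pii:
        score += 40
    if not a.reversible:
        score += 30
    if a.records_affected > 1000:
        score += 20
    return score


def decide(a: Action, allowed: set[str], review_at: int = 40, deny_at: int = 70) -> str:
    """Access control first, then risk: 'auto' runs unattended,
    'review' needs a human, 'deny' is refused outright."""
    if a.name not in allowed:
        return "deny: not permitted for this agent"
    score = risk_score(a)
    if score >= deny_at:
        return "deny"
    if score >= review_at:
        return "review"
    return "auto"
```

Checking permissions before scoring keeps the two concerns separate: the allow-list answers "may this agent ever do this?", while the score answers "should it do this now, unattended?"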

Architecture and components: how the pieces fit

Agentic AI architectures typically combine a planning engine, perception and context modules, action executors, and governance layers. The planning component translates goals into tasks, sequences them, and allocates responsibility across services. Perception modules provide context from user input and system state, while executors enact actions through APIs or internal services. A governance layer enforces safety, auditability, and compliance. Traditional virtual assistants center on intent recognition, slot filling, and action execution within a narrower scope. The architectural difference is not just about scale; it’s about enabling orchestration and autonomy across a broader set of services and data domains.
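The component split can be sketched as a few Python interfaces. This is an illustrative arrangement under stated assumptions: `Planner`, `Executor`, `Governance`, and `Agent` are hypothetical names, the `context` dict stands in for the perception module, and `RulePlanner` and `EchoExecutor` are toy implementations that exist only to make the sketch runnable.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Planner(Protocol):
    def plan(self, goal: str, context: dict) -> list[str]: ...


class Executor(Protocol):
    def execute(self, task: str) -> str: ...


@dataclass
class Governance:
    """Checks every task and records the decision in an audit trail."""
    blocked: set[str]
    audit: list[tuple[str, str]] = field(default_factory=list)

    def permit(self, task: str) -> bool:
        ok = task not in self.blocked
        self.audit.append((task, "permitted" if ok else "blocked"))
        return ok


@dataclass
class Agent:
    planner: Planner
    executor: Executor
    governance: Governance

    def run(self, goal: str, context: dict) -> list[str]:
        results = []
        for task in self.planner.plan(goal, context):
            if self.governance.permit(task):
                results.append(self.executor.execute(task))
        return results


class RulePlanner:
    """Toy planner: context (the 'perception' input) changes the plan."""
    def plan(self, goal: str, context: dict) -> list[str]:
        tasks = ["create_account", "send_welcome"]
        if context.get("enterprise"):
            tasks.append("provision_sso")
        return tasks


class EchoExecutor:
    """Toy executor: a real one would call APIs or internal services."""
    def execute(self, task: str) -> str:
        return f"{task}: ok"
```

Placing governance between the planner and the executor means every task passes through `permit` and is logged, including blocked ones, which is what makes the decision path auditable.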

Use cases and ROI implications: when agentic AI shines

Agentic AI excels in workflows requiring cross-system coordination, dynamic task reallocation, and proactive problem solving. Examples include end-to-end customer onboarding with multiple back-end services, IT automation spanning monitoring, ticketing, and remediation, and business process automation that adapts to fluctuating inputs. ROI considerations center on time-to-value, reduction in manual handoffs, and improved policy adherence. Ai Agent Ops analysis suggests that the value of agentic AI grows when processes are complex, multi-step, and prone to drift without centralized orchestration. For straightforward, repetitive tasks, traditional assistants may still be more cost-effective because their setup and maintenance costs are lower.

Challenges and governance: risks to manage

Several risks are inherent to agentic AI, including unintended actions, data governance gaps, and potential bias in decision-making. Establishing guardrails with clear ownership, decision logs, and rollback options is critical. Operational complexity increases with autonomy, calling for tooling around monitoring, anomaly detection, and incident response. The governance model should define who can modify goals, what constraints exist, and how success is measured. While traditional assistants are simpler to audit, agentic systems demand robust, auditable governance to ensure safety and compliance across all automated workflows.
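Decision logs and rollback options can be illustrated with the saga pattern: each step is paired with a compensating "undo" action, and a failure triggers the undos of completed steps in reverse order. The step names and the plain-list log below are hypothetical; a production system would write to durable, tamper-evident storage.

```python
from typing import Callable

# (name, do, undo): each action is paired with its compensating action.
SagaStep = tuple[str, Callable[[], None], Callable[[], None]]


def run_with_rollback(steps: list[SagaStep], log: list[str]) -> bool:
    """Execute steps in order, logging each decision. On failure, undo the
    completed steps in reverse order and return False."""
    done: list[SagaStep] = []
    for name, do, undo in steps:
        try:
            do()
            log.append(f"did {name}")
            done.append((name, do, undo))
        except Exception as exc:  # broad catch: any failure triggers rollback
            log.append(f"failed {name}: {exc}")
            for prev_name, _, prev_undo in reversed(done):
                prev_undo()
                log.append(f"undid {prev_name}")
            return False
    return True
```

Because every action and every compensation is appended to `log`, the log doubles as the audit trail that reviewers or incident responders can replay.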

Evaluation frameworks: measuring the right things

Evaluating agentic AI requires a mix of qualitative and quantitative metrics. Key indicators include time-to-orchestrate, task completion reliability across services, deviation rate from planned actions, and governance compliance scores. Evaluate outcomes like user satisfaction, incident frequency, and the scalability of automation. For teams transitioning from traditional assistants, establish baselines for manual handoffs, error rates, and response times, then track improvements as autonomy and plan quality increase. The evaluation should be ongoing, with quarterly reviews to refine goals and guardrails.
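Several of these indicators can be computed directly from run records. In this sketch the `RunRecord` fields are hypothetical, and "deviation" is assumed to mean that the executed task sequence differs from the planned one; teams will likely want their own definitions.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    planned: list[str]    # tasks the plan called for
    executed: list[str]   # tasks actually carried out
    completed: bool       # did the workflow reach its goal?
    manual_handoffs: int  # times a human had to step in


def evaluate(runs: list[RunRecord]) -> dict[str, float]:
    """Aggregate completion reliability, deviation rate, and handoffs."""
    n = len(runs)
    if n == 0:
        raise ValueError("no runs to evaluate")
    deviations = sum(1 for r in runs if r.executed != r.planned)
    return {
        "completion_rate": sum(r.completed for r in runs) / n,
        "deviation_rate": deviations / n,
        "handoffs_per_run": sum(r.manual_handoffs for r in runs) / n,
    }
```

Running `evaluate` over records from the existing assistant-based process gives the baseline; running it again over pilot records shows whether autonomy is actually reducing handoffs without raising deviation.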

Implementation patterns: practical steps for teams

Adoption patterns for agentic AI range from bolt-on orchestration modules to full-stack re-architecture of automation pipelines. Start with a well-defined use case, create a prototype with a narrow scope, and progressively expand autonomy while tightening governance. Typical steps include mapping the end-to-end workflow, selecting compatible services, defining decision points and constraints, implementing observability, and establishing rollback and audit procedures. Emphasize cross-functional collaboration between engineering, security, product, and governance teams to align objectives and ensure safe, scalable adoption.

Roadmap and future trends: where the field is headed

The agentic AI field is moving toward more transparent planning, improved safety capabilities, and richer cross-domain orchestration. Trends include standardized governance models, better explainability for autonomous actions, and tighter integration with enterprise data governance frameworks. As models mature, expect more robust policy engines and adaptive guardrails that can evolve with changing regulatory requirements. For organizations, the strategic takeaway is to invest in architecture that supports modular autonomy, auditable decision processes, and scalable integrations with existing systems.

Getting started: a practical 6-step plan for teams

1) Define a high-impact use case with clear ownership and success criteria.

2) Map the end-to-end workflow and identify decision points suitable for autonomy.

3) Establish guardrails, safety constraints, and rollback mechanisms.

4) Choose a scalable architecture that includes a planning component, executors, and governance.

5) Implement observability, auditing, and security controls from day one.

6) Run a controlled pilot, measure performance, and expand scope only after satisfying governance thresholds.

This phased approach helps teams migrate from traditional assistants to agentic AI without sacrificing safety or reliability.
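The final step, expanding scope only after governance thresholds are met, can be expressed as an explicit gate. The metric names and threshold values below are hypothetical placeholders that each organization would set for itself.

```python
# Hypothetical governance thresholds for a pilot; tune per organization.
THRESHOLDS: dict[str, tuple[str, float]] = {
    "completion_rate": (">=", 0.95),
    "deviation_rate": ("<=", 0.05),
    "unresolved_incidents": ("<=", 0),
}


def may_expand(
    metrics: dict[str, float],
    thresholds: dict[str, tuple[str, float]] = THRESHOLDS,
) -> tuple[bool, list[str]]:
    """Return (ok, reasons). Expansion is allowed only if every threshold
    is met; a missing metric counts as a failure, not a pass."""
    failures = []
    for name, (op, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: missing")
        elif op == ">=" and value < limit:
            failures.append(f"{name}: {value} < {limit}")
        elif op == "<=" and value > limit:
            failures.append(f"{name}: {value} > {limit}")
    return (not failures, failures)
```

Treating a missing metric as a failure is a deliberate choice: a pilot that cannot report a number has not demonstrated it meets the bar.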

Comparison

| Feature | Agentic AI | Traditional Virtual Assistants |
|---|---|---|
| Autonomy and goal-driven behavior | High autonomy with goal setting and cross-service orchestration | Reactive; executes user commands with limited scope |
| Decision making and planning | Proactive planning across multi-step tasks | Single-step or limited multi-step actions based on prompts |
| Learning and adaptation | Continuous learning from outcomes and feedback within governance bounds | Limited learning; depends on explicit updates |
| Data handling and governance | Built-in guardrails, audit trails, and policy-driven actions | Fewer governance features; more emphasis on prompt accuracy |
| User interaction pattern | Goal-oriented prompts; system can initiate tasks | User-initiated prompts; actions are event-driven |
| Integration and scalability | Designed for orchestration across APIs and services | Best for simple tasks; limited cross-system coordination |
| Cost and ROI context | Potential ROI from reduced manual handoffs and faster workflows | Lower upfront costs but potentially higher manual overhead |
| Safety and accountability | Requires strong guardrails and auditable decision logs | Simpler safety needs but less auditable action history |

Positives

- Increased automation of complex, multi-step workflows

- Proactive assistance reduces manual handoffs

- Better alignment with business outcomes through orchestration

- Can scale automation across teams and data domains

Negatives

- Higher risk of unpredictable actions without strong governance

- Increased architectural and operational complexity

- Greater data privacy and security considerations

- Requires mature governance and monitoring processes

Agentic AI generally outperforms traditional assistants in complex automation, but only with robust governance.

For large-scale, cross-system workflows, agentic AI offers clearer ROI and agility. Traditional assistants remain suitable for narrow, well-defined tasks. The Ai Agent Ops team recommends starting with a tightly scoped pilot that includes strong guardrails before expanding autonomy.

Questions & Answers

What is agentic AI?

Agentic AI refers to systems capable of setting goals, planning actions, and autonomously executing tasks within defined guardrails. It goes beyond simple command execution by orchestrating workflows across multiple services.

Agentic AI can set goals, plan steps, and act across services, not just react to commands.

How is it different from traditional virtual assistants?

The key difference is autonomy. Agentic AI can plan and execute a sequence of actions, while traditional assistants typically respond to inputs and perform single tasks.

It's more autonomous and capable of coordinating multiple steps without constant prompts.

What are common use cases for agentic AI?

Use cases include end-to-end process automation, IT remediation across services, and orchestration of complex customer journeys that involve multiple teams and systems.

Look for workflows that span multiple tools and data sources.

What governance considerations are important?

Governance should cover goals, constraints, auditing of decisions, rollback options, and incident response plans. These guardrails help prevent unintended actions and protect data.

Set clear rules, logs, and rollback paths for autonomous actions.

What skills are needed to implement agentic AI?

Teams typically need expertise in AI/ML concepts, systems integration, data governance, and software engineering focused on orchestration and observability.

You’ll want AI, dev, and security folks working together.

How should we evaluate ROI for agentic AI projects?

Evaluate ROI through time-to-delivery, reduction in manual work, error rate improvements, and the ability to scale automation across domains.

Measure time saved and how much more you can automate.

Key Takeaways

- Define a narrow pilot to prove value before expanding autonomy

- Prioritize governance and auditability from day one

- Choose agentic AI where multi-service orchestration is essential

- Balance autonomy with clear rollback and safety mechanisms

- Measure ROI through reduced handoffs and time-to-delivery