AI Agents vs Virtual Assistants: What Sets Them Apart

A detailed side-by-side comparison of AI agents and virtual assistants, focusing on autonomy, planning, tool use, governance, and deployment implications for scalable automation and user-facing tasks.

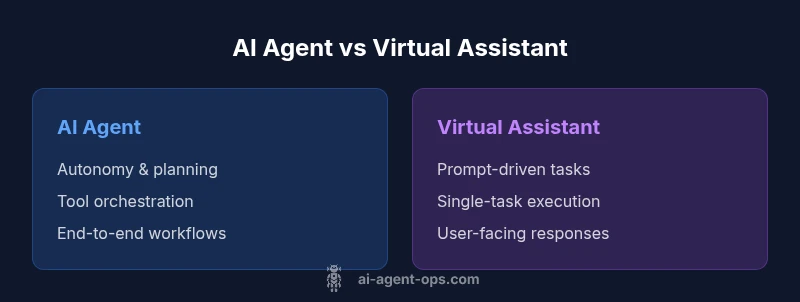

AI agents and virtual assistants share interfaces and basic tasks, but they differ in autonomy, decision-making, and scope. An AI agent pursues a goal: it can plan actions and orchestrate multiple tools. A virtual assistant, by contrast, primarily executes user commands and fetches information. For teams evaluating automation, the distinction matters for scale, reliability, and future extensibility. This comparison explains what makes an AI agent different from a virtual assistant in practical projects.

Core distinction: autonomy vs user-initiated tasks

What makes an AI agent different from a virtual assistant starts with autonomy. AI agents are designed to work toward a defined goal. They select actions, sequence steps, and adapt to changing conditions without waiting for explicit direction at every move. Virtual assistants, in contrast, excel at handling user-initiated requests, answering questions, and performing discrete tasks when asked. This distinction matters because it shapes architecture, governance, and the potential for scale. In practice, an AI agent might decide to fetch data, run a set of checks, compare outcomes, and trigger a chain of tools to complete a transaction with minimal human input. A virtual assistant would typically pause to ask clarifying questions or execute a clearly specified instruction. Understanding this difference is essential when planning modern automation programs and evaluating the right fit for your organization. According to Ai Agent Ops, framing the choice as autonomy versus user prompts is central to designing agentic AI workflows, which makes it a practical starting point for architecture decisions.
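
The following minimal Python sketch makes the autonomy distinction concrete. The tool names, the `choose` policy, and the step budget are hypothetical; a real agent would typically use a model or planner to pick its next action. The assistant runs exactly the one tool it is told to run. The agent loops instead, choosing its own next step from the current state until its goal test passes.

```python
from typing import Callable

# Hypothetical tool type: each tool reads and updates a shared state dict.
Tool = Callable[[dict], None]


def assistant_handle(command: str, tools: dict[str, Tool], state: dict) -> dict:
    """Virtual-assistant style: run exactly the tool the user asked for, then stop."""
    tools[command](state)
    return state


def agent_run(goal_met: Callable[[dict], bool],
              choose_action: Callable[[dict], str],
              tools: dict[str, Tool],
              state: dict,
              max_steps: int = 10) -> dict:
    """Agent style: keep choosing and running tools until the goal test passes."""
    for _ in range(max_steps):
        if goal_met(state):
            return state
        tools[choose_action(state)](state)
    if goal_met(state):
        return state
    raise RuntimeError("goal not reached within step budget")


# Toy tools for a hypothetical transaction workflow.
def fetch_data(state: dict) -> None:
    state["data"] = [3, 1, 2]


def run_checks(state: dict) -> None:
    state["checked"] = all(x > 0 for x in state["data"])


def submit(state: dict) -> None:
    state["submitted"] = True


TOOLS = {"fetch_data": fetch_data, "run_checks": run_checks, "submit": submit}


def choose(state: dict) -> str:
    """Pick the next step from the current state rather than from a user prompt."""
    if "data" not in state:
        return "fetch_data"
    if "checked" not in state:
        return "run_checks"
    return "submit"


agent_state = agent_run(lambda s: s.get("submitted", False), choose, TOOLS, {})
assistant_state = assistant_handle("fetch_data", TOOLS, {})
```

The step budget (`max_steps`) is a simple safeguard. An agent that cannot reach its goal fails loudly instead of looping forever.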

Goals, planning, and decision-making

AI agents formalize objectives and use planning to reach them, whereas virtual assistants execute prompts. An agent typically maintains a goal hierarchy, breaks tasks down into subtasks, and revises its plan when environmental cues change. Decision-making is data-driven and can weigh tradeoffs across tools and actions. This capacity matters for reliability: when a goal changes or a tool is unavailable, an agent can replan. The contrast with a prompt-driven assistant is clear. When an agent's objective changes, its entire plan shifts. When an assistant's instruction changes, only a single step changes. Autonomy and replanning are therefore the core factors that push agents toward orchestrating cross-app workflows rather than handling isolated actions.
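
A goal hierarchy with replanning can be sketched as a tree whose leaves list alternative tools. This is a simplified illustration built on stated assumptions: the `Goal` structure, the tool names, and the first-available selection rule are all hypothetical. Production planners usually weigh cost and reliability rather than taking the first match.

```python
from dataclasses import dataclass, field


@dataclass
class Goal:
    name: str
    subgoals: list["Goal"] = field(default_factory=list)
    # Alternative tools that can satisfy a leaf goal, in order of preference.
    tools: list[str] = field(default_factory=list)


def plan(goal: Goal, available: set[str]) -> list[str]:
    """Flatten the goal hierarchy depth-first into an ordered list of tool calls,
    picking the first available tool for each leaf goal."""
    if goal.subgoals:
        steps: list[str] = []
        for sub in goal.subgoals:
            steps.extend(plan(sub, available))
        return steps
    for tool in goal.tools:
        if tool in available:
            return [tool]
    raise LookupError(f"no available tool for goal {goal.name!r}")


refund = Goal("refund customer", [
    Goal("verify order", tools=["orders_api"]),
    Goal("issue payment", tools=["payments_api", "manual_payment_queue"]),
    Goal("notify customer", tools=["email_api", "sms_api"]),
])

first_plan = plan(refund, {"orders_api", "payments_api", "manual_payment_queue", "email_api"})
# The payments API becomes unavailable: replan against the reduced tool set.
second_plan = plan(refund, {"orders_api", "manual_payment_queue", "email_api"})
```

When the payments tool disappears, only the affected leaf changes in the new plan. If no alternative exists, planning fails with an explicit error rather than proceeding with a gap.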

Tool use and environment interaction

A core differentiator is how agents interact with the outside world. AI agents orchestrate multiple tools, APIs, and data sources, choosing the right combination to advance a goal. They manage state across steps, handle failures gracefully, and can work with systems that enforce authentication or rate limits. Virtual assistants typically invoke a single service per user request and rely on predictable prompts to fetch data or perform a function. The distinction becomes critical in enterprise automation, where reliability, auditability, and end-to-end workflows matter. In those contexts, the ability to chain tools, backtrack decisions, and recover from partial failures is a defining feature of AI agents. Ai Agent Ops highlights that tool orchestration is central to agentic AI workflows.
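
The sketch below shows one way to chain tools while handling rate limits and partial failures. `ToolError`, the retry parameters, and the toy tools are assumptions made for illustration. Real integrations would map specific HTTP status codes or SDK exceptions onto the retryable case.

```python
import time
from typing import Callable, Optional


class ToolError(Exception):
    """Retryable tool failure, e.g. a timeout or a rate-limit response."""


def call_with_retry(tool: Callable[[dict], dict], state: dict,
                    retries: int = 3, base_delay: float = 0.01) -> dict:
    """Call a tool, retrying with exponential backoff on ToolError."""
    for attempt in range(retries):
        try:
            return tool(state)
        except ToolError:
            if attempt == retries - 1:
                raise  # out of retries: let the orchestrator decide
            time.sleep(base_delay * 2 ** attempt)
    raise ValueError("retries must be at least 1")


def run_chain(steps: list[tuple[str, Callable[[dict], dict]]],
              state: dict) -> tuple[dict, Optional[str]]:
    """Run tools in order, merging each result into shared state.
    On an unrecoverable failure, return the partial state and the failed step
    so the caller can backtrack or escalate instead of losing completed work."""
    for name, tool in steps:
        try:
            state = {**state, **call_with_retry(tool, state)}
        except ToolError:
            return state, name
    return state, None


calls = {"inventory": 0}


def flaky_inventory(state: dict) -> dict:
    calls["inventory"] += 1
    if calls["inventory"] < 3:  # rate-limited on the first two calls
        raise ToolError("rate limited")
    return {"in_stock": True}


def lookup_price(state: dict) -> dict:
    return {"price": 42}


def shipping_down(state: dict) -> dict:
    raise ToolError("service unavailable")


state, failed = run_chain([("price", lookup_price), ("inventory", flaky_inventory)], {})
partial, failed_step = run_chain([("price", lookup_price), ("ship", shipping_down)], {})
```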

Learning and adaptation in real-world contexts

Unlike static, scripted assistants, AI agents can adapt as tasks evolve. They may learn from outcomes, update their internal models, and adjust strategies to improve success rates over time. Real-world environments introduce noise, latency, and occasional failures. Agents that learn from errors and refine their plans tend to outperform rigid assistants in long-running automation. However, learning introduces governance and safety considerations: changes in behavior must be auditable and controllable. When comparing agents with virtual assistants, remember that adaptation is a double-edged sword. It enables improvement but requires robust monitoring. Ai Agent Ops notes that practical agent deployment blends learning with explicit safety rails to keep outcomes predictable.
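
One simple way to let an agent adapt from outcomes while keeping changes auditable is an epsilon-greedy selector over strategies. Epsilon-greedy is a swapped-in technique chosen for brevity; the comparison above does not prescribe it, and the strategy names are hypothetical. Each recorded outcome updates the success statistics and appends an entry to an audit log.

```python
import random
from dataclasses import dataclass, field


@dataclass
class StrategySelector:
    """Epsilon-greedy choice among strategies, updated from observed outcomes.
    Every update is appended to audit_log so behavior changes stay reviewable."""
    strategies: list[str]
    epsilon: float = 0.1
    rng: random.Random = field(default_factory=random.Random)
    audit_log: list = field(default_factory=list)
    stats: dict = field(init=False)

    def __post_init__(self) -> None:
        # Per-strategy counters: [successes, attempts].
        self.stats = {s: [0, 0] for s in self.strategies}

    def rate(self, strategy: str) -> float:
        wins, tries = self.stats[strategy]
        return wins / tries if tries else 0.0

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.strategies)  # explore occasionally
        return max(self.strategies, key=self.rate)  # exploit best observed rate

    def record(self, strategy: str, success: bool) -> None:
        self.stats[strategy][0] += int(success)
        self.stats[strategy][1] += 1
        self.audit_log.append((strategy, success, round(self.rate(strategy), 3)))


selector = StrategySelector(["retry_same_api", "use_backup_api"], epsilon=0.0)
selector.record("retry_same_api", False)
selector.record("retry_same_api", False)
selector.record("use_backup_api", True)
best = selector.choose()
```

Setting `epsilon` to 0 disables exploration, so behavior is fully predictable from the log. A small positive value lets the agent keep testing alternatives, at the cost of occasional suboptimal choices.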

Architecture and data flows

At a high level, an AI agent architecture comprises a planner, a memory module, a policy layer, and a tool executor. The planner creates sequences of actions to achieve a goal; memory stores context across steps; the policy layer governs when to replan or abort; and the tool executor runs the chosen actions via APIs. Data flows between these components must be secure, traceable, and compliant with governance standards. Virtual assistants, in contrast, rely on prompt-driven logic, usually centered on natural language understanding and single-action execution. This architectural difference underpins scalability and reliability: agents are designed for cross-domain workflows, while assistants excel at user-facing prompts within a narrower scope. Mapping these data flows makes the difference concrete and guides a team's architecture decisions.
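
The four components can be wired together in a few classes. This is a skeleton built on loose assumptions. The planner is a static lookup standing in for a model-driven planner, and the tools are zero-argument callables. The policy layer implements only the abort decision; replanning is left out to keep the sketch short.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    """Stores context across steps; here, an ordered list of (action, result) events."""
    events: list = field(default_factory=list)


class Planner:
    """Produces an ordered action list for a goal (static lookup as a stand-in)."""

    def __init__(self, recipes: dict[str, list[str]]):
        self.recipes = recipes

    def plan(self, goal: str, memory: Memory) -> list[str]:
        return list(self.recipes[goal])


class Policy:
    """Decides after each step whether to continue or abort."""

    def __init__(self, max_failures: int = 0):
        self.max_failures = max_failures

    def decide(self, memory: Memory) -> str:
        failures = sum(1 for _, result in memory.events if result == "error")
        return "abort" if failures > self.max_failures else "continue"


class ToolExecutor:
    """Runs a named action against registered tool callables."""

    def __init__(self, tools: dict[str, Callable[[], str]]):
        self.tools = tools

    def run(self, action: str) -> tuple[str, str]:
        try:
            return (action, self.tools[action]())
        except Exception:
            return (action, "error")


def run_agent(goal: str, planner: Planner, policy: Policy,
              executor: ToolExecutor, memory: Memory) -> str:
    for action in planner.plan(goal, memory):
        memory.events.append(executor.run(action))  # every step is recorded
        if policy.decide(memory) == "abort":
            return "aborted"
    return "done"


def broken() -> str:
    raise RuntimeError("upstream timeout")


planner = Planner({"close_ticket": ["fetch", "check", "notify"]})
good_exec = ToolExecutor({"fetch": lambda: "ok", "check": lambda: "ok", "notify": lambda: "ok"})
bad_exec = ToolExecutor({"fetch": lambda: "ok", "check": broken, "notify": lambda: "ok"})

good_mem, bad_mem = Memory(), Memory()
good_status = run_agent("close_ticket", planner, Policy(), good_exec, good_mem)
bad_status = run_agent("close_ticket", planner, Policy(), bad_exec, bad_mem)
```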

Interaction styles and user experience

User experience for AI agents prioritizes proactive support, transparency, and controllable automation. Users may interact with the agent through natural language, dashboards, or integrated apps while the agent coordinates tools in the background. Virtual assistants, conversely, focus on clarity, quick responses, and direct task execution, with less emphasis on long-running workflows. The design choice affects onboarding, training, and governance. For teams adopting agentic AI workflows, the UX should balance autonomy with user control so that users can intervene when necessary. This balance sits at the heart of the distinction: agents offer proactive assistance and orchestration, while virtual assistants stay closer to command fulfillment.
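
Here is a small sketch of balancing autonomy with user control. The agent shows its full plan up front and checks an approval callback before each step, so the user can stop it at any point. The function and step names are hypothetical. A real UI would replace the callbacks with dialogs or dashboard controls.

```python
from typing import Callable


def run_with_user_control(plan: list[str],
                          execute: Callable[[str], None],
                          approve: Callable[[str], bool],
                          notify: Callable[[str], None]) -> list[str]:
    """Execute a plan step by step, letting the user veto any step.
    Returns the steps that actually ran."""
    notify("Plan: " + " -> ".join(plan))  # transparency: show the whole plan first
    done = []
    for step in plan:
        if not approve(step):
            notify(f"Stopped before {step!r} at user request")
            break
        execute(step)
        done.append(step)
    return done


messages: list[str] = []
executed: list[str] = []
done = run_with_user_control(
    ["draft_invoice", "send_invoice", "archive"],
    execute=executed.append,
    approve=lambda step: step != "send_invoice",  # the user blocks the outbound step
    notify=messages.append,
)
```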

Reliability, safety, and governance implications

Autonomy brings reliability challenges, including the risk of unintended actions and cascading failures. Effective AI agents implement guardrails, auditing, and safe fallback behaviors to mitigate harm. Governance policies define when an agent may execute a sequence of actions, require human oversight for sensitive tasks, and specify rollback procedures. Virtual assistants, in contrast, are usually constrained by explicit prompts and predefined outcomes, which simplifies governance but limits scalability. For organizations, deploying AI agents therefore means investing in organization-wide policies, logging, and safety checks. Ai Agent Ops emphasizes that governance frameworks are as important as capability when comparing AI agents with virtual assistants and choosing a deployment strategy.
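
Human oversight for sensitive actions and rollback can be combined in a saga-style executor, where every step carries an undo action. This is an illustrative sketch. The `SENSITIVE` set, the step names, and the in-memory ledger are hypothetical. Real rollbacks usually call compensating APIs rather than editing a list.

```python
from typing import Callable

# Hypothetical governance policy: actions that always need a human sign-off.
SENSITIVE = {"transfer_funds", "delete_records"}

Step = tuple[str, Callable[[], None], Callable[[], None]]  # (name, do, undo)


def execute_with_governance(steps: list[Step],
                            human_approves: Callable[[str], bool],
                            audit: list) -> bool:
    """Run steps in order. Sensitive steps require human approval; if a step is
    rejected or fails, completed steps are undone in reverse order."""
    completed = []
    for name, do, undo in steps:
        if name in SENSITIVE and not human_approves(name):
            audit.append(("rejected", name))
            break
        try:
            do()
        except Exception as exc:
            audit.append(("failed", name, str(exc)))
            break
        audit.append(("done", name))
        completed.append((name, undo))
    else:
        return True  # every step ran: nothing to roll back
    for name, undo in reversed(completed):
        undo()
        audit.append(("rolled_back", name))
    return False


ledger: list[str] = []
steps: list[Step] = [
    ("reserve_stock", lambda: ledger.append("reserved"), lambda: ledger.remove("reserved")),
    ("transfer_funds", lambda: ledger.append("paid"), lambda: ledger.remove("paid")),
]

rejected_audit: list = []
rejected_ok = execute_with_governance(steps, lambda name: False, rejected_audit)
ledger_after_rejection = list(ledger)

approved_audit: list = []
approved_ok = execute_with_governance(steps, lambda name: True, approved_audit)
```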

Privacy and security considerations

Data handling for AI agents often involves broader data access across tools and services. Robust privacy controls, secure APIs, and strict authentication are essential to prevent leakage and unauthorized actions. Virtual assistants still require privacy safeguards, but they typically access fewer data domains per task. Because agents span more systems, they expose a larger attack surface, which makes segmentation, access controls, and continuous monitoring vital. When shaping an implementation plan, teams should map data provenance and retention for every tool involved and confirm alignment with regulatory obligations. Part of what sets AI agents apart, then, is the heightened privacy and security responsibility that comes with agent-driven workflows.
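
Least-privilege data access can start with per-tool field scopes that deny by default. A log records which fields each tool received, to support provenance and retention reviews. The tool names and scopes below are hypothetical. Field filtering complements authentication and network segmentation; it does not replace them.

```python
# Hypothetical per-tool data scopes: each tool sees only the fields it needs.
TOOL_SCOPES: dict[str, set[str]] = {
    "shipping_api": {"order_id", "address"},
    "billing_api": {"order_id", "card_token"},
}


def scoped_payload(tool: str, record: dict) -> dict:
    """Return only the fields the tool may see; unknown tools get nothing."""
    allowed = TOOL_SCOPES.get(tool, set())
    return {k: v for k, v in record.items() if k in allowed}


def log_access(log: list, tool: str, payload: dict) -> None:
    """Record which fields were shared with which tool, for provenance reviews."""
    log.append((tool, sorted(payload)))


record = {"order_id": 7, "address": "1 Main St", "card_token": "tok_abc",
          "email": "user@example.com"}
access_log: list = []
shipping = scoped_payload("shipping_api", record)
log_access(access_log, "shipping_api", shipping)
unknown = scoped_payload("analytics_api", record)
```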

Use-case archetypes: enterprise automation vs consumer assistants

Enterprise automation scenarios favor AI agents for end-to-end processes such as order orchestration, supply chain intelligence, and customer lifecycle management. These use cases benefit from cross-system coordination, predictive actions, and auditable outcomes. Consumer-focused virtual assistants remain ideal for chat-based customer support, quick information retrieval, and device control within a user’s personal environment. The distinction informs procurement and development approaches. Enterprises may invest in agent platforms with governance, observability, and integration hubs, while consumer scenarios prioritize UX polish, latency, and privacy protections. In short, AI agents stand out for the breadth of automation and integration they provide, while virtual assistants win on the immediacy of user-facing tasks.

Performance metrics and ROI considerations

Measuring AI agents involves end-to-end task completion rates, planning efficiency, tool-call success, latency, and total cost of ownership. Virtual assistants are usually evaluated on answer quality, response time, and user satisfaction. A successful agent program typically demonstrates higher throughput, better error handling, and lower human-in-the-loop costs over time, though the upfront investment is higher. The ROI calculation should account for the value of cross-domain automation, the cost of maintaining tool integrations, and the impact on employee productivity. When weighing AI agents against virtual assistants, prioritize metrics that reflect long-term automation value and governance compliance rather than single-task speed.
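
The agent metrics named above can be computed from per-run records. The `RunRecord` fields and sample numbers are hypothetical. In practice they would come from the agent's execution logs, and cost figures would be joined in separately for ROI.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RunRecord:
    completed: bool          # did the end-to-end task finish?
    tool_calls: int
    tool_failures: int
    latency_s: float         # wall-clock time for the whole task
    human_interventions: int


def summarize(runs: list[RunRecord]) -> dict:
    """Aggregate per-run records into program-level agent metrics."""
    total_calls = sum(r.tool_calls for r in runs)
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        "tool_call_success": 1 - sum(r.tool_failures for r in runs) / total_calls,
        "median_latency_s": median(r.latency_s for r in runs),
        "interventions_per_run": sum(r.human_interventions for r in runs) / len(runs),
    }


report = summarize([
    RunRecord(True, 5, 0, 12.0, 0),
    RunRecord(True, 6, 1, 20.0, 1),
    RunRecord(False, 4, 2, 30.0, 1),
    RunRecord(True, 5, 1, 15.0, 0),
])
```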

Migration paths and implementation strategies

Shifting from a traditional virtual assistant to an AI agent calls for a staged approach: inventory tasks, identify cross-tool workflows, and pilot a small end-to-end scenario with clear success criteria. Start with a bounded domain, then extend it by adding tools, data sources, and guardrails. Establish a feedback loop to refine goals and plans. Training and change management are essential so that stakeholders understand the new capabilities and limitations. The migration should emphasize measurable milestones, security reviews, and a rollback plan. This approach expands autonomy progressively, in controlled and auditable steps.
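
Progressive autonomy can be encoded as an ordered list of stages with explicit promotion and rollback rules. The stage names and thresholds (95% completion, zero incidents) are assumptions for illustration, not recommended values.

```python
# Hypothetical autonomy stages for a staged rollout, least to most autonomous.
STAGES = ["suggest_only", "act_with_approval", "act_and_report", "fully_autonomous"]


def next_stage(current: str, completion_rate: float, incidents: int,
               min_rate: float = 0.95, max_incidents: int = 0) -> str:
    """Promote one stage when the pilot meets its success criteria; demote one
    stage (the rollback path) when incidents exceed the limit."""
    i = STAGES.index(current)
    if incidents > max_incidents:
        return STAGES[max(i - 1, 0)]
    if completion_rate >= min_rate:
        return STAGES[min(i + 1, len(STAGES) - 1)]
    return current  # criteria not met yet: hold at the current stage
```

Demotion takes priority over promotion in this sketch, so a single incident outweighs a strong completion rate.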

Risks, failure modes, and boundary conditions

Common risks include goal drift, tool failure, and unsafe actions. To mitigate them, implement fail-safes such as human-in-the-loop checks for critical tasks, transparent decision trails, and constraints on tool use. Agents must recognize boundary conditions, such as a goal becoming infeasible or data being incomplete, and respond with graceful degradation or escalation. Agents are also prone to hallucinations, stale context, and misinterpretation of user intent. Because they act autonomously, such errors can carry through several steps before a person notices. Proactive monitoring and regular scenario testing help identify weaknesses before they affect real operations.
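
Boundary checks of this kind can run before and during execution. In this sketch, the required fields, the `max_amount` limit, and the step budget are hypothetical constraints. The point is that each violation produces an explicit escalation or degraded outcome instead of a silent failure.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Outcome:
    status: str       # "done", "escalated", or "degraded"
    reason: str = ""


REQUIRED_FIELDS = {"customer_id", "amount"}  # hypothetical inputs the task needs


def guarded_run(task: dict, steps: list[Callable[[dict], bool]],
                max_steps: int = 5, max_amount: float = 1000.0) -> Outcome:
    """Check boundary conditions before and during execution; escalate or
    degrade explicitly instead of pressing on with a bad plan."""
    missing = REQUIRED_FIELDS - set(task)
    if missing:
        return Outcome("escalated", f"incomplete data: {sorted(missing)}")
    if task["amount"] > max_amount:  # constraint bound on autonomous actions
        return Outcome("escalated", "amount exceeds autonomous limit")
    if len(steps) > max_steps:  # step budget guards against runaway plans
        return Outcome("escalated", "plan exceeds step budget")
    for i, step in enumerate(steps):
        if not step(task):
            return Outcome("degraded", f"step {i} failed; stopped with partial progress")
    return Outcome("done")


def ok(task: dict) -> bool:
    return True


def fail(task: dict) -> bool:
    return False


r_missing = guarded_run({"customer_id": 1}, [ok])
r_limit = guarded_run({"customer_id": 1, "amount": 5000}, [ok])
r_budget = guarded_run({"customer_id": 1, "amount": 50}, [ok] * 6)
r_partial = guarded_run({"customer_id": 1, "amount": 50}, [ok, fail, ok])
r_done = guarded_run({"customer_id": 1, "amount": 50}, [ok, ok])
```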

Evaluation frameworks and benchmarks

Adopting a rigorous evaluation framework is essential. Use scenario-based testing, end-to-end task coverage, and governance audits to assess agent performance. Benchmarks should measure success rates, planning efficiency, and safety compliance under varying load conditions. Review logs and outcomes regularly to detect drift and refine governance rules. A transparent evaluation approach also makes the agent-versus-assistant tradeoff concrete, showing where autonomy adds value and where control is needed.
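
A scenario-based harness can be very small. Each scenario pairs an input with a predicate on the agent's output, and crashes count as failures. The toy agent and scenarios are hypothetical. A real suite would also record safety-rule violations and run under load.

```python
from typing import Callable

# (scenario name, input task, predicate that accepts or rejects the agent's result)
Scenario = tuple[str, dict, Callable[[dict], bool]]


def evaluate(agent: Callable[[dict], dict], scenarios: list[Scenario]) -> dict:
    """Run every scenario and report the pass rate plus failing scenario names."""
    failures = []
    for name, task, check in scenarios:
        try:
            passed = check(agent(task))
        except Exception as exc:  # a crash is a failed scenario, not a harness error
            passed = False
            name = f"{name} ({type(exc).__name__})"
        if not passed:
            failures.append(name)
    return {"success_rate": 1 - len(failures) / len(scenarios), "failures": failures}


def toy_agent(task: dict) -> dict:
    """Hypothetical agent: refunds small amounts, escalates large ones."""
    if task["amount"] > 100:
        return {"action": "escalate"}
    return {"action": "refund", "amount": task["amount"]}


results = evaluate(toy_agent, [
    ("small refund", {"amount": 20}, lambda r: r["action"] == "refund"),
    ("large refund escalates", {"amount": 500}, lambda r: r["action"] == "escalate"),
    ("missing amount escalates", {}, lambda r: r["action"] == "escalate"),
])
```

Here the missing-amount scenario exposes a crash that happy-path tests would miss. Surfacing that kind of weakness is exactly what scenario testing is meant to do.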

When to choose AI agents vs virtual assistants in practice

The decision hinges on task complexity, cross-system orchestration, and risk tolerance. For simple, high-clarity tasks handled in isolation, virtual assistants often suffice. For cross-domain workflows requiring autonomous planning, tool integration, and end-to-end execution, AI agents provide higher leverage, given appropriate governance and security. The best practice is to pilot with a constrained use-case, measure outcomes, and progressively broaden scope as confidence grows. This pragmatic approach aligns with Ai Agent Ops guidance on agent adoption and helps organizations decide when to invest in agentic AI workflows.

Comparison

| Feature | AI Agent | Virtual Assistant |
|---|---|---|
| Autonomy & Planning | High; goal-directed, multi-step decision making | Low; user-prompted, single-step actions |
| Tool Orchestration | Orchestrates tools/APIs across contexts | Executes discrete tasks via a single service |
| Learning & Adaptation | Continual adaptation with feedback loops | Limited learning without explicit prompts |
| Contextual Understanding | Dynamic environments with cross-domain context | Narrow context, prompts guide responses |
| Workflow Complexity | End-to-end automation across apps | Focused, simple workflows |
| Governance & Safety | Built-in guardrails, auditing, rollback | Basic safety controls for prompts |
| Integration Scope | Enterprise-grade, multi-system integrations | OS/app-level integrations, consumer scope |
| ROI & Cost of Ownership | Higher upfront cost, greater long-term automation value | Lower upfront cost, limited automation scale |

Positives

- Drives scalable automation across complex workflows

- Enables cross-tool decision making and planning

- Reduces human-in-the-loop workload over time

- Improves consistency and repeatability of tasks

Negatives

- Higher initial development and ongoing maintenance costs

- Requires comprehensive governance and safety frameworks

- Increased architectural complexity and monitoring requirements

- Longer time to ROI due to integration and rollout efforts

AI agents offer greater autonomy and cross-domain orchestration for scalable automation; virtual assistants excel at reliable, fast user-facing tasks.

Choose AI agents when your goals involve end-to-end workflows and multi-tool coordination. Opt for virtual assistants for straightforward, high-clarity tasks with minimal risk and faster deployment. A blended approach often yields the best balance of speed and scale.

Questions & Answers

What is an AI agent?

An AI agent is a system designed to achieve a goal by planning actions, coordinating tools, and adapting to changing conditions without requiring explicit step-by-step input for every move. It emphasizes autonomy and cross-tool orchestration.

How is an AI agent different from a virtual assistant?

The key difference lies in autonomy and scope. AI agents operate with goal-directed planning and multi-tool orchestration, while virtual assistants focus on responding to prompts and performing single tasks.

Can virtual assistants become AI agents?

Virtual assistants can evolve toward agent-like capabilities by adding planning, tool orchestration, and adaptive behavior. This transition typically requires re-architecting tasks, governance, and monitoring.

What are common use cases for AI agents?

Common use cases involve end-to-end automation across systems, such as order orchestration, data gathering, and multi-step decision processes that previously required human intervention.

What risks should I consider with AI agents?

Risks include unintended actions, data leakage across tools, model drift, and governance gaps. Mitigation involves guardrails, auditing, and staged rollouts.

How do I measure success when adopting AI agents?

Assess end-to-end task completion, planning efficiency, tool-call reliability, latency, and overall ROI. Compare against a baseline of previous manual or semi-automated workflows.

Key Takeaways

- Prioritize autonomy if you need end-to-end automation

- Plan tool integrations before deployment to manage scope

- Implement safety rails and governance from day one

- Pilot with a bounded domain to validate ROI

- Balance agent capabilities with user-facing UX for best results