AI Agent vs AI Assistant: A Practical Comparison for Teams

A practical comparison of AI agents and AI assistants: definitions, capabilities, costs, and best-use scenarios for developers and leaders exploring agentic AI workflows.

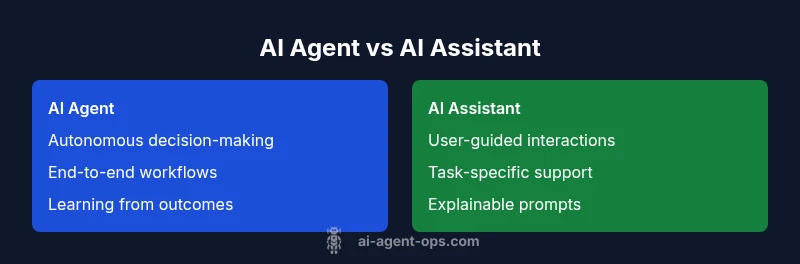

AI agents and AI assistants are often confused, but they occupy distinct roles in workflows. An AI agent pursues a goal autonomously, executing multi-step tasks and adapting to feedback, while an AI assistant provides interactive support and carries out tasks within defined boundaries, usually at a user's request. This comparison helps teams choose the right pattern for automation, integration, and governance.

Defining AI Agent vs AI Assistant

In intelligent automation, the terms AI agent and AI assistant describe two complementary patterns. An AI agent is designed to act autonomously toward a goal, often operating with a degree of initiative, decision-making, and learning from outcomes. An AI assistant, by contrast, primarily augments human capabilities by providing guidance, completing routine tasks, and facilitating interactions. For teams evaluating agent-driven automation, the distinction matters for governance, risk, and velocity. Across projects, you’ll see agents handling end-to-end workflows, while assistants handle human-facing tasks, data gathering, and decision support. This difference isn’t just academic; it shapes architecture, data needs, and success metrics.

Key differences to note:

- Autonomy: agents act with minimal human prompting; assistants require prompts and oversight.

- Scope: agents tackle end-to-end goals; assistants focus on specific activities.

- Learning: agents tend to adapt based on outcomes; assistants rely on prompt engineering and rule sets.

- Interaction: agents act in the background or inside integrated systems; assistants interact directly with users.

- Risk: agents raise governance considerations around safety, auditing, and transparency; assistants emphasize user experience and explainability.

Understanding the difference between an AI agent and an AI assistant helps teams design better architectures, assign ownership, and set realistic expectations for speed and reliability.

The Core Capabilities: Autonomy, Interaction, and Adaptation

The AI agent vs assistant comparison rests on three capabilities: autonomy, interaction style, and adaptation. An AI agent is evaluated on how autonomously it can set and pursue goals, adjust its plan when outcomes diverge, and learn to improve over time. An AI assistant is evaluated on how effectively it interacts with humans, clarifies intent, handles multi-step tasks with minimal friction, and remains predictable under various prompts. In practice, a mature automation solution often combines both patterns in a layered architecture: agents execute autonomous sequences within guardrails, while assistants handle user touchpoints, explanations, and verification steps. When comparing the two, consider how each pattern contributes to velocity, risk, and governance. This section expands on how autonomy, interaction, and adaptation influence real-world outcomes.

- Autonomy: agents require a governance framework that defines acceptable goals, constraints, and escape hatches.

- Interaction: assistants prioritize conversational clarity, task scoping, and help-desk style support.

- Adaptation: agents may leverage reinforcement signals and online learning; assistants benefit from stable prompts and structured decision trees.

The choice between an AI agent and an AI assistant should align with your product strategy, data maturity, and risk tolerance.
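To make the contrast concrete, here is a minimal Python sketch of the two control flows, not a reference implementation. The names `assistant_reply`, `AgentRun`, `run_agent`, `plan_next`, and `is_done` are hypothetical and chosen only for illustration. The assistant answers one prompt and stops; the agent loops toward a goal under a step cap that acts as a simple guardrail.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def assistant_reply(prompt: str, answer_fn: Callable[[str], str]) -> str:
    """Assistant pattern: one user prompt in, one response out."""
    return answer_fn(prompt)


@dataclass
class AgentRun:
    goal: str
    max_steps: int = 5  # guardrail: hard cap on autonomous steps
    history: List[str] = field(default_factory=list)


def run_agent(
    run: AgentRun,
    plan_next: Callable[[str, List[str]], str],
    is_done: Callable[[List[str]], bool],
) -> List[str]:
    """Agent pattern: loop toward a goal, picking each action from prior outcomes."""
    for _ in range(run.max_steps):
        if is_done(run.history):
            break
        action = plan_next(run.goal, run.history)
        run.history.append(action)  # a real agent would execute the action here
    return run.history


# Toy stand-ins for a planner and a completion check (assumptions, not a real model).
steps = ["fetch_orders", "reconcile", "file_report"]
history = run_agent(
    AgentRun(goal="close the books"),
    plan_next=lambda goal, hist: steps[len(hist)],
    is_done=lambda hist: len(hist) == len(steps),
)

print(assistant_reply("What is our refund policy?", lambda p: f"Answering: {p}"))
print(history)
```

The step cap is one example of the "escape hatch" idea: even if the completion check never fires, the loop ends after `max_steps` iterations.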

Use Case Scenarios: When to Deploy an AI Agent vs an AI Assistant

Choosing between an AI agent and an AI assistant depends on the problem you are solving and the value you seek to unlock. Scenarios for AI agents include complex orchestration across systems, multi-step decision making with dynamic variables, and optimization problems that require autonomy (e.g., procurement optimization, supply chain routing, or proactive incident remediation). Scenarios for AI assistants include customer support chatbots, data querying and reporting assistants, or internal copilots that guide engineers through code reviews or deployments. In practice, many teams start with an assistant to reduce toil and then evolve toward an agent where the business payoff justifies the added governance overhead. This phased approach helps manage risk while building confidence in automated decision-making.

Best-for patterns:

- Best for autonomous orchestration and optimization: AI agents

- Best for human-facing support and guided tasks: AI assistants

- Best for mixed workflows: a layered approach with both patterns integrated through an orchestration layer

Architectural Considerations: Data, Interfaces, and Governance

The choice between an agent and an assistant drives core architectural decisions. An AI agent requires robust decision-making components, state management, and feedback loops to improve over time. It often relies on a combination of planners, policy learners, and environment models, integrated with data streams and external systems. An AI assistant emphasizes conversational interfaces, prompt design, and user-centric flows. It leans on reliable data access, explainability, and user intent inference. Governance is essential for both patterns, but agents demand additional controls: audit trails for actions, sandboxed execution environments, and escalation rules. When designing for either pattern, consider data quality, latency, security, and integration needs with existing platforms, such as CRM, ERP, or collaboration tools. A well-structured data contract, clear ownership, and consistent testing are non-negotiables for scalable automation.

Key implementation choices:

- Orchestrated flows vs direct, orchestration-free flows

- State management and persistence

- Observability and auditing of decisions

- How to handle failure modes and human-in-the-loop interventions
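The last two choices in the list above (auditing decisions and handling failures with a human in the loop) can be sketched together. The Python below is an illustrative sketch under stated assumptions: `AuditRecord`, `AuditedExecutor`, and the `escalate` callback are hypothetical names, the log lives in memory rather than in durable storage, and the retry count is arbitrary.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, List


@dataclass
class AuditRecord:
    timestamp: float
    action: str
    status: str  # "ok", "failed", or "escalated"
    detail: str


class AuditedExecutor:
    """Runs agent actions, records every attempt, escalates after repeated failure."""

    def __init__(self, escalate: Callable[[str, str], None], max_attempts: int = 2):
        self.escalate = escalate
        self.max_attempts = max_attempts
        self.log: List[AuditRecord] = []  # in-memory only; assumption for the sketch

    def _record(self, action: str, status: str, detail: str) -> None:
        self.log.append(AuditRecord(time.time(), action, status, detail))

    def run(self, action: str, fn: Callable[[], str]) -> bool:
        last_error = ""
        for attempt in range(1, self.max_attempts + 1):
            try:
                self._record(action, "ok", fn())
                return True
            except Exception as exc:  # broad on purpose: any failure counts
                last_error = f"attempt {attempt}: {exc}"
                self._record(action, "failed", last_error)
        self._record(action, "escalated", "handed to a human reviewer")
        self.escalate(action, last_error)
        return False

    def export(self) -> str:
        """Serialize the audit trail as JSON Lines."""
        return "\n".join(json.dumps(asdict(r)) for r in self.log)


def flaky() -> str:
    raise RuntimeError("ERP timeout")


escalations = []
executor = AuditedExecutor(escalate=lambda action, why: escalations.append((action, why)))
executor.run("sync_inventory", lambda: "42 rows synced")
executor.run("post_invoice", flaky)
print(executor.export())
```

Recording failed attempts as well as successes is a deliberate choice here: the audit trail then explains why an escalation happened, not only that it did.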

Cost, ROI, and Time-to-Value Considerations

Understanding how agent and assistant costs differ helps set realistic expectations. Autonomous agents typically require higher upfront investment due to modeling, integration complexity, and governance requirements. However, when designed to optimize end-to-end processes, they can deliver substantial long-term ROI through reduced cycle times, improved accuracy, and scale. AI assistants usually incur lower initial cost and quicker time-to-value, as they leverage established interfaces and rule-based prompts. ROI calculations should consider not only software licenses but also data pipeline investments, security controls, and the value of faster decision making. A pragmatic approach is to pilot an assistant to reduce toil, then incrementally add agent capabilities in a controlled, governed way as you demonstrate value and establish risk controls.

Two levers to watch:

- Time-to-value: assistants deploy faster; agents require more integration but pay off with deeper automation

- Risk-adjusted ROI: balance autonomy with governance to maximize long-term return
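The two levers can be put into rough arithmetic. The sketch below is one simple way to model them, not a standard formula: benefits are discounted by an assumed probability of success, and an option earns nothing until it is deployed. Every number is a made-up placeholder to show the mechanics, and the names `Option` and `evaluate` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Option:
    name: str
    upfront_cost: float         # build, integration, governance setup
    monthly_run_cost: float     # hosting, maintenance, monitoring
    monthly_benefit: float      # estimated value once live
    months_to_deploy: int       # time-to-value lever
    success_probability: float  # crude risk adjustment, between 0 and 1


def evaluate(option: Option, horizon_months: int) -> Dict[str, float]:
    """Risk-adjusted net value and ROI over a planning horizon.

    Assumes upfront_cost > 0 and that run costs accrue only once live.
    """
    live_months = max(horizon_months - option.months_to_deploy, 0)
    benefit = option.success_probability * option.monthly_benefit * live_months
    cost = option.upfront_cost + option.monthly_run_cost * live_months
    return {"net_value": benefit - cost, "roi": (benefit - cost) / cost}


# Placeholder inputs only; replace with your own estimates.
assistant = Option("assistant", 20_000, 1_000, 5_000, months_to_deploy=1, success_probability=0.9)
agent = Option("agent", 100_000, 4_000, 30_000, months_to_deploy=6, success_probability=0.6)

for horizon in (12, 24, 36):
    for option in (assistant, agent):
        result = evaluate(option, horizon)
        print(f"{horizon:>2} months  {option.name:<9}  "
              f"net={result['net_value']:>10,.0f}  roi={result['roi']:.2f}")
```

With these placeholder inputs the assistant comes out ahead over a 12-month horizon, while the agent's net value overtakes it over longer horizons. That matches the pattern described above, but the crossover point depends entirely on the estimates you supply.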

Risk, Ethics, and Compliance in AI Agent and AI Assistant Deployments

Autonomy introduces new ethical and governance challenges. Agents must operate within clearly defined safety boundaries, with auditability, explainability, and escalation paths when things go wrong. Assistants primarily raise governance concerns around user data, privacy, and prompt leakage, but are generally more transparent to users. A strong governance model for either pattern includes role-based access, data minimization, and rigorous testing protocols. Consider implementing guardrails such as sandbox environments, dry-run simulations, and approval gates for high-stakes decisions. Ethical considerations should be built into design reviews, including bias monitoring, safety checks, and ongoing impact assessments. A balanced approach often uses agents for high-value automation while maintaining assistants as the human-in-the-loop layer for accountability and user trust.

Practical governance checklist:

- Define decision boundaries and escalation rules

- Implement audit logs for agent actions

- Regularly review outcomes for fairness and safety
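As a sketch of how a decision boundary, an approval gate, and a dry-run mode might fit together, consider the Python below. It assumes a single numeric risk proxy (`amount`) and a threshold (`auto_limit`); real deployments would likely use richer policies. `ProposedAction` and `guarded_execute` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float  # hypothetical risk proxy, e.g. spend committed by the action


def guarded_execute(
    action: ProposedAction,
    execute: Callable[[ProposedAction], None],
    auto_limit: float,
    approver: Optional[Callable[[ProposedAction], bool]] = None,
    dry_run: bool = False,
) -> str:
    """Apply guardrails before running an agent action; returns a status string."""
    if dry_run:
        # Simulate only: report what would happen without side effects.
        return f"dry-run: would execute {action.name}"
    if action.amount > auto_limit:
        # Above the decision boundary: a human must approve explicitly.
        if approver is None or not approver(action):
            return f"blocked: {action.name} needs approval"
    execute(action)
    return f"executed: {action.name}"


executed = []
small = ProposedAction("reorder_stock", 500)
large = ProposedAction("renegotiate_contract", 50_000)

print(guarded_execute(small, executed.append, auto_limit=1_000))
print(guarded_execute(large, executed.append, auto_limit=1_000))
print(guarded_execute(large, executed.append, auto_limit=1_000, approver=lambda a: True))
print(guarded_execute(small, executed.append, auto_limit=1_000, dry_run=True))
```

Defaulting to "blocked" when no approver is configured keeps the gate fail-closed, which is usually the safer default for high-stakes actions.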

Integration and Ecosystem Fit: From APIs to Orchestration

Integrating agent and assistant patterns into your ecosystem requires careful planning around APIs, data contracts, and middleware. Agents benefit from robust event streams, real-time data, and access to control points across systems. Assistants rely on stable connectors to human-facing services, knowledge bases, and analytics dashboards. A practical approach is to design an orchestration layer that coordinates both patterns: the assistant handles user interactions and data gathering, while the agent executes autonomous workflows and optimizes end-to-end results. This separation of concerns simplifies testing, monitoring, and governance, and supports incremental rollout. The right architecture emphasizes modularity, observability, and clear ownership of each capability.

Integration checklist:

- Consistent data schemas across components

- Clear API boundaries and rate limits

- Centralized logging and tracing for end-to-end flows
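A minimal orchestration layer covering this checklist might look like the following Python sketch. It assumes both patterns can be wrapped as plain callables and that a per-request trace ID in the log lines is enough for end-to-end tracing; the `Orchestrator` class and its response keys are hypothetical.

```python
import logging
import uuid
from typing import Callable, Dict

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("orchestrator")

Handler = Callable[[str], str]


class Orchestrator:
    """Routes requests to the assistant or the agent behind one API boundary."""

    def __init__(self, assistant: Handler, agent: Handler):
        self.handlers: Dict[str, Handler] = {"assistant": assistant, "agent": agent}

    def handle(self, kind: str, payload: str) -> Dict[str, str]:
        if kind not in self.handlers:
            raise ValueError(f"unknown request kind: {kind}")
        trace_id = uuid.uuid4().hex  # one ID ties together all log lines for a request
        log.info("trace=%s route=%s start", trace_id, kind)
        result = self.handlers[kind](payload)
        log.info("trace=%s route=%s done", trace_id, kind)
        # Same response schema regardless of which pattern handled the request.
        return {"trace_id": trace_id, "handled_by": kind, "result": result}


# Toy handlers standing in for real assistant and agent components.
orchestrator = Orchestrator(
    assistant=lambda question: f"answer to: {question}",
    agent=lambda goal: f"workflow completed for: {goal}",
)

print(orchestrator.handle("assistant", "Where is order 1182?"))
print(orchestrator.handle("agent", "rebalance warehouse stock"))
```

Keeping the response shape identical for both routes is what lets downstream consumers stay unaware of which pattern did the work.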

Decision Framework: How to Decide Between an AI Agent and an AI Assistant

A structured decision framework helps teams decide when to deploy an agent and when to deploy an assistant. Start with business objectives: are you aiming for autonomous optimization or human-centric support? Next, assess data readiness: do you have reliable signals to guide autonomous decisions? Then evaluate governance: what controls are required for the risk profile? Finally, consider timelines and resources: can you support a longer runway for agent development, or do you need a quick win with an assistant? A practical rule of thumb is to begin with an assistant to validate workflows and user interactions, then scale to an agent when you have governance, data maturity, and a clear ROI path. Remember: many teams succeed with a hybrid approach that uses assistants to bootstrap and agents to scale.

Decision steps:

- Define problem scope and success metrics

- Map data sources and interfaces

- Establish governance and escalation rules

- Pilot with an assistant; plan a staged agent rollout

- Measure outcomes and iterate
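The questions in this framework can be encoded as a small checklist function. The Python below is a deliberately simplified sketch: it treats each question as a yes/no answer, which real assessments rarely are, and the `Readiness` fields and `recommend` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Readiness:
    needs_autonomous_optimization: bool  # business objective
    reliable_data_signals: bool          # data readiness
    governance_controls_in_place: bool   # governance
    can_fund_long_runway: bool           # timelines and resources


def recommend(r: Readiness) -> str:
    """Turn the four framework questions into a coarse recommendation."""
    if not r.needs_autonomous_optimization:
        return "assistant"
    checks = (
        ("data", r.reliable_data_signals),
        ("governance", r.governance_controls_in_place),
        ("runway", r.can_fund_long_runway),
    )
    gaps = [name for name, ok in checks if not ok]
    if gaps:
        # Rule of thumb from the text: start with an assistant, close gaps, then scale.
        return "assistant first; close gaps before agent rollout: " + ", ".join(gaps)
    return "agent (staged rollout)"


print(recommend(Readiness(False, True, True, True)))
print(recommend(Readiness(True, True, False, False)))
print(recommend(Readiness(True, True, True, True)))
```

The value of writing it down this way is less the output than the forced explicitness: each recommendation can be traced to a named gap.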

Real-World Patterns and Pitfalls: Lessons Learned (Hypothetical Examples)

In practice, teams often treat the choice between an AI agent and an AI assistant as binary when it is not. A common pattern is starting with simple assistants to reduce toil and gradually implementing agents to tackle end-to-end processes. Pitfalls include underestimating data quality, failing to implement governance, and assuming high autonomy equals immediate ROI. To avoid these traps, ground your implementation in a clear roadmap, with milestones for data maturity, risk controls, and performance targets. Also, invest in change management, as transitioning from manual to automated decision-making affects people, processes, and culture. By working through hypothetical case studies, teams can forecast potential challenges and plan mitigations before scaling.

Comparison

| Feature | AI Agent | AI Assistant |
|---|---|---|
| Autonomy | High autonomy with goal-driven execution | Reactive, user-driven interactions |
| Interaction Style | Background/system-driven actions with minimal prompts | Frontline user-facing guidance and prompts |
| Decision Scope | Broad, end-to-end planning and optimization | Narrow, task-specific assistance |
| Learning/Adaptation | Self-learning from outcomes and feedback loops | Prompts and rules with limited autonomous learning |
| Governance Needs | Stricter governance, auditing, and escalation | Governance focused on safety and user consent |
| Implementation Time | Longer setup, integration, and testing | Faster deployment with existing tools |
| Cost Implications | Higher upfront cost with long-term value | Lower upfront cost and quicker value |
| Best For | Strategic automation and complex workflows | Supportive tasks and user-facing processes |

Positives

- Clarifies strategic automation vs day-to-day task support

- Helps teams allocate development resources efficiently

- Enables governance and risk management through clear patterns

- Supports scalable automation with proper orchestration

What's Bad

- Misalignment between expectations and capabilities can cause delays

- Over-investing in autonomy without governance may raise risk

- Integration complexity can be high for data-intensive agents

AI agents and AI assistants are complementary; choose based on autonomy needs and governance requirements.

AI agents excel in autonomous, goal-driven automation; AI assistants excel in human-facing support. Use both when your workflow benefits from orchestration, governance, and scalable automation.

Questions & Answers

What is the difference between an AI agent and an AI assistant?

An AI agent acts autonomously to achieve goals, often operating with a degree of initiative and adaptive learning. An AI assistant focuses on supporting humans, guiding tasks, and handling routine interactions. The distinction informs governance, risk, and architecture decisions.

An AI agent acts on its own to reach goals, while an AI assistant helps people by guiding tasks and answering questions.

When should I use an AI agent vs an AI assistant?

Use an AI agent for complex, end-to-end workflows requiring autonomy and optimization. Use an AI assistant for user-facing support and task facilitation where human oversight remains important. A staged approach (start with an assistant, then evolve to an agent) supports governance and ROI.

If the goal is to automate complex processes, choose an agent. If the goal is to help users or engineers, choose an assistant.

What governance considerations apply to AI agents?

AI agents require auditable actions, strict access controls, and escalation paths. Establish safety constraints, monitoring, and rollback mechanisms to handle unexpected outcomes. Ensure explainability and compliance with data policies.

Agents need strong governance: audits, safety constraints, and clear escalation paths.

How do I measure ROI for AI agents and assistants?

ROI includes time-to-value, error reductions, and throughput improvements. Factor in data pipeline costs, governance overhead, and maintenance. Use pilot programs to validate value before scaling.

Measure ROI by comparing time saved, accuracy improvements, and maintenance costs across pilots.

What are common pitfalls when deploying these patterns?

Common pitfalls include underestimating data quality, neglecting governance, and assuming autonomy equals immediate ROI. Address them with a phased plan, strong testing, and clear ownership.

Watch out for data issues and governance gaps; plan in phases with clear ownership.

Can AI agents and assistants coexist in the same system?

Yes. A layered architecture often uses assistants for user interactions and agents for autonomous automation, coordinated by an orchestration layer. This approach balances user trust with scalable automation.

Absolutely. Combine both patterns with a coordinating layer for best results.

Key Takeaways

- Define autonomy needs before choosing a pattern

- Pilot with assistants, then incrementally adopt agents

- Invest in governance and observability from day one

- Design an integrated architecture that separates concerns

- Choose a phased approach to reduce risk and boost ROI