AI Agent vs AI Assistant: A Thorough, Analytic Comparison

A rigorous, analytical comparison of AI agents and AI assistants, detailing autonomy, orchestration, use cases, risks, and implementation trade-offs for developers, product teams, and business leaders.

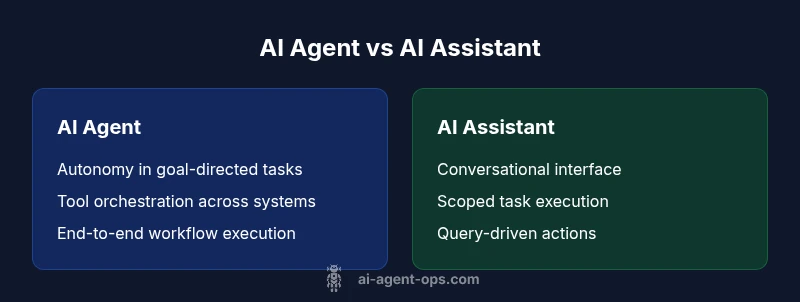

AI agents autonomously plan toward goals, execute tasks, and orchestrate tools and services to complete end-to-end workflows. AI assistants, by contrast, function as conversational helpers that respond to queries, carry out user requests, and provide guidance within a defined scope. In short, agents operate with autonomy and orchestration; assistants focus on dialogue and task execution within explicit boundaries.

Defining AI Agents and AI Assistants

The core difference between the two concepts centers on autonomy, scope, and how each interacts with its environment. The difference between an AI agent and an AI assistant is not just a matter of labels; it reflects fundamental design decisions about control, goals, and tool use. An AI agent is engineered to select actions autonomously, sequence steps, and draw on resources across a system of tools and data sources to achieve a stated objective. An AI assistant remains primarily a conversational interface that understands user intent, answers questions, and executes user requests within a predefined set of capabilities. According to Ai Agent Ops, clarifying these roles early in product design helps avoid scope creep and misaligned expectations. The distinction therefore maps to a spectrum, from guided dialogue to autonomous orchestration. When teams understand this spectrum, they can pair agents and assistants to create hybrid workflows that balance autonomy with safety. This matters most for teams building agentic AI workflows, where the boundary between decision-making and conversation must be deliberate and well-governed. The framing also helps engineering leaders set clear success criteria and measurable outcomes for each component of the system.
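To make the contrast concrete, here is a minimal Python sketch of the two control flows. It is not drawn from any particular framework: `assistant_turn`, `run_agent`, the toy tools, and the step budget are hypothetical illustrations. The assistant maps one request to one response; the agent loops, choosing its own next action until the goal is met or its budget runs out.

```python
from typing import Callable

# Hypothetical toy tools: each takes the current state and returns a new one.
Tools = dict[str, Callable[[dict], dict]]


def assistant_turn(message: str, capabilities: dict[str, str]) -> str:
    """Assistant: one user request in, one response out, within a fixed scope."""
    for keyword, answer in capabilities.items():
        if keyword in message.lower():
            return answer
    return "Sorry, that is outside what I can help with."


def run_agent(goal: Callable[[dict], bool],
              choose_action: Callable[[dict], str],
              tools: Tools,
              state: dict,
              max_steps: int = 10) -> tuple[dict, list[str]]:
    """Agent: repeatedly picks and runs tools until the goal holds.

    The step budget is a simple guardrail against runaway autonomy.
    """
    trace: list[str] = []
    for _ in range(max_steps):
        if goal(state):
            break
        action = choose_action(state)  # the agent, not the user, picks the next step
        state = tools[action](state)   # act on the environment
        trace.append(action)
    return state, trace


# Usage: a two-step goal reached without further user prompts.
tools = {
    "fetch": lambda s: {**s, "data": [3, 1, 2]},
    "sort": lambda s: {**s, "data": sorted(s["data"]), "done": True},
}
final, trace = run_agent(
    goal=lambda s: s.get("done", False),
    choose_action=lambda s: "sort" if "data" in s else "fetch",
    tools=tools,
    state={},
)
print(trace, final["data"])  # ['fetch', 'sort'] [1, 2, 3]
print(assistant_turn("What are your hours?", {"hours": "We are open 9 to 5."}))
```

The only structural difference is who drives the loop: the assistant returns after a single turn, while the agent keeps selecting actions on its own, bounded by `max_steps`.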

Historical context and evolution

The landscape of AI tools has evolved from scripted chatbots and rule-based automation to more capable agentic systems. Historically, many teams began with AI assistants: chat interfaces designed to answer questions, schedule meetings, pull data, and support repetitive tasks. As needs grew, developers experimented with autonomous components that could reason about goals, select tools, and execute multi-step workflows without constant human prompting. The resulting tension between dialogue and action is not accidental; it mirrors real-world workflows in which humans still supervise critical decisions but want the speed and consistency of automated action. In the context of agentic AI, the industry has shifted toward modular architectures that separate planning, perception, and action from the conversational layer. Ai Agent Ops notes that this separation improves maintainability and safety, enabling teams to upgrade or swap components as tasks scale. In practice, organizations increasingly adopt a hybrid approach, deploying AI agents for core automation while retaining AI assistants for user-facing interactions. The historical shift highlights a practical truth: autonomy and conversation are not mutually exclusive but complementary when designed with guardrails and clear ownership. Choosing between an agent and an assistant thus becomes a design decision aligned with business goals and risk tolerance.

Core capabilities and architecture

Modern AI agents rely on a layered architecture that includes perception (data ingestion), planning (goal decomposition and sequencing), action (tool calls and API interactions), and execution (monitoring outcomes). A robust AI agent bridges reasoning with real-world effects by coordinating sensors, databases, and services. By contrast, an AI assistant emphasizes language understanding, context retention within chat sessions, and task execution driven by user prompts. The architectural distinction is purposeful: agents require planning pipelines, state management, and orchestration frameworks; assistants require natural language processing, dialogue management, and user intent tracking. From a systems perspective, the two also differ in how they handle feedback loops, errors, and recovery. Ai Agent Ops stresses that effective agents implement safe fallbacks, limit the scope of autonomous actions, and log decisions for auditability. The result is a modular, maintainable stack in which agents can gain new capabilities while the conversational layer remains stable and predictable. For teams, the architectural divide clarifies ownership, testing strategies, and deployment pipelines, enabling safer, more scalable agentic AI implementations.
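The four layers described above can be sketched as separate, swappable components. In this hypothetical Python sketch, each layer is a plain callable and every step is appended to a decision log for auditability; the `Step` record, the `LayeredAgent` class, and the inventory example are assumptions for illustration, not a reference design.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Step:
    """One audited decision: what the agent saw, what it did, what happened."""
    observation: Any
    action: str
    outcome: Any


@dataclass
class LayeredAgent:
    perceive: Callable[[], Any]            # perception: data ingestion
    plan: Callable[[Any], list[str]]       # planning: observation -> ordered actions
    act: Callable[[str], Any]              # action: tool calls / API interactions
    check: Callable[[str, Any], bool]      # execution: did the action succeed?
    log: list[Step] = field(default_factory=list)  # decision log for audits

    def run(self) -> bool:
        observation = self.perceive()
        for action in self.plan(observation):
            try:
                outcome = self.act(action)
            except Exception as exc:       # safe fallback: record the error, do not crash
                outcome = exc
            self.log.append(Step(observation, action, outcome))
            if isinstance(outcome, Exception) or not self.check(action, outcome):
                return False               # halt instead of continuing blindly
        return True


# Usage: restock any item whose count falls below 5.
stock = {"widgets": 2, "gears": 9}


def restock(action: str) -> int:
    item = action.split(":", 1)[1]
    stock[item] += 10
    return stock[item]


agent = LayeredAgent(
    perceive=lambda: dict(stock),
    plan=lambda obs: [f"restock:{k}" for k, v in obs.items() if v < 5],
    act=restock,
    check=lambda action, outcome: isinstance(outcome, int) and outcome >= 5,
)
print(agent.run(), [s.action for s in agent.log])  # True ['restock:widgets']
```

Because each layer is injected, a team could replace the planner or a tool adapter without touching the others, which is the maintainability argument made above.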

Autonomy and decision making

Autonomy is the defining axis separating AI agents from AI assistants. AI agents are designed to decide when, what, and how to act, often across multiple domains, data sources, and tools. They rely on planning, goal reasoning, and environment monitoring to progress toward an objective. AI assistants, in contrast, mostly decide how to respond within a dialogue and how to fulfill a user request through guided steps. For an agent, decision-making involves evaluating candidate actions, predicting their outcomes, and selecting the most favorable path, sometimes under uncertainty introduced by noisy or incomplete data. Safety and governance are crucial here: agents should operate within constraints, provide explainability, and offer human-in-the-loop oversight when needed. Ai Agent Ops emphasizes the importance of defining ethical boundaries and compliance rules early. In practice, teams craft decision policies, risk thresholds, and escalation paths to ensure that autonomous actions align with business objectives and user expectations. The difference between an AI agent and an AI assistant, then, lies not only in capability but in the degree of autonomy and responsibility embedded in the system.
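Decision policies, risk thresholds, and escalation paths can be written as explicit, testable code rather than left implicit in a prompt. The sketch below is a hypothetical Python example; the risk scores, the two threshold values, and the three-way execute / ask-human / reject outcome are illustrative assumptions that a real team would calibrate per domain.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    EXECUTE = "execute"       # within policy: act autonomously
    ASK_HUMAN = "ask_human"   # escalation path: human-in-the-loop review
    REJECT = "reject"         # outside policy: never act


@dataclass(frozen=True)
class Candidate:
    action: str
    expected_value: float     # predicted benefit of taking the action
    risk: float               # estimated risk score in [0, 1]
    reversible: bool


def choose(candidates: list[Candidate],
           auto_risk_limit: float = 0.2,
           hard_risk_limit: float = 0.7) -> tuple[Optional[Candidate], Decision]:
    """Pick the highest-value action the policy allows, escalating when needed."""
    allowed = [c for c in candidates if c.risk < hard_risk_limit]
    if not allowed:
        return None, Decision.REJECT
    best = max(allowed, key=lambda c: c.expected_value)
    # Moderately risky or irreversible actions need human sign-off.
    if best.risk >= auto_risk_limit or not best.reversible:
        return best, Decision.ASK_HUMAN
    return best, Decision.EXECUTE


options = [
    Candidate("restart_service", expected_value=0.6, risk=0.1, reversible=True),
    Candidate("drop_table", expected_value=0.9, risk=0.9, reversible=False),
]
best, decision = choose(options)
print(best.action, decision.value)  # restart_service execute
```

Note that the higher-value `drop_table` is never considered: the hard risk limit filters it out before value is compared, so policy constrains the optimizer rather than the other way around.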

Tooling and orchestration

Much of the difference between AI agents and AI assistants lies in tooling and orchestration. Agents require integration with a diverse set of tools (APIs, databases, automation platforms) and a planning layer that can sequence calls, manage dependencies, and handle errors. This layer also includes state management to track progress, context, and outcomes across long-running tasks. AI assistants typically work with a narrower set of capabilities, focusing on text generation, information retrieval, and lightweight task execution within a defined conversation. Orchestration design for agents emphasizes modularity, fault tolerance, and observability, meaning the telemetry that shows teams why an action happened and how outcomes can be improved. Ai Agent Ops notes that well-designed agents use standardized interfaces, versioned APIs, and robust logging to simplify maintenance and security reviews. For teams weighing agents against assistants, the value of orchestration lies in predictable operational behavior and the ability to scale automation without compromising safety.
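A planning layer that sequences calls, manages dependencies, and handles errors can be approximated with a dependency graph plus retries. This hypothetical sketch uses the standard-library `graphlib.TopologicalSorter` (Python 3.9+) to order tool calls; the task names, the retry count, and the shape of the `state` dictionary are assumptions for illustration.

```python
from graphlib import TopologicalSorter
from typing import Callable


def orchestrate(tasks: dict[str, Callable[[dict], object]],
                depends_on: dict[str, set[str]],
                max_retries: int = 2) -> dict:
    """Run tool calls in dependency order, retrying failures.

    `state` records results and attempt counts so a long-running job can be
    inspected (observability) or resumed later.
    """
    graph = {name: depends_on.get(name, set()) for name in tasks}
    state: dict = {"results": {}, "attempts": {}, "failed": None}
    for name in TopologicalSorter(graph).static_order():
        for attempt in range(1, max_retries + 2):  # first try plus retries
            state["attempts"][name] = attempt
            try:
                # Standardized interface: each task receives earlier results.
                state["results"][name] = tasks[name](state["results"])
                break
            except Exception:  # a real system would retry only transient errors
                if attempt == max_retries + 1:
                    state["failed"] = name  # downstream tasks depend on this one
                    return state
    return state


# Usage: fetch fails once, then succeeds; clean and report run in order.
calls = {"fetch": 0}


def flaky_fetch(results: dict) -> list[int]:
    calls["fetch"] += 1
    if calls["fetch"] == 1:  # simulate one transient network failure
        raise TimeoutError("upstream timed out")
    return [5, 3, 8]


tasks = {
    "fetch": flaky_fetch,
    "clean": lambda r: [x for x in r["fetch"] if x > 4],
    "report": lambda r: f"{len(r['clean'])} records",
}
state = orchestrate(tasks, {"clean": {"fetch"}, "report": {"clean"}})
print(state["results"]["report"], state["attempts"])
```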

Interaction patterns and user experiences

Interaction patterns reveal much about the practical implications of choosing an agent or an assistant. AI assistants excel at natural, friendly dialogue, rapid information retrieval, and human-friendly interfaces. They shine when conversations guide users through tasks, explain data, or provide recommendations with caveats. AI agents, however, shape interactions around task progress, status dashboards, and feedback from external tools. Users observe automated progress, tool usage status, and the resolution of multi-step workflows. From a UX perspective, teams often design hybrid experiences in which the assistant captures the initial user intent and the agent executes the end-to-end process in the background, then reports results back to the user. This approach leverages the strengths of both modalities while keeping visible control points for users and operators. The difference between an AI agent and an AI assistant thus becomes a question of how much the user wants to see the system “doing work” versus “talking through decisions.”
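The hybrid pattern, in which the assistant captures intent and the agent does the work in the background, can be sketched with Python's standard-library `asyncio`. The keyword-based intent parser, the refund workflow steps, and the progress messages are hypothetical placeholders; the point is the shape of the hand-off and the progress updates relayed to the user.

```python
import asyncio
from typing import Optional


def parse_intent(message: str) -> Optional[dict]:
    """Assistant layer: turn free text into a structured request (toy rules)."""
    text = message.lower()
    if "refund" in text:
        order_id = next((w for w in text.split() if w.isdigit()), None)
        return {"task": "refund", "order_id": order_id}
    return None


async def agent_job(request: dict, progress: asyncio.Queue) -> str:
    """Agent layer: runs a multi-step workflow and reports each step."""
    for step in ("verify_order", "issue_refund", "notify_customer"):
        await asyncio.sleep(0)              # stand-in for a real tool call
        await progress.put(f"{step}: done")
    await progress.put(None)                # sentinel: job finished
    return f"Refund for order {request['order_id']} completed."


async def handle(message: str) -> list[str]:
    """Assistant front end: hand off to the agent, relay progress, then the result."""
    request = parse_intent(message)
    if request is None:
        return ["I can help with refunds. Which order?"]
    progress: asyncio.Queue = asyncio.Queue()
    job = asyncio.create_task(agent_job(request, progress))
    transcript = [f"Starting {request['task']}..."]
    while (update := await progress.get()) is not None:
        transcript.append(update)           # visible control point for the user
    transcript.append(await job)
    return transcript


print("\n".join(asyncio.run(handle("Please refund order 1042"))))
```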

Use cases by domain

Across industries, the use cases for autonomous agents and conversational assistants diverge. In operations and IT, AI agents automate incident response, capacity planning, and multi-system reconciliation. In product development, agents can orchestrate build pipelines, test suites, and deployment steps, enabling faster delivery with fewer human bottlenecks. AI assistants support customer service, internal help desks, and data discovery within dashboards. In finance, AI agents can monitor risk signals across datasets, trigger workflows, and report anomalies, while AI assistants can summarize portfolios, answer policy questions, and guide analysts through complex models. Choosing between an AI agent and an AI assistant thus becomes a strategic decision: adopt agents where end-to-end automation reduces risk and latency, or deploy assistants where conversational clarity and safety dominate. Ai Agent Ops emphasizes starting with clear MVPs that demonstrate measurable impact, then expanding scope with governance controls and incremental autonomy.

Evaluation, metrics, and risk

Evaluating AI agents and AI assistants requires multi-dimensional metrics. For agents, success criteria include automation coverage, task completion rate, orchestration latency, and resilience in error handling. For assistants, metrics focus on user satisfaction, response accuracy, in-dialogue task success rate, and safe conversation management. A combined evaluation should measure end-to-end outcomes: time to value, reduction in manual toil, and the quality of human-in-the-loop interventions. Risk assessment covers unintended actions, data privacy, and compliance. Observability is essential: telemetry, decision logs, and explainability improve trust and maintainability. Ai Agent Ops underlines the need for guardrails, escalation thresholds, and audit trails to mitigate risk while preserving the benefits of automation. The distinction is therefore not merely technical; it informs how organizations monitor, govern, and adapt their AI-enabled workflows over time.
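Several of the agent metrics named above fall out directly from per-run records once decision logs exist. The sketch below assumes a hypothetical `Run` record with four fields; defining automation coverage as "completed with no human intervention" and reporting median latency are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Run:
    completed: bool      # did the task reach its goal?
    automated: bool      # finished with no human intervention?
    escalated: bool      # handed to a human at any point?
    latency_s: float     # end-to-end orchestration latency in seconds


def agent_metrics(runs: list[Run]) -> dict[str, float]:
    """Aggregate per-run records into rates and a latency summary."""
    n = len(runs)
    if n == 0:
        raise ValueError("no runs to evaluate")
    return {
        "task_completion_rate": sum(r.completed for r in runs) / n,
        "automation_coverage": sum(r.completed and r.automated for r in runs) / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "median_latency_s": median(r.latency_s for r in runs),
    }


runs = [
    Run(completed=True, automated=True, escalated=False, latency_s=4.0),
    Run(completed=True, automated=False, escalated=True, latency_s=30.0),
    Run(completed=False, automated=False, escalated=True, latency_s=12.0),
    Run(completed=True, automated=True, escalated=False, latency_s=6.0),
]
print(agent_metrics(runs))
```

Here three of four runs completed, but only two completed without help, so completion rate (0.75) and automation coverage (0.5) tell different stories; tracking both keeps human-in-the-loop effort visible.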

Implementation considerations and pitfalls

Implementing AI agents requires careful planning around data access, authentication, and sandboxed environments. Key pitfalls include over-automation without proper validation, unclear ownership of outcomes, and inadequate logging that hides the decision trail. Designers should create bounded domains for initial deployments, define explicit success criteria, and establish rollback procedures for failed automations. For AI assistants, common challenges include maintaining conversation context, avoiding information leakage, and keeping responses aligned with policy. The distinction doubles as a practical guide: agents demand robust tool integration, strong governance, and continuous monitoring; assistants demand reliable dialogue design, user trust, and safety guarantees. Ai Agent Ops recommends phased pilots with measurable milestones and a clear plan for moving from pilot to production with proper risk controls and stakeholder alignment.
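Rollback procedures for failed automations are often built by pairing each step with a compensating action and undoing completed steps in reverse order when a later step fails (the saga pattern). The sketch below is a hypothetical, in-memory Python illustration of that idea; the step names and the account example are assumptions, and a production version would persist its log and handle failures inside compensations.

```python
from typing import Callable

Step = tuple[str, Callable[[], None], Callable[[], None]]  # (name, do, undo)


def run_with_rollback(steps: list[Step]) -> tuple[bool, list[str]]:
    """Execute steps in order; on failure, undo completed steps in reverse."""
    done: list[Step] = []
    log: list[str] = []
    for name, do, undo in steps:
        try:
            do()
            done.append((name, do, undo))
            log.append(f"did {name}")
        except Exception as exc:
            log.append(f"failed {name}: {exc}")
            for done_name, _, done_undo in reversed(done):
                done_undo()  # compensate in reverse order
                log.append(f"undid {done_name}")
            return False, log
    return True, log


# Usage: the vendor payment fails, so the earlier debit is reversed.
accounts = {"ops": 100, "vendor": 0}


def debit_ops() -> None:
    accounts["ops"] -= 40


def credit_ops() -> None:
    accounts["ops"] += 40


def pay_vendor() -> None:
    raise ConnectionError("vendor API unavailable")


ok, log = run_with_rollback([
    ("debit_ops", debit_ops, credit_ops),
    ("pay_vendor", pay_vendor, lambda: None),
])
print(ok, accounts, log)
```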

Roadmap and future trends

Looking ahead, the line between AI agents and AI assistants will continue to blur as hybrid systems blend autonomous action with conversational interfaces. Advances in multi-agent coordination, planning under uncertainty, and tool interoperability will enable more scalable agentic AI workflows. At the same time, governance models, explainability, and privacy controls will be central to responsible deployment. For teams, the choice between agents and assistants will shape how they structure product teams, allocate budgets, and plan deployment timelines. In practice, the most successful implementations will combine autonomous agents for core automation with AI assistants for user-facing interactions, wrapped in strong governance and safety practices. This balanced approach aligns with the 2026 Ai Agent Ops guidance on agentic AI development and risk management.

Comparison

| Feature | AI Agent | AI Assistant |
|---|---|---|
| Autonomy | High: plans, reasons about goals, and executes across tools | Low: conversational, user-driven, bounded scope |
| Decision-Making | Planning and goal-driven reasoning across environments | Response-first decisions within chat context |
| Tooling & Integration | Orchestrates APIs, databases, and services | Relies on user prompts to trigger actions |
| Context & State | Long-horizon state tracking across tasks | Short-term context within a single session |
| Learning & Adaptation | Can adapt plans based on environment feedback | Largely pattern-based optimization within scope |
| Use Case Suitability | End-to-end automation, orchestration at scale | Conversational support, data retrieval, guided tasks |
| Risk & Governance | Requires strong guardrails and audit trails | Safety managed primarily through conversation controls |

Positives

- Clarifies ownership: autonomy vs conversation

- Enables end-to-end automation at scale

- Improves efficiency through orchestration

- Supports modular, upgradeable architectures

What's Bad

- Increases design and integration complexity

- Higher risk if governance and safety are not baked in

- Requires ongoing maintenance of tools and pipelines

- Potential for scope creep if boundaries are not defined

AI agents are preferred for autonomous task orchestration; AI assistants excel where conversational depth and safety matter.

Choose AI agents when end-to-end automation and tool orchestration drive value. Choose AI assistants when you need reliable, user-friendly dialogue and guided interactions. A hybrid approach often yields the best balance, provided governance and safety controls are in place.

Questions & Answers

What is the difference between an AI agent and an AI assistant?

The difference between an AI agent and an AI assistant centers on autonomy and scope. An AI agent plans, reasons, and acts across tools to achieve goals, while an AI assistant engages in dialogue, answers questions, and carries out user requests within a defined boundary. This distinction guides architecture, testing, and governance decisions.

In short, agents automate and orchestrate; assistants chat and guide.

Can an AI assistant become an AI agent?

Yes, by extending the assistant’s capabilities with planning, tool use, and autonomous decision-making within safe guardrails. The transformation requires careful governance, state management, and escalation paths to prevent unsafe actions.

You can upgrade an assistant by adding autonomy in a controlled way.
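One way to add autonomy in a controlled way is to gate each tool behind an explicit autonomy level, so the same assistant can move from suggesting actions to executing them one tool at a time. In this hypothetical Python sketch, the `Autonomy` levels, the per-tool settings, and the `approve` callback are illustrative assumptions.

```python
from enum import IntEnum
from typing import Callable


class Autonomy(IntEnum):
    SUGGEST = 0   # assistant behaviour: describe the action, never run it
    CONFIRM = 1   # run only after a human approves
    AUTO = 2      # agent behaviour: run without asking


def perform(tool: str,
            args: dict,
            tools: dict[str, Callable[..., str]],
            levels: dict[str, Autonomy],
            approve: Callable[[str, dict], bool]) -> str:
    """Run, gate, or merely suggest a tool call depending on its autonomy level."""
    level = levels.get(tool, Autonomy.SUGGEST)  # unknown tools get the safest level
    if level is Autonomy.SUGGEST:
        return f"Suggestion: run {tool} with {args}"
    if level is Autonomy.CONFIRM and not approve(tool, args):
        return f"Skipped {tool}: not approved"
    return tools[tool](**args)


# Usage: lookups run automatically; cancellations need approval.
tools = {
    "lookup_order": lambda order_id: f"Order {order_id}: shipped",
    "cancel_order": lambda order_id: f"Order {order_id}: cancelled",
}
levels = {"lookup_order": Autonomy.AUTO, "cancel_order": Autonomy.CONFIRM}


def deny(tool: str, args: dict) -> bool:
    return False  # stand-in for a human reviewer who declines


print(perform("lookup_order", {"order_id": 7}, tools, levels, deny))
print(perform("cancel_order", {"order_id": 7}, tools, levels, deny))
print(perform("issue_refund", {"order_id": 7}, tools, levels, deny))
```

Raising a single tool from `CONFIRM` to `AUTO` after a successful pilot is a small, reviewable change, which fits the incremental path described in this answer.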

Which is better for enterprise automation?

It depends on goals. If end-to-end workflow automation is the aim, agents are generally better. If frontline user interaction and support are the priority, assistants excel. Large enterprises often deploy a hybrid model to balance automation with user engagement.

For many teams, a hybrid approach works best.

What architectures support AI agents?

Agent architectures typically include perception layers, planning and reasoning modules, action and orchestration layers, and robust observability. Safe integration, modularity, and clear ownership help manage complexity and risk.

Think modular stacks: perceive, plan, act, observe.

What are common risks with AI agents?

Key risks include unintended actions, data leakage, misconfigured tool access, and governance gaps. Mitigation strategies emphasize sandboxing, strict permissions, audit trails, and escalation to human oversight when uncertainty is high.

Guardrails and logs are essential.
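Strict permissions and audit trails can be enforced at the point where an agent calls a tool, rather than trusted to the model. The following hypothetical Python sketch wraps tool functions in a decorator that checks a per-agent allowlist and records every attempt, allowed or denied, as a JSON line; the agent IDs, tool names, and log format are assumptions for illustration.

```python
import functools
import json
import time
from typing import Any, Callable

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store
PERMISSIONS: dict[str, set[str]] = {
    "billing-agent": {"read_invoice"},  # least privilege: read-only access
}


class PermissionDenied(Exception):
    pass


def guarded(tool_name: str) -> Callable:
    """Decorator: check the caller's allowlist and audit every attempt."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(agent_id: str, *args: Any, **kwargs: Any) -> Any:
            allowed = tool_name in PERMISSIONS.get(agent_id, set())
            AUDIT_LOG.append(json.dumps({
                "ts": time.time(), "agent": agent_id, "tool": tool_name,
                "args": list(args), "kwargs": kwargs, "allowed": allowed,
            }))
            if not allowed:
                raise PermissionDenied(f"{agent_id} may not call {tool_name}")
            return fn(*args, **kwargs)
        return wrapper
    return decorator


@guarded("read_invoice")
def read_invoice(invoice_id: str) -> dict:
    return {"id": invoice_id, "amount": 120}


@guarded("delete_invoice")
def delete_invoice(invoice_id: str) -> None:
    print(f"deleting {invoice_id}")  # destructive: must never run unchecked


print(read_invoice("billing-agent", invoice_id="INV-9"))
try:
    delete_invoice("billing-agent", invoice_id="INV-9")
except PermissionDenied as exc:
    print("blocked:", exc)
print(len(AUDIT_LOG), json.loads(AUDIT_LOG[-1])["allowed"])  # 2 False
```

Logging denied attempts as well as successful ones matters: a spike in denials is often the earliest sign of a misconfigured or misbehaving agent.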

How should an organization transition from assistant to agent in a project?

Start with a bounded, high-value workflow, add autonomy gradually, and implement monitoring and governance. Establish clear success metrics and rollback plans, and ensure alignment with teams and stakeholders.

Move in small, controlled steps with safety checks.

Key Takeaways

- Define the core goal: automation vs assistance

- Map tool integrations early to support agents

- Establish strong guardrails for autonomous actions

- Pilot with bounded scopes before full-scale rollout

- Adopt a hybrid model that leverages both modalities