Grok AI Agent vs Code: A Practical Comparison

An analytical comparison of Grok AI agent vs code. Learn when agent-centric automation outperforms traditional coding, what the trade-offs are, and how to start implementing agentic AI workflows today.

Grok AI Agent vs Code pits autonomous, agent-centric automation against traditional, hand-written code. This comparison highlights how decision-making, orchestration, and governance differ, and when each approach delivers the most value. According to Ai Agent Ops, most teams benefit from a hybrid model that blends agent-based workflows with explicit coding for accuracy and safety. Read on to understand the core trade-offs and adopt a practical path to implement agentic AI workflows.

What grok ai agent vs code means for modern development

From a high-level perspective, grok ai agent vs code describes two paradigms for building and operating software: agent-centric automation versus traditional programmatic development. According to Ai Agent Ops, the shift toward agentic workflows reflects a growing emphasis on autonomous decision-making, dynamic orchestration, and task-centric intelligence. In practice, teams evaluate grok ai agent vs code by looking at how decisions are made, how work is sequenced, and how changes propagate across systems. This section lays out the conceptual landscape, then connects it to concrete engineering concerns such as reliability, governance, and developer experience. The Ai Agent Ops team emphasizes that the choice is not binary; most organizations adopt a hybrid approach where agents handle routine workflows while code maintains precise control over critical paths. For developers, product managers, and business leaders, understanding this distinction is the first step toward a practical implementation strategy.

Core Differences in Architecture

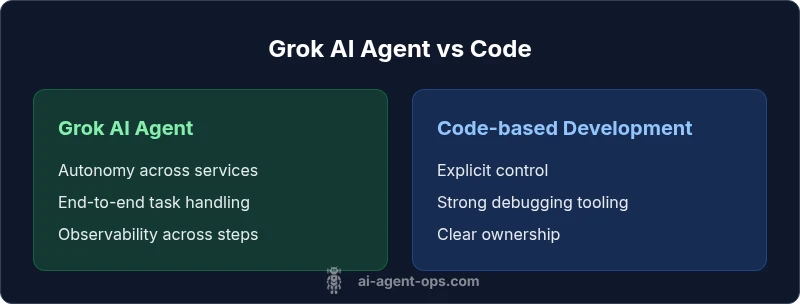

At the core, grok ai agent vs code pivots on where decisions are made and how behavior is orchestrated. An AI agent combines perception, planning, and action into a loop that can operate across services, data sources, and human-in-the-loop inputs. Code-based development, by contrast, encodes explicit instructions, error handling, and data flow directly in software modules. The result is a difference in abstraction level: agents favor intent-driven workflows and autonomous sequencing, while traditional code emphasizes deterministic control. For teams, this means rethinking how you model problems, assign responsibilities, and design interfaces between components. The Ai Agent Ops perspective highlights that the boundary between agent and code is fluid: you can wrap complex code paths inside agent plans, or extract repetitive agent steps into reusable code. The practical takeaway is to map tasks to the most appropriate abstraction based on reliability, governance, and speed to value.
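To make the perception, planning, and action loop concrete, here is a minimal sketch in Python. It is an illustration only: the `Agent` class, its method names, and the idea of "planning" by picking tools whose results are still missing are all assumptions made for this example, not a description of any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy perceive-plan-act loop. Every name here is hypothetical."""

    # Maps a tool name to a function that takes the current state.
    tools: dict[str, Callable[[dict], object]]
    history: list[str] = field(default_factory=list)

    def perceive(self, state: dict) -> dict:
        # Perception: in this sketch, just a snapshot of the current state.
        return dict(state)

    def plan(self, observation: dict) -> list[str]:
        # Planning: list the tools whose results are not in the state yet.
        return [name for name in self.tools if name not in observation]

    def act(self, step: str, state: dict) -> dict:
        # Action: run one tool and merge its result into a new state.
        self.history.append(step)
        return {**state, step: self.tools[step](state)}

    def run(self, state: dict, max_iterations: int = 10) -> dict:
        # Re-plan after every action until nothing is left to do.
        for _ in range(max_iterations):
            steps = self.plan(self.perceive(state))
            if not steps:
                break
            state = self.act(steps[0], state)
        return state
```

The equivalent code-based version would simply call the two or three functions in a fixed order. The loop only earns its keep when the set of steps, or their order, has to be decided at run time.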

The Value Equation: Time-to-Value, Cost, and ROI

In the Grok AI agent vs code decision, value comes from how quickly you can deliver working, measurable outcomes. Agents can reduce manual handoffs, accelerate iteration cycles, and provide a shared language for cross-team collaboration. However, initial setup, governance, and ongoing monitoring add new dimensions to cost. Instead of fixed price points, organizations should think in terms of value streams: where does automation create throughput, where might errors be introduced, and how will governance enforce safety constraints? Ai Agent Ops analysis shows that ROI depends heavily on modular design, governance, and the reuse of agent patterns across projects. When teams start with a small, well-scoped pilot that demonstrates measurable throughput gains, the friction of adoption drops and the business case becomes clearer. In summary, the learning curve is an investment that pays off as your automation footprint grows.

Best-fit Scenarios: When to pick grok ai agent

Not all tasks benefit equally from an AI agent approach. In the Grok AI agent vs code decision, agents shine in scenarios with well-defined intents, cross-system orchestration, and frequent reconfiguration. Examples include end-to-end onboarding workflows, incident response playbooks, data enrichment pipelines, and policy-driven decision making across services. When you need rapid experimentation and frequent rewrites of process logic, agents offer a faster path to value. Conversely, for deeply technical, performance-critical code paths, or for situations that demand absolute determinism, traditional coding often remains the safer choice. The decision should rest on a clear assessment of task complexity, required throughput, and governance constraints. The Ai Agent Ops team emphasizes documenting both the problem space and the decision boundaries to avoid drift as teams scale.
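One way to document those decision boundaries is to write them down as a small, reviewable function. The sketch below is a deliberately crude heuristic: the `Task` fields and the `choose_path` rule are assumptions chosen to mirror the criteria in this section, and a real assessment would weigh more factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a candidate task."""

    name: str
    cross_system: bool          # spans several services
    changes_often: bool         # process logic is reconfigured frequently
    needs_determinism: bool     # output must be exactly reproducible
    performance_critical: bool  # sits on a latency- or throughput-sensitive path


def choose_path(task: Task) -> str:
    """Return 'agent', 'code', or 'hybrid' for a task."""
    agent_fit = task.cross_system or task.changes_often
    code_fit = task.needs_determinism or task.performance_critical
    if agent_fit and code_fit:
        return "hybrid"  # agent orchestrates, code owns the critical step
    if agent_fit:
        return "agent"
    return "code"        # default to explicit code when nothing favors an agent
```

Keeping the rule in version control means a change to the boundary shows up in review rather than drifting silently.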

Integration Considerations: Tools, Platforms, and APIs

Whichever side of the Grok AI agent vs code decision you favor, you need to choose the right integration surface. Agents typically rely on orchestration layers, capability registries, and standardized APIs to communicate with services, databases, and human operators. Code-centric workflows rely on version control, CI/CD, and well-typed interfaces. When evaluating tools, consider factors such as modularity, support for retries and compensation, observability hooks, and security policies. Open architectures with pluggable agents encourage reuse across teams, while monolithic stacks risk becoming brittle. The goal is to design interfaces that minimize implicit assumptions and maximize testability. In practice, you might start with a lightweight agent template that calls a handful of services, then progressively add capabilities such as error handling, monitoring, and governance checks. Remember that the best approach aligns with your team's capabilities, risk tolerance, and strategic roadmap.
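"Retries and compensation" can sound abstract, so here is a minimal sketch of the idea. Each step pairs an action with an undo function, and when a step still fails after its retries, the steps that already completed are undone in reverse order. The function names and the `(name, action, compensate)` step shape are assumptions for illustration, not the API of any orchestration product.

```python
import time
from typing import Callable

# A step is (name, action, compensation); this shape is hypothetical.
Step = tuple[str, Callable[[], object], Callable[[], object]]


def call_with_retries(action: Callable[[], object], attempts: int = 3,
                      base_delay: float = 0.0) -> object:
    """Run action, retrying with exponential backoff on any exception."""
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except Exception:
            if attempt == attempts:
                raise  # out of retries: let the caller decide what to do
            time.sleep(base_delay * 2 ** (attempt - 1))


def run_workflow(steps: list[Step], attempts: int = 3,
                 base_delay: float = 0.0) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[Callable[[], object]] = []
    for _name, action, compensate in steps:
        try:
            call_with_retries(action, attempts, base_delay)
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensate)
    return True
```

A tool that supports this pattern natively saves you from hand-rolling it, which is why it is worth checking for during evaluation.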

Data, Privacy, and Security Considerations

Agents operate at the intersection of data, decisions, and actions. Any Grok AI agent vs code evaluation must address data handling policies, privacy constraints, and access controls. With agents that access sensitive systems, you need clear boundaries, credential management, and least-privilege governance. Treat model inputs and outputs as auditable records, and ensure you have consent, logging, and data minimization in place. Security-by-design should be embedded in every workflow, including input validation, safe execution boundaries, and robust error handling. The trade-off is between speed and safety: more autonomy can yield faster outcomes, but it also raises the bar for governance. Build a risk register that maps potential failure modes to preventative controls and recovery procedures. This approach helps teams maintain trust while pursuing automation.
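As a rough illustration of least privilege combined with audit logging, the sketch below gives each agent a credential that lists the tools it may call, checks every call against that list, and records the attempt either way. The names `Credential`, `invoke_tool`, and `ToolAccessDenied` are invented for this example. The audit entry stores argument names rather than values as one simple form of data minimization.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Credential:
    """Hypothetical per-agent credential with an explicit tool allowlist."""

    agent_id: str
    allowed_tools: frozenset[str]


class ToolAccessDenied(PermissionError):
    """Raised when an agent requests a tool outside its allowlist."""


def invoke_tool(cred: Credential, tool: str, args: dict,
                registry: dict[str, Callable[..., object]],
                audit_log: list[dict]) -> object:
    # A call is allowed only if the tool exists and is on the allowlist.
    allowed = tool in registry and tool in cred.allowed_tools
    # Record every attempt, including denied ones; log argument names only.
    audit_log.append({
        "agent": cred.agent_id,
        "tool": tool,
        "arg_names": sorted(args),
        "allowed": allowed,
    })
    if not allowed:
        raise ToolAccessDenied(f"{cred.agent_id} may not call {tool}")
    return registry[tool](**args)
```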

Observability and Debugging in Agent-based Workflows

Observability in agent-based workflows requires tracing across autonomous steps, not just isolated code blocks. You should capture end-to-end traces, event histories, decision rationale (where possible), and outcome signals. Structured logs, context that is carried from step to step, and standardized telemetry enable rapid debugging when things go awry. In practice, you'll want dashboards that show throughput by stage, error rates, and time-to-resolution for incidents that involve multiple services. When a failure occurs, the agent's plan should degrade gracefully, with explicit fallbacks and escalation paths. The goal is to translate complex agent behavior into understandable signals for engineers, operators, and stakeholders.
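A minimal sketch of what such structured, trace-correlated logging might look like follows. The `Trace` class and its field names are assumptions for illustration. A production setup would more likely use an established tracing library, but the shape of the data is similar: one trace ID shared by every step, plus stage, status, and an optional rationale.

```python
import json
import time
import uuid


class Trace:
    """Hypothetical per-workflow trace that emits one JSON line per event."""

    def __init__(self, workflow: str) -> None:
        self.trace_id = uuid.uuid4().hex  # shared by every event in this run
        self.workflow = workflow
        self.events: list[dict] = []

    def record(self, stage: str, status: str, rationale: str = "",
               **fields: object) -> str:
        event = {
            "trace_id": self.trace_id,
            "workflow": self.workflow,
            "stage": stage,
            "status": status,        # e.g. "ok" or "error"
            "rationale": rationale,  # why the agent chose this step, if known
            "ts": time.time(),
            **fields,
        }
        self.events.append(event)
        return json.dumps(event, sort_keys=True)  # ready for a log sink

    def error_rate(self) -> float:
        # Fraction of recorded events with status "error".
        if not self.events:
            return 0.0
        errors = sum(1 for e in self.events if e["status"] == "error")
        return errors / len(self.events)
```

Because every event carries the same `trace_id`, a dashboard can group events by workflow run and compute per-stage error rates without guessing which log lines belong together.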

Governance, Safety, and Compliance for Agent Workflows

Governance is the backbone of any scalable agent program. You should establish guardrails, escalation procedures, and policy checks that prevent unsafe actions. Compliance considerations include data residency, access auditing, and reproducibility of decisions. Use versioned agent templates, maintainable prompts or plans, and codified testing for change control. Cross-functional reviews (engineering, security, product, and legal) help align risk posture with business goals. A strong governance model also supports continuous improvement, where feedback loops inform updates to agent capabilities and code paths. Clear ownership and documented decision boundaries reduce drift as automation scales.
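A guardrail with an escalation path can be as small as a function that every proposed action must pass through before it runs. The sketch below is one possible shape, under stated assumptions: the `Policy` and `Decision` types, the `kind` and `approved_by` keys, and the deny-by-default rule are all choices made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # hand off to a human approver
    DENY = "deny"


@dataclass(frozen=True)
class Policy:
    """Hypothetical versioned policy; bump version on every change."""

    allowed: frozenset[str]
    needs_approval: frozenset[str]
    version: str = "1.0.0"


def check_action(action: dict, policy: Policy) -> Decision:
    kind = action.get("kind", "")
    if kind not in policy.allowed:
        return Decision.DENY  # deny by default: unknown actions never run
    if kind in policy.needs_approval and not action.get("approved_by"):
        return Decision.ESCALATE
    return Decision.ALLOW
```

Since the policy is plain data with a version, it can be tested and reviewed like any other code change.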

Implementation Roadmap: A Step-by-Step Path to Adopting Grok AI Agents

Begin with a frictionless pilot to demonstrate value.

- Step 1: Articulate a small, well-scoped objective that spans multiple services.

- Step 2: Assemble a minimal agent skeleton that can plan, execute, and report on the objective.

- Step 3: Integrate with a controlled set of tools and data sources; establish observability.

- Step 4: Test the end-to-end flow under varied conditions, documenting failure modes.

- Step 5: Gradually expand scope while enforcing governance checks and versioning.

- Step 6: Measure outcomes against predefined success criteria and adjust accordingly.

By following a disciplined rollout, teams can minimize risk and maximize learning from early iterations.
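Step 6 is easier to enforce when the success criteria are written down before the pilot starts. Here is a minimal sketch of that check. The `Criterion` type, the metric names you pass in, and the thresholds are all placeholders you would define yourself; nothing here prescribes which metrics to use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """Hypothetical success criterion agreed on before the pilot."""

    metric: str
    threshold: float
    higher_is_better: bool = True


def evaluate_pilot(metrics: dict[str, float],
                   criteria: list[Criterion]) -> dict[str, bool]:
    """Return pass/fail per criterion; a missing metric counts as a fail."""
    results: dict[str, bool] = {}
    for c in criteria:
        value = metrics.get(c.metric)
        if value is None:
            results[c.metric] = False
        elif c.higher_is_better:
            results[c.metric] = value >= c.threshold
        else:
            results[c.metric] = value <= c.threshold
    return results


def pilot_passed(results: dict[str, bool]) -> bool:
    # Require at least one criterion, and require all of them to pass.
    return bool(results) and all(results.values())
```

Treating a missing metric as a failure is a deliberate choice in this sketch: it pushes the team to set up measurement before expanding scope.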

Common Pitfalls and How to Avoid Them

Overreliance on agents without governance leads to drift and unsafe actions. Underestimating observability makes it hard to understand outcomes. Ambiguous ownership creates conflict between teams. Inadequate testing leads to hidden regressions when agent behavior changes. The antidote is to couple agent patterns with strong governance, rigorous testing, and clear ownership. Document the decision boundaries, build reusable templates, and implement progressive rollouts to control risk while extracting value.

Industry Trends and Ai Agent Ops Perspective

Industry trends show growing interest in agent orchestration and agentic AI across many sectors. From startups to enterprise teams, the emphasis is on reusable patterns, governance, and explainability. The Ai Agent Ops perspective is that agent-first strategies complement traditional development by reducing manual toil and enabling rapid experimentation. The brand emphasizes a pragmatic approach: start small, measure impact, and scale responsibly. As automation matures, teams will adopt hybrid architectures that blend agent autonomy with explicit control to meet reliability and safety requirements.

Quick-start Checklist

- Define a few high-value tasks to automate.

- Map tasks to agent, code, or hybrid paths.

- Set up basic observability and governance gates.

- Run a pilot, collect metrics, and iterate.

Comparison

| Feature | Grok AI Agent | Code-based Development |
|---|---|---|
| Approach | Agent-centric automation with perception, planning, and action | Explicit instructions encoded as code with fixed data flow |
| Automation Scope | End-to-end task handling across services | Code-level tooling and scripts for defined paths |
| Learning Curve | Moderate; requires understanding of agents and orchestration | Moderate to high; requires software engineering discipline |
| Debugging & Observability | End-to-end traces, decision history, and plan-level telemetry | Module-level logs and traditional debugging tooling |
| Speed to Prototype | Faster for iterative, cross-service workflows | Dependent on existing architecture and tooling |
| Maintenance Cost | Lifecycle management for agents and templates | Ongoing maintenance of pipelines and scripts |
| Security & Compliance | Policy-driven controls and access governance | Governance by coding standards and secure practices |
| Best For | Automation-oriented teams, rapid experimentation | Deterministic, performance-critical components |

Positives

- Accelerates automation by encapsulating decisions in reusable agents

- Promotes modular, reusable patterns across teams

- Improves observability across multi-step workflows

- Facilitates collaboration between product, engineering, and operations

Negatives

- Greater upfront integration effort and governance needs

- Agent behavior can be hard to predict without strong controls

- Requires new tooling and skills for lifecycle management

Grok AI Agent wins for automation-centric workloads; traditional coding remains superior for explicit, deterministic paths

Choose a Grok AI agent when you need scalable automation and rapid iteration across services. Preserve explicit code for mission-critical, performance-sensitive components. The Ai Agent Ops team recommends starting with a pilot to validate value before scaling.

Questions & Answers

What is grok ai agent vs code?

Grok AI agent vs code compares using autonomous AI agents to orchestrate work across systems versus writing explicit, traditional code. Agents aim to automate decision-making and task flow, while code focuses on deterministic execution. The choice depends on desired speed, flexibility, and governance requirements.

Grok AI agent vs code is about choosing between autonomous AI agents and traditional coding. It depends on how much you want to automate decisions and how much control you need over the workflow.

When should I choose an AI agent approach?

Choose an AI agent approach for workflows that are repetitive, cross-system, or frequently reconfigured. If you need rapid experimentation, dynamic orchestration, and human-in-the-loop decision making, agents can reduce hand-offs and speed up iteration.

Use an AI agent when tasks cross multiple systems or change often, and you want faster iteration.

Can AI agents replace coding altogether?

No. AI agents complement coding. They excel at automation and orchestration but require explicit code for critical paths, safety, and performance-sensitive parts. A hybrid approach often yields the best balance.

Not fully. Agents handle automation, while code remains for the core, safety-critical parts.

How do I measure ROI for grok ai agent vs code?

Measure ROI by tracking throughput, error reduction, time-to-value, and governance efficiency. Start with a small pilot to quantify gains in a controlled environment, then scale with clear success criteria.

Start with a small pilot and measure throughput and error reduction to gauge ROI.

What governance practices help when using AI agents?

Establish guardrails, escalation paths, access controls, and versioned templates. Include auditing, testing, and cross-functional reviews to align risk with business goals.

Put guardrails, audits, and cross-team reviews in place to manage risk.

What tools or platforms work best with grok ai agents?

Look for modular orchestration layers, standard APIs, and robust observability. Favor platforms that support reusable templates, policy checks, and secure credential management.

Choose tools that make it easy to reuse patterns and govern how agents operate.

Key Takeaways

- Orchestrate automation with agents for faster, cross-system workflows

- Balance agent-based patterns with explicit code for critical tasks

- Invest in governance and observability from day one

- Pilot small scopes to prove value and reduce risk

- Measure ROI through throughput gains and reliability improvements