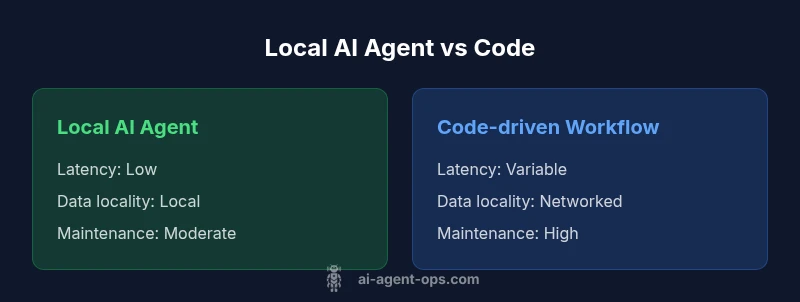

Local AI Agent vs Code: A Practical Side-by-Side Comparison

Explore a data-driven comparison of local AI agents vs code-driven workflows. We analyze latency, privacy, maintenance, and deployment considerations for developers and leaders.

Local AI agents and traditional code each have strengths. In this comparison, the Ai Agent Ops team highlights where a local AI agent can outperform code-driven workflows (speed of iteration, offline reliability, and secure data handling) while noting tradeoffs in debugging complexity and setup overhead. For many teams, the right choice depends on control, scale, and latency requirements.

Overview: Defining local AI agent vs code in modern development

In AI-enabled development, the phrase "local AI agent vs code" captures two fundamentally different paradigms for building intelligent software. According to Ai Agent Ops, a local AI agent runs with components that can operate on a developer's workstation or an on-prem server, preserving data locality and enabling offline capability. By contrast, traditional code-first workflows rely on explicit logic implemented in software you ship and update through version control. This article compares these approaches through practical criteria: latency, data governance, debugging, deployment, and maintainability. We ground the discussion in real-world patterns developers encounter when building agentic AI workflows, and we explain how organizations should think about tradeoffs when choosing between a local AI agent and code-driven systems.

Core decision criteria: what matters most

When weighing local AI agents against code-driven workflows, several criteria consistently determine success. First is latency and user-perceived responsiveness: a local agent often delivers lower latency for interactive tasks, while cloud-hosted code adds network round-trips but can scale compute and parallelism on demand. Data locality and privacy are next: local agents enable on-device inference and stricter data residency, whereas code pipelines may rely on centralized data stores. Debuggability and observability follow closely; traditional code often has mature tooling, while agent workflows demand new instrumentation for tracing decisions and prompts. Maintenance and upgrade cycles matter: local agents require hardware considerations and periodic model updates, while code-based pipelines depend on dependency management and CI/CD complexity. Finally, deployment complexity and ecosystem maturity influence risk and time-to-value. Ai Agent Ops emphasizes analyzing these dimensions in the context of your team’s skills and governance requirements, not just theoretical advantages.

Architecture and integration patterns

Architecting a local AI agent vs code setup involves choosing where logic lives and how components communicate. Local agents typically run as embedded components within an application, a container on a workstation, or an on-prem service that can access local data stores. They often use a modular agent-core that handles perception, reasoning, and action layers, with prompts and policies stored locally. Code-driven workflows, by contrast, assemble pipelines using orchestrators, APIs, and microservices. Integration points include data connectors, authentication layers, and event-driven triggers. Best practice is to design for clear separation between decision logic (agent prompts and policies) and orchestration logic (API calls, data flows, error handling). This separation reduces coupling, simplifies testing, and makes it easier to swap implementations if requirements shift. From Ai Agent Ops’ perspective, a hybrid pattern—agentic components coordinated by robust code—can offer the best of both worlds when carefully designed.
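The separation between decision logic and orchestration logic described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the names `Decision`, `DecisionPolicy`, `RuleBasedPolicy`, and `Orchestrator` are hypothetical, and the rule-based policy merely stands in for an agent backed by a local model.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass(frozen=True)
class Decision:
    action: str  # name of the action the orchestrator should run
    reason: str  # short explanation, useful for logs and audits


class DecisionPolicy(Protocol):
    """Decision logic: could wrap a local model, prompts, or plain rules."""

    def decide(self, observation: dict) -> Decision: ...


class RuleBasedPolicy:
    """Hypothetical stand-in for an agent; a real one might call a local model."""

    def decide(self, observation: dict) -> Decision:
        if observation.get("disk_free_pct", 100) < 10:
            return Decision("cleanup", "disk nearly full")
        return Decision("noop", "nothing to do")


class Orchestrator:
    """Orchestration logic: maps decisions to handlers and handles unknowns."""

    def __init__(self, policy: DecisionPolicy,
                 handlers: dict[str, Callable[[dict], str]]):
        self.policy = policy
        self.handlers = handlers

    def run(self, observation: dict) -> str:
        decision = self.policy.decide(observation)
        handler = self.handlers.get(decision.action)
        if handler is None:
            # The policy asked for something the orchestrator cannot do.
            return f"unknown action: {decision.action}"
        return handler(observation)
```

Because the orchestrator only depends on the `decide` method, the rule-based policy could later be swapped for a model-backed one without touching the handlers, which is the decoupling the paragraph argues for.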

Performance and reliability considerations

Performance characteristics differ between local AI agents and code-centric workflows. Local agents often provide rapid feedback loops for interactive tasks because data stays on the device or within a local network, minimizing round-trips to distant services. However, local inference can be constrained by hardware capacity, model size, and memory limits. Code-driven pipelines can leverage scalable cloud resources, enabling more complex processing, batch jobs, and broad parallelism, but rely on network connectivity and external service SLAs. Reliability hinges on deterministic behavior, robust error handling, and clear rollback strategies. Agents require careful handling of non-determinism in prompts, while code pipelines need idempotent operations and strong observability. A practical approach is to implement strict timeouts, clear fallback paths, and comprehensive testing across both paths so users observe consistent outcomes under varying conditions.
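One way to combine strict timeouts with a clear fallback path is sketched below, using only the Python standard library. The function name `call_with_fallback` and the idea of passing the primary and fallback paths as plain callables are assumptions made for illustration; which path is "primary" (local agent or remote pipeline) depends on your setup.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def call_with_fallback(primary: Callable[[str], str],
                       fallback: Callable[[str], str],
                       request: str,
                       timeout_s: float) -> tuple[str, str]:
    """Return (result, path), where path is 'primary' or 'fallback'."""
    executor = ThreadPoolExecutor(max_workers=1)
    future = executor.submit(primary, request)
    try:
        return future.result(timeout=timeout_s), "primary"
    except Exception:
        # Covers both a timeout and any error raised by the primary path.
        return fallback(request), "fallback"
    finally:
        # Do not block on a slow primary call. Note that a Python thread
        # cannot be forcibly cancelled; it finishes in the background.
        executor.shutdown(wait=False)
```

Returning the path alongside the result makes it easy to log how often the fallback is taken, which helps verify that users see consistent outcomes on both paths.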

Security, governance, and compliance

Security considerations for local AI agents center on data residency, access control, and auditability. When data remains on-prem or on a trusted device, organizations can enforce tighter data governance and reduce exposure to external threats. On the other hand, code-driven pipelines often benefit from mature security tooling, centralized secrets management, and formal compliance controls implemented in CI/CD processes. Ai Agent Ops notes that a thoughtful design includes isolating agent components, encrypting local data at rest, and implementing strict prompt and model access controls. Logging and auditing should capture who initiated actions, what data could be accessed, and how decisions were derived, to satisfy regulatory requirements without compromising performance.
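As a rough sketch of the audit logging described above, the following function builds one JSON line per agent action. The field names and the choice to store a SHA-256 hash of the prompt instead of its text are illustrative assumptions, not a compliance standard; your regulatory context may require different fields.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(actor: str, action: str, data_scopes: list[str],
                 prompt: str, model_version: str) -> str:
    """Build one JSON audit line: who acted, what data was in scope,
    and which prompt and model version produced the decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "data_scopes": sorted(data_scopes),
        # Hash the prompt so the log can prove which prompt was used
        # without copying potentially sensitive text into the log.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "model_version": model_version,
    }
    return json.dumps(record, sort_keys=True)
```

Each line can be appended to a local, access-controlled log file and later shipped to a central store if policy allows.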

Developer experience and tooling

Tooling for local AI agents has matured but remains distinct from traditional software development. Developers must manage model packs, prompt templates, policy rules, and environment configurations locally or within a controlled sandbox. Debugging is more exploratory, demanding robust instrumentation to trace decision paths and verify that prompts produce expected outcomes. For code-based workflows, developers rely on established IDEs, unit tests, integration tests, and deployment pipelines. The most successful teams bring these worlds together with hybrid tooling: unit tests for components, observable event logs for agent decisions, and CI/CD canaries that validate end-to-end behavior when updating models or prompts. In practice, invest in test doubles for agents, structured prompts, and monitoring dashboards that surface performance trends and failure modes.
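A test double for an agent can be very small. The sketch below assumes a hypothetical agent interface with a single `complete(prompt)` method; `FakeAgent` and `triage_ticket` are illustrative names, and a real agent client will likely expose a different API.

```python
class FakeAgent:
    """Test double: returns scripted replies and records the prompts it saw."""

    def __init__(self, replies: dict[str, str], default: str = "UNKNOWN"):
        self.replies = replies
        self.default = default
        self.calls: list[str] = []

    def complete(self, prompt: str) -> str:
        self.calls.append(prompt)
        return self.replies.get(prompt, self.default)


def triage_ticket(agent, ticket_text: str) -> str:
    """Code under test: asks the agent for a label and validates the reply."""
    prompt = f"Classify this ticket as BUG or FEATURE: {ticket_text}"
    label = agent.complete(prompt).strip().upper()
    # Never trust free-form model output; fall back to human review.
    return label if label in {"BUG", "FEATURE"} else "NEEDS_REVIEW"
```

With the fake in place, unit tests can check both the structured prompt that was sent and how the surrounding code handles unexpected replies, without loading a model.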

Deployment scenarios and decision framework

Choosing between local AI agents and code-based approaches depends on application domain, data sensitivity, and latency requirements. Scenarios favoring local agents include privacy-centric applications, offline field devices, or edge computing environments where network access is limited. Scenarios favoring code-driven workflows include complex data engineering tasks, scalable software-as-a-service platforms, and teams already operating mature CI/CD ecosystems with strong governance. A pragmatic decision framework starts with a risk/benefit map: list critical tasks, required latency, data residency needs, and available expertise. Then assess hardware and operational costs, maintenance burdens, and long-term scalability. Ai Agent Ops recommends building a simple decision matrix to compare options against your organization’s strategic priorities before committing to one path.
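A decision matrix of this kind can be expressed as a weighted average. The sketch below is one possible scoring scheme, not a method prescribed by Ai Agent Ops; the function name `score_options` is hypothetical, and the weights and ratings in the usage example are placeholders to replace with your own assessments.

```python
def score_options(weights: dict[str, float],
                  ratings: dict[str, dict[str, float]]) -> dict[str, float]:
    """Weighted average rating per option.

    weights: importance of each criterion (any positive scale).
    ratings: option name -> {criterion -> rating}, e.g. on a 1 to 5 scale.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {
        option: sum(weights[c] * per_criterion[c] for c in weights) / total
        for option, per_criterion in ratings.items()
    }


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    weights = {"latency": 3, "data_residency": 5, "scalability": 2}
    ratings = {
        "local_agent": {"latency": 4, "data_residency": 5, "scalability": 2},
        "code_workflow": {"latency": 3, "data_residency": 3, "scalability": 5},
    }
    print(score_options(weights, ratings))
```

The scores are only as good as the ratings behind them, so it is worth recording who assigned each rating and why.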

Practical guidelines and patterns

To operationalize either path, start with incremental pilots that test core capabilities before broad adoption. For local AI agents, pilot with a narrow domain, measure latency, and validate offline reliability. For code-driven pipelines, pilot with a modular service, ensure robust tracing, and establish clear rollback criteria. Promote a common vocabulary across teams to describe agent behavior, data flows, and decision boundaries. Establish governance around prompts, model versions, and data exposure. Finally, design for evolvability: maintain a flexible architecture that can shift from local to hybrid to cloud-based approaches as needs change.
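For the latency measurement step of a pilot, a small timing harness is often enough to start. The sketch below assumes the task under test can be wrapped in a zero-argument callable; `measure_latency_ms` is a hypothetical helper, and the percentile uses the simple nearest-rank method.

```python
import math
import statistics
import time
from typing import Callable


def measure_latency_ms(task: Callable[[], object], runs: int = 20) -> dict[str, float]:
    """Run the task repeatedly and report p50, p95, and max latency in ms."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Nearest-rank percentile: smallest sample covering 95% of the runs.
    p95_index = math.ceil(0.95 * len(samples)) - 1
    return {
        "p50": statistics.median(samples),
        "p95": samples[p95_index],
        "max": samples[-1],
    }
```

Running the same harness against the local agent and the code-driven path, on the same inputs, gives a like-for-like comparison instead of relying on assumptions about which is faster.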

Troubleshooting and risk management

When things go wrong, structure your troubleshooting around sentinel signals: latency spikes, inconsistent results, and data leakage. For local agents, verify the correctness of prompts, cached data, and hardware health. For code-driven systems, check CI/CD pipelines, API dependencies, and external service availability. Build a risk register that catalogs potential failure modes, their likelihood, and mitigation strategies. Regularly test failover and recovery procedures so that both paths remain resilient under stress. Ai Agent Ops emphasizes documenting failure modes and remediation playbooks so teams can respond quickly without ad-hoc improvisation.
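A risk register can start as a plain data structure before it becomes a spreadsheet or a tool. In the sketch below, the 1 to 5 scales and the `impact` field are assumptions added for illustration (the text above names only failure modes, likelihood, and mitigation), and `Risk` and `prioritized` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (frequent); illustrative scale
    impact: int      # 1 (minor) to 5 (severe); assumed extra field
    mitigation: str

    @property
    def score(self) -> int:
        # Simple likelihood x impact product, a common prioritization heuristic.
        return self.likelihood * self.impact


def prioritized(register: list[Risk]) -> list[Risk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(register, key=lambda r: r.score, reverse=True)
```

Reviewing the top of the prioritized list at each release is one lightweight way to keep remediation playbooks current.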

Comparison

| Feature | Local AI Agent | Code-driven Workflow |
|---|---|---|
| Latency | Low latency for interactive tasks (on-device or local network) | Depends on network; can be higher but scalable with cloud resources |
| Data locality | Data processed locally; strong privacy controls | Typically cloud-first; on-prem options exist but complex |
| Offline capability | Yes, if hardware and model support it | Often requires connectivity; offline viable with careful architecture |
| Debuggability | Challenging; requires instrumentation for prompts and policies | Mature tooling; deterministic pipelines easier to trace |
| Security/compliance | Stronger data sovereignty possible; customizable access controls | Centralized security controls; depends on deployment model |
| Maintenance | Hardware updates and model refresh cycles | CI/CD maintenance and dependency management |
| Resource usage | Local compute and memory constraints; may require GPUs | Elastic cloud resources; pay-as-you-go pricing can vary |
| Deployment complexity | Requires local deployment setup; ecosystem varies | Standard software deployment pipelines with orchestration |
| Best for | Privacy-sensitive, offline capable scenarios | Scalable, process-heavy pipelines with strong tooling |

Positives

- Improved data privacy and offline capability

- Faster iteration cycles in interactive tasks

- Tighter integration with host applications

- Reduced network dependency and potential costs

Negatives

- Higher upfront hardware and maintenance costs

- More complex debugging and observability

- Limited by local compute capacity and model size

- Patch management and updates can be burdensome

Local AI agents excel when privacy and offline capability matter; code workflows excel for scalability and mature tooling

Choose local AI agents for privacy-sensitive, offline or edge scenarios. Opt for code-driven workflows when you need scalable pipelines, broad toolchain support, and predictable governance. The best path may be a hybrid approach that combines agentic decisions with solid, event-driven orchestration.

Questions & Answers

What is a local AI agent and how does it differ from traditional code?

A local AI agent is a modular component that can run on-device or on-prem, making decisions based on internal policies and prompts. Traditional code relies on explicit logic implemented in software with standard programming constructs. The two differ in where the intelligence resides, how data is processed, and how updates are delivered. In practice, teams often hybridize both to balance control and scalability.

A local AI agent runs where your data lives and makes decisions locally, while traditional code lives in software and updates via version control. Think of the agent as a decision layer on top of your existing software stack.

When should I choose a local AI agent over code?

Choose a local AI agent when privacy, offline operation, or low-latency interaction are critical. If data must stay on premises or on a device, a local agent can minimize data exposure and network dependence. For mature, scalable workflows, traditional code with robust pipelines may still be preferable.

Pick a local AI agent if you need privacy and fast, offline decisions. If you require scalable, well-supported pipelines, code often wins.

What are the main security considerations with local AI agents?

Security focuses on data residency, access control, and prompt governance. Local storage requires encryption, strict role-based access, and audit trails. Ensure model updates and prompts are versioned, and monitor data flows to prevent leakage.

Security for local AI agents means keeping data on-device, encrypting storage, and auditing prompts and access.

Can local AI agents work offline?

Yes, offline operation is possible if the device has sufficient compute and a locally stored model. You'll need careful handling of updates and periodic synchronization when connectivity resumes. Offline capability trades off some freshness for resilience.

Absolutely. If your device can run the model and you can update it later, offline use is feasible.

What deployment patterns work best for local AI agents?

A hybrid pattern often works best: agent logic runs locally for responsiveness, while orchestration and data pipelines run in managed services. This approach balances privacy with scalability and enables phased migrations as requirements evolve.

A hybrid setup—agents run locally for speed, while the rest of the work goes through reliable services—works well for many teams.

How do I evaluate cost when comparing local AI agents to code?

Cost considerations include hardware and maintenance for local agents versus cloud compute and licensing for code-based workflows. A practical approach is to estimate total ownership costs over the project lifecycle, including operational overhead and potential downtime. Avoid treating price as the sole driver; include risk, governance, and time-to-market factors.

Compare hardware and maintenance costs for local agents with cloud and license costs for code, then weigh governance and risk.
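The total-ownership estimate mentioned in this answer reduces to simple arithmetic. The sketch below assumes a deliberately simplified model (one upfront cost plus a flat monthly running cost); `total_cost` and `break_even_month` are hypothetical helpers, and real estimates should also account for downtime, staffing, and the risk and governance factors noted above.

```python
from typing import Optional


def total_cost(upfront: float, monthly: float, months: int) -> float:
    """Cumulative cost after a number of months under a flat monthly rate."""
    if months < 0:
        raise ValueError("months must be non-negative")
    return upfront + monthly * months


def break_even_month(local_upfront: float, local_monthly: float,
                     cloud_upfront: float, cloud_monthly: float) -> Optional[float]:
    """Month at which cumulative local cost stops exceeding cloud cost.

    Returns None when the local option has no monthly saving with which
    to recoup its upfront cost.
    """
    monthly_saving = cloud_monthly - local_monthly
    if monthly_saving <= 0:
        return None
    extra_upfront = local_upfront - cloud_upfront
    return max(0.0, extra_upfront / monthly_saving)
```

If the break-even point falls beyond the expected project lifetime, the hardware investment for a local agent is hard to justify on cost alone, though privacy or offline requirements may still decide the matter.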

Key Takeaways

- Assess core priorities: latency, privacy, maintainability

- Prefer local AI agents for offline and data control

- Choose code-based workflows for scale and mature tooling

- Invest in debugging, observability, and governance

- Evaluate total cost of ownership and team readiness