Gemini AI Agent vs Code: A Practical Developer Guide

An analytical, developer-focused comparison of Gemini AI Agent versus traditional coding in VS Code, with practical guidance on when to automate with agentic AI, how to design governance, and how to blend agent workflows with hand-coded components.

This guide compares an agentic AI such as Gemini AI Agent with traditional hand-written code. Gemini automates tasks and orchestrates actions across tools, while hand-coded solutions emphasize explicit logic and control. The sections below highlight when to choose agentic AI and when to write code, weighing speed, reliability, and governance.

Gemini AI Agent: Concept and Scope

Gemini AI Agent represents a class of agentic AI that can autonomously perform tasks, reason over context, and orchestrate actions across a suite of tools and data sources. In practice, Gemini acts as a workflow coordinator rather than a single function: teams specify goals, constraints, and safety guards, and the agent decides which steps to execute. This agentic paradigm is frequently used to coordinate API calls, data transformations, and human-in-the-loop approvals, which reduces the boilerplate scripting required to chain services. For developers weighing Gemini AI Agent against traditional coding, the central questions are how autonomous the agent should be, which tools it should orchestrate, and what safety mechanisms are necessary to prevent unintended outcomes. Ai Agent Ops has observed teams that pair Gemini with governance overlays to speed automation while preserving control. The rest of this guide focuses on practical capabilities and real-world constraints rather than hype.

Traditional Coding with VS Code: Foundations and Trade-offs

Traditional coding environments, including VS Code, rely on explicit instructions, precise control flow, and deterministic execution. Developers write programs in a general-purpose language, assemble libraries, and integrate services through well-defined interfaces. The contrast in day-to-day practice is pronounced: with code you specify exact steps, error handling, and edge-case behavior; with an agent you describe goals and constraints, and the agent determines its own path within guardrails. VS Code remains the central IDE for building, testing, and deploying software, offering extensive extensions, debuggers, and linters that help enforce quality, reproducibility, and audit trails. However, maintaining large, dynamic codebases can incur heavy engineering overhead, because the number of failure modes grows with complexity. Many teams find the most productive setups blend the two: agents orchestrate workflows while critical logic lives in well-tested code components.

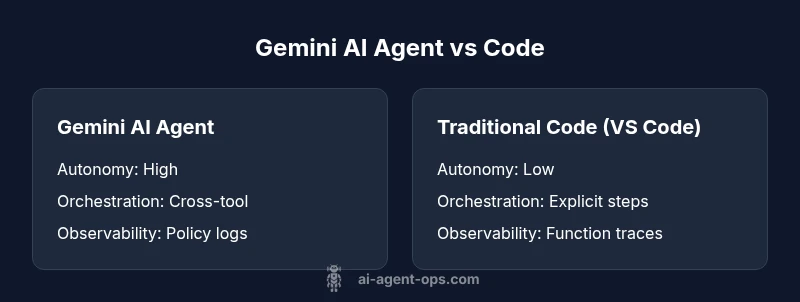

Autonomy vs Explicit Control: The Key Differentiator

At the heart of the comparison is a trade-off between autonomy and explicit control. Agentic AI can propose, execute, and adjust sequences of actions across tools with minimal handholding, which accelerates delivery and enables rapid experimentation. In contrast, hand-coded solutions provide precise determinism and easier reasoning about every step. The decision often hinges on risk appetite: for routine, rules-based processes (like data ingestion pipelines or multi-API orchestration), agents shine; for safety-critical decisions or highly regulated domains, explicit code paths are preferred. Teams should define guardrails, such as approval steps, rate limits, and fallback strategies, to ensure that autonomy remains bounded. When designed thoughtfully, the choice becomes less about replacing code and more about coordinating it effectively within a hybrid architecture.
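The three guardrails named above (approval steps, rate limits, fallback strategies) can be sketched as a small wrapper around any agent action. This is a minimal illustration that uses only the Python standard library. The `Guardrail` class, its hook names, and the example actions are hypothetical and are not part of any Gemini API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Guardrail:
    """Hypothetical wrapper that bounds autonomy for one class of actions."""
    max_calls_per_minute: int
    needs_approval: Callable[[str], bool]  # policy hook (assumed)
    approve: Callable[[str], bool]         # human-review hook (assumed)
    _calls: List[float] = field(default_factory=list)

    def run(self, name: str, action: Callable[[], str],
            fallback: Callable[[], str]) -> str:
        now = time.monotonic()
        # Sliding window: keep only call timestamps from the last 60 seconds.
        self._calls = [t for t in self._calls if now - t < 60]
        if len(self._calls) >= self.max_calls_per_minute:
            return fallback()              # rate limit reached
        if self.needs_approval(name) and not self.approve(name):
            return fallback()              # reviewer declined
        self._calls.append(now)
        try:
            return action()
        except Exception:
            return fallback()              # the action itself failed


guard = Guardrail(
    max_calls_per_minute=2,
    needs_approval=lambda name: name.startswith("delete"),
    approve=lambda name: False,            # stand-in for a real reviewer
)
r1 = guard.run("read_report", lambda: "ok", lambda: "fallback")
r2 = guard.run("delete_table", lambda: "deleted", lambda: "fallback")
r3 = guard.run("read_report", lambda: "ok", lambda: "fallback")
r4 = guard.run("read_report", lambda: "ok", lambda: "fallback")
```

In this sketch a declined approval does not consume rate-limit budget. Whether it should is a policy decision each team would make for itself.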

Orchestration and Tool Integration in Gemini

One of the strongest selling points of Gemini AI Agent is its ability to orchestrate tools, services, and data sources across a workflow. Instead of writing bespoke glue logic for each integration, engineers can define objectives, prompts, and constraints, and let the agent handle calls to APIs, data transforms, and decision branches. The integration surface matters: reliable adapters, well-defined schemas, idempotent operations, and clear provenance trails are essential for maintainable automation. When designing the integration, map tool capabilities to agent actions and implement robust fallback paths in case a tool becomes unavailable. The agent's policy layer should also enforce safety checks, such as permission scopes, rate limits, and escalation when human review is required. This approach can dramatically reduce repetitive coding toil while preserving governance discipline.
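One way to picture the mapping from agent actions to tools, with idempotent calls and a fallback path, is a small registry like the one below. It is only a sketch: `ToolRegistry`, the `send_email` action, and both adapters are invented for illustration, and a real system would persist idempotency keys rather than hold them in memory.

```python
from typing import Callable, Dict, List

Tool = Callable[[dict], dict]


class ToolRegistry:
    """Hypothetical registry: one agent action maps to a primary tool plus fallbacks."""

    def __init__(self) -> None:
        self._tools: Dict[str, List[Tool]] = {}
        self._results: Dict[str, dict] = {}  # in-memory idempotency cache

    def register(self, action: str, *tools: Tool) -> None:
        self._tools[action] = list(tools)

    def call(self, action: str, payload: dict, idempotency_key: str) -> dict:
        # A repeated key returns the stored result instead of re-running the tool.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        errors = []
        for tool in self._tools.get(action, []):
            try:
                result = tool(payload)
            except ConnectionError as exc:
                errors.append(str(exc))      # tool unavailable, try the next one
                continue
            self._results[idempotency_key] = result
            return result
        raise RuntimeError(f"no tool available for {action!r}: {errors}")


calls = {"backup": 0}

def primary(payload: dict) -> dict:          # simulated outage
    raise ConnectionError("primary unavailable")

def backup(payload: dict) -> dict:
    calls["backup"] += 1
    return {"status": "sent", "to": payload["to"]}

registry = ToolRegistry()
registry.register("send_email", primary, backup)
first = registry.call("send_email", {"to": "ops@example.com"}, "job-42")
second = registry.call("send_email", {"to": "ops@example.com"}, "job-42")
```

Because the second call reuses the key `job-42`, the backup adapter runs only once, which is the property that makes retries safe.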

Observability, Debugging, and Safety

Observability is critical in agent-driven workflows. With Gemini, developers should instrument action logs, decision rationales, and outcome signals to understand why the agent chose a path. Debugging becomes a matter of tracing policy decisions, tool responses, and failure modes across multiple components, rather than stepping through a single function. Teams running agents in practice emphasize end-to-end tracing, event-driven debugging, and structured logging to diagnose issues quickly. Safety mechanisms such as input validation, sandboxed tool calls, and explicit escalation rules help prevent catastrophic automation errors. Regular audits of prompts, policies, and tool configurations are essential to maintain confidence as the system evolves.
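As a rough illustration of structured logging for agent decisions, the sketch below emits one JSON record per step, all sharing a trace id so a run can be followed end to end. The `DecisionTrace` class, the field names, and the example tools (`crm_api`, `geo_api`) are assumptions made for this example, not a prescribed schema.

```python
import json
import time
import uuid
from typing import List


class DecisionTrace:
    """Hypothetical collector: one structured record per agent step."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.records: List[dict] = []

    def log(self, step: str, tool: str, rationale: str, outcome: str) -> str:
        record = {
            "trace_id": self.trace_id,
            "seq": len(self.records),
            "ts": time.time(),
            "step": step,
            "tool": tool,
            "rationale": rationale,  # why the agent chose this path
            "outcome": outcome,      # e.g. "ok", "error", "escalated"
        }
        self.records.append(record)
        line = json.dumps(record, sort_keys=True)
        print(line)                  # in production, send to a log pipeline
        return line

    def failures(self) -> List[dict]:
        """Return every step whose outcome was not "ok"."""
        return [r for r in self.records if r["outcome"] != "ok"]


trace = DecisionTrace()
trace.log("fetch", "crm_api", "customer id present in request", "ok")
trace.log("enrich", "geo_api", "address is missing a region", "error")
failed_steps = [r["step"] for r in trace.failures()]
```

Keeping the rationale next to the tool response in the same record is what lets a reviewer reconstruct why a path was taken, not just what happened.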

Governance, Compliance, and Risk Management

Governance plays a central role when deploying agentic AI in production. Gemini AI Agent can introduce new risk surfaces around data access, tool permissions, and unpredictable agent behavior. Organizations often adopt policy-based controls, runbooks, and safety reviews to keep automation aligned with compliance requirements. Common guardrails include role-based access, approval gates for high-stakes actions, and automatic rollback strategies. Data handling should follow organizational privacy standards, with clear data lineage and audit trails. Regular security testing, including prompt-injection tests and other adversarial testing of agents, helps reduce the risk of unexpected behaviors. This section highlights practical governance patterns that support scalable, trustworthy automation.
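Role-based access and automatic rollback can be combined in a few lines. The sketch below assumes each step declares the permission scope it needs and supplies its own undo function (a compensating action). The role names, scopes, and `run_with_rollback` helper are all hypothetical.

```python
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical role-to-scope mapping; real deployments would load this from policy.
ROLE_SCOPES: Dict[str, Set[str]] = {
    "agent-readonly": {"read"},
    "agent-operator": {"read", "write"},
}

# Each step is (required scope, do, undo).
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_rollback(role: str, steps: List[Step]) -> bool:
    """Run steps in order; on any failure, undo completed steps in reverse."""
    allowed = ROLE_SCOPES.get(role, set())
    completed: List[Callable[[], None]] = []
    try:
        for scope, do, undo in steps:
            if scope not in allowed:
                raise PermissionError(f"{role} lacks scope {scope!r}")
            do()
            completed.append(undo)
    except Exception:
        for undo in reversed(completed):
            undo()
        return False
    return True


state: List[str] = []

def failing_tool() -> None:
    raise RuntimeError("tool failed")

write_a: Step = ("write", lambda: state.append("a"), lambda: state.remove("a"))
broken: Step = ("write", failing_tool, lambda: None)

rolled_back = run_with_rollback("agent-operator", [write_a, broken])
state_after_rollback = list(state)
denied = run_with_rollback("agent-readonly", [write_a])
succeeded = run_with_rollback("agent-operator", [write_a])
```

The first run undoes the completed write when the second step fails, the second run is rejected before anything executes, and only the third leaves a change in `state`.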

Developer Experience and Learning Curve

Adopting Gemini AI Agent changes how teams think about software development. New workflows emphasize designing goals, constraints, and observation strategies, rather than handcrafting every step. The learning curve hinges on understanding prompt design, the agent lifecycle, and tool integration. In practice, teams benefit from scaffolds such as templates, policy libraries, and standardized adapters that accelerate onboarding. Training programs should cover not only the technical aspects but also governance concepts, debugging strategies, and incident response plans. By combining hands-on experimentation with strong documentation and governance, organizations can shorten the time to value while maintaining quality control.

Performance, Scalability, and Cost Considerations

Performance in agentic workflows comes down to latency, throughput, and the reliability of tool integrations. Agents can achieve high throughput by parallelizing tasks and coordinating asynchronous calls, but this comes with increased complexity in error handling and visibility. In practice, architects should profile end-to-end latency, monitor queue depths, and implement backpressure strategies to avoid cascading failures. Cost models for agentic workflows typically include AI service usage, cloud resources for agents, and ongoing maintenance costs for adapters and policies. While these costs can be offset by faster delivery and reduced boilerplate, teams should maintain a clear boundary between what the agent handles automatically and what remains under explicit programming control.
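Bounded concurrency is one simple form of backpressure: tool calls run in parallel, but never more than a fixed number at once, so a slow downstream service is not flooded. The sketch below uses `asyncio.Semaphore` from the standard library. The `run_bounded` helper and the simulated `fake_tool_call` are illustrative stand-ins, not real tool integrations.

```python
import asyncio
from typing import Awaitable, Callable, List, Tuple


async def run_bounded(tasks: List[Callable[[], Awaitable[int]]],
                      limit: int) -> Tuple[List[int], int]:
    """Run tasks concurrently, allowing at most `limit` in flight at once.

    Returns the results in input order plus the peak concurrency observed.
    """
    sem = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def worker(fn: Callable[[], Awaitable[int]]) -> int:
        nonlocal in_flight, peak
        async with sem:                      # waits here when the limit is reached
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await fn()
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(worker(t) for t in tasks))
    return list(results), peak


async def fake_tool_call(i: int) -> int:
    await asyncio.sleep(0.01)                # stands in for network latency
    return i * 2


tasks = [lambda i=i: fake_tool_call(i) for i in range(6)]
results, peak = asyncio.run(run_bounded(tasks, limit=2))
```

Recording the peak alongside the results gives a cheap signal to monitor. If the limit is always saturated, queue depth is probably growing upstream.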

Practical Decision Framework and Hybrid Patterns

Choosing between Gemini AI Agent and traditional code is not a binary decision. Most teams will adopt a hybrid pattern that uses agents to orchestrate high-level workflows while keeping core logic in hand-coded modules. A practical decision framework has four steps: define business goals, enumerate tasks that benefit from autonomy, assess governance requirements, and pilot in a low-risk domain. Start with a minimal agent that handles a well-scoped orchestration task, then gradually broaden scope as controls prove effective. The decision becomes a spectrum: use more agent-based orchestration where speed and flexibility matter, and rely on hand-coded pipelines for deterministic, auditable steps. The emphasis should be on an architecture that enables safe scaling and clear ownership.
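The hybrid pattern can be sketched as an allowlist: the agent proposes the order of steps, but every step is a hand-coded, testable function, and anything outside the allowlist is rejected. In the example below the plan is a plain list of names standing in for agent output. The step names and the `execute_plan` helper are hypothetical.

```python
from typing import Callable, Dict, List

StepFn = Callable[[dict], dict]
STEPS: Dict[str, StepFn] = {}       # allowlist of hand-coded steps


def step(name: str) -> Callable[[StepFn], StepFn]:
    """Register a hand-coded function as a step the agent may request."""
    def decorator(fn: StepFn) -> StepFn:
        STEPS[name] = fn
        return fn
    return decorator


@step("validate")
def validate(ctx: dict) -> dict:
    if not ctx.get("items"):
        raise ValueError("no items to process")
    return ctx


@step("compute_total")
def compute_total(ctx: dict) -> dict:
    return {**ctx, "total": sum(ctx["items"])}


def execute_plan(plan: List[str], ctx: dict) -> dict:
    """Run an agent-proposed plan, refusing any step outside the allowlist."""
    for name in plan:
        if name not in STEPS:
            raise ValueError(f"step {name!r} is not an approved module")
        ctx = STEPS[name](ctx)
    return ctx


# Stand-in for a plan the agent would produce.
proposed_plan = ["validate", "compute_total"]
result = execute_plan(proposed_plan, {"items": [1, 2, 3]})
```

Widening the agent's scope then means registering more steps, each of which goes through normal code review and testing first.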

Comparison

| Feature | Gemini AI Agent | Traditional Code via VS Code |
|---|---|---|
| Autonomy & Orchestration | High autonomy; cross-tool orchestration | Low autonomy; explicit instructions |
| Development Speed | Faster workflow setup and iteration | Slower; requires step-by-step implementation |
| Determinism & Reproducibility | Moderate to high with proper guardrails | High determinism when fully coded |
| Tool Integration Complexity | Moderate to high; needs adapters and prompts | Lower once integrations are built |
| Observability & Debugging | Agent-level logs; policy traces | Function-level debugging; traditional traces |
| Governance & Risk | Requires policy controls; escalation | Established governance via code reviews |
| Performance at Scale | Strong with parallelism; complexity grows | Predictable but limited by code structure |
| Cost Model | Usage-based AI services; maintenance of prompts | Infrastructure and maintenance costs for codebase |

Positives

- Speeds up automation and task orchestration

- Reduces boilerplate glue logic across tools

- Improves consistency in multi-step workflows

- Encourages experimentation with safe guardrails

Negatives

- Potential for unsafe autonomous actions without strong governance

- Less deterministic behavior without explicit constraints

- Learning curve for effective prompt design and policy management

- Tool integration can introduce fragility if adapters break

Gemini AI Agent is generally favored for orchestration-heavy tasks, while traditional coding remains the baseline for precise, deterministic outcomes.

If your priority is rapid automation and cross-tool coordination with governance, Gemini AI Agent has clear advantages. For mission-critical logic requiring tight determinism and full auditability, hand-coded solutions in VS Code remain indispensable.

Questions & Answers

What is Gemini AI Agent and how does it work?

Gemini AI Agent is an agentic AI framework that autonomously executes tasks, reasons over context, and coordinates multiple tools. It operates through goals, constraints, and safety policies, letting humans intervene when needed. It is designed to orchestrate workflows rather than replace all coding, making it suitable for rapid automation and scalable orchestration.

Gemini AI Agent coordinates tools to complete tasks automatically, but it still relies on human oversight for safety in critical cases.

Can Gemini AI Agent completely replace hand-coded solutions?

In most scenarios, Gemini AI Agent complements rather than completely replaces traditional code. It excels at orchestration and automation across tools, while core logic and safety-critical steps often remain in explicit, hand-coded components to ensure determinism and auditability.

It’s usually best to hybridize—use agents for orchestration and code for critical logic.

How does integration with VS Code work?

VS Code serves as the development environment where you author both agent prompts and the glue logic that the agent orchestrates. Integrations are built as adapters or services callable by the agent, with clear interfaces and test coverage to support reliable orchestration.

You write adapters in VS Code that the agent can call as part of a workflow.

What governance and safety considerations matter?

Governance requires policy controls, approval gates, data access restrictions, and robust testing. Safety considerations include input validation, sandboxed tool calls, and explicit escalation to human reviewers for high-impact actions.

Set up guardrails and review processes so automation remains safe and accountable.

What is a good starting pattern for adopting Gemini AI Agent?

Start with a small, scoped orchestration task, define clear goals and guardrails, and gradually extend scope as you establish governance and observability. Use a hybrid approach—agent-led orchestration with hand-coded components for critical steps.

Begin with a simple task, then expand as you gain confidence.

Key Takeaways

- Use agentic automation for complex orchestration tasks

- Preserve explicit code paths for critical decision points

- Establish strong governance and safety guardrails

- Prototype with a hybrid pattern to balance speed and control

- Ensure observability to root-cause agent behavior