Agentic AI vs AI Agent: An Evidence-Based Comparison

Analyze agentic ai vs ai agent across autonomy, governance, and deployment. Learn which approach fits your goals, with a framework for implementation and risk management.

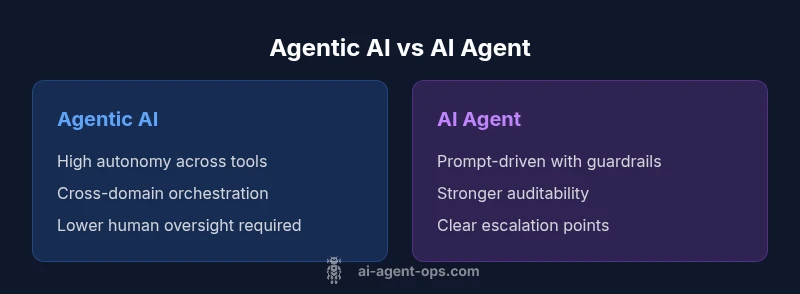

When comparing agentic ai vs ai agent, the balance between autonomy and control matters most: agentic ai emphasizes self-directed action and orchestration, while an ai agent emphasizes safety and prompt-driven guidance. For teams prioritizing scalable automation with guardrails, agentic ai is compelling; for risk-sensitive environments, an ai agent provides safer, more manageable results. Choose based on governance needs, data quality, and desired speed of automation.

Definitional foundations: agentic ai vs ai agent

Agentic AI and AI agents sit at different points on the automation spectrum. Agentic ai refers to systems designed to operate with a significant degree of autonomy, capable of goal-directed planning, tool selection, and action execution with minimal human prompting. An AI agent, by contrast, often functions as a guided collaborator: it executes tasks within a constrained scope, follows prompts, and relies on explicit human direction for orchestration. The distinction matters for scaling automation, managing risk, and designing governance frameworks.

According to Ai Agent Ops, the "agentic ai vs ai agent" debate centers on where an organization places control, accountability, and speed. Agentic AI can coordinate multiple tools, manage memory, and adapt strategies over time, enabling end-to-end workflows that cross teams. AI agents typically emphasize reliability and safety, with clear prompts, auditability, and human-in-the-loop checks to prevent unintended actions. The spectrum is not binary; many teams adopt hybrid patterns that combine autonomous modules with supervisory prompts and guardrails.

In practice, mapping business objectives to capability profiles is essential. If your aim is to reduce manual handoffs, unlock long-horizon decisions, and instrument cross-functional automation, the agentic ai vs ai agent considerations lean toward the former. If risk, compliance, and traceability dominate priorities, the latter approach may offer a safer, more controllable path. Lastly, consider the organization's data maturity and operational readiness when choosing a path.

Core differences in capabilities and scope

The core differences between agentic ai and ai agent revolve around two levers: autonomy and scope. Agentic ai is designed to operate with broader autonomy across multiple domains, assembling actions from a toolbox of adapters, APIs, and memory to pursue high-level goals. It tends to excel in long-running, cross-functional workflows where decisions must be made with limited human intervention. An ai agent, however, typically acts within narrower boundaries defined by prompts, policies, and human-in-the-loop checkpoints. It excels in guided tasks, rapid prototyping, and situations where predictable, auditable behavior is paramount. The key phrase agentic ai vs ai agent captures the spectrum: more autonomy and cross-domain orchestration on one side, more governance and prompt-driven control on the other. In practice, teams often blend the two, allowing autonomous modules to handle routine orchestration while preserving guardrails for safety.

From a data perspective, agentic ai relies on richer state management and memory to sustain plans over time, while ai agent focuses on stateless or lightly stateful interactions with clear reset points. This difference has practical implications for how you design training, evaluation, and rollback mechanisms. If your business objective requires end-to-end automation—think supply-chain orchestration, cross-department decision-making, or dynamic tool selection—the agentic ai vs ai agent distinction tips toward the former. If your priority is traceability, reproducibility, and iterative experimentation, the ai agent path offers a more conservative, safer route.

Architecture and data flows: from prompts to orchestration

Both approaches hinge on a core loop: perceive, decide, act, and learn. Agentic ai builds this loop into a fluid architecture that includes a central orchestrator, a memory subsystem, tool adapters, and safety gates. The orchestrator determines which tools to call to advance a goal, while memory stores context for long-horizon planning. Tool adapters translate high-level intents into concrete API calls, and safety gates monitor for policy violations or unsafe actions. In contrast, an ai agent often centers on a robust prompt layer, a lightweight controller, and sometimes a human-in-the-loop reviewer. Data flows are more deterministic: prompts generate actions, results are logged, and governance checks are applied before any significant step.
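The components described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the class names (`Orchestrator`, `Memory`, `SafetyGate`) and the two tools are hypothetical, and the plan is a fixed list where a real agentic system would have a model choose the next step.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    """Stores context across steps so later decisions can use it."""
    events: list[dict] = field(default_factory=list)

    def remember(self, event: dict) -> None:
        self.events.append(event)


@dataclass
class SafetyGate:
    """Permits only tool calls on an explicit allowlist (an assumed policy)."""
    allowed_tools: set[str]

    def permits(self, tool_name: str) -> bool:
        return tool_name in self.allowed_tools


@dataclass
class Orchestrator:
    tools: dict[str, Callable[[str], str]]  # tool adapters: intent -> result
    gate: SafetyGate
    memory: Memory = field(default_factory=Memory)

    def run(self, plan: list[tuple[str, str]]) -> list[str]:
        """Execute (tool_name, intent) steps, skipping any the gate blocks."""
        results = []
        for tool_name, intent in plan:
            if not self.gate.permits(tool_name):
                self.memory.remember({"tool": tool_name, "status": "blocked"})
                continue
            output = self.tools[tool_name](intent)
            self.memory.remember({"tool": tool_name, "status": "ok", "output": output})
            results.append(output)
        return results


# Hypothetical tools; only the read-only lookup is allowlisted.
orchestrator = Orchestrator(
    tools={
        "lookup_order": lambda intent: f"order status for {intent}",
        "issue_refund": lambda intent: f"refund issued for {intent}",
    },
    gate=SafetyGate(allowed_tools={"lookup_order"}),
)
results = orchestrator.run([("lookup_order", "A-100"), ("issue_refund", "A-100")])
```

Here the refund step is blocked and recorded in memory rather than executed, which is the behavior the safety gate is meant to guarantee.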

Key design questions for the agentic ai vs ai agent choice include: How much state must be retained across interactions? What is the acceptable latency for decision-making? How will the system recover from partial failures? The agentic path favors richer internal representation and learning signals, enabling adaptive behavior. The ai agent route prioritizes clarity of intent, auditable decisions, and explicit boundaries to reduce risk.

Autonomy vs control: decision-making dynamics

Autonomy refers to how much initiative the system takes without human input. In agentic ai, decision-making is distributed: the model can set subgoals, replan strategies, and reallocate resources as needed. This yields faster execution of complex workflows but requires strong guardrails to prevent drift or unintended actions. Control, meanwhile, is preserved via prompts, constraints, and human review points in AI agents. The design tension is balancing speed with safety, especially in sensitive domains. To operationalize this balance, teams implement layered controls: policy constraints, activity logs, sandboxed testing, and staged rollouts. In many organizations, the agentic ai vs ai agent decision is a spectrum rather than a single choice, with teams adopting a hybrid approach where autonomous modules operate under governance umbrellas and explicit checks.
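One of these layered controls, a human review point combined with an activity log, might look like the sketch below. The `ProposedAction` type, the "low"/"high" risk labels, and the reviewer callback are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    name: str
    risk: str  # "low" or "high"; these labels are an illustrative assumption


def execute_with_review(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    log: list[str],
) -> bool:
    """Run low-risk actions directly; send high-risk ones to a reviewer first."""
    if action.risk == "high" and not approve(action):
        log.append(f"rejected:{action.name}")
        return False
    log.append(f"executed:{action.name}")
    return True


activity_log: list[str] = []


def deny_all(action: ProposedAction) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


execute_with_review(ProposedAction("send_status_email", "low"), deny_all, activity_log)
execute_with_review(ProposedAction("delete_customer_record", "high"), deny_all, activity_log)
```

Moving an action from "high" to "low" is then an explicit, reviewable change, which is one way to raise autonomy in stages.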

From a practical standpoint, measurement of autonomy should include both outcome metrics (time-to-decision, throughput) and governance metrics (auditability, revertibility). A well-tuned system will push decision-making into the automated layer when it is safe to do so, while preserving manual overrides and human oversight for exceptions. The AI agent path provides more deterministic control, which can simplify testing and compliance but may slow iteration.

Governance, safety, and compliance considerations

Governance is a critical dimension in the agentic ai vs ai agent debate. Agentic ai, with its high degree of autonomy, demands robust risk management, policy enforcement, and transparent auditing. Organizations must implement multi-layered controls, including access management, action isolation, verifiable logs, and break-glass procedures for emergency intervention. In contrast, ai agent deployments can lean on tighter prompt contracts, human-in-the-loop gating, and stronger version control. This model reduces certain risks but does not eliminate them; the emphasis shifts toward process maturity and traceability. The key is to align governance with business risk tolerance, data sensitivity, and regulatory obligations. Ai Agent Ops emphasizes that governance isn't a checkbox but an ongoing discipline: continuous monitoring, policy updates, and post-incident reviews are essential to maintaining safety in either approach. Both paths benefit from explicit decision logs, rollback capabilities, and clear ownership for each automated action.
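As a rough sketch of decision logs with rollback and ownership, the example below records each action alongside its owner and an undo function. The `DecisionLog` class and the inventory scenario are hypothetical; a production system would also persist the log and handle undo failures.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoggedAction:
    owner: str                  # team or person accountable for the action
    description: str
    undo: Callable[[], None]    # how to reverse the action


@dataclass
class DecisionLog:
    entries: list[LoggedAction] = field(default_factory=list)

    def record(self, owner: str, description: str,
               do: Callable[[], None], undo: Callable[[], None]) -> None:
        """Perform the action, then log it with its owner and undo step."""
        do()
        self.entries.append(LoggedAction(owner, description, undo))

    def rollback(self, steps: int = 1) -> None:
        """Undo the most recent actions, newest first."""
        for _ in range(min(steps, len(self.entries))):
            self.entries.pop().undo()


def adjust(stock: dict[str, int], item: str, delta: int) -> None:
    stock[item] += delta


inventory = {"widgets": 10}
decision_log = DecisionLog()
decision_log.record("fulfillment-team", "reserve 3 widgets",
                    do=lambda: adjust(inventory, "widgets", -3),
                    undo=lambda: adjust(inventory, "widgets", 3))
decision_log.record("fulfillment-team", "reserve 2 widgets",
                    do=lambda: adjust(inventory, "widgets", -2),
                    undo=lambda: adjust(inventory, "widgets", 2))
decision_log.rollback(steps=1)  # reverses only the second reservation
```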

A practical framework is to start with a risk assessment, define guardrails for core workflows, and gradually raise autonomy only after validating controls, tests, and dashboards. The agentic ai vs ai agent distinction becomes a governance question, not just a technical one; it shapes how you design audits, incident response, and data lineage.

Integration patterns and orchestration across tools

Integration strategy is one of the most consequential differences between agentic ai and ai agent. Agentic ai thrives on tool orchestration: a single orchestrator can coordinate dozens of adapters, API calls, and microservices to achieve a broad objective. This requires robust tooling for discovery, versioning, and error handling, as well as robust observability to track how decisions propagate through the system. AI agents, by comparison, often rely on modular prompts, wrappers, and smaller, well-scoped integrations. They excel when you want to connect a few services quickly, iterate on prompts, and keep integration complexity low. The agentic ai vs ai agent choice thus has concrete implications for developer velocity, platform maturity, and operational overhead.

Practical guidance: start with a minimal viable architecture that emphasizes a single domain with clear success criteria. If you anticipate cross-domain coordination or dynamic tool use, plan for a scalable orchestration layer, data store, and policy engine. If your priority is speed to value and maintainability, begin with defined prompts and a lean set of connectors, then gradually layer in more automation as governance stabilizes.

In both cases, ensure that data flows, ownership, and privacy controls are defined, and that all actions are auditable and traceable. The agentic ai vs ai agent framework benefits from standard patterns like event-driven design, modular adapters, and consistent logging across components.

Use-case mapping: where each shines

Different business scenarios map differently to agentic ai vs ai agent. Agentic AI is particularly strong in cross-functional, complex workflows requiring adaptive decision-making, tool chaining, and autonomous problem-solving. For example, supply-chain orchestration, end-to-end order processing, and proactive incident remediation can benefit from agentic AI's ability to reason about multiple domains and long horizons. AI agents, meanwhile, shine in environments where safety, regulatory compliance, and reproducibility are paramount, such as customer support automation with strict escalation policies, or data processing pipelines that require careful audit trails. The agentic ai vs ai agent distinction is not just about capability; it's about risk posture, team maturity, and the speed at which you want to learn and iterate. Many teams adopt a phased approach, starting with AI agents to validate concepts, then evolving to agentic AI with guardrails as confidence grows.

Evaluation metrics and success signals

Evaluating agentic ai vs ai agent requires a balanced scorecard that includes performance, safety, and governance measures. For autonomy, track outcomes like task completion rate, time-to-goal, and cross-domain coherence. For safety, monitor policy violations, incident frequency, and the time to detect and remediate. For governance, emphasize auditability, explainability, and the ability to revert actions. Additionally, consider deployment velocity, maintenance effort, and the quality of tool integrations. A practical approach is to define success criteria before deployment, then conduct iterative experiments to test autonomy under controlled conditions. The agentic ai vs ai agent comparison should reveal trade-offs between speed and control, with the most successful programs harmonizing both approaches where appropriate.
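A balanced scorecard of this kind can be computed from per-run records. The sketch below is illustrative only: the `RunRecord` fields and the four metrics are assumptions chosen to match the measures named above, and the sample data is made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    completed: bool          # did the run reach its goal?
    seconds_to_goal: float   # only meaningful when completed is True
    policy_violations: int
    reverted_cleanly: bool   # could the run's actions be rolled back?


def scorecard(runs: list[RunRecord]) -> dict[str, float]:
    """Summarize performance, safety, and governance signals across runs."""
    if not runs:
        raise ValueError("need at least one run")
    total = len(runs)
    completed = [r for r in runs if r.completed]
    mean_time = (
        sum(r.seconds_to_goal for r in completed) / len(completed)
        if completed else float("nan")
    )
    return {
        "task_completion_rate": len(completed) / total,
        "mean_time_to_goal_s": mean_time,
        "violations_per_run": sum(r.policy_violations for r in runs) / total,
        "revertible_rate": sum(r.reverted_cleanly for r in runs) / total,
    }


# Made-up sample data for illustration.
runs = [
    RunRecord(True, 40.0, 0, True),
    RunRecord(True, 60.0, 1, True),
    RunRecord(False, 0.0, 0, False),
    RunRecord(True, 50.0, 0, True),
]
card = scorecard(runs)
```

Comparing the same scorecard before and after raising autonomy makes the trade-off between speed and control visible.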

Implementation roadmap for teams

A pragmatic implementation plan starts with clear objectives and risk boundaries. First, map the desired business outcomes to the capabilities of either approach, then select an initial domain with measurable goals. Next, design the governance framework, including logging, access controls, and escalation protocols. Build a minimal viable architecture, test in a sandbox, and validate against real-world scenarios. As confidence grows, gradually expand autonomy, improve tool adapters, and tighten guardrails. Finally, establish ongoing monitoring, incident response rehearsals, and periodic governance reviews. The agentic ai vs ai agent framing helps teams track progress, adjust risk tolerance, and optimize for business impact.

Practical decision framework and next steps

Bringing the agentic ai vs ai agent decision to life requires a pragmatic framework. Start with a risk-based categorization of workflows, distinguishing high-autonomy processes from low-autonomy ones. Define governance criteria—auditable logs, safe-override mechanisms, and policy enforcement—for each category. Pilot one domain end-to-end using the agentic path if it aligns with your risk posture, or begin with AI agents to prove value quickly and iteratively increase autonomy. Ensure cross-functional collaboration between product, security, and data teams, and establish clear ownership for automated actions. Finally, maintain a living playbook that documents changes in capability, governance, and performance metrics. This approach mirrors the agentic ai vs ai agent decision in practice, blending speed with safety as your automation strategy matures.
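The risk-based categorization step could be expressed as a small rule like the one below. The 1-to-5 scoring scale and the thresholds are assumptions for illustration, not values from this comparison; teams would calibrate them to their own risk tolerance.

```python
from enum import Enum


class Path(Enum):
    AGENTIC = "agentic ai"
    HYBRID = "hybrid"
    AI_AGENT = "ai agent"


def recommend_path(risk: int, governance_maturity: int) -> Path:
    """Suggest a starting path from two 1-5 scores (thresholds are assumed)."""
    for name, value in (("risk", risk), ("governance_maturity", governance_maturity)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    if risk >= 4:
        # High-risk workflows start with prompt-driven, human-reviewed agents.
        return Path.AI_AGENT
    if risk <= 2 and governance_maturity >= 4:
        # Low risk plus mature governance can support more autonomy.
        return Path.AGENTIC
    return Path.HYBRID


workflows = {
    "payment_disputes": recommend_path(risk=5, governance_maturity=2),
    "internal_ticket_routing": recommend_path(risk=1, governance_maturity=5),
    "order_processing": recommend_path(risk=3, governance_maturity=3),
}
```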

Comparison

| Feature | agentic ai | ai agent |
|---|---|---|
| Autonomy level | High autonomy with self-directed action across tools and domains | Moderate autonomy with guided actions constrained by prompts and policies |
| Decision-making scope | Broad, goal-directed decisions spanning workflows | Narrow, task-specific decisions within defined prompts |
| Governance requirements | Strong governance including audit logs and policy enforcement | Governance focused on prompt-based controls and human-in-the-loop |
| Integration complexity | Supports end-to-end orchestration with tool adapters | Easier to integrate with existing prompts and wrappers |
| Learning updates | Online/continuous learning and adaptation | Periodic updates with strict versioning |
| Best use case | Organizations seeking scalable, autonomous orchestration | Teams needing safe, incremental automation |
| Risk profile | Higher potential risk without robust controls | Predictable risk with established guardrails |

Positives

- Enable end-to-end automation and orchestration at scale

- Can reduce manual intervention and cycle times

- Supports cross-domain decision-making and adaptive workflows

- Facilitates experimentation and rapid prototyping in AI agents

What's Bad

- Increased complexity and deployment effort

- Higher governance, safety, and data-management requirements

- Potential for unintended actions without strong guardrails

- Longer time-to-value due to governance and integration

Agentic AI is generally stronger for scalable automation and cross-domain orchestration, while an AI agent provides safer, more controllable automation.

Choose agentic AI when you need end-to-end automation and rapid adaptation with strong governance. Choose an AI agent for safer, auditable, and more incremental automation; evolve toward greater autonomy as governance matures.

Questions & Answers

What is the key practical difference between agentic ai and ai agent?

The practical difference lies in autonomy and scope. Agentic ai is designed for broad, self-directed orchestration across tools, while an ai agent operates within tighter prompts and governance. This distinction shapes risk, speed, and governance needs.

Agentic AI offers broad autonomy, while AI agents emphasize guided actions with safeguards. The choice affects risk and governance.

In which scenarios should I prefer agentic ai over ai agent?

Opt for agentic ai when you need end-to-end automation across multiple domains and fast iteration. Choose ai agent for risk-sensitive environments where governance and auditability are paramount.

Use agentic AI for broad automation; AI agents for safer, controlled tasks.

What governance considerations are essential for agentic ai deployments?

Key considerations include robust logging, policy enforcement, access controls, and a clear escalation path. Regular audits and incident reviews help maintain safety in autonomous systems.

Strong logs, clear policies, and rapid rollback are essential.

Can I mix agentic ai with ai agent in the same system?

Yes. A hybrid approach often starts with AI agents to validate value, then gradually introduces autonomous modules with guardrails. This lets teams balance speed and safety while learning from real-world use.

A hybrid setup often proves easiest to start with and safer to scale.

How do I measure success for these approaches?

Measure both outcome metrics (throughput, time-to-decision) and governance metrics (auditability, incident response). Define success criteria before deployment and adjust as governance matures.

Track performance and safety indicators, and adjust governance over time.

What are common pitfalls to avoid?

Avoid over-automation without guardrails, unclear ownership, and inconsistent logging. Ensure data quality and a clear escalation path to prevent drift or unsafe actions.

Guardrails and clear ownership prevent drift and risk.

Key Takeaways

- Map goals to capability profiles before choosing a path

- Prioritize governance and auditability from day one

- Begin with a scoped domain and expand cautiously

- Balance autonomy with human-in-the-loop controls

- Use a hybrid approach when appropriate for risk management