Generative AI vs AI Agent vs Agentic AI: In-Depth Comparison

A rigorous, analytical comparison of generative AI vs AI agents vs agentic AI, detailing definitions, workflows, use cases, risks, and practical guidance for developers and leaders.

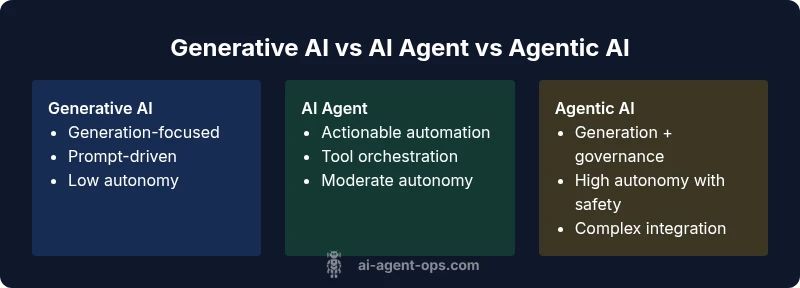

Generative AI, AI agents, and agentic AI describe three points on an automation spectrum: content generation, autonomous action, and generation combined with governed autonomy. In practice, generative AI excels at producing content from prompts, AI agents orchestrate tasks across tools, and agentic AI combines both with governance layers that keep decision-making within safe bounds. Understanding these distinctions helps teams choose the right tool for each use case.

Context and Definitions: what generative AI, AI agents, and agentic AI mean

Generative AI refers to models that produce new content (text, images, code, or other media) by learning patterns from large datasets. AI agents are more than models; they are system components that observe inputs such as prompts and tool results, decide on a course of action, and trigger tool calls to accomplish goals. Agentic AI sits at the intersection: it binds generation and action with governance, safety controls, and monitoring to steer outcomes while preserving autonomy. In practical terms, the three form a spectrum: from generation-only systems, to autonomous agents, to hybrid systems that enforce policies as they operate. According to Ai Agent Ops, this framing matters because it helps teams map capabilities to business outcomes, risk profiles, and governance requirements. For developers, product leaders, and executives, recognizing where a project lands on this spectrum informs scoping, budgeting, and risk management. The rest of this article unpacks the differences, common workflows, and decision criteria so you can select the right approach for your use case.

The generative AI vs AI agent vs agentic AI continuum

At a high level, generative AI models excel at producing novel outputs from prompts but do not inherently plan actions beyond the immediate task. AI agents extend those capabilities by applying decision logic to select actions, call tools, and iterate toward a goal. Agentic AI adds governance—safety rails, auditing, and oversight—so that autonomy is exercised within defined bounds. This continuum matters when you size projects, estimate risk, and design monitoring. As you evaluate requirements, consider whether you need raw creative output, automated orchestration, or a governance-enabled blend of generation and action. By framing the choice as a continuum, teams can trade off speed, control, and resilience with greater clarity.

Core capabilities and workflows

Generative AI centers on content generation, formatting, or transformation. It draws on large training datasets, and sampling settings such as temperature control how much its outputs vary. AI agents introduce an execution loop: perception (reading inputs and tool results), planning (choosing the next step), and action (calling a tool). They can operate across APIs, databases, and software, delivering end-to-end automation. Agentic AI combines generation and action under policy-driven control, enabling dynamic planning with constraint checks, safety guards, and ongoing evaluation. Workflows in this space often start with a generation task (a draft, outline, or simulated run), followed by agent-driven execution (fetching data, invoking tools, updating records), and finally governance steps (validation, fallback strategies, logging). Implementers should map each workflow to the required level of autonomy, data access, and monitoring to ensure reliability and compliance.
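The perception, planning, and action loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the tools (`fetch_record`, `update_record`), the rule-based `plan` function (standing in for a generative model that would choose the next step), and the `allowed` governance check are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools standing in for real API calls.
def fetch_record(record_id: str) -> dict:
    return {"id": record_id, "status": "open"}

def update_record(record_id: str, status: str) -> dict:
    return {"id": record_id, "status": status}

TOOLS: Dict[str, Callable[..., dict]] = {
    "fetch_record": fetch_record,
    "update_record": update_record,
}

@dataclass
class AgentState:
    goal: str
    observations: List[dict] = field(default_factory=list)
    log: List[str] = field(default_factory=list)

def plan(state: AgentState) -> Optional[Tuple[str, dict]]:
    """Planning: a rule-based stub; a real agent would ask a model for the next step."""
    if not state.observations:
        return ("fetch_record", {"record_id": "R-1"})
    last = state.observations[-1]
    if last["status"] == "open":
        return ("update_record", {"record_id": last["id"], "status": "closed"})
    return None  # goal reached, nothing left to do

def allowed(tool: str) -> bool:
    """Governance: only tools registered in the catalog may run."""
    return tool in TOOLS

def run_agent(state: AgentState, max_steps: int = 5) -> AgentState:
    # max_steps is itself a guardrail against runaway loops.
    for step in range(max_steps):
        action = plan(state)
        if action is None:
            state.log.append("done")
            break
        tool, args = action
        if not allowed(tool):
            state.log.append(f"blocked:{tool}")
            break
        result = TOOLS[tool](**args)        # action: call the tool
        state.observations.append(result)  # perception: record the result
        state.log.append(f"{step}:{tool}")
    return state

final = run_agent(AgentState(goal="close record R-1"))
print(final.log)  # ['0:fetch_record', '1:update_record', 'done']
```

Separating `plan`, `allowed`, and the tool call keeps each concern swappable: the stub planner can be replaced by a model call without touching the governance check.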

Architecture, data, and governance

Generative AI relies on large, diverse corpora and largely self-supervised objectives (for language models, typically next-token prediction) to learn patterns. AI agents require an environment model: prompts, tool definitions, and state representations that allow action selection. Agentic AI demands controls: policy rules, safety checks, auditing, and rollback mechanisms. Governance may include access controls, versioning of policies, and explainability dashboards. Data stewardship is critical: ensure data provenance, privacy, and bias mitigation, especially when agents interact with real-world systems. Adding governance as autonomy grows reduces risk while still enabling scalable automation. Ai Agent Ops emphasizes designing for auditability and traceability so that decisions are reproducible and controllable across generated outputs, actions, and governance layers.
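One way to make decisions traceable is an append-only audit log in which every entry records the layer that produced it (generation, action, or governance) and the policy version in force. The sketch below is an illustration under assumptions, not a prescribed design: it chains entries with SHA-256 hashes so that editing any past entry becomes detectable, and the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

@dataclass
class AuditLog:
    """Append-only log; each entry stores the hash of the previous one."""
    entries: List[dict] = field(default_factory=list)

    def append(self, layer: str, event: str, detail: dict, policy_version: str) -> dict:
        body = {
            "seq": len(self.entries),
            "layer": layer,  # "generation", "action", or "governance"
            "event": event,
            "detail": detail,
            "policy_version": policy_version,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; changing any past entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

audit = AuditLog()
audit.append("generation", "draft_created", {"chars": 420}, "policy-v1")
audit.append("governance", "policy_check", {"result": "pass"}, "policy-v1")
print(audit.verify())  # True
audit.entries[0]["detail"]["chars"] = 999  # simulate tampering
print(audit.verify())  # False
```

Recording `policy_version` on every entry is what lets a reviewer reproduce why a decision was made under the rules that applied at the time.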

Use cases by domain and organizational roles

Different domains favor different configurations. Content-heavy teams may rely on generative AI for drafts and ideation. Operational teams seeking automation across SaaS tools often adopt AI agents to execute routines. Enterprises pursuing end-to-end automation and regulatory compliance may opt for agentic AI to balance speed with oversight. For product teams, the key decision factors include time-to-value, user impact, and governance requirements. Developers should plan modular components: a generation module, an action module, and governance rails. Leaders must align incentives and risk tolerance, ensuring the chosen approach supports strategic goals while remaining maintainable and auditable.

Risks, ethics, and compliance

All three configurations carry risks—hallucinations in generation, misexecution in automation, and cascading failures without proper governance. Agentic AI helps mitigate risk through constraints, monitoring, and rollback capabilities, but adds complexity and maintenance overhead. Ethical considerations include bias, data privacy, and accountability for automated decisions. Establish a governance framework early: define failure modes, set guardrails, implement logging, and require human-in-the-loop review for high-stakes decisions. Compliance requirements may span industry regulations, data handling standards, and accessibility guidelines. Ai Agent Ops underscores the importance of documenting policies and performance criteria so teams can demonstrate due diligence and continuous improvement.
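A human-in-the-loop gate can be as simple as a check that routes high-stakes actions to a reviewer before execution. In this minimal sketch, the action names, the `REVIEW_THRESHOLD` value, and the `approve` callback (which in practice might be a review queue or approval UI) are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class ProposedAction:
    name: str
    amount: float = 0.0  # monetary impact, if any

# Hypothetical policy values; real ones would come from a risk assessment.
HIGH_STAKES_ACTIONS = {"issue_refund", "delete_account"}
REVIEW_THRESHOLD = 500.0

def needs_human_review(action: ProposedAction) -> bool:
    return action.name in HIGH_STAKES_ACTIONS or action.amount >= REVIEW_THRESHOLD

def execute_with_gate(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    log: List[str],
) -> str:
    """Run low-risk actions automatically; route high-stakes ones to a human."""
    if needs_human_review(action):
        if not approve(action):
            log.append(f"rejected:{action.name}")
            return "rejected"
        log.append(f"approved:{action.name}")
    else:
        log.append(f"auto:{action.name}")
    # The real tool call would go here.
    return "executed"

events: List[str] = []
execute_with_gate(ProposedAction("send_summary_email"), lambda a: False, events)
execute_with_gate(ProposedAction("issue_refund", 80.0), lambda a: False, events)
print(events)  # ['auto:send_summary_email', 'rejected:issue_refund']
```

Logging both approvals and rejections gives the governance layer evidence of review, not just of outcomes.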

Implementation patterns and architecture

A practical pattern starts with a clear problem statement, then selects a generation component, an automation layer, and governance controls. Interfaces should be well-defined: prompt schemas for generation, tool catalogs for automation, and policy engines for governance. Architecture choices include monolithic versus modular designs, with microservices enabling independent scaling of generation, orchestration, and governance modules. Observability is essential: instrument prompts, actions, outcomes, and policy decisions. Security considerations include least-privilege access, secure tool integrations, and robust auditing. Start small with a pilot to validate performance and risk, then iterate toward a governance-enabled solution if autonomy is core to the workflow.
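A tool catalog that enforces least-privilege access can be sketched as a registry that checks an agent's granted scopes on every invocation, a simple form of policy evaluation at a decision point. The `Tool`, `AgentIdentity`, and `ToolCatalog` names and scope strings such as `crm:read` are illustrative assumptions, not any specific framework's API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, List, Tuple

@dataclass(frozen=True)
class Tool:
    name: str
    required_scope: str
    fn: Callable[..., Any]

@dataclass(frozen=True)
class AgentIdentity:
    name: str
    scopes: FrozenSet[str]  # least privilege: grant only what the workflow needs

class ToolCatalog:
    """Registry of tools; every call is checked against the caller's scopes."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}
        self.audit: List[Tuple[str, str, str]] = []

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def invoke(self, agent: AgentIdentity, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            raise KeyError(f"unknown tool: {name}")
        if tool.required_scope not in agent.scopes:
            self.audit.append(("denied", agent.name, name))
            raise PermissionError(f"{agent.name} lacks scope {tool.required_scope}")
        self.audit.append(("allowed", agent.name, name))
        return tool.fn(**kwargs)

# Hypothetical CRM tools for illustration.
catalog = ToolCatalog()
catalog.register(Tool("read_crm", "crm:read", lambda customer_id: {"id": customer_id}))
catalog.register(Tool("write_crm", "crm:write", lambda customer_id, note: True))
support_bot = AgentIdentity("support_bot", frozenset({"crm:read"}))

print(catalog.invoke(support_bot, "read_crm", customer_id="C-42"))  # {'id': 'C-42'}
try:
    catalog.invoke(support_bot, "write_crm", customer_id="C-42", note="refund issued")
except PermissionError as err:
    print(err)  # support_bot lacks scope crm:write
```

Because the check lives in the catalog rather than in each tool, adding a tool cannot silently bypass the access policy.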

Evaluation, metrics, and governance controls

Evaluation should cover output quality, action success rates, latency, and governance effectiveness. Metrics for generation include coherence, diversity, and factual accuracy; for agents, completion rate and error rate; for agentic AI, governance metrics such as policy adherence, explainability, and audit trail completeness. A robust assessment plan combines qualitative reviews with quantitative dashboards. Governance controls should be baked into the architecture: role-based access, policy evaluation at decision points, and fail-safe mechanisms. Ai Agent Ops recommends building in continuous monitoring and periodic red-teaming to uncover edge cases and reveal hidden failure modes.
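Several of the agent and governance metrics listed here reduce to simple ratios over logged runs. The sketch below assumes a hypothetical `RunRecord` format; note that it defines error rate as the share of runs with at least one error, which is only one of several reasonable definitions.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class RunRecord:
    completed: bool         # did the agent reach its goal?
    errors: int             # tool or execution errors during the run
    policy_checks: int      # decision points where a policy was evaluated
    policy_violations: int  # checks that failed or were overridden
    actions: int            # actions executed
    audited_actions: int    # actions with a matching audit entry

def summarize(runs: List[RunRecord]) -> Dict[str, float]:
    if not runs:
        raise ValueError("no runs to evaluate")
    n = len(runs)
    checks = sum(r.policy_checks for r in runs)
    actions = sum(r.actions for r in runs)
    violations = sum(r.policy_violations for r in runs)
    audited = sum(r.audited_actions for r in runs)
    return {
        "completion_rate": sum(r.completed for r in runs) / n,
        "error_rate": sum(1 for r in runs if r.errors > 0) / n,
        "policy_adherence": (1 - violations / checks) if checks else 1.0,
        "audit_completeness": (audited / actions) if actions else 1.0,
    }

runs = [
    RunRecord(completed=True, errors=0, policy_checks=4, policy_violations=0, actions=3, audited_actions=3),
    RunRecord(completed=False, errors=2, policy_checks=4, policy_violations=1, actions=2, audited_actions=1),
]
print(summarize(runs))
# {'completion_rate': 0.5, 'error_rate': 0.5, 'policy_adherence': 0.875, 'audit_completeness': 0.8}
```

Generation-quality metrics such as coherence or factual accuracy usually need human review or a separate evaluator and are not captured by counters like these.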

Decision framework: when to choose which approach

When you need high-quality generated content but little or no autonomous action, start with generative AI and lightweight post-processing. If the goal is automated task execution across multiple tools with predictable outcomes, AI agents are often more appropriate. If your objective combines generation with action under governance constraints to satisfy compliance and safety requirements, agentic AI is usually the best fit. Use a phased approach: pilot the generation or agent concept, measure impact, and incrementally introduce governance to manage risk as autonomy scales. In all cases, align with business outcomes and regulatory requirements, and prepare a roadmap that balances speed, control, and cost.
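These decision rules can be written as a small function, which makes a team's criteria explicit and reviewable. This is a deliberately simplified sketch: the three boolean fields of the hypothetical `Requirements` type stand in for what would, in a real assessment, be richer judgments about risk, compliance, and cost.

```python
from dataclasses import dataclass

@dataclass
class Requirements:
    needs_generation: bool          # drafts, summaries, code, images
    needs_multi_tool_actions: bool  # must act across APIs, databases, or apps
    high_stakes_or_regulated: bool  # compliance, safety, or auditability required

def recommend(req: Requirements) -> str:
    # Actions plus strict governance needs: generation and action under policy.
    if req.needs_multi_tool_actions and req.high_stakes_or_regulated:
        return "agentic AI"
    # Actions without strict governance needs: an agent may suffice.
    if req.needs_multi_tool_actions:
        return "AI agent"
    # Content only: generation with lightweight post-processing.
    if req.needs_generation:
        return "generative AI"
    return "none of the three (conventional software may suffice)"

print(recommend(Requirements(True, True, True)))   # agentic AI
print(recommend(Requirements(True, False, True)))  # generative AI
```

The ordering of the checks encodes the article's advice to choose the simplest option that meets the autonomy and governance needs.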

Common pitfalls and best practices

Avoid assuming that generation quality alone guarantees success in automation. Do not expand autonomy faster than governance; always design for observability and rollback. Start with clear success criteria, and implement incremental, measurable milestones. Prioritize explainability and auditability from day one. Invest in data governance and bias mitigation, especially when training data informs generation or when agents interact with real systems. Finally, foster cross-functional collaboration between product, engineering, and legal teams to maintain alignment with strategic goals and risk tolerance.
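Rollback is easier to reason about when every automated step is paired with a compensating step that undoes it. The sketch below, loosely modeled on the saga pattern, runs steps in order and, if one fails, undoes the completed ones in reverse; the reservation and charge steps are hypothetical examples.

```python
from typing import Callable, List, Tuple

# (name, do, undo): each step is paired with a compensating action.
Step = Tuple[str, Callable[[], None], Callable[[], None]]

def run_with_rollback(steps: List[Step], log: List[str]) -> bool:
    completed: List[Step] = []
    for name, do, undo in steps:
        try:
            do()
        except Exception as exc:
            log.append(f"failed:{name}:{exc}")
            # Undo completed steps in reverse order.
            for done_name, _, done_undo in reversed(completed):
                done_undo()
                log.append(f"undid:{done_name}")
            return False
        completed.append((name, do, undo))
        log.append(f"did:{name}")
    return True

# Hypothetical example: reserve inventory, then charge a card that is declined.
state = {"reserved": False, "charged": False}

def reserve() -> None:
    state["reserved"] = True

def release() -> None:
    state["reserved"] = False

def charge() -> None:
    raise RuntimeError("card declined")

def refund() -> None:
    state["charged"] = False

events: List[str] = []
ok = run_with_rollback([("reserve", reserve, release), ("charge", charge, refund)], events)
print(ok, state)  # False {'reserved': False, 'charged': False}
print(events)     # ['did:reserve', 'failed:charge:card declined', 'undid:reserve']
```

A production version would also need a policy for failures inside undo steps, which this sketch simply lets propagate.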

Feature Comparison

| Feature | Generative AI | AI agent | Agentic AI |
|---|---|---|---|
| Definition | Models that create new content from prompts | Systems that autonomously select actions to achieve goals | Hybrid: generation + autonomous action with governance |
| Core capabilities | Text, image, and code generation; creative tasks | Perception, planning, tool use; task automation | Generation + action + governance with safety checks |
| Autonomy level | Reactive or prompt-driven | Partial autonomy with oversight | High autonomy with governance and monitoring |
| Data needs | Large, diverse training data; prompts | Environment state, tools, and plugins | Generation data + tool state + governance data |
| Typical use cases | Creative content, drafting, coding assistance | Workflow automation, tool orchestration | End-to-end automation with governance for high-stakes tasks |
| Risks & governance | Hallucinations, bias, surface-level outputs | Misexecution, tool misuse, error propagation | Complexity, governance overhead, and safety considerations |
| Best for | Generation-focused tasks | Automation across tools and data sources | Autonomous workflows requiring safety controls |

Positives

- Clarifies responsibilities and integration points across generation, automation, and governance

- Enables scalable automation with improved consistency

- Supports rapid prototyping and iterative improvement

- Can reduce manual workload and accelerate decision cycles

Drawbacks

- Increases system complexity and maintenance needs

- Autonomy introduces new failure modes and governance challenges

- Requires robust data governance and auditing practices

- Potential for over-reliance on automated decision-making

Agentic AI offers the most autonomy under governance, but depending on risk tolerance and speed requirements, it is often wiser to start with generative AI or AI agents.

Agentic AI should be adopted when safety, compliance, and explainability are non-negotiable. For faster value, generative AI or AI agents often suffice, with governance layered in as complexity grows.

Questions & Answers

What is generative AI?

Generative AI produces new content by learning patterns from data. It excels at drafting text, creating images, or generating code but does not inherently perform multi-step automation without additional orchestration.

Generative AI creates new content from data patterns; it drafts, designs, and codes but needs other components for multi-step automation.

How is an AI agent different from traditional software?

An AI agent selects actions based on state and goals, often interacting with tools and data sources. Traditional software follows predefined flows with limited adaptability and no autonomous decision-making.

AI agents choose actions and use tools, unlike traditional software that follows fixed paths.

Can generative AI become an AI agent?

Yes, by integrating generation with perception, planning, and tool use, a system can evolve from a generator to an agent. This requires additional components and governance to manage autonomy.

You can layer planning and tool use on top of generation to form an agent, with governance to keep it safe.

What is agentic AI exactly?

Agentic AI blends generation and autonomous action with governance controls. It aims to provide end-to-end automation while ensuring safety, explainability, and auditability.

Agentic AI combines generation and autonomous action with safety controls.

How should I evaluate these approaches?

Evaluation should combine output quality, task success rates, latency, and governance outcomes. Use both qualitative reviews and quantitative metrics covering generated outputs, actions, and policy adherence.

Assess quality, success rates, speed, and how well governance works in practice.

What governance considerations matter most?

Key considerations include data provenance, bias mitigation, access control, policy versioning, and audit trails. Governance reduces risk when autonomy scales.

Focus on data handling, bias, access, and clear policy logs.

Key Takeaways

- Understand where your project sits on the generative AI vs AI agent vs agentic AI spectrum

- Choose the simplest option that meets your autonomy and governance needs

- Plan for governance, auditing, and explainability from day one

- Pilot, measure, and iterate with cross-functional input

- Maintain data integrity and bias mitigation throughout