Difference Between AI Agent and Generative AI: An Analytical Guide

An analytical comparison of AI agents vs generative AI, detailing core concepts, use cases, metrics, and implementation patterns for smarter automation and creative workflows.

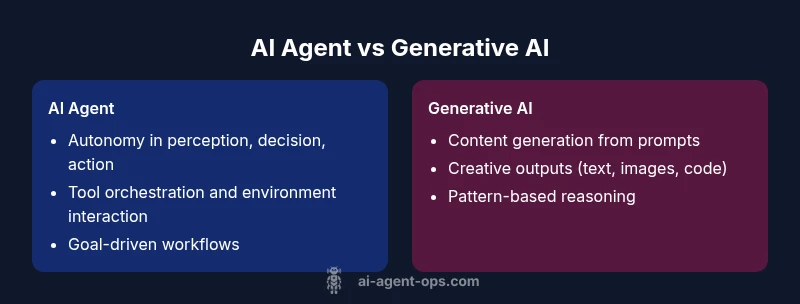

AI agents autonomously perceive, plan, and act across tools to reach goals; generative AI primarily generates new content from prompts. In modern workflows, agents orchestrate tasks and tool use, while generative models supply creative outputs. According to Ai Agent Ops, understanding the difference between AI agents and generative AI helps teams design both autonomous, goal-driven systems and creative, output-focused applications, and combine them for end-to-end capabilities.

Understanding the difference between AI agents and generative AI

Defining what an AI agent does versus what a generative AI model produces helps teams map capabilities to business outcomes. The difference between AI agents and generative AI is more than a vocabulary distinction; it signals whether a system is designed to act autonomously in a dynamic environment or to generate outputs from prompts. According to Ai Agent Ops, the practical distinction centers on intent: agents are goal-driven and tool-using, while generative models are content-centric and pattern-based. In real-world workflows, many teams blend both paradigms, chaining a generative model behind an agent to enrich decisions with creative content or explanations. Keeping this distinction in mind helps you evaluate requirements such as autonomy, feedback loops, and governance, and this article uses the same lens to compare capabilities, risks, and implementation patterns. Across industries, from software development to marketing, organizations increasingly rely on both types to accelerate decision-making and execution.

The landscape today is a spectrum, from fully autonomous agents that navigate complex toolchains to powerful generative models that produce human-like text, images, and code. The line between them is not a hard boundary but a design choice that affects data needs, safety constraints, and operational guardrails. Framing the discussion around intent (execution vs. creation) gives development teams, product managers, and executives clearer decision paths for balancing speed, control, and risk. The takeaway is practical: select the approach that best matches your goal, then consider hybrid patterns that fuse autonomy with creative generation where appropriate.

Comparison

| Feature | AI Agent | Generative AI |
|---|---|---|
| Core capability | Autonomous perception, reasoning, and action across tools to achieve a goal | Content creation and augmentation from prompts or learned patterns |
| Decision scope | Environment-wide, goal-driven planning and execution | Output-focused generation driven by prompts and context |
| Data requirements | Sensor data, state tracking, tool outputs, and logs | Prompts, training data, and prior outputs (pattern-based) |
| Interaction model | Interactive loop with the environment: sense → decide → act | Prompt-based: provide input and receive generated output |
| Automation potential | High for workflows and orchestration across apps and services | Moderate to high for creative tasks, ideation, and content variation |
| Error handling | Designed-in recovery: retries and stateful rollback across steps | Mitigation through prompt design and post-generation checks |
| Typical use cases | Operational automation, decision-making bots, process orchestration | Content generation: text, code, images, and ideas |
| Cost drivers | Tool integrations and orchestration complexity | Number of model calls, prompt and output length, and compute intensity |
| Governance and reliability | Stricter governance, traceability, and operational safety | Output quality, bias mitigation, and content provenance |
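The agent column's error-handling row (retries plus rollback across steps) can be sketched with the compensation, or saga, pattern: each step pairs an action with an undo, and when a step still fails after its retries, the completed steps are undone in reverse order. This is one possible approach under simple assumptions (synchronous steps whose undo always succeeds), not a mechanism the comparison prescribes; the `Step` type and `execute` function are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """Hypothetical workflow step: an action plus the action that reverses it."""
    name: str
    run: Callable[[], None]
    undo: Callable[[], None]


def execute(steps: list[Step], max_retries: int = 2) -> bool:
    """Run steps in order, retrying each one; roll back on final failure."""
    done: list[Step] = []
    for step in steps:
        for attempt in range(max_retries + 1):
            try:
                step.run()
            except Exception:
                if attempt == max_retries:
                    # Out of retries: undo completed steps, newest first.
                    for finished in reversed(done):
                        finished.undo()
                    return False
                # Otherwise fall through and retry the same step.
            else:
                done.append(step)
                break
    return True
```

A generative model used on its own has no multi-step state to roll back, which is why its column relies on prompt design and checking the output instead.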

Positives

- Clear division of labor: agents handle automation; generative AI handles content tasks

- Can scale across tools and domains

- Promotes repeatable workflows and audit trails

- Improved efficiency by integrating autonomous decision-making with creative generation

- Better risk management through structured governance and monitoring

What's Bad

- Complexity of integrating and orchestrating multiple components

- Potential misalignment between agent goals and generated outputs

- Safety, privacy, and compliance challenges in orchestrated flows

- Costs can grow with tool usage and containment requirements

AI agents and generative AI are complementary: use AI agents for automated decision-making and action, and generative AI for content creation and ideation.

In practice, combine the strengths of autonomous task execution with creative generation to unlock end-to-end workflows. Start with clear goals, implement governance, and prototype hybrid patterns before scaling.

Questions & Answers

What is the difference between an AI agent and generative AI?

An AI agent senses its environment, reasons about actions, and executes tasks using tools to achieve goals. Generative AI mainly creates new content from prompts, leveraging learned patterns. The two serve different purposes but can be combined in integrated workflows.

AI agents act and decide across tools; generative AI creates content from prompts. Used together, they power automated, creative, end-to-end solutions.
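To make the contrast concrete, here is a minimal Python sketch under stated assumptions: `generate` is a hypothetical stub standing in for any generative model (one prompt in, one output out), and the ticket-queue `Environment` with its `sense`, `decide`, and `act` functions is an invented toy, not a real framework. The agent keeps looping until its goal (no open tickets) is met or a step budget runs out.

```python
from __future__ import annotations

from dataclasses import dataclass, field


def generate(prompt: str) -> str:
    """Hypothetical generative-model stub: one prompt in, one output out."""
    return f"Draft reply for: {prompt}"


@dataclass
class Environment:
    """Hypothetical toy environment: a queue of tickets to close."""
    open_tickets: list[str] = field(default_factory=lambda: ["T1", "T2", "T3"])
    closed: list[str] = field(default_factory=list)


def sense(env: Environment) -> list[str]:
    # Perceive: read a snapshot of the current state.
    return list(env.open_tickets)


def decide(observation: list[str]) -> str | None:
    # Plan: choose the next ticket, or None once the goal is reached.
    return observation[0] if observation else None


def act(env: Environment, ticket: str) -> None:
    # Act: a "tool call" that changes the environment.
    env.open_tickets.remove(ticket)
    env.closed.append(ticket)


def run_agent(env: Environment, max_steps: int = 10) -> int:
    """Loop sense -> decide -> act until the goal is met or the budget runs out."""
    steps = 0
    while steps < max_steps:
        action = decide(sense(env))
        if action is None:
            break
        act(env, action)
        steps += 1
    return steps


if __name__ == "__main__":
    print(generate("Summarize ticket T1"))
    env = Environment()
    print(run_agent(env), env.closed)
```

Running the script prints one generated draft, then `3 ['T1', 'T2', 'T3']`: the agent took three actions to reach its goal. The `max_steps` budget is a simple guardrail so a faulty decision rule cannot loop forever.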

Can generative AI power an AI agent?

Yes. Generative AI can provide the decision support, content, or explanations that an agent uses while executing actions. When integrated, the agent uses generative outputs as inputs for downstream tasks or as part of its reasoning. This blending enables richer, more adaptable workflows.

Generative AI can supply outputs and context that an agent uses to act more intelligently.
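A minimal sketch of that hybrid pattern, assuming a hypothetical `generate` stub in place of a real model client and an invented `send_reply` tool: the agent applies its own decision rule first, calls the generative model only when a reply is warranted, and then feeds the generated draft into a downstream action.

```python
from __future__ import annotations

from dataclasses import dataclass


def generate(prompt: str) -> str:
    """Hypothetical generative-model stub; a real system would call a model here."""
    return f"Thanks for your message about '{prompt}'. We will follow up shortly."


@dataclass
class Ticket:
    ticket_id: str
    text: str
    reply: str | None = None
    status: str = "open"


def send_reply(ticket: Ticket, reply: str) -> None:
    """Hypothetical tool the agent calls; here it only updates the ticket."""
    ticket.reply = reply
    ticket.status = "answered"


def handle_ticket(ticket: Ticket) -> Ticket:
    """One agent step: decide, generate a draft, then act on it."""
    if not ticket.text.strip():
        # Agent's own rule: nothing to answer, so hand off instead of generating.
        ticket.status = "needs_human"
        return ticket
    draft = generate(ticket.text)  # creative output from the generative model
    send_reply(ticket, draft)  # the draft becomes input to a real action
    return ticket


if __name__ == "__main__":
    print(handle_ticket(Ticket("T1", "refund for order 42")).status)
```

Because the decision rule runs before generation, the agent, not the model, controls when content is produced and what happens to it.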

What are common use cases for AI agents vs generative AI?

AI agents excel in operations, orchestration, and decision-making across systems (e.g., automating workflows, monitoring, and dynamic tool use). Generative AI shines in content creation, code generation, ideation, and data augmentation. In practice, teams pair them to automate processes while generating explanations or content.

Agents automate and orchestrate; generative AI creates content and ideas.

What are typical risks and how can they be mitigated?

Risks include misalignment, hallucinations from generative outputs, data leakage, and governance gaps. Mitigations involve clear guardrails, monitoring, validation checks, and disciplined prompts or policies for when and how to use each technology.

Watch for hallucinations and misalignment, and put guardrails in place.
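One common guardrail is to validate a model's proposed action before the agent executes it. The sketch below assumes the model has been asked to reply in JSON; the action names in `ALLOWED_ACTIONS` and the `MAX_REFUND` limit are hypothetical policy values for illustration.

```python
from __future__ import annotations

import json

ALLOWED_ACTIONS = {"reply", "refund", "escalate"}  # hypothetical policy
MAX_REFUND = 100.0  # hypothetical per-action limit


def validate_action(raw: str) -> tuple[bool, str]:
    """Check a model-proposed action before the agent is allowed to execute it."""
    try:
        proposal = json.loads(raw)
    except json.JSONDecodeError:
        return False, "output is not valid JSON"
    if not isinstance(proposal, dict):
        return False, "output is not a JSON object"
    action = proposal.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return False, f"action {action!r} is not allowed"
    if action == "refund":
        amount = proposal.get("amount")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(amount, bool) or not isinstance(amount, (int, float)):
            return False, "refund amount is missing or not a number"
        if not 0 < amount <= MAX_REFUND:
            return False, "refund amount is out of range"
    return True, "ok"
```

A rejected proposal can be retried with a corrected prompt or escalated to a person; either way, a hallucinated or out-of-policy action never reaches a real tool.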

How do you evaluate performance for each?

Agents are evaluated on reliability, speed, success rate, and governance compliance. Generative AI is assessed by output quality, relevance, diversity, and bias control. Both benefit from continuous monitoring and feedback loops to refine prompts and policies.

Evaluate how reliably agents reach their goals and how good the outputs of generative models are.
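A rough sketch of how some of these metrics might be computed offline, assuming each agent run is logged with a goal flag, a duration, and a count of policy violations (the `AgentRun` record is a hypothetical schema). For generative output it computes distinct-n, a common diversity proxy (unique n-grams divided by total n-grams); quality, relevance, and bias usually need rubric-based or human review and are not covered here.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class AgentRun:
    """Hypothetical log record for one agent run."""
    reached_goal: bool
    seconds: float
    policy_violations: int


def agent_metrics(runs: list[AgentRun]) -> dict[str, float]:
    """Success rate, mean duration, and share of runs with any violation."""
    if not runs:
        raise ValueError("need at least one run")
    return {
        "success_rate": sum(r.reached_goal for r in runs) / len(runs),
        "mean_seconds": mean(r.seconds for r in runs),
        "violation_rate": sum(r.policy_violations > 0 for r in runs) / len(runs),
    }


def distinct_n(outputs: list[str], n: int = 2) -> float:
    """Diversity proxy: unique n-grams / total n-grams across all outputs."""
    ngrams: list[tuple[str, ...]] = []
    for text in outputs:
        tokens = text.lower().split()
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0
```

Tracking these numbers over time, rather than once, is what turns them into the feedback loop described above.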

What governance considerations matter?

Governance should address data privacy, security, model provenance, audit trails, and escalation procedures when things go wrong. Establish ownership, SLAs, and rollback plans for both agents and generative AI components.

Governance ensures safety, accountability, and controlled usage across both technologies.
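The audit-trail and escalation ideas can be sketched as a wrapper around every tool call. Everything here is hypothetical: `AUDIT_LOG` is an in-memory list standing in for an append-only store, and `EscalationRequired` is an invented exception that a caller would route to the owning team.

```python
from __future__ import annotations

import json
import time
from typing import Any, Callable

AUDIT_LOG: list[str] = []  # hypothetical stand-in for an append-only audit store


class EscalationRequired(Exception):
    """Hypothetical signal that a human owner must take over this step."""


def run_tool(actor: str, tool: str, fn: Callable[..., Any], *args: Any,
             requires_approval: bool = False, approved: bool = False) -> Any:
    """Run one tool call, recording who did what and how it ended."""
    entry = {"ts": time.time(), "actor": actor, "tool": tool, "args": list(args)}

    def record(outcome: str, **extra: Any) -> None:
        # default=str keeps logging from failing on non-JSON arguments.
        AUDIT_LOG.append(json.dumps({**entry, "outcome": outcome, **extra}, default=str))

    if requires_approval and not approved:
        record("escalated")
        raise EscalationRequired(f"{tool} needs human approval")
    try:
        result = fn(*args)
    except Exception as exc:
        record("failed", error=str(exc))
        raise EscalationRequired(f"{tool} failed: {exc}") from exc
    record("ok")
    return result
```

Each entry records who acted, which tool ran, with what arguments, and the outcome, so a reviewer can reconstruct a run after the fact and see exactly where it was escalated.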

Key Takeaways

- Define the objective first: automation vs. content generation

- Assess autonomy needs and risk tolerance before choosing a pattern

- Design governance and monitoring from the start

- Prototype workflows before scaling to production

- Combine agents with generative AI for hybrid capabilities