Agentic AI vs generative AI: choosing AI approaches for automation

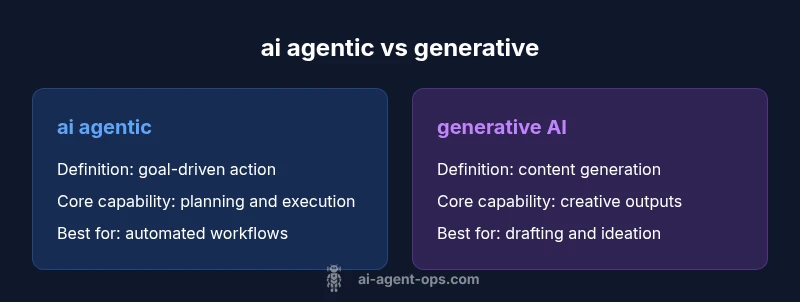

A clear comparison of the agentic and generative AI paradigms, showing how agent-driven action differs from content generation. Covers definitions, use cases, and best practices for AI workflows.

Agentic AI and generative AI describe two different things a system can do: act and create. Agentic AI emphasizes goal-driven action, planning, and decision execution, while generative AI focuses on producing novel content. Most teams benefit from combining them: agentic systems orchestrate tasks and call generative models along the way, turning generated content into actionable automation in real workflows.

Defining the agentic and generative AI paradigms

Agentic AI and generative AI are two distinct paradigms that describe how systems operate in real-world automation. In practice, agentic AI designates agents that can set goals, plan steps, and execute actions to achieve outcomes, often interacting with external environments or other services. Generative AI, by contrast, focuses on producing new content or completing patterns based on representations learned from large data corpora. The distinction matters because it shapes how teams structure control, feedback, and governance. According to Ai Agent Ops, understanding these differences helps teams design architectures that balance autonomy with safety, enabling smarter automation without sacrificing predictability. This balance is especially critical when you want AI to operate across heterogeneous tools and datasets while delivering reliable results. The central question becomes: should the system primarily act, or should it primarily create content? In many modern pipelines, the answer is a hybrid: agentic orchestration layered on top of generative components to manage tasks and outcomes.

Theoretical core: agency vs generation

The theoretical core of the comparison rests on two capabilities: agency and generation. Agency refers to the capacity of an AI system to decide what to do next, initiate actions, and adapt plans based on feedback. It implies a loop: observe, decide, act, observe the result, adjust. Generation refers to producing outputs such as text, images, or code that are novel and contextually relevant but do not, by themselves, determine a specific next action. This distinction matters for risk, governance, and user expectations. When you design an agentic system, you embed goals, constraints, and decision policies. With generative components, you provide prompts, conditioning inputs, and safety checks. The synergy between the two enables workflows where an agent identifies a goal and a generative model drafts the steps or outputs needed to reach it, while a supervisor or human-in-the-loop can veto or guide critical decisions.
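The observe, decide, act, adjust loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference design: `draft_step` is a hypothetical stand-in for a generative model call, `approve` is a hypothetical stand-in for the supervisor or human-in-the-loop, and the integer counter is a toy environment.

```python
from typing import Callable

def draft_step(goal: int, state: int) -> int:
    """Hypothetical stand-in for a generative model: proposes the next increment."""
    remaining = goal - state
    return 3 if remaining >= 3 else remaining

def approve(step: int, max_step: int = 5) -> bool:
    """Hypothetical supervisor check: veto any step outside the allowed range."""
    return 0 < step <= max_step

def run_agent(
    goal: int,
    state: int = 0,
    max_iters: int = 10,
    propose: Callable[[int, int], int] = draft_step,
) -> tuple[int, list[int]]:
    """Observe, decide, act, observe the result, adjust."""
    history: list[int] = []
    for _ in range(max_iters):
        if state >= goal:            # observe: goal reached, stop
            break
        step = propose(goal, state)  # decide: ask the generator for a draft
        if not approve(step):        # a supervisor veto ends the run
            break
        state += step                # act on the environment
        history.append(step)         # keep a record for later audit
    return state, history

final_state, steps = run_agent(goal=7)
```

The generator only proposes; the loop owns the decision to act, and the veto sits between the two. Passing a different `propose` function swaps the generator without touching the control logic.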

Architecture overview: data flow and components

A robust architecture that combines agentic and generative components typically features three core layers: perception/input, deliberation/planning, and action/output. Perception collects signals from users, devices, or systems. Generative components are often useful here, transforming raw data into meaningful prompts or content. The deliberation layer hosts planning and decision modules, defining goals, evaluating options, and sequencing actions. Finally, the action/output layer executes moves in the real world or within software ecosystems, potentially coordinating other services or agents. In this setup, the agentic layer provides governance by setting objectives, while the generative layer supplies the content, proposals, or drafts that the agent uses to act. A well-designed pipeline emphasizes clean interfaces, clear failure modes, and robust observability to trace decisions back to goals and prompts, ensuring accountability across the workflow.
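As a rough sketch of the three layers, the following Python keeps each layer behind its own function so the interfaces stay explicit. All names (`Observation`, `Action`, `perceive`, `deliberate`, `act`) and the refund rule are hypothetical and chosen only for illustration; a real deliberation layer would use a planner or policy rather than a keyword check.

```python
from dataclasses import dataclass

@dataclass
class Observation:
    source: str
    text: str

@dataclass
class Action:
    name: str
    payload: str

def perceive(raw: str, source: str = "user") -> Observation:
    """Perception/input: normalize a raw signal into a structured observation."""
    return Observation(source=source, text=raw.strip().lower())

def deliberate(obs: Observation) -> list[Action]:
    """Deliberation/planning: map an observation to an ordered plan (toy rule)."""
    if "refund" in obs.text:
        return [
            Action("lookup_order", obs.text),
            Action("draft_reply", "refund policy"),
        ]
    return [Action("draft_reply", "general help")]

def act(plan: list[Action]) -> list[str]:
    """Action/output: execute each step and return a trace of what ran."""
    return [f"{step.name}:{step.payload}" for step in plan]

trace = act(deliberate(perceive("  I want a REFUND for order 42 ")))
```

Because each layer takes and returns plain data, any one of them can be replaced or tested in isolation, and the returned trace gives a starting point for observability.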

Modeling considerations: planning, constraints, and prompts

Designing agentic AI systems requires careful attention to planning models, constraints, and control signals. Planning models determine how the agent selects a sequence of actions, while constraints enforce safety, compliance, and resource limits. Reinforcement learning can help optimize plan quality over time, but it should be paired with strong guardrails. On the generative side, prompt engineering, few-shot prompts, and retrieval-augmented generation shape the quality and reliability of outputs. A common pattern is to use a planning module to decompose goals into subgoals and tasks, with generative components providing drafts, alternatives, or content that the agent can evaluate and select from. Evaluation metrics should align with business outcomes (accuracy, speed, user satisfaction, and risk exposure) rather than purely model-level scores.
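The decompose, draft, evaluate, select pattern might look like the following. It is a simplified sketch: `decompose` is a toy planner that splits on the word "and", `generate_candidates` is a hypothetical stub for a generative model, and the `cost` and `score` numbers are made up to show how a resource constraint filters candidates before selection.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Candidate:
    text: str
    cost: float    # assumed resource estimate, e.g. tokens or minutes
    score: float   # assumed quality estimate from some evaluator

def decompose(goal: str) -> list[str]:
    """Toy planner: split a goal into subgoals on the word 'and'."""
    return [part.strip() for part in goal.split(" and ") if part.strip()]

def generate_candidates(subgoal: str) -> list[Candidate]:
    """Hypothetical stand-in for a generative model returning alternative drafts."""
    return [
        Candidate(f"quick draft for {subgoal}", cost=1.0, score=0.6),
        Candidate(f"detailed draft for {subgoal}", cost=3.0, score=0.9),
    ]

def select(candidates: list[Candidate], budget: float) -> Optional[Candidate]:
    """Apply the constraint first, then pick the best remaining candidate."""
    feasible = [c for c in candidates if c.cost <= budget]
    return max(feasible, key=lambda c: c.score, default=None)

def plan(goal: str, budget_per_task: float) -> dict[str, Optional[str]]:
    """Map each subgoal to the chosen draft, or None if nothing fits the budget."""
    chosen: dict[str, Optional[str]] = {}
    for subgoal in decompose(goal):
        best = select(generate_candidates(subgoal), budget_per_task)
        chosen[subgoal] = best.text if best else None
    return chosen

result = plan("summarize the ticket and notify the owner", budget_per_task=2.0)
```

Filtering by constraint before ranking by score means a high-quality draft that violates a limit is never selected. A subgoal that maps to `None` is a natural point to escalate to a human.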

Data and training implications for agentic vs generative

Agentic systems rely on structured signals, real-time telemetry, and rule-based or learned policies to guide decisions. They benefit from rich context around states, events, and feedback, and often require integration with external APIs or databases. Generative models depend on large, diverse training corpora and continuous fine-tuning to remain relevant. In practice, teams should separate training concerns: train content-focused generative models separately from planning or policy modules to avoid entangling risk vectors. Data governance, versioning, and reproducibility become essential when your agent learns from evolving data streams. Privacy, data minimization, and bias mitigation must be embedded in both layers, with explicit review points for outputs and plans that could cause harm or misalignment with business goals.

Architectural patterns: orchestration and prompts

Two common patterns emerge in architectures that combine agentic and generative components: orchestration-first and content-first. In orchestration-first designs, an agent coordinates multiple services, dashboards, and data sources to achieve an outcome, using generative models to draft requests, summaries, or decisions that the agent then enacts. In content-first designs, a strong generative backbone produces outputs that the agent subsequently interprets and acts upon. Hybrid patterns blend the two: an agent uses a planner to map goals into actions and relies on generative prompts to draft user-facing content, reports, or code snippets. Consistency, versioning, and auditability are critical. Build in modular interfaces, runbooks, and safety checks to ensure outputs align with the agent’s objectives and policies.
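The difference between the two patterns is mostly about who owns the control flow. The sketch below contrasts them using a hypothetical `generate` stub in place of a real model call; the action names and the "urgent" keyword rule are illustrative assumptions only.

```python
def generate(prompt: str) -> str:
    """Hypothetical stand-in for a generative model call."""
    return f"[draft] {prompt}"

def orchestration_first(ticket_id: str) -> list[str]:
    """The agent owns the sequence of steps; generation is one step among several."""
    log = [f"fetch:{ticket_id}"]                   # agent gathers context
    summary = generate(f"summarize {ticket_id}")   # generator drafts on request
    log.append(f"post:{summary}")                  # agent enacts the result
    return log

def content_first(request: str) -> list[str]:
    """The generator produces output first; the agent then interprets it and acts."""
    output = generate(request)
    action = "escalate" if "urgent" in output else "file"  # toy interpretation rule
    return [f"{action}:{output}"]

orchestrated = orchestration_first("T-1")
interpreted = content_first("urgent outage report")
```

In the orchestration-first function the sequence of steps is fixed before the model is called, which tends to make auditing simpler. In the content-first function the model output drives the branch, so the interpretation rule is where safety checks would need to go.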

Use-case insights across domains

Across industries, pairing agentic and generative AI shows value in distinct scenarios. In customer support, generative models craft responses while agents decide routing, escalation, or follow-ups. In software automation, agentic orchestration coordinates tasks across CI/CD pipelines, monitoring systems, and ticketing apps, while generative tools draft summaries or changelogs. In content creation and marketing, generative models ideate and draft, with agentic components shaping strategy, approval flows, and compliance checks. In research and compliance, agentic systems can propose experiments or audits, while generative models draft reports. The key is to map ownership and risk: give the agent clear goals and constraints, and keep human oversight for high-stakes outputs. This alignment helps teams scale with confidence and transparency.

Risks, governance, and ethics

A disciplined approach to designing agentic and generative systems must address risk, bias, and governance. Agentic systems can overstep if goals are mis-specified or if feedback loops amplify unintended behaviors. Generative outputs may propagate biases or misinformation without strong safeguards. Implement guardrails such as goal validation, human-in-the-loop checks for critical decisions, explainability of actions, and robust logging. Adopt governance policies that define responsibility, escalation paths, and incident response. Regular audits of decision traces, outputs, and policy compliance help maintain trust with users and executives. Transparent documentation and clear ownership reduce the likelihood of misalignment between what the system thinks it should do and what users expect it to do.
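A guardrail layer of this kind could be sketched as a single gate that every proposed action passes through. The action names, the `ALLOWED_ACTIONS` and `CRITICAL_ACTIONS` sets, and the `human_approves` callback are all hypothetical; the point is the ordering of checks (validate, then require approval for critical actions, then log), not the specific policy.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("agent.guardrails")

# Hypothetical policy: which actions exist, and which need a human sign-off.
ALLOWED_ACTIONS = {"send_email", "create_ticket", "issue_refund"}
CRITICAL_ACTIONS = {"issue_refund"}

@dataclass
class Decision:
    action: str
    reason: str  # short explanation kept for explainability and audits

def validate(decision: Decision) -> bool:
    """Reject unknown actions and decisions that carry no explanation."""
    return decision.action in ALLOWED_ACTIONS and bool(decision.reason.strip())

def guarded_execute(
    decision: Decision, human_approves: Callable[[Decision], bool]
) -> str:
    """Return 'rejected', 'escalated', or 'executed', logging every outcome."""
    if not validate(decision):
        logger.warning("rejected %s: failed validation", decision.action)
        return "rejected"
    if decision.action in CRITICAL_ACTIONS and not human_approves(decision):
        logger.info("escalated %s: awaiting human approval", decision.action)
        return "escalated"
    logger.info("executed %s: %s", decision.action, decision.reason)
    return "executed"

status = guarded_execute(
    Decision("issue_refund", "duplicate charge"), human_approves=lambda d: False
)
```

Every path through the gate writes a log line, so the decision trace exists whether the action ran or not.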

Practical guidelines for teams and orgs

Start with a minimal, modular architecture that separates planning from content generation. Define a small set of goals and constraints for the agent, then test with non-critical workflows before scaling. Invest in observability: trace decisions from goals to actions and link outputs to the prompts that produced them. Prioritize safety by adding prompt-level constraints, output filters, and review steps for sensitive domains. Create a governance charter covering risk assessment, accountability, and remediation procedures. Finally, train teams on the interplay between agentic and generative capabilities, emphasizing clear handoffs, human oversight, and continuous improvement loops.
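For the observability point, one simple approach is to write a single record per step that ties the goal, the prompt, the generated output, and the resulting action together. The sketch below assumes an in-memory log and a hypothetical record shape; a production system would more likely send these records to an existing tracing or logging backend.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class TraceRecord:
    trace_id: int
    goal: str
    prompt: str
    output: str
    action: str

class TraceLog:
    """In-memory trace store linking goals, prompts, outputs, and actions."""

    def __init__(self) -> None:
        self.records: list[TraceRecord] = []

    def record(self, goal: str, prompt: str, output: str, action: str) -> TraceRecord:
        rec = TraceRecord(len(self.records) + 1, goal, prompt, output, action)
        self.records.append(rec)
        return rec

    def for_goal(self, goal: str) -> list[TraceRecord]:
        """Everything the system did in service of one goal."""
        return [r for r in self.records if r.goal == goal]

    def to_jsonl(self) -> str:
        """One JSON object per line, convenient for shipping to a log store."""
        return "\n".join(json.dumps(asdict(r)) for r in self.records)

log = TraceLog()
log.record("resolve ticket 42", "Summarize ticket 42",
           "Customer reports login failure", "post_summary")
log.record("weekly report", "Draft weekly report",
           "Report draft v1", "send_for_review")
```

With records shaped like this, an auditor can start from any action and walk back to the prompt and goal behind it.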

Measuring success and governance metrics

Measure success with outcomes that align with business value: task completion rates, time to resolution, user satisfaction, and reduction in manual interventions. Track the fidelity of goal alignment by auditing decision logs and comparing outcomes against declared objectives. Governance metrics should include the rate of flagged outputs, escalation frequency, and time to remediate misalignments. Regularly refresh prompts and plans to reflect new data and changing requirements. A mature AI program treats agentic and generative components as co-evolving parts of a single workflow, evaluated against both operational performance and risk posture.
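Several of these metrics are simple ratios over a task log. The following sketch assumes a hypothetical `TaskRecord` shape with one row per task and made-up sample values; it covers completion rate, mean time to resolution, flag rate, and escalation rate, and leaves out user satisfaction and remediation time.

```python
import math
from dataclasses import dataclass

@dataclass
class TaskRecord:
    completed: bool
    minutes_to_resolution: float
    flagged: bool      # output was flagged by a safety or quality check
    escalated: bool    # task was handed to a human

def summarize(records: list[TaskRecord]) -> dict[str, float]:
    """Compute outcome and governance ratios from a list of task records."""
    if not records:
        raise ValueError("no records to summarize")
    total = len(records)
    done = [r for r in records if r.completed]
    mean_minutes = (
        sum(r.minutes_to_resolution for r in done) / len(done)
        if done
        else math.nan  # undefined when nothing completed
    )
    return {
        "completion_rate": len(done) / total,
        "mean_minutes_to_resolution": mean_minutes,
        "flag_rate": sum(r.flagged for r in records) / total,
        "escalation_rate": sum(r.escalated for r in records) / total,
    }

# Made-up sample data for illustration only.
sample = [
    TaskRecord(True, 10.0, False, False),
    TaskRecord(True, 20.0, True, False),
    TaskRecord(True, 30.0, False, True),
    TaskRecord(False, 0.0, False, True),
]
metrics = summarize(sample)
```

Time to resolution is averaged over completed tasks only, so unfinished work does not pull the number down artificially.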

Comparison

| Feature | ai agentic | generative AI |
|---|---|---|
| Definition | Goal-directed systems that reason, plan, and act to achieve outcomes | Models that generate new content or complete patterns from prompts |
| Core capability | Decision making, task orchestration, environment interaction | Content creation, style matching, pattern completion |
| Decision making | Autonomous with constraints and policies | Prompt-dependent; outputs guided by input prompts |
| Control mechanisms | Goals, rules, safety guardrails, feedback loops | Prompt design, safety filters, retrieval augmentation |
| Best for | Automation workflows requiring actions and adaptation | Drafting, ideation, summaries, and content generation |
| Latency and feedback | Low-latency action loops with ongoing monitoring | Variable latency dependent on model size and context |
| Data needs | Structured signals, telemetry, real-time data | Large training corpora and curated prompts |
| Governance | Explicit policy enforcement, audit trails | Prompt-level governance, output safety, and bias control |

Positives

- Enables robust automation with clear goals and accountability

- Allows orchestration of complex multi-step workflows

- Combines planning with creative generation for flexible outcomes

- Can improve efficiency and reduce human workload

What's Bad

- Increased system complexity and integration overhead

- Risk of misalignment if goals are poorly specified

- Potential biases and safety concerns in generative outputs

- Requires strong governance and monitoring to maintain trust

Agentic AI and generative AI are complementary; use agentic orchestration to guide generative outputs where appropriate.

Leverage agentic systems to set goals and manage workflows, while using generative models to provide content and options. This pairing offers both control and creativity, with governance to ensure safety and alignment.

Questions & Answers

What is the practical difference between agentic and generative AI?

Agentic systems plan, decide, and act to achieve goals, often interacting with real-world systems. Generative models produce new content or outputs based on prompts. The combination allows goal-directed actions with creative generation, increasing automation power while preserving flexibility.

Agentic AI plans and acts; generative AI creates outputs from prompts. Together, they enable automated actions and creative content in one workflow.

Can an AI system be both agentic and generative at the same time?

Yes. A typical pattern uses an agentic controller to set goals and orchestrate tasks, with generative models supplying drafts, responses, or content along the way. This hybrid approach leverages strengths from both paradigms while requiring careful governance.

Yes. An agent can guide actions while a generator creates the content you need, all under defined rules.

Which approach should teams prioritize for automated workflows?

Prioritize a modular architecture that separates planning from content generation. Start with clear, low-risk goals for the agent, and introduce generative components for drafts and outputs. Scale by adding guardrails, reviews, and escalation paths.

Start small with goals, then layer in generation and guardrails as you scale.

What governance and safety concerns should be addressed?

Establish guardrails for goal specification, ensure auditable decision traces, and implement human oversight for high-stakes outputs. Regularly assess bias, safety, and compliance in both planning and generation components.

Set guardrails, audit decisions, and maintain human oversight for riskier tasks.

How do you start integrating agentic and generative components?

Map your end-to-end workflow to identify where planning should occur and where content should be generated. Build a minimal viable architecture with clear interfaces, then test with non-critical tasks before expanding scope and governance coverage.

Start with a small, clear workflow, then scale gradually with safety checks.

Are there real-world examples of agentic and generative AI working together?

Yes. Many organizations pair an agentic orchestration layer with generative assistants for customer support, software automation, and research workflows. Each use case demonstrates the value of combining goal-directed decisions with creative content generation, while emphasizing governance and monitoring.

Yes—teams combine orchestration with generation in support and automation scenarios.

Key Takeaways

- Define clear goals for agentic components first

- Pair planning with content generation for best results

- Prioritize governance and monitoring from day one

- Use modular architectures to enable safe handoffs

- Quantify outcomes in business terms (speed, accuracy, satisfaction)