ai agent and generative ai: a side-by-side comparison

A rigorous comparison of ai agent and generative ai, covering definitions, use cases, governance, architecture, data needs, and best practices for building agentic AI workflows.

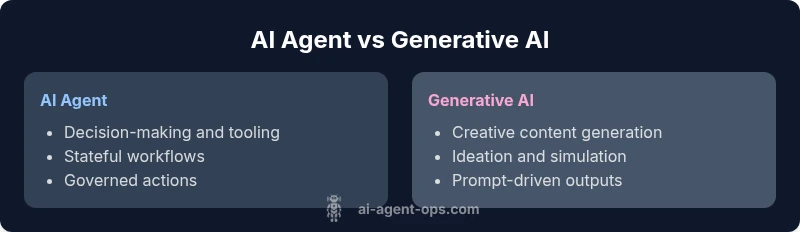

The ai agent and generative ai landscape combines autonomous decision-making with creative content generation. This comparison clarifies how AI agents differ from standalone generative AI, where they overlap, and when to choose each approach for smarter, faster automation. For teams building agentic workflows, understanding this distinction helps optimize governance, latency, and outcomes.

What are AI agents and generative AI?

AI agents are goal-directed systems that perceive their environment, make decisions, and take actions often by orchestrating tools and services. They operate with state, plans, and policies to achieve predefined objectives, and they can adjust behavior based on feedback. Generative AI, by contrast, focuses on producing novel content — text, images, code, or simulations — from prompts or seed data. The two are not mutually exclusive: AI agents commonly leverage generative models as a means to create content, summarize results, or simulate scenarios. For stakeholders exploring ai agent and generative ai deployments, the distinction matters for governance, latency, and reliability, especially when building agentic AI workflows that blend both capabilities.

- Key difference: decision-making and action vs content generation.

- Common overlap: prompt-driven generation used inside agents for content and planning.

- Practical takeaway: start with a clear objective and decide whether the bottleneck is decision-making or content creation.

Core capabilities: decision-making vs content generation

Decision-making and action are the hallmarks of AI agents. They continuously sense inputs, maintain state, select goals, and trigger downstream tools or services. Generative AI excels at language, image, and code synthesis, producing outputs that can be novel and context-rich. The strategic overlap occurs when agents use generative prompts to craft responses, generate tools, or simulate outcomes. For teams, this overlap should be governed by policy boundaries, logging, and containment strategies to prevent drift from intended goals. When both capabilities are needed, a hybrid architecture often yields the best results: an agent-driven loop with a generative model plugged in where content or creative reasoning is required.

Architectural patterns: agent orchestration vs monolithic models

An AI agent architecture emphasizes modularity and orchestration. It uses a planner, a memory store, a set of tools or APIs, and a policy layer that constrains actions. Generative AI can be a plug-in within this stack, invoked for tasks like drafting responses, creating summaries, or simulating user journeys. A monolithic generative model stack, by contrast, centers generation in a single model that tries to cover multiple tasks, which can simplify initial setup but often complicates governance and error handling. The hybrid pattern—agent orchestration with selective generative capabilities—offers clarity, auditability, and safer experimentation, especially in regulated domains.

Use-case alignment: when to choose each approach

Choose an AI agent when the objective requires sequence planning, decision execution, tool use, and ongoing state maintenance. This is common in workflow automation, customer support orchestration, and complex business processes. Opt for generative AI when the goal centers on creative output, ideation, or simulation without heavy external action. For many teams, the optimal solution is a hybrid: agents handle decisions and orchestration while generative AI handles content generation, scenario exploration, and enrichment.

Data needs and governance implications

AI agents rely on structured inputs, perception signals, tool responses, and history to inform decisions. Generative AI relies on prompts, seeds, and context windows, with outputs that may require filtering and post-processing. Governance considerations include access controls, audit trails, and clear responsibilities for model risk management. Data quality, prompt engineering discipline, and robust testing regimes reduce unpredictable behavior. In mixed environments, implement guard rails, safety layers, and monitoring dashboards to observe decision loops and generation outputs in real time.

Latency, reliability, and cost considerations

Agent-driven workflows emphasize sequential decision cycles, which can introduce latency if external services are involved. Generative AI often benefits from batch processing or caching but can incur variable output quality and cost based on prompt length. A hybrid approach helps balance risk and cost: keep critical real-time decisions within a fast, rule-based or policy-driven agent module, and use generative AI for non-critical content generation or scenario simulation. Regular cost governance and performance benchmarking are essential to avoid runaway usage.

Integration patterns and developer tooling

Developers should design clear interfaces between agents and generative models, such as well-defined prompts, tool libraries, and state machines. Tools for tracing, logging, and rollback are essential for troubleshooting agent actions. For generative components, establish evaluation metrics for quality, safety, and alignment with business objectives. Use orchestration layers to coordinate multiple components, and implement test suites that cover end-to-end agent behavior as well as generation quality and safety checks.

Security, bias, and risk management in AI agents and generative AI

Security considerations include access control to tools, data leakage risks, and prompt-injection vulnerabilities. Bias can creep into generative outputs or agent decisions if training data or policies are imperfect. Mitigate with input validation, output filtering, prompt hygiene, and ongoing bias audits. Establish formal risk management practices, including escalation paths for uncertain decisions and continuous monitoring of system behavior under varied scenarios.

Best practices for bridging AI agents with generative AI

For practical deployments, implement a layered approach: (1) define clear goals and guardrails for the agent, (2) use generative AI within explicit boundaries (e.g., content generation only after agent approval), (3) maintain a robust feedback loop to correct model outputs, and (4) invest in observability, testing, and governance. Start small with a pilot domain, measure outcomes, and expand as you gain confidence. The key is to separate decision logic from generation while ensuring secure handoffs between components.

Case studies: hypothetical deployments

In a customer service automation scenario, an AI agent handles triage decisions, routing to human agents or automated responses, while a generative model crafts polite, contextual replies when appropriate. In a product-ops use case, agents orchestrate data collection and task creation, and the generative model drafts release notes, user communications, and scenario simulations to validate new features. These examples illustrate how the two capabilities complement each other when governed by clear policies and monitoring.

Common pitfalls and how to avoid them

Avoid conflating generation quality with decision accuracy. Do not assume a single model will cover all needs; prefer modular components with explicit interfaces. Overreliance on prompts can lead to brittle behavior; invest in prompts as code, with versioning and testing. Finally, neglecting governance and logging erodes trust and complicates audits. Build with safety, transparency, and incremental rollout to maximize long-term success.

Comparison

| Feature | AI agent | Generative AI |

|---|---|---|

| Definition | Autonomous system that perceives, reasons about, and acts to achieve goals (often with tool use). | Model that creates new content (text, images, code) from prompts and context. |

| Primary use-case | Decision-making, orchestration, and action in workflows. | Creative content generation, ideation, and simulation. |

| Data and prompts | Structured inputs, state, external tools, and policy constraints. | Prompts, seeds, and context windows; less reliance on external state. |

| Latency and response pattern | Sequential actions with stateful decisions and tooling. | Often batchable or iterative content generation with feedback loops. |

| Governance and safety | Policy enforcement, audit trails, and tool-level controls. | Output safety, bias mitigation, and prompt safety controls. |

| Integration complexity | Moderate to high; requires orchestration, memory, and tooling. | Moderate; relies on model APIs and prompt engineering. |

| Cost model | Ongoing compute for agents, tools, and state management. | Per-transaction or per-token costs for generation. |

| Best for | Ops automation, real-time decisioning, and complex flows. | Creative content, ideation, and rapid prototyping. |

Positives

- Clear separation of decision-making and generation.

- Modular architecture enables safer governance.

- Easier to test and audit individual components.

- Scales more predictably with modular tooling.

What's Bad

- Higher initial integration and orchestration overhead.

- Increased system complexity from cross-component coordination.

- Potential latency from chained calls to tools and models.

- Requires comprehensive monitoring and instrumentation.

AI agents excel in decision-driven workflows; generative AI shines in content creation. A hybrid approach is often best.

For automation and governance-heavy scenarios, agents lead. For ideation and content-rich tasks, generative AI provides velocity. Combine with guardrails for most robust results.

Questions & Answers

What exactly is an AI agent?

An AI agent is a system that perceives its environment, reasons about possible actions, and acts to achieve goals, often integrating external tools. It operates with memory, policies, and a control loop to drive outcomes.

An AI agent is a smart system that can sense, decide, and act to reach a goal, using tools and memory to stay on track.

What is generative AI?

Generative AI models produce new content — like text, images, or code — from prompts. They are strong at ideation and synthesis but may require guidance to stay aligned with goals and constraints.

Generative AI creates new content from prompts and can assist with ideas, drafts, and simulations.

Can AI agents and generative AI work together?

Yes. Agents can orchestrate workflows and use generative AI for content, summaries, or scenario simulations. This collaboration often requires clear interfaces and governance to prevent drift.

Absolutely. Use agents to decide and act, while generative AI handles content and ideas.

Which should I choose for automation?

Choose AI agents for decision-heavy, tool-driven automation and monitoring. Use generative AI for rapid content generation, user-facing drafts, and exploratory simulations. A hybrid approach often yields the best balance.

If it needs decisions and actions, go with agents; if it needs content, go with generative AI.

What are the main risks and how can I mitigate them?

Risks include misaligned decisions, biased outputs, and data leakage. Mitigate with guardrails, audit logs, input-output filtering, and regular bias and safety reviews.

Risks include wrong decisions and biased outputs, so guardrails and audits are essential.

How do I start implementing an AI agent?

Begin with a narrow, well-scoped workflow. Define goals, tooling, and governance. Build an agent skeleton, plug in a generative model where suitable, and iterate with monitoring and testing.

Start small with a clear goal, then add components and test as you go.

Key Takeaways

- Prioritize clear decision-making when choosing AI agents.

- Use generative AI for content-focused tasks and simulations.

- Adopt a hybrid architecture for balanced performance.

- Governance and safety must be integral from day one.

- Start small, measure outcomes, and scale with confidence.