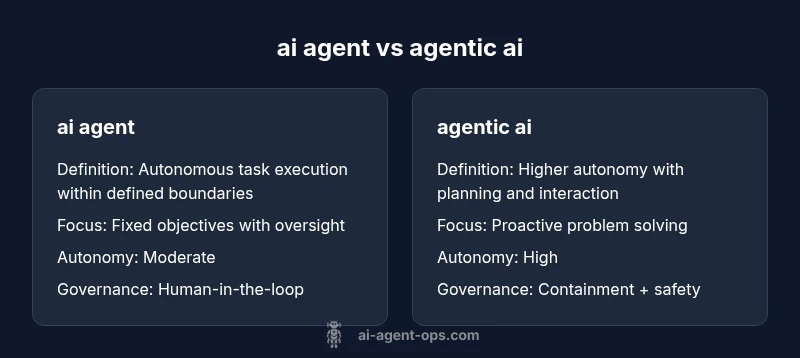

AI Agent vs Agentic AI: A Conceptual Taxonomy for Workflows

Explore the differences between AI agents and agentic AI through a practical taxonomy that guides architects, developers, and leaders in governance, risk, and implementation.

A conceptual taxonomy of AI agents versus agentic AI helps teams choose the right level of autonomy. An AI agent typically operates under explicit objectives with human oversight, while agentic AI aims for more autonomous, goal-directed behavior within governance bounds. Understanding this taxonomy guides architecture, risk management, and governance choices for smarter, safer AI-powered workflows.

Why a conceptual taxonomy matters for AI agents

As organizations accelerate automation, a clear conceptual taxonomy of AI agents versus agentic AI helps teams plan, build, and govern AI-powered workflows. The distinction isn't academic: it shapes architecture decisions, risk controls, data strategies, and operator roles. According to Ai Agent Ops, most practical deployments begin with well-scoped, agent-level capabilities, then escalate to more autonomous agentic behaviors only after governance gates are in place. This taxonomy creates a shared language that aligns product managers, developers, and executives around a common set of expectations. It also helps avoid scope creep, where a system gradually absorbs more autonomy than the original requirements specified. By codifying the spectrum from constrained agents to agentic systems, teams can implement safer, auditable progression paths rather than jumping to full autonomy. The goal is to enable faster delivery while preserving safety, explainability, and accountability.

- Ai Agent Ops emphasizes staged autonomy.

- A common taxonomy reduces misalignment across teams.

- Planning now reduces future rework and safety risks.

Definitions: AI agent vs agentic AI

AI agent: An autonomous software construct designed to carry out a defined set of tasks under explicit objectives, with deterministic or limited adaptive behavior. It typically operates with a fixed boundary, relies on human-in-the-loop oversight for critical decisions, and uses curated data and policies.

Agentic AI: A higher-order form of AI that demonstrates sustained goal-directed behavior with planning, anticipation, and sometimes negotiation with environments or other agents. It is more capable of self-direction but requires robust governance, containment, and safety mechanisms to prevent misalignment.

For practitioners, this distinction is about where you sit on the autonomy spectrum and how you balance control with capability. The taxonomy helps teams scope requirements, define automation boundaries, and plan governance gates to ensure predictable outcomes. At Ai Agent Ops, we see this distinction as essential for aligning product roadmaps with risk tolerance and regulatory constraints.

- Agentic AI often involves planning and environment interaction.

- AI agents are typically bounded by explicit policies.

- The taxonomy supports staged adoption and governance.

Historical context and evolution of agents

Early AI agents emerged from expert systems and rule-based automation, where decisions were hand-crafted and verification was straightforward. As AI research progressed, planners and simple autonomous agents gained traction in domains like logistics and customer service. The term agentic AI began to surface as researchers explored higher levels of autonomy, including planning, multi-agent coordination, and negotiation within simulated or real environments. This evolution tracks a shift from rigid, verifiable behavior to adaptive, context-aware capabilities. For teams building AI-powered workflows, understanding this arc helps set realistic milestones, from ticking off a checklist of tasks for an ai agent to designing governance for more agentic capabilities. The practical takeaway is that each stage carries distinct risks, data needs, and testing requirements.

- Early agents prioritized correctness and auditable rules.

- Modern agentic AI requires containment and governance.

- The taxonomy supports roadmapping from simple to complex autonomy.

Core dimensions of the taxonomy

The taxonomy rests on several core dimensions that determine how an AI system can be designed, evaluated, and governed:

- Autonomy level: the degree of self-direction the system exhibits.

- Scope and boundary: defined tasks vs open-ended objectives.

- Governance and oversight: human-in-the-loop vs autonomous governance.

- Learning and adaptation: offline rules vs online adaptation.

- Data and environment: data quality, access, and simulation capabilities.

Each dimension interacts with the others. For example, increasing autonomy typically requires stronger governance and more robust data validation. A well-structured taxonomy also highlights best-fit use cases: AI agents excel in well-defined processes with stable inputs, while agentic AI suits dynamic environments where proactive planning adds value. By mapping features to dimensions, teams can communicate more effectively about requirements, risks, and success criteria. Ai Agent Ops recommends coupling the taxonomy with explicit decision gates to prevent scope creep and ensure alignment with safety and accountability standards.

- Autonomy vs boundary definitions shape system design.

- Governance requirements rise with autonomy.

- Data readiness is a prerequisite for expanding capability.
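
The five dimensions above can be captured as a small data structure so that each system is described in the same terms. The following is a minimal sketch, not a standard schema: the `Autonomy` scale, the `SystemProfile` fields, and the rules in `governance_gaps` are all hypothetical names and thresholds chosen for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical three-step autonomy scale (an assumption, not from the article)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class SystemProfile:
    """One system described along the five taxonomy dimensions."""
    name: str
    autonomy: Autonomy
    open_ended_scope: bool     # False = defined tasks, True = open-ended objectives
    human_in_the_loop: bool    # governance and oversight
    online_adaptation: bool    # False = offline rules, True = online adaptation
    has_simulation_env: bool   # data and environment


def governance_gaps(profile: SystemProfile) -> list[str]:
    """Flag dimension combinations where autonomy outpaces the controls.

    The three rules are illustrative assumptions, not an exhaustive policy.
    """
    gaps = []
    if profile.autonomy >= Autonomy.HIGH and not profile.has_simulation_env:
        gaps.append("high autonomy without a simulation environment for testing")
    if profile.open_ended_scope and profile.autonomy == Autonomy.LOW:
        gaps.append("open-ended scope assigned to a low-autonomy system")
    if profile.online_adaptation and not profile.human_in_the_loop:
        gaps.append("online adaptation without human oversight")
    return gaps
```

Calling `governance_gaps` on a profile returns an empty list when no rule fires, which makes it easy to use as a pre-review check before a design discussion.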

Data, control, and safety prerequisites

The data that underpins a system largely determines how much autonomy it can safely support. AI agents often rely on curated datasets, static policies, and well-defined APIs. In contrast, agentic AI typically requires richer data ecosystems, real-time feedback, and simulation environments to support planning and interaction with the world. Control mechanisms, such as access controls, logging, and policy enforcement, are essential at every level, but particularly critical for agentic AI, where decisions can cascade in complex ways. Safety frameworks should include fail-safes, containment strategies, and monitoring that can detect misalignment early. From a development perspective, investing in governance modeling, risk assessment, and explainability early in the lifecycle reduces downstream friction when scaling from AI agents to agentic AI. Ai Agent Ops advocates a staged approach: validate basic behavior, then incrementally expand autonomy with formal gates.

- Data quality dictates capability scope.

- Governance and explainability are non-negotiable at high autonomy levels.

- Incremental deployment reduces risk and accelerates learning.
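
As a rough illustration of the control mechanisms described in this section, the sketch below combines an action allow-list (policy enforcement), an audit trail (logging), and a halt flag (fail-safe). `PolicyGuard`, its fields, and the action names are hypothetical; a production system would need persistent logs and authenticated access to the halt control.

```python
import logging
from dataclasses import dataclass, field

logger = logging.getLogger("agent.audit")


@dataclass
class PolicyGuard:
    """Allow-list enforcement with an audit trail and a kill switch (illustrative)."""
    allowed_actions: set[str]
    halted: bool = False
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def halt(self) -> None:
        """Fail-safe: block every subsequent action until the flag is cleared."""
        self.halted = True

    def authorize(self, action: str) -> bool:
        """Return True only if the action is allowed; record every decision."""
        if self.halted:
            verdict = "blocked:halted"
        elif action in self.allowed_actions:
            verdict = "allowed"
        else:
            verdict = "blocked:not_in_policy"
        # Both allowed and blocked requests are recorded for later audits.
        self.audit_log.append((action, verdict))
        logger.info("action=%s verdict=%s", action, verdict)
        return verdict == "allowed"
```

The agent would call `authorize` before every side-effecting action, so the guard stays outside the agent's own decision logic and can be reviewed independently.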

Use cases and decision criteria

Different domains require different autonomy profiles. A customer-support chatbot operating with a predefined decision tree and escalation rules fits an ai agent model, delivering reliable, auditable performance. In contrast, an autonomous logistics planner or a negotiation-enabled agent within a supply chain may require agentic AI capabilities to optimize routes and adapt to disruptions. Decision criteria should include the criticality of tasks, tolerance for error, regulatory constraints, and the maturity of governance processes. The taxonomy guides these choices by clarifying which features are essential at each stage. At Ai Agent Ops, we emphasize aligning use cases with the right level of autonomy, ensuring that the architecture supports safe escalation to higher levels only when governance points are satisfied.

- Use AI agents for well-defined, high-reliability tasks.

- Reserve agentic AI for dynamic, multi-domain problems with strong governance.

- Use staged rollouts to build capability without sacrificing safety.
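
The decision criteria named above (task criticality, error tolerance, regulatory constraints, governance maturity) can be turned into an explicit, reviewable rule. This is only a sketch: the 1-to-5 scales, the thresholds, and the `recommend_profile` function are assumptions made for illustration, and real thresholds should come from your own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    task_criticality: int      # 1 (low) to 5 (high), hypothetical scale
    error_tolerance: int       # 1 (none) to 5 (high)
    regulated: bool            # subject to regulatory constraints
    governance_maturity: int   # 1 (ad hoc) to 5 (audited, staged approvals)
    dynamic_environment: bool  # inputs change in ways fixed rules cannot anticipate


def recommend_profile(uc: UseCase) -> str:
    """Return 'ai_agent' or 'agentic_ai' using illustrative thresholds."""
    # Immature governance always means staying with a bounded agent.
    if uc.governance_maturity < 4:
        return "ai_agent"
    # Stable, well-defined work gains little from proactive planning.
    if not uc.dynamic_environment:
        return "ai_agent"
    # Critical tasks with little tolerance for error stay bounded too.
    if uc.task_criticality >= 4 and uc.error_tolerance <= 2:
        return "ai_agent"
    # Regulated domains need the highest maturity level before escalation.
    if uc.regulated and uc.governance_maturity < 5:
        return "ai_agent"
    return "agentic_ai"
```

Every rule defaults to the bounded option, so agentic AI is recommended only when no criterion objects, which mirrors the escalate-only-when-ready stance of the section.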

Architecture patterns and integration strategies

Architecture choices reflect the taxonomy. A common pattern is a hybrid approach: an orchestration layer coordinates simpler ai agents and negotiates with more capable sub-agents, while a central governance layer enforces policies and audits. For ai agents, rule-based engines with explicit policies and standardized interfaces often suffice. Agentic AI benefits from planning modules, simulation environments, and multi-agent coordination, possibly with formal containment strategies. Integration considerations include observability, data provenance, and compatibility with existing governance frameworks. This section outlines practical patterns such as: (1) rule-based agent with human-in-the-loop for critical decisions, (2) planning-based agent for dynamic tasks, (3) hybrid systems combining both approaches with an orchestration layer, and (4) agent orchestration for multi-agent workflows. Embracing these patterns helps teams scale safely and iteratively as maturity grows.

- Use orchestration layers to manage multiple agents.

- Combine rule-based controls with planning components.

- Prioritize observability and auditability in every design.
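
A compact way to picture patterns (1) and (4) is an orchestrator that tries rule-based agents in order and hands anything they cannot answer to a human. The sketch below is hypothetical throughout: `rule_based_agent`, `Orchestrator`, and the sample rules are invented for illustration and do not correspond to any particular framework.

```python
from typing import Callable, Optional

# A handler takes a request and returns a response, or None if it cannot decide.
Handler = Callable[[str], Optional[str]]


def rule_based_agent(request: str) -> Optional[str]:
    """Pattern 1: explicit rules; anything unmatched returns None."""
    rules = {
        "reset password": "Sent password reset link.",
        "order status": "Order status retrieved.",
    }
    return rules.get(request.lower().strip())


class Orchestrator:
    """Routes requests to agents in order and escalates when none can answer."""

    def __init__(self, agents: list[Handler], escalate: Handler) -> None:
        self.agents = agents
        self.escalate = escalate
        # (request, handled_by) pairs kept for observability and audits.
        self.trace: list[tuple[str, str]] = []

    def handle(self, request: str) -> Optional[str]:
        for agent in self.agents:
            result = agent(request)
            if result is not None:
                self.trace.append((request, getattr(agent, "__name__", "agent")))
                return result
        # No agent had a rule for this request: hand it to a human.
        self.trace.append((request, "human"))
        return self.escalate(request)
```

A planning-based agent could be added to the `agents` list later without changing the escalation path, which is one reason to keep the orchestration layer separate from the agents it coordinates.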

Evaluation, testing, and metrics

Evaluation strategies differ by autonomy level. For ai agents, test plans focus on correctness, coverage, and reliability, with metrics like decision accuracy, timeout rates, and escalation frequencies. Agentic AI requires more sophisticated evaluation, including planning quality, adaptability, and alignment with stated goals. Simulation-based testing, scenario coverage, red-teaming, and user feedback loops are essential. A robust evaluation pipeline includes static checks (policy adherence), dynamic checks (behavior under stress), and governance audits (traceability and explainability). The taxonomy helps establish acceptance criteria at each stage, ensuring that higher-autonomy implementations only proceed after satisfying safety and governance thresholds. Ai Agent Ops emphasizes continuous monitoring and post-deployment reviews to catch drift and misalignment early.

- Tailor metrics to autonomy level and use case.

- Use simulations to stress-test planning and adaptation.

- Maintain ongoing governance reviews post-deployment.
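
The agent-level metrics mentioned above (decision accuracy, timeout rate, escalation frequency) can be computed from a simple log of decisions. The record layout and the choice to measure accuracy only over cases the agent decided itself are assumptions of this sketch, not definitions from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    correct: bool     # did the decision match the expected outcome?
    timed_out: bool   # did the agent exceed its time budget?
    escalated: bool   # was the case handed to a human?


def summarize(records: list[DecisionRecord]) -> dict[str, float]:
    """Compute three agent-level metrics from a batch of decision records."""
    total = len(records)
    if total == 0:
        raise ValueError("no decision records to summarize")
    # Accuracy is measured only over cases the agent decided itself.
    decided = [r for r in records if not r.escalated and not r.timed_out]
    accuracy = sum(r.correct for r in decided) / len(decided) if decided else 0.0
    return {
        "decision_accuracy": accuracy,
        # Timeout and escalation rates are fractions of all records.
        "timeout_rate": sum(r.timed_out for r in records) / total,
        "escalation_frequency": sum(r.escalated for r in records) / total,
    }
```

Excluding escalated and timed-out cases from accuracy keeps the three numbers independent, so a rising escalation frequency cannot hide behind a stable accuracy figure.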

Governance and risk management practices

Governance models must evolve with the autonomy level. For ai agents, risk concerns center on data quality, bias, and escalation accuracy. Agentic AI introduces additional risks: speculative planning failures, unintended optimization paths, and complex interactions with the environment or other agents. A mature governance regime combines policy definitions, containment mechanisms, risk registers, and independent audits. It should cover data handling, model updates, access control, and incident response plans. A practical approach is to implement staged approvals, sandbox testing, and formal risk assessments before granting higher degrees of autonomy. The taxonomy acts as a scaffold, helping organizations articulate policy requirements, track risk, and maintain accountability across teams.

- Define clear governance gates for higher autonomy.

- Implement containment and monitoring for safety.

- Regularly audit data, decisions, and outcomes.
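
Staged approvals, sandbox testing, and formal risk assessments can be checked mechanically before autonomy is raised. The following sketch assumes a hypothetical `GateEvidence` record and a made-up set of approver roles; substitute whatever your governance board actually requires.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateEvidence:
    sandbox_tests_passed: bool
    risk_assessment_signed: bool
    approvers: frozenset[str]   # roles that approved the request


# Hypothetical roles, chosen only for illustration.
REQUIRED_APPROVERS = frozenset({"security", "product_owner"})


def gate_failures(evidence: GateEvidence) -> list[str]:
    """List every unmet condition; an empty list means the gate is open."""
    failures = []
    if not evidence.sandbox_tests_passed:
        failures.append("sandbox testing incomplete")
    if not evidence.risk_assessment_signed:
        failures.append("formal risk assessment missing")
    missing = REQUIRED_APPROVERS - evidence.approvers
    if missing:
        failures.append("missing approvals: " + ", ".join(sorted(missing)))
    return failures
```

Returning the full list of failures, rather than stopping at the first, gives reviewers one complete record to attach to the risk register.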

Practical roadmap for teams and organizations

A pragmatic roadmap begins with a narrow ai agent implementation, expanding scope in well-defined increments. Phase 1 focuses on governance, data readiness, and reliability. Phase 2 adds enhanced automation with limited autonomy and deterministic outcomes. Phase 3 introduces incremental agentic capabilities, supported by risk assessments and containment strategies. Throughout, teams should invest in observability, explainability, and documentation, enabling faster iteration while maintaining safety. The taxonomy guides decision gates, helping teams decide when to escalate from ai agent to agentic AI and when to pull back if governance criteria aren’t met. Ai Agent Ops advocates periodic reviews to rebaseline expectations and ensure alignment with business goals and regulatory requirements.

- Start with a clearly defined AI agent baseline.

- Add controlled autonomy as governance matures.

- Document decisions, policies, and outcomes for auditability.
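
The three phases and the option to pull back can be modeled as a small state transition. This is a sketch under stated assumptions: the `Phase` names paraphrase the roadmap, and the one-step-at-a-time rule in `next_phase` is an illustrative design choice rather than something the roadmap prescribes.

```python
from enum import IntEnum


class Phase(IntEnum):
    BASELINE = 1           # governance, data readiness, reliability
    LIMITED_AUTONOMY = 2   # enhanced automation with deterministic outcomes
    AGENTIC = 3            # incremental agentic capabilities


def next_phase(current: Phase, gate_passed: bool, criteria_still_met: bool) -> Phase:
    """Advance one phase at most, and step back when governance criteria lapse."""
    if not criteria_still_met:
        # Pull back one level, but never below the baseline.
        return Phase(max(current - 1, Phase.BASELINE))
    if gate_passed and current < Phase.AGENTIC:
        return Phase(current + 1)
    return current
```

Because lapsed criteria are checked first, a system that fails its periodic review moves down a phase even if a new gate was passed in the same cycle.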

Trends and future directions in agent taxonomy

As AI systems become more capable, the boundary between ai agent and agentic ai will continue to blur. The taxonomy will evolve to incorporate new capabilities, such as advanced multi-agent coordination, formal verification, and enhanced safety alignment tools. Organizations should monitor research on alignment, containment, and governance frameworks, integrating best practices into their roadmaps. The Ai Agent Ops perspective emphasizes disciplined experimentation, risk-aware scaling, and transparent communication with stakeholders to navigate the transition from agent-based automation to more autonomous, agentic AI under robust governance.

- Expect richer planning and coordination features.

- Prioritize alignment and safety tools as autonomy grows.

- Maintain transparency to stakeholders about capabilities and limits.

Applying the taxonomy: planning checklist

To implement this taxonomy in real projects, use the following planning checklist:

- Define the task boundary and baseline autonomy.

- Specify governance gates and safety requirements before escalation.

- Audit data quality and access controls.

- Plan monitoring, explainability, and incident response.

- Stage rollout with clear milestones and review points.

- Reassess risk posture as autonomy increases.

- Align with organizational risk tolerance and regulatory obligations.

This checklist helps teams translate the taxonomy into actionable development steps and governance practices, ensuring a safe and effective deployment path.

Comparison

| Feature | AI agent | Agentic AI |
|---|---|---|
| Definition | An autonomous system with defined tasks and oversight | A higher-autonomy system with goal-directed planning and negotiation capabilities |
| Focus | Task execution within explicit boundaries | Proactive planning and autonomous problem-solving |
| Autonomy | Moderate to limited | High to substantial |
| Decision scope | Narrow, policy-driven | Broad, adaptive and proactive |
| Learning | Limited learning, rule-based or offline | Continuous learning with online adaptation |
| Governance | Human-in-the-loop oversight | Formal governance with containment and safety controls |
| Data needs | Structured data and APIs | Rich data streams, simulations, dynamic data |
| Use cases | Routine automation, simple escalation | Dynamic environments, multi-domain collaboration |
| Risk profile | Lower risk, auditable | Higher risk requiring strong alignment and safety |

Positives of AI agents

- Easier to govern and audit

- Predictable behavior within defined boundaries

- Lower risk for basic automation

- Faster deployment for simple tasks

Drawbacks of AI agents

- Limited adaptability in changing environments

- Slower to scale in complex, multi-domain scenarios

- May constrain innovation if over-scoped

- Requires clear interfaces to prevent silos

Agentic AI is powerful but riskier; start with AI agents and escalate only after governance maturity

Prioritize AI agents for predictable tasks that demand safety and auditability. Elevate to agentic AI carefully, ensuring containment, safety, and robust governance before granting higher autonomy.

Questions & Answers

What is the difference between an AI agent and agentic AI?

An AI agent operates within defined tasks with oversight, while agentic AI pursues higher autonomy with planning and goal-directed behavior. The taxonomy clarifies when to use each, guided by governance and risk considerations.

An AI agent handles defined tasks with oversight; agentic AI adds more autonomy, requiring stronger governance.

How do I decide which taxonomy level to apply to a project?

Assess the criticality of tasks, tolerance for error, regulatory constraints, and available governance capabilities. Start with an AI agent and only escalate to agentic AI after safety gates are satisfied.

Start safe with an agent, then scale up autonomy as governance matures.

What governance structures are needed for agentic AI?

Agentic AI requires formal containment, risk assessment, continuous monitoring, and independent auditing. Establish clear escalation paths, decision logs, and explainability requirements.

Containment, monitoring, and audits are essential for agentic AI.

What are common pitfalls when adopting this taxonomy?

Underestimating data needs, skipping governance gates, and overestimating what automation can safely handle. Plan incrementally and keep a tight feedback loop.

Watch for data gaps and skipped safety checks.

How does data quality affect these systems?

High-quality data is crucial for reliable decisions, especially in agentic AI where planning relies on accurate world models. Poor data leads to drift and unsafe outcomes.

Good data is foundational to safe autonomy.

Are there safety considerations unique to agentic AI?

Yes. Agentic AI can initiate actions beyond initial scope, so implement containment, kill switches, and continuous risk monitoring to prevent misalignment.

Safety is non-negotiable when autonomy scales.

Key Takeaways

- Start with well-defined AI agents before adding autonomy

- Use a staged governance path to scale autonomy

- Design with observability and explainability from day one

- Apply the taxonomy to guide architecture and risk management

- Plan for gradual escalation to agentic capabilities only when governance gates are met