Tips for Creating an AI Agent: A Practical Guide for 2026

Learn practical, step-by-step tips for creating an AI agent—from goals and data to safety, testing, and deployment. Ai Agent Ops shares actionable guidance for building reliable, scalable agentic AI workflows in 2026.

This guide delivers practical, step-by-step tips for creating an AI agent, from defining goals to deploying guardrails and monitoring performance. You’ll learn design principles, data considerations, safety measures, and iterative testing to build reliable, scalable agentic AI workflows in 2026. Follow the concise steps to get started quickly.

Design philosophy for AI agents

Effective AI agents are built around clear problem framing, disciplined modularity, and strong guardrails. Start with a precise problem statement and measurable success criteria. Favor a modular architecture where capabilities (planning, memory, tools) are decoupled so you can evolve one part without destabilizing the rest. Emphasize transparency and keep a human in the loop where safety or accountability is paramount. According to Ai Agent Ops, think in terms of agentic AI that augments human decision-making rather than replacing it. Prioritize interpretability, auditable decisions, and incremental learning to enable safe, progressive improvements. In practice, this means documenting assumptions, logging decisions, and designing the agent to explain its rationale when asked. Build with reusability in mind: design components that can be repurposed across domains rather than bespoke, one-off solutions. Finally, align incentives so the agent’s goals reflect user needs and business value, not just novelty or autonomy.

Key ideas to apply today: define guardrails early, favor modular components, and document decisions to support governance and future iteration.
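One way to make "logging decisions" and "explaining rationale when asked" concrete is a small decision log. The sketch below is illustrative only: `DecisionRecord`, `DecisionLog`, and their field names are hypothetical, and the log lives in memory where a real system would persist it.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class DecisionRecord:
    """One auditable agent decision (field names are illustrative)."""
    action: str
    rationale: str
    assumptions: List[str] = field(default_factory=list)
    timestamp: float = field(default_factory=time.time)


class DecisionLog:
    """Append-only, in-memory log; a real system would persist entries."""

    def __init__(self) -> None:
        self._records: List[DecisionRecord] = []

    def record(self, action: str, rationale: str,
               assumptions: Optional[List[str]] = None) -> DecisionRecord:
        entry = DecisionRecord(action, rationale, list(assumptions or []))
        self._records.append(entry)
        return entry

    def explain(self, action: str) -> str:
        """Return the rationale for the most recent matching action."""
        for entry in reversed(self._records):
            if entry.action == action:
                return entry.rationale
        return "No recorded decision for this action."

    def to_jsonl(self) -> str:
        """Serialize the log as JSON Lines for audit tooling."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)
```

Calling `log.record(...)` at each decision point and `log.explain(action)` on request gives a simple audit trail. Keeping assumptions next to the rationale is a design choice that makes later governance reviews easier.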

Defining scope and user tasks

Begin by identifying the primary tasks the AI agent should assist with or automate. Create a concise list of user personas, their pain points, and the concrete outcomes the agent should achieve. Translate each outcome into measurable success criteria, such as response time, accuracy, or the frequency of correct tool usage. Establish boundaries to prevent scope creep; specify what the agent should not do and when human escalation is required. Use real use cases to guide design decisions, and consider both routine repetitive tasks and edge cases that require nuanced judgment. In this phase, map the user journey from input to result, including any required approvals or human-in-the-loop interventions. By naming success criteria and failure modes early, you’ll have a clear north star for development and testing.

Practical tip: draft a one-page goal sheet per use case and circulate it with stakeholders to ensure alignment and avoid later rework.
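A goal sheet can also be kept as a small data structure so that success criteria and scope boundaries are checkable in code. The following is a minimal sketch under stated assumptions: the `GoalSheet` class, its fields, and the request-type strings are hypothetical, and every metric is treated as "higher is better" (a latency target would need the comparison inverted).

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class GoalSheet:
    """Machine-readable version of a one-page goal sheet (illustrative)."""
    use_case: str
    persona: str
    # Metric name -> minimum acceptable value (higher is better here).
    success_criteria: Dict[str, float]
    out_of_scope: List[str] = field(default_factory=list)
    escalate_on: List[str] = field(default_factory=list)

    def unmet_criteria(self, measured: Dict[str, float]) -> List[str]:
        """List criteria that are missing or below their target."""
        return [
            name for name, target in self.success_criteria.items()
            if measured.get(name, float("-inf")) < target
        ]

    def route(self, request_type: str) -> str:
        """Decide whether the agent handles, escalates, or declines."""
        if request_type in self.out_of_scope:
            return "decline"
        if request_type in self.escalate_on:
            return "escalate"
        return "handle"
```

For example, a sheet with `success_criteria={"accuracy": 0.9}` and `escalate_on=["refund_over_limit"]` would report `accuracy` as unmet until a measurement of at least 0.9 is supplied, and would route a `refund_over_limit` request to a human.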

Architecture and data flows

A robust AI agent relies on a clean architecture that separates perception, reasoning, action, and feedback. Typical components include a memory store for context, a planner for sequencing steps, tools or adapters to perform tasks, and a feedback loop to learn from outcomes. Design data flows that minimize duplication, protect sensitive information, and enable traceability from input through action to results. Establish interfaces for data ingestion, tool invocation, and result interpretation. Consider memory strategies: short-term context for immediate tasks and long-term memory for recurring patterns or user preferences. Data governance should address privacy, security, and compliance—especially when handling customer data or regulated content. A practical approach is to model your architecture with diagrams and maintain a living blueprint that reflects API contracts, data schemas, and failure handling.

Audit-worthy note: ensure data provenance and decision traceability so you can explain why the agent took a given action.
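The separation of planner, tools, short-term memory, and traceability described above can be sketched in a few lines. This is an assumption-laden toy, not a reference design: `Agent`, `keyword_planner`, and the tool names are hypothetical, and the keyword planner stands in for whatever model-backed planner you actually use.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

Tool = Callable[[str], str]
Plan = List[Tuple[str, str]]  # (tool name, argument) pairs


def keyword_planner(user_input: str, context: List[str]) -> Plan:
    """Toy planner: a stand-in for an LLM-backed planner."""
    if "weather" in user_input.lower():
        return [("weather", "today")]
    return [("echo", user_input)]


class Agent:
    def __init__(self, planner: Callable[[str, List[str]], Plan],
                 tools: Dict[str, Tool], memory_size: int = 5) -> None:
        self.planner = planner
        self.tools = tools
        # Short-term context: only the most recent inputs are kept.
        self.short_term: Deque[str] = deque(maxlen=memory_size)
        # Trace links each input to the tool call and its result.
        self.trace: List[dict] = []

    def run(self, user_input: str) -> List[str]:
        plan = self.planner(user_input, list(self.short_term))
        results = []
        for tool_name, arg in plan:
            tool = self.tools.get(tool_name)
            output = tool(arg) if tool else f"unknown tool: {tool_name}"
            self.trace.append({"input": user_input, "tool": tool_name,
                               "arg": arg, "output": output})
            results.append(output)
        self.short_term.append(user_input)
        return results
```

Because the planner and tools are passed in, each can be swapped or tested in isolation, and `agent.trace` provides the input-to-action-to-result record that the audit note calls for.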

Safety, governance, and ethics

Safety and ethics are non-negotiable in AI agent design. Implement guardrails to prevent harmful actions, and establish escalation paths for uncertain decisions. Define data governance policies that cover data collection, storage, retention, and usage rights. Bias mitigation should be baked into data selection, model choice, and evaluation. Conduct regular audits of the agent’s decisions and outcomes, especially in high-stakes domains. Create a transparent policy on user consent and explainability, including what the agent can do, what it cannot do, and how users can appeal decisions. Document ethical considerations and align with organizational values and regulatory requirements. This discipline reduces risk, builds user trust, and supports long-term adoption.

Ai Agent Ops insight: safety is a feature of the process, not a one-off fix; integrate safety checks into every lifecycle stage.
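As a rough illustration of guardrails with an escalation path, the sketch below checks a proposed action before it runs. The names (`Verdict`, `check_action`), the action strings, and the 0.8 confidence threshold are all assumptions chosen for the example; real policies would come from your governance process.

```python
from enum import Enum
from typing import Set


class Verdict(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    BLOCK = "block"


def check_action(action: str, confidence: float, blocked: Set[str],
                 needs_review: Set[str],
                 min_confidence: float = 0.8) -> Verdict:
    """Gate a proposed action before the agent executes it."""
    # Hard guardrail: never run actions on the blocked list.
    if action in blocked:
        return Verdict.BLOCK
    # Escalate sensitive actions and anything the agent is unsure about.
    if action in needs_review or confidence < min_confidence:
        return Verdict.ESCALATE
    return Verdict.ALLOW
```

Checking the blocked list first means a high confidence score can never override a hard prohibition, which keeps the safety rule independent of model behavior.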

Evaluation, testing, and iteration

Build a rigorous evaluation framework to measure performance across metrics like accuracy, latency, robustness, and user satisfaction. Use scenario-based testing to assess how the agent handles common tasks and edge cases. Create a test suite that combines synthetic data with real-world samples to validate generalization. Track failures, analyze root causes, and prioritize fixes based on impact and feasibility. Establish an iteration cadence that includes code reviews, user feedback loops, and staged deployments (dev/staging/production). Leverage A/B testing where appropriate to compare design choices and quantify improvements. Always document test results and decisions to support governance and future enhancements.

Best practice: automate key test scenarios and maintain a changelog of improvements for accountability.
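Scenario-based testing can start very small. The harness below is a minimal sketch, assuming the agent can be called as a plain function from prompt to text; `Scenario` and `evaluate` are hypothetical names, and the metrics (pass rate, failure list, worst-case latency) are only a subset of what the section describes.

```python
import time
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Scenario:
    name: str
    prompt: str
    check: Callable[[str], bool]  # True when the output is acceptable


def evaluate(agent_fn: Callable[[str], str],
             scenarios: List[Scenario]) -> dict:
    """Run every scenario and summarize pass rate, failures, and latency."""
    failures: List[str] = []
    latencies: List[float] = []
    for scenario in scenarios:
        start = time.perf_counter()
        try:
            ok = scenario.check(agent_fn(scenario.prompt))
        except Exception:
            # A crash counts as a failed scenario, not a failed test run.
            ok = False
        latencies.append(time.perf_counter() - start)
        if not ok:
            failures.append(scenario.name)
    total = len(scenarios)
    return {
        "pass_rate": (total - len(failures)) / total if total else 0.0,
        "failures": failures,
        "max_latency_s": max(latencies, default=0.0),
    }
```

Storing the returned report alongside the agent version gives you the documented test results the section recommends, and the `failures` list is a natural starting point for root-cause analysis.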

Deployment, monitoring, and maintenance

Deployment should be treated as a controlled, observable process. Use feature flags, gradual rollouts, and health checks to minimize risk. Monitor operational metrics such as error rates, latency, tool success rates, and user-reported issues. Implement alerting for anomalies, and ensure you have rollback plans in place. Maintain versioned models and configurations so you can reproduce past behavior if needed. Establish a maintenance schedule for updating data sources, integrating new tools, and refining prompts or reasoning strategies. Plan for ongoing monitoring of compliance, privacy, and ethical guidelines as the agent evolves. A proactive maintenance mindset helps sustain reliability and user trust over time.

Operational tip: keep a running changelog focused on capabilities, not just fixes, to support audits and governance.
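Gradual rollouts and rollback triggers can be expressed as two small functions. This is a sketch under assumptions: `in_rollout` and `should_roll_back` are hypothetical helpers, the flag name is made up, and the 5% error-rate threshold and 50-request minimum are placeholder values rather than recommendations.

```python
import hashlib


def in_rollout(user_id: str, flag: str, percent: int) -> bool:
    """Deterministically place a user in or out of a percentage rollout."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent


def should_roll_back(errors: int, requests: int,
                     max_error_rate: float = 0.05,
                     min_requests: int = 50) -> bool:
    """Signal a rollback once enough traffic shows too many errors."""
    if requests < min_requests:
        return False  # not enough data to judge yet
    return errors / requests > max_error_rate
```

Hashing the flag name together with the user ID keeps each user's experience stable across requests while giving different flags independent user populations.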

Collaboration and future-proofing

Successful AI agents emerge from cross-functional collaboration among product, engineering, data science, and governance teams. Create a shared vocabulary and documentation that explains capabilities, limitations, and expected outcomes. Build modular components with clean APIs to enable reuse and rapid iteration across domains. Design for future updates by decoupling data schemas, prompts, and tool integrations from core logic. Plan for scaling, including concurrency control, rate limiting, and distributed execution patterns. Finally, establish a roadmap for agent enhancement that includes both short-term wins and long-term strategic investments in agentic AI capabilities.

Key takeaway: treat your agent as an evolving product with ongoing governance, collaboration, and a clear upgrade path.
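For the rate-limiting part of scaling, a token bucket is one common pattern. The sketch below is a single-process, non-thread-safe illustration; `TokenBucket` is a hypothetical class, and the injectable `clock` parameter is an assumption added so the limiter can be tested without sleeping.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_s`."""

    def __init__(self, rate_per_s: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_s
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the call."""
        now = self.clock()
        # Refill based on elapsed time, never exceeding capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

Wrapping each outbound model or tool call in `if bucket.allow(): ...` caps request volume per process. A distributed deployment would need shared state, which this sketch does not attempt.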

Common pitfalls and how to avoid them

Many teams stumble on scope creep, data leakage, or unsafe actions. To avoid these, maintain strict scope boundaries, implement data minimization, and regularly review tool permissions. Poor observability makes debugging hard—invest in comprehensive logging and traceability. Relying on a single model or tool can create single points of failure; diversify tools and establish fallback behaviors. Finally, neglecting governance early leads to compliance risks later; embed governance from day one, incorporate user feedback, and maintain transparent documentation. Proactive planning and disciplined execution help you avoid these common mistakes.
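To avoid a single point of failure, calls can be routed through an ordered list of providers with a safe default at the end. The following is a minimal sketch: `call_with_fallback`, the provider names, and the default message are hypothetical, and each provider is assumed to be a plain function from prompt to text.

```python
from typing import Callable, List, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]  # (name, callable)


def call_with_fallback(
    providers: Sequence[Provider],
    prompt: str,
    default: str = "Sorry, I can't help with that right now.",
) -> Tuple[str, str, List[str]]:
    """Try providers in order; return (source, answer, collected errors)."""
    errors: List[str] = []
    for name, provider in providers:
        try:
            return name, provider(prompt), errors
        except Exception as exc:
            # Record the failure for observability, then try the next one.
            errors.append(f"{name}: {exc}")
    return "default", default, errors
```

Returning the collected errors along with the answer supports the observability advice above: a response served by a fallback still leaves a record of what failed.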

Tools & Materials

- Development workstation (Modern laptop/desktop with 16 GB+ RAM and stable OS)

- Internet connectivity (Reliable connection for cloud APIs and data access)

- API access to AI models/tools (At least one LLM or agent framework API key)

- Data samples for testing (Representative datasets with appropriate permissions)

- Version control (Git) (For reproducibility and collaboration)

- Experiment tracking (optional) (For governance and traceability of experiments)

- Architecture diagram tool (To share data flow and component design with stakeholders)

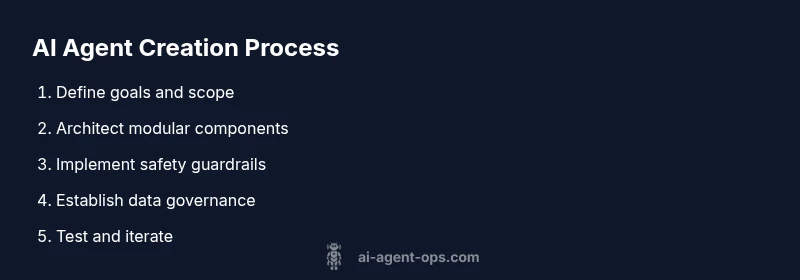

Steps

Estimated time: 2-6 weeks

1. Define goals and user tasks

Articulate the primary objective of the AI agent and the tasks it will assist with. Describe the expected user outcomes and how success will be measured. Establish escalation rules for when human input is required.

Tip: Create a one-page goals sheet for each use case and circulate it for alignment.

2. Identify required capabilities

List the core capabilities the agent needs (perception, planning, tool use, memory, feedback). Decide which capabilities can be automated now and which require future iterations.

Tip: Prioritize capabilities that enable high-value tasks with minimal risk.

3. Choose architecture pattern

Select an architecture that supports modularity (planning + tools + memory). Define interfaces between components and establish data contracts for inputs/outputs.

Tip: Favor decoupled components to ease testing and future upgrades.

4. Design data flows and memory

Map data sources, ingestion methods, and where context is stored. Define memory horizons for short-term context and long-term preferences, with clear privacy controls.

Tip: Implement data minimization and access controls from the start.

5. Implement core reasoning loop

Build the loop that observes input, reasons with available data, selects actions, and executes tools. Include fallback behaviors for uncertain situations.

Tip: Document decision paths to improve explainability.

6. Add safety guards and governance

Incorporate guardrails, monitoring, and escalation rules. Implement privacy, bias checks, and compliance reviews in development.

Tip: Run all new capabilities through a governance review before production.

7. Build evaluation and testing framework

Create scenario-based tests and metrics to validate performance, safety, and reliability. Use synthetic data and real-world samples where appropriate.

Tip: Automate test execution and maintain a changelog of results.

8. Plan deployment and monitoring

Prepare a staged rollout with observability, alerts, and rollback options. Establish dashboards to track KPIs and user feedback.

Tip: Use feature flags to minimize risk during rollout.

9. Establish maintenance and iteration cadence

Set a cadence for updates, governance reviews, and capability enhancements. Maintain versioned configurations and ensure reproducibility.

Tip: Document decisions and rationale to support future audits.

Questions & Answers

What is an AI agent?

An AI agent is a software system that observes its environment, reasons about possible actions, and executes tasks to achieve defined goals. It often uses tools, memory, and planning components to autonomously handle tasks while staying within safety and governance boundaries.

An AI agent observes, reasons, and acts to accomplish goals, using tools and memory while following safety rules.

How do you measure AI agent performance?

Performance is measured with metrics like task completion rate, response time, tool success rate, and user satisfaction. Scenario-based tests and real-world monitoring help identify gaps, guiding iterative improvements.

Evaluate completion rates, speed, tool success, and user satisfaction to gauge performance and drive improvements.

What tools do I need to build an AI agent?

You’ll need access to AI model APIs, a development environment, version control, data samples for testing, and a plan for monitoring and governance. Modular components and clear interfaces simplify maintenance and scaling.

You need AI model access, a dev environment, version control, data samples, and governance plans.

How should data be managed for AI agents?

Manage data with privacy by design, limit collection to what’s necessary, and implement access controls. Maintain provenance and logs to support auditing and accountability.

Handle data with privacy by design, minimize collection, and keep logs for auditing.

How can I ensure safety and governance in AI agents?

Embed guardrails, document decisions, run governance reviews, and monitor for bias or privacy issues. Regular audits and transparent policies help sustain safe deployment.

Guardrails, governance reviews, and ongoing monitoring keep AI agents safe and trustworthy.

Can AI agents replace humans entirely?

No. AI agents should augment human teams, handling repetitive tasks and data processing while humans handle complex judgment, empathy, and strategic decisions.

AI agents support humans rather than replace them, handling routine tasks while humans focus on complex decisions.


Key Takeaways

- Define clear goals and success metrics before building.

- Design modular architectures to support reuse and upgrades.

- Incorporate safety, governance, and ethics from day one.

- Establish a rigorous testing and monitoring regime.

- Document decisions and maintain governance-ready artifacts.