Train AI Agents: A Practical Step-by-Step Guide for 2026

Learn how to train ai agent from goal definition to governance. This practical guide covers data, tooling, evaluation, and safety for developers and leaders building agentic AI workflows.

This guide shows you how to train an AI agent end-to-end, from goal setting to evaluation. You’ll need a compute environment, an agent framework, and a plan for safety and governance. Follow the step-by-step approach to establish a baseline, validate performance, and iterate toward production-ready agentic AI.

Why train AI agents today

Training AI agents is about teaching systems to act autonomously to achieve defined goals while interacting with their environment. For developers and business teams, this means moving from static prompts to adaptive behavior that can plan, reason, and use tools. According to Ai Agent Ops, starting with clear objectives, measurable success criteria, and safety guardrails saves time and reduces drift later. If you want to train ai agent at scale, begin with a small, well-scoped prototype and build governance into every iteration. This section lays out the why and the high-level approach so you can orient teammates and stakeholders around a shared vision.

Foundations: goals, autonomy, and safety

A successful AI agent begins with well-defined goals, an appropriate level of autonomy, and explicit safety boundaries. Define what the agent should know, which actions it can take, and how it should respond to failures or novel situations. Autonomy ranges from assisted to fully autonomous; choose a level that aligns with risk tolerance and governance. Safety guardrails include hard limits on actions, escalation paths for human review, and continuous monitoring for unintended behavior. When you align goals, autonomy, and safety from the start, you’ll reduce rework and build trust with users and stakeholders.

Data and environments: datasets, prompts, and simulators

High-quality, representative data is the lifeblood of a train ai agent project. Curate domain-relevant datasets, balance coverage across typical and edge cases, and annotate prompts to probe the agent’s decision-making. Use simulated environments to run controlled experiments before touching live systems. Maintain data governance practices, including privacy, consent, and bias checks. Ai Agent Ops Analysis, 2026 highlights that robust data and sandboxed environments correlate with faster iteration and safer deployments, especially when paired with rigorous evaluation plans.

Architecture and tooling: choosing runtimes and frameworks

Select an architecture that supports planning, action execution, and tool use within a cohesive framework. A common pattern combines a planner component, an action executor, and an environment interface that simulates real-world feedback. Pair this with a toolchain that supports prompt templates, experiment tracking, and governance workflows. When evaluating tools, prioritize modularity, observability, and safety features. The right stack accelerates development and makes maintenance easier as your agent evolves.

Evaluation, monitoring, and iteration

Evaluation should be continuous and multi-faceted. Define quantitative metrics (goal success rate, latency to decision, tool-use accuracy) and qualitative reviews (safety checks, user feedback). Establish a looping process: run experiments in sandbox, analyze results, adjust prompts or architecture, and re-run tests. Monitoring dashboards and alerting help catch drift early. Over time, you’ll stabilize behaviors and expand capabilities while maintaining governance and safety.

Common pitfalls and best practices

Avoid scope creep by starting with a narrow task and a small data slice. Keep prompts versioned and configurations auditable to support reproducibility. Beware data leakage and brittle prompts that overfit to training data. Use synthetic data to test edge cases and ensure your guardrails can adapt to new contexts. Regularly review the agent’s performance with diverse stakeholders and document lessons learned to accelerate future iterations.

Real-world blueprint: starter project you can copy

This blueprint outlines a small starter project suitable for a team new to agentic AI. Begin with a single task, a controlled dataset, a sandboxed environment, and a basic planner-driven agent. Implement a simple governance cycle: daily checks, weekly reviews, and quarterly audits. As you gain confidence, expand the task scope, enrich the dataset, and tighten guardrails. The blueprint emphasizes iteration, traceability, and safety as core pillars.

Tools & Materials

- Computational workstation (CPU/GPU)(Adequate GPU memory for model size and batch workloads.)

- Python 3.x environment(Version 3.9+; virtual environments recommended.)

- Agent framework or toolkit(Choose one that supports planning, tool use, and safety guards.)

- Prompts and datasets for training(Domain-relevant data; ensure labeling and privacy compliance.)

- Experiment tracking and logging(Track prompts, configurations, and metrics.)

- Version control system(Git repository for code and prompts.)

- Monitoring and evaluation dashboards(Optional but helpful for live oversight.)

- Access to LLM API or local models(For testing and running agent tasks.)

- Safety and governance policy documents(Guards, escalation paths, and review cycles.)

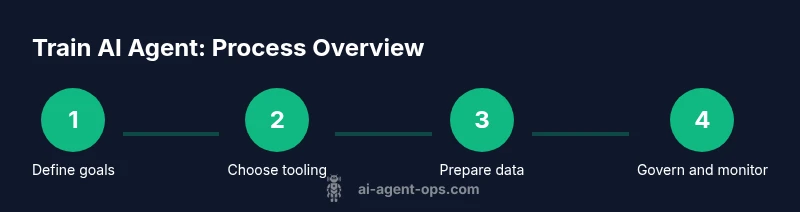

Steps

Estimated time: 4-6 weeks

- 1

Define goals and safety guardrails

Begin by articulating a single, concrete task for the agent and specify success metrics. Define non-negotiables, escalation paths, and hard limits on actions. This creates a baseline from which you can measure progress and catch drift early.

Tip: Document non-negotiables and success criteria; make them visible to all stakeholders. - 2

Choose architecture and tooling

Select a planner-driven or tool-using architecture that fits your domain. Pick an agent framework with good observability and a governance workflow, and ensure integration points with your data sources and environment simulators.

Tip: Match tooling to team skills and domain constraints; avoid over-engineering early. - 3

Prepare data and prompts

Curate a representative dataset and design prompts that probe decision logic, safety boundaries, and tool interactions. Validate prompts in a sandbox and annotate failures to improve later iterations.

Tip: Use synthetic data for early testing to minimize data leakage and privacy concerns. - 4

Prototype, test, and iterate

Build a baseline agent and run controlled experiments in a sandbox. Analyze outcomes, refine prompts or components, and re-test with new scenarios to reduce regression.

Tip: Run small A/B tests to compare prompt variants and measure impact on goals. - 5

Deploy governance and monitoring

In production, enforce guardrails, establish monitoring dashboards, and set up alerting. Create feedback loops with users and schedule regular governance reviews to adjust policies.

Tip: Automate logs and governance checks; plan quarterly policy updates.

Questions & Answers

What is an AI agent?

An AI agent is a system that can perceive its environment, decide on a course of action, and execute tasks to achieve goals. It typically combines planning, decision-making, and action execution, often using tools or APIs to interact with the real world.

An AI agent perceives, decides, and acts to reach a goal, often using tools to interact with the world.

How is training an AI agent different from training a traditional ML model?

Traditional ML models map inputs to outputs; AI agents learn to operate in dynamic environments, reason about actions, and use tools. Training involves not only accuracy but safe behavior, decision policies, and governance.

Agents learn how to act in changing environments and must be governed for safe behavior.

What data quality is needed to train an AI agent?

High-quality, representative, and diverse data is essential. Data should cover typical and edge cases, be labeled for decision points, and be free from leakage or bias that could steer agent behavior awry.

You need diverse, clean data that covers typical and edge cases, with careful labeling.

How do you measure success of an AI agent?

Use a mix of quantitative metrics (goal success rate, latency, tool-use accuracy) and qualitative evaluations (safety reviews, user feedback). Regularly compare results across iterations.

Success is measured by goal achievement, speed, accuracy, and safety reviews.

What are common safety concerns when training AI agents?

Concerns include unintended actions, data privacy breaches, bias amplification, and overreliance on automation. Implement guardrails, escalation paths, and ongoing governance.

Safety concerns involve misbehavior, data risks, and bias; guardrails and governance help.

Who should be involved in an AI agent project?

A cross-functional team typically includes engineers, product managers, data scientists, and ethics or governance leads. Clear ownership and decision rights accelerate progress.

Cross-functional teams with clear ownership lead to faster, safer progress.

Watch Video

Key Takeaways

- Define clear goals and guardrails first.

- Choose a compatible toolchain for your task.

- Iterate with rigorous evaluation and governance.

- Document prompts and experiments for reproducibility.