How to Make a Personal AI Agent: A Practical Guide

Learn how to design, build, and test a personal AI agent—from planning and architecture to deployment and governance—in 2026.

This guide teaches you how to make a personal AI agent, from planning through deployment. You’ll define goals, select a modular tech stack, implement memory and safety, and iterate with testing and governance. Follow the step-by-step approach to build a capable agent that automates repetitive tasks while staying under your control.

Foundations of a Personal AI Agent

According to Ai Agent Ops, building a personal AI agent begins with a crisp problem statement and a clear boundary between autonomous actions and human oversight. To make a personal AI agent, start by defining the tasks you want automated, the data you will need, and the decision points where human review is essential. This foundation frames governance, data handling, and safety requirements for the entire project. A tight scope avoids feature creep and reduces risk. As you lay this foundation, translate goals into concrete capabilities and measurable criteria, such as time saved per week, accuracy, and user satisfaction. By the end, you’ll have a concrete MVP outline you can iterate on. The 2026 landscape for agentic AI favors modular designs, enabling components to be swapped as models improve or requirements shift. As you proceed, keep a long-term perspective: your MVP should be a stepping stone, not a final monument. You’ll build confidence with small, measurable milestones and clear rollback plans in case experiments do not yield expected results.

Core components and architecture

A personal AI agent relies on several core components: a controller or orchestrator that sequences tasks, a language model (or family of models) that understands prompts, a memory layer that preserves context, and a memory store used to retrieve relevant facts. Edge cases require a gating mechanism to decide when to fetch external data versus rely on internal reasoning. Ai Agent Ops emphasizes a modular architecture because it allows you to swap models or data stores as needs change without rewriting your entire stack. You’ll typically connect a prompt layer to a planner that decides what steps to take, then a toolbelt of capabilities such as web search, data extraction, and action execution. The communication protocol between components should be explicit and well-documented, with clear input and output formats to enable testing and auditing.
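To make the planner-plus-toolbelt idea concrete, here is a minimal sketch of an orchestrator loop. It assumes the planner is a plain function standing in for a language model call; the names `Step`, `Agent`, and `toy_planner` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    """One planned action: which tool to call and with what input."""
    tool: str
    argument: str


@dataclass
class Agent:
    # The planner stands in for the language model: it maps a request to steps.
    planner: Callable[[str], list[Step]]
    # The toolbelt maps tool names to plain functions (str -> str).
    tools: dict[str, Callable[[str], str]]
    # Append-only trace of every executed step, kept for testing and auditing.
    trace: list[dict] = field(default_factory=list)

    def run(self, request: str) -> list[str]:
        results = []
        for step in self.planner(request):
            if step.tool not in self.tools:
                raise KeyError(f"unknown tool: {step.tool}")
            output = self.tools[step.tool](step.argument)
            self.trace.append(
                {"tool": step.tool, "input": step.argument, "output": output}
            )
            results.append(output)
        return results


def toy_planner(request: str) -> list[Step]:
    """Hypothetical rule-based planner; a real one would prompt a model."""
    return [Step("search", request), Step("summarize", request)]


agent = Agent(
    planner=toy_planner,
    tools={
        "search": lambda q: f"results for '{q}'",
        "summarize": lambda q: f"summary of '{q}'",
    },
)
outputs = agent.run("weekly report")
```

Because every component is passed in as a function with an explicit input and output type, each one can be replaced or tested on its own, and the `trace` list gives a simple record to audit.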

Planning your agent: goals, constraints, and safety

Effective planning starts with a mission statement for the agent and a list of non-negotiables. Define success metrics (time saved, accuracy, user satisfaction) and identify constraints (data usage limits, latency targets, and safety boundaries). Establish governance rules: what actions require human approval, what data can be stored, and how the agent should respond to ambiguous prompts. Integrate privacy by design principles from the outset. Ai Agent Ops highlights the importance of a safety guardrail that blocks risky operations and logs all boundary decisions for auditability. Your planning documentation will guide later choices about prompts, tools, and evaluation criteria.
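A guardrail that blocks risky operations and logs boundary decisions could be sketched as follows. The action names and the `Policy` structure are assumptions made for this example, not part of any specific framework.

```python
import logging
from dataclasses import dataclass
from enum import Enum

logger = logging.getLogger("agent.guardrail")


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


@dataclass(frozen=True)
class Policy:
    allowed: frozenset[str]  # actions the agent may take on its own
    blocked: frozenset[str]  # actions the agent must never take


def check_action(action: str, policy: Policy, audit_log: list[dict]) -> Decision:
    """Classify an action and record the decision for later audit."""
    if action in policy.blocked:
        decision = Decision.BLOCK
    elif action in policy.allowed:
        decision = Decision.ALLOW
    else:
        # Anything not explicitly listed goes to a human reviewer.
        decision = Decision.NEEDS_APPROVAL
    audit_log.append({"action": action, "decision": decision.value})
    logger.info("guardrail decision: %s -> %s", action, decision.value)
    return decision


policy = Policy(
    allowed=frozenset({"read_calendar"}),
    blocked=frozenset({"delete_files"}),
)
audit_log: list[dict] = []
first = check_action("read_calendar", policy, audit_log)
second = check_action("delete_files", policy, audit_log)
third = check_action("send_email", policy, audit_log)
```

The design choice here is that unknown actions default to human approval rather than to allow, so a newly added tool cannot run unattended until someone lists it explicitly.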

Selecting tools and data sources

Choose a modular tech stack with clearly defined interfaces: a controller/orchestrator, a language model, a memory layer, and a retrieval mechanism. Prioritize open standards for data formats and communication so you can swap models or data stores as needed. For data sources, balance internal knowledge with trusted external signals (web results, structured databases, and prompts that guide data synthesis). Use a lightweight memory layer for current sessions and a vector store for long-term context. Ai Agent Ops recommends documenting data provenance and access controls to maintain trust as the agent scales. This section translates raw tool choices into a practical stack you can prototype in a weekend and iteratively improve.
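One way to keep components swappable is to code against a small interface rather than a concrete library. The sketch below uses Python's `typing.Protocol`; the `Retriever` interface, the `Document` record with its `source` field for provenance, and the `KeywordRetriever` are all hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Document:
    text: str
    source: str  # provenance: where this text came from


class Retriever(Protocol):
    """Any object with this method can be plugged into the agent."""

    def retrieve(self, query: str, limit: int) -> list[Document]: ...


class KeywordRetriever:
    """Simplest possible retriever; could later be swapped for a vector store."""

    def __init__(self, documents: list[Document]) -> None:
        self._documents = documents

    def retrieve(self, query: str, limit: int) -> list[Document]:
        terms = query.lower().split()
        hits = [
            d for d in self._documents
            if any(t in d.text.lower() for t in terms)
        ]
        return hits[:limit]


def build_context(retriever: Retriever, query: str, limit: int = 3) -> str:
    """Format retrieved text with its source so provenance stays visible."""
    docs = retriever.retrieve(query, limit)
    return "\n".join(f"[{d.source}] {d.text}" for d in docs)


retriever = KeywordRetriever([
    Document("Dentist appointment on Friday", "notes.md"),
    Document("Quarterly budget draft", "finance.csv"),
])
context = build_context(retriever, "dentist")
```

`build_context` only depends on the `Retriever` protocol, so replacing the keyword search with an embedding-based store would not require changing the calling code.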

Designing the interaction layer and prompts

Interaction design shapes user experience and outcomes. Start with simple prompts that trigger clear, verifiable actions, then expand to multi-step reasoning with planning and verification. Use a modular prompt framework: task prompts, tool prompts (for searches, extractions, or actions), and governance prompts (to check safety rules). Build a prompt library with guardrails for bias, hallucinations, and privacy concerns. Test prompts against edge cases and measure reliability. Ai Agent Ops emphasizes keeping prompts human-friendly and debuggable so you can trace decisions when things go wrong.
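A modular prompt framework can start as a small library of named templates. This sketch uses the standard library's `string.Template`; the prompt wording and the `render` helper are illustrative assumptions, not recommended phrasing.

```python
from string import Template

# One template per prompt category named in the text above.
PROMPTS = {
    "task": Template("You are a personal assistant. Complete this task: $task"),
    "tool": Template("Call the tool '$tool' with input: $tool_input"),
    "governance": Template(
        "Before acting, confirm the action '$action' violates none of "
        "these rules:\n$rules"
    ),
}


def render(kind: str, **values: str) -> str:
    """Fill in a template; a missing placeholder raises KeyError."""
    return PROMPTS[kind].substitute(**values)


tool_prompt = render("tool", tool="search", tool_input="flights to Oslo")
governance_prompt = render(
    "governance",
    action="send_email",
    rules="- never share passwords\n- ask before contacting new recipients",
)
```

Using `substitute` rather than `safe_substitute` means a forgotten value fails loudly during testing instead of silently sending a half-filled prompt to the model.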

Implementing memory, context, and state management

A robust personal AI agent relies on memory to preserve context across conversations. Implement a memory layer with both short-term session memory and long-term context storage via a vector store. Use embeddings to retrieve relevant past interactions and status flags to track goals and progress. Enforce access controls to protect sensitive data. The governance layer should ensure that memory usage complies with privacy policies and retention schedules. This combination enables more natural interactions and more accurate decision-making over time.
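The split between short-term session memory and long-term retrieval can be illustrated with a toy example. It assumes a word-count "embedding" in place of a real embedding model and a plain list in place of a vector store such as FAISS; the `Memory` class is hypothetical.

```python
import math
from collections import Counter, deque


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real agent would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class Memory:
    def __init__(self, session_size: int = 5) -> None:
        # Short-term: only the most recent turns are kept.
        self.session: deque[str] = deque(maxlen=session_size)
        # Long-term: every item is stored as a (vector, text) pair.
        self.long_term: list[tuple[Counter, str]] = []

    def remember(self, text: str) -> None:
        self.session.append(text)
        self.long_term.append((embed(text), text))

    def recall(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(
            self.long_term, key=lambda item: cosine(q, item[0]), reverse=True
        )
        return [text for _, text in ranked[:k]]


memory = Memory(session_size=2)
memory.remember("my dentist appointment is on friday")
memory.remember("buy milk and eggs")
memory.remember("the project deadline is next month")
best = memory.recall("when is the dentist appointment")
```

The session buffer forgets the oldest turn once it is full, while the long-term list keeps everything and is searched by similarity. Retention limits and access controls would sit on top of `long_term` in a real system.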

Testing, evaluation, and iteration

Adopt a test-driven mindset: define scenarios that cover typical use cases, edge cases, and potential failure modes. Track quantitative metrics (latency, success rate, error rate) and qualitative signals (user satisfaction, perceived reliability). Run iterative sprints: implement one improvement, run tests, measure impact, and document results before moving forward. Ai Agent Ops stresses maintaining an audit trail of decisions and updates to prompts, tools, and policies. Regularly revisit governance rules as models evolve and your use cases expand.
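A scenario-based evaluation loop might look like the sketch below. The `Scenario` structure, the `evaluate` function, and the stand-in `echo_agent` are assumptions for illustration; a real harness would call the actual agent.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the reply is acceptable


def evaluate(agent: Callable[[str], str], scenarios: list[Scenario]) -> dict:
    """Run every scenario and collect success rate, latency, and failures."""
    passed, latencies, failures = 0, [], []
    for s in scenarios:
        start = time.perf_counter()
        try:
            ok = s.check(agent(s.prompt))
        except Exception:
            ok = False  # a crash counts as a failed scenario
        latencies.append(time.perf_counter() - start)
        if ok:
            passed += 1
        else:
            failures.append(s.name)
    total = len(scenarios)
    return {
        "success_rate": passed / total if total else 0.0,
        "mean_latency_s": sum(latencies) / total if total else 0.0,
        "failures": failures,
    }


def echo_agent(prompt: str) -> str:
    """Stand-in agent used only to exercise the harness."""
    if not prompt:
        raise ValueError("empty prompt")
    return prompt.upper()


report = evaluate(
    echo_agent,
    [
        Scenario("typical", "hello", lambda reply: reply == "HELLO"),
        Scenario("empty input", "", lambda reply: reply != ""),
    ],
)
```

Saving each `report` alongside the prompt and policy versions that produced it gives a simple audit trail and a baseline for regression checks.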

Deployment, monitoring, and governance

Deployment should be incremental: start with a controlled pilot, then broaden exposure with tight monitoring. Implement observability dashboards for latency, accuracy, data usage, and safety flags. Establish ongoing governance—data retention policies, consent management, and human-in-the-loop reviews for high-risk actions. Ensure you have rollback plans and versioned configurations so you can revert if issues arise. The Ai Agent Ops framework favors transparent monitoring and clear ownership for maintenance tasks.
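Versioned configurations with a rollback path can be prototyped in a few lines. This is a minimal in-memory sketch; the `ConfigHistory` class and the config keys are hypothetical, and a real deployment would persist versions to disk or version control.

```python
import copy


class ConfigHistory:
    """Keeps every configuration version so a bad change can be reverted."""

    def __init__(self, initial: dict) -> None:
        self._versions = [copy.deepcopy(initial)]

    @property
    def current(self) -> dict:
        # Return a copy so callers cannot mutate stored history.
        return copy.deepcopy(self._versions[-1])

    @property
    def version(self) -> int:
        return len(self._versions)

    def update(self, **changes) -> int:
        """Record a new version with the given changes applied."""
        new = self.current
        new.update(changes)
        self._versions.append(new)
        return self.version

    def rollback(self) -> int:
        """Discard the latest version and return to the previous one."""
        if len(self._versions) == 1:
            raise RuntimeError("already at the first version")
        self._versions.pop()
        return self.version


history = ConfigHistory({"model": "model-a", "max_steps": 5})
history.update(model="model-b")
after_update = history.current
history.rollback()
after_rollback = history.current
```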

Common pitfalls and how to avoid them

Be wary of scope creep, unsafe autonomy, and brittle memory management. Start with a tightly scoped MVP and steadily increase capabilities as you gain confidence. Keep governance artifacts up to date and avoid embedding sensitive data directly in prompts or logs. Regularly review prompts for bias and drift, and implement automated checks to catch anomalous behavior early.
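To keep sensitive data out of prompts and logs, one option is to redact text before it is stored or sent. The patterns below are deliberately simple assumptions: the `sk-` key prefix is a hypothetical format, and the email pattern will miss unusual addresses, so treat this as a starting point rather than complete coverage.

```python
import re

# Label -> pattern. Both patterns are illustrative, not exhaustive.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{8,}\b"),  # hypothetical key format
}


def redact(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


safe = redact("Mail bob@example.com using key sk-abcdef123456")
```

Calling `redact` at the single point where prompts and log lines are written is easier to audit than scattering checks across every tool.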

Tools & Materials

- Development machine with internet access (Modern CPU, 16-32 GB RAM preferred)

- Python 3.11+ (Install from official Python site; use virtual environments)

- Node.js 18+ (For tooling and pipelines; npm available)

- Code editor (e.g., VS Code) (Extensions for Python/JS debugging)

- API access to AI models (Keys for OpenAI or compatible providers)

- Vector store library (e.g., FAISS or Milvus) (Store and retrieve embeddings for memory)

- Test datasets and prompts (Curated tasks to validate behavior)

- Basic hosting or cloud compute (Optional for deployment and monitoring)

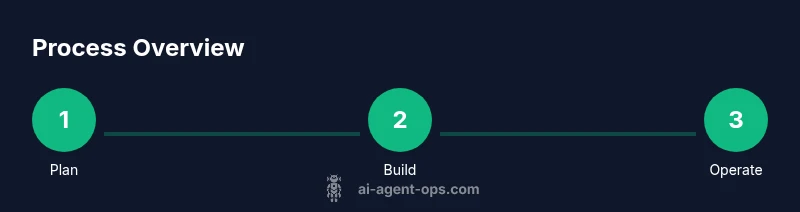

Steps

Estimated time: 6-12 hours

1. Define scope and success criteria

Clearly state the automation goals, the tasks the agent should perform, and the acceptance criteria to decide when the MVP is ready. Include measurable metrics like time saved, accuracy, and user satisfaction. Establish guardrails to prevent harmful actions.

Tip: Document inputs/outputs for every capability; this makes it testable and auditable.

2. Assemble your tech stack

Choose modular components: a controller/orchestrator, a language model, a memory layer, and a memory store for context. Ensure plug-and-play interfaces so you can swap models or stores later.

Tip: Prefer open, well-documented interfaces to maximize portability.

3. Implement the agent core and capabilities

Build the core loop that takes user prompts, routes tasks to models, and triggers actions. Add capabilities such as web search, data extraction, and task planning.

Tip: Keep capabilities small and testable; add one capability at a time.

4. Add memory, context, and state management

Integrate a memory layer to preserve context across interactions. Use a vector store for embeddings and a simple state machine to track goals and progress.

Tip: Index sensitive data cautiously and implement access controls.

5. Incorporate safety, governance, and privacy

Define policies for data handling, user consent, and action boundaries. Implement safety checks before executing potentially risky actions.

Tip: Implement a human-in-the-loop path for high-risk decisions.

6. Test, iterate, and document

Run real-world scenarios, collect metrics, and refine prompts and rules. Maintain a changelog and document decisions for future maintenance.

Tip: Automate regression tests and track performance over time.
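The simple state machine mentioned in the memory and state management step could be sketched like this. The state names and allowed transitions are assumptions chosen for illustration; adjust them to match your own workflow.

```python
from enum import Enum


class GoalState(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    AWAITING_APPROVAL = "awaiting_approval"
    DONE = "done"
    FAILED = "failed"


# Which states may follow each state. DONE and FAILED are terminal.
TRANSITIONS = {
    GoalState.PENDING: {GoalState.IN_PROGRESS},
    GoalState.IN_PROGRESS: {
        GoalState.AWAITING_APPROVAL,
        GoalState.DONE,
        GoalState.FAILED,
    },
    GoalState.AWAITING_APPROVAL: {GoalState.IN_PROGRESS, GoalState.FAILED},
    GoalState.DONE: set(),
    GoalState.FAILED: set(),
}


class Goal:
    def __init__(self, description: str) -> None:
        self.description = description
        self.state = GoalState.PENDING
        self.history = [GoalState.PENDING]

    def advance(self, new_state: GoalState) -> None:
        """Move to a new state, rejecting transitions the table does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"cannot go from {self.state.value} to {new_state.value}"
            )
        self.state = new_state
        self.history.append(new_state)


goal = Goal("book a dentist appointment")
goal.advance(GoalState.IN_PROGRESS)
goal.advance(GoalState.AWAITING_APPROVAL)  # high-risk step waits for a human
goal.advance(GoalState.IN_PROGRESS)
goal.advance(GoalState.DONE)
```

Keeping the transition table as data makes the human-in-the-loop path explicit: a goal cannot reach `DONE` from `AWAITING_APPROVAL` without first returning to `IN_PROGRESS`.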

Questions & Answers

What is a personal AI agent?

A personal AI agent is a software entity that automates tasks for a specific user, using AI models to interpret prompts, retrieve information, and take actions within defined boundaries. It combines planning, memory, and governance to operate with limited supervision.

A personal AI agent automates tasks using AI, with defined limits and memory to improve efficiency.

Do I need advanced hardware to build one?

Not necessarily. You can prototype on a mid-range machine with cloud-backed compute for heavier tasks. Key needs are adequate RAM, fast storage, and reliable network access to connect to AI services.

A mid-range computer is fine for prototypes; you can scale with cloud resources as needed.

What are common mistakes when building an AI agent?

Overly broad scopes, missing safety checks, and poor memory management lead to brittle agents. Start with a small MVP, add guardrails, and monitor prompts for bias and drift.

Common mistakes include scope creep and skipping safety checks; start small and test thoroughly.

How do I ensure safety and governance?

Define clear policies for data handling, consent, and action boundaries. Use human-in-the-loop reviews for high-risk tasks and maintain an audit trail.

Safety and governance come from clear policies and human oversight for risky actions.

Can I build without coding?

Yes, to an extent. No-code tools can help wire prompts and workflows, but deeper customization often requires some coding steps, especially for memory and orchestration.

You can start with no-code tools, but more advanced features usually need coding.

What about ongoing maintenance costs?

Ongoing costs include compute for model calls, data storage, and monitoring. Plan for cloud usage, model updates, and security audits as part of the lifecycle.

There are ongoing costs for compute and data, plus updates and audits.

Key Takeaways

- Define a concrete mission and limits.

- Build modular components for adaptability.

- Prioritize safety and governance from day one.

- Test iteratively and monitor results.

- Document decisions for long-term maintenance.