Can You Build AI Agents with Replit? A Practical How-To

Learn how to create AI agents on Replit with a step-by-step guide. It covers setup, loop design, API connections, testing, and best practices for safe, scalable agentic workflows.

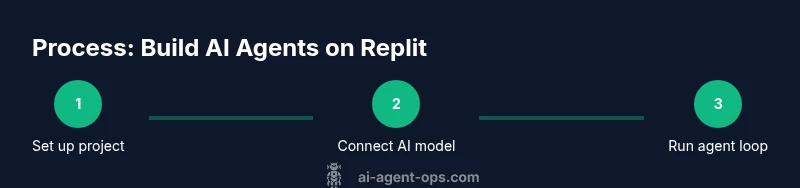

Yes, you can build AI agents with Replit. This quick answer shows how to set up a project, connect an AI model, and run a basic agent loop. You’ll need a Replit account, a Python runtime, and access to an AI API or a local model. Follow the step-by-step approach to prototype agentic workflows quickly.

Why Replit is a Practical Playground for AI Agents

According to Ai Agent Ops, Replit provides a low-friction, collaborative workspace where developers can prototype AI agent workflows without heavy setup. The Ai Agent Ops team found that starting from a ready-made Python container helps teams validate ideas quickly, iterate on prompts, and test simple decision loops inside a familiar IDE. For teams exploring agentic AI workflows, Replit acts as a cost-efficient sandbox for trying out capabilities like planning, tool use, and observation in real time. Because the environment runs in the browser, you can bootstrap a minimal viable agent and turn ideas into testable experiments without installing anything locally. By starting small, you can scale later with more sophisticated orchestration and memory mechanisms, while staying within a safe, observable development loop.

Prerequisites and Setup

Before you start, make sure you have a Replit account and a basic Python environment. You’ll also need access to an AI model or API (for example, a general-purpose language model) and a way to manage secrets securely. In this section we cover choosing a model, setting up the workspace, and keeping credentials safe. A minimal setup gets you a running agent quickly, while a more structured approach helps you track prompts, responses, and tool outputs over time. This initial phase lays the foundation for a robust agentic workflow that can scale as needs grow.
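As a minimal sketch of the credential step, the snippet below reads an API key from the environment, which is where Replit Secrets are exposed to a running program. The secret name `AI_API_KEY` and the helper names `load_api_key` and `mask` are assumptions chosen for illustration, not names Replit or any provider requires.

```python
import os


def load_api_key(name: str = "AI_API_KEY") -> str:
    """Read an API key from the environment and fail loudly if it is missing.

    The default secret name is hypothetical; use whatever name you chose
    when you stored the key.
    """
    key = os.environ.get(name, "").strip()
    if not key:
        raise RuntimeError(
            f"Secret {name!r} is not set. Add it to your secrets before running."
        )
    return key


def mask(key: str) -> str:
    """Return a log-safe version of the key showing only the last 4 characters."""
    return "*" * max(len(key) - 4, 0) + key[-4:]
```

Failing at startup with a clear message is usually easier to debug than a provider error several calls later, and logging `mask(key)` instead of the key itself keeps credentials out of console output.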

Building a Simple AI Agent Loop

The core of an AI agent is a loop that takes user input, plans a response, executes actions, and observes outcomes. Here we outline a straightforward agent loop you can implement in a Replit Python project. The loop reads input, calls an AI model for planning, performs a basic action (like a search or API call), updates an in-memory store, and returns a result. A few lines of code are enough to orchestrate the cycle: prompt -> plan -> act -> observe -> reflect. The emphasis is on clarity, reusability, and testability, so the result is a concrete blueprint you can start from.

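```python
# A simple agent loop. EchoModel and EchoTool are stand-in stubs so the
# loop runs as written; replace them with your model client and a real tool.

class EchoModel:
    def generate(self, prompt):
        return prompt  # a real model would return a plan here

class EchoTool:
    def execute(self, plan):
        return "Executed: " + plan  # a real tool would search or call an API

model = EchoModel()
tool = EchoTool()
memory = []

def think(prompt):
    # Call your AI model for planning
    return model.generate("Plan: " + prompt)

def act(plan):
    # Perform a simple tool action based on the plan
    return tool.execute(plan)

while True:
    user_input = input("User: ")
    if user_input.strip().lower() in {"quit", "exit"}:
        break  # let the user end the session
    plan = think(user_input)
    result = act(plan)
    memory.append({"input": user_input, "plan": plan, "result": result})
    print("Agent:", result)
```

Connecting to AI Models and APIs in Replit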

To power your AI agent, you’ll connect to an AI model via API or run a local model if your Replit plan allows. This section walks through choosing an API provider, securing API keys with Replit Secrets, and wiring the client library into your project. You’ll also learn about handling rate limits, retries, and error states to keep your agent reliable. The focus is on practical integration steps that work across common providers, so you can prototype without getting bogged down in vendor-specific details. A clean integration that yields predictable outputs makes everything that follows easier to test.
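The retry handling mentioned above could look roughly like the sketch below. It is provider-agnostic by design: `TransientAPIError` is a hypothetical exception standing in for whatever rate-limit or timeout error your client library raises, and `call_with_retries` is an illustrative helper name, not part of any SDK.

```python
import random
import time


class TransientAPIError(Exception):
    """Hypothetical error type standing in for a rate limit or timeout."""


def call_with_retries(call, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Run `call()` and retry transient failures with exponential backoff.

    `call` is any zero-argument function that contacts your provider.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except TransientAPIError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Delays grow 1x, 2x, 4x, ... of base_delay, plus a little jitter
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 10))
```

In practice you would catch your provider's own exception inside `call` and re-raise it as `TransientAPIError` (or change the `except` clause to name it directly). Passing `sleep` as a parameter keeps the helper easy to test without real waiting.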

Memory, Tools, and Decision Making in Agents

A robust AI agent needs a simple memory layer, a set of tools or actions, and a decision policy. In this section we discuss memory strategies (short-term context, short prompts, and a lightweight log), how to model tools (web search, database access, or third-party APIs), and how to structure a decision loop so the agent can choose actions with minimal latency. You’ll see how to keep prompts thin enough for fast responses while preserving sufficient context for coherent behavior. Ai Agent Ops emphasizes a pragmatic approach: start small with a single tool and expand as patterns emerge. This keeps the project manageable while you iterate toward more complex capabilities.
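One possible shape for these three pieces is sketched below: a bounded short-term memory, a single predictable tool, and a toy decision policy. All names here (`remember`, `build_context`, `word_count`, `TOOLS`, `decide`) are illustrative assumptions, and the keyword-matching policy is a placeholder for whatever selection logic your agent ends up needing.

```python
from collections import deque

# Short-term memory: keep only the most recent turns so prompts stay thin
memory = deque(maxlen=5)


def remember(user_input, result):
    """Store one turn; the deque silently drops the oldest when full."""
    memory.append({"input": user_input, "result": result})


def build_context(last_n=3):
    """Render the last few turns as compact text to prepend to a prompt."""
    recent = list(memory)[-last_n:]
    return "\n".join(f"User: {t['input']}\nAgent: {t['result']}" for t in recent)


def word_count(text):
    """A deliberately predictable example tool."""
    return f"{len(text.split())} words"


# Tool registry: start with one tool and add entries as patterns emerge
TOOLS = {"word_count": word_count}


def decide(plan):
    """Toy decision policy: use the first tool whose name appears in the plan."""
    for name, tool in TOOLS.items():
        if name in plan:
            return tool
    return None
```

Using `deque(maxlen=...)` caps memory growth without any pruning code, and keeping tools in a dictionary means adding a second tool is a one-line change rather than a new branch in the loop.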

Testing and Iteration on Replit

Testing is essential to catch errors early and understand how prompts influence outcomes. In this section, you’ll learn to build a lightweight test harness, log prompts and results, and create small experiments to compare different prompts or tool choices. We cover how to isolate variables, measure qualitative improvements, and document discoveries in your Replit workspace. The goal is to establish a repeatable workflow for experiments so you can accelerate learning without sacrificing reliability. The guidance here helps you iterate quickly while keeping the scope under your control.
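A lightweight harness along these lines might be sketched as follows. It runs each prompt template against each test input and appends one JSON record per run to a log file. `fake_model` is a stand-in so the harness works offline, and the file name `experiments.jsonl` and the record fields are assumptions for illustration.

```python
import json
import time


def fake_model(prompt):
    """Stand-in for a real model call so the harness runs offline."""
    return prompt.upper()


def run_experiment(templates, inputs, model=fake_model, log_path="experiments.jsonl"):
    """Run every template against every input and append results to a JSONL log."""
    records = []
    with open(log_path, "a", encoding="utf-8") as log:
        for name, template in templates.items():
            for text in inputs:
                prompt = template.format(input=text)
                record = {
                    "template": name,
                    "input": text,
                    "prompt": prompt,
                    "output": model(prompt),
                    "timestamp": time.time(),
                }
                log.write(json.dumps(record) + "\n")
                records.append(record)
    return records
```

To compare two phrasings, pass something like `{"terse": "Plan: {input}", "verbose": "Think step by step, then plan: {input}"}` as `templates` and keep `inputs` fixed, so the template is the only variable that changes between runs.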

Security, Privacy, and Safety Considerations

When deploying AI agents in a browser-based environment like Replit, privacy and security become central concerns. This part covers securing API keys with secret storage, avoiding sensitive data leakage in prompts, and implementing basic safety checks to minimize unsafe outputs. You’ll also learn about rate limits, access controls, and logging practices that support audits and debugging. The aim is to strike a balance between rapid experimentation and responsible usage, so your agent stays safe and compliant as you scale.
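To make the prompt-leakage and safety-check ideas concrete, here is a small sketch that masks sensitive-looking strings before text is sent or logged, and rejects outputs containing blocked terms. The regular expressions, the assumption that keys start with `sk-`, and the blocklist contents are all illustrative; a real deployment needs rules tuned to its own data.

```python
import re

# Illustrative patterns only; real deployments need rules tuned to their data
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
API_KEY = re.compile(r"\bsk-[A-Za-z0-9_-]{8,}")  # assumes keys start with "sk-"

BLOCKED_TERMS = {"password", "ssn"}  # hypothetical blocklist


def redact(text):
    """Mask emails and key-like strings before text is sent or logged."""
    text = EMAIL.sub("[EMAIL]", text)
    return API_KEY.sub("[API_KEY]", text)


def is_safe_output(text):
    """Basic output check: reject replies that mention blocked terms."""
    lowered = text.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)
```

Calling `redact` on user input before it reaches the prompt, and again on anything written to logs, covers the two most common leak paths. Pattern matching like this is a first line of defense, not a guarantee.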

Roadmap: From Prototype to Production

After you validate a basic agent, the next steps involve adding more sophisticated planning, better memory, and robust error handling. This roadmap outlines a staged path: strengthen the loop with modular components, introduce tool orchestration, and prepare for deployment beyond a single Replit workspace. You’ll also explore collaboration features, version control, and how to transition from a personal prototype to a team project that scales across environments. The journey from prototype to production is iterative and incremental, with frequent checkpoints to reassess goals and risks.
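One way to read "modular components" is to split the loop into swappable parts, as in the sketch below. The `Agent` class, its field names, and the convention that a plan looks like `tool_name: argument` are assumptions made for this example, not a prescribed design.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Agent loop split into swappable parts: a planner, tools, and memory."""

    planner: Callable[[str], str]            # e.g. a wrapper around your model client
    tools: Dict[str, Callable[[str], str]]   # tool name -> function
    memory: List[dict] = field(default_factory=list)

    def step(self, user_input: str) -> str:
        """Run one plan -> act -> observe cycle and record it."""
        plan = self.planner(user_input)
        # Convention assumed here: the plan looks like "tool_name: argument"
        name, _, argument = plan.partition(":")
        tool = self.tools.get(name.strip())
        try:
            result = tool(argument.strip()) if tool else f"No tool for plan: {plan}"
        except Exception as exc:  # keep the loop alive on tool failures
            result = f"Tool {name.strip()!r} failed: {exc}"
        self.memory.append({"input": user_input, "plan": plan, "result": result})
        return result
```

Because the planner and tools are passed in, you can test the loop with plain functions, for example `Agent(planner=lambda text: f"echo: {text}", tools={"echo": str.upper})`, and swap in a real model client later without touching `step`.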

Tools & Materials

- Replit account (Create a new Python project in the Replit IDE)

- AI model/API access (OpenAI, HuggingFace, or a local model depending on your needs)

- Secrets/keys management (Store API keys in Replit Secrets for security)

- Python runtime (Ensure Python 3.x is selected in your Replit environment)

- Testing harness (Optional but recommended for repeatable experiments)

Steps

Estimated time: 60-120 minutes

Step 1: Create a Replit Python project

Set up a new Python workspace, name it after your agent, and initialize a minimal file structure. This step establishes the sandbox where your agent will live.

Tip: Use a clear project name and keep dependencies trimmed to avoid bloat.

Step 2: Add API access and secrets

Add your AI model API key to Replit Secrets and install the client library. This keeps credentials secure and makes the agent repeatable across runs.

Tip: Never hard-code keys in source files; use environment variables instead.

Step 3: Implement a simple prompt loop

Create a loop that takes user input, sends it to the AI model for planning, and prints the model's response. Keep the initial version minimal and readable.

Tip: Comment your prompts to track what the model is asked to do.

Step 4: Add a basic memory store

Store recent interactions in memory to maintain context across turns. This helps the agent refer back to prior prompts and results.

Tip: Limit memory size to prevent unbounded growth; rotate or prune oldest items.

Step 5: Introduce a simple tool

Integrate a basic action (like a web search or API call) that the agent can execute in response to a plan.

Tip: Choose a tool with predictable outputs to keep the loop reliable.

Step 6: Test the loop with varied prompts

Run multiple prompts to observe behavior, refine prompts, and adjust tool handling based on results.

Tip: Log outcomes to compare how different prompts affect results.

Step 7: Add error handling

Gracefully manage API errors, timeouts, and invalid responses to improve robustness.

Tip: Implement retries with backoff and clear fallback messages.

Step 8: Document and prepare for scaling

Capture decisions, trade-offs, and next steps in a simple doc. Plan how to move from a single workspace to a multi-agent setup.

Tip: Keep a running changelog to track improvements and failures.

Questions & Answers

Can I run AI agents entirely for free on Replit?

Free tiers can support basic experiments, but API usage and higher compute generally require paid plans. Evaluate costs based on usage and model needs.

You can start for free, but expect to upgrade as you scale API usage and compute needs.

What models or APIs work best with Replit for agents?

Any widely supported API or local model can work in Replit. Start with a general-purpose language model and expand to specialized tools as you validate workflows.

Start with a general model and add tools as you test requirements.

Do I need AI ethics and safety considerations upfront?

Yes. Plan for data privacy, prompt safety, and responsible use from the start. Use guardrails and logging to monitor outputs.

Yes. Build safety and privacy into your workflow from day one.

How do I test prompts and tools effectively?

Create a small suite of prompts and compare outcomes with consistent memory and tool configurations. Use logs to track results and iterate.

Test with a small prompt set and compare results using logs.

What are the next steps after a successful prototype?

Refactor into modular components, add error handling, and plan deployment strategies beyond a single workspace.

Refactor for modularity and plan deployment beyond one workspace.

Key Takeaways

- Identify a minimal viable agent loop

- Securely connect AI models via APIs

- Iterate with prompts and tools in a controlled sandbox

- Prioritize memory management and safe handling

- Plan a practical roadmap toward production