AI agent vs code: A comprehensive comparison of agentic AI and coding workflows

An analytical comparison of AI agents versus traditional coding workflows, offering practical guidance on when to use agentic AI and when to rely on code.

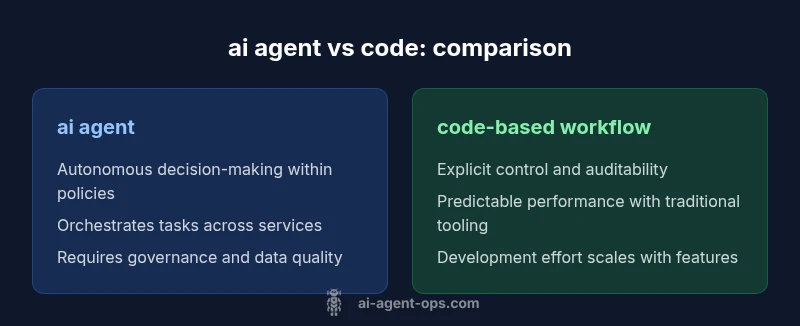

AI agent vs code: This comparison evaluates when AI agents outperform traditional coding workflows and when human-written code remains essential. It covers decision-making speed, task orchestration, and real-world use cases for developers, product teams, and leaders. According to Ai Agent Ops, agentic AI excels at automation and delegation, but skilled software engineering remains crucial for architecture and reliability.

Why AI agent vs code matters

In modern software teams, the choice between AI agents and traditional codebases shapes velocity, risk, and the ability to scale. The phrase "AI agent vs code" captures a core tension: you can delegate a wide range of tasks to autonomous agents, or you can codify every step yourself. According to Ai Agent Ops, the rise of agentic AI introduces new patterns for automation and decision making that complement rather than replace skilled software engineering. This balance matters for developers, product teams, and business leaders who want faster automation without giving up governance and quality.

Core differentiators: automation scope, decision-making, and error handling

The most important differentiators between AI-agent and code-based workflows fall along three axes: automation scope, decision making, and error handling. Agents can orchestrate across services with minimal manual scaffolding, enabling faster iteration on complex processes. They operate within defined policies but may require guardrails to prevent cascading failures. Traditional code is bounded by explicit workflows and explicit control, and it relies on tests and observability to manage reliability. When comparing the two, consider how much autonomy you grant to the system, how you enforce safety and compliance, and how you measure outcomes. The Ai Agent Ops team notes that integration complexity can shift the balance, particularly when data sources are diverse or security requirements are strict.

When AI agents shine: examples across domains

Agentic AI can accelerate workflows across software development, data engineering, and operations. Example domains include incident response automation, where an agent can triage alerts and propose remediation steps; customer support orchestration, where agents route tickets and craft replies; and business process automation, where agents coordinate data extraction, transformation, and routing across systems. Each scenario benefits from asynchronous decision making and cross-service coordination, reducing manual handoffs. However, success depends on clear policy definitions, robust observability, and fallback plans that preserve control in edge cases. Ai Agent Ops emphasizes careful scoping to prevent overreach by agents in sensitive domains.

When to code: scenarios requiring explicit control and auditability

Traditional coding remains essential when requirements demand explicit traceability, deterministic outcomes, and deep architectural control. Regulated environments, high-safety domains, and systems with long-lived ownership often benefit from explicit code under strict governance. Code-based pipelines also simplify compliance reporting and auditing, making it easier to locate a failing step and roll back with confidence. As a planning exercise, map the activities that must be verifiably reproducible and auditable, and keep those in code to support governance, compliance, and external validation.

Architecture patterns: agent orchestration vs code modules

Architectures that mix agentic AI with code commonly feature two motifs: agent orchestration layers that coordinate services via intents and policies, and modular code components that implement stable interfaces with clear boundaries. The orchestration layer can adapt to changing inputs and policy updates, while code modules provide predictable, testable behavior for critical paths. Consider adopting a layered approach: encapsulate agentic decisions behind policy hooks and observability dashboards, ensuring that the most sensitive parts of the system remain codified and auditable. This pattern reduces risk while preserving the speed of agent-based automation. Ai Agent Ops underscores the importance of clear ownership and well-defined SLAs between agents and conventional software components.
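As a rough illustration of the layered approach described above, the following Python sketch routes each action through a policy hook before it reaches either an agent or a codified module. All names here (`Action`, `policy_hook`, `dispatch`, the `sensitive` flag) are hypothetical and introduced only for this example; the agent handler is a placeholder where a real agent framework would be called.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Action:
    name: str        # hypothetical action identifier, e.g. "issue_refund"
    sensitive: bool  # True if the action touches a critical, auditable path


def policy_hook(action: Action) -> str:
    """Return the route for an action: sensitive work stays in code."""
    return "code" if action.sensitive else "agent"


def agent_handler(action: Action) -> str:
    # Placeholder: a real system would invoke an agent here.
    return f"agent handled {action.name}"


def code_handler(action: Action) -> str:
    # Deterministic, testable module for critical paths.
    return f"code handled {action.name}"


HANDLERS: Dict[str, Callable[[Action], str]] = {
    "agent": agent_handler,
    "code": code_handler,
}


def dispatch(action: Action) -> str:
    """Apply the policy hook, then call the matching handler."""
    return HANDLERS[policy_hook(action)](action)


print(dispatch(Action("summarize_ticket", sensitive=False)))
print(dispatch(Action("issue_refund", sensitive=True)))
```

Keeping the routing decision in one small function means a policy change is a reviewable code change, while the agent side stays free to evolve behind it.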

Data, safety, and governance considerations

Data quality, privacy, and governance are central to any agent-versus-code decision. Agentic workflows rely on data from multiple sources, which raises concerns about data leakage, bias, and reliability. Establish guardrails, access controls, and immutable audit logs for agent actions. Apply safety nets such as human-in-the-loop verification for high-stakes decisions, and implement rollback mechanisms for failed orchestrations. Organizations should align on a governance framework that defines acceptable use cases, risk thresholds, and escalation paths. Ai Agent Ops advocates starting with a policy-first approach to minimize risk as you scale agent-based automation.
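One way to picture the audit-log and human-in-the-loop ideas is the sketch below. It is an assumption-laden illustration, not a production design: the `AuditLog` class, the `execute` function, the `risk` score, and the `0.7` threshold are all hypothetical. The log is hash-chained, which makes tampering detectable rather than making the data truly immutable; real immutability would need write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # hash value used before the first entry


class AuditLog:
    """Append-only, hash-chained log of agent actions (tamper-evident)."""

    def __init__(self):
        self._entries = []

    def append(self, actor, action, approved_by=None):
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        record = {"actor": actor, "action": action,
                  "approved_by": approved_by, "prev": prev}
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self._entries.append(record)
        return record

    def verify(self):
        """Recompute every hash; False if any entry was altered."""
        prev = GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            digest = hashlib.sha256(payload).hexdigest()
            if entry["prev"] != prev or digest != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def execute(log, action, risk, approver=None, threshold=0.7):
    """Run low-risk actions directly; escalate high-risk ones to a human."""
    if risk >= threshold and approver is None:
        return "escalated"
    log.append("agent", action, approved_by=approver)
    return "executed"


log = AuditLog()
print(execute(log, "close_ticket", risk=0.1))
print(execute(log, "delete_records", risk=0.9))
print(execute(log, "delete_records", risk=0.9, approver="alice"))
print(log.verify())
```

How the risk score is produced is left open here; in practice it would come from the governance framework's risk thresholds.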

Economic and organizational implications

Shifting from code-centric pipelines to agent-centric orchestration changes team structures and skill requirements. Teams may need AI literacy alongside traditional software engineering, DevOps, and security practices. Economic benefits arise from faster iteration, reduced manual coding for repetitive tasks, and improved cross-team collaboration. However, governance overhead and monitoring costs grow with automation. Organizations should model the total cost of ownership, capturing not only compute and data costs but also the costs of training, governance, and ongoing oversight.
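The total-cost-of-ownership idea can be modeled with simple arithmetic. The sketch below is only an illustration: the cost categories follow the paragraph above, but the `AnnualCosts` class, the `net_benefit` function, and every figure are made-up placeholders rather than data from any real project.

```python
from dataclasses import dataclass


@dataclass
class AnnualCosts:
    compute: float
    data: float
    training: float
    governance: float
    oversight: float

    def total(self) -> float:
        """Sum all cost categories for one year."""
        return (self.compute + self.data + self.training
                + self.governance + self.oversight)


def net_benefit(costs: AnnualCosts, hours_saved: float, hourly_rate: float) -> float:
    """Value of engineering time saved minus total cost of ownership."""
    return hours_saved * hourly_rate - costs.total()


# Illustrative numbers only.
costs = AnnualCosts(compute=10_000, data=5_000, training=8_000,
                    governance=12_000, oversight=15_000)
print(costs.total())
print(net_benefit(costs, hours_saved=1_000, hourly_rate=60))
```

Even a crude model like this makes the governance and oversight lines visible, which are the costs most easily left out of an automation business case.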

Practical implementation patterns and steps

Begin with a small, bounded pilot that targets a single end-to-end workflow. Define clear success criteria, data boundaries, and governance policies. Build a lightweight agent that handles well-defined decisions, then layer in human-in-the-loop oversight. Create a feedback loop that captures outcomes, errors, and edge cases to continuously improve the policy and agent behavior. In parallel, maintain a traditional code path for critical resilience, with well-documented handoffs between agentic components and code modules. The goal is a pragmatic blend that accelerates delivery while preserving reliability and traceability.
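The pilot pattern above, an agent with a traditional code path kept alongside it and a feedback loop recording what happened, might look roughly like this. The function `run_with_fallback` and its parameters are hypothetical names for illustration; `agent`, `fallback`, and `is_valid` stand in for whatever agent call, deterministic routine, and output check a team actually uses.

```python
from typing import Any, Callable, Dict, List


def run_with_fallback(
    task: str,
    agent: Callable[[str], Any],
    fallback: Callable[[str], Any],
    is_valid: Callable[[Any], bool],
    feedback: List[Dict[str, Any]],
) -> Any:
    """Try the agent first; on error or invalid output, use the code path.

    Every attempt is appended to `feedback` so outcomes, errors, and
    edge cases can be reviewed and fed back into the policy.
    """
    try:
        result = agent(task)
        if is_valid(result):
            feedback.append({"task": task, "path": "agent", "error": None})
            return result
        error = "invalid agent output"
    except Exception as exc:  # any agent failure triggers the fallback
        error = repr(exc)
    feedback.append({"task": task, "path": "code", "error": error})
    return fallback(task)


feedback_log: List[Dict[str, Any]] = []

# Stand-in agent that works, and one that always fails.
ok = run_with_fallback("a", lambda t: t.upper(), lambda t: "safe",
                       lambda r: r.isupper(), feedback_log)
failed = run_with_fallback("b", lambda t: 1 / 0, lambda t: "safe",
                           lambda r: True, feedback_log)
print(ok, failed, [entry["path"] for entry in feedback_log])
```

The share of entries with `path == "code"` gives a simple pilot metric: how often the agent could not be trusted with the task.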

Authority sources and further reading

For rigorous guidance on AI use and governance, consult external policies and frameworks:

- https://www.nist.gov/topics/ai

- https://www.acm.org/

- https://www.whitehouse.gov/ostp/ai/

Comparison

| Feature | AI agent | Code-based workflow |
|---|---|---|
| Automation scope | Broad, autonomous task orchestration across services | Narrow, script-driven automation with explicit steps |
| Decision-making | Agentic AI makes autonomous decisions within defined policies | Human-in-the-loop decisions via explicit code paths |
| Traceability | Logs/prompts and decision trails for agent actions | Code versioning, tests, and observable pipelines |
| Best for | Dynamic, multi-source workflows needing speed | Explicit control in regulated or critical systems |
| Skill shift | Cross-disciplinary AI literacy and DevOps | Traditional software engineering with strong testing and reliability focus |

Positives

- Faster iteration and task orchestration

- Reduced manual coding for repetitive tasks

- Improved alignment between engineering and business goals

- Scalability through automated decision-making

- Potential for reusable agent patterns

Negatives

- Increased governance and safety overhead

- Steeper learning curve for teams

- Potential vendor lock-in and data dependencies

- Bias and reliability risks if not properly monitored

Agentic AI often outperforms pure coding for orchestration and rapid iteration, but traditional coding remains essential for architecture, governance, and reliability.

Use agentic AI to handle dynamic workflows and decision-making under clear policies. Retain skilled software engineers to design architecture, ensure safety, and audit outcomes.

Questions & Answers

What is an AI agent?

An AI agent is a software entity that can perceive, decide, and act in an automated way, often coordinating tasks across multiple systems. In practice, AI agents operate under policies or goals and can be integrated with existing code to augment or automate workflows.

An AI agent is a form of smart automation that can decide and act across systems, guided by rules.

Can AI agents replace developers?

In most scenarios, AI agents complement human developers by automating repetitive or orchestration tasks. Core architectural decisions, system safety, and nuanced problem-solving still require human expertise and code-level control.

They complement, not replace, developers in most cases.

What are the risks of using AI agents in production?

Risks include governance gaps, data leakage, unexpected agent actions, and bias. Address these with clear policies, robust monitoring, human-in-the-loop for critical decisions, and strong auditing.

Be aware of governance and safety risks; monitor and verify agent actions.

How do you evaluate AI agents vs code in a project?

Evaluate based on criteria such as automation scope, speed of delivery, governance requirements, risk tolerance, and the need for traceability. Run pilots that compare end-to-end outcomes and keep a traditional code path for critical paths.

Run pilots comparing outcomes and keep a solid code path for safety.
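One simple way to make such an evaluation explicit is a weighted decision matrix. The sketch below uses the criteria named in the answer above; the weights, the 1 to 5 fit scores, and the names `CRITERIA_WEIGHTS` and `weighted_score` are hypothetical placeholders that each team would replace with its own judgments and pilot results.

```python
# Hypothetical weights (percent) for the criteria listed above; they sum to 100.
CRITERIA_WEIGHTS = {
    "automation_scope": 25,
    "delivery_speed": 25,
    "governance": 20,
    "risk_tolerance": 15,
    "traceability": 15,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Sum of weight * score over all criteria (scores on a 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Illustrative fit scores for one imagined project.
agent_fit = {"automation_scope": 5, "delivery_speed": 4, "governance": 2,
             "risk_tolerance": 2, "traceability": 3}
code_fit = {"automation_scope": 2, "delivery_speed": 2, "governance": 5,
            "risk_tolerance": 4, "traceability": 5}

print("agent:", weighted_score(agent_fit))
print("code:", weighted_score(code_fit))
```

Close totals, as in this made-up case, are a hint that a hybrid split (agent for orchestration, code for critical paths) deserves a pilot rather than an either-or decision.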

What skills should teams focus on?

Teams should develop AI literacy, data governance, and orchestration patterns, alongside core software engineering, DevOps, and security skills to manage both AI agents and codebases.

Learn AI basics and governance, plus strong engineering and security practices.

What governance considerations matter most?

Define acceptable use cases, risk thresholds, escalation procedures, and auditing requirements up front. Regular reviews and policy updates help align automation with business goals.

Set up policy-based governance and regular reviews.

Key Takeaways

- Define the boundary between agentic automation and explicit code

- Start with controlled pilots to measure value and governance needs

- Invest in data quality and governance when adopting AI agents

- Prepare teams for an AI literacy shift alongside DevOps

- Choose agentic AI for fast, adaptable workflows; code for stability and auditability