AI Agent Automation: A Practical Guide for Building Smarter Workflows

Learn how to design, implement, and scale AI agent automation with practical steps, governance, and real-world examples for developers and leaders.

By the end of this guide, you will be able to design and implement AI agent automation in your workflows. You’ll learn key concepts, required tools, and a practical, step-by-step approach to deploy agentic AI that collaborates with human teams while ensuring safety and governance. Get ready to plan, prototype, and scale.

Why AI Agent Automation matters

AI agent automation is reshaping how organizations operationalize intelligence at scale. When you design intelligent agents to perform repeatable tasks, reason about data, and coordinate with humans, you free up teams to focus on strategic work. According to Ai Agent Ops, the core value lies in reducing manual handoffs, accelerating decision cycles, and enabling adaptive workflows that learn from experience. This section clarifies why agentic automation is not a single tool but an architectural pattern that blends AI models, data pipelines, and governance. Expect improved throughput, consistent execution, and better alignment between business goals and day-to-day operations. As you read, consider where your team spends the most time on routine decisions, data extraction, or multi-step approvals—and how agents could take ownership of those steps with guardrails in place.

Key takeaway: AI agent automation enables scalable decision-making by delegating routinized reasoning to intelligent agents while humans supervise exceptions.

Core concepts and definitions

Before diving in, it helps to align on common terms. An AI agent is a software construct that can observe, decide, and act within a defined domain. Agentic AI refers to systems in which one or more agents pursue goals with some autonomy, often coordinating with each other. A control loop is the feedback mechanism that keeps agents aligned with intended outcomes. In practice, you’ll combine prompt templates, knowledge bases, tool integrations (APIs, databases, and apps), and orchestration layers to build end-to-end workflows. The focus is not only on automation but also on governance, safety, and explainability, so that teams trust the outcomes. This section introduces the vocabulary you’ll see throughout the guide and ties each concept back to real-world examples like triaging support tickets or orchestrating data transformations across services.
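To make the observe, decide, and act vocabulary concrete, here is a minimal sketch of a ticket-triage agent. Everything in it is an assumption for illustration: the `Ticket` and `TriageAgent` names, the queue names, and the keyword rules, which stand in for a real model call.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    """Hypothetical support ticket; the fields are illustrative."""
    ticket_id: str
    text: str


class TriageAgent:
    """Toy agent: observes a ticket, decides on a queue, acts by routing it."""

    # Assumption: keyword rules stand in for a model call in this sketch.
    RULES = {"refund": "billing", "invoice": "billing", "crash": "engineering"}

    def __init__(self) -> None:
        self.routed = []  # record of (ticket_id, queue) actions taken

    def observe(self, ticket: Ticket) -> str:
        # Normalize the input the agent will reason over.
        return ticket.text.lower()

    def decide(self, observation: str) -> str:
        # Return the first matching queue, or hand off to a human.
        for keyword, queue in self.RULES.items():
            if keyword in observation:
                return queue
        return "human_review"

    def act(self, ticket: Ticket, queue: str) -> None:
        # Acting here just records the routing decision.
        self.routed.append((ticket.ticket_id, queue))

    def run(self, ticket: Ticket) -> str:
        queue = self.decide(self.observe(ticket))
        self.act(ticket, queue)
        return queue
```

Calling `TriageAgent().run(Ticket("T1", "App crash on login"))` routes the ticket to the `engineering` queue, while a ticket that matches no rule falls through to `human_review`, which is the simplest form of human oversight.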

Takeaway: A strong vocabulary helps design robust, auditable agentic workflows rather than patching together disparate scripts.

Architecting agentic workflows

Architecting agentic workflows requires a clear picture of roles, data flows, and decision points. Start with a high-level diagram that shows which tasks are delegated to agents, which decisions require human oversight, and how data moves between systems. You’ll set up a layered architecture: data layer (sources and sinks), reasoning layer (prompts, agents, and orchestration logic), and governance layer (policy, audit logs, and access control). Emphasize portability by designing modular agents that can be swapped or upgraded without rewriting entire pipelines. Practical patterns include orchestration-first design, event-driven triggers, and a feedback-driven loop that tunes agents based on outcomes and user feedback. This approach makes AI agent automation resilient to changing requirements.
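One way to picture the three layers and the swappable-agent idea is the sketch below. It assumes a hypothetical `Agent` interface and `Pipeline` class; the two toy agents only transform text and are placeholders for real reasoning components.

```python
from typing import Callable, Protocol


class Agent(Protocol):
    """Hypothetical interface every agent in the reasoning layer implements."""
    name: str

    def handle(self, record: dict) -> dict: ...


class UppercaseAgent:
    name = "uppercase-v1"

    def handle(self, record: dict) -> dict:
        return {**record, "text": record["text"].upper()}


class TitleCaseAgent:
    name = "titlecase-v2"

    def handle(self, record: dict) -> dict:
        return {**record, "text": record["text"].title()}


class Pipeline:
    """Wires the three layers together without knowing which agent is used."""

    def __init__(self, source: Callable[[], list], agent: Agent) -> None:
        self.source = source   # data layer: where records come from
        self.agent = agent     # reasoning layer: replaceable component
        self.audit_log = []    # governance layer: what ran on what

    def run(self) -> list:
        results = []
        for record in self.source():
            output = self.agent.handle(record)
            self.audit_log.append(
                {"agent": self.agent.name, "input": record, "output": output}
            )
            results.append(output)
        return results
```

Because the pipeline depends only on the `handle` method, assigning a different agent to `pipeline.agent` upgrades the reasoning layer without touching the data source or the audit log.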

Takeaway: Modular, layered design enables scalable, maintainable agentic workflows you can evolve with business needs.

Data, prompts, and control loops

Data is the lifeblood of AI agents. You’ll aggregate structured and unstructured sources, ensure data quality, and implement data-domain boundaries so agents operate safely. Prompts should be templated and versioned, with guardrails and evaluation criteria to ensure consistent results. The control loop ties sensing, action, evaluation, and override points together so that agents can self-correct or escalate when outcomes fall outside acceptable thresholds. This section covers practical guidelines for prompt design, input validation, error handling, and monitoring. Expect to iterate on prompts, test against representative tasks, and maintain a prompt library that grows with your use cases.
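The sketch below shows one possible shape for a versioned prompt template and a control loop that retries and then escalates. The `PromptTemplate` class, the `control_loop` function, the 0.8 threshold, and the three-attempt limit are all illustrative assumptions; `model` and `evaluate` are callables you would supply.

```python
from dataclasses import dataclass
from string import Template
from typing import Callable


@dataclass(frozen=True)
class PromptTemplate:
    """Hypothetical versioned prompt; name and version identify it in a library."""
    name: str
    version: str
    template: Template

    def render(self, **fields: str) -> str:
        # substitute() raises KeyError when a required field is missing,
        # which acts as basic input validation.
        return self.template.substitute(**fields)


def control_loop(
    prompt: str,
    model: Callable[[str], str],
    evaluate: Callable[[str], float],
    threshold: float = 0.8,   # assumed acceptance threshold
    max_attempts: int = 3,    # assumed retry budget
) -> dict:
    """Call the model, score the output, retry, and escalate if still below threshold."""
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = model(prompt)
        if evaluate(output) >= threshold:
            return {"status": "accepted", "output": output, "attempts": attempt}
    # Every attempt fell short: hand the last output to a human.
    return {"status": "escalated", "output": output, "attempts": max_attempts}
```

A template such as `PromptTemplate("summarize", "1.0.0", Template("Summarize in one sentence: $text"))` can be stored alongside its test cases, so a change in results can be traced to a specific prompt version.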

Takeaway: Well-structured data, disciplined prompts, and robust control loops are the backbone of reliable AI agent automation.

Tools and platforms for building agents

Choosing the right toolkit is essential. You’ll need a robust AI platform for model access, a workflow orchestrator for sequencing tasks, and a monitoring stack to observe performance. Consider compatibility with existing tech stacks, tooling for secure credential management, and options for on-premise vs. cloud deployment. This section surveys common categories: model services, orchestration engines, data stores, and observability tools. You’ll also see how to evaluate vendor capabilities, integration APIs, and pricing models to fit your organization’s budget and governance requirements. The goal is to assemble a cohesive toolchain that enables rapid experimentation and scalable production.

Takeaway: A thoughtful toolchain reduces integration friction and accelerates time-to-value for AI agent automation.

Security, governance, and ethics

Governance should be baked in from day one. Start with access controls, data handling policies, and audit trails that track agent actions and human overrides. Implement risk assessments for model usage, data privacy, and potential biased outcomes. Ethics considerations include transparency about when agents are acting autonomously, how decisions are explained, and how users can challenge results. This section presents a practical checklist for compliance, risk mitigation, and ongoing oversight. It also discusses the importance of red-teaming and regular reviews to catch drift as agents learn from new tasks and data inputs.
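As a rough illustration of access controls and an audit trail that records both agent actions and human overrides, consider the sketch below. The agent names, action names, and the in-memory `audit_trail` list are assumptions; a production system would use a real policy store and durable, append-only storage.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: the actions each agent is allowed to perform.
PERMISSIONS = {
    "report-agent": {"read_sales_db"},
    "support-agent": {"read_tickets", "send_reply"},
}

# In-memory stand-in for an append-only audit log.
audit_trail = []


def record(agent, action, allowed, overridden_by=None):
    """Append one JSON audit entry describing what was attempted and by whom."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "allowed": allowed,
        "overridden_by": overridden_by,
    }
    audit_trail.append(json.dumps(entry, sort_keys=True))


def authorize(agent, action):
    """Check the policy and log the decision, whether allowed or denied."""
    allowed = action in PERMISSIONS.get(agent, set())
    record(agent, action, allowed)
    return allowed


def human_override(reviewer, agent, action):
    """Log that a named human approved an action the policy would deny."""
    record(agent, action, True, overridden_by=reviewer)
```

Logging denials as well as approvals matters here: a run of denied requests from one agent is often the first visible sign of drift or a misconfigured prompt.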

Takeaway: Proactive governance and ethics reduce risk and build trust in AI agent automation.

Patterns for scaling agent fleets

Scaling agent automation isn’t just about adding more agents; it’s about architectural patterns that support coordination, reliability, and governance. Explore patterns like hierarchical orchestration (central driver with specialized sub-agents), federated reasoning (agents operate on domain-specific data), and event-driven scaling (automatic reallocation based on workload). Consider how to model service-level objectives for agents, implement rate limiting, and ensure observability across the fleet. This section provides pragmatic guidance for growing your agent ecosystem while maintaining safety and performance.
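Hierarchical orchestration with rate limiting can be sketched as a central driver that routes tasks to domain sub-agents behind a token bucket. The `TokenBucket` and `Orchestrator` classes, the domain names, and the returned status strings are illustrative assumptions, not a reference design.

```python
import time
from typing import Callable


class TokenBucket:
    """Simple token-bucket limiter; the clock is injectable for testing."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate            # tokens added per second
        self.capacity = capacity    # maximum burst size
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        # Refill according to elapsed time, capped at capacity.
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Orchestrator:
    """Central driver that delegates tasks to specialized sub-agents."""

    def __init__(self, sub_agents: dict, limiter: TokenBucket) -> None:
        self.sub_agents = sub_agents  # maps domain name -> callable(task) -> result
        self.limiter = limiter

    def dispatch(self, domain: str, task: str) -> str:
        if domain not in self.sub_agents:
            return "escalate: no agent for domain"
        if not self.limiter.allow():
            return "deferred: rate limit reached"
        return self.sub_agents[domain](task)
```

With `TokenBucket(rate=1.0, capacity=2)`, two tasks are dispatched immediately and a third is deferred until roughly a second has passed. Tasks for an unknown domain are escalated rather than guessed at.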

Takeaway: Scalable agent fleets rely on orchestration patterns, clear ownership, and strong observability.

Real-world use cases across industries

Across finance, healthcare, manufacturing, and tech, AI agent automation is already applied to customer support, data extraction, process automation, and decision support. For example, agents can triage inquiries, assemble reports from multiple sources, or monitor system health and trigger remediation workflows. We discuss the benefits, constraints, and metrics that matter in each domain, and how to tailor agent capabilities to regulatory environments. Real-world examples illustrate how agent coordination reduces cycle times and improves consistency without sacrificing accountability. This section makes the concepts tangible by tying them to outcomes you can measure in your own organization.

Takeaway: Practical use cases show where AI agent automation delivers measurable value and where governance matters most.

Adoption challenges and success metrics

Adoption challenges include integration complexity, talent gaps, and risk concerns. To overcome them, set a pragmatic migration path: start with an MVP, establish guardrails, and gradually expand scope as confidence grows. Define success metrics such as task completion time, error rate, human-in-the-loop touchpoints, and ROI. Track both process metrics (throughput, latency) and business outcomes (cost savings, revenue impact). This section also highlights common failure modes and how to avoid them by emphasizing explainability, reproducibility, and continuous improvement.
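The process metrics named above can be computed from simple per-task records. The sketch below assumes a hypothetical `TaskRun` record with a duration, a success flag, and a count of human touchpoints; the field names and the exact metric definitions are illustrative.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    """Hypothetical record of one agent task execution."""
    duration_s: float    # wall-clock time to complete the task
    succeeded: bool      # whether the task finished without error
    human_touches: int   # number of human-in-the-loop interventions


def summarize_runs(runs: list) -> dict:
    """Aggregate completion time, error rate, and human-touch rate."""
    if not runs:
        raise ValueError("at least one run is required")
    total = len(runs)
    failures = sum(1 for r in runs if not r.succeeded)
    touched = sum(1 for r in runs if r.human_touches > 0)
    return {
        "mean_completion_time_s": mean(r.duration_s for r in runs),
        "error_rate": failures / total,
        "human_touch_rate": touched / total,
    }
```

Capturing the same summary before and after each expansion of scope gives a baseline for judging whether a new agent actually improved the workflow.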

Takeaway: Clear metrics and a staged adoption plan reduce risk and accelerate value from AI agent automation.

Putting it into practice: planning your project

Plan like a software project with milestones, governance gates, and stakeholder alignment. Start by articulating objectives, success criteria, and risk thresholds. Next, map data sources, required tools, and compliance considerations. Build an MVP that demonstrates core agent capabilities, then implement a feedback loop to refine prompts and orchestration logic. Finally, prepare a deployment strategy that includes monitoring, incident response, and a governance charter to ensure responsible use of AI agents. As you plan, consider how to align with organizational KPIs and how agents will augment rather than replace human experts.

Takeaway: A well-structured plan with governance and measurement drives successful AI agent automation initiatives.


Tools & Materials

- Development environment with cloud access (Workspace to prototype agent workflows and test integrations)

- AI platform access (model hosting or API) (Choose a provider with strong governance features)

- Workflow/orchestration tool (Examples include Airflow, Prefect, or Dagster)

- Prompt library and evaluation datasets (Versioned prompts and test cases for iteration)

- Monitoring and observability stack (Logs, metrics, dashboards, and alerting)

- Security and governance policies (Access controls, data handling, and incident response plans)

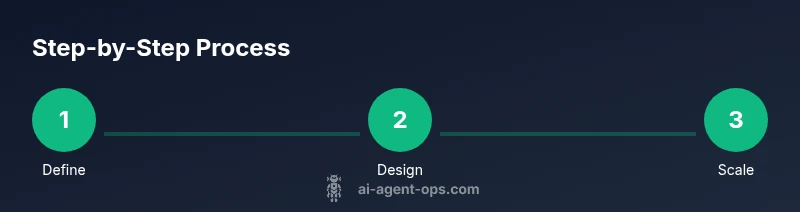

Steps

Estimated time: 6-12 hours

1. Define objectives and constraints

Articulate the business goals the agent will support and the constraints it must operate within. Identify high-value tasks and explicit boundaries for autonomy, risk, and compliance. This clarity prevents scope creep and sets the baseline for measurement.

Tip: Write a one-page objective and a risk checklist before you start.

2. Map data flows and agent roles

Diagram data sources, destinations, and the ownership of each data link. Assign roles to agents (data fetcher, reasoner, action executor) and determine where a human in the loop is required.

Tip: Use a simple data-map diagram to keep stakeholders aligned.

3. Assemble your toolchain and environment

Set up your development workspace with the chosen AI platform, orchestration engine, and a secure credential store. Ensure basic integrations for at least one end-to-end task.

Tip: Use versioned configurations and sandboxed environments.

4. Create prompt templates and a control loop

Develop reusable prompts for typical tasks and implement a control loop that validates outputs, handles errors, and escalates when needed. Include safety checks and logging for traceability.

Tip: Start with a small, well-defined task to validate the loop.

5. Build a minimum viable agent workflow (MVP)

Implement a compact pipeline that executes a single task with agent coordination, a basic data source, and a human override path. Run end-to-end tests to verify behavior and guardrails.

Tip: Aim for a single-prompt-to-action MVP for speed.

6. Add monitoring, logging, and safety checks

Instrument the MVP with metrics, logs, and alerting. Establish anomaly detection and automatic rollback mechanisms to protect data and users.

Tip: Define alert thresholds before deployment.

7. Scale gradually with orchestration patterns

Introduce additional agents and apply patterns such as hierarchical orchestration or event-driven scaling. Validate performance and latency under higher loads.

Tip: Layer in quality gates before adding new agents.

8. Evaluate ROI and governance for deployment

Assess business impact, track key metrics, and finalize governance policies. Prepare a rollout plan with phased releases and post-deployment monitoring.

Tip: Document decisions and outcomes for future audits.
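The MVP described in the steps above, with its fetcher, reasoner, and executor roles and a human override path, might look something like the following sketch. The function names, the ticket shape, the action names, and the keyword rule that stands in for a model call are all assumptions for illustration.

```python
from typing import Callable


def fetch(ticket: dict) -> str:
    """Data fetcher role: pull the text the reasoner needs."""
    return ticket["body"].strip()


def reason(text: str) -> dict:
    """Reasoner role: a keyword rule stands in for a model call."""
    if "refund" in text.lower():
        # Assumption: refunds are risky enough to require human approval.
        return {"action": "issue_refund", "needs_approval": True}
    return {"action": "send_reply", "needs_approval": False}


def run_mvp(ticket: dict, approve: Callable[[dict], bool], log: list) -> str:
    """Run one task end to end, asking a human before risky actions."""
    proposal = reason(fetch(ticket))
    log.append(("proposed", proposal["action"]))
    if proposal["needs_approval"] and not approve(proposal):
        # Human override path: the reviewer declined, so nothing is executed.
        log.append(("blocked", proposal["action"]))
        return "blocked"
    # Action executor role: here the action is only recorded, not performed.
    log.append(("executed", proposal["action"]))
    return proposal["action"]
```

Passing `approve` as a callable keeps the override path testable: end-to-end tests can supply a function that always approves or always declines, while production would wire it to a real review queue.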

Questions & Answers

What is AI agent automation?

AI agent automation refers to using autonomous software agents to observe, decide, and act on tasks within a workflow. These agents coordinate with data sources, tools, and human oversight to complete processes with minimal manual intervention while maintaining governance and safety.

AI agent automation uses autonomous software agents to perform tasks with limited human input, coordinating data and tools while staying within governance rules.

How is AI agent automation different from traditional automation?

Traditional automation follows fixed rules, whereas AI agent automation uses reasoning, data-driven prompts, and orchestration to adapt to new tasks. Agents can decide, act, and learn within defined boundaries, enabling more flexible and scalable workflows.

Unlike fixed-rule automation, AI agent automation adapts to new tasks through reasoning and orchestration, offering more flexibility and scalability.

What are common risks and how can I mitigate them?

Common risks include data leakage, biased decisions, and uncontrolled actions. Mitigate with governance, access controls, prompt validation, red-teaming, and clear escalation paths for human-in-the-loop interventions.

Key risks are data leakage, biased decisions, and uncontrolled actions. Mitigate with governance, prompt validation, and clear human oversight.

What skills are needed to implement AI agent automation?

A blend of software engineering, data literacy, prompt engineering, and systems thinking. Familiarity with APIs, orchestration tooling, and monitoring is essential for building reliable agentic workflows.

You’ll need software engineering, data literacy, prompt design, and orchestration skills to build reliable AI agent automation.

How long does it take to build an MVP?

An MVP can be built in a few days to a couple of weeks depending on scope, data accessibility, and integration complexity. Start with a narrow use case to accelerate delivery.

An MVP typically takes a few days to a couple of weeks, depending on scope and integrations.

What metrics indicate success?

Look at task completion rate, cycle time improvement, error rate, and ROI. Track human-in-the-loop interventions and governance reliability to ensure sustainable value.

Key metrics include completion rate, cycle time, and ROI, plus governance reliability metrics.


Key Takeaways

- Plan with governance and guardrails from the start

- Modular, layered architectures enable growth

- Data quality and prompts drive agent reliability

- Monitor, measure, and iterate to improve ROI

- Scale responsibly through orchestration patterns