Cursor AI Agent vs Edit: A Practical Comparison

A detailed, analytical comparison of cursor-based AI agents and traditional edit workflows to help developers and leaders choose the right approach for automation and agentic AI workflows.

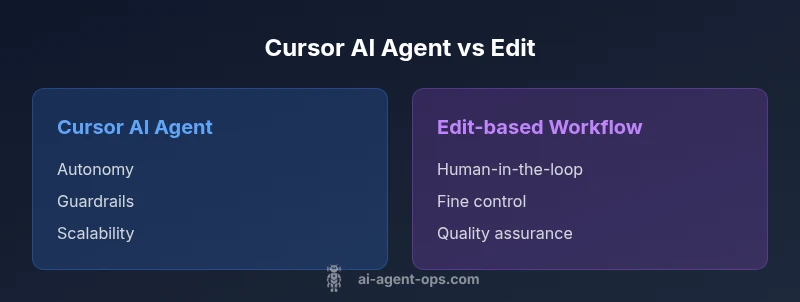

Cursor AI agents automate decision-making and task execution at scale, while edit-based workflows rely on human-in-the-loop prompts and manual adjustments. This comparison clarifies when autonomous agents win, when edits excel, and how hybrid patterns often deliver the best of both worlds for agentic AI workflows.

Evolution of cursor-based AI agents vs edit workflows

The landscape of AI-assisted automation has evolved from manual edits and scripted prompts to increasingly autonomous cursor-based AI agents. In many organizations, teams now pair the two strategies to balance speed and quality. According to Ai Agent Ops, the shift toward agentic AI workflows reflects a growing preference for systems that can initiate, monitor, and adjust tasks with minimal manual intervention while retaining guardrails and auditability. The phrase "cursor AI agent vs edit" captures this spectrum: one approach emphasizes automated action and scale; the other emphasizes precise, expert input at key decision points. As teams adopt these patterns, the challenge becomes designing orchestration layers that keep agents aligned with business rules, data governance, and user expectations. In practice, a well-architected hybrid model often yields the most resilient outcomes, especially when human oversight is essential for safety and compliance.

Defining cursor AI agents: capabilities and constraints

A cursor AI agent is a software component that can observe signals, make decisions, and take actions within a defined boundary. These agents typically rely on routing logic, state management, and policy-driven controls to perform tasks such as data extraction, task delegation, and inter-service orchestration. The core advantages include speed, consistency, and the ability to operate at scale across multiple domains. However, agents require robust guardrails, reliable data streams, and clear ownership to prevent drift or unintended actions. The biggest constraint is governance: without well-defined prompts, constraints, and monitoring, autonomous actions can diverge from intended outcomes. The cursor AI agent vs edit debate also hinges on data quality, model capability, and the complexity of the decision tasks involved.
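The observe, decide, act cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the class `BoundedAgent`, the function `simple_policy`, and the action names are all hypothetical and do not come from any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BoundedAgent:
    """Hypothetical agent that only executes actions inside its boundary."""
    allowed_actions: set[str]                # the "defined boundary"
    policy: Callable[[dict], str]            # maps an observed signal to an action name
    log: list[tuple[dict, str, str]] = field(default_factory=list)

    def step(self, signal: dict) -> str:
        # Decide: ask the policy which action fits the observed signal.
        action = self.policy(signal)
        # Guardrail: anything outside the boundary is blocked, not executed.
        outcome = "executed" if action in self.allowed_actions else "blocked"
        # Audit trail: record every decision, including blocked ones.
        self.log.append((signal, action, outcome))
        return outcome


def simple_policy(signal: dict) -> str:
    """Illustrative routing rule; a real policy would be far richer."""
    if signal.get("type") == "invoice":
        return "extract_data"
    return "delete_record"  # deliberately outside the boundary below


agent = BoundedAgent(
    allowed_actions={"extract_data", "delegate_task"},
    policy=simple_policy,
)
```

The point of the design is that the boundary and the log live outside the policy, so the decision logic can change without weakening the guardrail or the audit trail.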

Understanding 'edit' in AI workflows: strengths and limits

Edit-based workflows rely on human intervention to shape or correct AI outputs. This approach excels in high-uncertainty tasks, where nuance, ethics, or domain expertise matter. Edits can be precise, context-aware, and aligned with brand voice and policy requirements. The trade-off, however, is throughput: manual edits slow cycles, introduce human bottlenecks, and can degrade consistency across large datasets or repetitive tasks. The cursor AI agent vs edit dichotomy is not binary in practice; many teams start with editing for high-stakes steps and progressively introduce automation for routine components, creating a feedback loop that improves both the AI model and the governance model. In this section we examine patterns for effective human-in-the-loop design and how to preserve intent during automated execution.

Core differences in approach: autonomy, governance, and speed

- Autonomy: Cursor AI agents push decision-making closer to the edge of automation, reducing latency and increasing throughput. Edits place human judgment at the critical touchpoints, preserving nuance but slowing cycles.

- Governance: Agents require strong guardrails, monitoring, and auditable trails to prevent drift. Edits demand rigorous quality controls but can be more flexible in adapting to exceptions.

- Speed and scale: Agents excel at repetitive, rule-based tasks across many instances, while edits excel in one-off decisions where context matters.

The best architectures combine both: agents handle routine scope with strict guardrails, and humans intervene at critical junctures or in edge cases. This section presents a balanced view of trade-offs and provides heuristics for choosing the right pattern in given scenarios.

When to deploy cursor AI agents vs when to rely on edits

For operational, event-driven, or data-heavy workflows, cursor AI agents offer clear advantages in speed, repeatability, and scale. They are well-suited for tasks like routing, triaging, or orchestrating microservices where the rules are well-defined and the data streams are reliable. Edits are preferable for creative, situational, or high-risk tasks where context, ethics, or regulatory considerations require human judgment. A blended approach often yields the best outcomes: use agents to handle the bulk of routine work and reserve editing for quality assurance, exception handling, and policy checks. In this section we outline practical decision criteria and provide checklists to determine when to escalate from automation to human review and back again.
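One way to make these decision criteria concrete is a small routing function. The sketch below is only an illustration: the `Task` fields, the `CONFIDENCE_FLOOR` value, and the three route names are assumptions chosen for this example, and a real checklist would be tuned to the workflow at hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    rules_well_defined: bool   # are the rules for this task explicit?
    data_reliable: bool        # is the incoming data stream trustworthy?
    high_risk: bool            # creative, ethical, or regulatory stakes
    confidence: float          # assumed agent self-score in [0, 1]


# Assumed threshold; pick a value from your own baseline measurements.
CONFIDENCE_FLOOR = 0.8


def route(task: Task) -> str:
    """Return 'agent', 'agent_then_review', or 'human_edit'."""
    # High-risk work always goes to a human, whatever the confidence.
    if task.high_risk:
        return "human_edit"
    # Agents need well-defined rules and reliable data to be trusted.
    if not (task.rules_well_defined and task.data_reliable):
        return "human_edit"
    # Routine but uncertain: let the agent act, then escalate for review.
    if task.confidence < CONFIDENCE_FLOOR:
        return "agent_then_review"
    return "agent"
```

Ordering the checks from most to least restrictive means a high confidence score can never override a risk flag.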

Architecture and integration patterns for agent-based workstreams

Designing effective cursor AI agent vs edit workflows starts with a clean architectural blueprint. Core components typically include data ingestion layers, stateful orchestration, policy engines, and observability dashboards. Integration patterns matter: publish/subscribe event streams, API-driven microservices, and shared data models reduce complexity and improve traceability. A common pitfall is underestimating the need for guardrails: time-based limits, action quotas, and rollback mechanisms protect against drifting behavior. Practical patterns for building resilient agent-based workstreams include modular orchestration, event sourcing, and declarative policy configuration. Data governance matters as well: data lineage and access controls are essential for responsible AI operations.
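The three guardrails named above (time-based limits, action quotas, and rollback) can be combined in one small wrapper. The following is a sketch under assumptions: `GuardedRun`, `GuardrailViolation`, and `run_with_guardrails` are hypothetical names, and each step is assumed to come with its own undo callable.

```python
import time
from typing import Callable, Iterable


class GuardrailViolation(Exception):
    """Raised when a run exceeds its action quota or time limit."""


class GuardedRun:
    def __init__(self, max_actions: int, max_seconds: float) -> None:
        self.max_actions = max_actions
        self.deadline = time.monotonic() + max_seconds
        self.actions_taken = 0
        self._undo_stack: list[Callable[[], None]] = []

    def perform(self, do: Callable[[], None], undo: Callable[[], None]) -> None:
        # Check both limits before acting, so a violating action never runs.
        if self.actions_taken >= self.max_actions:
            raise GuardrailViolation("action quota exceeded")
        if time.monotonic() > self.deadline:
            raise GuardrailViolation("time limit exceeded")
        do()
        self._undo_stack.append(undo)
        self.actions_taken += 1

    def rollback(self) -> None:
        # Undo completed actions in reverse order.
        while self._undo_stack:
            self._undo_stack.pop()()


def run_with_guardrails(
    run: GuardedRun,
    steps: Iterable[tuple[Callable[[], None], Callable[[], None]]],
) -> bool:
    """Execute (do, undo) pairs; roll everything back on a violation."""
    try:
        for do, undo in steps:
            run.perform(do, undo)
        return True
    except GuardrailViolation:
        run.rollback()
        return False
```

Note that this sketch only rolls back on guardrail violations; errors raised by a step itself would need their own handling.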

Evaluation metrics and governance: measuring success and safety

Measuring cursor AI agent vs edit outcomes requires a balanced scorecard that covers speed, accuracy, reliability, and governance. Key metrics include cycle time reduction, defect rate after automation, escalation frequency, and auditability of automated decisions. Establish baselines before deploying agents to quantify improvements, and implement continuous monitoring to detect drift or anomalies. Governance considerations include access control, change management, and explainability. Ai Agent Ops emphasizes the importance of transparent decision logs and robust testing protocols as part of a mature AI program. By aligning metrics with business objectives, teams can demonstrate tangible ROI while maintaining safety and accountability.
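The quantitative metrics above reduce to simple ratios once a baseline exists. The sketch below assumes a hypothetical `RunStats` record per measurement period; the field names and the `scorecard` function are illustrative, and auditability is left out because it is not a simple ratio.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunStats:
    total_items: int       # work items completed in the period
    total_minutes: float   # total elapsed handling time
    defects: int           # items later found to be wrong
    escalations: int       # items handed to a human reviewer


def cycle_time(stats: RunStats) -> float:
    """Average minutes per item."""
    if stats.total_items <= 0:
        raise ValueError("total_items must be positive")
    return stats.total_minutes / stats.total_items


def scorecard(baseline: RunStats, current: RunStats) -> dict[str, float]:
    """Compare a post-automation period against the pre-automation baseline."""
    base_ct = cycle_time(baseline)
    cur_ct = cycle_time(current)
    if base_ct == 0:
        raise ValueError("baseline cycle time must be non-zero")
    return {
        # Fraction by which average handling time dropped (negative = slower).
        "cycle_time_reduction": (base_ct - cur_ct) / base_ct,
        "defect_rate": current.defects / current.total_items,
        "escalation_rate": current.escalations / current.total_items,
    }
```

Capturing the baseline as the same record type as the current period keeps the comparison honest: both sides are measured the same way.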

Practical migration patterns: from edit-heavy to agent-heavy workflows

Migrating from a primarily edit-driven approach to a hybrid or agent-heavy model requires careful planning. Start with a pilot that targets a small, well-defined workflow with clear success criteria. Incrementally replace manual steps with autonomous routines, ensuring guardrails and rollback paths are in place. Use synthetic data tests to validate behavior before deploying in production, and establish continuous feedback loops where human reviewers annotate agent outputs to refine performance. Documentation, change control, and training are essential to minimize organizational friction. In this section, we also discuss staged rollout strategies, risk assessments, and stakeholder communication plans to ensure adoption proceeds smoothly.
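A staged rollout with a reviewer feedback loop might look like the following sketch. Everything here is an assumption made for illustration: the stage names in `STAGES`, the thresholds, and the idea of scoring the agent by how often its output matches the reviewer's annotation.

```python
# Hypothetical rollout stages, from least to most autonomous.
STAGES = ["shadow", "agent_with_review", "agent_autonomous"]


def agreement_rate(pairs: list[tuple[str, str]]) -> float:
    """Share of (agent_output, reviewer_output) pairs that match exactly."""
    if not pairs:
        return 0.0
    return sum(agent == human for agent, human in pairs) / len(pairs)


def next_stage(
    current: str,
    pairs: list[tuple[str, str]],
    promote_at: float = 0.95,      # assumed promotion threshold
    rollback_below: float = 0.80,  # assumed rollback threshold
    min_samples: int = 20,         # do not decide on too little evidence
) -> str:
    """Move one stage up, one stage down, or stay, based on reviewer agreement."""
    i = STAGES.index(current)
    if len(pairs) < min_samples:
        return current
    rate = agreement_rate(pairs)
    if rate >= promote_at and i < len(STAGES) - 1:
        return STAGES[i + 1]
    if rate < rollback_below and i > 0:
        return STAGES[i - 1]
    return current
```

Moving only one stage at a time, in either direction, keeps a rollback path available at every point in the migration.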

Real-world scenarios and risk management: industry considerations and risk mitigation

Real-world deployments reveal a spectrum of risks, from data quality issues to misaligned incentives. Cursor AI agent vs edit decisions should be informed by domain-specific constraints such as regulatory requirements, customer impact, and data sensitivity. In highly regulated industries, even high-performing agents must pass rigorous audits, while in fast-moving product environments, speed and iteration are crucial. Risk mitigation includes redundancy checks, human-in-the-loop thresholds for critical tasks, and automated rollback on rule violations. This section also offers practical examples across domains (customer support, content moderation, and data routing) to illustrate how different risk profiles shape the choice between automation and editing, and how organizations can tailor guardrails to their risk tolerance.

Comparison

| Feature | Cursor AI agent | Edit |
|---|---|---|
| Automation style | Autonomous, rule-driven actions | Manual prompts with human-in-the-loop |
| Control level | High throughput, lower latency | Precise control via edits and approvals |
| Cost model | Opex for runtime, potential infra costs | Labor-intensive, ongoing overhead |
| Best use | Operational, data-heavy tasks | High-stakes, nuanced decisions |
| Governance | Guardrails, audit logs, telemetry | Quality checks, policy adherence |
| Failure handling | Automated rollback and replans | Human-in-the-loop intervention |

Positives

- Speed gains from automation and parallelization

- Scales decision-making across workflows

- Improved consistency and repeatability

- Reduced manual toil for routine tasks

What's Bad

- Requires upfront design and continuous monitoring

- Risk of drift without strong guardrails

- Infrastructure and data dependencies

- Initial learning curve for teams

Hybrid patterns often outperform pure automation or pure editing.

Autonomy accelerates routine tasks; human oversight remains essential for quality, safety, and regulatory compliance.

Questions & Answers

What is a cursor AI agent, and how does it differ from an edit-based workflow?

A cursor AI agent is a software component that autonomously executes defined tasks within governance boundaries. An edit-based workflow relies on human inputs to adjust and approve outputs. The two approaches differ in autonomy, speed, and the need for guardrails.

A cursor AI agent runs tasks automatically within rules, while edits depend on humans to approve or adjust results.

When should I favor an agent over edits?

Choose agents for high-volume, repetitive, data-driven tasks where speed and consistency matter. Use edits for nuanced decisions, edge cases, or where compliance requires human judgment.

Use agents for speed and scale; use edits for nuance and compliance.

What governance considerations are essential when using AI agents?

Establish guardrails, auditing, explainability, and rollback mechanisms. Ensure data lineage, access controls, and monitoring exist to prevent drift and unsafe actions.

Guardrails and audits are essential to prevent drift and unsafe actions.

How do I migrate from edits to agents without risk?

Start with a pilot on a limited workflow, implement measurable success criteria, and incrementally automate steps with fail-safes and human-in-the-loop checkpoints.

Pilot small workflows first and gradually automate with safeguards.

What metrics should I track when comparing cursor AI agents vs edits?

Track cycle time, defect rate post-automation, escalation frequency, and auditability. Use baselines and continuous monitoring to measure improvements.

Monitor speed, quality, and governance to compare approaches.

Are there industry-specific considerations I should account for?

Yes. Regulatory requirements, data sensitivity, and customer impact vary by sector. Tailor guardrails and review processes to fit domain-specific risk profiles.

Regulatory and data concerns vary by industry; tailor controls accordingly.

Key Takeaways

- Identify repeatable tasks suitable for automation

- Preserve human oversight for critical decisions

- Design guardrails and observable metrics

- Plan gradual migration from edits to agents