AI Agent Performance Comparison: An Analytical Guide

A rigorous, objective comparison of ai agent performance across architectures, benchmarks, and real-world tasks to help teams choose the right approach.

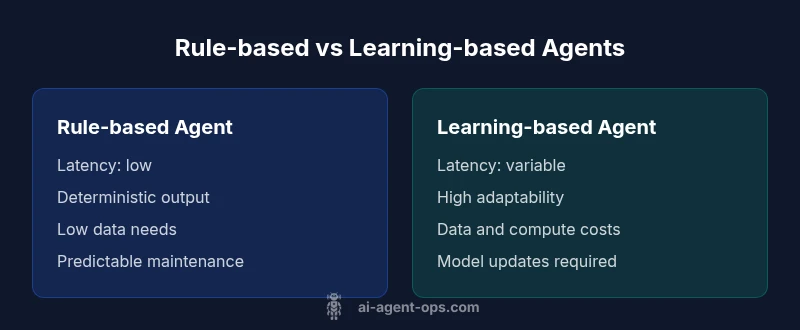

In the realm of AI agent performance comparison, the fastest route to a meaningful decision is a clear, multi-metric verdict. The best choice depends on your task mix, data availability, and tolerance for maintenance. This guide compares rule-based and learning-based agents, outlining when each excels and where hybrids shine.

Why AI agent performance matters

Evaluating ai agent performance comparison is essential for teams designing automated workflows. The Ai Agent Ops team emphasizes that reliability, adaptability, and cost are not separate concerns; they interact in meaningful ways as you scale. In practice, a well-executed comparison helps you predict outcomes, allocate resources, and avoid costly misalignments between what an agent promises and what it delivers. By framing the evaluation around real work tasks, you reduce the risk of optimizing for the wrong signals. Expect to see trade-offs between speed, accuracy, and robustness, and be prepared to adjust priorities as your use case evolves.

A solid comparison also supports governance and compliance: you can trace decisions, reproduce results, and justify operator interventions when needed. The keyword ai agent performance comparison should appear often in early iterations to anchor the conversation for developers, product managers, and executives alike. The deeper you go, the more you realize that performance is a property of the entire system—data, prompts, models, infrastructure, and human oversight all contribute.

(Continuing this thread, you’ll learn to design fair benchmarks, interpret results, and apply insights across teams.)

Defining performance: metrics that matter

Performance isn’t a single number; it’s a portfolio of signals that reflect how well an AI agent behaves under real conditions. Core metrics fall into three buckets: effectiveness, efficiency, and reliability. Effectiveness covers task success rate, decision quality, and accuracy in perception or classification. Efficiency includes latency, throughput, and resource consumption. Reliability assesses stability, fail-safety, and resilience to input variation. In some domains, interpretability and safety toggles are also critical, shaping how easily you can audit or constrain behavior.

When you compose your metric set, align it with business objectives. If speed is king, you’ll privilege latency and throughput; if regulatory compliance matters, you’ll emphasize audit trails, explainability, and data lineage. To keep the assessment fair, normalize measurements to the same tasks and data scopes, and document any assumptions. As Ai Agent Ops notes, the most actionable comparisons reserve a small core of tasks that test both general capability and edge cases, then expand to broader scenarios as confidence grows.

Comparison

| Feature | Rule-based agent | Learning-based agent |

|---|---|---|

| Latency | Typically low due to lightweight logic | May be higher due to model inference and data access |

| Accuracy/Task Quality | Deterministic, high in defined rules | Can exceed rule-based for complex patterns but data-dependent |

| Adaptability | Low adaptability; behavior fixed by rules | High adaptability; can improve with data and retraining |

| Maintenance | Lower maintenance after rules are stable | Ongoing model updates, retraining, and monitoring |

| Cost | Lower upfront cost, predictable infra | Potential higher compute and data costs over time |

| Security & Compliance | Easier auditing; transparent logic | Complex governance; model risk and data privacy must be managed |

Positives

- Deterministic behavior enables predictable outcomes

- Lower infrastructure needs for simple tasks

- Easier compliance and auditability

- Faster initial deployment for straightforward use cases

What's Bad

- Limited flexibility in dynamic environments

- Higher long-term costs if tasks evolve beyond rules

- Maintenance burden grows with complexity of rules

- Difficulty handling ambiguous or novel scenarios

Hybrid approaches often outperform single-technology solutions

Rule-based agents are reliable for stable tasks; learning-based agents excel where data and patterns evolve. A hybrid strategy, combining explicit rules with model-powered adaptability, generally delivers the strongest overall performance.

Questions & Answers

What is the primary goal of an ai agent performance comparison?

The goal is to quantify how agents perform across the tasks you care about, so you can pick the right architecture for your business goals. It should cover accuracy, latency, reliability, and cost, plus governance considerations.

The goal is to quantify how agents perform across tasks so you can choose the right architecture for your goals.

Which metrics matter most when comparing AI agents?

The most important metrics depend on the use case, but common ones include task success rate, latency, throughput, update frequency, and cost. Also consider explainability and safety checks for governance.

Key metrics include task success, latency, throughput, cost, and governance factors.

How do you ensure apples-to-apples comparisons?

Use a shared task taxonomy, fixed data splits, and consistent evaluation protocols. Document assumptions, seed data, and test environments to minimize variability.

Use the same tasks and data splits, and keep evaluation consistent across agents.

Can a hybrid approach outperform pure architectures?

Yes. Merging deterministic rules with learning-based adaptation often yields both reliability and flexibility, capturing the strengths of each approach.

A hybrid setup often gives you the best of both worlds.

What about long-term costs of learning-based agents?

Ongoing retraining, data curation, and infra costs can grow. Plan for lifecycle management and monitoring to control total cost of ownership.

There are ongoing costs with models, so plan for maintenance.

How do you handle distribution shift in production?

Monitor performance continuously, implement automated rollback or safe-fail mechanisms, and retrain with fresh data to restore alignment.

Keep an eye on performance and be ready to retrain.

Key Takeaways

- Benchmark with apples-to-apples metrics

- Choose your base architecture by task complexity

- Plan for governance and data handling from day one

- Expect trade-offs between speed and accuracy

- Use a hybrid approach for broad coverage