Human Agent vs AI Agents: Side-by-Side Comparison and Guidance

A rigorous, analytical comparison of human agents and AI agents, outlining strengths, limitations, use cases, and hybrid strategies for smarter automation in 2026.

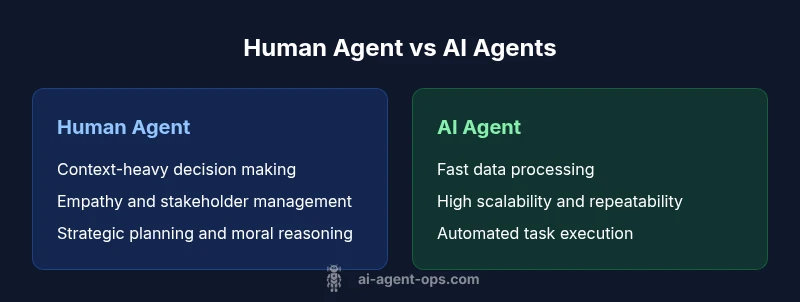

In the human agent vs AI agents debate, neither side is universally superior. AI agents excel at speed, scale, and data-driven consistency, while human agents provide context, empathy, and strategic judgment. The optimal setup is a hybrid: deploy AI agents for routine tasks and keep humans in the loop for oversight, calibration, and complex decision-making.

Framing the Debate: Human Agents, AI Agents, and the Collaboration Paradigm

The phrase “human agent vs AI agents” surfaces a long-standing debate about capability boundaries, governance, and the future of work. At its core, the distinction is not a zero-sum contest but a spectrum. A human agent is a person who can adapt, reason with nuance, and respond to ethical considerations in real time. An AI agent is a software entity that makes autonomous or semi-autonomous decisions, often guided by large language models, tool use, and data-driven constraints. The Ai Agent Ops team emphasizes that the real value lies in how these agents collaborate within a defined workflow. Instead of choosing sides, organizations should design agentic AI systems that assign execution tasks to AI agents while reserving interpretation, oversight, and strategic direction for human agents. The resulting hybrid approach enables scale without sacrificing judgment, accountability, or context.

When we talk about human agent vs AI agents in practice, it’s also essential to clarify terminology. An AI agent might operate within a business process as a capable worker that plans, acts through tools, and adjusts its behavior based on feedback. A human agent, by contrast, can draw on tacit knowledge, moral reasoning, and long-term strategy. Both are essential, and the most mature implementations rely on governance structures that preserve human oversight even as automation expands. This framing helps teams avoid over-reliance on automation and keeps automation aligned with organizational goals.

According to Ai Agent Ops, the most successful deployments are built around clear decision boundaries, well-defined triggers for human intervention, and transparent reporting. This is not about replacing people; it’s about augmenting them. The result is a more resilient operating model where human agents tackle ambiguity and strategic risk, while AI agents accelerate routine, high-volume, data-driven tasks.

Capabilities at a Glance: What AI Agents Bring to the Table

AI agents offer a toolkit that complements human strengths. They can ingest vast data sets, identify patterns, and execute repeatable tasks with speed and consistent quality. They optimize workflows by selecting actions from a predefined set of tools, scheduling routines, and interacting with software through APIs. In practice, AI agents excel at document triage, data extraction, automated testing, and monitoring complex systems without fatigue. They also enable scalable decision support by integrating with dashboards and alerting engines to surface anomalies in real time. A mature AI agent system handles ambiguity by requesting clarification when needed or deferring to a human in high-stakes scenarios.

Key capabilities include goal-oriented planning, action sequencing, tool integration, and self-improvement loops driven by feedback. The agent can be given a high-level objective and autonomously determine the intermediate steps, select the right tools, and monitor outcomes. However, this autonomy is bounded by governance rules, ethical guardrails, and safety constraints to keep actions aligned with policy. The Ai Agent Ops framework highlights the importance of clear boundary conditions that define when human input is required and when automated execution can proceed.
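The idea of bounded autonomy can be sketched in a few lines. The following is a minimal illustration, not a reference implementation: the tool names, the `AUTO_APPROVED` set, and the `run_plan` function are all hypothetical, and a real agent would obtain its plan from a planner or language model rather than a hard-coded list.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical tool registry: names and behaviors are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "extract_fields": lambda doc: f"fields({doc})",
    "summarize": lambda doc: f"summary({doc})",
}

# Assumed boundary condition: only these tools may run without human input.
AUTO_APPROVED = {"extract_fields", "summarize"}


@dataclass
class StepResult:
    tool: str
    status: str  # "done" or "needs_human"
    output: str = ""


def run_plan(plan: list[str], document: str) -> list[StepResult]:
    """Run planned steps in order and stop at the first step outside the boundary."""
    results: list[StepResult] = []
    for tool_name in plan:
        if tool_name not in AUTO_APPROVED or tool_name not in TOOLS:
            # Anything unapproved or unknown is handed to a human, and execution halts.
            results.append(StepResult(tool_name, "needs_human"))
            break
        results.append(StepResult(tool_name, "done", TOOLS[tool_name](document)))
    return results


if __name__ == "__main__":
    for step in run_plan(["extract_fields", "issue_refund", "summarize"], "invoice-17"):
        print(step)
```

Stopping at the first out-of-bounds step, rather than skipping it, is a deliberate choice in this sketch: later steps may depend on the one that needs human input.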

In the human agent vs AI agents comparison, one important nuance is orchestration. AI agents often act as workers within a larger pipeline, whereas humans serve as supervisors and decision-makers. Effective integration requires shared data schemas, consistent authentication, and transparent decision logs so both sides can audit outcomes and refine processes over time. As AI accuracy and speed improve, these handoffs become smoother, and organizations can scale capabilities with more confidence.

The Human Edge: Context, Empathy, and Nuanced Judgment

Humans bring context-aware reasoning, ethical considerations, and empathic understanding that AI agents struggle to emulate fully. In complex decision-making, the ability to weigh conflicting objectives, anticipate unintended consequences, and align actions with organizational values is essential. The human edge shines in scenarios requiring intricate stakeholder management, negotiations, and culturally aware communication. Even when AI agents generate highly accurate results, humans assess the broader impact on customers, employees, and communities.

Another critical human strength lies in learning from rare or out-of-pattern events. When standard patterns fail or novel situations emerge, humans can synthesize disparate signals, draw on tacit knowledge, and adjust strategies on the fly. This adaptability is particularly valuable in industries with high regulatory scrutiny or evolving social expectations. The hybrid model leverages the best of both worlds: AI agents handle routine cognitive work, while human agents provide the interpretive lens that prevents drift from core values.

For organizations exploring human agent vs AI agents, this edge matters for risk management. A purely automated system may miss subtleties that a well-informed human could anticipate. The most reliable path is to design with human-in-the-loop governance, ensuring that critical decisions retain human oversight while automation handles the heavy lifting in data processing and repetitive tasks.

Decision-Making Dynamics: Speed, Scale, and Precision

Speed and scale are where AI agents consistently outperform humans. They can process millions of data points, test thousands of hypotheses, and drive actions in near real time. But speed should not be mistaken for wisdom. Humans can integrate context, ethics, and long-term strategy into decisions that math alone cannot solve. The best practice is to structure decisions as a spectrum where routine, well-bounded decisions are automated, while corner cases and decisions with ethical significance are flagged for human review.

Precision is another domain where AI agents excel when properly constrained. With strong data governance, AI agents can reduce variance across tasks and deliver uniform results. Yet precision hinges on data quality and model alignment with business objectives. When data is noisy or the objective is ill-defined, human judgment is essential to interpret results and adjust parameters. The hybrid approach reduces the risk of overfitting or mechanical errors by ensuring that human oversight calibrates the automation.

Understanding these dynamics helps organizations design better workflows. Start by cataloging tasks into three categories: routine automation, semi-structured decisions requiring oversight, and high-stakes decisions needing human judgment. Then map who is responsible for each category and what triggers escalation. This structured approach yields a robust balance between speed, scale, and human insight.
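The three-category catalog above can be expressed as a small routing table. This is a sketch under stated assumptions: the two boolean attributes on `Task`, the `classify` rules, and the owner labels are hypothetical simplifications, and real classification criteria would be richer and specific to the organization.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    ROUTINE = "routine automation"
    SEMI_STRUCTURED = "semi-structured, needs oversight"
    HIGH_STAKES = "high-stakes, needs human judgment"


@dataclass(frozen=True)
class Task:
    name: str
    has_clear_rules: bool           # assumed attribute: rules and data are well defined
    ethical_or_legal_impact: bool   # assumed attribute: a mistake carries serious consequences


def classify(task: Task) -> Category:
    """Assign a task to one of the three categories; high stakes always wins."""
    if task.ethical_or_legal_impact:
        return Category.HIGH_STAKES
    if task.has_clear_rules:
        return Category.ROUTINE
    return Category.SEMI_STRUCTURED


# Who is responsible for each category (labels are illustrative).
OWNER = {
    Category.ROUTINE: "ai_agent",
    Category.SEMI_STRUCTURED: "ai_agent_with_human_review",
    Category.HIGH_STAKES: "human_agent",
}


def route(task: Task) -> str:
    return OWNER[classify(task)]


if __name__ == "__main__":
    for t in (
        Task("invoice data entry", True, False),
        Task("vendor dispute summary", False, False),
        Task("loan denial", True, True),
    ):
        print(f"{t.name}: {classify(t).value} -> {route(t)}")
```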

Use Cases by Domain: When to Prefer AI Agents vs Human Agents

Different domains demand different blends of human and AI capabilities. In customer support, AI agents can triage inquiries, draft responses, and escalate to humans when sentiment analysis detects frustration or ambiguity. In software development, AI agents can scaffold code, run tests, and monitor CI pipelines, while human agents review critical design decisions and ensure alignment with product strategy. In research and analytics, AI agents can perform literature screening, data wrangling, and hypothesis testing, whereas human agents interpret results, consider ethical implications, and translate insights into action.

In operations and security, AI agents can perform continuous monitoring, anomaly detection, and automated remediation for known patterns. Humans are still required for incident response coordination, policy updates, and risk assessments that depend on context and organizational culture. The key is to define a role for AI agents as force multipliers rather than as outright replacements for human expertise. This allows teams to scale capabilities while maintaining accountability.

When considering the human agent vs AI agents balance, it’s useful to define “best-fit” criteria: tasks with clear rules and ample data are excellent for automation; tasks requiring empathy, nuanced interpretation, or strategic alignment demand human involvement. A well-designed hybrid approach can deliver faster throughput, higher consistency, and better risk management across most domains.

Risks, Ethics, and Governance

Automated agents introduce governance and ethical considerations that require proactive management. Data privacy, bias, and accountability must be addressed through policy, transparency, and auditable logs. AI agents should operate within guardrails that specify permissible actions, data access boundaries, and escalation criteria. Without governance, automation can drift from intended outcomes, produce biased results, or violate regulatory requirements.
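As a rough illustration of such guardrails, the sketch below checks a requested action against permissible actions, data access boundaries, and an escalation criterion. The `Guardrail` fields, the three verdict strings, and the amount-based escalation rule are assumptions chosen for the example, not a prescribed policy format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    allowed_actions: frozenset[str]
    allowed_data_scopes: frozenset[str]
    escalation_amount: float  # assumed criterion: larger amounts require a human


def evaluate(policy: Guardrail, action: str, data_scope: str, amount: float = 0.0) -> str:
    """Return "allow", "escalate", or "deny" for a requested agent action."""
    if action not in policy.allowed_actions:
        return "deny"
    if data_scope not in policy.allowed_data_scopes:
        return "deny"
    if amount > policy.escalation_amount:
        return "escalate"
    return "allow"


if __name__ == "__main__":
    policy = Guardrail(
        allowed_actions=frozenset({"draft_reply", "issue_credit"}),
        allowed_data_scopes=frozenset({"tickets", "orders"}),
        escalation_amount=100.0,
    )
    print(evaluate(policy, "draft_reply", "tickets"))          # within all bounds
    print(evaluate(policy, "issue_credit", "orders", 250.0))   # above the escalation amount
    print(evaluate(policy, "delete_account", "tickets"))       # action not permitted
```

Logging each verdict alongside the request would give the auditable trail the paragraph above calls for.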

A formal ethics framework helps organizations anticipate and mitigate harms. This includes documenting decision rationales, capturing the sources used by AI agents, and implementing oversight mechanisms that ensure alignment with corporate values and legal obligations. The Ai Agent Ops approach emphasizes continuous monitoring, incident reporting, and post-implementation reviews to validate that the human-AI collaboration remains effective, fair, and compliant. Emphasizing governance in the early design phase reduces rework and safeguards trust with customers and stakeholders.

Additionally, risk management should address model drift and stale data. Revisit data sources, retrain models when necessary, and maintain a clear rollback plan. In a mature system, governance is not a one-off project but an ongoing practice that evolves with technology and regulatory changes.
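One simple way to notice drift is to compare the distribution of a numeric input or score between a baseline period and the current period. The sketch below uses the population stability index (PSI) for that comparison; this is one possible technique swapped in for illustration, not something the article prescribes. The function names and the 0.2 cutoff are assumptions, and the cutoff in particular should be tuned per use case.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two numeric samples; larger values indicate a bigger distribution shift."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 when the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, not a universal constant


def needs_retraining_review(baseline, current):
    """Flag the model for human review when the shift exceeds the assumed threshold."""
    return population_stability_index(baseline, current) > DRIFT_THRESHOLD


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    shifted = [0.5 + i / 200 for i in range(100)]
    print(needs_retraining_review(baseline, baseline))
    print(needs_retraining_review(baseline, shifted))
```

A flag from a check like this is best treated as a trigger for human review rather than for automatic retraining.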

Metrics that Matter: Measuring Performance and ROI

To evaluate the human agent vs AI agents dynamic, teams should define both process metrics and business outcomes. Process metrics include cycle time, throughput, error rate, and escalation frequency. Outcome metrics focus on customer satisfaction, decision quality, and alignment with strategic goals. A balanced scorecard approach helps organizations see the full picture: automation speed and consistency alongside human judgment and policy compliance.
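The four process metrics named above can be computed from per-task records. The sketch below assumes a hypothetical `TaskRecord` shape with start and finish timestamps in seconds plus error and escalation flags; field names and units are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    started_s: float    # start time in seconds (assumed unit)
    finished_s: float   # finish time in seconds
    had_error: bool
    escalated: bool     # True if the task was handed to a human


def process_metrics(records: list[TaskRecord], window_s: float) -> dict[str, float]:
    """Summarize cycle time, throughput, error rate, and escalation rate for a time window."""
    n = len(records)
    if n == 0 or window_s <= 0:
        raise ValueError("need at least one record and a positive window")
    return {
        "avg_cycle_time_s": sum(r.finished_s - r.started_s for r in records) / n,
        "throughput_per_hour": n * 3600 / window_s,
        "error_rate": sum(r.had_error for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
    }


if __name__ == "__main__":
    sample = [
        TaskRecord(0, 10, False, False),
        TaskRecord(10, 30, True, True),
        TaskRecord(30, 60, False, False),
        TaskRecord(60, 100, False, True),
    ]
    print(process_metrics(sample, window_s=3600))
```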

ROI for AI agents depends on task selection, tool integration, and governance. Measure time saved on repetitive tasks, reductions in human workload, and improvements in accuracy. Consider the cost of human-in-the-loop interventions, including escalation handling and review overhead. When setting targets, anchor them to business objectives such as faster time-to-market, improved customer experience, or reduced risk exposure. The key is to track both operational efficiency and strategic impact to understand true value.
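A back-of-the-envelope ROI calculation makes the trade-off with human-in-the-loop cost concrete. The formula below is a common simplification (net benefit divided by total cost), and every input and figure is a hypothetical placeholder rather than a benchmark.

```python
def hybrid_roi(hours_saved: float, hourly_cost: float,
               hitl_review_hours: float, platform_cost: float) -> float:
    """Return ROI as a ratio: (benefit - total cost) / total cost.

    Benefit is the labor value of hours saved; total cost is review
    overhead plus tooling and integration spend for the same period.
    """
    benefit = hours_saved * hourly_cost
    total_cost = hitl_review_hours * hourly_cost + platform_cost
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (benefit - total_cost) / total_cost


if __name__ == "__main__":
    # Illustrative monthly figures only.
    roi = hybrid_roi(hours_saved=100, hourly_cost=50,
                     hitl_review_hours=10, platform_cost=1500)
    print(f"ROI: {roi:.0%}")
```

With these placeholder inputs the benefit is 5,000, the total cost is 2,000, and the ratio is 1.5. This captures only operational efficiency; strategic impact such as reduced risk exposure needs separate measures.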

Architecture and Orchestration: Building Hybrid Solutions

Successful hybrid systems rely on clear orchestration between human and AI agents. An event-driven architecture helps trigger AI actions and escalate when needed. Tooling should support API-based interactions, secure authentication, and traceable decision logs. Agent orchestration platforms can coordinate across tasks, monitor performance, and enforce governance policies. The design principle is to separate concerns: AI agents handle execution and data processing, while human agents manage interpretation, strategy, and exception handling.

Key architectural elements include a shared data model, standardized prompts and intents, versioned toolsets, and auditable decision trails. Observability is essential: monitor latency, error rates, and decision quality. A well-structured pipeline also provides fallback options, so if an AI agent cannot resolve a task, the system gracefully hands it to a human agent for intervention. This reduces risk and builds trust with users.
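The fallback path and the decision trail can be sketched together. In this minimal example, `handle`, the resolver callback, the in-memory queue, and the JSON log fields are all hypothetical; a production system would use a durable queue and an append-only log store.

```python
import json
from datetime import datetime, timezone


def handle(task_id, ai_resolver, human_queue, audit_log):
    """Try the AI agent first; hand off to a human on failure and record the decision."""
    try:
        answer = ai_resolver(task_id)
        reason = "ai_resolved" if answer is not None else "ai_declined"
    except Exception as exc:  # any agent failure triggers the fallback
        answer, reason = None, f"ai_error: {exc}"

    handler = "ai_agent" if answer is not None else "human_agent"
    if handler == "human_agent":
        human_queue.append(task_id)

    # One JSON line per decision keeps the trail easy to audit and replay.
    audit_log.append(json.dumps({
        "task_id": task_id,
        "handler": handler,
        "reason": reason,
        "at": datetime.now(timezone.utc).isoformat(),
    }))
    return handler


if __name__ == "__main__":
    def demo_resolver(task_id):
        # Illustrative behavior: resolve t1, decline t2, fail on t3.
        if task_id == "t3":
            raise RuntimeError("timeout")
        return "ok" if task_id == "t1" else None

    queue, log = [], []
    for tid in ("t1", "t2", "t3"):
        print(tid, "->", handle(tid, demo_resolver, queue, log))
    print("human queue:", queue)
```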

Implementation Playbook: Steps to Launch a Hybrid System

1) Inventory tasks and classify them into routine, semi-structured, and high-stakes categories.
2) Define escalation rules, decision boundaries, and governance policies.
3) Select AI agent tools and integrate them with existing systems.
4) Establish data pipelines, access controls, and logging requirements.
5) Pilot the hybrid workflow with a small, controlled dataset and measure performance.
6) Scale gradually, refining decision thresholds and notification rules.
7) Implement ongoing governance reviews and update documentation with lessons learned.
8) Foster a culture of continuous improvement by soliciting feedback from both human and AI operators.

A practical implementation plan reduces risk and accelerates value realization. Focus on early wins that demonstrate measurable improvements in speed and accuracy, then expand to more complex tasks as the model matures. Documentation, governance, and training are essential to keep both human and AI agents aligned with business objectives.

Common Pitfalls and How to Avoid Them

- Over-automation: Automating tasks that require deep domain expertise can lead to wrong conclusions. Ensure human oversight for high-stakes decisions.

- Poor data quality: Garbage in, garbage out. Invest in data cleaning, standardization, and robust data schemas.

- Fragmented tooling: Siloed tools create brittle workflows. Use modular orchestration with clear interfaces and shared data models.

- Insufficient governance: Without guardrails, automation can drift or violate policy. Define escalation criteria, audit trails, and accountability.

- Neglecting change management: People resist automation without proper training and communication. Plan for stakeholder engagement and skill development.

By anticipating these pitfalls and designing with guardrails, teams can build sustainable, high-performing hybrid systems that endure as technology evolves.

Practical Scenarios: Mini Case Studies (Fictional)

Case A: A financial services operations team deploys AI agents to triage client inquiries and draft routine responses. Humans handle complex advisory questions and regulatory checks. The result is faster response times and consistent messaging, while compliance remains under human oversight.

Case B: A software development team uses AI agents to generate boilerplate code, run unit tests, and monitor deployments. Senior engineers review architecture decisions and provide strategic direction. The hybrid setup accelerates delivery without sacrificing architectural integrity.

Case C: A healthcare analytics unit leverages AI agents to preprocess data and surface trends. Clinicians review findings, assess clinical relevance, and decide on follow-up actions. This balance reduces analysis time while preserving patient safety and ethics.

Roadmap and Next Best Practices

As AI agents mature, organizations should emphasize governance, data quality, and human-centered design. Future best practices include developing standardized prompts, improving tool interoperability, and expanding HITL capabilities for edge cases. Continuous learning and feedback loops will help AI agents adapt to changing business needs, while humans refine strategy and ethics in the process. By staying vigilant, organizations can realize the benefits of agentic AI without compromising trust or accountability.

Comparison

| Feature | Human Agent | AI Agents |
|---|---|---|
| Decision speed | Typically slower on routine tasks | Can operate at scale and speed for routine tasks |
| Consistency | High variability due to cognitive factors | High consistency when data and rules are well-defined |
| Context & nuance | Strong on contextual understanding and nuance | Limited by training data and prompts |
| Cost of operation | Labor-intensive, variable cost | Lower variable cost per task with scale |
| Best for | Strategic thinking, stakeholder management, ethics | Data-driven execution, automation of repetitive tasks |

Positives

- AI agents provide speed, scale, and data-driven execution.

- Humans deliver context, empathy, and strategic judgment.

- Hybrid workflows combine strengths for better outcomes.

- Automation reduces repetitive workload and human fatigue.

What's Bad

- AI agents depend on data quality and governance; poor data hurts results.

- Humans can be slower on execution and prone to cognitive bias if not guided.

- Over-reliance on automation can erode critical thinking if not monitored.

Hybrid human-AI systems are the recommended approach for most organizations

AI agents handle routine, data-driven tasks at scale, while humans provide judgment, ethics, and strategic insight. Together, they deliver faster execution with responsible governance and improved decision quality.

Questions & Answers

What is the main difference between a human agent and an AI agent?

A human agent uses contextual understanding, empathy, and strategic thinking, while an AI agent automates routine, data-driven tasks with speed and scale. The most effective systems blend both.

Humans bring judgment and empathy; machines handle repetitive data tasks fast.

Can AI agents fully replace human agents?

No. AI agents excel at execution and data processing, but humans are essential for ethics, strategy, and complex problem-solving. A hybrid approach is typically best.

AI can automate, but humans are needed for judgment.

What is HITL and why is it important?

HITL stands for human-in-the-loop. It ensures that automated decisions receive human oversight in critical scenarios, maintaining accountability and alignment with values.

Human oversight keeps automation honest and safe.

What tasks are best suited for AI agents?

Routine, high-volume, data-driven tasks with clearly defined objectives are ideal for AI agents, especially when speed and consistency matter.

Let the machines handle the repetitive stuff.

What risks should organizations watch for with AI agents?

Key risks include data privacy, bias, model drift, and governance gaps. Proper policies, audits, and monitoring mitigate these risks.

Watch out for privacy and bias; keep governance tight.

How do you measure the ROI of AI agents?

ROI assessment combines time savings, error reductions, and improvements in decision quality, balanced by HITL costs and integration overhead.

Value comes from speed, accuracy, and smart governance.

Key Takeaways

- Define clear HITL boundaries and escalation rules

- Map tasks by complexity to allocate to AI or humans

- Invest in governance, transparency, and auditability

- Prioritize data quality and tool interoperability

- Start with high-impact pilot projects and scale incrementally