Which AI Agent Is Better: A Practical Comparison for 2026

A criteria-based comparison of AI agent types to help developers and leaders decide which AI agent is better for their goals. Includes evaluation criteria, a feature table, and practical guidance.

TL;DR: Which AI agent is better depends on your goals. For reliability, explainability, and governance, policy-driven agents usually win. For complex reasoning and flexible tool use, learning-based agents tend to perform better. Hybrid orchestrators can balance both strengths. In 2026, Ai Agent Ops suggests choosing the agent type based on task requirements, data availability, latency, and governance needs.

Why the Question 'Which AI Agent Is Better' Matters in 2026

In a multi-domain AI landscape, deciding which AI agent is better is not a trivia exercise: it determines how quickly a team can automate decisions, scale operations, and maintain governance. According to Ai Agent Ops, the best choice depends on your goals, data availability, latency constraints, and risk appetite. The question matters beyond hype because the decision shapes team structure, tooling, and long-term maintenance. The answer will vary by domain, whether customer service, compliance, product automation, or R&D. By anchoring the discussion to concrete criteria (governance, explainability, latency, data needs, and cost), you can compare agent types in a transparent way. Ai Agent Ops provides a framework to assess strengths and trade-offs across policy-driven, learning-based, and hybrid agents.

What 'Better' Means for AI Agents

In practice, 'better' is a function of several criteria that matter to teams and stakeholders. Reliability, explainability, and governance are critical in regulated environments; latency and throughput matter for real-time workflows; data requirements influence how quickly you can deploy; and total cost of ownership affects long-term sustainability. This section clarifies how to translate abstract goals into concrete metrics so you can measure which AI agent is better for your specific context. Success is also defined differently by product teams, security officers, and executives, so aligning incentives across stakeholders is essential to avoid conflicting conclusions. Before asking which AI agent is better, teams should agree on success metrics that map to business outcomes.

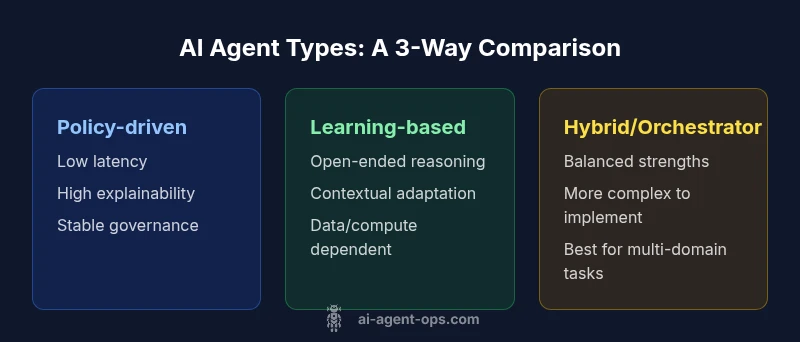

Core Differentiation: Policy-Driven vs Learning-Based vs Hybrid (Which AI Agent Is Better for Different Tasks)

Policy-driven, learning-based, and hybrid agents represent the three main archetypes. Policy-driven agents rely on explicit rules and decision trees; learning-based agents leverage statistical models and data; hybrid agents combine both under orchestrated control. To decide which AI agent is better for your task, consider the nature of the work (structured vs unstructured), the need for explainability, and how much you can invest in data and compute. In some cases, the difference is not a matter of quality but of risk tolerance and speed to value. Ai Agent Ops emphasizes evaluating these archetypes against the same criteria to ensure a fair comparison. When someone asks which AI agent is better, the decisive factors are governance requirements, data access, and maintenance overhead.

When to Use a Policy-Driven Agent (Rule-based)

Policy-driven agents are built from explicit rules, eligibility checks, and deterministic outcomes. They shine in environments where compliance, auditability, and predictable behavior are non-negotiable. For tasks like document routing, simple decision trees, or escalation logic, policy-driven agents offer low latency and high transparency. The trade-offs include limited adaptability to novel inputs and slower evolution when business rules change. If you need strong controls and clear traceability, this is often the preferred starting point. In many organizations, policy-driven layers act as the backbone of an automated pipeline, with other agents augmenting or validating results.
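As a rough illustration of the explicit rules and deterministic outcomes described above, here is a minimal Python sketch of a first-match rule engine for document routing. The rule names, input fields (`has_pii`, `amount`, `type`), and outcomes are hypothetical and chosen only for the example; a real deployment would load rules from a reviewed policy source.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    name: str                        # identifier recorded for traceability
    applies: Callable[[dict], bool]  # deterministic predicate over the input
    outcome: str                     # decision returned when the rule fires


# Hypothetical routing rules, evaluated in order; the first match wins.
RULES = [
    Rule("contains_pii", lambda doc: doc.get("has_pii", False), "route_to_compliance"),
    Rule("high_value", lambda doc: doc.get("amount", 0) > 10_000, "escalate_to_manager"),
    Rule("invoice", lambda doc: doc.get("type") == "invoice", "route_to_finance"),
]


def decide(doc: dict) -> tuple[str, Optional[str]]:
    """Return (outcome, rule_name); rule_name is None when no rule matched."""
    for rule in RULES:
        if rule.applies(doc):
            return rule.outcome, rule.name
    # Novel inputs fall through to a fixed default instead of a guess.
    return "manual_review", None
```

Returning the name of the rule that fired alongside the outcome is what makes each decision traceable. The fall-through to `manual_review` shows the adaptability limit: inputs that no rule anticipates are not handled automatically.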

When to Use a Learning-Based Agent (LLMs, RL)

Learning-based agents excel at open-ended tasks, natural language understanding, and complex reasoning across diverse domains. They adapt to new inputs, summarize information, and generate insights that rules alone cannot. The caveats include potential variability in outputs, the need for data governance, and monitoring for model drift or hallucinations. For product discovery, customer insights, or autonomous assistance that requires context-aware dialogue, learning-based agents can unlock substantial value. Implementations often rely on prompt engineering, retrieval-augmented generation, and continuous evaluation to manage risk.

The Hybrid/Orchestrator Approach: Best of Both Worlds

Hybrid or orchestrated agents combine explicit rules with learning-based components, guided by a centralized decision layer. This approach seeks to deliver reliability and explainability where it matters while preserving adaptability for unstructured inputs. The hybrid model can route straightforward decisions to policy-driven components and escalate complex tasks to learning-based reasoning modules. The challenge is design complexity and integration overhead, but the payoff is a versatile system that remains auditable and controllable while handling ambiguity. For many teams, the orchestrator approach offers the most robust path to scale across domains.
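The routing idea can be sketched in a few lines of Python. This is an assumption-laden illustration, not a reference design: `policy_component` is a stand-in lookup table, `learning_component` is a stub that fakes a model call and a confidence value, and the `min_confidence` threshold with a human fallback is an added guardrail for the example.

```python
from typing import Optional


def policy_component(task: dict) -> Optional[str]:
    """Stand-in rule layer: decide known, structured task kinds, else None."""
    known = {
        "password_reset": "send_reset_link",
        "refund_under_limit": "approve_refund",
    }
    return known.get(task.get("kind"))


def learning_component(task: dict) -> tuple[str, float]:
    """Stub for a model call; returns (answer, confidence). Not a real model."""
    text = task.get("text", "")
    confidence = 0.9 if len(text.split()) >= 5 else 0.4
    return f"draft reply for: {text}", confidence


def orchestrate(task: dict, min_confidence: float = 0.7) -> dict:
    """Try the rule layer first, then the model, then hand off to a human."""
    decision = policy_component(task)
    if decision is not None:
        return {"handled_by": "policy", "decision": decision}
    answer, confidence = learning_component(task)
    if confidence < min_confidence:
        return {"handled_by": "human", "decision": "escalate", "confidence": confidence}
    return {"handled_by": "learning", "decision": answer, "confidence": confidence}
```

Recording `handled_by` on every result keeps the system auditable: reviewers can see which component produced each decision and how often tasks fall through to the model or to a person.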

Evaluation Framework: How to Compare Agents in Practice

A rigorous evaluation starts with a shared scoring system across all agent types. Define success metrics aligned with business outcomes: accuracy, consistency, latency, explainability, and governance readiness. Use realistic scenarios and test datasets to measure performance under edge cases. Establish a pilot plan with clear success criteria, a rollback path, and measurable risk controls. Document your assumptions and ensure stakeholders agree on definitions of 'better' before running experiments. AI agents should be assessed on both technical metrics and organizational impact, including security, compliance, and user satisfaction. In short, evaluating which AI agent is better requires both quantitative benchmarks and qualitative judgments.
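One simple way to implement a shared scoring system is a weighted sum over the agreed criteria. The sketch below assumes scores on a common scale (for example 0 to 5) and uses illustrative weights; both the weights and the criterion keys are placeholders to be replaced by whatever your stakeholders agree on.

```python
# Illustrative weights only (an assumption, not a recommendation); they sum to 1.0.
WEIGHTS = {
    "accuracy": 0.30,
    "consistency": 0.20,
    "latency": 0.20,
    "explainability": 0.15,
    "governance": 0.15,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-criterion scores into one number using the shared weights."""
    if set(scores) != set(weights):
        # Every candidate must be scored on exactly the same criteria.
        raise ValueError("scores and weights must cover the same criteria")
    return sum(weights[c] * scores[c] for c in weights)


def rank(candidates: dict, weights: dict = WEIGHTS) -> list:
    """Return candidate names ordered from highest to lowest weighted score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name], weights),
        reverse=True,
    )
```

The `ValueError` enforces the fairness condition: no candidate can be ranked unless it was scored on every criterion the others were. A single number never replaces the qualitative review, but it makes disagreements about weights explicit.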

Practical Scenarios: Industry Examples

Consider a financial services firm evaluating risk monitoring. A policy-driven agent can enforce regulatory rules with traceable decisions, while a learning-based agent might detect anomalies that rules miss. A hybrid approach could flag high-risk cases for human review while autonomously handling routine screening. In a customer support center, a learning-based agent might handle nuanced conversations; a policy-driven layer can guarantee policy compliance for sensitive topics. In healthcare, where patient data privacy and explainability are paramount, a hybrid system with strong auditing could meet both safety and reliability requirements. Across sectors, tailoring the mix of agent archetypes to the task reduces risk and accelerates value.

Potential Pitfalls and How to Mitigate

Relying on a single archetype without governance leads to brittle systems and hidden risks. Hallucinations in learning-based agents, rule drift in policy-driven approaches, and integration delays in hybrids are common pitfalls. Mitigate them by implementing comprehensive monitoring, automated testing, and clear escalation paths. Establish data governance, privacy protections, and security review cycles. Regularly review the alignment between business goals and agent behavior, and update evaluation criteria as needs evolve. A thoughtful approach to risk management makes it feasible to compare which AI agent is better in a real-world setting.

Decision Checklist: A 12-Point Guide

- Define the primary business objective for the agent.

- List required governance, compliance, and audit needs.

- Characterize data availability and quality.

- Establish latency and scalability targets.

- Assess explainability and traceability requirements.

- Map tasks to policy-driven, learning-based, or hybrid categories.

- Plan a pilot with agreed success metrics.

- Build monitoring for drift, bias, and unsafe outputs.

- Create a clear rollback and governance protocol.

- Align incentives across product, security, and operations.

- Budget for tooling, data, and compute.

- Iterate based on pilot results and stakeholder feedback.

Implementation Considerations: Governance, Data, and Security

Implementation decisions at the integration layer impact long-term viability. Prioritize data minimization, secure access controls, and encryption in transit and at rest. Design for auditable decision traces and robust logging. Define guardrails to prevent unintended consequences, such as feedback loops or data leakage. Establish a policy review cadence to maintain regulatory alignment and adapt to evolving requirements. When selecting which AI agent is better for your organization, ensure that governance, data integrity, and security receive equal weight to performance metrics.
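An auditable decision trace can be as simple as one structured log record per decision. The following Python sketch uses only the standard library; the field names and the logger name `agent.audit` are assumptions made for the example. It stores a SHA-256 digest of the input instead of the raw payload as a data-minimization measure, though a digest alone does not anonymize low-entropy inputs.

```python
import hashlib
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("agent.audit")  # hypothetical logger name


def audit_record(agent: str, decision: str, reason: str, payload: dict) -> dict:
    """Build a decision trace that references the input by hash only."""
    # Sorting keys makes the digest independent of dict insertion order.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "decision": decision,
        "reason": reason,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
    }


def log_decision(agent: str, decision: str, reason: str, payload: dict) -> dict:
    """Emit the trace as one JSON line and return it for further handling."""
    record = audit_record(agent, decision, reason, payload)
    logger.info(json.dumps(record))
    return record
```

Emitting one JSON object per line keeps the trail easy to ship to whatever log store the organization already audits, and the `reason` field is where a rule name or a model version would be recorded.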

Next Steps: Planning a Pilot to Decide Which AI Agent Is Better

After identifying candidate archetypes, design a controlled pilot with real-world tasks and measurable outcomes. Use a fixed evaluation timeframe, a small, representative dataset, and a clear success threshold. Involve stakeholders from product, security, and legal to ensure governance criteria are baked in. Use the pilot to refine your decision criteria and validate assumptions before scaling. The goal is to move from theoretical debates to tangible, auditable results that answer which AI agent is better for your exact needs.
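A clear success threshold is easiest to enforce when it is written down as a check before the pilot starts. The sketch below is one hypothetical shape for that check: the `PilotResult` fields and the default thresholds (90% accuracy, 500 ms p95 latency) are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    correct: int           # tasks the agent handled correctly
    total: int             # tasks attempted during the fixed timeframe
    p95_latency_ms: float  # 95th percentile response time


def meets_threshold(
    result: PilotResult,
    min_accuracy: float = 0.90,         # placeholder threshold
    max_p95_latency_ms: float = 500.0,  # placeholder threshold
) -> bool:
    """Return True only if every agreed criterion is satisfied."""
    if result.total == 0:
        return False  # an empty pilot cannot pass
    accuracy = result.correct / result.total
    return accuracy >= min_accuracy and result.p95_latency_ms <= max_p95_latency_ms
```

Fixing the thresholds in code before results come in keeps the decision auditable and makes it harder to move the goalposts after the fact.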

Feature Comparison

| Feature | Policy-driven Agent | Learning-based Agent | Hybrid/Agent Orchestrator |
|---|---|---|---|
| Decision latency | Low and predictable due to rules | Moderate to high depending on model complexity | Balanced with orchestration and caching |
| Data requirements | Structured inputs, explicit schemas | Large data and compute for training and inference | Requires both rules and data pipelines |
| Explainability | High: traceable rules | Low to moderate: model reasoning may be opaque | High: combines rules with model traces |
| Flexibility | Low: best for routine tasks | High: adapts to diverse inputs | High: best of both worlds |
| Best for | Repeatable, compliance-heavy tasks | Open-ended reasoning and language tasks | Multi-domain, adaptable automation |
| Cost/Resource footprint | Predictable maintenance with lower compute | Variable depending on data and model usage | Moderate to high due to integration and tooling |

Positives

- Clear governance and compliance with policy-driven agents

- High explainability and auditable decisions

- Low initial data requirements for simple tasks

- Fast deployment for routine workflows

Negatives

- Limited adaptability to unforeseen inputs

- Ongoing rule maintenance can be costly

- Model-based benefits require data governance and monitoring

- Hybrid setups add integration complexity

Hybrid/Orchestrator typically offers the best balance for mixed workloads

Policy-driven agents excel where governance is paramount; learning-based agents shine for open-ended tasks. The orchestrator approach integrates both strengths, reducing risk while delivering flexibility.

Questions & Answers

What factors determine which AI agent is better?

Choosing between agent types comes down to governance needs, data availability, required explainability, and the acceptable risk level. Consider your latency targets and maintenance capacity as well. Clear alignment with business outcomes helps determine which AI agent is better for your context.

Key factors include governance, data, explainability, and latency. Align these with your business goals to pick the best option.

Can I switch between agent types mid-project?

Yes, but it requires careful planning. Start with a modular architecture, use adapters to swap components, and run parallel pilots to measure impact. Governance and data provenance become critical during transitions.

You can switch, but plan, test, and monitor carefully.
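The modular architecture with adapters mentioned in this answer could look like the following Python sketch. Everything here is hypothetical: the `Agent` protocol, the `RuleAgent` threshold, and the `complete(prompt)` method on the model client are assumptions for illustration, and `FakeClient` stands in for a real model so the example runs offline.

```python
from typing import Protocol


class Agent(Protocol):
    """Common interface so agent types can be swapped without touching callers."""

    def handle(self, task: dict) -> str: ...


class RuleAgent:
    def handle(self, task: dict) -> str:
        # Hypothetical rule: small amounts are approved, the rest reviewed.
        return "approve" if task.get("amount", 0) <= 100 else "review"


class ModelAgentAdapter:
    """Adapts a model client exposing `complete(prompt)` (assumed) to Agent."""

    def __init__(self, client) -> None:
        self._client = client

    def handle(self, task: dict) -> str:
        return self._client.complete(f"Decide: {task}")


class FakeClient:
    """Offline stand-in for a model client; always answers 'review'."""

    def complete(self, prompt: str) -> str:
        return "review"


def run_parallel(task: dict, current: Agent, candidate: Agent) -> dict:
    """Run both agents on the same task and record whether they agree."""
    a, b = current.handle(task), candidate.handle(task)
    return {"current": a, "candidate": b, "agree": a == b}
```

Running the current and candidate agents side by side on the same tasks gives a measured disagreement rate before any traffic is switched over, which is the point of the parallel pilot.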

Are there trade-offs between latency and accuracy?

Often yes. Policy-driven components favor latency and predictability, while learning-based systems can improve accuracy with more data but may incur higher latency. A hybrid approach can balance both but adds complexity.

Latency and accuracy trade-offs depend on the architecture; hybrids balance both, with some complexity.

How do governance and compliance affect agent selection?

Governance shapes choice toward agents with traceable decisions, auditable logs, and strict access controls. In high-risk domains, policy-driven layers often dominate to ensure compliance and accountability.

Governance pushes you toward transparent, auditable decisions and strict controls.

What role do data quality and privacy play?

Data quality directly affects model behavior and decision reliability. Privacy concerns drive architecture choices, data handling practices, and access controls. Clean, compliant data improves outcomes for any agent type.

High-quality, privacy-compliant data improves reliability and reduces risk for all agent types.

What is a practical pilot plan to compare agents?

Define a focused scope with measurable success criteria, select representative tasks, and run concurrent pilots. Use consistent evaluation metrics, document assumptions, and plan a staged rollout based on results.

Run a focused pilot with clear metrics and a staged rollout.

Key Takeaways

- Define goals before selecting an agent type

- Map tasks to policy-driven, learning-based, or hybrid approaches

- Prioritize governance, explainability, and data controls

- Pilot with a small scope before full-scale rollout

- Invest in monitoring and safeguards for agent behavior