How Is an AI Agent Different from ChatGPT? A Practical Comparison

Explore how AI agents differ from ChatGPT in autonomy, tooling, and workflow integration. An analytical guide for developers and leaders evaluating agentic AI approaches.

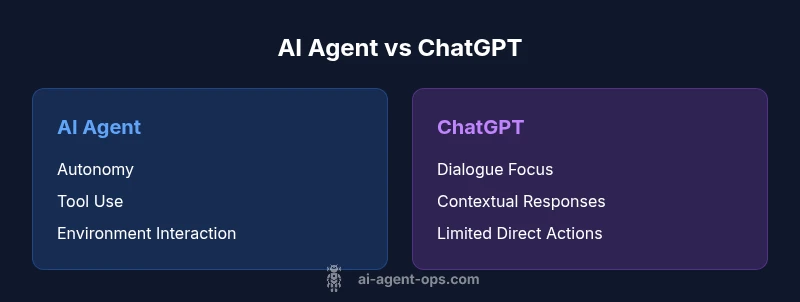

The short answer is that AI agents extend beyond chat-based interactions by adding autonomy, tool use, and environment interaction. While ChatGPT excels at natural-language conversation and general reasoning, AI agents are designed to take goal-directed actions, reason about the world, and orchestrate tools or services to accomplish tasks. This distinction matters for teams planning automation, workflow orchestration, or real-time decision-making in complex environments.

Introduction: Framing the question in context

When someone asks how an AI agent differs from ChatGPT, they're comparing two ends of the AI spectrum. ChatGPT operates as a powerful conversational model optimized for language understanding and generation. An AI agent, by contrast, combines a cognitive layer with action-oriented components that can interact with software, services, and data sources. In practical terms, AI agents are built to execute tasks with a degree of autonomy, while ChatGPT primarily assists through dialogue. This matters when designing intelligent systems, automating business processes, and building agentic AI workflows. According to Ai Agent Ops, understanding these differences helps teams select the right tool for the job and avoid overreliance on a chat-centric paradigm when real-world action is required.

Core Concepts: What each term means

ChatGPT refers to a chat experience built around a large language model that excels at language tasks, prompted reasoning, and context-aware responses. AI agents extend that capability by adding a decision-making loop, tool integration (APIs, databases, apps), and memory that persists state across interactions. The result is a system that can set goals, choose actions, and measure outcomes, rather than merely respond to prompts. For developers, the key takeaway is that agents are designed for execution, while chat models are designed for conversation.
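The decision loop, tool integration, and memory described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Agent` class, the two tool functions, and the hard-coded `choose_action` rule are all hypothetical stand-ins (in a real system a language model would typically make the decision step).

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tools: plain functions standing in for APIs, databases, or apps.
def lookup_inventory(item: str) -> int:
    stock = {"widget": 3, "gadget": 0}
    return stock.get(item, 0)

def place_order(item: str) -> str:
    return f"ordered:{item}"

TOOLS: dict[str, Callable[[str], object]] = {
    "lookup_inventory": lookup_inventory,
    "place_order": place_order,
}

@dataclass
class Agent:
    goal_item: str  # the goal: make sure this item is in stock
    # Memory: every (action, result) pair the agent has produced so far.
    memory: list = field(default_factory=list)

    def choose_action(self) -> Optional[str]:
        """Stand-in for the model's decision step: pick the next tool or stop."""
        done = {name for name, _ in self.memory}
        if "lookup_inventory" not in done:
            return "lookup_inventory"
        stock = dict(self.memory)["lookup_inventory"]
        if stock == 0 and "place_order" not in done:
            return "place_order"
        return None  # nothing left to do

    def run(self, max_steps: int = 5) -> list:
        for _ in range(max_steps):  # bounded loop so the agent cannot run forever
            action = self.choose_action()
            if action is None:
                break
            result = TOOLS[action](self.goal_item)  # act through a tool
            self.memory.append((action, result))    # record the outcome
        return self.memory
```

Calling `Agent("gadget").run()` looks up the stock, sees it is zero, and places an order; `Agent("widget").run()` stops after the lookup because no further action is needed. A chat model alone would only describe these steps in text.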

Comparison

| Feature | AI agent | ChatGPT |
|---|---|---|
| Autonomy | Supports goal-directed actions with decision loops | Primarily interactive dialogue with user prompts |
| Environment Interaction | Can call tools, APIs, and external services | No direct tool usage; relies on user prompts |
| Memory & State | Maintains state across sessions and tasks | Limited persistent memory; context resets with new sessions |
| Tooling & Orchestration | Orchestrates multi-step workflows and tool use | Generates responses without orchestrating external steps |
| Decision Speed & Latency | Operates in real-time with action feedback | Latency mostly tied to prompts and responses |
| Use Case Fit | Automation, integration-heavy processes, agentic workflows | Conversation-centric help, content generation, guidance |

Positives

- Enables automation and real-world task execution

- Supports modular, composable workflows with tools

- Improves consistency by persisting state and decisions

- Suitable for enterprise-grade agentic AI implementations

Negatives

- Higher complexity and governance needs

- Requires tooling, infrastructure, and monitoring

- Potential security and data-access risks with tool usage

- Steeper learning curve for teams new to agents

AI agents win for execution and orchestration; ChatGPT wins for conversational depth

If your goal is to automate tasks across services, prefer an AI agent with tooling. If your priority is natural, nuanced dialogue, ChatGPT remains strong. For many teams, a hybrid approach offers the best of both worlds.
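One way to picture the hybrid approach is a small router that sends action-oriented requests to an agent and everything else to a chat model. The sketch below is only an assumption about how such routing might look: the `ACTION_VERBS` set and the keyword matching are deliberately crude placeholders, and a production system would more likely use a classifier or the model itself to decide.

```python
# Hypothetical verbs that suggest the request needs an action, not just an answer.
ACTION_VERBS = {"create", "update", "delete", "deploy", "schedule", "send"}

def needs_action(request: str) -> bool:
    """Return True if any word in the request is a known action verb."""
    words = {w.strip(".,!?").lower() for w in request.split()}
    return bool(words & ACTION_VERBS)

def route(request: str) -> str:
    """Pick a handler name: 'agent' for action requests, 'chat' otherwise."""
    return "agent" if needs_action(request) else "chat"
```

With this rule, `route("Please schedule a backup for tonight.")` selects the agent path, while `route("Explain what a vector database is.")` stays on the chat path.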

Questions & Answers

What exactly is an AI agent, and how does it differ from a chatbot?

An AI agent combines perception, decision-making, and action. It can call tools, manage state, and execute tasks autonomously. A chatbot such as ChatGPT excels at language understanding and generation but typically does not perform external actions on its own; it needs explicit prompts or middleware to do so.

An AI agent can act and use tools, while a chatbot mainly chats. Think of agents as workers and chatbots as advisers.

Can a ChatGPT-based system be upgraded to behave like an AI agent?

You can extend a ChatGPT-based system with plugins, tool integrations, and memory modules to approach agent-like behavior. However, achieving robust autonomy and governance requires architectural changes beyond a pure chat interface.

You can add tools and memory, but true autonomy needs a broader agent framework.
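The kind of extension described here can be sketched as a wrapper that lets a chat model request a tool, runs the tool, and feeds the result back into the conversation history. Everything below is hypothetical: `fake_chat_model` stands in for a real model call, the JSON shape (`tool`, `args`, `answer`) is an assumed convention rather than any vendor's API, and `get_time_zone` is a toy tool.

```python
import json

def fake_chat_model(messages: list) -> str:
    """Hypothetical stand-in for a chat model call; returns JSON text."""
    last = messages[-1]
    if last["role"] == "user":
        # The model decides it needs a tool before it can answer.
        return json.dumps({"tool": "get_time_zone", "args": {"city": "Lisbon"}})
    # Once a tool result is in the history, answer in plain language.
    return json.dumps({"answer": f"The time zone is {last['content']}."})

def get_time_zone(city: str) -> str:
    return {"Lisbon": "Europe/Lisbon"}.get(city, "unknown")

TOOLS = {"get_time_zone": get_time_zone}

def run_with_tools(user_text: str, history: list, max_turns: int = 3) -> str:
    """Loop between model and tools; `history` acts as the memory module."""
    history.append({"role": "user", "content": user_text})
    for _ in range(max_turns):  # turn limit as a basic safeguard
        reply = json.loads(fake_chat_model(history))
        if "answer" in reply:
            history.append({"role": "assistant", "content": reply["answer"]})
            return reply["answer"]
        result = TOOLS[reply["tool"]](**reply["args"])  # execute the requested tool
        history.append({"role": "tool", "content": result})
    return "Stopped: turn limit reached."
```

The wrapper adds tool use and a shared history, but planning, error recovery, and governance still sit outside it, which is the architectural gap the answer above points to.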

What are common use cases for AI agents?

AI agents shine in process automation, decision support with actionable outcomes, and orchestrating multi-step workflows across services. Typical scenarios include data ingestion, task delegation, and automated remediation tasks.

Automation across apps and services is where agents excel.

What governance considerations matter for AI agents?

Governance includes access control, data privacy, audit trails, and fail-safes. Monitoring for unintended actions and ensuring compliance with policies helps mitigate risk in agent-enabled systems.

Control who can instruct the agent and what it can access.
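Access control, audit trails, and fail-safes can be combined in a thin guard around every tool call. The sketch below assumes a simple role-to-tool allowlist; the role names, tool names, and the `guarded_call` helper are illustrative, not part of any specific framework.

```python
from datetime import datetime, timezone

# Hypothetical allowlist: which role may invoke which tool.
PERMISSIONS = {
    "analyst": {"read_report"},
    "operator": {"read_report", "restart_service"},
}

audit_log: list = []  # in practice this would go to durable, append-only storage

def guarded_call(role: str, tool: str, run) -> str:
    """Check permission, record the attempt, then run the tool if allowed."""
    allowed = tool in PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool,
        "allowed": allowed,
    })
    if not allowed:
        return "denied"
    try:
        return run()
    except Exception:
        # Fail-safe: a broken tool must not crash the agent loop.
        return "failed"
```

Every attempt is logged before the permission decision takes effect, so denied requests appear in the audit trail alongside successful ones.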

Is an AI agent always better than a ChatGPT-based solution?

Not necessarily. For dialogue-heavy tasks, ChatGPT may be preferable due to conversational quality. For automation and tool usage, AI agents typically outperform chat-only approaches.

It depends on the job: conversation vs action and automation.

Key Takeaways

- Identify whether outcomes require action beyond dialogue

- Evaluate tooling needs before choosing an approach

- Plan for governance, security, and monitoring from day one

- Consider a hybrid architecture to combine strengths

- Benchmark with realistic workflows to avoid overengineering