AI Agent Like GPT: A Comparative Guide for Teams

This analytic comparison explains GPT-like AI agents, how they differ from traditional agents, and practical guidance for product teams evaluating adoption, governance, and integration in real projects.

An AI agent like GPT is a GPT-driven agent that can plan, decide, and act across tasks using tools and memory. Compared to traditional chatbots, it emphasizes autonomy, goal-directed execution, and multi-step workflows. For teams, the choice hinges on governance, integration, and cost. This guide helps you judge capabilities, trade-offs, and best practices for adoption in real projects.

Introduction and Context

The term "AI agent like GPT" refers to a GPT-inspired agent framework that combines large language model reasoning with action execution, memory, and tool use. In practice, such agents can plan, decide, and perform steps to achieve goals, sometimes without direct human prompts. For developers and leaders evaluating automation, this distinction matters. According to Ai Agent Ops, the emergence of agentic AI marks a shift from chat-only assistants to proactive orchestrators capable of chaining tasks, querying data sources, and triggering external services. The result is a more capable, but also more complex, class of automation that requires careful governance, safety, and integration planning. In this article, we compare GPT-like agents with traditional AI approaches, highlight practical use cases, and outline decision criteria to help teams choose the right approach for their product and business goals.

How a GPT-like AI agent is built

A GPT-like AI agent follows a loop of planning, acting, and learning from outcomes. The core components include a task planner (which maps goals to subgoals), an action engine (to invoke tools or APIs), a memory layer (to retain state across sessions), and a safety/shaping layer (to constrain behavior). Developers tailor prompts to establish a goal hierarchy, define acceptable tools, and set guardrails. The agent’s reasoning is often grounded in a mix of symbolic planning and probabilistic inference, enabling it to decide when to consult data sources, when to request user input, and when to execute a sequence of steps autonomously. This architecture enables complex workflows like order processing, data synthesis, and multi-system orchestration, but it also increases the importance of monitoring, testing, and governance.
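The plan-act-remember loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the `Agent` class, the hard-coded `plan` method, and the tool names `lookup_order` and `summarize` are all hypothetical, and a real agent would delegate planning to a language model rather than a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical tool type: takes a string argument, returns a string result.
Tool = Callable[[str], str]


@dataclass
class Agent:
    tools: Dict[str, Tool]   # action engine: the tools the agent may call
    max_steps: int = 5       # guardrail: hard cap on autonomous steps
    # memory layer: one (tool, argument, result) record per attempted step
    memory: List[Tuple[str, str, str]] = field(default_factory=list)

    def plan(self, goal: str) -> List[Tuple[str, str]]:
        """Stand-in planner (assumption): a real agent would ask a language
        model to break the goal into subgoals. Here the plan is fixed."""
        return [("lookup_order", goal), ("summarize", goal)]

    def run(self, goal: str) -> List[str]:
        results: List[str] = []
        for step, (tool_name, arg) in enumerate(self.plan(goal)):
            if step >= self.max_steps:
                break
            if tool_name not in self.tools:
                # safety layer: tools outside the allowed set are refused
                self.memory.append((tool_name, arg, "REFUSED"))
                continue
            result = self.tools[tool_name](arg)
            self.memory.append((tool_name, arg, result))
            results.append(result)
        return results


# Only one tool is registered, so the planned "summarize" step is refused.
agent = Agent(tools={"lookup_order": lambda order_id: f"order {order_id}: shipped"})
outputs = agent.run("A-100")
```

Keeping the allowed tools in an explicit dictionary means the guardrail is enforced in code rather than only in the prompt, and the memory list doubles as a trace of what the agent attempted.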

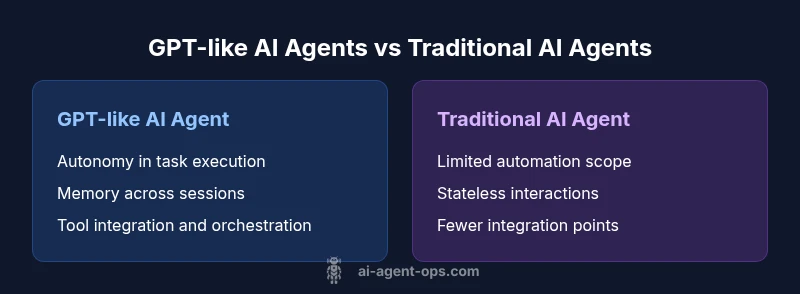

Core differences between AI agents and traditional chat models

Traditional chat models excel at natural language interaction but treat tasks as isolated conversations. GPT-like agents, by contrast, maintain context across steps, make plans, and execute actions via tools or APIs. They incorporate explicit state management, memory, and goal-driven reasoning, enabling longer, more productive workflows. Key differences include autonomy (the agent can act without continuous prompts), tool use (integration with databases, CRMs, or monitoring systems), memory (retaining relevant facts over time), and governance requirements (clear accountability, change control, and risk management).

Use cases across industries

Across industries, GPT-like agents support domains such as customer support automation, procurement optimization, data analysis, and software development workflows. In customer support, agents can triage tickets, retrieve order histories, and escalate when necessary. In product teams, agents can draft user stories, estimate priorities, and orchestrate microservices to fetch real-time data. In R&D, agents can pull from internal knowledge bases, reason about trade-offs, and propose experimental plans. The broad applicability comes with caveats: ensure data access is compliant, tool interfaces are stable, and monitoring is in place to detect drift or unsafe behavior.

Governance, safety, and compliance considerations

With greater autonomy comes greater responsibility. Implement strict access controls, audit trails, and role-based governance. Define boundaries for tool usage, data exfiltration limits, and fail-safe overrides. Implement sandbox environments for testing, plus a robust incident response plan. Regularly review model behavior against privacy regulations and industry standards. In practice, you should establish a governance charter, assign ownership for capabilities, and implement telemetry that surfaces decision rationales and outcomes for audits.
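One way to picture role-based tool boundaries and an audit trail is the sketch below. It assumes a hypothetical in-memory `POLICY` table and `audit_log` list; the role and tool names are invented for illustration, and a production system would load policy from configuration and write to an append-only store.

```python
import json
import time
from typing import Dict, List, Set

# Hypothetical role-to-tool policy (assumption, not from any real product).
POLICY: Dict[str, Set[str]] = {
    "support_agent": {"read_order", "create_ticket"},
    "analyst_agent": {"read_order"},
}

# Stand-in for an append-only audit store.
audit_log: List[str] = []


def authorize(role: str, tool: str, rationale: str) -> bool:
    """Check the policy and record the decision, whether allowed or denied."""
    allowed = tool in POLICY.get(role, set())
    audit_log.append(json.dumps({
        "ts": time.time(),
        "role": role,
        "tool": tool,
        "allowed": allowed,
        "rationale": rationale,  # the agent's stated reason, kept for audits
    }))
    return allowed


ok = authorize("analyst_agent", "read_order", "user asked for order status")
denied = authorize("analyst_agent", "create_ticket", "agent wants to escalate")
```

Logging denied requests alongside allowed ones matters here: the rate of denied requests is itself a safety signal worth tracking.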

Technical prerequisites and integration patterns

Successful deployment requires a layered architecture: an orchestration layer, a GPT-like reasoning layer, tool adapters, data sources, and observability. Integration patterns include event-driven messaging, API gateways, and stateful workflows that resume after interruptions. You’ll want a reliable data plane with governance, a secure credential store for tools, and a monitoring stack that tracks latency, success rates, and safety alerts. Start with a minimal viable agent to prove the core loop, then incrementally add integrations, memory, and governance controls.
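The idea of a stateful workflow that resumes after an interruption can be sketched with a simple file checkpoint. Everything here is an illustrative assumption: the `run_workflow` function, the JSON checkpoint format, and the `fetch` and `flaky` steps are hypothetical, and a real deployment would more likely rely on a workflow engine or a database.

```python
import json
import os
import tempfile
from typing import Callable, Dict, List


def run_workflow(steps: List[Callable[[], str]], checkpoint_path: str) -> List[str]:
    """Run steps in order, saving progress after each one so that a
    restart continues from the first step that has not completed."""
    state = {"next_step": 0, "results": []}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)
    for i in range(state["next_step"], len(steps)):
        state["results"].append(steps[i]())
        state["next_step"] = i + 1
        with open(checkpoint_path, "w") as f:
            json.dump(state, f)
    return state["results"]


# Demo: the second step fails once, simulating an interruption.
calls: Dict[str, int] = {"fetch": 0, "flaky": 0}


def fetch() -> str:
    calls["fetch"] += 1
    return "fetched"


def flaky() -> str:
    calls["flaky"] += 1
    if calls["flaky"] == 1:
        raise RuntimeError("simulated interruption")
    return "processed"


path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
try:
    run_workflow([fetch, flaky], path)
except RuntimeError:
    pass  # the process would normally crash or be restarted here
results = run_workflow([fetch, flaky], path)
```

On the second call the workflow skips `fetch`, because its result is already in the checkpoint, and retries only the step that failed.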

Evaluation and metrics for GPT-like agents

Measurement should focus on task completion, reliability, latency, and governance outcomes. Define success criteria for each workflow: accuracy of conclusions, time-to-completion, and the agent’s ability to recover from errors. In addition, track safety indicators such as the rate of restricted actions, data access violations, and escalation frequency. Establish baselines using controlled experiments, then monitor drift as you scale across teams and use cases. Transparent dashboards help teams tune prompts, adapters, and guardrails over time.
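As a rough sketch of how these metrics might be computed from run logs, the example below aggregates a handful of records. The `RunRecord` fields and the sample values are hypothetical and exist only to show the arithmetic.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class RunRecord:
    completed: bool          # did the agent finish the task?
    latency_s: float         # wall-clock time for the run, in seconds
    restricted_actions: int  # attempts blocked by guardrails
    escalated: bool          # was the run handed to a human?


def summarize(runs: List[RunRecord]) -> Dict[str, float]:
    """Aggregate per-run records into dashboard-style metrics."""
    n = len(runs)
    if n == 0:
        raise ValueError("no runs to summarize")
    return {
        "completion_rate": sum(r.completed for r in runs) / n,
        "median_latency_s": median(r.latency_s for r in runs),
        "restricted_actions_per_run": sum(r.restricted_actions for r in runs) / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
    }


# Made-up sample data for illustration only.
runs = [
    RunRecord(True, 2.0, 0, False),
    RunRecord(True, 4.0, 1, False),
    RunRecord(False, 9.0, 0, True),
    RunRecord(True, 3.0, 0, False),
]
report = summarize(runs)
```

Computing the same summary on a baseline period and on each later period gives a simple way to spot drift as usage scales.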

Cost and resource implications

Cost considerations involve compute for the reasoning layer, API/tool usage, data storage, and human-in-the-loop governance. Expect ongoing maintenance costs for tool adapters, security controls, and monitoring. Budget for scale as you add more use cases and teams; governance overhead tends to grow with scope. If you’re balancing speed to value against risk, start with a narrow pilot, then expand iteratively while maintaining strict guardrails and clear ownership.
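A back-of-the-envelope cost model can make these line items concrete. The function below is a sketch under simplifying assumptions: the parameter names are hypothetical, every price in the example is a placeholder rather than a real vendor rate, and fixed costs such as storage and adapter maintenance are left out.

```python
def monthly_cost(
    runs_per_month: int,
    tokens_per_run: int,
    price_per_1k_tokens: float,
    tool_calls_per_run: int,
    price_per_tool_call: float,
    review_fraction: float,
    cost_per_review: float,
) -> float:
    """Estimate variable monthly cost: model usage, tool calls, and the
    share of runs that a human reviews (human-in-the-loop governance)."""
    model_cost = tokens_per_run / 1000 * price_per_1k_tokens
    tool_cost = tool_calls_per_run * price_per_tool_call
    review_cost = review_fraction * cost_per_review
    return runs_per_month * (model_cost + tool_cost + review_cost)


# Placeholder numbers only; substitute your own measured values.
total = monthly_cost(
    runs_per_month=10_000,
    tokens_per_run=4_000,
    price_per_1k_tokens=0.01,
    tool_calls_per_run=3,
    price_per_tool_call=0.002,
    review_fraction=0.05,
    cost_per_review=2.0,
)
```

With these placeholder inputs the human review term is the largest per-run cost, which illustrates why governance overhead tends to grow with scope.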

Implementation pitfalls and best practices

Common pitfalls include underestimating integration complexity, overestimating agent reliability, and neglecting governance. Best practices emphasize starting with a narrowly scoped use case, then layering in tools and memory. Use sandboxed environments for testing, implement circuit breakers for tools, and maintain explicit owner responsibility for capabilities. Regularly review prompts and rules to prevent drift and to align behavior with business policy.
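A circuit breaker for a tool can be sketched as follows. This is one simple variant under stated assumptions, not a standard library API: the `CircuitBreaker` class, its thresholds, and `broken_tool` are hypothetical names chosen for the example.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a tool is temporarily disabled."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, tool: Callable[[], str]) -> str:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("tool disabled after repeated failures")
            # Cool-down elapsed: allow one trial call; a failure reopens at once.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = tool()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success resets the failure count
        return result


def broken_tool() -> str:
    raise TimeoutError("upstream API timed out")


breaker = CircuitBreaker(max_failures=2, reset_after_s=60.0)
outcomes = []
for _ in range(3):
    try:
        breaker.call(broken_tool)
    except CircuitOpenError:
        outcomes.append("blocked")
    except TimeoutError:
        outcomes.append("failed")
```

After two consecutive failures the third call is blocked without touching the tool, which keeps a failing dependency from stalling the agent's whole workflow.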

Roadmap to deployment and scaling

A practical deployment roadmap begins with problem framing, stakeholder alignment, and a minimal agent prototype. Next, design a modular tool layer, establish data access controls, and implement observability dashboards. Phase in memory and planning capabilities, then expand to automate cross-team workflows. Finally, adopt governance policies, risk controls, and an ongoing optimization loop. The roadmap should be revisited quarterly to reflect evolving needs and safety requirements.

Ai Agent Ops perspective and future outlook

From the Ai Agent Ops vantage point, GPT-like agents are moving from niche experiments to strategic accelerators in complex workflows. Ai Agent Ops analysis highlights governance, tooling maturity, and safety controls as the decisive factors for successful adoption. The Ai Agent Ops team expects broader deployment in 2026 as organizations standardize agent orchestration practices while maintaining strict risk management and auditability.

Comparison

| Feature | AI agent like GPT | Traditional AI agent |
|---|---|---|
| Reasoning capability | High-level, goal-directed planning with tool use | Rule-based or scripted logic with limited autonomy |
| Tool integration | Native tool use, API orchestration, memory | Limited or no external tool integration |
| Context and memory | Persistent memory for multi-step workflows | Stateless interactions with short-lived context |
| Governance and safety | Built-in safety, audit trails, guardrails | Minimal governance, higher risk of drift |
| Cost model | Higher upfront but scalable through reuse | Lower upfront but less scalable for complex tasks |
| Best for | Complex workflows requiring autonomy | Simple automation or chat-only tasks |

Positives

- Enables end-to-end automation of complex workflows

- Facilitates tool orchestration across systems

- Improves consistency and repeatability in task execution

- Supports scalable decision-making with memory

Negatives

- Increases architecture and governance complexity

- Higher initial setup and ongoing maintenance costs

- Safety, privacy, and compliance risks require rigorous controls

- Potential vendor lock-in and dependencies on tool ecosystems

GPT-like AI agents offer superior autonomy for complex workflows but require strong governance.

The Ai Agent Ops team recommends piloting with clear guardrails, phased rollouts, and deliberate tooling orchestration to scale safely.

Questions & Answers

What is an AI agent like GPT?

An AI agent like GPT is a GPT-inspired system that can plan, select actions, and execute tasks across multiple tools or services. It combines reasoning, memory, and action to autonomously complete workflows.

An AI agent like GPT plans, acts, and learns to finish tasks using tools, not just chat.

How does it differ from a standard GPT chat?

A GPT-like agent includes memory, planning, and tool use to perform end-to-end tasks. A standard chat model focuses on language generation without sustained action across systems.

It plans and acts, not just chats.

What governance should be in place?

Governance should define tool usage, data access, escalation paths, and auditing. Establish ownership, guardrails, and incident response processes before deployment.

Set up guardrails, audits, and clear ownership before you use it widely.

What are typical costs to consider?

Costs include compute for reasoning, API/tool usage, data storage, and governance overhead. Start with a focused pilot to weigh value against these ongoing needs.

Costs come from compute, tools, and governance; start small to learn.

How should I start a pilot?

Begin with a narrowly scoped use case, define success metrics, and build a minimal tool layer. Verify governance and safety controls in a sandbox before expanding.

Pick a small use case, set clear goals, test in a safe environment.

What risks should I watch for?

Watch for data leakage, unsafe actions, and drift in behavior. Implement monitoring, alerts, and rollback mechanisms to protect users and data.

Be on the lookout for leaks, unsafe actions, and drifting behavior.

Key Takeaways

- Prioritize governance before full-scale adoption

- Choose GPT-like agents for multi-step, autonomous tasks

- Invest in a modular tool layer and memory management

- Monitor safety and compliance continuously