AI Agents for Routine Work Tasks: A Comparison

A data-driven comparison of how AI agents handle routine work tasks, guiding developers, product teams, and leaders in automation planning.

This comparison highlights how task type, data quality, and governance shape the outcomes of automating routine work with AI agents. A mixed setup of specialized agents plus an orchestration layer generally yields more reliable automation for routine workflows than a single monolithic bot, while preserving oversight and adaptability as needs evolve.

Understanding AI agents for routine work tasks

According to Ai Agent Ops, the modern approach to automation centers on combining AI agents with structured governance to execute routine work tasks. The goal is to extend human capabilities without sacrificing reliability or traceability. In practice, this means mapping daily activities (data gathering, transformation, decision support, and orchestration) into modular agents that can be composed into workflows. Comparing agents task by task serves as a framing device: it puts the emphasis on how different task types drive architecture choices, integration needs, and governance requirements. This section sets the stage for a deeper look at task categories, data dynamics, and the orchestration patterns that unlock scalable automation while avoiding brittle, monolithic systems.

The taxonomy of routine tasks in AI agents

Routine work tasks span several categories: data collection and cleansing, pattern recognition and alerts, decision support with rule-based checks, action initiation in downstream systems, and auditable handoffs to human operators. Each category has its own requirements for latency, data freshness, and explainability. Recognizing these distinctions is essential for selecting appropriate agent designs. A task-by-task comparison helps teams decide where a task belongs and which agent type best handles it, from simple data routing to complex multi-system coordination.
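One way to make this taxonomy concrete is to record each category alongside its latency, freshness, and explainability needs. The sketch below is illustrative only: the `TaskProfile` fields and every numeric threshold are assumptions, not values from this comparison.

```python
from dataclasses import dataclass
from enum import Enum


class TaskCategory(Enum):
    DATA_COLLECTION = "data collection and cleansing"
    PATTERN_ALERTING = "pattern recognition and alerts"
    DECISION_SUPPORT = "decision support with rule-based checks"
    ACTION_INITIATION = "action initiation in downstream systems"
    HUMAN_HANDOFF = "auditable handoff to human operators"


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical per-category requirements."""
    max_latency_s: float     # how quickly a result is needed
    max_data_age_s: float    # how stale the input data may be
    needs_explanation: bool  # whether a decision rationale must be recorded


# Placeholder numbers; real thresholds come from each team's own requirements.
PROFILES = {
    TaskCategory.DATA_COLLECTION: TaskProfile(300.0, 3600.0, False),
    TaskCategory.PATTERN_ALERTING: TaskProfile(5.0, 60.0, False),
    TaskCategory.DECISION_SUPPORT: TaskProfile(10.0, 300.0, True),
    TaskCategory.ACTION_INITIATION: TaskProfile(2.0, 60.0, True),
    TaskCategory.HUMAN_HANDOFF: TaskProfile(60.0, 300.0, True),
}


def categories_requiring_explanation() -> list[TaskCategory]:
    """List the categories whose agents must log a rationale for each decision."""
    return [cat for cat, profile in PROFILES.items() if profile.needs_explanation]
```

A table like this gives agent designers a single place to check what a task category demands before choosing an agent type for it.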

Categorizing AI agents by task type and capability

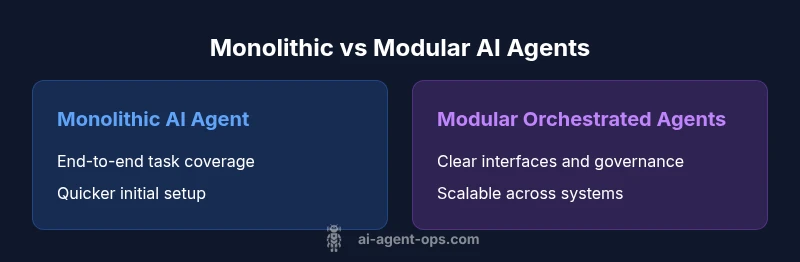

A robust automation strategy differentiates agents by capability rather than by tools alone. Basic agents may perform straightforward data routing or template responses; intermediate agents handle multi-step transformations; advanced agents orchestrate cross-system workflows and negotiate handoffs with humans when exceptions arise. In this comparison, we examine how capability levels influence architecture: monolithic agents suit simple, tightly scoped tasks, while modular, orchestrated agents suit cases where tasks vary, data sources multiply, and governance needs increase. The right mix depends on the organization’s data maturity and risk tolerance.

Data quality and reliability as gatekeepers of success

Without high-quality, well-governed data, even the most sophisticated agents underperform. Data issues such as missing values, inconsistent schemas, or lagging feeds undercut model confidence and erode the benefits of automation. A sound approach emphasizes data contracts, schema registries, and end-to-end observability. Establishing clear data ownership, validation rules, and audit trails helps ensure repeatable outcomes across tasks and reduces remediation time when things go wrong. This section connects data reliability to real-world success metrics rather than theoretical promises.
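As a rough illustration of a data contract with validation rules, the sketch below checks records against a declared list of fields before an agent consumes them. `FieldRule`, `DataContract`, and the example field names are hypothetical; a production setup would more likely rely on a schema registry or a dedicated validation library.

```python
from dataclasses import dataclass, field


@dataclass
class FieldRule:
    name: str
    expected_type: type
    required: bool = True


@dataclass
class DataContract:
    owner: str  # the team accountable for this data
    fields: list[FieldRule] = field(default_factory=list)

    def validate(self, record: dict) -> list[str]:
        """Return human-readable violations; an empty list means the record passes."""
        problems = []
        for rule in self.fields:
            value = record.get(rule.name)
            if value is None:
                if rule.required:
                    problems.append(f"missing required field: {rule.name}")
                continue
            if not isinstance(value, rule.expected_type):
                problems.append(
                    f"{rule.name}: expected {rule.expected_type.__name__}, "
                    f"got {type(value).__name__}"
                )
        return problems


# Hypothetical contract for an invoice feed.
contract = DataContract(
    owner="billing-team",
    fields=[
        FieldRule("invoice_id", str),
        FieldRule("amount", float),
        FieldRule("note", str, required=False),
    ],
)
```

Returning a list of violations instead of raising on the first one makes it easier to write every problem to an audit trail in a single pass.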

Monolithic vs. modular agent architectures: a practical lens

Two archetypes dominate discussions of AI agents for routine work: a single monolithic agent that attempts to handle every step of a workflow end to end, and a modular, orchestrated set of agents that communicate through defined interfaces. Monolithic architectures can be simpler to set up initially but often suffer from rigidity and maintenance challenges as scope expands. In contrast, modular architectures promote decoupling, easier testing, and scalable governance, at the cost of additional design considerations and orchestration overhead. This section contrasts these approaches using concrete workflow patterns rather than abstract buzzwords.
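The modular archetype can be sketched as small agents that share one interface, plus an orchestrator that runs them in sequence and logs each handoff. Everything here (the `Agent` protocol, the two toy agents, and the `orchestrate` function) is a hypothetical illustration rather than the API of any particular framework.

```python
from typing import Protocol


class Agent(Protocol):
    """Assumed interface: an agent takes a payload dict and returns a new one."""
    name: str

    def run(self, payload: dict) -> dict: ...


class CollectAgent:
    name = "collect"

    def run(self, payload: dict) -> dict:
        # Stand-in for fetching raw rows from a source system.
        return {**payload, "rows": [" 42 ", "7", ""]}


class CleanAgent:
    name = "clean"

    def run(self, payload: dict) -> dict:
        # Drop blank rows and convert the rest to integers.
        rows = [int(r) for r in payload["rows"] if r.strip()]
        return {**payload, "rows": rows}


def orchestrate(agents: list[Agent], payload: dict) -> tuple[dict, list[str]]:
    """Run agents in order and record each handoff for later auditing."""
    trail = []
    for agent in agents:
        payload = agent.run(payload)
        trail.append(f"{agent.name}: keys={sorted(payload)}")
    return payload, trail
```

Because each agent depends only on the shared interface, `CleanAgent` could be replaced or tested in isolation without touching `CollectAgent`, which is the decoupling benefit described above. A monolithic equivalent would fold both steps into a single function.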

Governance, safety, and reliability: designing for trust

Automation without governance risks drift, untraceable decisions, and inconsistent outcomes. Governance should therefore be treated as a first-order design constraint. Teams should enforce explainability of agent decisions, establish escalation paths, implement access controls, and define remediation procedures. Reliability practices such as monitoring, retries, circuit breakers, and graceful degradation help maintain service levels even when individual components fail. Aligning these practices with business outcomes ensures automation remains a strategic enabler rather than a technical curiosity.
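To show how retries, a circuit breaker, and graceful degradation fit together, here is a minimal sketch. The class and function names, the thresholds, and the injectable `clock` parameter are all assumptions made for illustration; backoff between retries is omitted for brevity.

```python
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive failures, then blocks calls
    until `reset_after_s` seconds have passed."""

    def __init__(self, max_failures=3, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.reset_after_s:
            # Half-open: permit one trial call; one more failure reopens the breaker.
            self.opened_at = None
            self.failures = self.max_failures - 1
            return True
        return False

    def record(self, ok: bool) -> None:
        if ok:
            self.failures = 0
            return
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_fallback(step, fallback, breaker, retries=2):
    """Try `step` up to `retries + 1` times; degrade to `fallback` if it keeps failing
    or the breaker is open."""
    for _ in range(retries + 1):
        if not breaker.allow():
            break
        try:
            result = step()
        except Exception:
            breaker.record(ok=False)
            continue
        breaker.record(ok=True)
        return result
    return fallback()
```

In an agent workflow, `fallback` might queue the item for human review, so a failing downstream system degrades the service instead of halting it.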

Real-world deployment patterns and pitfalls to avoid

In practice, organizations find success by starting with a narrow pilot that targets high-volume, rule-driven tasks. This approach reduces risk and creates early, usable feedback for governance processes. Common pitfalls include over-engineering early, under-investing in data pipelines, and neglecting human-in-the-loop oversight. A staged scale-up works better: validate the workflow, codify decision criteria, and expand to adjacent tasks only after establishing reliable performance and governance feedback loops. This disciplined approach supports durable automation outcomes.

Building a decision framework for selecting the right mix of agents

A practical framework blends task taxonomy, data readiness, governance requirements, and business impact. Start by cataloging routine tasks, assigning ownership, and determining acceptable risk levels. Then assess whether a modular orchestration approach or a simpler monolithic setup best matches each task category. The outcome is a tailored blend of agents and orchestration layers that balances speed, accuracy, and oversight. This framework guides teams from pilots to scalable production.
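A decision framework of this kind could be encoded as a simple heuristic over a task catalog. The sketch below is hypothetical: the `TaskAssessment` fields, the scoring scale, and the thresholds are placeholders that an organization would tune to its own risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class TaskAssessment:
    name: str
    owner: str
    data_sources: int        # number of systems the task reads from or writes to
    input_variability: int   # subjective score from 1 (stable) to 5 (highly variable)
    needs_audit_trail: bool


def recommend_architecture(task: TaskAssessment) -> str:
    """Illustrative rule of thumb, not a validated model."""
    if task.needs_audit_trail or task.data_sources > 2 or task.input_variability >= 3:
        return "modular"
    return "monolithic"


# Hypothetical catalog entries.
catalog = [
    TaskAssessment("template email replies", "support", 1, 1, False),
    TaskAssessment("invoice reconciliation", "finance", 4, 3, True),
]
plan = {task.name: recommend_architecture(task) for task in catalog}
```

Writing the rule down, even in this crude form, makes the selection criteria reviewable and easy to revise as pilots produce real data.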

Conclusion and next steps

This section consolidates the key takeaways and outlines concrete next steps for teams planning automation initiatives. Begin with a pilot focused on high-volume, low-variance tasks and define success criteria aligned with governance principles. Expand incrementally, monitor outcomes, and iteratively refine the agent mix. The broader objective is to evolve toward a resilient, auditable workflow ecosystem that scales with data maturity and organizational goals.

Comparison

| Feature | Monolithic AI Agent | Modular Orchestrated AI Agents |
|---|---|---|
| Integration complexity | High coupling in a single unit; harder to replace parts | Low coupling with clear interfaces; easier to swap components |
| Performance in routine tasks | Consistent for narrowly scoped tasks, but brittle with changes | Higher adaptability through composition and reusability |
| Maintainability and evolution | Tight coupling makes evolution slow and risky | Decoupled components enable incremental upgrades |
| Governance and auditing | Limited visibility into decisions without design-level controls | Explicit handoffs and observability improve auditing |
| Total cost of ownership | Higher long-term maintenance if scope grows | Potentially lower incremental costs with phased investments |
| Best for | Organizations seeking fast start with centralized control | Teams prioritizing scalability, governance, and resilience |

Positives

- Improved task throughput and consistency

- Better scalability across teams

- Clear audit trails and governance

- Faster experimentation and iteration

What's Bad

- Higher initial setup and integration effort

- Requires ongoing governance discipline

- Increased complexity and required skills

Modular orchestration wins for long-term scalability and governance

This approach scales across teams and provides auditable workflows. The Ai Agent Ops team recommends starting with modular orchestration and expanding as data quality and governance mature.

Questions & Answers

What are AI agents and how do they differ from traditional automation?

AI agents are autonomous software entities that perform defined tasks by interacting with data and systems. Unlike traditional automation that follows fixed scripts, AI agents can adapt to changing inputs and coordinate across multiple services. This flexibility is central to the comparison, which weighs architecture choices against governance and reliability needs.

AI agents are autonomous tools that adapt to data and can coordinate across systems. In the comparison, we look at how they fit your workflows and governance needs.

What constitutes routine work tasks in AI agents?

Routine tasks include data collection, cleansing, simple decision support, and triggering actions in other systems. The goal is to automate repetitive, high-volume activities while keeping humans in the loop for exceptions. A task-by-task comparison helps map each task to an appropriate agent design.

Routine tasks are the high-volume, repeatable activities that agents can automate, with humans stepping in for special cases.

How do you measure the effectiveness of AI agents in daily tasks?

Effectiveness is measured through reliability, accuracy of outcomes, time saved, and governance compliance. In production, you track service levels, error rates, and explainability. The comparison emphasizes defining measurable criteria before scaling up to avoid hidden costs.

Track reliability, accuracy, time saved, and governance adherence to gauge effectiveness.
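These measures can be computed from a simple log of agent runs. The sketch below assumes a hypothetical `RunRecord` shape; the field names and the way correctness and time saved are estimated are illustrative, not prescribed by this comparison.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    succeeded: bool           # the run completed without an error
    output_correct: bool      # as judged by a spot check or downstream validation
    seconds_saved: float      # estimate versus the manual process
    escalated_to_human: bool  # the run was handed to a person


def summarize(runs: list[RunRecord]) -> dict:
    """Aggregate reliability, accuracy, time saved, and escalation rate."""
    total = len(runs)
    if total == 0:
        return {"runs": 0}
    successes = [r for r in runs if r.succeeded]
    accuracy = (
        sum(r.output_correct for r in successes) / len(successes) if successes else 0.0
    )
    return {
        "runs": total,
        "error_rate": 1 - len(successes) / total,
        "accuracy": accuracy,
        "hours_saved": sum(r.seconds_saved for r in successes) / 3600,
        "escalation_rate": sum(r.escalated_to_human for r in runs) / total,
    }
```

Accuracy is computed over successful runs only, so a failed run counts against reliability but is not also counted as an incorrect output.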

What is agent orchestration and why is it important?

Agent orchestration coordinates multiple AI agents and services, enabling end-to-end workflows with clear handoffs. It improves scalability and governance by decoupling components and providing observable decision points. This is a central theme of the comparison.

Orchestration wires several agents together for end-to-end workflows with clear oversight.

When should you consider no-code AI tools vs custom agents?

No-code AI tools are suitable for prototyping, small teams, or straightforward tasks without heavy data integration. Custom agents excel when data quality, governance, and integration into complex systems matter more. The decision rests on task complexity, data readiness, and organizational risk tolerance.

No-code is great for quick pilots; custom agents pay off when you need deep integration and governance.

What governance and safety concerns should you plan for?

Governance concerns include explainability, auditability, access control, and incident response. Safety concerns cover data privacy, bias mitigation, and failure handling. A solid framework addresses these with clear policies and automated monitoring.

Plan for explainability, audits, access, and safety controls to reduce risk.

Key Takeaways

- Define task categories to guide agent selection

- Prefer modular architectures for scalability and governance

- Balance automation with human oversight to manage risk

- Pilot early and measure outcomes with governance in mind