AI Agent vs Reinforcement Learning: Practical Comparison

An objective, data-driven comparison of AI agents and reinforcement learning to guide developers, product teams, and leaders exploring agentic AI workflows.

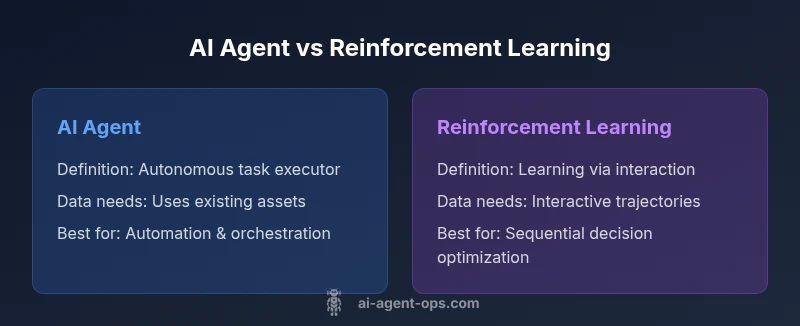

AI agents and reinforcement learning are related but distinct concepts. AI agents are autonomous systems that perceive, reason, and act to achieve goals, often combining perception, planning, and action in a single loop. Reinforcement learning is a learning paradigm that optimizes behavior through trial-and-error interactions with an environment. Choosing between them depends on your goals, data availability, and deployment constraints.

What the AI agent vs reinforcement learning distinction means in practice

In the modern landscape of intelligent automation, the distinction between AI agents and reinforcement learning is foundational. An AI agent is an autonomous software entity designed to perceive its environment, reason about possible actions, and execute those actions to achieve predefined objectives. It can incorporate perception modules, planners, and action channels, and it often operates as part of a broader agentic AI workflow that includes human-in-the-loop controls and governance hooks. According to Ai Agent Ops, many teams underestimate how deeply these concepts diverge in real-world projects, which leads to mismatched tooling and inflated budgets. In practice, a well-designed AI agent may orchestrate multiple services, apply business rules, and adapt to changing conditions without constant retraining.

Reinforcement learning, by contrast, is a learning method that optimizes policies through direct interaction with an environment, guided by a reward signal. It emphasizes long-horizon decision-making and exploration, but it also raises concerns about sample efficiency, safety, and reward design. Understanding the strengths, limits, and integration points of both is essential for developers and leaders who want to build robust, scalable automation. This article draws on practical examples, avoids hype, and focuses on the decision criteria that matter to product teams and executive planning.
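The following minimal Python sketch makes the perceive, reason, act loop concrete. It is a rule-based agent with a human-in-the-loop approval hook. Everything in it is hypothetical and chosen only for illustration: the ticket-queue scenario, the `Observation` fields, the action names, and the `approve` callback that stands in for a governance check. No learning is involved, because the reasoning step is plain business rules.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Observation:
    """Structured view of the environment (hypothetical ticket queue)."""
    open_tickets: int
    urgent_tickets: int


@dataclass
class RuleBasedAgent:
    """Minimal perceive-reason-act agent with a human-in-the-loop gate.

    `approve` is a hypothetical governance hook: it receives a proposed
    action and returns True if a human reviewer or policy check allows it.
    """
    approve: Callable[[str], bool]
    log: List[str] = field(default_factory=list)

    def perceive(self, raw: dict) -> Observation:
        # Perception: turn raw input into a structured observation.
        return Observation(raw.get("open", 0), raw.get("urgent", 0))

    def reason(self, obs: Observation) -> str:
        # Reasoning: plain business rules, no learned policy.
        if obs.urgent_tickets > 0:
            return "escalate"
        if obs.open_tickets > 10:
            return "add_worker"
        return "wait"

    def act(self, action: str) -> str:
        # Action: anything other than "wait" must pass the approval hook;
        # rejected actions fall back to "wait". Every final action is logged.
        if action != "wait" and not self.approve(action):
            action = "wait"
        self.log.append(action)
        return action

    def step(self, raw: dict) -> str:
        return self.act(self.reason(self.perceive(raw)))


# Usage: a reviewer who never approves adding workers.
agent = RuleBasedAgent(approve=lambda action: action != "add_worker")
print(agent.step({"open": 3, "urgent": 1}))   # escalate
print(agent.step({"open": 12, "urgent": 0}))  # wait (add_worker rejected)
```

Replacing the `reason` method with a learned policy is one natural place where RL could plug in later. The perception and approval steps would stay unchanged.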

What reinforcement learning brings to the table

Reinforcement learning (RL) defines a loop where an agent observes a state, takes an action, and receives a reward, gradually shaping a policy that maximizes cumulative reward over time. RL shines in sequential decision problems, such as game-playing, robotic control, or dynamic resource management, where the optimal next step depends on future consequences. In many cases, RL is used to train the decision-making component inside an AI agent, but it can also be applied as a standalone learning loop for specialized tasks. When combined with simulation environments, RL can accelerate experimentation and enable safer, controlled exploration before deployment in the real world. At the same time, RL can be data-hungry and compute-intensive, which raises questions about efficiency, scalability, and governance, topics Ai Agent Ops often highlights for teams balancing velocity with reliability. For organizations exploring agentic AI workflows, a clear view of RL’s capabilities and limits is essential to avoid over-optimizing for toy benchmarks at the expense of real-world performance.
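Tabular Q-learning is one classic RL algorithm, though not the only one, and it illustrates the observe, act, reward loop. The sketch below assumes a hypothetical five-state corridor, `ChainEnv`, where only reaching the rightmost state pays a reward of 1. The hyperparameters (`alpha`, `gamma`, `epsilon`, episode and step limits) are illustrative choices, not tuned values.

```python
import random
from typing import List, Tuple


class ChainEnv:
    """Hypothetical 1-D corridor used only for illustration.

    States 0..n_states-1; action 0 moves left, action 1 moves right.
    Reaching the rightmost state gives reward 1.0 and ends the episode.
    """

    def __init__(self, n_states: int = 5):
        self.n_states = n_states
        self.state = 0

    def reset(self) -> int:
        self.state = 0
        return self.state

    def step(self, action: int) -> Tuple[int, float, bool]:
        delta = 1 if action == 1 else -1
        self.state = max(0, min(self.n_states - 1, self.state + delta))
        done = self.state == self.n_states - 1
        return self.state, (1.0 if done else 0.0), done


def q_learning(env: ChainEnv, episodes: int = 200, alpha: float = 0.5,
               gamma: float = 0.9, epsilon: float = 0.1,
               max_steps: int = 100, seed: int = 0) -> List[List[float]]:
    """Tabular Q-learning: observe a state, act, receive a reward, update Q."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(env.n_states)]
    for _ in range(episodes):
        s = env.reset()
        for _ in range(max_steps):
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore: random action
            else:
                best = max(q[s])      # exploit, breaking ties at random
                a = rng.choice([x for x in range(2) if q[s][x] == best])
            s_next, r, done = env.step(a)
            # Target: immediate reward plus discounted best next-state value.
            target = r if done else r + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
            if done:
                break
    return q


q = q_learning(ChainEnv())
# Greedy action per non-terminal state (1 = move right, toward the reward).
policy = [max(range(2), key=lambda a: q[s][a]) for s in range(4)]
print(policy)
```

The learned policy picks the highest-valued action in each state and emerges purely from reward feedback. Even this toy run uses 200 episodes of interaction, a small taste of the sample-efficiency cost described above.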

Core differences in scope and outcome

The most consequential distinction between AI agents and reinforcement learning lies in scope. An AI agent is typically a complete automation unit designed to perform tasks, reason about goals, and act in a way that advances a business objective. It may incorporate planning, perception, memory, tool usage, and human-in-the-loop controls. RL, meanwhile, is a method for learning how to act within a given environment. It does not prescribe a full system architecture; rather, it provides a policy that can be embedded inside an agent or used to train one. Practitioners emphasize that RL’s strength is optimizing long-horizon outcomes under uncertainty, while AI agents excel at orchestrating capabilities across systems, applying domain knowledge, and maintaining consistent behavior over time. These differences matter when you design your automation stack, select data pipelines, and plan governance and monitoring across deployment lifecycles.
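One way to picture the scope difference: the agent owns the surrounding system (guardrails, logging, fallbacks), while the policy is a swappable component. In the sketch below, the `Policy` alias, the `Agent` class, and the default fallback action `0` are hypothetical. `greedy_from_q` turns any learned Q-table into a policy. `always_right` is a hand-written rule that needs no training.

```python
from typing import Callable, List, Sequence, Set, Tuple

# A policy maps a state to an action. RL is one way to produce one;
# hand-written rules are another.
Policy = Callable[[int], int]


def greedy_from_q(q: Sequence[Sequence[float]]) -> Policy:
    """Wrap a learned Q-table (for example, from tabular Q-learning) as a policy."""
    def policy(state: int) -> int:
        row = q[state]
        return max(range(len(row)), key=lambda a: row[a])
    return policy


def always_right(state: int) -> int:
    """A hand-written rule: a policy that involves no learning at all."""
    return 1


class Agent:
    """The agent owns guardrails and logging; the policy is a swappable part."""

    def __init__(self, policy: Policy, allowed_actions: Set[int],
                 fallback_action: int = 0):
        self.policy = policy
        self.allowed_actions = allowed_actions
        self.fallback_action = fallback_action  # hypothetical safe default
        self.audit_log: List[Tuple[int, int]] = []

    def decide(self, state: int) -> int:
        action = self.policy(state)
        if action not in self.allowed_actions:
            action = self.fallback_action  # guardrail overrides the policy
        self.audit_log.append((state, action))
        return action


learned = Agent(greedy_from_q([[0.1, 0.7], [0.9, 0.2]]), allowed_actions={0, 1})
scripted = Agent(always_right, allowed_actions={0})
print(learned.decide(0), learned.decide(1))  # 1 0
print(scripted.decide(3))                     # 0 (action 1 is not allowed)
```

In this design, switching from a scripted rule to an RL-trained policy changes only one constructor argument. The guardrail and audit log belong to the agent either way.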

Data, learning, and deployment considerations

Data considerations separate the two approaches in meaningful ways. Reinforcement learning depends on interactive data—state-action-reward trajectories generated by running the agent in an environment, which can be simulated or real. This data can be expensive to obtain, and learning can be brittle if the environment shifts after deployment. AI agents, by contrast, can leverage existing data assets, domain rules, and modular components that don’t require continuous retraining. They are often deployed as reusable services that can be upgraded component-wise, enabling faster iteration and safer governance. For organizations, the decision to adopt AI agents, RL, or a hybrid approach should be grounded in data availability, risk appetite, and desired time-to-value. Ai Agent Ops’s perspective emphasizes aligning data strategy with governance goals to minimize surprises during scale-up.
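RL's interactive data is usually stored as a sequence of transitions. The sketch below uses hypothetical `Transition`, `rollout`, and `discounted_returns` helpers to record one episode. It then computes the discounted return `G_t = r_t + gamma * G_{t+1}` for each step, which is the quantity RL tries to maximize. The toy `step` function in the usage lines is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Transition:
    """One interaction record: the unit of RL training data."""
    state: int
    action: int
    reward: float
    next_state: int
    done: bool


def rollout(reset: Callable[[], int],
            step: Callable[[int, int], Tuple[int, float, bool]],
            policy: Callable[[int], int],
            max_steps: int = 50) -> List[Transition]:
    """Run one episode and record every state-action-reward step."""
    trajectory: List[Transition] = []
    state = reset()
    for _ in range(max_steps):
        action = policy(state)
        next_state, reward, done = step(state, action)
        trajectory.append(Transition(state, action, reward, next_state, done))
        state = next_state
        if done:
            break
    return trajectory


def discounted_returns(trajectory: List[Transition],
                       gamma: float = 0.9) -> List[float]:
    """G_t = r_t + gamma * G_{t+1}, computed backwards from the episode end.

    Assumes the episode terminated; truncated episodes are not bootstrapped.
    """
    returns: List[float] = []
    g = 0.0
    for t in reversed(trajectory):
        g = t.reward + gamma * g
        returns.append(g)
    return returns[::-1]


def toy_step(state: int, action: int) -> Tuple[int, float, bool]:
    # Hypothetical environment: any action advances one state; state 3 is the goal.
    next_state = state + 1
    done = next_state == 3
    return next_state, (1.0 if done else 0.0), done


traj = rollout(reset=lambda: 0, step=toy_step, policy=lambda s: 1)
print([t.reward for t in traj])             # [0.0, 0.0, 1.0]
print(discounted_returns(traj, gamma=0.9))  # approximately [0.81, 0.9, 1.0]
```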

Benchmarking and evaluation approaches

Evaluating AI agents and reinforcement learning requires distinct lenses. For AI agents, evaluation focuses on end-to-end task completion, reliability, latency, and maintainability of the automation stack. For RL, evaluation emphasizes policy quality, sample efficiency, robustness to distributional shifts, and safety of exploration. In practice, teams often run parallel pilots: an RL-based policy is trained in a simulated environment, while a rule-based or learned agent handles routine tasks and governance checks. When you merge these approaches, you’ll want a rigorous evaluation plan that includes offline testing, simulation-based validation, and carefully monitored live rollout to track how the agent behaves under real-world conditions. Ai Agent Ops highlights the importance of transparent metrics and governance dashboards that keep stakeholders aligned throughout the experimentation lifecycle.
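A minimal evaluation harness can keep the two lenses separate: end-to-end task metrics for the agent, and return-based metrics for the policy. In this sketch, `run_task` is a hypothetical callable for whatever executes one task in your stack. The metric set is an illustrative subset, not a complete evaluation plan: success rate, mean and max latency, mean return, and lift over a baseline.

```python
import statistics
import time
from typing import Callable, Dict, Iterable, List


def evaluate_agent(run_task: Callable[[dict], bool],
                   tasks: Iterable[dict]) -> Dict[str, float]:
    """End-to-end agent metrics: task success rate and wall-clock latency."""
    successes, latencies = 0, []
    for task in tasks:
        start = time.perf_counter()
        ok = run_task(task)  # hypothetical: runs one task, True on success
        latencies.append(time.perf_counter() - start)
        successes += int(ok)
    if not latencies:
        raise ValueError("need at least one task")
    return {
        "success_rate": successes / len(latencies),
        "mean_latency_s": statistics.fmean(latencies),
        "max_latency_s": max(latencies),
    }


def evaluate_policy(episode_returns: List[float],
                    baseline: float) -> Dict[str, float]:
    """Policy metrics from evaluation episodes: mean, spread, lift over baseline."""
    if not episode_returns:
        raise ValueError("need at least one episode")
    mean = statistics.fmean(episode_returns)
    return {
        "mean_return": mean,
        "std_return": statistics.pstdev(episode_returns),
        "lift_vs_baseline": mean - baseline,
    }


report = evaluate_agent(lambda task: task["ok"],
                        [{"ok": True}, {"ok": False}, {"ok": True}, {"ok": True}])
print(report["success_rate"])  # 0.75
print(evaluate_policy([1.0, 0.5, 0.0, 0.5], baseline=0.25)["lift_vs_baseline"])  # 0.25
```

Keeping the two functions separate lets a governance dashboard show agent reliability and policy quality side by side, without blending them into one score.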

Practical deployment patterns and architecture

A practical AI automation stack often features a layered architecture. At the base are perception modules, data pipelines, and interfaces to external systems. In the middle layer, planners, executors, and decision modules coordinate actions and monitor outcomes. The top layer handles safety, governance, and human oversight. When RL enters the mix, it typically contributes a policy module that can be plugged into the decision layer, either as a standalone component or as a learning-enabled controller. The integration pattern matters: consider offline RL to pre-train policies before live deployment, use policy distillation to simplify models, and design reward shaping carefully to avoid unintended incentives. The goal is to balance responsiveness, safety, and learning capability while preserving maintainability and auditability for stakeholders. For teams building agentic AI workflows, this architecture supports experimentation, scale, and governance.
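Reward shaping is where unintended incentives tend to creep in. One well-known safeguard is potential-based shaping; it is offered here as an example and is not drawn from the text above. The method adds `gamma * phi(s') - phi(s)` to the environment reward, where `phi` is a potential function over states. In episodic tasks with `phi` set to 0 at terminal states, the discounted shaped return of any trajectory equals the original return minus `phi(s0)`. As a result, the ranking of policies, and therefore the optimal policy, is unchanged. The distance-to-goal potential below is a hypothetical choice for a toy corridor.

```python
from typing import Callable


def shaped_reward(reward: float, state: int, next_state: int, done: bool,
                  potential: Callable[[int], float], gamma: float = 0.9) -> float:
    """Potential-based shaping: reward + gamma * phi(next_state) - phi(state).

    phi is treated as 0 at terminal states, so over an episode the shaping
    terms telescope to -phi(start_state) and cannot change which policy
    is optimal.
    """
    next_phi = 0.0 if done else potential(next_state)
    return reward + gamma * next_phi - potential(state)


# Hypothetical potential for a 5-state corridor with the goal at state 4:
# the closer to the goal, the higher the potential.
def phi(s: int) -> float:
    return -float(4 - s)


path, rewards, gamma = [0, 1, 2, 3, 4], [0.0, 0.0, 0.0, 1.0], 0.9
original = sum(gamma**t * r for t, r in enumerate(rewards))
shaped = sum(gamma**t * shaped_reward(rewards[t], path[t], path[t + 1],
                                      path[t + 1] == 4, phi, gamma)
             for t in range(len(rewards)))
print(round(shaped - original, 6))  # 4.0, i.e. -phi(0)
```

Shaping defined this way can speed up learning by rewarding progress toward the goal. Because its contribution is a constant offset per start state, it cannot teach the policy to chase the shaping bonus itself.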

Comparison

| Feature | AI agent | Reinforcement learning |
|---|---|---|
| Definition / Focus | Autonomous system that perceives, reasons, and acts toward goals | A learning paradigm that optimizes behavior via trial and error in an environment |
| Learning vs Deployment | Often combines perception, planning, and action for ongoing tasks | Primarily a learning method; may require a separate deployment architecture |
| Data requirements | Integrates existing data, perception inputs, and domain knowledge | Requires rich interactive data from simulations or real environments |
| Sample efficiency | Often needs little task-specific training when assembled from modular components | Can be sample-inefficient without careful design and tooling |
| Adaptability | Designed for broad automation across domains with reusable components | Optimizes sequential decisions; may need retraining for new tasks |
| Best use cases | Automation, orchestration, tool usage, and end-to-end workflows | Robotics, games, and control problems with clear reward signals |
| Risks & governance | Integration complexity, maintainability, and explainability | Reward design challenges, safety in exploration, and distribution shift |
| Cost & time to value | Often faster to deploy with existing tooling and services | Potentially high upfront training costs and compute needs |

Positives

- Faster time-to-value when reusing prebuilt agent components

- Supports modular, reusable automation across tasks

- RL can optimize long-horizon decisions with goal-driven rewards

- Agentic workflows enable tool-using capabilities and collaboration with humans

- Clear governance and monitoring when combining approaches

What's Bad

- RL can be data-hungry and costly to train

- AI agents add integration and maintenance complexity

- Reward design in RL can cause unintended behaviors

- Brittleness under distribution shift without robust testing

AI agents generally offer a practical, scalable path for end-to-end automation, with reinforcement learning best reserved for optimizing specific sequential decisions.

If your priority is rapid deployment and governance, start with AI agents. Use RL when you need to maximize long-horizon rewards in a controlled environment, and consider a hybrid agentic AI design that combines the strengths of both.

Questions & Answers

What is the main difference between an AI agent and reinforcement learning?

AI agents are autonomous systems that act to achieve goals, while reinforcement learning is a method for learning policies through interactions with an environment. RL can train agents, but not all agents rely on RL.

Can reinforcement learning be used to build AI agents?

Yes. RL can train the decision policies inside an AI agent, especially for sequential tasks. In practice, many agentic AI systems combine RL with planning and rule-based components.

When should I choose an AI agent over reinforcement learning?

Choose AI agents when you need reliable automation with reusable components and faster deployment. Choose RL when you must optimize long-horizon rewards in interactive environments and have sufficient data and compute.

What are common pitfalls when combining AI agents with RL?

Reward misdesign, distribution shift, and integration complexity are common risks. Ensure robust evaluation, monitoring, and guardrails when blending agentic AI with RL.

Is reinforcement learning always better for games and robotics?

Not always. RL works well for games and robotics when the setup supports it, for example with a good simulator and a clear reward signal. For many business automation tasks, however, AI agents with rule-based or planning components can outperform RL because of data availability and deployment constraints.

How do you evaluate AI agent performance in practice?

Use task-specific metrics, safety and reliability criteria, and simulation-driven testing. Compare against baselines and monitor real-world outcomes to ensure alignment with goals.

Key Takeaways

- Assess task scope before choosing an approach

- Leverage agent architecture to separate perception, planning, and action

- Use RL to refine decision policies where sequential reasoning matters

- Plan for governance and safety from the start