AI Agent vs. RAG: A Practical Comparison for AI Workflows

An analytical guide comparing AI agents and RAG (retrieval-augmented generation) for smarter automation. Learn use cases, tradeoffs, and best practices for agentic AI workflows in modern systems.

In AI automation, AI agents and RAG are two core approaches to building intelligent workflows. AI agents orchestrate actions across tools with memory and state, while retrieval-augmented generation (RAG) grounds responses in external data retrieved from a live knowledge base. The choice hinges on control, latency, data freshness, and governance. This article helps teams evaluate which approach best fits their automation strategy.

Defining AI Agents and RAG

AI agents are software entities that autonomously perform a sequence of actions by calling tools, services, and APIs. They maintain internal state, make decisions, and adapt to changing circumstances with minimal human input. Retrieval-augmented generation (RAG) combines a generative model with a retrieval layer that sources evidence from a knowledge base, documents, or live feeds to ground outputs in verifiable data. The distinction isn’t merely technical; it changes how teams design control, memory, and governance into their AI workflows. For teams weighing AI agents against RAG, the starting point is understanding each approach’s core objective: proactive orchestration versus data-grounded generation.

Why this matters: In practice, many organizations blend both patterns to achieve autonomous action while preserving factual grounding where it matters most.

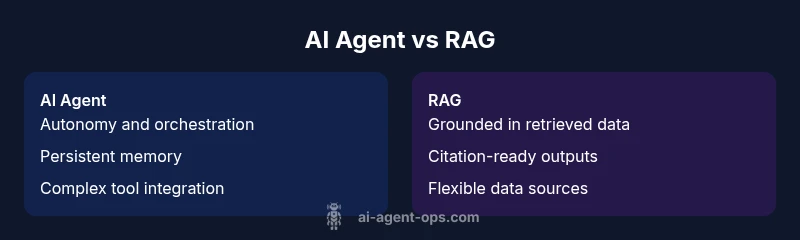

Core Differences at a Glance

- Primary objective: AI agents aim to automate end-to-end tasks with decision-making policies, whereas RAG focuses on generating responses anchored to retrieved documents.

- Memory and state: Agents retain long-running context; RAG relies on external sources for grounding, with limited internal memory unless coupled with a memory layer.

- Data freshness: Agents can operate with live data through integrations; RAG depends on the freshness of the retrieval corpus and indexing strategy.

- Latency and cost: Agents may incur planning and orchestration overhead; RAG adds retrieval latency plus generation costs.

Takeaway: The choice hinges on whether your priority is autonomous control and process orchestration (agent) or verifiable factual grounding with flexible sourcing (RAG).

Decision Factors: When to Choose AI Agents

If your goals include automating complex workflows, orchestrating tool use, and maintaining a consistent policy for action, AI agents are typically the stronger choice. They excel in domains where tasks require multi-step reasoning, conditional branching, and long-term state. Agents shine when integration depth with enterprise tools, adapters, and custom plugins is feasible, enabling proactive automation, exception handling, and auditability. For teams pursuing agentic AI, investing in a robust action policy, memory management, and clear ownership models pays dividends. Best-fit signals: complex process automation, high autonomy needs, strict governance requirements.

Patterns to consider: (1) modular agent skills that can be composed, (2) a persistent memory layer for context, (3) explicit safety rails and approval gates.
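The three patterns above can be sketched in a few lines. This is a minimal illustration, not any particular framework's API: the `Agent` class, the skill functions, and the `approve` callback are all hypothetical names, and the in-memory list only stands in for a persistent memory layer.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

# A skill is any callable that takes arguments and returns a text result.
Skill = Callable[[Dict[str, object]], str]


@dataclass
class Agent:
    skills: Dict[str, Skill]                 # (1) composable skills
    high_risk: Set[str]                      # skills that need sign-off
    approve: Callable[[str], bool]           # (3) approval gate (human or policy)
    memory: List[str] = field(default_factory=list)  # (2) stand-in for persistent memory

    def run(self, skill_name: str, args: Dict[str, object]) -> str:
        # High-risk skills only execute when the approval gate says yes.
        if skill_name in self.high_risk and not self.approve(skill_name):
            result = f"blocked: {skill_name} requires approval"
        else:
            result = self.skills[skill_name](args)
        # Every outcome, including blocked ones, is recorded for later context.
        self.memory.append(f"{skill_name} -> {result}")
        return result


def lookup_order(args: Dict[str, object]) -> str:
    return f"order {args['order_id']} is shipped"


def issue_refund(args: Dict[str, object]) -> str:
    return f"refunded {args['amount']}"


agent = Agent(
    skills={"lookup_order": lookup_order, "issue_refund": issue_refund},
    high_risk={"issue_refund"},
    approve=lambda name: False,  # deny by default in this sketch
)
```

Keeping the gate as an injected callback means the same agent could be wired to a human review queue or an automated policy check without changing the skills themselves.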

Decision Factors: When to Choose RAG

RAG is attractive when your priority is data-grounded outputs that must reflect external facts or documents. It’s ideal for answering questions with citations, policy-driven responses, or knowledge-based assistance where the data source can be updated frequently. RAG lowers the burden of building expansive internal memory and policies, at the cost of retrieval setup and potential latency. For teams weighing AI agents against RAG, RAG is often preferred in scenarios with dynamic knowledge needs, regulatory citation requirements, and fast iteration on data sources. Best-fit signals: factual grounding, frequent data updates, citation requirements.

Patterns to consider: (1) a vector store or search index for quick retrieval, (2) a retriever that’s tuned for domain relevance, (3) a generator configured to incorporate retrieved evidence clearly.
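As a rough illustration of these three pieces, the sketch below uses a bag-of-words cosine similarity in place of a real vector store and builds a prompt that asks the generator to cite source ids. The `DOCS` corpus, `retrieve`, and `build_prompt` are invented for this example; a production system would more likely use learned embeddings and a dedicated index.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Toy corpus standing in for an indexed knowledge base (contents are made up).
DOCS: Dict[str, str] = {
    "policy-1": "Refunds are available within 30 days of purchase.",
    "policy-2": "Shipping takes five business days for domestic orders.",
    "policy-3": "Support is available by email on weekdays.",
}


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> List[str]:
    """Return ids of the top-k documents with a non-zero similarity score."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in DOCS.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(query: str) -> str:
    """Assemble a generator prompt that carries the evidence and its ids."""
    ids = retrieve(query)
    evidence = "\n".join(f"[{doc_id}] {DOCS[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{evidence}\nQuestion: {query}"
    )
```

Passing the document ids through to the prompt is what later makes citations traceable back to the retrieval corpus.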

Architecture and Dataflows

AI agents typically implement a loop: observe input, plan a sequence of actions, execute tool calls, and update internal state. They may use planning modules, sub-skills, and a policy engine to decide which tools to call and when to stop. Data flows emphasize internal memory, decision logs, and action traces. RAG architectures insert a retrieval step before generation: user input feeds a retriever, which returns relevant documents; a generator then crafts the response grounded in those documents. Optional post-processing, citation rendering, and user feedback loops complete the cycle. A practical takeaway is that agents prioritize actionability, while RAG prioritizes factual grounding.
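The agent loop described above (observe, plan, execute, update state) might look like the following sketch. The planner here is a hard-coded toy, and `plan`, `run_agent`, and the two tools are hypothetical; in practice the planning step would typically be delegated to a model or a policy engine.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


def plan(goal: str, state: Dict[str, str]) -> List[Tuple[str, str]]:
    """Toy planner: return the (tool, argument) steps that have not run yet."""
    steps = [("search", goal), ("summarize", "search")]
    return [step for step in steps if step[0] not in state]


def run_agent(goal: str, tools: Dict[str, Tool], max_steps: int = 5):
    state: Dict[str, str] = {}  # internal state: results keyed by tool name
    trace: List[str] = []       # action trace for auditing
    for _ in range(max_steps):  # hard cap so the loop always terminates
        remaining = plan(goal, state)  # observe current state and plan
        if not remaining:
            break                      # nothing left to do
        tool_name, arg = remaining[0]
        # An argument may name an earlier step, in which case use that step's result.
        tool_input = state.get(arg, arg)
        state[tool_name] = tools[tool_name](tool_input)  # execute and update state
        trace.append(tool_name)
    return state, trace


tools: Dict[str, Tool] = {
    "search": lambda query: f"3 documents about {query}",
    "summarize": lambda text: f"summary of: {text}",
}
state, trace = run_agent("vector databases", tools)
```

The `max_steps` cap and the explicit trace are the two pieces that matter most for governance: one bounds runaway behavior, the other records what the agent did.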

Hybrid patterns include a supervisor that can override autonomous agent decisions based on retrieved facts, or a shared memory layer that blends grounded evidence with agentic memory.

Performance, Latency, and Cost

Latency is a key factor when choosing between AI agents and RAG. Autonomous agents may incur multi-turn tool calls, policy evaluation, and memory writes, each of which adds to end-to-end latency. RAG adds retrieval latency; index design and caching can reduce this overhead but not eliminate it. Cost is typically driven by compute for planning and tool usage in agents, and by API calls plus storage for retrieval in RAG. For teams evaluating total cost of ownership, it’s essential to model expected call frequency, data volume, and the cost of maintaining the knowledge store. A hybrid approach can balance latency with grounding by keeping latency-critical paths inside the agent and routing the remaining requests through retrieval.
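A back-of-the-envelope model can make this comparison concrete. The sketch below is only a starting point: the `Workload` fields are an assumed simplification, and every number in the example is a placeholder to be replaced with your own measurements, not a benchmark.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    requests_per_day: int
    llm_calls_per_request: float    # agents often need several calls per request
    cost_per_llm_call: float        # in your currency; placeholder value below
    latency_per_llm_call_s: float
    extra_latency_s: float = 0.0    # tool round trips (agent) or retrieval (RAG)
    fixed_daily_cost: float = 0.0   # e.g. index storage and refresh for RAG

    def daily_cost(self) -> float:
        variable = self.requests_per_day * self.llm_calls_per_request * self.cost_per_llm_call
        return variable + self.fixed_daily_cost

    def latency_s(self) -> float:
        # Assumes model calls run sequentially within a request.
        return self.llm_calls_per_request * self.latency_per_llm_call_s + self.extra_latency_s


# Placeholder inputs for illustration only.
agent_workload = Workload(1000, 4, 0.01, 1.5, extra_latency_s=2.0)
rag_workload = Workload(1000, 1, 0.01, 1.5, extra_latency_s=0.5, fixed_daily_cost=5.0)
```

Comparing `daily_cost()` and `latency_s()` for each workload, then varying call frequency and data volume, gives a first estimate of where a hybrid split might pay off.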

Governance, Safety, and Compliance

Governance is often more explicit with AI agents because their decisions can be logged and audited: tool calls, memory updates, and decision logs provide traces for compliance and debugging. RAG requires governance around data sources, licensing, and retrieval policies to ensure sources are reliable and citations are traceable. Both patterns benefit from guardrails, but agents demand stronger controls for action, while RAG requires stringent data provenance and citation management.

Practical governance tips: clear ownership for decision policies, auditable tool usage, and strict data handling rules for any retrieved content. Combine both patterns with guardrails that require human-in-the-loop approval for high-risk actions.
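One way to make tool usage auditable is to wrap each tool in a decorator that records who owns it, what it was called with, and whether it succeeded. The sketch below assumes an in-memory list as the audit store; `audited`, `AUDIT_LOG`, and `send_email` are hypothetical names, and a real deployment would write to durable, access-controlled storage.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

AUDIT_LOG: List[Dict[str, Any]] = []  # in-memory stand-in for a durable audit store


def audited(tool_name: str, owner: str) -> Callable:
    """Decorator that appends one audit entry per tool call, success or failure."""

    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            entry: Dict[str, Any] = {
                "tool": tool_name,
                "owner": owner,  # who is accountable for this tool's policy
                "args": json.dumps([args, kwargs], default=str),
                "ts": time.time(),
            }
            try:
                result = fn(*args, **kwargs)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = f"error: {exc}"
                raise  # surface the failure to the caller after recording it
            finally:
                AUDIT_LOG.append(entry)

        return wrapper

    return decorator


@audited("send_email", owner="support-team")
def send_email(to: str, body: str) -> str:
    if "@" not in to:
        raise ValueError("invalid address")
    return f"sent to {to}"
```

Because the entry is appended in `finally`, failed calls are logged as well as successful ones, which is usually what an audit trail needs.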

Tooling and Ecosystem Compatibility

The tool landscape matters. AI agents integrate deeply with orchestration platforms, workflow engines, and enterprise APIs. They often require adapters, SDKs, and observability tooling to monitor decisions and performance. RAG ecosystems hinge on reliable retrieval stacks: vector databases, search indices, and data pipelines for indexing and refresh. Effective agent-based systems often leverage hybrid tooling to connect decision-making with data retrieval, enabling resilient, explainable AI workflows. When choosing between AI agents and RAG, assess your existing tech stack, data governance constraints, and the availability of connectors for your preferred data sources.

Real-World Use Cases by Industry

- Finance and Risk: Agents automate trading workflows or compliance checks; RAG supports policy lookup and evidence-backed explanations.

- Healthcare: Agents orchestrate patient care tasks; RAG grounds medical answers with up-to-date guidelines.

- Customer Support: Agents drive multi-step fulfillment; RAG provides product documentation and policy citations.

- Real Estate: Agents coordinate property listings and workflows; RAG supplies market data with citations.

- Tech Ops: Agents automate incident response; RAG retrieves runbooks and troubleshooting docs.

In each industry, a thoughtful blend can deliver both autonomous actions and fact-backed outputs.

Implementation Patterns: Hybrid Approaches

Hybrid patterns pair agents with retrieval layers so that critical decisions are grounded in evidence. A common approach is to route high-stakes tasks through a supervisor that can veto actions if retrieved facts contradict the planned steps. Another pattern is to maintain a lightweight memory layer for context while using RAG to fetch supporting data. Finally, monitor and iterate: start with a hybrid pilot, measure task success, latency, and grounding reliability, then scale.
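The supervisor pattern can be sketched as a check that runs between planning and execution. Everything here is illustrative: `retrieve_facts` stands in for a retrieval layer that returns structured facts, and the refund limits are invented values rather than real policy.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PlannedAction:
    name: str
    params: Dict[str, float]


def retrieve_facts(topic: str) -> Dict[str, float]:
    # Hypothetical stand-in for a retrieval layer; the limits are made up.
    knowledge = {"refund": {"max_days_since_purchase": 30, "max_amount": 500}}
    return knowledge.get(topic, {})


def supervise(action: PlannedAction) -> List[str]:
    """Return a list of violations; an empty list means the action may proceed."""
    facts = retrieve_facts(action.name)
    if not facts:
        return ["no grounding found: escalate to a human"]
    violations = []
    if action.params.get("days_since_purchase", 0) > facts["max_days_since_purchase"]:
        violations.append("purchase is outside the refund window")
    if action.params.get("amount", 0) > facts["max_amount"]:
        violations.append("amount exceeds the refund limit")
    return violations


def execute_if_allowed(action: PlannedAction) -> str:
    violations = supervise(action)
    if violations:
        return "vetoed: " + "; ".join(violations)  # the supervisor overrides the agent
    return f"executed {action.name}"
```

Treating missing evidence as a veto rather than a pass is a deliberately conservative choice for high-stakes tasks; lower-risk paths could relax it.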

Best Practices for Evaluation and Migration

- Define success metrics: task completion rate, grounding accuracy, latency, and user satisfaction.

- Run controlled pilots comparing autonomous agent execution against retrieval-grounded outputs.

- Instrument decision logs, tool calls, and retrieved sources for auditing.

- Plan for gradual migration: begin with a hybrid architecture and evolve toward stronger autonomy if governance allows.
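The metrics in this list can be computed from logged pilot runs with a small helper. The record fields and the sample values below are assumptions made for illustration; user satisfaction is left out because it usually comes from surveys rather than logs.

```python
from statistics import median
from typing import Dict, List


def summarize_pilot(runs: List[Dict]) -> Dict[str, float]:
    """Aggregate task completion, grounding accuracy, and latency over pilot runs."""
    total_citations = sum(r["citations_total"] for r in runs)
    supported = sum(r["citations_supported"] for r in runs)
    return {
        "completion_rate": sum(1 for r in runs if r["completed"]) / len(runs),
        # Share of citations that a reviewer confirmed are backed by the source.
        "grounding_accuracy": supported / total_citations if total_citations else 0.0,
        "median_latency_s": median(r["latency_s"] for r in runs),
    }


# Invented sample records, not real measurements.
sample_runs = [
    {"completed": True, "citations_supported": 3, "citations_total": 3, "latency_s": 2.0},
    {"completed": True, "citations_supported": 1, "citations_total": 2, "latency_s": 4.0},
    {"completed": False, "citations_supported": 0, "citations_total": 1, "latency_s": 6.0},
    {"completed": True, "citations_supported": 2, "citations_total": 2, "latency_s": 8.0},
]
summary = summarize_pilot(sample_runs)
```

Running the same helper over the agent pilot and the RAG pilot gives directly comparable numbers for the migration decision.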

Common Pitfalls and How to Avoid Them

- Over-reliance on automation without guardrails, leading to unsafe actions.

- Underestimating data freshness needs and retrieval latency.

- Fragmented memory models that impair context continuity.

- Inadequate evaluation plans that miss grounding quality or tool reliability.

- Insufficient governance around data sources and access controls.

Developers should prioritize clear ownership, documented policies, and measured rollouts to avoid these common traps.

Comparison

| Feature | AI Agent | RAG (Retrieval-Augmented Generation) |
|---|---|---|
| Primary Objective | Automate end-to-end tasks with autonomy and policy-driven decisions | Ground outputs in retrieved documents and external data |
| Memory/State | Persistent internal state and context over sessions | Rely on external sources; memory optional via separate layer |
| Data Freshness | Live data through integrations; freshness depends on tooling | Freshness depends on retrieval corpus and indexing strategy |
| Latency | Planning and tool calls can add steps; potential higher latency | Retrieval + generation adds retrieval and compute overhead |
| Tooling Integration | Deep API/plugin integrations and orchestration | Connectors to data sources and retrieval systems |
| Data Privacy | Governance for tool access and secrets; complex ownership | Needs data-provenance policies and access controls |
| Cost Model | Compute, memory, and orchestration costs | API calls, retrieval ops, and storage costs |
| Best Use Case | Complex, autonomous workflows requiring policy consistency | Data-grounded responses with up-to-date information |

Positives

- Clear ownership of automation behavior

- Rich tool orchestration enables end-to-end workflows

- Strong governance and traceability

- Modular and reusable components

- Flexibility to mix with retrieval systems

Negatives

- Potentially higher upfront complexity

- Latency and cost can scale with integration depth

- Data governance and privacy challenges

- Requires ongoing maintenance of memory and policies

AI agents excel for autonomous orchestration with memory, while RAG shines for data-grounded, rapidly updated responses.

If you need end-to-end automation with controllable behavior, choose AI agents. If you require factual grounding with easily updatable data sources, choose RAG. In many cases, a hybrid approach delivers the best of both worlds.

Questions & Answers

What is an AI agent in this context?

An AI agent is a software entity that can autonomously perform a sequence of actions by calling tools and services, guided by decision policies and memory. It aims to execute complex tasks without requiring constant human input.

An AI agent automates tasks by talking to tools and making decisions on its own, based on learned policies and memory.

What is RAG and when should I use it?

RAG stands for retrieval-augmented generation. It combines a retriever with a generator to ground responses in external data. Use RAG when factual accuracy and citations matter or when data sources change frequently.

RAG uses retrieved documents to back up what the AI generates, which is great for facts and citations.

Can AI agents and RAG be used together?

Yes. Hybrid architectures route certain tasks through agents for automation while leveraging RAG for data-grounded insights. This pattern enables autonomy where safe and grounding where facts matter.

Absolutely—many teams blend the two to get automation plus facts tied to sources.

How do I evaluate grounding quality?

Evaluate grounding by measuring citation accuracy, source traceability, and the consistency between retrieved evidence and generated responses. Include human-in-the-loop reviews for critical domains.

Check how well the sources back up what the model says and whether those sources are traceable.
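A simple automated check can cover the traceability part of this evaluation before human review. The sketch below assumes answers cite sources inline as `[source-id]`; `check_citations` is a hypothetical helper, and it only verifies that citations point at retrieved documents, not that the cited text actually supports the claim.

```python
import re
from typing import Dict, List


def check_citations(answer: str, retrieved: Dict[str, str]) -> Dict[str, List[str]]:
    """Flag citations that do not map to retrieved sources and sentences with no citation."""
    cited = set(re.findall(r"\[([\w-]+)\]", answer))
    known = set(retrieved)
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    uncited = [s for s in sentences if not re.search(r"\[[\w-]+\]", s)]
    return {
        "traceable": sorted(cited & known),   # citations backed by a retrieved document
        "unknown": sorted(cited - known),     # cited ids that were never retrieved
        "uncited_sentences": uncited,         # claims with no source attached
    }
```

Sentences flagged as uncited or citing unknown ids are natural candidates for the human-in-the-loop review mentioned above.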

What are common pitfalls when choosing between them?

Common pitfalls include underestimating data freshness needs, over-optimizing for latency at the expense of accuracy, and neglecting governance. Start with a pilot that tests both patterns under realistic workloads.

Watch out for latency versus accuracy and for governance gaps when you pick a path.

Is there a recommended starting point for teams new to this?

A practical starting point is a hybrid pilot: deploy a lightweight AI agent for automation on non-critical tasks, while testing a RAG approach for data-heavy queries. Monitor success metrics and iterate.

Try a small hybrid setup first, then expand based on what you learn.

Key Takeaways

- Assess data freshness needs before choosing

- Choose AI agents when autonomous, end-to-end automation is the priority

- Plan for retrieval overhead with RAG

- Consider a hybrid approach to balance strengths