AI Agent vs Persona: A Practical Comparison

A rigorous comparison of AI agents and personas, detailing autonomy, governance, UX, and implementation considerations to help developers and leaders decide when to deploy agentic AI versus scripted personas.

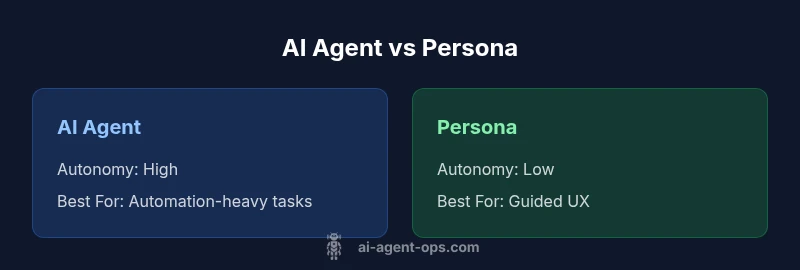

AI agents and personas serve different goals. An AI agent is an autonomous system that can observe, decide, and act to complete tasks, while a persona is a designed interaction style that shapes how users experience a system through scripted dialogue. For automation and flexible workflows, AI agents usually outperform fixed personas; for guided user interactions and strict governance, personas can be safer and easier to manage. The choice hinges on autonomy needs and risk tolerance.

Defining AI agent and persona

According to Ai Agent Ops, an AI agent is an autonomous software entity that can observe, reason, and take action to complete tasks in a real-world environment or a digital stack. It coordinates tools, makes decisions, and can adapt its behavior based on outcomes. A persona, by contrast, is a designed interaction style: a character, tone, and script that shapes how the system communicates with users. Personas do not typically initiate actions on their own; they guide user experience and set expectations. In practice, teams use AI agents to automate workflows, while personas anchor user trust and consistency. Understanding the distinction is essential, because the choice between an AI agent and a persona affects data flows, governance, and how developers structure the system. In many enterprise scenarios, both approaches are used in tandem, with an agent handling autonomous tasks behind the scenes and a persona shaping user-facing interactions. This distinction matters for app design, API orchestration, and the organizational processes that govern AI behaviors. The Ai Agent Ops team emphasizes that selecting the right approach hinges on autonomy needs, risk tolerance, and the desired balance between speed and control.

Core distinctions: autonomy, scope, and adaptivity

The most visible difference between an AI agent and a persona is autonomy. An AI agent operates with a sense-plan-act loop, frequently invoking external tools, APIs, and data sources to complete tasks without human prompting for every step. A persona, meanwhile, relies on scripted prompts and pre-defined response patterns, delivering a predictable user experience but limited capacity for independent action. Decision-making scope follows the same logic: agents handle end-to-end tasks, including retries, orchestration, and exception handling; personas support guided flows with predefined decision points. Adaptivity also diverges: agents learn from feedback and environment signals, adjusting their behavior over time; personas typically stay static unless a human updates the scripts or tone. Data access reveals further contrast: agents need access to live data and control over systems, while personas primarily present information and collect input within a bounded context. Governance and safety considerations scale with autonomy: agents demand stronger oversight and auditing; personas are easier to validate but offer less flexibility.
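The sense-plan-act loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `Agent` class, the `reorder` tool, and the inventory scenario are hypothetical names introduced here, and a hard-coded rule stands in for what would usually be a model-driven planner.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool registry: tool name -> callable that acts on the environment.
Tools = Dict[str, Callable[[dict], str]]


@dataclass
class Agent:
    tools: Tools
    max_steps: int = 5  # hard cap so the loop cannot run forever
    history: List[Tuple[str, str]] = field(default_factory=list)

    def sense(self, env: dict) -> dict:
        # Observe: take a snapshot of the environment state.
        return dict(env)

    def plan(self, observation: dict) -> Optional[str]:
        # Decide: a hard-coded rule stands in for a real planner (assumption).
        if observation["stock"] < observation["threshold"]:
            return "reorder"
        return None  # goal satisfied, nothing left to do

    def run(self, env: dict) -> List[Tuple[str, str]]:
        for _ in range(self.max_steps):
            action = self.plan(self.sense(env))
            if action is None:
                break
            result = self.tools[action](env)  # Act: invoke the chosen tool.
            self.history.append((action, result))
        return self.history


def reorder(env: dict) -> str:
    # Illustrative tool: pretend to place a replenishment order of 10 units.
    env["stock"] += 10
    return f"stock now {env['stock']}"


env = {"stock": 3, "threshold": 20}
agent = Agent(tools={"reorder": reorder})
steps = agent.run(env)
print(steps)
```

Starting from a stock of 3 and a threshold of 20, the loop calls the tool twice and then stops on its own once the plan step finds nothing left to do. The `max_steps` cap is a simple example of the kind of bound that keeps autonomous retries from running unchecked.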

When an AI agent is the right tool vs a persona

Choosing between an AI agent and a persona hinges on the problem you are solving. If the goal is to automate complex, multi-step processes that involve decision-making under uncertainty, an AI agent is often the right tool. In domains like order orchestration, supply chain, or proactive customer support, autonomous agents can reduce latency and human workload. Conversely, if your priority is a brand voice, regulated user interactions, and predictable outcomes, a persona may be safer and sufficient. Personas excel in front-end experiences where the goal is consistency, tone, and quick, rule-based responses. In many systems, teams blend both approaches: agents do the heavy lifting behind the scenes, while personas manage user-facing dialogue. This hybrid model can balance autonomy with control, but it requires clear handoffs and robust interfaces between components. The Ai Agent Ops framework suggests starting with a clear boundary: what the agent can control autonomously, and where human-in-the-loop oversight should exist to maintain trust and compliance.

Architecture patterns: agentic AI vs persona-based systems

Agentic AI patterns center on sense-think-act loops, memory, and tool use. An agent environment includes a planner, a set of capabilities, and a memory module to retain context across sessions. External tools—APIs, databases, and event streams—are core to the agent’s action repertoire. Security and governance are built into the workflow from the start with robust auditing, intent classification, and rollback strategies. Persona-based systems adopt a more scripted, prompt-driven architecture. They rely on a strong tone and style guide, a dialogue manager to steer conversations, and a deterministic response mechanism. Memory (short-term context) is typically kept within a session or a user profile, with changes gated by explicit prompts. The integration surface tends to be narrower but easier to validate. When designing either pattern, architects should consider latency, observability, and error-handling pathways, plus the trade-offs between interpretability and speed. The Ai Agent Ops guidance highlights that choosing an architecture is as much about governance as software design, with clear escalation rules and robust logging being foundational.
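To make the persona side of this comparison concrete, the sketch below shows a deterministic dialogue manager of the kind described above: a fixed tone, scripted responses, and memory limited to the current session. The `Persona` class, the keyword-based matching, and the sample script are illustrative assumptions, not part of any particular framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Persona:
    name: str
    greeting: str               # fixed tone prefix applied to every reply
    script: Dict[str, str]      # keyword -> scripted reply
    fallback: str
    session: List[str] = field(default_factory=list)  # short-term memory only

    def reply(self, user_text: str) -> str:
        self.session.append(user_text)
        lowered = user_text.lower()
        # Deterministic matching: the first scripted keyword found wins.
        for keyword, response in self.script.items():
            if keyword in lowered:
                return f"{self.greeting} {response}"
        # No scripted path: hand off rather than improvise.
        return f"{self.greeting} {self.fallback}"


# Hypothetical persona and script, for illustration only.
support = Persona(
    name="Ava",
    greeting="Happy to help!",
    script={
        "refund": "Refunds are processed within the window stated in our policy.",
        "hours": "Our support hours are listed on the contact page.",
    },
    fallback="Let me connect you with a human colleague.",
)

print(support.reply("What are your hours?"))
print(support.reply("Can you rebook my flight?"))
```

Because every reply comes from the script or the fallback, the full set of possible outputs can be reviewed in advance, which is what makes this pattern easier to validate than an agent with open-ended tool access.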

Governance, safety, and accountability

Autonomous agents raise governance and safety questions that go beyond typical software development. Decision traceability, explainability, and compliance with data handling rules must be baked in from the start. Clear escalation paths, human-in-the-loop triggers, and strict role-based access controls help manage risk. For personas, governance still matters, but the risk surface is narrower; ensuring brand safety, tone consistency, and privacy controls is essential. Across both approaches, maintaining an auditable trail of actions, decisions, and outcomes supports accountability and post-incident analysis. Ai Agent Ops emphasizes applying a risk-based framework: classify tasks by criticality, implement guardrails for highly consequential actions, and continuously monitor for drift in behavior or alignment with policy. Regular red-teaming and scenario testing are recommended to uncover edge cases before deployment.
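One way to picture the risk-based framework above is a small guardrail that classifies each action by criticality, escalates highly consequential actions unless a human has approved them, and records every decision in an audit trail. The sketch below is a rough illustration only; the `POLICY` table, the action names, and the `guarded_execute` function are hypothetical and would need to reflect an organization's actual policies.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import IntEnum
from typing import Callable, Dict, List


class Criticality(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AuditRecord:
    timestamp: str
    action: str
    criticality: str
    decision: str  # "executed" or "escalated"


# Hypothetical policy table mapping actions to criticality levels.
POLICY: Dict[str, Criticality] = {
    "send_status_email": Criticality.LOW,
    "update_inventory": Criticality.MEDIUM,
    "issue_refund": Criticality.HIGH,
}

audit_log: List[AuditRecord] = []


def guarded_execute(action: str, run: Callable[[], None],
                    approved_by_human: bool = False) -> str:
    # Unknown actions are treated as HIGH so new capabilities fail closed.
    level = POLICY.get(action, Criticality.HIGH)
    if level == Criticality.HIGH and not approved_by_human:
        decision = "escalated"  # human-in-the-loop trigger; nothing is run
    else:
        run()
        decision = "executed"
    # Every decision is logged, whether or not the action ran.
    audit_log.append(AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        action=action,
        criticality=level.name,
        decision=decision,
    ))
    return decision


print(guarded_execute("send_status_email", lambda: None))  # executed
print(guarded_execute("issue_refund", lambda: None))       # escalated
```

Defaulting unknown actions to the highest criticality is a design choice in this sketch: it means a newly added tool cannot act autonomously until someone classifies it.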

UX and developer considerations

From a user experience perspective, AI agents require clear signals about what the system is doing and why. Transparency about autonomous actions builds trust, while providing users with easy ways to intervene when necessary. For developers, the challenge is building modular, observable components with well-defined interfaces. Personas, on the other hand, emphasize voice, tone, and user expectations. Writers and UX designers encode the brand’s character into prompts, ensuring consistency across interactions. In both cases, performance matters: latency, reliability, and visibility into system health shape user satisfaction. A practical rule is to design for graceful degradation: when the agent cannot complete a task, the system should fail safely and offer a transparent alternative path. The balance between speed and control often determines whether you lean toward agents or personas in a given product area.
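The graceful-degradation rule above can be sketched as a thin wrapper around any agent task. This is an illustrative example, not a recommended library pattern: `run_with_fallback`, `Outcome`, and `flaky_booking` are hypothetical names, and the user-facing message assumes the wrapped task makes no partial changes before it fails.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Outcome:
    ok: bool
    message: str  # shown to the user, so it should explain what happened


def run_with_fallback(task_name: str, task: Callable[[], str]) -> Outcome:
    try:
        return Outcome(ok=True, message=task())
    except Exception as exc:  # broad on purpose: any failure must degrade safely
        # Fail safely: take no further action, say what went wrong,
        # and point the user to an alternative path.
        # Assumption: the task is atomic, so a failure leaves no partial changes.
        return Outcome(
            ok=False,
            message=(
                f"I could not complete '{task_name}' ({type(exc).__name__}). "
                "No changes were applied. You can retry or contact support."
            ),
        )


def flaky_booking() -> str:
    # Illustrative failure, standing in for a tool or API outage.
    raise TimeoutError("booking service did not respond")


result = run_with_fallback("book meeting room", flaky_booking)
print(result.message)
```

The caller always receives an `Outcome`, so the user interface can show a transparent message and an intervention path instead of surfacing a raw error.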

Practical implementation pitfalls

Implementers frequently stumble over ambiguous task boundaries, data access constraints, and integration fragility. For AI agents, unclear ownership of decisions and insufficient monitoring can lead to unpredictable outcomes. It is crucial to set explicit success criteria, define safe operating envelopes, and implement robust testing across diverse data scenarios. For personas, the risk is drift in tone or misalignment with policy as prompts evolve; keeping a living style guide and prompt library helps mitigate this. Data privacy, retention, and consent become central when agents access sensitive systems. Establishing a reproducible development environment, versioned tool integrations, and clear rollback procedures reduces risk. A common pitfall is treating the two paradigms as interchangeable; success comes from deliberate trade-offs and an explicit governance model that maps autonomy levels to control mechanisms.

Real-world examples and scenarios

Consider an ecommerce company deploying an AI agent to manage order routing and inventory checks. The agent autonomously probes stock levels, places replenishment requests, and triggers alerts when thresholds are breached, reducing manual intervention. In parallel, a customer support channel uses a persona to preserve a friendly, consistent brand voice, escalating complex issues to human agents as needed. In a finance organization, an AI agent might monitor risk signals across multiple data feeds and execute hedging actions, while a persona guides users through compliance-aware workflows with strict prompts. The combination of agentic automation behind the scenes and persona-driven front-end interactions demonstrates how the two approaches complement each other when designed with clear handoffs and guardrails. Across these examples, governance and monitoring are essential to maintain trust and safety.
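The handoff between a backend agent and a persona front end in these scenarios might look roughly like the sketch below. Everything in it is hypothetical: `order_agent` is a stand-in for a real autonomous agent, and the `TEMPLATES` wording is an invented brand voice. The point is only to show a narrow, explicit contract between the two layers.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class AgentResult:
    status: str   # "done" or "needs_human"
    detail: str


def order_agent(request: str) -> AgentResult:
    # Stand-in for an autonomous backend agent; real logic would call tools.
    if "cancel" in request.lower():
        return AgentResult("needs_human", "cancellations require review")
    return AgentResult("done", "order status: shipped")


# Persona layer: fixed phrasing per outcome keeps the brand voice consistent.
TEMPLATES: Dict[str, str] = {
    "done": "Good news! Here is what I found: {detail}.",
    "needs_human": "I'm passing this to a teammate because {detail}.",
}


def persona_front_end(request: str,
                      agent: Callable[[str], AgentResult] = order_agent) -> str:
    result = agent(request)
    # Explicit handoff contract: the persona only reads status and detail.
    return TEMPLATES[result.status].format(detail=result.detail)


print(persona_front_end("Where is my order?"))
print(persona_front_end("Please cancel my order"))
```

Keeping the contract to two fields means the agent can change internally without affecting tone, and the persona can be rewritten without touching the agent's logic.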

How to evaluate and choose

A practical framework starts with defining success metrics: task completion rate, time-to-resolution, user satisfaction, and policy compliance. Map tasks to autonomy levels: high-autonomy tasks belong with AI agents; flow-based, brand-driven interactions fit personas. Build a minimal viable version of each approach, then conduct parallel pilots with real users and stakeholder reviews. Measure not just performance, but explainability and controllability. Consider a hybrid pattern where agents handle autonomous steps behind a curated persona that conveys tone and brand, with a documented escalation path to humans. Finally, align with governance requirements: data handling, retention, and auditability. The Ai Agent Ops team recommends using a staged rollout, explicit escalation policies, and continuous monitoring to maintain safety and trust while unlocking the benefits of both AI agents and personas.
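As a rough sketch of how the pilot metrics above could be compared side by side, the example below aggregates per-interaction records into the four measures named in the framework. The `PilotRecord` fields and the sample values are placeholders invented for illustration; they are not real results.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class PilotRecord:
    approach: str              # "agent" or "persona"
    completed: bool            # did the task finish successfully?
    resolution_minutes: float
    satisfaction: int          # e.g. a 1-5 survey score
    policy_compliant: bool


def rate(flags: List[bool]) -> float:
    # Fraction of True values in a non-empty list.
    return sum(flags) / len(flags)


def summarize(records: List[PilotRecord]) -> Dict[str, Dict[str, float]]:
    summary: Dict[str, Dict[str, float]] = {}
    for approach in sorted({r.approach for r in records}):
        rows = [r for r in records if r.approach == approach]
        summary[approach] = {
            "completion_rate": rate([r.completed for r in rows]),
            "avg_resolution_minutes": mean(r.resolution_minutes for r in rows),
            "avg_satisfaction": mean(r.satisfaction for r in rows),
            "compliance_rate": rate([r.policy_compliant for r in rows]),
        }
    return summary


# Placeholder pilot data, made up purely to exercise the function.
records = [
    PilotRecord("agent", True, 4.0, 4, True),
    PilotRecord("agent", True, 6.0, 5, False),
    PilotRecord("agent", False, 11.0, 2, True),
    PilotRecord("persona", True, 9.0, 4, True),
    PilotRecord("persona", True, 11.0, 4, True),
]

summary = summarize(records)
for approach, metrics in summary.items():
    print(approach, metrics)
```

Explainability and controllability are harder to reduce to a single number, so in practice they would likely be assessed through stakeholder review alongside figures like these.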

Comparison

| Feature | AI Agent | Persona |
|---|---|---|
| Autonomy | High autonomy and action in dynamic tasks | Low autonomy; relies on scripted interactions |
| Decision-Making Scope | End-to-end task execution and retries | Guided flows with predefined decision points |
| Learning/Adaptation | Continuous learning from feedback and environment | Static behavior unless updated by humans |
| Interaction Style | Open-ended, action-oriented conversations | Structured prompts with fixed responses |
| Data Access & Execution | Access to external APIs, live data, and system control | Limited to input prompts and stored data |
| Governance & Safety | High governance requirements but scalable across domains | Easier to audit but less flexible |
| Best For | Automation-heavy workflows needing autonomous action | Controlled UX with strict compliance |

Positives

- Drives automation and faster decision-making across tasks

- Scales across multiple domains with reusable components

- Enables continuous improvement through feedback loops

- Reduces manual workloads for operators

What's Bad

- Higher risk if governance and safety controls are weak

- Complex integration and maintenance costs

- Potential data privacy and security concerns

- Can lead to user mistrust if behavior is opaque

AI agents generally outperform personas on autonomy and scalability; personas excel in controlled UX.

For broad automation and adaptive workflows, AI agents are recommended. If governance, predictability, and tightly scoped interactions are paramount, personas may be the safer choice. The Ai Agent Ops team notes that combining both approaches can be effective when governance and clear handoffs are in place.

Questions & Answers

What is the main difference between an AI agent and a persona?

An AI agent can autonomously execute actions and make decisions, while a persona guides interactions through scripted prompts and a defined tone. The agent acts; the persona guides how users feel during the interaction.

An AI agent can take actions on its own, while a persona shapes how the system talks to users through scripts.

When should I use an AI agent?

Use an AI agent when you need autonomous task execution, dynamic decision-making, and orchestration across services. It is ideal for end-to-end workflows that benefit from reduced human intervention.

Use an AI agent when you need autonomous task handling across services.

When is a persona sufficient?

A persona is sufficient when the priority is consistent brand voice, predictable user interactions, and strong controls over conversation flow. It’s easier to govern and audit in highly regulated contexts.

Choose a persona for consistent brand voice and controlled interactions.

Can you combine AI agents with personas?

Yes. A hybrid design uses agents to handle autonomous tasks behind the scenes while a persona guides user-facing dialogue, with explicit handoffs and escalation paths.

Yes, you can blend both: agents behind the scenes and personas for front-end interactions.

What are safety concerns with AI agents?

Safety concerns include misalignment, data privacy, and unintended actions. A governance model with auditing, guardrails, and monitoring reduces risk.

Key safety concerns are misalignment and privacy; guardrails help.

What metrics matter when evaluating these approaches?

Measure task success rate, time-to-resolution, user satisfaction, and compliance. Include deployability, reliability, and explainability in your evaluation.

Track success, speed, and satisfaction to compare approaches.

Key Takeaways

- Define autonomy needs before choosing a pattern

- Incorporate governance early in design

- Balance UX with scriptability for consistent experiences

- Prototype both approaches to learn constraints