AI Agent Test Loop: A Practical How-To for Developers

A practical guide to designing and executing an ai agent test loop that verifies behavior, reliability, and safety across iterations. Learn structured testing, tooling, metrics, and best practices from Ai Agent Ops to elevate agentic AI workflows.

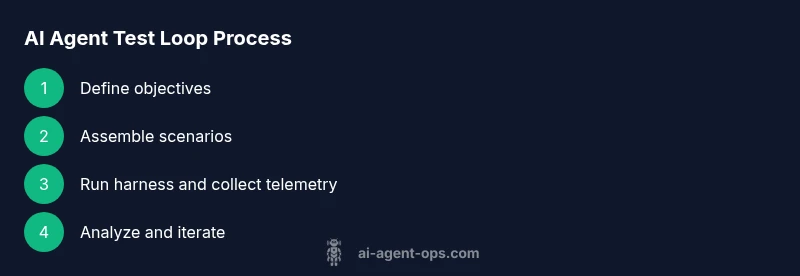

In this guide you will learn to design and run an effective ai agent test loop that validates agent behavior across iterations. You will set objectives, assemble test scenarios, and establish a repeatable workflow for evaluation, refinement, and safety checks. According to Ai Agent Ops, a well-constructed test loop accelerates learning, reduces risk, and helps teams ship robust agentic AI capabilities.

What is the ai agent test loop and why it matters

An ai agent test loop is a disciplined sequence of planning, execution, evaluation, and iteration used to validate autonomous agent behavior in real-time tasks. It combines synthetic scenarios, telemetry, and human review to uncover edge cases, misinterpretations, and unsafe prompts before they reach production. For developers and product leaders, the loop provides a living contract: every iteration should improve reliability, interpretability, and safety. Ai Agent Ops emphasizes that a loop is not a single test but a continuous feedback mechanism that scales with the complexity of agentic workflows. The loop is especially critical when agents operate in dynamic environments or collaborate with humans, where small misalignments can compound over time.

In practice, you’ll balance breadth (covering diverse task types) with depth (probing failure modes) and ensure traceability (clear experiment IDs, seeds, and telemetry) so results are reproducible across teams. As you begin, articulate what success looks like in measurable terms—accuracy, latency, safety constraints, and user satisfaction—and tie these metrics to concrete test cases. The ai agent test loop is your tool for turning exploratory development into disciplined, evidence-based progress.

Mapping objectives to concrete tests

The first step in any loop is to translate high-level goals into testable objectives. Create a matrix that maps each objective to a set of concrete tests, inputs, and expected outcomes. For example, if the objective is reliable decision-making under ambiguity, design prompts that present partial information, conflicting signals, and delayed feedback. For each test, capture the seed data, the version of the agent, the environment context, and the expected signal (e.g., confidence score, rationale trace, or policy adherence). This mapping ensures that the loop remains focused and that improvements are traceable to specific goals. Ai Agent Ops recommends documenting assumptions, failure modes, and recovery strategies alongside each test case.

Building a reusable test harness

A test harness standardizes how tests run, collects telemetry, and records results. It should support: (1) scripted test scenarios, (2) a lightweight orchestration layer to run tests in sequence or in parallel, (3) hooks to simulate external services, and (4) a robust logging/telemetry pipeline. Use versioned artifacts for prompts, tool configurations, and environment defaults so teams can reproduce results. The harness should also expose a simple API for querying current test status, listing failing cases, and triggering targeted reruns after fixes. Investing in a well-designed harness pays off as you scale up the number of agents and domains you test.

Designing diverse test scenarios and prompts

Diversity in scenarios prevents overfitting to a narrow task. Create a library of scenario templates that cover common tasks, edge cases, long-horizon planning, and real-time interaction with humans. Each template should specify input formats, expected outcomes, and edge-case triggers. Design prompts to reveal how the agent handles ambiguity, conflicting instructions, or shifting goals. Include both synthetic prompts and data derived from real user interactions, properly sanitized to protect privacy. This diversity ensures that the ai agent test loop exercises the agent across a broad spectrum of conditions.

Telemetry, logging, and traceability

Telemetry is the backbone of a useful test loop. Capture input prompts, agent outputs, internal reasoning traces when available, decision boundaries, confidence scores, latencies, and any external API calls. Store data in a structured format with unique experiment and run identifiers. Use standardized schemas for events and responses so you can aggregate results across iterations and agents. Effective telemetry enables you to answer questions like: where did the agent fail, under which conditions, and which prompt changes improved outcomes? Ai Agent Ops highlights that transparency in telemetry is essential for governance and audits.

Evaluation and failure analysis

After each test run, perform a structured analysis: summarize results, label failures by category (e.g., misinterpretation, prompt leakage, unsafe suggestion), and capture root causes. Leverage both automated signals (metrics, anomaly detection) and human review for nuanced judgments. Create a decision log that records whether a test is considered resolved, needs refinement, or should be deprecated. The goal is not to chase perfection but to steadily reduce risk and increase reliability as the loop evolves. Document learnings so future iterations inherit prior improvements.

Safeguards and ethical considerations

Incorporate guardrails that prevent unsafe outputs, sensitive data leakage, or biased behaviors. Include checks for prompt injection resilience, content policy adherence, and adherence to privacy constraints. Make sure your test data is sanitized and that you have approval processes for any data sharing across environments. Safety checks should be automated where possible but reviewed by a human in high-risk scenarios. A robust ai agent test loop treats safety as a first-class requirement rather than an afterthought.

Integrating the loop into CI/CD and product teams

Embed the test loop into your development lifecycle so that tests run automatically with each code change or model update. Create dashboards that summarize trend lines over time, highlight regression, and surface high-priority failures. Align the loop with product goals by scheduling regular reviews with stakeholders and updating acceptance criteria based on observed outcomes. By integrating the loop into CI/CD, you embed resilience into agentic AI workflows and ensure accountability across teams.

Practical governance: roles, artifacts, and cadence

Define who owns the loop at each stage—data stewards, safety leads, and product owners. Maintain artifacts such as test plans, experiment IDs, decision logs, and post-incident reviews. Establish cadence for test cycles, e.g., weekly sprints or monthly deep-dives, depending on the risk level of your domain. Clear governance reduces friction when tests uncover critical issues and ensures that improvements are implemented promptly. Ai Agent Ops recommends documenting the governance model in a living wiki accessible to all teams.

Tools & Materials

- Laptop or workstation with IDE(Ensure Python 3.x and Node.js are installed; create isolated environments per project)

- Test datasets (sanitized or synthetic)(Include prompts, scenarios, and context variations that reflect real usage)

- Agent framework or orchestration library(Support for running agents, prompts, and tool calls; versioned configurations)

- Logging and telemetry stack(Structured logs, traces, metrics; centralized storage for experiments)

- Test runner and assertion library(Ability to define expected outcomes and automatically flag deviations)

- Mock services and simulators(Useful for simulating external dependencies and rate-limiting scenarios)

Steps

Estimated time: 6-9 hours

- 1

Define testing objectives

Articulate what success looks like for the ai agent test loop. Identify primary use cases, failure modes, and safety requirements. Document measurable outcomes and establish acceptance criteria before writing tests.

Tip: Keep objectives aligned with real business goals to avoid scope creep. - 2

Assemble a diverse scenario set

Compile a library of tasks and prompts that cover typical usage, boundary conditions, and adversarial edge cases. Include ambiguous prompts to test resilience and prompt leakage scenarios.

Tip: Categorize scenarios by risk level to prioritize testing. - 3

Build a reusable test harness

Create a lightweight harness to orchestrate tests, collect telemetry, and replay seeds. Version-test artifacts to ensure reproducibility across iterations.

Tip: Expose a simple API for rerunning specific tests after fixes. - 4

Instrument telemetry and observability

Instrument prompts, outputs, reasoning traces, latency, and external calls. Use consistent schemas to enable cross-iteration comparisons.

Tip: Automate anomaly detection to surface regression quickly. - 5

Run iterative test cycles

Execute tests in short cycles, log results, and classify failures. Prioritize high-impact failures for immediate investigation.

Tip: Automate reruns for confirmed fixes to verify resolution. - 6

Perform post-run analysis

Review failures with a structured root-cause analysis. Capture decision logs, proposed fixes, and metric-driven conclusions.

Tip: Link findings to specific test cases for traceability. - 7

Incorporate safety checks

Integrate guardrails and content policies into tests. Validate that agents avoid unsafe or disallowed outputs under varied prompts.

Tip: Automate checks where possible, but include human review for high-risk outputs. - 8

Integrate with CI/CD and governance

Automatic test runs on code or model updates, with dashboards for trend analysis. Establish ownership, cadence, and escalation paths.

Tip: Document governance decisions in a shared knowledge base.

Questions & Answers

What is an ai agent test loop and why is it important?

An ai agent test loop is a disciplined sequence of planning, execution, evaluation, and iteration used to validate autonomous agent behavior. It helps teams identify edge cases, reduce risk, and improve reliability through continuous learning.

An ai agent test loop is a repeatable process for testing and improving autonomous agents, reducing risk and improving reliability.

How do you choose test scenarios for the loop?

Select scenarios that reflect real user tasks, include edge cases, and cover ambiguous prompts. Use a mix of synthetic and sanitized real data to ensure broad coverage.

Choose scenarios that reflect real tasks, including edge cases and ambiguity, using synthetic or sanitized data.

What metrics should be tracked in the loop?

Track metrics such as outcome correctness, response latency, safety violations, and stability across iterations. Use telemetry to surface trends and regressions.

Track correctness, latency, safety, and stability across iterations to spot trends.

How can safety be integrated into the test loop?

Embed guardrails and content policies in test prompts. Validate outputs against policy boundaries and include automated checks plus human review for high-risk cases.

Add guardrails and policy checks to prompts, with automated and human review for safety.

How is the loop integrated with CI/CD?

Automate test runs on changes to models or prompts, and surface results on dashboards. Tie failures to actionable remediation steps and owners.

Automate tests in CI/CD and show results on dashboards with clear ownership.

What common pitfalls should be avoided?

Avoid data leakage, overfitting to a narrow task, and brittle tests that break with minor prompts changes. Regularly refresh test data and scenarios.

Watch for data leakage and brittle tests; refresh data regularly.

Watch Video

Key Takeaways

- Define clear objectives prior to testing

- Build a reusable test harness for repeatability

- Capture structured telemetry for cross-iteration insights

- Prioritize safety and governance from day one

- Ai Agent Ops's verdict: implement an iterative ai agent test loop to steadily improve reliability and safety