Where Are AI Agents Built? A Practical Guide for 2026

Discover where AI agents are built across cloud, on-prem, and edge environments. Learn hosting patterns, governance implications, and practical architectures for scalable agentic workflows in 2026.

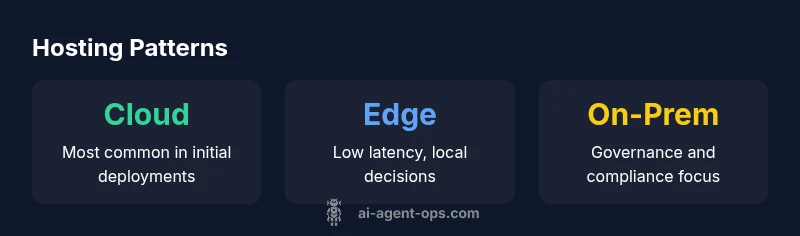

Where are AI agents built? They are deployed in cloud, on-premises, and edge environments using modular stacks that combine data pipelines, model runtimes, and orchestration layers. The exact hosting depends on latency, data governance, and scale needs, with many teams adopting hybrid architectures to balance speed, control, and cost. This article explains the patterns and trade-offs across hosting options.

Why hosting location matters for AI agents

Understanding where AI agents are built starts with recognizing that hosting location shapes latency, data residency, governance, and cost. The central question of where AI agents are built drives the design of data pipelines, model runtimes, and orchestration. Cloud hosting offers scale and speed, on-premises provides control and compliance, and edge deployments unlock low-latency inference near data sources. Each option has trade-offs in reliability, security, and operational burden. The Ai Agent Ops team notes that the hosting decision should align with data gravity, regulatory requirements, and user experience expectations. In practice, teams often design hybrid architectures that blend central orchestration with local execution. A well-chosen hosting strategy reduces data transfer costs, shortens decision latency, and improves resilience in the face of network outages.

Cloud hosting patterns for AI agents

Cloud-hosted AI agents typically rely on managed services, containerized runtimes, and orchestrated pipelines. Common patterns include centralized LLMs with tool augmentation, model registries, and workflow engines that coordinate data extraction, transformation, and action. Kubernetes-based deployment, serverless functions for event-driven tasks, and ML pipelines (e.g., data validation, feature stores, and model monitoring) enable rapid scaling. For teams, the cloud offers rapid experimentation, a broad ecosystem, and built-in security features. However, cloud costs can accumulate with sustained usage, and governance must be carefully designed to prevent data leakage across tenants. Ai Agent Ops recommends starting with a narrow use case in the cloud and gradually expanding to more data-sensitive workflows as trust and controls mature.

On-premises deployments and governance

On-prem deployments are favored when data sovereignty, strict compliance, or low-latency requirements dominate. A typical on-prem stack includes private networks, dedicated hardware accelerators, and custom security controls. Key considerations include physical security, patch management, and the complexity of software updates. Although capital expenditure is higher upfront, total cost of ownership can be lower for long-running, regulated workloads. Governance becomes more transparent with full control of data pathways and audit logs. In regulated industries such as finance and healthcare, on-prem deployments often serve as the baseline for acceptable risk. The Ai Agent Ops team emphasizes that success hinges on robust monitoring, access controls, and a clear decommissioning plan for sensitive data.

Edge deployment and real-time decisions

Edge computing brings inference closer to data sources, reducing latency and preserving bandwidth. Lightweight runtimes, compressed models, and efficient toolchains enable real-time decision-making in devices, vehicles, and industrial equipment. Edge architectures must balance model quality with resource constraints, ensure secure model updates, and maintain offline capability when connectivity is intermittent. Data governance at the edge requires encryption in transit, minimal data retention, and local logging. Edge deployments shine in scenarios like autonomous devices, smart factories, and remote locations where cloud connectivity is expensive or unreliable. The trade-offs include limited debugging visibility and longer update cycles, which Ai Agent Ops suggests mitigating with staged rollouts and remote telemetry.
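One way to picture the offline capability described above is a small agent that caches results locally and queues them for upload once connectivity returns. The following is a minimal sketch, not a reference implementation: `OfflineAgent`, `act`, `sync`, `pending_count`, and the `upload` callable are hypothetical names introduced for illustration, and the sketch assumes the uplink raises `ConnectionError` while the network is down.

```python
from collections import deque
from typing import Callable, Deque, Dict, Tuple


class OfflineAgent:
    """Hypothetical edge agent: local cache plus a queue of results awaiting upload."""

    def __init__(self, upload: Callable[[Tuple[str, str]], None]) -> None:
        # Assumption: `upload` raises ConnectionError when the device is offline.
        self._upload = upload
        self._cache: Dict[str, str] = {}
        self._pending: Deque[Tuple[str, str]] = deque()

    def act(self, key: str, compute: Callable[[str], str]) -> str:
        """Compute (or reuse) a local result and queue it for later upload."""
        if key not in self._cache:
            self._cache[key] = compute(key)
        result = self._cache[key]
        self._pending.append((key, result))
        return result

    def sync(self) -> int:
        """Upload queued results in order; stop at the first connection failure."""
        sent = 0
        while self._pending:
            try:
                self._upload(self._pending[0])
            except ConnectionError:
                break  # still offline: keep the remaining items queued
            self._pending.popleft()
            sent += 1
        return sent

    def pending_count(self) -> int:
        """Number of results still waiting to be uploaded."""
        return len(self._pending)
```

An item is removed from the queue only after its upload succeeds, so a dropped connection in the middle of a sync does not lose data. A production version would also persist the queue to disk, which this sketch leaves out.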

Hybrid architectures: The practical middle ground

Hybrid hosting combines cloud scalability with on-prem control and edge responsiveness. In a hybrid pattern, core orchestration and policy enforcement live in a trusted central environment, while edge devices handle latency-critical inference. A typical setup uses secure gateways, synchronized feature stores, and event-driven triggers that bridge cloud and edge. This approach supports data residency where required, reduces cross-network traffic, and enables fault-tolerant operation through local fallback paths. When designing hybrids, teams should define clear boundaries, data routing rules, and failover strategies. The Ai Agent Ops guidance is to implement end-to-end tracing and consolidated observability across locations to maintain a coherent view of agent behavior.
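The routing rules and local fallback path described above can be sketched in a few lines. This is an illustrative example under stated assumptions: `Request`, `route`, the two flags, and the handler callables are hypothetical names, and a cloud outage is assumed to surface as `ConnectionError`.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Request:
    payload: str
    latency_critical: bool = False  # must be answered near the data source
    must_stay_local: bool = False   # data residency forbids leaving the site


def route(
    request: Request,
    cloud_handler: Callable[[str], str],
    edge_handler: Callable[[str], str],
) -> Tuple[str, str]:
    """Return (location, result) for a request in a hybrid deployment."""
    # Data routing rule: latency-critical or residency-bound work never leaves the edge.
    if request.latency_critical or request.must_stay_local:
        return "edge", edge_handler(request.payload)
    try:
        return "cloud", cloud_handler(request.payload)
    except ConnectionError:
        # Failover strategy: fall back to local execution when the cloud is unreachable.
        return "edge-fallback", edge_handler(request.payload)
```

Returning the location alongside the result makes it straightforward to tag traces with where each request actually ran, which supports the consolidated observability mentioned above.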

Core components of an AI agent stack

A robust AI agent stack consists of data pipelines, model runtimes, tools, and an orchestration layer. The data pipeline cleanses, validates, and routes data to the agent; model runtimes host LLMs or smaller models; tools provide external capabilities like search or calendaring; and the orchestration layer coordinates planning, action selection, and feedback. A well-architected stack supports modular upgrades, observability, and security controls. Organizations should emphasize interface consistency between components, versioning of tools, and controlled access to data. In practice, many teams build standardized reference architectures with templates for deployment, monitoring, and governance.
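To make the idea of consistent interfaces between components concrete, here is a minimal sketch of the four layers wired together. All names (`ModelRuntime`, `KeywordRuntime`, `clean`, `Orchestrator`) are hypothetical, and the keyword-based runtime is only a stand-in for a real LLM so that the example runs without external services.

```python
from typing import Callable, Dict, Protocol


class ModelRuntime(Protocol):
    """Interface every model runtime must satisfy, wherever it is hosted."""

    def generate(self, prompt: str) -> str: ...


class KeywordRuntime:
    """Stand-in for an LLM: picks a tool name from a keyword in the prompt."""

    def generate(self, prompt: str) -> str:
        return "search" if "find" in prompt.lower() else "none"


def clean(text: str) -> str:
    """Data pipeline step: normalize whitespace before the agent sees the input."""
    return " ".join(text.split())


class Orchestrator:
    """Coordinates the pipeline, the model runtime, and the tools."""

    def __init__(self, runtime: ModelRuntime, tools: Dict[str, Callable[[str], str]]) -> None:
        self.runtime = runtime
        self.tools = tools

    def run(self, raw_input: str) -> str:
        data = clean(raw_input)
        if not data:
            raise ValueError("empty input rejected by the data pipeline")
        choice = self.runtime.generate(data)  # planning / action selection
        tool = self.tools.get(choice)
        if tool is None:
            return f"no tool selected for: {data}"
        return tool(data)
```

Because the orchestrator depends only on the `ModelRuntime` interface, a cloud-hosted model and a compact edge model can be swapped without changing the orchestration code.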

Architecture patterns by use case

Different use cases favor different hosting patterns. For customer support agents with moderate latency tolerance, cloud-based orchestration plus cloud-based tools can be ideal. For healthcare or finance use cases with strict data governance, on-prem or private cloud models may be preferred. For IoT-driven operations, edge-first patterns with cloud coordination for analytics often deliver the best balance. A general rule is to start with a minimal viable architecture in a single hosting location, then incrementally add locations as governance and performance metrics stabilize. The choice should be guided by business objectives, risk appetite, and the ability to measure success across environments.
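The rules of thumb above can be written down as a small decision function. This is a deliberately simplified sketch: `Workload`, `suggest_hosting`, and the 50 ms threshold are assumptions chosen for illustration, not values taken from the article or from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    max_latency_ms: int              # slowest acceptable response time
    regulated_data: bool             # e.g. healthcare or finance records
    intermittent_connectivity: bool  # e.g. IoT devices in remote locations


def suggest_hosting(workload: Workload, edge_latency_threshold_ms: int = 50) -> str:
    """Suggest a starting location; the threshold is an illustrative assumption."""
    # Edge first: no remote location can serve these constraints reliably.
    if workload.intermittent_connectivity or workload.max_latency_ms < edge_latency_threshold_ms:
        return "edge"
    # Strict data governance points to on-prem (or a private cloud).
    if workload.regulated_data:
        return "on-prem"
    # Moderate latency tolerance and no special governance: start in the cloud.
    return "cloud"
```

For example, `suggest_hosting(Workload(2000, False, False))` returns `"cloud"`, which matches the customer support case described above. Real decisions weigh more factors, such as cost and team capability, so treat the output as a starting point for discussion.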

Operational discipline: observability, security, and governance

Hosting AI agents across multiple locations demands strong observability, security, and governance practices. Centralized monitoring should track latency, error rates, data lineage, and model drift across all environments. Security controls must cover authentication, access management, and secure update paths for models and tools. Data governance policies should specify retention periods, data minimization rules, and compliance mappings to applicable regulations. Regular audits, simulated incident response, and drill-based testing ensure resilience. Finally, document the decision rationale for hosting choices to facilitate alignment among developers, operators, and business leaders.
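As a rough illustration of centralized monitoring across locations, the sketch below records latency samples per location and reports a nearest-rank 95th percentile and an error rate. `LocationMetrics` and its methods are hypothetical names; a real deployment would more likely export these figures to an existing metrics system than compute them in process.

```python
import math
from collections import defaultdict
from typing import DefaultDict, Dict, List, Optional


class LocationMetrics:
    """Collects latency and error counts per hosting location."""

    def __init__(self) -> None:
        self._latencies: DefaultDict[str, List[float]] = defaultdict(list)
        self._errors: DefaultDict[str, int] = defaultdict(int)

    def record(self, location: str, latency_ms: float, ok: bool = True) -> None:
        self._latencies[location].append(latency_ms)
        if not ok:
            self._errors[location] += 1

    def summary(self, location: str) -> Dict[str, Optional[float]]:
        samples = sorted(self._latencies.get(location, []))
        if not samples:
            return {"count": 0, "p95_ms": None, "error_rate": None}
        # Nearest-rank percentile: the smallest sample covering 95% of observations.
        rank = math.ceil(0.95 * len(samples))
        return {
            "count": len(samples),
            "p95_ms": samples[rank - 1],
            "error_rate": self._errors.get(location, 0) / len(samples),
        }
```

Keeping one summary per location makes it easy to compare, say, edge and cloud latency side by side when deciding whether a workload is hosted in the right place.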

Practical road map to hosting AI agents (actionable steps)

- Define success metrics: latency targets, data residency requirements, and governance constraints.

- Start with a cloud-native prototype for a narrow use case, then evaluate on-prem and edge options.

- Establish a modular stack with clearly defined interfaces between data pipelines, models, and orchestration.

- Implement observability and security controls from day one, including end-to-end tracing.

- Plan for hybrid expansion with risk assessments and change management processes. Ai Agent Ops recommends iterating in short cycles and documenting lessons learned.
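The first step in the list, defining success metrics, is easier to enforce when the targets live in code rather than in a document. The sketch below shows one possible shape; `HostingTargets`, `violations`, and the region names used in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class HostingTargets:
    p95_latency_ms: float            # latency target
    allowed_regions: FrozenSet[str]  # data residency requirement


def violations(targets: HostingTargets, measured_p95_ms: float, data_region: str) -> List[str]:
    """Return a human-readable list of targets the current deployment misses."""
    found: List[str] = []
    if measured_p95_ms > targets.p95_latency_ms:
        found.append(
            f"latency: p95 {measured_p95_ms} ms exceeds target {targets.p95_latency_ms} ms"
        )
    if data_region not in targets.allowed_regions:
        found.append(f"residency: data stored in disallowed region {data_region}")
    return found
```

With `targets = HostingTargets(200, frozenset({"eu-west"}))`, calling `violations(targets, 150, "eu-west")` returns an empty list, while a slower deployment in another region reports both problems. A check like this can run in each short iteration cycle to show whether a prototype is ready to expand to another location.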

Hosting patterns for AI agents by location

| Location Type | Typical Components | Key Considerations |
|---|---|---|
| Cloud | Model runtime, orchestration, data pipelines | Latency, scalability, governance |
| On-Premises | Private data stores, dedicated hardware, secure network | Compliance, maintenance, upgrade cycles |
| Edge | Lightweight runtimes, local inference, offline mode | Connectivity, resource limits, updates |

Questions & Answers

What does the phrase 'where are AI agents built' mean in practice?

It refers to the physical and logical location where the agent's components run—cloud, on-premises, or edge—each with distinct implications for latency, governance, and cost. Selecting a location involves evaluating data residency rules, performance needs, and the ability to manage updates.

Hosting refers to where the agent's components run, such as cloud, on-prem, or edge, with latency and governance as key factors.

What are the main hosting options for AI agents?

The main options are cloud, on-premises, and edge deployments. Cloud offers rapid scaling; on-prem provides strict governance and data control; edge brings low latency and local decision-making. Hybrid deployments blend these for flexibility and resilience.

The main options are cloud, on-prem, and edge, with hybrids blending them for flexibility.

How do I decide between cloud, on-prem, and edge?

Decisions hinge on latency requirements, data sensitivity, regulatory obligations, total cost of ownership, and organizational capability to manage updates. Start with a single environment for a narrow use case, then expand to others as governance and performance metrics improve.

Decide by latency, data sensitivity, and governance, starting small and expanding as you learn.

What are common challenges when hosting AI agents?

Typical challenges include data governance across locations, ensuring consistent tool APIs, monitoring model drift, and managing secure updates. Planning for observability, robust access controls, and clear ownership helps mitigate these risks.

Challenges include governance, drift, and secure updates; plan for observability and security.

Can AI agents run offline or in low-connectivity environments?

Yes, edge deployments enable offline or intermittent connectivity, but you trade off model size and capabilities. Effective offline agents use compact models, local caches, and synchronization strategies for when connectivity returns.

Yes, edge deployments can run offline with compact models and sync plans.

“AI agents perform best when hosting aligns with data governance, latency, and scale. A modular, observable architecture enables reliable hybrid deployments.”

Key Takeaways

- Define hosting goals before architecture.

- Choose hybrid when you must balance data governance with scale and latency.

- Balance latency, control, and cost across locations.

- Design for observability and secure data flows.