Building an AI Agent From Scratch: A Practical Guide

Learn to build an AI agent from scratch with a practical, step-by-step approach. Define scope, assemble a modular stack, implement sensing, reasoning, and action loops, then test, govern, and deploy responsibly.

Goal: Build an AI agent from scratch by defining the task, selecting a scalable stack, and implementing a closed loop that senses, reasons, and acts. According to Ai Agent Ops, start with a modular design and an explicit objective, then prototype quickly with small tasks and iterate toward production quality. This guide covers architecture, testing, governance, and deployment considerations to ensure reliable operation.

Foundational concepts and mindset

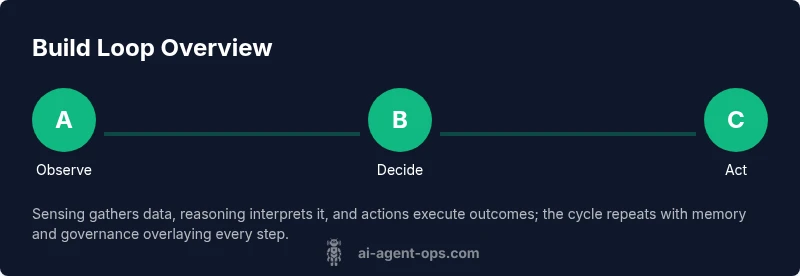

Building an AI agent from scratch starts with a clear mental model of what an agent is and how it should behave in the real world. At its core, an AI agent combines perception, reasoning, and action within a defined objective. The Ai Agent Ops team emphasizes that reliable agents are not just clever interlocutors; they are end-to-end systems that observe their environment, decide on the best action, and execute it, all while respecting safety and governance constraints.

Agents differ from simple bots: they carry planning capabilities and memory, which enable longer-term goals and adaptive behavior. Before writing code, teams should agree on the agent's scope, success criteria, and risk tolerance. This means specifying the tasks the agent will perform, the types of inputs it will handle, and the guardrails that limit harmful actions. A common mistake is to chase impressive-sounding AI features without a concrete use case or measurable outcomes.

A practical mindset combines architectural thinking with disciplined experimentation. Start with tiny, well-scoped tasks that demonstrate the loop: sense, think, act. Use small data, clear prompts, and deterministic evaluation where possible to reduce ambiguity. From a design perspective, favor modularity: separate the sensing layer, the reasoning module, and the action adapters so you can swap components without rewiring the entire system. Finally, embed governance from day one: logging, auditing, privacy controls, and safety checks should be part of the design, not after the fact.

Defining the agent's purpose and scope

Before you write a single line of code, define a precise objective for your agent. A well-scoped goal reduces ambiguity and guides all later decisions about data, prompts, and tools. Start with a real-world task that is narrow enough to measure but meaningful enough to demonstrate value. For example, build an agent that triages customer requests and extracts intent, priority, and required data.

Next, map inputs and outputs. What senses will the agent use (email, chat messages, API signals, logs)? What actions can it perform (respond to the user, fetch data, trigger workflows, escalate)? Define the success criteria in observable terms: time to resolve, accuracy of categorization, or rate of correct escalations. Also set constraints: latency targets, privacy requirements, and any forbidden actions. Guardrails should be explicit: what the agent may not do, and what should trigger human review.

Consider the data that will fuel the agent. Identify sources, ownership, retention rules, and quality checks. Decide whether to enable learning from feedback, and if so, how to protect user privacy. Finally, outline a minimal evaluation plan: create a small, repeatable test scenario and establish a baseline. This early alignment saves time later and reduces rework when adding capabilities.
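One way to make the scope reviewable is to write it down as data before any agent logic exists. The sketch below is a hypothetical `AgentSpec` for the triage example. Every field name and value, including the latency target and the forbidden action, is an illustrative assumption rather than something this guide prescribes.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical scope definition; all fields are illustrative assumptions."""

    objective: str
    inputs: tuple[str, ...]
    allowed_actions: tuple[str, ...]
    forbidden_actions: tuple[str, ...]
    max_latency_seconds: float
    human_review_triggers: tuple[str, ...] = field(default_factory=tuple)

    def is_permitted(self, action: str) -> bool:
        # An action must be explicitly allowed and not explicitly forbidden.
        return action in self.allowed_actions and action not in self.forbidden_actions

    def needs_human_review(self, reason: str) -> bool:
        return reason in self.human_review_triggers


triage_spec = AgentSpec(
    objective="Triage customer requests into intent, priority, and required data",
    inputs=("email", "chat_message"),
    allowed_actions=("respond", "fetch_data", "escalate"),
    forbidden_actions=("issue_refund",),
    max_latency_seconds=5.0,
    human_review_triggers=("low_confidence", "legal_request"),
)
```

Keeping the spec immutable (`frozen=True`) means the guardrails cannot be changed at runtime by accident; any change has to go through code review.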

Core components of an AI agent

The architecture of an AI agent rests on four core components that work in a loop: sensing, reasoning, planning, and acting. The sensing layer ingests inputs from users, APIs, logs, and sensors you define for your specific domain. The reasoning module translates raw data into meaningful interpretation, using prompts, model calls, and rule-based logic to determine what to do next. A planning component sequences tasks, prioritizes actions, and manages dependencies, ensuring that longer workflows finish coherently.

A critical memory system stores short-term context and, when appropriate, longer-term knowledge. Short-term memory helps the agent remember recent user intents and tool states within a session, while long-term memory supports cross-session continuity, auditing, and learning signals. The action adapters are the bridge to reality: they execute commands, retrieve data, or trigger external services. Good practice requires strict input validation, error handling, and clear fallbacks to human operators when confidence is low.
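The split between short-term and long-term memory can be illustrated with a small in-process sketch. The class and method names here are assumptions made for the example, and the dictionary stands in for whatever database a real long-term store would use.

```python
from collections import deque


class AgentMemory:
    """Illustrative in-process memory; a real long-term store would be a database."""

    def __init__(self, short_term_size: int = 5) -> None:
        # Short-term memory: only the most recent turns of the current session.
        self.short_term: deque[dict] = deque(maxlen=short_term_size)
        # Long-term memory: facts that persist across sessions, keyed by user.
        self.long_term: dict[str, dict[str, str]] = {}

    def remember_turn(self, user_message: str, intent: str) -> None:
        self.short_term.append({"message": user_message, "intent": intent})

    def store_fact(self, user_id: str, key: str, value: str) -> None:
        self.long_term.setdefault(user_id, {})[key] = value

    def recent_intents(self) -> list[str]:
        return [turn["intent"] for turn in self.short_term]

    def end_session(self) -> None:
        # Session context is dropped; long-term facts survive.
        self.short_term.clear()


memory = AgentMemory(short_term_size=2)
memory.remember_turn("Where is my order?", "order_status")
memory.remember_turn("I want to return it", "return_request")
memory.remember_turn("Talk to a person", "escalate")
memory.store_fact("user-1", "preferred_channel", "email")
```

Because the deque has a fixed `maxlen`, the oldest turn is discarded automatically once the window is full, which keeps session context bounded.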

To keep the design robust, separate concerns into modules with defined interfaces. This makes it easier to swap a model, a tool, or a database without rewriting the entire pipeline. It also enables parallel testing: you can evaluate each component in isolation before integrating them into the end-to-end loop. Finally, plan for governance: enable telemetry, tracing, and security checks that help you observe, diagnose, and improve behavior over time.
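A defined interface can be as small as a single method. The sketch below uses Python's `typing.Protocol` to show how a reasoning module might be swapped without touching the pipeline. The `Reasoner` interface and both implementations are hypothetical names chosen for illustration.

```python
from typing import Protocol


class Reasoner(Protocol):
    """Anything with a matching decide() method satisfies this interface."""

    def decide(self, observation: str) -> str: ...


class KeywordReasoner:
    """Rule-based stand-in; useful for deterministic tests."""

    def decide(self, observation: str) -> str:
        return "escalate" if "urgent" in observation.lower() else "respond"


class AlwaysEscalateReasoner:
    """Conservative fallback that hands everything to a human."""

    def decide(self, observation: str) -> str:
        return "escalate"


def run_once(reasoner: Reasoner, observation: str) -> str:
    # The pipeline depends only on the interface, not on a concrete class.
    return reasoner.decide(observation)
```

Calling `run_once(KeywordReasoner(), "URGENT: site down")` and `run_once(AlwaysEscalateReasoner(), "hello")` exercises two implementations through the same entry point, which is also what makes testing each component in isolation practical.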

Choosing a technical stack and design patterns

A robust AI agent uses a modular, service-oriented architecture. Start with a lightweight orchestration layer that coordinates sensing, reasoning, planning, and acting. Choose an LLM or a mixture of models for different roles, but keep your data and prompts versioned to reproduce results. For the user-facing portion, a chat interface or API gateway provides a consistent surface, while a separate internal API handles tool calls and data access.

Design patterns to adopt include: (1) the perception-then-decide pattern, where inputs are normalized before passing to decision logic; (2) the action-then-verify pattern, which validates outcomes against expectations; (3) the memory-then-retrieve pattern, which stores critical context and fetches it when needed. Use a provider-agnostic layer so you can swap models or vendors without large rewrites. For data handling, apply strict privacy controls and audit trails, and isolate any training data from production inference wherever possible.
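As an illustration of the action-then-verify pattern, the following sketch runs an action, checks the outcome with a caller-supplied predicate, and retries a bounded number of times. The function name, the retry limit, and the flaky `update_ticket` tool are all assumptions for the example.

```python
from typing import Callable


def act_then_verify(
    action: Callable[[], dict],
    verify: Callable[[dict], bool],
    max_attempts: int = 2,
) -> dict:
    """Run an action, check its outcome, and retry a bounded number of times."""
    last_result: dict = {}
    for attempt in range(1, max_attempts + 1):
        last_result = action()
        if verify(last_result):
            return {"ok": True, "attempts": attempt, "result": last_result}
    # Verification never passed: report failure so the caller can escalate.
    return {"ok": False, "attempts": max_attempts, "result": last_result}


calls = {"count": 0}


def update_ticket() -> dict:
    # Hypothetical tool: the first call fails, the second succeeds.
    calls["count"] += 1
    return {"status": "updated" if calls["count"] >= 2 else "error"}


outcome = act_then_verify(update_ticket, lambda r: r["status"] == "updated")
```

Returning a structured result instead of raising lets the orchestrator decide whether a failed verification should trigger a fallback or a human handoff.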

Hardware and hosting choices matter too. For early experimentation, local development with containerized services reduces friction. For production, consider scalable cloud infrastructure with autoscaling, rate limiting, and secure secret management. Finally, plan for observability: structured logging, metrics dashboards, and alerting on anomalies ensure you can detect drift and respond quickly. The right stack accelerates development and keeps the agent maintainable as requirements grow.
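Structured logging can be approximated with the standard library alone. The sketch below emits one JSON object per log line. The `JsonFormatter` class, the `agent` key passed through `extra`, and the example field values are assumptions for illustration, not a required schema.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Fields passed via extra={"agent": {...}} become a record attribute.
        payload.update(getattr(record, "agent", {}))
        return json.dumps(payload, sort_keys=True)


def build_logger(name: str = "agent") -> logging.Logger:
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    if not logger.handlers:  # avoid duplicate handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    return logger


logger = build_logger()
logger.info(
    "decision made",
    extra={"agent": {"intent": "order_status", "confidence": 0.92}},
)
```

Machine-readable lines like these are what dashboards and alerting rules typically consume, so the same records can serve debugging and drift monitoring.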

Building the agent loop: observe → decide → act

The core loop is simple in concept and powerful in practice: observe inputs, decide on a course of action, and act by calling tools or returning results. Start by defining the loop interfaces: a Sense() function to collect data, a Decide() function to produce an intent, and an Act() function to perform the chosen action. Fill in concrete implementations for your domain, then connect them with a lightweight orchestrator.

In practice, you will implement a repeating cycle:

- Sense: gather latest signals (user message, system alert, or data fetch).

- Decide: transform signals into goals, prioritize tasks, and select tools.

- Act: execute tool calls, update memory, and return a response or trigger a workflow.

- Observe again: capture the outcome and measure success against your criteria.

To keep latency acceptable, parallelize independent work within the perception and decision steps where possible (for example, separate data fetches) and cache frequent results. Use confidence thresholds to decide when to escalate to humans. Logging every decision and its outcome builds a traceable history that helps with debugging and auditing.
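Caching and confidence-based escalation can be sketched together in a few lines. The threshold of 0.7, the `classify` stub, and its hard-coded confidence scores are assumptions chosen for the example; a real classifier would return model-derived scores.

```python
from functools import lru_cache

ESCALATION_THRESHOLD = 0.7  # assumed value; tune against your own evaluation data


@lru_cache(maxsize=256)
def classify(message: str) -> tuple[str, float]:
    """Hypothetical classifier returning (intent, confidence)."""
    text = message.lower()
    if "refund" in text:
        return ("refund_request", 0.9)
    if "order" in text:
        return ("order_status", 0.8)
    return ("unknown", 0.3)


def route(message: str) -> str:
    intent, confidence = classify(message)
    if confidence < ESCALATION_THRESHOLD:
        # Low confidence: hand off instead of guessing.
        return "escalate_to_human"
    return intent
```

Repeated identical messages are served from the `lru_cache` rather than recomputed, which matters more once `classify` wraps a slow model call.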

Code ideas are helpful but not mandatory. A lightweight pseudo-code sketch:

    function Loop() {
        input = Sense()
        intention = Decide(input)
        result = Act(intention)
        UpdateMemory(input, intention, result)
        return result
    }

Remember to test each path: happy path, failure modes, and edge cases. This discipline prevents drift and keeps the agent predictable as it scales.
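The Sense, Decide, Act loop described in this section can be written as a small runnable Python sketch. The `Agent` class, its keyword rule, and the reply strings are placeholders invented for illustration, and unlike the pseudo-code, `act` here also receives the observation so it can build a reply.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    """Toy agent; sense/decide/act bodies are placeholders for domain logic."""

    inbox: list[str]
    memory: list[dict] = field(default_factory=list)

    def sense(self) -> Optional[str]:
        # Take the next pending message, if any.
        return self.inbox.pop(0) if self.inbox else None

    def decide(self, observation: str) -> str:
        return "escalate" if "urgent" in observation.lower() else "reply"

    def act(self, intention: str, observation: str) -> str:
        if intention == "escalate":
            return f"Escalated: {observation}"
        return f"Replied to: {observation}"

    def loop_once(self) -> Optional[str]:
        observation = self.sense()
        if observation is None:
            return None
        intention = self.decide(observation)
        result = self.act(intention, observation)
        # Record the full step so every decision is traceable later.
        self.memory.append(
            {"input": observation, "intention": intention, "result": result}
        )
        return result


agent = Agent(inbox=["Urgent: payment failed", "Thanks!"])
results = []
while (result := agent.loop_once()) is not None:
    results.append(result)
```

After the loop drains the inbox, `agent.memory` holds one entry per step, which gives tests for the happy path and the empty-inbox edge case something concrete to assert on.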

Testing, evaluation, and iteration

Testing an AI agent requires thinking about both correctness and reliability in real-world conditions. Start with a simple, deterministic scenario that exercises core capabilities: perception, reasoning, and action. Then add variability: different user intents, unexpected inputs, and tool failures. Define concrete success criteria in observable terms, such as accuracy of intent classification, robustness to noisy data, and latency under load.

Capture runs with telemetry: track decisions, outcomes, confidence scores, and any human interventions. Use a lightweight evaluation harness that can replay scenarios and compare results against a baseline. Ai Agent Ops recommends pairing automated tests with manual reviews for edge cases and safety concerns. Document drift: when the agent’s behavior changes after updates, you want to know why.

Iterate in small batches. After every improvement, re-run the same suite to catch regressions. Use A/B tests or staged rollouts to compare changes without impacting all users. Finally, archive evidence of experiments and decisions. This audit trail supports compliance and future learning while keeping governance transparent.
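A replayable evaluation harness does not need to be elaborate. The sketch below replays fixed scenarios through an agent function, reports accuracy, and compares it with a stored baseline. The scenario data, the `toy_agent` stand-in, and the baseline values are all made up for the example.

```python
from typing import Callable


def evaluate(
    agent_fn: Callable[[str], str], scenarios: list[dict]
) -> tuple[float, list[dict]]:
    """Replay scenarios and return accuracy plus the failing cases."""
    if not scenarios:
        raise ValueError("at least one scenario is required")
    failures = []
    for scenario in scenarios:
        actual = agent_fn(scenario["input"])
        if actual != scenario["expected"]:
            failures.append({**scenario, "actual": actual})
    accuracy = (len(scenarios) - len(failures)) / len(scenarios)
    return accuracy, failures


def regressed(accuracy: float, baseline: float, tolerance: float = 0.0) -> bool:
    # True when the new run is worse than the baseline beyond the tolerance.
    return accuracy < baseline - tolerance


SCENARIOS = [
    {"input": "Where is my order?", "expected": "order_status"},
    {"input": "I want a refund", "expected": "refund_request"},
    {"input": "asdf", "expected": "unknown"},
    {"input": "Cancel my order", "expected": "cancel_order"},
]


def toy_agent(message: str) -> str:
    # Deterministic stand-in for the agent under test.
    text = message.lower()
    if "refund" in text:
        return "refund_request"
    if "order" in text:
        return "order_status"
    return "unknown"


accuracy, failures = evaluate(toy_agent, SCENARIOS)
```

The returned `failures` list keeps the input, the expected label, and the actual label together, so it can be archived alongside the run as part of the audit trail.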

Deployment considerations and governance

Placing an AI agent into production requires attention to security, privacy, and compliance. Start with access controls, secret management, and encryption of sensitive data. Establish guardrails that prevent harmful actions and require human review for ambiguous cases. Implement robust logging and monitoring so you can detect anomalies, drift, and misuse early.

Consider the data lifecycle: what data is stored, where it lives, how long, and who can access it. Avoid training data leakage and ensure that prompts do not reveal sensitive information. Define rollback plans and a clear decommission path for outdated capabilities. Performance budgets are essential: set latency targets, throughput requirements, and budgetary constraints to keep operations predictable and affordable.
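One small piece of the data lifecycle is masking obvious identifiers before text reaches a prompt or a log. The sketch below uses two deliberately simple regular expressions. They are assumptions for illustration and are not a complete detector for personal data.

```python
import re

# Simplistic patterns for illustration only; real PII detection needs more care.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Runs of 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Mask obvious identifiers before text reaches a prompt or a log."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return CARD_PATTERN.sub("[CARD]", text)
```

Applying `redact` at the boundary where data leaves your system (model calls, log writes) keeps the rest of the pipeline free to work with the original values under normal access controls.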

Governance also means ethics. Document assumptions, review potential bias, and provide transparency about how decisions are made. Prepare a playbook for responding to incidents, including communication plans for users and stakeholders. Finally, plan for scaling: modular deployments, feature flags, and rapid rollback mechanisms help you manage growth without compromising safety or reliability.
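A feature flag with a percentage rollout can be sketched with a stable hash, so the same user always lands in the same bucket. The function name and the `feature:user_id` key format are assumptions for this example; hosted flag services offer the same idea with more controls.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user in or out of a percentage rollout."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent
```

Because the bucket depends only on the feature and user, raising `percent` from 10 to 50 keeps every user who was already enrolled, and setting it to 0 acts as an immediate rollback.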

Practical example: a customer-support agent

Imagine an agent designed to triage customer inquiries received via chat. The sensing inputs are chat transcripts and order data; the agent extracts intent, urgency, and required actions. The reasoning module maps intents to workflows, such as lookup order status, initiate returns, or escalate to human support. The planning component sequences steps: verify identity, fetch order details, and determine resolution path. Action adapters call CRM APIs, update tickets, and notify agents.

In practice, you would start with a minimal version: a single intent, one tool, and a deterministic response. As you validate performance, you add additional intents and tools, always retaining guardrails and audit logs. Measure success by the accuracy of triage labels and the rate of correct escalations, not just the creativity of the chat. Document decisions, keep data access restricted, and monitor for policy violations. This incremental approach reduces risk while delivering tangible value to customers and the business.
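The minimal version described above (a single intent, one tool, and a deterministic response) might look like the sketch below. The in-memory `ORDERS` table, the order ID format, and the reply wording are hypothetical stand-ins for a real order system or CRM API.

```python
import re
from typing import Optional

# Hypothetical in-memory stand-in for an order system or CRM API.
ORDERS = {"A1001": "shipped", "A1002": "processing"}

# Assumed order ID format: one uppercase letter followed by four digits.
ORDER_ID_PATTERN = re.compile(r"\b[A-Z]\d{4}\b")


def lookup_order(order_id: str) -> Optional[str]:
    """The single tool: return the order status, or None if unknown."""
    return ORDERS.get(order_id)


def handle_message(message: str) -> dict:
    """Single intent (order status), one tool, deterministic replies."""
    match = ORDER_ID_PATTERN.search(message)
    if "order" not in message.lower() or match is None:
        return {
            "intent": "unknown",
            "action": "escalate",
            "reply": "Routing you to a human agent.",
        }
    order_id = match.group()
    status = lookup_order(order_id)
    if status is None:
        return {
            "intent": "order_status",
            "action": "escalate",
            "reply": "Order not found; routing you to a human agent.",
        }
    return {
        "intent": "order_status",
        "action": "respond",
        "reply": f"Order {order_id} is {status}.",
    }
```

Anything outside the one supported intent escalates by default, so adding a second intent later widens what the agent handles without changing what happens to unrecognized requests.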

Common pitfalls and how to avoid them

- Scope creep and feature bloat. Define a clear objective and exit criteria before coding.

- Overreliance on a single model. Use modular prompts and switchable tool options.

- Missing governance and observability. Implement logging, tracing, and guardrails from day one.

- Data privacy and security gaps. Enforce strong access controls and encryption.

- Drift after updates. Run regression tests and monitor behavior continuously.

Authority sources

- https://www.nist.gov/topics/ai

- https://ai.stanford.edu

- https://cacm.acm.org/

The Ai Agent Ops team recommends using these sources to inform design decisions and governance practices.

Tools & Materials

- Python 3.11+ environment (Install via official Python website; use venv or poetry for dependencies.)

- Node.js (optional for UI or tooling) (Use if your stack includes frontend tooling or certain agents.)

- OpenAI API access or alternative LLM (Key management and rate limits; have a fallback plan if access is restricted.)

- VS Code or equivalent IDE (Enable extensions for linting, debugging, and Docker integration.)

- Postman or REST client (Useful for testing API endpoints and tool calls.)

- Secrets management tool or environment variables (Keep API keys and sensitive data secure.)

- Version control (Git) (Track changes, enable collaboration, and manage branches.)

- Containerization (Docker) (Helpful for reproducible local development and deployment.)

- Test data and sample datasets (Use synthetic or de-identified data for safe testing.)

Steps

Estimated time: 4-6 weeks

- 1

Define objective

Articulate a narrow, measurable goal for the agent and agree on success criteria. This anchors all design decisions and testing efforts.

Tip: Write a one-page objective and share it with stakeholders before coding.

- 2

Map inputs/outputs

List all senses the agent will use and all possible actions it can take. Define how success will be detected for each path.

Tip: Create a diagram of data flow from sensing to action.

- 3

Choose components

Select sensing, reasoning, memory, and action adapters. Keep interfaces small and clearly defined to ease future swaps.

Tip: Prefer replaceable modules over monolithic blocks.

- 4

Build sensing and decision loop

Implement a basic Sense → Decide → Act loop with a simple domain task to validate the pipeline.

Tip: Start with deterministic behavior for clarity and debugging.

- 5

Add memory and guardrails

Incorporate short-term memory and an auditable set of safety checks that prevent harmful actions.

Tip: Document guardrails and the thresholds for escalation.

- 6

Test and iterate

Create deterministic tests, then introduce variability to simulate real-world usage. Record results and adjust.

Tip: Maintain a regression test suite for every update.

- 7

Plan deployment and governance

Define deployment strategies, monitoring, and incident response plans. Ensure privacy and security controls are in place.

Tip: Use feature flags to enable safe rollout and rollback.

Questions & Answers

What is an AI agent?

An AI agent perceives inputs, reasons about them, and takes actions to achieve a goal. It includes sensing, memory, and control components that enable end-to-end behavior.

How does an AI agent differ from a chatbot?

A chatbot typically handles a single input-and-reply exchange, while an AI agent adds planning, tool use, and memory to carry out sequences of actions.

What are the major risks with AI agents?

Key risks include safety, privacy, misalignment, data leakage, and drift. Mitigation involves guardrails, auditing, and human oversight.

What skills do I need to build an AI agent from scratch?

You need software engineering, AI/ML fundamentals, data handling, system design, and governance practices.

Where should I start if I’m new to agent design?

Begin with a tiny, well-scoped task, define a minimal loop, and iteratively add capabilities while maintaining guardrails.

Key Takeaways

- Define a clear objective before coding.

- Architect modular, replaceable components.

- Test early and often with measurable criteria.

- Embed governance and guardrails from day one.

- Monitor, iterate, and scale responsibly.