AI Agent for DevOps: Build, Deploy, Govern

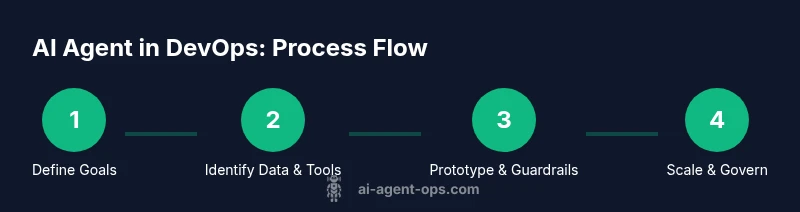

A practical, step-by-step guide to building and deploying AI agents for DevOps to automate tasks, improve incident response, and accelerate delivery with governance and safety guardrails.

By the end of this guide, you will know how to design and implement an AI agent for DevOps that automates repetitive tasks, improves incident response, and accelerates deploys. The guide covers core concepts, architecture patterns, safety guardrails, and integration steps with existing tooling. The approach emphasizes measurable outcomes, governance, and incremental experimentation to minimize risk while maximizing automation.

What is an AI agent for DevOps?

An AI agent for DevOps is a software component that uses AI to observe, reason about, and act on your CI/CD, cloud, and monitoring stack. It can read logs, pull metrics, parse incident tickets, and then decide on actions such as triggering a deployment, rolling back a change, or issuing a runbook step. The Ai Agent Ops team defines this as a responsive, auditable agent that operates within governance boundaries and interacts with humans when necessary. Importantly, an AI agent is not a magic button; its value comes from the quality of inputs, the clarity of its objectives, and the safeguards built around it. In practice, you’ll start with a narrowly scoped problem, prototype with a lightweight data loop, and gradually broaden the agent’s capabilities as you validate outcomes. This foundation sets the stage for the rest of the guide by clarifying terminology, scope, and expected outcomes.

Core concepts: agent architecture and agentic AI

At a high level, an AI agent for DevOps consists of perception, reasoning, and action components, connected by a control loop. Perception ingests data from logs, traces, metrics, ticketing systems, and chat channels. Reasoning uses prompts, plans, and memory to decide what to do next. Action executes tasks by calling APIs, updating runbooks, or modifying configuration as code. Some teams build a single agent; others deploy a small team of agents that collaborate via shared repositories or a centralized orchestrator. The term agentic AI describes systems that demonstrate a degree of agency: they can set goals, plan steps, and adapt to changing conditions within the defined policy. Effective designs often rely on task decomposition, tool-using chains, and memory to avoid repeating work. Security and governance are baked in from the start, with explicit boundaries, audit logs, and rollback options. In this section you’ll learn about architecture patterns and how to balance autonomy with accountability.
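The perception, reasoning, and action loop described above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Observation` and `Agent` names, the `rollback` tool, and the 5% error-rate threshold are all assumptions made for the example, and the rule inside `reason` stands in for whatever LLM or planner you would actually use.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Observation:
    source: str   # e.g. "metrics", "logs", "tickets"
    payload: dict


@dataclass
class Agent:
    # Tools are plain callables keyed by name; real ones would wrap API clients.
    tools: dict[str, Callable[[dict], str]]
    memory: list[str] = field(default_factory=list)

    def perceive(self, raw_events: list[dict]) -> list[Observation]:
        """Normalize raw events into observations."""
        return [Observation(e.get("source", "unknown"), e) for e in raw_events]

    def reason(self, observations: list[Observation]) -> list[tuple[str, dict]]:
        """Rule-based stand-in for the planner: map observations to (tool, args) steps."""
        plan = []
        for obs in observations:
            # Hypothetical rule: a high error rate on a service suggests a rollback.
            if obs.source == "metrics" and obs.payload.get("error_rate", 0.0) > 0.05:
                key = f"rollback:{obs.payload['service']}"
                if key not in self.memory:  # memory prevents repeating the same step
                    plan.append(("rollback", {"service": obs.payload["service"]}))
                    self.memory.append(key)
        return plan

    def act(self, plan: list[tuple[str, dict]]) -> list[str]:
        """Execute each planned step through the matching tool."""
        return [self.tools[name](args) for name, args in plan]

    def step(self, raw_events: list[dict]) -> list[str]:
        """One pass of the control loop: perceive, then reason, then act."""
        return self.act(self.reason(self.perceive(raw_events)))
```

Keeping the three stages as separate methods makes it easier to test each one in isolation and to swap the rule in `reason` for a model call later.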

Typical use cases in modern DevOps pipelines

AI agents bring automation to several core areas of DevOps. In incident response, an agent can triage alerts, fetch context, and propose remediation steps, reducing mean time to acknowledge. In CI/CD, agents can orchestrate builds, roll back a deployment that fails an automated check, and adjust pipelines based on real-time feedback. For infrastructure as code, agents can detect drift, propose changes, or patch configurations while recording decisions for compliance. Change management can be accelerated by routing approvals through agent-guided workflows, while observability-driven automation can trigger self-healing actions when anomalies surface. Finally, AI agents can help enforce security policies by monitoring for risky configurations and suggesting safer alternatives. Across these use cases, you’ll want to measure tangible outcomes such as deployment speed, error rates, and mean time to recovery, while maintaining strict guardrails and human oversight.
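As one concrete illustration of the drift-detection use case, the sketch below compares a declared configuration with the observed one and returns a finding per difference. The `detect_drift` function and the sample field names are hypothetical; a real agent would read the declared state from your infrastructure-as-code repository and the actual state from a cloud API.

```python
def detect_drift(desired: dict, actual: dict, prefix: str = "") -> list[dict]:
    """Return one finding per key whose actual value differs from the declared one."""
    findings = []
    for key in sorted(set(desired) | set(actual)):
        path = f"{prefix}{key}"
        want, have = desired.get(key), actual.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            # Recurse into nested sections so findings carry a dotted path.
            findings.extend(detect_drift(want, have, prefix=f"{path}."))
        elif want != have:
            findings.append({"path": path, "desired": want, "actual": have})
    return findings


if __name__ == "__main__":
    declared = {"replicas": 3, "image": {"tag": "1.4.2"}}
    observed = {"replicas": 5, "image": {"tag": "1.4.2"}}
    for finding in detect_drift(declared, observed):
        print(finding)
```

Returning structured findings rather than applying fixes directly keeps the agent in a "propose" role, which leaves room for the review and compliance recording mentioned above.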

Design patterns for reliability and safety

Reliability and safety hinge on robust design patterns. Start with a defensible boundary: clearly separate the agent’s decision space from direct user actions, and require human confirmation for high-risk steps. Use a kill switch and a timeout policy so the agent cannot continue taking destructive actions unchecked. Employ a policy engine to veto actions that violate compliance rules, privacy constraints, or security policies. Implement observability hooks: every decision, data input, and action should be traceable in logs and auditable in a governance portal. Prefer deterministic prompts and memory management to reduce hallucinations; when uncertainty arises, present safe options and ask for human input. Finally, adopt incremental rollout: test in a staging environment, then in a limited production canary before full-scale deployment. Guardrails are not a luxury; they are essential for trust and safety.
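The kill switch, timeout, policy veto, and human-confirmation patterns can be combined into a single check that runs before every action. The following is a minimal sketch under stated assumptions: the `Guardrails` class, the `HIGH_RISK` action names, and the `no_prod_deletes` policy are invented for illustration and do not correspond to any particular policy engine.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical names for actions that always need a human sign-off.
HIGH_RISK = {"delete_resource", "rollback_production"}


@dataclass
class Guardrails:
    policies: list[Callable[[str, dict], Optional[str]]]  # each returns a veto reason or None
    approve: Callable[[str, dict], bool]                   # human confirmation hook
    deadline_seconds: float = 30.0
    killed: bool = False                                   # flip to True to halt the agent
    started_at: float = field(default_factory=time.monotonic)

    def check(self, action: str, args: dict) -> tuple[bool, str]:
        """Return (allowed, reason); call this before executing any action."""
        if self.killed:
            return False, "kill switch engaged"
        if time.monotonic() - self.started_at > self.deadline_seconds:
            return False, "time budget exceeded"
        for policy in self.policies:
            reason = policy(action, args)
            if reason:
                return False, f"policy veto: {reason}"
        if action in HIGH_RISK and not self.approve(action, args):
            return False, "human approval denied"
        return True, "allowed"


def no_prod_deletes(action: str, args: dict) -> Optional[str]:
    """Example policy: never delete anything in production."""
    if action == "delete_resource" and args.get("env") == "production":
        return "deleting production resources is not permitted"
    return None
```

The checks run from cheapest and most absolute (kill switch) to most expensive (asking a human), so a vetoed action never reaches a reviewer.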

Data sources and observability for agents

The agent’s effectiveness relies on high-quality, timely data. Core data streams include logs from applications and infrastructure, metrics from monitoring systems, traces from distributed tracing systems, and tickets or runbooks from incident management platforms. Context from source code repositories, release notes, and change tickets helps the agent understand the environment. Data privacy and access controls must be respected; secrets should never flow through plain text. Observability should track input -> decision -> action mappings, with latency budgets and failure modes clearly surfaced. Coordinate these data streams with versioned schemas and data contracts to reduce drift. Establish a feedback loop where outcomes are evaluated post-action, and use this data to refine prompts, memory, and decision policies over time. In practice, start with a minimal set of data sources and gradually fold in additional signals as you prove value.
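A data contract and an input -> decision -> action trace can both start out very small. The sketch below assumes a hypothetical alert schema (`ALERT_CONTRACT_V1`) and a 500 ms latency budget; the field names and the budget are placeholders to adapt to your own streams.

```python
from dataclasses import dataclass

# Hypothetical contract for an alert event, version 1: field name -> expected type.
ALERT_CONTRACT_V1 = {
    "schema_version": int,
    "service": str,
    "severity": str,
    "timestamp": float,
}


def validate(event: dict, contract: dict) -> list[str]:
    """Return a list of contract violations; an empty list means the event conforms."""
    errors = []
    for name, expected in contract.items():
        if name not in event:
            errors.append(f"missing field: {name}")
        elif not isinstance(event[name], expected):
            got = type(event[name]).__name__
            errors.append(f"{name}: expected {expected.__name__}, got {got}")
    return errors


@dataclass(frozen=True)
class TraceRecord:
    """Links one input to the decision made and the action taken."""
    input_id: str
    decision: str
    action: str
    latency_ms: float
    within_budget: bool


def record(input_id: str, decision: str, action: str,
           latency_ms: float, budget_ms: float = 500.0) -> TraceRecord:
    """Build a trace record and flag whether the latency budget was met."""
    return TraceRecord(input_id, decision, action, latency_ms, latency_ms <= budget_ms)
```

Rejecting events that fail `validate` before they reach the reasoning step is one way to keep schema drift from silently changing the agent's behavior.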

Integration with existing tools and workflows

An AI agent must connect to your toolchain through well-defined adapters. Build connectors to your CI/CD platform, chat ops, incident response tools, ticketing systems, cloud APIs, and your monitoring stack. Containerization and a modular plugin approach help you replace or upgrade components without rewriting core logic. Use a centralized orchestrator or a workflow engine to coordinate actions across tools, and ensure all calls are authenticated and auditable. Consider role-based access control and secret management integrated with your security posture. Documentation is critical: provide runbooks for normal operations and contingency plans for failures. Finally, design for event-driven operation: the agent should respond to alerts, new incidents, or code changes in real time, while preserving a clear history of decisions for governance audits.
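One way to keep adapters modular is to define a small shared interface and route every call through an orchestrator that records it. This sketch is illustrative only: `ToolAdapter`, `FakeCIAdapter`, and `Orchestrator` are hypothetical names, and the fake adapter returns canned data instead of calling a real CI/CD API. Authentication and secret handling are deliberately left out.

```python
from typing import Protocol


class ToolAdapter(Protocol):
    """Interface every connector implements, whatever tool sits behind it."""
    name: str

    def call(self, operation: str, params: dict) -> dict: ...


class FakeCIAdapter:
    """In-memory stand-in for a CI/CD connector; a real one would call the platform's API."""
    name = "ci"

    def call(self, operation: str, params: dict) -> dict:
        if operation == "trigger_build":
            return {"status": "queued", "ref": params["ref"]}
        raise ValueError(f"unsupported operation: {operation}")


class Orchestrator:
    """Routes actions to registered adapters and keeps a history for audits."""

    def __init__(self) -> None:
        self._adapters: dict[str, ToolAdapter] = {}
        self.history: list[dict] = []

    def register(self, adapter: ToolAdapter) -> None:
        self._adapters[adapter.name] = adapter

    def dispatch(self, actor: str, tool: str, operation: str, params: dict) -> dict:
        result = self._adapters[tool].call(operation, params)
        self.history.append({
            "actor": actor, "tool": tool, "operation": operation,
            "params": params, "result": result,
        })
        return result
```

Because the orchestrator depends only on the interface, replacing `FakeCIAdapter` with a real connector does not touch the core logic.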

Evaluation metrics and governance

Define success with measurable metrics: deployment speed, failure rate, mean time to recovery, and the accuracy of actions recommended by the agent. Track performance across environments (dev, staging, production) and over time to detect drift. Establish governance rituals: review boards for high-risk changes, periodic audits of prompts and policies, and transparent incident postmortems that include agent decisions. Build a risk register that categorizes potential failure modes, their likelihood, and mitigations. Regularly train staff on how to interpret agent suggestions and how to intervene when needed. A strong governance model combines technical controls with organizational discipline to sustain trust and continuous improvement.
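The metrics named above reduce to simple ratios and averages once deployments and reviews are recorded. The sketch below assumes a hypothetical `Deployment` record and a list of human review verdicts; how you collect those records is up to your tooling.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Deployment:
    failed: bool
    recovery_minutes: float = 0.0  # only meaningful when failed is True


def change_failure_rate(deploys: list[Deployment]) -> float:
    """Fraction of deployments that failed."""
    return sum(d.failed for d in deploys) / len(deploys) if deploys else 0.0


def mean_time_to_recovery(deploys: list[Deployment]) -> float:
    """Average recovery time, in minutes, across failed deployments."""
    times = [d.recovery_minutes for d in deploys if d.failed]
    return mean(times) if times else 0.0


def recommendation_accuracy(reviews: list[bool]) -> float:
    """Share of agent recommendations that a human reviewer marked as correct."""
    return sum(reviews) / len(reviews) if reviews else 0.0
```

Computing these per environment and per time window makes drift visible as a trend rather than a single number.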

Common pitfalls and how to avoid them

A mismatch between expectations and reality is a common pitfall. Avoid assuming that an agent will replace human judgment entirely; instead, plan for it to augment expertise. Data leakage from test environments or prompts that memorize sensitive data can cause security issues; sanitize inputs and audit prompts. Inconsistent state across systems can occur if the agent makes changes without rollback plans, so ensure robust rollback procedures and reversible actions. Overfitting prompts to a single dataset reduces generalization; diversify data sources and test against edge cases. Finally, keep operational costs in mind: large language models can be expensive if misused; implement rate limits, caching, and escalation rules to balance value with cost.
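Rate limits, caching, and escalation can be layered around the model call itself. The wrapper below is a sketch, not a production client: `BudgetedLLM` is a hypothetical name, `call_model` is whatever function you use to reach your provider, and the sliding-window limit is one of several possible strategies.

```python
import hashlib
import time
from typing import Callable


class BudgetedLLM:
    """Wraps a model call with a response cache and a calls-per-window limit."""

    def __init__(self, call_model: Callable[[str], str], max_calls: int,
                 window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._call_model = call_model
        self._max_calls = max_calls
        self._window = window_seconds
        self._clock = clock  # injectable so tests do not depend on real time
        self._cache: dict[str, str] = {}
        self._timestamps: list[float] = []

    def complete(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:  # cache hits do not count against the budget
            return self._cache[key]
        now = self._clock()
        # Keep only the calls that still fall inside the sliding window.
        self._timestamps = [t for t in self._timestamps if now - t < self._window]
        if len(self._timestamps) >= self._max_calls:
            raise RuntimeError("LLM call budget exhausted; escalate to a human")
        self._timestamps.append(now)
        self._cache[key] = self._call_model(prompt)
        return self._cache[key]
```

Raising an error when the budget runs out, instead of silently waiting, gives the surrounding workflow a clear signal to escalate.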

Getting started: a practical blueprint

Begin with a narrow objective, such as automating daily incident triage. Define success criteria and gather high-quality data sources from your observability stack. Build a small prototype using a lightweight orchestrator and a single agent, with basic memory and a limited action set. Validate the prototype in a staging environment, measure key metrics, and document decisions. Iterate by expanding capabilities, adding guardrails, and inviting feedback from the operations team. Finally, prepare a rollout plan that includes risk assessment, security reviews, and a staged deployment path. This blueprint keeps experimentation safe while delivering real value, and it aligns with Ai Agent Ops guidance on agentic automation.

Tools & Materials

- Development workstation with internet access (Powerful CPU, 16GB RAM minimum; capable of containerization)

- Docker or Podman runtime (Used to run adapters and microservices locally)

- Access to CI/CD, monitoring, and incident tools (Test privileges or sandbox credentials for safe experimentation)

- Secure secret store / vault (HashiCorp Vault or cloud secret manager for API keys and tokens)

- LLM API keys / model access (Manage costs with quotas and monitoring)

- Documentation and runbooks (Reference for governance and onboarding)

Steps

Estimated time: 3-5 hours

Step 1: Define goals and success criteria

Identify a narrowly scoped objective for the MVP, such as automating alert triage or drift detection. Define concrete success metrics and acceptance criteria to measure impact.

Tip: Document the business value and set a guardrail for scope creep.

Step 2: Inventory data sources and establish contracts

List logs, metrics, traces, tickets, and release notes that will feed the agent. Create simple data contracts to ensure consistent input shape.

Tip: Prefer stable, high-quality data sources first; avoid overloading with noisy signals.

Step 3: Design the agent architecture and tool adapters

Choose whether to start with a single agent or a small team. Identify the adapters needed to call CI/CD APIs, incident systems, and cloud services.

Tip: Keep adapters modular to simplify future upgrades.

Step 4: Build a minimal prototype with guardrails

Implement a basic decision loop, a safe action set, and a human-review path for high-risk actions. Validate in a staging environment.

Tip: Enable a kill switch and timeouts for safety.

Step 5: Introduce auditing, logging, and governance

Capture inputs, decisions, and outcomes in an auditable trail. Set up a governance board to review high-risk actions.

Tip: Automate post-incident reviews focusing on agent decisions.

Step 6: Test, measure, and iterate before production

Run the MVP through real-world scenarios, compare outcomes to KPI targets, and refine prompts, memory, and policies.

Tip: Iterate in short cycles and freeze features before production rollout.
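For the auditable trail of inputs, decisions, and outcomes called for in the auditing step, one option is an append-only log in which each entry stores a hash of the previous one, so later tampering is detectable. This hash-chain approach is a technique chosen for this sketch, not something the steps prescribe, and the `AuditTrail` class and its field names are hypothetical.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 over the JSON form of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log where each entry carries the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, inputs: dict, decision: str, outcome: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {"inputs": inputs, "decision": decision,
                "outcome": outcome, "prev": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

A governance board can run `verify()` before a review to confirm that the recorded decisions have not been edited after the fact.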

Questions & Answers

What is an AI agent for DevOps?

An AI agent for DevOps is a software component that uses AI to observe data, reason about it, and take actions across your DevOps toolchain. It operates within governance boundaries and requires human oversight for high-risk decisions.

An AI agent in DevOps is a smart helper that watches data, makes decisions, and acts, but with guardrails and human oversight.

What are the main benefits of AI agents in DevOps?

They automate repetitive toil, speed up incident response, and improve consistency, while auditable decision logs support governance and compliance.

They cut manual work, speed up responses, and keep decisions auditable.

What are the key risks and how can I mitigate them?

Risks include data leakage, misconfigurations, and runaway automation. Mitigate with guardrails, human-in-the-loop, staging tests, and thorough auditing.

Watch for data leakage and unsafe changes; use guardrails and human checks.

What skills does my team need to succeed?

A blend of DevOps engineering, AI literacy, prompt design, security awareness, and governance practices is essential.

Your team should mix DevOps know-how with AI fluency and governance.

How long does it take to build an MVP?

An MVP can be achieved in a few weeks with a focused scope and iterative sprints.

You can reach a basic MVP in a few weeks with a clear scope.

Which tools are best for prototyping AI agents in DevOps?

Use modular adapters, containerized services, and common LLM providers; prioritize open integrations and robust logging.

Go modular with adapters and strong logs.

Key Takeaways

- Define measurable goals before building agents.

- Prototype in a safe sandbox before production.

- Guardrails and human-in-the-loop are essential.

- Evaluate with real-world scenarios and metrics.