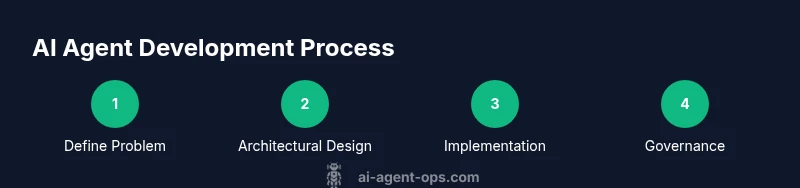

AI Agent Software Development: Practical How-To Guide

A comprehensive, step-by-step guide to building robust AI agent software. Learn architecture, tooling, safety, governance, and production readiness for agentic workflows in 2026.

This guide helps you master AI agent software development by outlining a practical, end-to-end process for planning, building, and operating autonomous agents. You will learn how to design scalable architectures, select tooling, implement perception–decision–action loops, and govern safety and performance. By following this step-by-step approach, teams can ship reliable agents faster while maintaining clear traceability and governance across the lifecycle. The framework emphasizes reusable components, modular testing, and clear accountability to support business goals and risk management.

Understanding AI agent software development

The AI agent software development field focuses on building autonomous software agents that perceive inputs, reason about options, and act to achieve business goals. The goal is to balance capability with safety, governance, and maintainability. This practical guide, grounded in real-world patterns, emphasizes end-to-end discipline rather than isolated experiments. According to Ai Agent Ops, the most successful projects begin with a precise problem statement, a defined target user, and measurable outcomes. When you frame work around user value and risk, teams avoid scope creep and lay a foundation for scalable solutions. An AI agent typically combines three core capabilities: perception (data ingestion and sensing), decision-making (planning and reasoning), and action (execution and feedback). In practice, those capabilities map to modular components that can be developed, tested, and evolved independently. The objective is to design an architecture that supports incremental improvement, fast iteration, and observable behavior. This section sets up the vocabulary, goals, and success criteria you will use throughout the guide.

Architectural foundations for agentic systems

Agentic systems rely on an orchestrated set of components that collaborate to sense, decide, and act. A practical architecture often follows a three-layer pattern: perception, decision, and action. Perception gathers data from workers, devices, or software services; decision combines rules, policies, and learned heuristics; action executes tasks via APIs, scripts, or human-in-the-loop interventions. An efficient agent uses a memory layer to retain context across sessions, a planner to sequence tasks, and a tool-use mechanism to access external capabilities (databases, search, or specialized APIs). It’s essential to define clear interfaces between layers to enable independent testing and gradual migration from monoliths to microservices. Finally, you should plan for observability and governance from day one: traceable decisions, explainable outcomes, and auditable logs.
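The layer boundaries described above can be sketched as explicit interfaces. This is a minimal illustration, not a reference design: the names `Observation`, `Action`, `TicketPerception`, `KeywordDecision`, `LoggingActuator`, and `run_step` are all hypothetical, and the keyword rule stands in for whatever planner or model a real decision layer would use.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Observation:
    source: str
    payload: dict


@dataclass
class Action:
    name: str
    args: dict = field(default_factory=dict)


class Perception(Protocol):
    def observe(self, raw: dict) -> Observation: ...


class Decision(Protocol):
    def decide(self, obs: Observation, memory: list[Observation]) -> Action: ...


class Actuator(Protocol):
    def act(self, action: Action) -> dict: ...


class TicketPerception:
    """Hypothetical perception layer: normalizes raw ticket text."""

    def observe(self, raw: dict) -> Observation:
        text = raw.get("text", "").strip().lower()
        return Observation(source="tickets", payload={"text": text})


class KeywordDecision:
    """Hypothetical decision layer: a keyword rule standing in for a planner."""

    def decide(self, obs: Observation, memory: list[Observation]) -> Action:
        if "refund" in obs.payload["text"]:
            return Action("escalate_to_human", {"reason": "refund request"})
        return Action("send_reply", {"template": "generic_ack"})


class LoggingActuator:
    """Hypothetical action layer: records each action for later audit."""

    def __init__(self) -> None:
        self.audit_log: list[Action] = []

    def act(self, action: Action) -> dict:
        self.audit_log.append(action)
        return {"status": "ok", "action": action.name}


def run_step(raw: dict, perception: Perception, decision: Decision,
             actuator: Actuator, memory: list[Observation]) -> dict:
    """One perceive -> decide -> act cycle; memory keeps prior observations."""
    obs = perception.observe(raw)
    action = decision.decide(obs, memory)
    result = actuator.act(action)
    memory.append(obs)
    return result
```

Because each layer depends only on a small interface, any one of them can be replaced with a test double or a new implementation without touching the other two.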

Data and tooling stack for building AI agents

Building reliable AI agents requires a robust data and tooling stack. Start with clean data sources and well-defined schemas to feed perception modules. Utilize vector databases and retrieval-augmented generation (RAG) for efficient context provisioning, and connect to language model APIs to provide reasoning capabilities. A typical stack includes data ingestion pipelines, a feature store for signals, a memory module for short- and long-term context, and a sandboxed execution environment to test agent actions safely. Version-controlled policies and guardrails help enforce constraints, while experimentation platforms support rapid iteration. You should also establish a reproducible environment using containerization and a lightweight CI/CD pipeline to ensure consistent deployments across environments.
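To make the retrieval step concrete, here is a toy sketch of context provisioning for RAG. It assumes a lot: `embed` is a bag-of-words stand-in for a real embedding model, and `InMemoryStore` is a hypothetical substitute for a vector database, so treat it as an illustration of the data flow rather than a production retrieval stack.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemoryStore:
    """Hypothetical stand-in for a vector database."""

    def __init__(self) -> None:
        self._docs: list = []

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, store: InMemoryStore, k: int = 2) -> str:
    """Assemble retrieved context plus the question into one prompt string."""
    context = "\n".join(f"- {chunk}" for chunk in store.search(question, k))
    return f"Context:\n{context}\n\nQuestion: {question}"
```

The string returned by `build_prompt` is what would be sent to a language model API; that call is left out here because it depends on the provider.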

Designing safe and reliable agents

Safety and reliability are non-negotiable in AI agent software development. Define guardrails that constrain actions, establish fail-safe modes, and implement human-in-the-loop checkpoints for critical decisions. Safety should cover data privacy, bias mitigation, and system safety in edge cases. Build robust testing regimes, including unit tests for perception, integration tests for decision cycles, and end-to-end evaluations with realistic workloads. Document decision rationales and outcomes to support auditing. Finally, implement monitoring alerts for drift, latency, and failures, and maintain a rollback plan to recover from misbehavior quickly.
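One way to picture guardrails and human-in-the-loop checkpoints is a small check that runs before every action. The sketch below is an assumption-laden example: the action names, the `REFUND_REVIEW_THRESHOLD` value, and the three-way `Verdict` are invented for illustration and would come from your own policy in practice.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human"
    BLOCK = "block"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float = 0.0


# Hypothetical policy values; real ones belong in version-controlled config.
ALLOWED_ACTIONS = {"send_reply", "issue_refund"}
REFUND_REVIEW_THRESHOLD = 100.0


def check_guardrails(action: ProposedAction) -> Verdict:
    """Block unknown actions and route large refunds to a human reviewer."""
    if action.name not in ALLOWED_ACTIONS:
        return Verdict.BLOCK
    if action.name == "issue_refund" and action.amount > REFUND_REVIEW_THRESHOLD:
        return Verdict.NEEDS_HUMAN
    return Verdict.ALLOW


def review(action: ProposedAction, audit_log: list) -> Verdict:
    """Evaluate an action and record the verdict so it can be audited later."""
    verdict = check_guardrails(action)
    audit_log.append({"action": action.name, "verdict": verdict.value})
    return verdict
```

Defaulting to `BLOCK` for anything outside the allowlist is a fail-safe choice: a new or misspelled action name is rejected until someone explicitly permits it.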

Developing an end-to-end workflow

A pragmatic workflow starts with problem scoping and success criteria, followed by architecture design and environment setup. Implement perception, decision, and action modules in iterative increments, with continuous integration and automated tests at each milestone. Maintain a shared component library to promote reuse across teams and domains. Establish data lineage, versioned policies, and secure access controls. Use feature flags to roll out capabilities gradually, and implement telemetry that captures both success metrics and failure modes. Regular review cycles keep the project aligned with business objectives and regulatory requirements.
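Gradual rollout behind a feature flag can be as simple as hashing a stable identifier into a bucket. The following is a minimal sketch under stated assumptions: the function names and the flag name `new_planner` are hypothetical, and a real deployment would more likely read the percentage from a flag service or config file.

```python
import hashlib


def rollout_bucket(flag: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag: str, user_id: str, rollout_percent: int) -> bool:
    """Enable the flag for roughly rollout_percent of users, deterministically."""
    return rollout_bucket(flag, user_id) < rollout_percent


def choose_planner(user_id: str, rollout_percent: int) -> str:
    """Route a user to the new or the existing planner (names are illustrative)."""
    if is_enabled("new_planner", user_id, rollout_percent):
        return "planner_v2"
    return "planner_v1"
```

Because the bucket depends only on the flag and user ID, a user who sees the new capability at 10% keeps it when the rollout widens to 50%, and setting the percentage to 0 reverts everyone at once.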

Performance, monitoring, and governance

Operational excellence for AI agent software development requires robust monitoring and governance. Track metrics such as task completion rate, latency, and accuracy of decisions, plus resource usage and failure modes. Implement alerting, dashboards, and runbooks to support rapid incident response. Governance should address data privacy, model risk management, and compliance with industry standards. Use anomaly detection to catch drift in behavior, and maintain a changelog for policy updates. Regular security audits and penetration testing help protect the agent from exploitation. Ai Agent Ops’s guidance emphasizes building a culture of responsibility and continuous improvement across product teams.
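The metrics named above can be computed from plain task records. This sketch is illustrative only: `TaskRecord` is a hypothetical record shape, the nearest-rank percentile is one of several common definitions, and the z-score check is a deliberately simple stand-in for a real anomaly detector.

```python
import math
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class TaskRecord:
    succeeded: bool
    latency_ms: float


def completion_rate(records: list) -> float:
    """Fraction of tasks that succeeded; 0.0 when there are no records."""
    if not records:
        return 0.0
    return sum(r.succeeded for r in records) / len(records)


def p95_latency(records: list) -> float:
    """95th percentile latency using the nearest-rank method."""
    if not records:
        raise ValueError("no records")
    ordered = sorted(r.latency_ms for r in records)
    index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return ordered[index]


def is_drifting(baseline: list, current: float, z_threshold: float = 3.0) -> bool:
    """Flag a value that sits more than z_threshold std devs from the baseline mean."""
    center = mean(baseline)
    spread = pstdev(baseline)
    if spread == 0:
        return current != center
    return abs(current - center) / spread > z_threshold
```

A check like `is_drifting` would typically feed an alert rather than act on its own; the threshold of 3.0 is an assumption to tune against your own baseline data.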

Practical examples and case patterns

Two common patterns emerge in practice: (1) planner-driven agents that sequence tasks using a high-level goal and (2) reactive agents that respond to events in real time. Planner-driven designs are strong for structured workflows like customer support or data processing pipelines, while reactive agents excel in monitoring and incident response. A hybrid approach often works best: a planner coordinates broad goals, with reactive agents handling time-critical tasks. For each pattern, define interfaces, success criteria, and safety constraints. Document trade-offs to help stakeholders understand why a particular architecture was chosen.
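The hybrid pattern can be sketched as a planned step queue with a reactive rule table checked before each step. Everything here is hypothetical: the plan contents, the `REACTIVE_RULES` mapping, and the convention that `events` maps a step index to an event name are assumptions made to keep the example short.

```python
from collections import deque

# Hypothetical reactive rules: event name -> immediate response.
REACTIVE_RULES = {"service_down": "page_on_call"}


def plan(goal: str) -> list:
    """Planner-driven part: expand a high-level goal into ordered steps."""
    plans = {"resolve_ticket": ["classify", "draft_reply", "send_reply"]}
    return list(plans.get(goal, []))


def run_hybrid(goal: str, events: dict) -> list:
    """Run the plan, letting reactive rules pre-empt each step when an event fires.

    `events` maps a step index to the event raised just before that step.
    """
    steps = deque(plan(goal))
    executed = []
    tick = 0
    while steps:
        event = events.get(tick)
        if event in REACTIVE_RULES:
            # Reactive part: handle the time-critical event first.
            executed.append(REACTIVE_RULES[event])
        executed.append(steps.popleft())
        tick += 1
    return executed
```

For example, `run_hybrid("resolve_ticket", {1: "service_down"})` handles the outage before the second planned step and then resumes the plan.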

Common pitfalls and how to avoid them

Most failures in AI agent software development come from vague scopes, brittle data pipelines, and weak governance. Avoid scope creep by writing explicit success criteria and exit criteria for each milestone. Invest in reliable data ingestion, verify inputs with validation steps, and add guardrails that prevent unsafe actions. Do not neglect monitoring; without telemetry, diagnosing failures becomes guesswork. Finally, maintain governance and documentation to ensure compliance and facilitate teamwork across disciplines.
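Input validation at the edge of the perception layer might look like the sketch below. The `TicketEvent` fields and the 1 to 5 priority range are assumptions chosen for illustration; the point is to reject malformed input with a clear message before it reaches the decision layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TicketEvent:
    ticket_id: str
    text: str
    priority: int


def validate_event(raw: dict) -> TicketEvent:
    """Return a typed event, or raise ValueError listing every problem found."""
    errors = []

    ticket_id = raw.get("ticket_id")
    if not isinstance(ticket_id, str) or not ticket_id:
        errors.append("ticket_id must be a non-empty string")

    text = raw.get("text")
    if not isinstance(text, str) or not text.strip():
        errors.append("text must be a non-empty string")

    priority = raw.get("priority")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(priority, bool) or not isinstance(priority, int) or not 1 <= priority <= 5:
        errors.append("priority must be an integer from 1 to 5")

    if errors:
        raise ValueError("; ".join(errors))
    return TicketEvent(ticket_id=ticket_id, text=text.strip(), priority=priority)
```

Collecting all errors before raising makes pipeline failures easier to diagnose than stopping at the first bad field.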

Getting started blueprint

Begin with a one-page charter that defines the problem, success criteria, and measurable outcomes. Create a lightweight prototype focusing on perception, decision, and action blocks, with clear interfaces and test cases. Set up a minimal data pipeline, a small memory store, and a basic policy that governs actions. Establish a CI/CD flow and simple monitoring to observe behavior in a controlled environment. Iterate weekly, validating results against business objectives and updating safety guardrails as you learn.

Tools & Materials

- Integrated Development Environment (IDE): choose a modern IDE with linting, debugging, and project templates.

- Version control system (Git): use a branching strategy such as main, develop, and feature/*.

- Programming runtime (Python/Node.js): select the language based on agent components and libraries.

- LLM provider access: have authentication tokens and rate limits defined.

- Vector database: for semantic search and memory; options include FAISS-like libraries or managed services.

- Container runtime: Docker or containerd to ensure consistent environments.

- Experiment tracking: tools like MLflow or similar to capture experiments and results.

- CI/CD platform: automate build, test, and deployment for agents across environments.

- Testing framework: unit, integration, and end-to-end testing tailored to agent behavior.

Steps

Estimated time: 2-6 weeks

1. Define problem and success criteria

   Articulate the business goal, user personas, and concrete KPIs. Establish acceptance criteria and a clear exit condition for the project phase.

   Tip: Write a one-page problem statement and list 3 measurable outcomes.

2. Design agent architecture

   Choose an architecture pattern (planner-driven, reactive, or hybrid) and define module boundaries, interfaces, and data contracts.

   Tip: Capture interface diagrams and data flow early to reduce integration risk.

3. Set up development environment

   Configure the runtime, version control, and CI/CD skeleton. Create a minimal repository structure for perception, decision, and action modules.

   Tip: Use template repos with standardized onboarding and lint rules.

4. Implement perception layer

   Ingest data from sources, normalize formats, and extract signals used by the decision layer. Build data validation and pre-processing steps.

   Tip: Include unit tests for edge cases and data quality checks.

5. Implement decision-making layer

   Develop the planner or policy modules that reason over goals and constraints. Integrate with memory and tool access.

   Tip: Prioritize safe decision paths and log decision rationales for auditing.

6. Implement action layer

   Connect to external APIs, scripts, or human-in-the-loop interfaces. Ensure action outcomes feed back into perception.

   Tip: Use idempotent APIs and add retry/backoff logic.

7. Add safety and governance

   Implement guardrails, data privacy, bias checks, and logging for accountability. Define escalation paths for unsafe decisions.

   Tip: Document all guardrails and ensure they can be toggled in production.

8. Testing and evaluation plan

   Create test scenarios that cover normal operation, edge cases, and failure modes. Run end-to-end tests with realistic workloads.

   Tip: Automate test coverage to maintain confidence during iterations.

9. Deploy, monitor, and iterate

   Launch in a controlled environment, monitor telemetry, and collect feedback. Iterate based on observed performance and business impact.

   Tip: Use feature flags to roll out gradually and revert quickly if needed.
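The retry/backoff tip for the action layer can be sketched as a small wrapper. This is a minimal example under stated assumptions: `TransientError` and `call_with_retry` are hypothetical names, the delays are illustrative, and the wrapped operation is assumed to be idempotent, since retrying a non-idempotent call could apply the same action twice.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, 503s, etc.)."""


def call_with_retry(operation, max_attempts: int = 4, base_delay: float = 0.5,
                    sleep=time.sleep):
    """Call operation(), retrying on TransientError with exponential backoff.

    Delays grow as base_delay, 2 * base_delay, 4 * base_delay, and so on.
    The final failure is re-raised so the caller can escalate or roll back.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
```

Passing `sleep` as a parameter keeps the wrapper easy to unit test, because a test can record the requested delays instead of actually waiting.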

Questions & Answers

What is AI agent software development?

AI agent software development is the process of creating autonomous software agents that perceive data, reason about actions, and execute tasks to achieve defined goals. It combines perception, decision-making, and action with safety, governance, and monitoring.

AI agent development is about building autonomous software that perceives, decides, and acts to reach goals, with safety and monitoring built in.

How does AI agent development differ from traditional software development?

AI agent development emphasizes dynamic decision-making, probabilistic reasoning, interaction with external tools, and ongoing evaluation of behavior, whereas traditional software tends to be more deterministic and rule-based.

AI agent development focuses on learning, adaptation, and decision-making, while traditional software is usually more static and rule-driven.

What architectures are common for AI agents?

Common architectures include planner-driven agents, reactive agents, and hybrid systems that combine planning with reactive responses. Each pattern serves different task profiles and governance needs.

Planner-driven, reactive, and hybrid architectures are the typical approaches for AI agents.

How do you test AI agents effectively?

Testing should cover perception accuracy, decision quality, action reliability, and safety guardrails. Use unit tests, integration tests, and end-to-end evaluations with realistic workloads.

Test agents with unit tests, integration tests, and end-to-end simulations to ensure reliable behavior.

What safety considerations are most important?

Prioritize data privacy, bias mitigation, controllable behavior, and robust auditing. Establish escalation paths for unsafe decisions and maintain guardrails.

Key safety concerns are privacy, bias, and controllable, auditable behavior.

Where should I start a new AI agent project?

Begin with a one-page charter, a minimal architecture, and a small pilot focused on a single mission. Iterate quickly and scale as confidence grows.

Start with a clear charter, a small pilot, and iterate fast.

Key Takeaways

- Plan with measurable outcomes and governance from day one

- Choose a modular architecture for scalable agent systems

- Prioritize safety, testing, and observability during development

- Iterate in small increments with controlled deployments